A Node.js crawler with superagent and cheerio: a first example

I had heard of crawlers for a long time, and I started learning Node.js a few days ago. As an exercise I crawled the article titles, author names, view counts, recommendation counts, and user avatars from the homepage of cnblogs (博客园). Here is a short summary.

The demo uses the following:

1. The Node.js core module fs (File System)

2. superagent, a third-party module for HTTP requests

3. cheerio, a third-party module for parsing the DOM

For detailed explanations and the full API of each module, see its own documentation; the demo only shows simple usage.

Preparation

Use npm to manage dependencies; the dependency information is stored in package.json.

// Install the third-party modules used by the demo
cnpm install --save superagent cheerio

Import the required modules

// Import the modules: superagent for HTTP requests, cheerio for parsing the DOM, fs for file access
const request = require('superagent');
const cheerio = require('cheerio');
const fs = require('fs');

Request + parse page

To crawl the content of the cnblogs homepage, first request the homepage address and get the returned HTML. superagent is used here to make the HTTP request. The basic usage is:

request.get(url)
  .end((error, res) => {
    // do something
  });

This sends a GET request to the specified url. If the request fails, error is set (if there is no error, error is null or undefined); res is the response.
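The same request can also be written without a callback, because a superagent request is thenable and can be awaited. The following is only a sketch: `fetchHtml` and its injectable `client` parameter are hypothetical names introduced here (the parameter exists so the function can be tried without network access), not part of superagent or of the demo.

```javascript
// Sketch: the same GET request written with async/await instead of .end().
// `fetchHtml` and `client` are hypothetical names used only in this example.
async function fetchHtml(url, client = require('superagent')) {
  // A superagent request is thenable, so it can be awaited directly.
  const res = await client.get(url);
  // res.text holds the response body as a string.
  return res.text;
}

// Usage (needs network access and superagent installed):
// fetchHtml('https://www.cnblogs.com/')
//   .then((html) => console.log(html.length))
//   .catch((error) => console.log(error));
```

With this style, a failed request rejects the promise, so errors are handled in catch() rather than through the error argument.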

After getting the HTML, we use cheerio to parse the DOM and extract the data we want. cheerio first loads the target HTML and then queries it. Its API is very similar to the jQuery API, so anyone familiar with jQuery can get started quickly. Here is the code:

// Target URL: the cnblogs homepage
let targetUrl = 'https://www.cnblogs.com/';

// Temporarily holds the parsed content and the image URLs
let content = '';
let imgs = [];

// Send the request
request.get(targetUrl)
  .end((error, res) => {
    if (error) { // The request failed: log the error and return
      console.log(error);
      return;
    }
    // cheerio has to load the HTML first
    let $ = cheerio.load(res.text);
    // Grab the data we need; each() is the cheerio method for iterating over matches
    $('#post_list .post_item').each((index, element) => {
      // Analyze the DOM structure around the data we need,
      // locate the target elements with selectors, then read the data
      let temp = {
        '标题': $(element).find('h3 a').text(), // title
        '作者': $(element).find('.post_item_foot > a').text(), // author
        '阅读数': +$(element).find('.article_view a').text().slice(3, -2), // view count
        '推荐数': +$(element).find('.diggnum').text() // recommendation count
      };
      // Append the record as one JSON object per line
      content += JSON.stringify(temp) + '\n';
      // Get the image URL the same way
      if ($(element).find('img.pfs').length > 0) {
        imgs.push($(element).find('img.pfs').attr('src'));
      }
    });
    // Store the data
    mkdir('./content', saveContent);
    mkdir('./imgs', downloadImg);
  });

Storing data

After parsing the DOM, the required text content has been assembled and the image URLs have been collected. Now we store them: the text goes into a txt file in a specified directory, and the images are downloaded to another specified directory.

Create the directories first, using the Node.js core fs module.
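As a point of comparison, here is a minimal sketch of one way to make sure a directory exists in a single call. It assumes Node.js 10.12 or later, where fs.mkdirSync accepts the recursive option; `ensureDir` and the scratch path under the OS temp folder are hypothetical names chosen for this example.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Hypothetical helper: create `dir` (and any missing parents) if needed.
// With { recursive: true }, mkdirSync does not throw when `dir` already exists.
function ensureDir(dir) {
  fs.mkdirSync(dir, { recursive: true });
  return dir;
}

// Example: create a scratch directory under the OS temp folder, twice.
const demoDir = path.join(os.tmpdir(), 'crawler-demo', 'content');
ensureDir(demoDir);
ensureDir(demoDir); // the second call finds the directory and does nothing
console.log(fs.existsSync(demoDir)); // true
```

The demo's own helper takes a different route: an existence check followed by the asynchronous fs.mkdir.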

// Create a directory, then run callback once the directory exists
function mkdir(_path, callback) {
  if (fs.existsSync(_path)) {
    console.log(`Directory ${_path} already exists`);
    callback();
  } else {
    fs.mkdir(_path, (error) => {
      if (error) {
        return console.log(`Failed to create directory ${_path}`);
      }
      console.log(`Created directory ${_path}`);
      callback(); // only runs after the directory has been created
    });
  }
}

Once the directory exists, the data can be written. The text content is already assembled, so write it directly with writeFile().
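The text being written has one JSON object per line, which makes it easy to read back later. The sketch below shows that round trip on made-up sample records; `toJsonLines` and `parseJsonLines` are hypothetical helper names, and the sample values are invented for illustration.

```javascript
// Hypothetical helpers for the "one JSON object per line" layout used by `content`.
function toJsonLines(records) {
  return records.map((record) => JSON.stringify(record) + '\n').join('');
}

function parseJsonLines(text) {
  return text
    .split('\n')
    .filter((line) => line.trim() !== '') // skip the empty piece after the last newline
    .map((line) => JSON.parse(line));
}

// Made-up sample records, shaped like the objects built in the crawl step.
const sample = [
  { '标题': 'Post A', '作者': 'alice', '阅读数': 12, '推荐数': 1 },
  { '标题': 'Post B', '作者': 'bob', '阅读数': 34, '推荐数': 0 },
];
const text = toJsonLines(sample);
const restored = parseJsonLines(text);
console.log(restored.length); // 2
```

The demo's own write step is: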

// Save the text content to a txt file
function saveContent() {
  fs.writeFile('./content/content.txt', content, (error) => {
    if (error) {
      console.log(error);
    }
  });
}

The image links are already collected, so superagent is used to download the images and store them locally. A superagent request can be used as a stream, so together with Node.js's pipe() the image content is written straight to a local file.
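Before downloading, each src has to become a full URL and a local file name. Here is a minimal sketch of one way to do that with Node's built-in URL class, assuming the src values may be protocol-relative (starting with //) or carry a query string. `imageTarget`, its `base` default, and the sample src are hypothetical and only serve this example.

```javascript
const path = require('path');

// Hypothetical helper: turn an <img> src into a full URL and a local file name.
// `base` is the page the src was found on; protocol-relative ("//host/a.png")
// and relative srcs are resolved against it by the URL constructor.
function imageTarget(src, base = 'https://www.cnblogs.com/') {
  const url = new URL(src, base);
  return {
    url: url.href,
    // Use only the path part, so a query string does not end up in the file name.
    fileName: path.posix.basename(url.pathname),
  };
}

// Made-up src for illustration.
console.log(imageTarget('//pic.cnblogs.com/face/1/avatar.png?id=2'));
// { url: 'https://pic.cnblogs.com/face/1/avatar.png?id=2', fileName: 'avatar.png' }
```

The demo itself builds the URL by prefixing 'https:' and takes the last path segment as the file name: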

//下载爬到的图片

function downloadImg() {

imgs.forEach((imgUrl,index) => {

//获取图片名

let imgName = imgUrl.split('/').pop();

//下载图片存放到指定目录

let stream = fs.createWriteStream(`./imgs/${imgName}`);

let req = request.get('https:' + imgUrl); //响应流

req.pipe(stream);

console.log(`开始下载图片 https:${imgUrl} --> ./imgs/${imgName}`);

} )

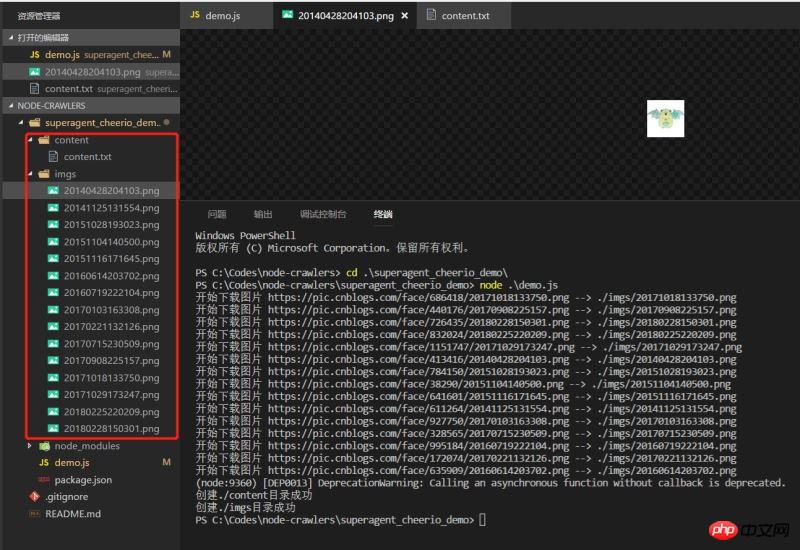

}Effect

Execute the demo and see the effect. The data has climbed down normally

A very simple demo, it may not be that rigorous, but it is always the first small step towards node.
