How to implement convolutional neural network CNN on PyTorch


This article shows how to implement a convolutional neural network (CNN) in PyTorch, using handwritten digit classification on the MNIST dataset as the example.

1. Convolutional Neural Network

A convolutional neural network (CNN) was originally designed for image recognition problems. Today its applications are not limited to images and video: it is also used for time-series signals such as audio, and for text data. The initial appeal of the CNN as a deep learning architecture was that it reduces the need for image preprocessing and avoids complex feature engineering. The first convolutional layer accepts the raw pixel-level input of the image directly. Each convolutional layer (a set of filters) extracts the most useful features from its input: early layers pick up the most basic features of the image, and later layers combine and abstract them into higher-order features. As a result, a CNN is in theory tolerant of a certain amount of scaling, translation and rotation of the image.
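To make the idea of a filter extracting a feature concrete, here is a minimal pure-Python sketch of a single 2D convolution without padding. The helper name `conv2d_valid`, the toy image and the hand-picked kernel are all assumptions for illustration; in a real CNN the kernel values are learned during training. Like `nn.Conv2d`, the sketch computes a sliding weighted sum without flipping the kernel (a cross-correlation).

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the local patch currently under the kernel
            total = sum(image[i + di][j + dj] * kernel[di][dj]
                        for di in range(k_h) for dj in range(k_w))
            row.append(total)
        feature_map.append(row)
    return feature_map


# A 4x5 toy "image": dark on the left (0), bright on the right (1)
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]

# Hand-picked 2x2 kernel that responds to a dark-to-bright vertical edge
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_valid(image, kernel)
for row in feature_map:
    print(row)  # each row is [0, 2, 0, 0]
```

The feature map is non-zero only at the column where the edge sits, which is the sense in which a filter "detects" a feature at a location.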

The key ideas of a CNN are local connections, weight sharing, and down-sampling in the pooling layers. Local connections and weight sharing reduce the number of parameters, which greatly lowers training complexity and alleviates overfitting. Weight sharing also gives the network tolerance to translation. Down-sampling in the pooling layers further reduces the size of the output and gives the model tolerance to mild deformation, which improves its generalization ability. The convolution operation can be understood as extracting similar features at many locations in the image with a small number of parameters.
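The two claims above can be checked with a short pure-Python sketch. The first part counts the parameters of a 5x5 convolution with 1 input and 16 output channels (the first layer used later in this article) and compares it with a hypothetical fully connected layer that would produce an output of the same size. The second part shows that 2x2 max pooling gives the same result when an activation moves by one pixel inside the same pooling window. The helper names and toy feature maps are assumptions for illustration only.

```python
def conv_params(in_channels, out_channels, kernel_size):
    """Weights plus one bias per output channel for a square-kernel convolution."""
    return out_channels * (in_channels * kernel_size * kernel_size) + out_channels


def dense_params(in_features, out_features):
    """Weights plus one bias per output unit for a fully connected layer."""
    return in_features * out_features + out_features


def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling with a square window (stride equals size)."""
    return [
        [max(feature_map[i + di][j + dj]
             for di in range(size) for dj in range(size))
         for j in range(0, len(feature_map[0]) - size + 1, size)]
        for i in range(0, len(feature_map) - size + 1, size)
    ]


# Part 1: weight sharing keeps the parameter count small
conv = conv_params(in_channels=1, out_channels=16, kernel_size=5)
# Hypothetical dense layer mapping a 1x28x28 input to a 16x28x28 output
dense = dense_params(in_features=1 * 28 * 28, out_features=16 * 28 * 28)
print(conv)   # 416
print(dense)  # 9847040

# Part 2: pooling tolerates a small shift inside one pooling window
centered = [[0, 0, 0, 0],
            [0, 9, 0, 0],
            [0, 0, 0, 0],
            [0, 0, 0, 0]]
shifted = [[9, 0, 0, 0],
           [0, 0, 0, 0],
           [0, 0, 0, 0],
           [0, 0, 0, 0]]
print(max_pool2d(centered))  # [[9, 0], [0, 0]]
print(max_pool2d(shifted))   # [[9, 0], [0, 0]]
```

The tolerance is only partial: a shift that moves the activation across a window boundary does change the pooled output.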

2. Code implementation

import torch
import torch.nn as nn
import torch.utils.data as Data
import torchvision
import matplotlib.pyplot as plt

torch.manual_seed(1)   # fix the random seed for reproducibility

EPOCH = 1              # number of passes over the training set
BATCH_SIZE = 50
LR = 0.001             # learning rate
DOWNLOAD_MNIST = True  # can be set to False once the dataset is on disk

# Load the training dataset
training_data = torchvision.datasets.MNIST(
    root='./mnist/',                              # where the dataset is stored
    train=True,                                   # True: training set, False: test set
    transform=torchvision.transforms.ToTensor(),  # scale pixel values to the range [0, 1]
    download=DOWNLOAD_MNIST,
)

# Print the sizes of the MNIST training images and labels
print(training_data.data.size())
print(training_data.targets.size())
# torch.Size([60000, 28, 28])
# torch.Size([60000])

# Show the first training image with its label as the title
plt.imshow(training_data.data[0].numpy(), cmap='gray')
plt.title('%i' % training_data.targets[0].item())
plt.show()

# A dataset obtained from torchvision.datasets can be passed directly to DataLoader
train_loader = Data.DataLoader(dataset=training_data, batch_size=BATCH_SIZE,
                               shuffle=True)

# Load the test dataset
test_data = torchvision.datasets.MNIST(root='./mnist/', train=False)

# Take the first 2000 test samples:
# (2000, 28, 28) to (2000, 1, 28, 28), scaled to the range [0, 1]
test_x = torch.unsqueeze(test_data.data, dim=1).type(torch.FloatTensor)[:2000] / 255
test_y = test_data.targets[:2000]


class CNN(nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        self.conv1 = nn.Sequential(                  # input: (1,28,28)
            nn.Conv2d(in_channels=1, out_channels=16, kernel_size=5,
                      stride=1, padding=2),          # (16,28,28)
            # To keep the image size unchanged after Conv2d with stride 1,
            # use padding=(kernel_size-1)/2
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2)              # (16,14,14)
        )
        self.conv2 = nn.Sequential(                  # input: (16,14,14)
            nn.Conv2d(16, 32, 5, 1, 2),              # (32,14,14)
            nn.ReLU(),
            nn.MaxPool2d(2)                          # (32,7,7)
        )
        self.out = nn.Linear(32*7*7, 10)             # 10 digit classes

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = x.view(x.size(0), -1)  # flatten (batch,32,7,7) to (batch,32*7*7)
        output = self.out(x)
        return output


cnn = CNN()
print(cnn)

# Structure printed by the PyTorch version used in the original article
# (newer versions format the layer descriptions slightly differently)
'''
CNN (
  (conv1): Sequential (
    (0): Conv2d(1, 16, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): ReLU ()
    (2): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
  )
  (conv2): Sequential (
    (0): Conv2d(16, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): ReLU ()
    (2): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
  )
  (out): Linear (1568 -> 10)
)
'''

optimizer = torch.optim.Adam(cnn.parameters(), lr=LR)
loss_function = nn.CrossEntropyLoss()

for epoch in range(EPOCH):
    for step, (b_x, b_y) in enumerate(train_loader):
        output = cnn(b_x)
        loss = loss_function(output, b_y)
        optimizer.zero_grad()  # clear gradients left over from the previous step
        loss.backward()        # backpropagate
        optimizer.step()       # update the weights

        if step % 100 == 0:
            # Evaluate on the test subset without tracking gradients
            with torch.no_grad():
                test_output = cnn(test_x)
            pred_y = torch.max(test_output, 1)[1]  # index of the largest score
            accuracy = (pred_y == test_y).sum().item() / test_y.size(0)
            print('Epoch:', epoch, '|Step:', step,
                  '|train loss:%.4f' % loss.item(), '|test accuracy:%.4f' % accuracy)

# Compare predictions with the true labels for the first 10 test samples
with torch.no_grad():
    test_output = cnn(test_x[:10])
pred_y = torch.max(test_output, 1)[1].numpy()
print(pred_y, 'prediction number')
print(test_y[:10].numpy(), 'real number')

'''
Epoch: 0 |Step: 0 |train loss:2.3145 |test accuracy:0.1040
Epoch: 0 |Step: 100 |train loss:0.5857 |test accuracy:0.8865
Epoch: 0 |Step: 200 |train loss:0.0600 |test accuracy:0.9380
Epoch: 0 |Step: 300 |train loss:0.0996 |test accuracy:0.9345
Epoch: 0 |Step: 400 |train loss:0.0381 |test accuracy:0.9645
Epoch: 0 |Step: 500 |train loss:0.0266 |test accuracy:0.9620
Epoch: 0 |Step: 600 |train loss:0.0973 |test accuracy:0.9685
Epoch: 0 |Step: 700 |train loss:0.0421 |test accuracy:0.9725
Epoch: 0 |Step: 800 |train loss:0.0654 |test accuracy:0.9710
Epoch: 0 |Step: 900 |train loss:0.1333 |test accuracy:0.9740
Epoch: 0 |Step: 1000 |train loss:0.0289 |test accuracy:0.9720
Epoch: 0 |Step: 1100 |train loss:0.0429 |test accuracy:0.9770
[7 2 1 0 4 1 4 9 5 9] prediction number
[7 2 1 0 4 1 4 9 5 9] real number
'''

3. Analysis and Interpretation

With torchvision.datasets you can quickly obtain data as a dataset object that can be passed directly to DataLoader. The train parameter controls whether the training set or the test set is loaded, and the transform parameter converts the data into the format required for training as it is loaded. The convolutional neural network is built by defining a CNN class. The convolutional blocks conv1 and conv2 and the output layer out are defined as attributes of the class, and the way the layers are connected is defined in forward. When defining the network, always pay attention to the number of neurons in each layer. The network structure of the CNN is as follows:

CNN (
  (conv1): Sequential (
    (0): Conv2d(1, 16, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): ReLU ()
    (2): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
  )
  (conv2): Sequential (
    (0): Conv2d(16, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): ReLU ()
    (2): MaxPool2d (size=(2, 2), stride=(2, 2), dilation=(1, 1))
  )
  (out): Linear (1568 -> 10)
)
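The advice about tracking the number of neurons in each layer can be checked with a little arithmetic. The sketch below applies the standard output-size formula for convolution and pooling layers, floor((size + 2 * padding - kernel_size) / stride) + 1, to the layers of this network and recovers the 1568 input features of the final Linear layer. The helper name `conv_out` is an assumption for illustration; the pooling stride of 2 reflects the fact that `nn.MaxPool2d` uses the kernel size as its stride by default.

```python
def conv_out(size, kernel_size, stride=1, padding=0):
    """Output size along one spatial dimension of a convolution or pooling layer."""
    return (size + 2 * padding - kernel_size) // stride + 1


size = 28                                                  # MNIST images are 28x28
size = conv_out(size, kernel_size=5, stride=1, padding=2)  # conv1 convolution
after_conv1 = size
size = conv_out(size, kernel_size=2, stride=2)             # conv1 max pooling
after_pool1 = size
size = conv_out(size, kernel_size=5, stride=1, padding=2)  # conv2 convolution
after_conv2 = size
size = conv_out(size, kernel_size=2, stride=2)             # conv2 max pooling
after_pool2 = size

# conv2 has 32 output channels, so the flattened vector has 32 * 7 * 7 entries
flat_features = 32 * after_pool2 * after_pool2

print(after_conv1, after_pool1, after_conv2, after_pool2)  # 28 14 14 7
print(flat_features)                                       # 1568
```

If the kernel size, padding or number of pooling layers is changed, rerunning this calculation gives the value that the first argument of nn.Linear has to match.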

Detailed explanation of PyTorch batch training and optimizer comparison

The above is the detailed content of How to implement convolutional neural network CNN on PyTorch. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1423

1423

52

52

1317

1317

25

25

1268

1268

29

29

1245

1245

24

24

How to recover deleted contacts on WeChat (simple tutorial tells you how to recover deleted contacts)

May 01, 2024 pm 12:01 PM

How to recover deleted contacts on WeChat (simple tutorial tells you how to recover deleted contacts)

May 01, 2024 pm 12:01 PM

Unfortunately, people often delete certain contacts accidentally for some reasons. WeChat is a widely used social software. To help users solve this problem, this article will introduce how to retrieve deleted contacts in a simple way. 1. Understand the WeChat contact deletion mechanism. This provides us with the possibility to retrieve deleted contacts. The contact deletion mechanism in WeChat removes them from the address book, but does not delete them completely. 2. Use WeChat’s built-in “Contact Book Recovery” function. WeChat provides “Contact Book Recovery” to save time and energy. Users can quickly retrieve previously deleted contacts through this function. 3. Enter the WeChat settings page and click the lower right corner, open the WeChat application "Me" and click the settings icon in the upper right corner to enter the settings page.

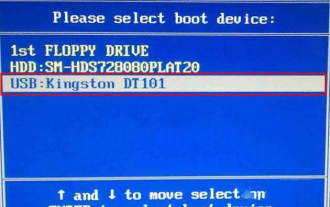

How to enter bios on Colorful motherboard? Teach you two methods

Mar 13, 2024 pm 06:01 PM

How to enter bios on Colorful motherboard? Teach you two methods

Mar 13, 2024 pm 06:01 PM

Colorful motherboards enjoy high popularity and market share in the Chinese domestic market, but some users of Colorful motherboards still don’t know how to enter the bios for settings? In response to this situation, the editor has specially brought you two methods to enter the colorful motherboard bios. Come and try it! Method 1: Use the U disk startup shortcut key to directly enter the U disk installation system. The shortcut key for the Colorful motherboard to start the U disk with one click is ESC or F11. First, use Black Shark Installation Master to create a Black Shark U disk boot disk, and then turn on the computer. When you see the startup screen, continuously press the ESC or F11 key on the keyboard to enter a window for sequential selection of startup items. Move the cursor to the place where "USB" is displayed, and then

How to write a novel in the Tomato Free Novel app. Share the tutorial on how to write a novel in Tomato Novel.

Mar 28, 2024 pm 12:50 PM

How to write a novel in the Tomato Free Novel app. Share the tutorial on how to write a novel in Tomato Novel.

Mar 28, 2024 pm 12:50 PM

Tomato Novel is a very popular novel reading software. We often have new novels and comics to read in Tomato Novel. Every novel and comic is very interesting. Many friends also want to write novels. Earn pocket money and edit the content of the novel you want to write into text. So how do we write the novel in it? My friends don’t know, so let’s go to this site together. Let’s take some time to look at an introduction to how to write a novel. Share the Tomato novel tutorial on how to write a novel. 1. First open the Tomato free novel app on your mobile phone and click on Personal Center - Writer Center. 2. Jump to the Tomato Writer Assistant page - click on Create a new book at the end of the novel.

The secret of hatching mobile dragon eggs is revealed (step by step to teach you how to successfully hatch mobile dragon eggs)

May 04, 2024 pm 06:01 PM

The secret of hatching mobile dragon eggs is revealed (step by step to teach you how to successfully hatch mobile dragon eggs)

May 04, 2024 pm 06:01 PM

Mobile games have become an integral part of people's lives with the development of technology. It has attracted the attention of many players with its cute dragon egg image and interesting hatching process, and one of the games that has attracted much attention is the mobile version of Dragon Egg. To help players better cultivate and grow their own dragons in the game, this article will introduce to you how to hatch dragon eggs in the mobile version. 1. Choose the appropriate type of dragon egg. Players need to carefully choose the type of dragon egg that they like and suit themselves, based on the different types of dragon egg attributes and abilities provided in the game. 2. Upgrade the level of the incubation machine. Players need to improve the level of the incubation machine by completing tasks and collecting props. The level of the incubation machine determines the hatching speed and hatching success rate. 3. Collect the resources required for hatching. Players need to be in the game

Summary of methods to obtain administrator rights in Win11

Mar 09, 2024 am 08:45 AM

Summary of methods to obtain administrator rights in Win11

Mar 09, 2024 am 08:45 AM

A summary of how to obtain Win11 administrator rights. In the Windows 11 operating system, administrator rights are one of the very important permissions that allow users to perform various operations on the system. Sometimes, we may need to obtain administrator rights to complete some operations, such as installing software, modifying system settings, etc. The following summarizes some methods for obtaining Win11 administrator rights, I hope it can help you. 1. Use shortcut keys. In Windows 11 system, you can quickly open the command prompt through shortcut keys.

Detailed explanation of Oracle version query method

Mar 07, 2024 pm 09:21 PM

Detailed explanation of Oracle version query method

Mar 07, 2024 pm 09:21 PM

Detailed explanation of Oracle version query method Oracle is one of the most popular relational database management systems in the world. It provides rich functions and powerful performance and is widely used in enterprises. In the process of database management and development, it is very important to understand the version of the Oracle database. This article will introduce in detail how to query the version information of the Oracle database and give specific code examples. Query the database version of the SQL statement in the Oracle database by executing a simple SQL statement

How to set font size on mobile phone (easily adjust font size on mobile phone)

May 07, 2024 pm 03:34 PM

How to set font size on mobile phone (easily adjust font size on mobile phone)

May 07, 2024 pm 03:34 PM

Setting font size has become an important personalization requirement as mobile phones become an important tool in people's daily lives. In order to meet the needs of different users, this article will introduce how to improve the mobile phone use experience and adjust the font size of the mobile phone through simple operations. Why do you need to adjust the font size of your mobile phone - Adjusting the font size can make the text clearer and easier to read - Suitable for the reading needs of users of different ages - Convenient for users with poor vision to use the font size setting function of the mobile phone system - How to enter the system settings interface - In Find and enter the "Display" option in the settings interface - find the "Font Size" option and adjust it. Adjust the font size with a third-party application - download and install an application that supports font size adjustment - open the application and enter the relevant settings interface - according to the individual

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

Paper address: https://arxiv.org/abs/2307.09283 Code address: https://github.com/THU-MIG/RepViTRepViT performs well in the mobile ViT architecture and shows significant advantages. Next, we explore the contributions of this study. It is mentioned in the article that lightweight ViTs generally perform better than lightweight CNNs on visual tasks, mainly due to their multi-head self-attention module (MSHA) that allows the model to learn global representations. However, the architectural differences between lightweight ViTs and lightweight CNNs have not been fully studied. In this study, the authors integrated lightweight ViTs into the effective