Compiling the Hadoop 1.1.2 Eclipse Plugin

You can download the plugin I have already compiled:

hadoop-eclipse-plugin-1.1.2

http://download.csdn.net/detail/wind520/5784389

Method 1:

Copy src\contrib\build-contrib.xml into the src\contrib\eclipse-plugin directory, then modify it as follows:

<?xml version="1.0"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with

this work for additional information regarding copyright ownership.

The ASF licenses this file to You under the Apache License, Version 2.0

(the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<!-- Imported by contrib/*/build.xml files to share generic targets. -->

<project name="hadoopbuildcontrib" xmlns:ivy="antlib:org.apache.ivy.ant">

<property name="name" value="${ant.project.name}"></property>

<property name="eclipse.home" location="D:/oracle/get/eclipse"></property>

<property name="version" value="1.1.2"></property>

<property name="root" value="${basedir}"></property>

<property name="hadoop.root" location="${root}/../../../"></property>

<!-- Load all the default properties, and any the user wants -->

<!-- to contribute (without having to type -D or edit this file -->

<property file="${user.home}/${name}.build.properties"></property>

<property file="${root}/build.properties"></property>

<property file="${hadoop.root}/build.properties"></property>

<property name="src.dir" location="${root}/src/java"></property>

<property name="src.test" location="${root}/src/test"></property>

<property name="src.test.data" location="${root}/src/test/data"></property>

<!-- Property added for contrib system tests -->

<property name="build-fi.dir" location="${hadoop.root}/build-fi"></property>

<property name="system-test-build-dir" location="${build-fi.dir}/system"></property>

<property name="src.test.system" location="${root}/src/test/system"></property>

<property name="src.examples" location="${root}/src/examples"></property>

<available file="${src.examples}" type="dir" property="examples.available"></available>

<available file="${src.test}" type="dir" property="test.available"></available>

<!-- Property added for contrib system tests -->

<available file="${src.test.system}" type="dir" property="test.system.available"></available>

<property name="conf.dir" location="${hadoop.root}/conf"></property>

<property name="test.junit.output.format" value="plain"></property>

<property name="test.output" value="no"></property>

<property name="test.timeout" value="900000"></property>

<property name="build.contrib.dir" location="${hadoop.root}/build/contrib"></property>

<property name="build.dir" location="${hadoop.root}/build/contrib/${name}"></property>

<property name="build.classes" location="${build.dir}/classes"></property>

<property name="build.test" location="${build.dir}/test"></property>

<property name="build.examples" location="${build.dir}/examples"></property>

<property name="hadoop.log.dir" location="${build.dir}/test/logs"></property>

<!-- all jars together -->

<property name="javac.deprecation" value="off"></property>

<property name="javac.debug" value="on"></property>

<property name="build.ivy.lib.dir" value="${hadoop.root}/build/ivy/lib"></property>

<property name="javadoc.link" value="http://java.sun.com/j2se/1.4/docs/api/"></property>

<property name="build.encoding" value="ISO-8859-1"></property>

<fileset id="lib.jars" dir="${root}" includes="lib/*.jar"></fileset>

<!-- Property added for contrib system tests -->

<property name="build.test.system" location="${build.dir}/system"></property>

<property name="build.system.classes" location="${build.test.system}/classes"></property>

<!-- IVY properties set here -->

<property name="ivy.dir" location="ivy"></property>

<property name="ivysettings.xml" location="${hadoop.root}/ivy/ivysettings.xml"></property>

<loadproperties srcfile="${ivy.dir}/libraries.properties"></loadproperties>

<loadproperties srcfile="${hadoop.root}/ivy/libraries.properties"></loadproperties>

<property name="ivy.jar" location="${hadoop.root}/ivy/ivy-${ivy.version}.jar"></property>

<property name="ivy_repo_url" value="http://repo2.maven.org/maven2/org/apache/ivy/ivy/${ivy.version}/ivy-${ivy.version}.jar"></property>

<property name="build.dir" location="build"></property>

<property name="build.ivy.dir" location="${build.dir}/ivy"></property>

<property name="build.ivy.lib.dir" location="${build.ivy.dir}/lib"></property>

<property name="build.ivy.report.dir" location="${build.ivy.dir}/report"></property>

<property name="common.ivy.lib.dir" location="${build.ivy.lib.dir}/${ant.project.name}/common"></property>

<!--this is the naming policy for artifacts we want pulled down-->

<property name="ivy.artifact.retrieve.pattern" value="${ant.project.name}/[conf]/[artifact]-[revision].[ext]"></property>

<!-- the normal classpath -->

<path id="contrib-classpath">

<pathelement location="${build.classes}"></pathelement>

<pathelement location="${hadoop.root}/build/tools"></pathelement>

<fileset refid="lib.jars"></fileset>

<pathelement location="${hadoop.root}/build/classes"></pathelement>

<fileset dir="${hadoop.root}/lib">

<include name="**/*.jar"></include>

</fileset>

<path refid="${ant.project.name}.common-classpath"></path>

<pathelement path="${clover.jar}"></pathelement>

</path>

<!-- the unit test classpath -->

<path id="test.classpath">

<pathelement location="${build.test}"></pathelement>

<pathelement location="${hadoop.root}/build/test/classes"></pathelement>

<pathelement location="${hadoop.root}/src/contrib/test"></pathelement>

<pathelement location="${conf.dir}"></pathelement>

<pathelement location="${hadoop.root}/build"></pathelement>

<pathelement location="${build.examples}"></pathelement>

<pathelement location="${hadoop.root}/build/examples"></pathelement>

<path refid="contrib-classpath"></path>

</path>

<!-- The system test classpath -->

<path id="test.system.classpath">

<pathelement location="${hadoop.root}/src/contrib/${name}/src/test/system"></pathelement>

<pathelement location="${build.test.system}"></pathelement>

<pathelement location="${build.test.system}/classes"></pathelement>

<pathelement location="${build.examples}"></pathelement>

<pathelement location="${hadoop.root}/build-fi/system/classes"></pathelement>

<pathelement location="${hadoop.root}/build-fi/system/test/classes"></pathelement>

<pathelement location="${hadoop.root}/build-fi"></pathelement>

<pathelement location="${hadoop.root}/build-fi/tools"></pathelement>

<pathelement location="${hadoop.home}"></pathelement>

<pathelement location="${hadoop.conf.dir}"></pathelement>

<pathelement location="${hadoop.conf.dir.deployed}"></pathelement>

<pathelement location="${hadoop.root}/build"></pathelement>

<pathelement location="${hadoop.root}/build/examples"></pathelement>

<pathelement location="${hadoop.root}/build-fi/test/classes"></pathelement>

<path refid="contrib-classpath"></path>

<fileset dir="${hadoop.root}/src/test/lib">

<include name="**/*.jar"></include>

<exclude name="**/excluded/"></exclude>

</fileset>

<fileset dir="${hadoop.root}/build-fi/system">

<include name="**/*.jar"></include>

<exclude name="**/excluded/"></exclude>

</fileset>

<fileset dir="${hadoop.root}/build-fi/test/testjar">

<include name="**/*.jar"></include>

<exclude name="**/excluded/"></exclude>

</fileset>

<fileset dir="${hadoop.root}/build/contrib/${name}">

<include name="**/*.jar"></include>

<exclude name="**/excluded/"></exclude>

</fileset>

</path>

<!-- to be overridden by sub-projects -->

<target name="check-contrib"></target>

<target name="init-contrib"></target>

<!-- ====================================================== -->

<!-- Stuff needed by all targets -->

<!-- ====================================================== -->

<target name="init" depends="check-contrib" unless="skip.contrib">

<echo message="contrib: ${name}"></echo>

<mkdir dir="${build.dir}"></mkdir>

<mkdir dir="${build.classes}"></mkdir>

<mkdir dir="${build.test}"></mkdir>

<!-- The below two tags added for contrib system tests -->

<mkdir dir="${build.test.system}"></mkdir>

<mkdir dir="${build.system.classes}"></mkdir>

<mkdir dir="${build.examples}"></mkdir>

<mkdir dir="${hadoop.log.dir}"></mkdir>

<antcall target="init-contrib"></antcall>

</target>

<!-- ====================================================== -->

<!-- Compile a Hadoop contrib's files -->

<!-- ====================================================== -->

<target name="compile" depends="init, ivy-retrieve-common" unless="skip.contrib">

<echo message="contrib: ${name}"></echo>

<javac encoding="${build.encoding}" srcdir="${src.dir}" includes="**/*.java" destdir="${build.classes}" debug="${javac.debug}" deprecation="${javac.deprecation}">

<classpath refid="contrib-classpath"></classpath>

</javac>

</target>

<!-- ======================================================= -->

<!-- Compile a Hadoop contrib's example files (if available) -->

<!-- ======================================================= -->

<target name="compile-examples" depends="compile" if="examples.available">

<echo message="contrib: ${name}"></echo>

<javac encoding="${build.encoding}" srcdir="${src.examples}" includes="**/*.java" destdir="${build.examples}" debug="${javac.debug}">

<classpath refid="contrib-classpath"></classpath>

</javac>

</target>

<!-- ================================================================== -->

<!-- Compile test code -->

<!-- ================================================================== -->

<target name="compile-test" depends="compile-examples" if="test.available">

<echo message="contrib: ${name}"></echo>

<javac encoding="${build.encoding}" srcdir="${src.test}" includes="**/*.java" excludes="system/**/*.java" destdir="${build.test}" debug="${javac.debug}">

<classpath refid="test.classpath"></classpath>

</javac>

</target>

<!-- ================================================================== -->

<!-- Compile system test code -->

<!-- ================================================================== -->

<target name="compile-test-system" depends="compile-examples" if="test.system.available">

<echo message="contrib: ${name}"></echo>

<javac encoding="${build.encoding}" srcdir="${src.test.system}" includes="**/*.java" destdir="${build.system.classes}" debug="${javac.debug}">

<classpath refid="test.system.classpath"></classpath>

</javac>

</target>

<!-- ====================================================== -->

<!-- Make a Hadoop contrib's jar -->

<!-- ====================================================== -->

<target name="jar" depends="compile" unless="skip.contrib">

<echo message="contrib: ${name}"></echo>

<jar jarfile="${build.dir}/hadoop-${name}-${version}.jar" basedir="${build.classes}"></jar>

</target>

<!-- ====================================================== -->

<!-- Make a Hadoop contrib's examples jar -->

<!-- ====================================================== -->

<target name="jar-examples" depends="compile-examples" if="examples.available" unless="skip.contrib">

<echo message="contrib: ${name}"></echo>

<jar jarfile="${build.dir}/hadoop-${name}-examples-${version}.jar">

<fileset dir="${build.classes}">

</fileset>

<fileset dir="${build.examples}">

</fileset>

</jar>

</target>

<!-- ====================================================== -->

<!-- Package a Hadoop contrib -->

<!-- ====================================================== -->

<target name="package" depends="jar, jar-examples" unless="skip.contrib">

<mkdir dir="${dist.dir}/contrib/${name}"></mkdir>

<copy todir="${dist.dir}/contrib/${name}" includeemptydirs="false" flatten="true">

<fileset dir="${build.dir}">

<include name="hadoop-${name}-${version}.jar"></include>

</fileset>

</copy>

</target>

<!-- ================================================================== -->

<!-- Run unit tests -->

<!-- ================================================================== -->

<target name="test" depends="compile-test, compile" if="test.available">

<echo message="contrib: ${name}"></echo>

<delete dir="${hadoop.log.dir}"></delete>

<mkdir dir="${hadoop.log.dir}"></mkdir>

<junit printsummary="yes" showoutput="${test.output}" haltonfailure="no" fork="yes" maxmemory="512m" errorproperty="tests.failed" failureproperty="tests.failed" timeout="${test.timeout}">

<sysproperty key="test.build.data" value="${build.test}/data"></sysproperty>

<sysproperty key="build.test" value="${build.test}"></sysproperty>

<sysproperty key="src.test.data" value="${src.test.data}"></sysproperty>

<sysproperty key="contrib.name" value="${name}"></sysproperty>

<!-- requires fork=yes for:

relative File paths to use the specified user.dir

classpath to use build/contrib/*.jar

-->

<sysproperty key="user.dir" value="${build.test}/data"></sysproperty>

<sysproperty key="fs.default.name" value="${fs.default.name}"></sysproperty>

<sysproperty key="hadoop.test.localoutputfile" value="${hadoop.test.localoutputfile}"></sysproperty>

<sysproperty key="hadoop.log.dir" value="${hadoop.log.dir}"></sysproperty>

<sysproperty key="taskcontroller-path" value="${taskcontroller-path}"></sysproperty>

<sysproperty key="taskcontroller-ugi" value="${taskcontroller-ugi}"></sysproperty>

<classpath refid="test.classpath"></classpath>

<formatter type="${test.junit.output.format}"></formatter>

<batchtest todir="${build.test}" unless="testcase">

<fileset dir="${src.test}" includes="**/Test*.java" excludes="**/${test.exclude}.java, system/**/*.java"></fileset>

</batchtest>

<batchtest todir="${build.test}" if="testcase">

<fileset dir="${src.test}" includes="**/${testcase}.java" excludes="system/**/*.java"></fileset>

</batchtest>

</junit>

<antcall target="checkfailure"></antcall>

</target>

<!-- ================================================================== -->

<!-- Run system tests -->

<!-- ================================================================== -->

<target name="test-system" depends="compile, compile-test-system, jar" if="test.system.available">

<delete dir="${build.test.system}/extraconf"></delete>

<mkdir dir="${build.test.system}/extraconf"></mkdir>

<property name="test.src.dir" location="${hadoop.root}/src/test"></property>

<property name="test.junit.printsummary" value="yes"></property>

<property name="test.junit.haltonfailure" value="no"></property>

<property name="test.junit.maxmemory" value="512m"></property>

<property name="test.junit.fork.mode" value="perTest"></property>

<property name="test.all.tests.file" value="${test.src.dir}/all-tests"></property>

<property name="test.build.dir" value="${hadoop.root}/build/test"></property>

<property name="basedir" value="${hadoop.root}"></property>

<property name="test.timeout" value="900000"></property>

<property name="test.junit.output.format" value="plain"></property>

<property name="test.tools.input.dir" value="${basedir}/src/test/tools/data"></property>

<property name="c++.src" value="${basedir}/src/c++"></property>

<property name="test.include" value="Test*"></property>

<property name="c++.libhdfs.src" value="${c++.src}/libhdfs"></property>

<property name="test.build.data" value="${build.test.system}/data"></property>

<property name="test.cache.data" value="${build.test.system}/cache"></property>

<property name="test.debug.data" value="${build.test.system}/debug"></property>

<property name="test.log.dir" value="${build.test.system}/logs"></property>

<patternset id="empty.exclude.list.id"></patternset>

<exec executable="sed" inputstring="${os.name}" outputproperty="nonspace.os">

<arg value="s/ /_/g"></arg>

</exec>

<property name="build.platform" value="${nonspace.os}-${os.arch}-${sun.arch.data.model}"></property>

<property name="build.native" value="${hadoop.root}/build/native/${build.platform}"></property>

<property name="lib.dir" value="${hadoop.root}/lib"></property>

<property name="install.c++.examples" value="${hadoop.root}/build/c++-examples/${build.platform}"></property>

<condition property="tests.testcase">

<and>

<isset property="testcase"></isset>

</and>

</condition>

<property name="test.junit.jvmargs" value="-ea"></property>

<macro-system-test-runner test.file="${test.all.tests.file}" classpath="test.system.classpath" test.dir="${build.test.system}" fileset.dir="${hadoop.root}/src/contrib/${name}/src/test/system" hadoop.conf.dir.deployed="${hadoop.conf.dir.deployed}">

</macro-system-test-runner>

</target>

<macrodef name="macro-system-test-runner">

<attribute name="test.file"></attribute>

<attribute name="classpath"></attribute>

<attribute name="test.dir"></attribute>

<attribute name="fileset.dir"></attribute>

<attribute name="hadoop.conf.dir.deployed" default=""></attribute>

<sequential>

<delete dir="@{test.dir}/data"></delete>

<mkdir dir="@{test.dir}/data"></mkdir>

<delete dir="@{test.dir}/logs"></delete>

<mkdir dir="@{test.dir}/logs"></mkdir>

<copy file="${test.src.dir}/hadoop-policy.xml" todir="@{test.dir}/extraconf"></copy>

<copy file="${test.src.dir}/fi-site.xml" todir="@{test.dir}/extraconf"></copy>

<junit showoutput="${test.output}" printsummary="${test.junit.printsummary}" haltonfailure="${test.junit.haltonfailure}" fork="yes" forkmode="${test.junit.fork.mode}" maxmemory="${test.junit.maxmemory}" dir="${basedir}" timeout="${test.timeout}" errorproperty="tests.failed" failureproperty="tests.failed">

<jvmarg value="${test.junit.jvmargs}"></jvmarg>

<sysproperty key="java.net.preferIPv4Stack" value="true"></sysproperty>

<sysproperty key="test.build.data" value="@{test.dir}/data"></sysproperty>

<sysproperty key="test.tools.input.dir" value="${test.tools.input.dir}"></sysproperty>

<sysproperty key="test.cache.data" value="${test.cache.data}"></sysproperty>

<sysproperty key="test.debug.data" value="${test.debug.data}"></sysproperty>

<sysproperty key="hadoop.log.dir" value="@{test.dir}/logs"></sysproperty>

<sysproperty key="test.src.dir" value="@{fileset.dir}"></sysproperty>

<sysproperty key="taskcontroller-path" value="${taskcontroller-path}"></sysproperty>

<sysproperty key="taskcontroller-ugi" value="${taskcontroller-ugi}"></sysproperty>

<sysproperty key="test.build.extraconf" value="@{test.dir}/extraconf"></sysproperty>

<sysproperty key="hadoop.policy.file" value="hadoop-policy.xml"></sysproperty>

<sysproperty key="java.library.path" value="${build.native}/lib:${lib.dir}/native/${build.platform}"></sysproperty>

<sysproperty key="install.c++.examples" value="${install.c++.examples}"></sysproperty>

<syspropertyset dynamic="no">

<propertyref name="hadoop.tmp.dir"></propertyref>

</syspropertyset>

<!-- set compile.c++ in the child jvm only if it is set -->

<syspropertyset dynamic="no">

<propertyref name="compile.c++"></propertyref>

</syspropertyset>

<!-- Pass probability specifications to the spawn JVM -->

<syspropertyset id="FaultProbabilityProperties">

<propertyref regex="fi.*"></propertyref>

</syspropertyset>

<sysproperty key="test.system.hdrc.deployed.hadoopconfdir" value="@{hadoop.conf.dir.deployed}"></sysproperty>

<classpath refid="@{classpath}"></classpath>

<formatter type="${test.junit.output.format}"></formatter>

<batchtest todir="@{test.dir}" unless="testcase">

<fileset dir="@{fileset.dir}" excludes="**/${test.exclude}.java aop/** system/**">

<patternset>

<includesfile name="@{test.file}"></includesfile>

</patternset>

</fileset>

</batchtest>

<batchtest todir="@{test.dir}" if="testcase">

<fileset dir="@{fileset.dir}" includes="**/${testcase}.java"></fileset>

</batchtest>

</junit>

<antcall target="checkfailure"></antcall>

</sequential>

</macrodef>

<target name="checkfailure" if="tests.failed">

<touch file="${build.contrib.dir}/testsfailed"></touch>

<fail unless="continueOnFailure">Contrib Tests failed!</fail>

</target>

<!-- ================================================================== -->

<!-- Clean. Delete the build files, and their directories -->

<!-- ================================================================== -->

<target name="clean">

<echo message="contrib: ${name}"></echo>

<delete dir="${build.dir}"></delete>

</target>

<target name="ivy-probe-antlib">

<condition property="ivy.found">

<typefound uri="antlib:org.apache.ivy.ant" name="cleancache"></typefound>

</condition>

</target>

<target name="ivy-download" description="To download ivy " unless="offline">

<get src="${ivy_repo_url}" dest="${ivy.jar}" usetimestamp="true"></get>

</target>

<target name="ivy-init-antlib" depends="ivy-download,ivy-probe-antlib" unless="ivy.found">

<typedef uri="antlib:org.apache.ivy.ant" onerror="fail" loaderref="ivyLoader">

<classpath>

<pathelement location="${ivy.jar}"></pathelement>

</classpath>

</typedef>

<fail>

<condition>

<not>

<typefound uri="antlib:org.apache.ivy.ant" name="cleancache"></typefound>

</not>

</condition>

You need Apache Ivy 2.0 or later from http://ant.apache.org/

It could not be loaded from ${ivy_repo_url}

</fail>

</target>

<target name="ivy-init" depends="ivy-init-antlib">

<configure settingsid="${ant.project.name}.ivy.settings" file="${ivysettings.xml}"></configure>

</target>

<target name="ivy-resolve-common" depends="ivy-init">

<resolve settingsref="${ant.project.name}.ivy.settings" conf="common"></resolve>

</target>

<target name="ivy-retrieve-common" depends="ivy-resolve-common" description="Retrieve Ivy-managed artifacts for the compile/test configurations">

<retrieve settingsref="${ant.project.name}.ivy.settings" pattern="${build.ivy.lib.dir}/${ivy.artifact.retrieve.pattern}" sync="true"></retrieve>

<cachepath pathid="${ant.project.name}.common-classpath" conf="common"></cachepath>

</target>

</project>
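
The listing above does not mark which lines were changed. Compared with the build-contrib.xml shipped in the Hadoop 1.1.2 source tree, the local additions appear to be the two properties below. This is an inference from the listing, not something the post states, and the eclipse.home path is the author's own machine, so adjust it to your Eclipse installation:

```xml
<!-- Assumed local additions near the top of the copied build-contrib.xml -->

<!-- Path to the local Eclipse installation (the value shown is the author's) -->
<property name="eclipse.home" location="D:/oracle/get/eclipse"/>

<!-- Hadoop release being built; it ends up in the plugin jar file name -->
<property name="version" value="1.1.2"/>
```

Without eclipse.home, the check-contrib target in build.xml sets skip.contrib and the plugin is not built at all.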

Modify the build.xml file in the eclipse-plugin directory so that it imports the build-contrib.xml copied into the same directory:

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with

this work for additional information regarding copyright ownership.

The ASF licenses this file to You under the Apache License, Version 2.0

(the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<project default="jar" name="eclipse-plugin">

<import file="build-contrib.xml"></import>

<path id="eclipse-sdk-jars">

<fileset dir="${eclipse.home}/plugins/">

<include name="org.eclipse.ui*.jar"></include>

<include name="org.eclipse.jdt*.jar"></include>

<include name="org.eclipse.core*.jar"></include>

<include name="org.eclipse.equinox*.jar"></include>

<include name="org.eclipse.debug*.jar"></include>

<include name="org.eclipse.osgi*.jar"></include>

<include name="org.eclipse.swt*.jar"></include>

<include name="org.eclipse.jface*.jar"></include>

<include name="org.eclipse.team.cvs.ssh2*.jar"></include>

<include name="com.jcraft.jsch*.jar"></include>

</fileset>

</path>

<!-- Override classpath to include Eclipse SDK jars -->

<path id="classpath">

<pathelement location="${build.classes}"></pathelement>

<pathelement location="${hadoop.root}/hadoop-core-1.1.2.jar"></pathelement>

<pathelement location="${hadoop.root}/build/classes"></pathelement>

<path refid="eclipse-sdk-jars"></path>

</path>

<!-- Skip building if eclipse.home is unset. -->

<target name="check-contrib" unless="eclipse.home">

<property name="skip.contrib" value="yes"></property>

<echo message="eclipse.home unset: skipping eclipse plugin"></echo>

</target>

<target name="compile" depends="init, ivy-retrieve-common" unless="skip.contrib">

<echo message="contrib: ${name}"></echo>

<javac encoding="${build.encoding}" srcdir="${src.dir}" includes="**/*.java" destdir="${build.classes}" debug="${javac.debug}" deprecation="${javac.deprecation}">

<classpath refid="classpath"></classpath>

</javac>

</target>

<!-- Override jar target to specify manifest -->

<target name="jar" depends="compile" unless="skip.contrib">

<mkdir dir="${build.dir}/lib"></mkdir>

<copy file="${hadoop.root}/hadoop-core-${version}.jar" tofile="${build.dir}/lib/hadoop-core.jar" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-cli-1.2.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-configuration-1.6.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-httpclient-3.0.1.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/jackson-core-asl-1.8.8.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-lang-2.4.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/jackson-mapper-asl-1.8.8.jar" todir="${build.dir}/lib" verbose="true"></copy>

<jar jarfile="${build.dir}/hadoop-${name}-${version}.jar" manifest="${root}/META-INF/MANIFEST.MF">

<fileset dir="${build.dir}" includes="classes/ lib/"></fileset>

<fileset dir="${root}" includes="resources/ plugin.xml"></fileset>

</jar>

</target>

</project>
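
The six library copies in the jar target could also be written as a single copy over a fileset. This is only an optional sketch, not the author's build file; the file names are taken from the listing above and must still match the Bundle-ClassPath entries in MANIFEST.MF. The hadoop-core copy stays a separate task because it renames the file.

```xml
<!-- Optional alternative to the six separate <copy> tasks for lib/*.jar -->
<copy todir="${build.dir}/lib" verbose="true">
  <fileset dir="${hadoop.root}/lib">
    <include name="commons-cli-1.2.jar"/>
    <include name="commons-configuration-1.6.jar"/>
    <include name="commons-httpclient-3.0.1.jar"/>
    <include name="commons-lang-2.4.jar"/>
    <include name="jackson-core-asl-1.8.8.jar"/>
    <include name="jackson-mapper-asl-1.8.8.jar"/>
  </fileset>
</copy>
```

One behavioural difference: a copy with a file attribute fails the build when the file is missing, while a fileset silently skips names it cannot find, so the explicit form in the listing is stricter.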

Modify MANIFEST.MF under src\contrib\eclipse-plugin\META-INF:

Manifest-Version: 1.0
Bundle-ManifestVersion: 2
Bundle-Name: MapReduce Tools for Eclipse
Bundle-SymbolicName: org.apache.hadoop.eclipse;singleton:=true
Bundle-Version: 0.18
Bundle-Activator: org.apache.hadoop.eclipse.Activator
Bundle-Localization: plugin
Require-Bundle: org.eclipse.ui,
 org.eclipse.core.runtime,
 org.eclipse.jdt.launching,
 org.eclipse.debug.core,
 org.eclipse.jdt,
 org.eclipse.jdt.core,
 org.eclipse.core.resources,
 org.eclipse.ui.ide,
 org.eclipse.jdt.ui,
 org.eclipse.debug.ui,
 org.eclipse.jdt.debug.ui,
 org.eclipse.core.expressions,
 org.eclipse.ui.cheatsheets,
 org.eclipse.ui.console,
 org.eclipse.ui.navigator,
 org.eclipse.core.filesystem,
 org.apache.commons.logging
Eclipse-LazyStart: true
Bundle-ClassPath: classes/,
 lib/hadoop-core.jar,
 lib/commons-cli-1.2.jar,
 lib/commons-configuration-1.6.jar,
 lib/commons-httpclient-3.0.1.jar,
 lib/commons-lang-2.4.jar,
 lib/jackson-core-asl-1.8.8.jar,
 lib/jackson-mapper-asl-1.8.8.jar
Bundle-Vendor: Apache Hadoop

Compile:

Buildfile: E:\big\Hadoop\hadoop-1.1.2\src\contrib\eclipse-plugin\build.xml

check-contrib:

init:

[echo] contrib: eclipse-plugin

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\classes

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\test

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\system

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\system\classes

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\examples

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\test\logs

init-contrib:

ivy-download:

[get] Getting: http://repo2.maven.org/maven2/org/apache/ivy/ivy/2.1.0/ivy-2.1.0.jar

[get] To: E:\big\Hadoop\hadoop-1.1.2\ivy\ivy-2.1.0.jar

[get] Not modified - so not downloaded

ivy-probe-antlib:

ivy-init-antlib:

ivy-init:

[ivy:configure] :: Ivy 2.1.0 - 20090925235825 :: http://ant.apache.org/ivy/ ::

[ivy:configure] :: loading settings :: file = E:\big\Hadoop\hadoop-1.1.2\ivy\ivysettings.xml

ivy-resolve-common:

[ivy:resolve] :: resolving dependencies :: org.apache.hadoop#eclipse-plugin;working@lenovo-PC

[ivy:resolve] confs: [common]

[ivy:resolve] found commons-logging#commons-logging;1.0.4 in maven2

[ivy:resolve] found log4j#log4j;1.2.15 in maven2

[ivy:resolve] :: resolution report :: resolve 335ms :: artifacts dl 8ms

---------------------------------------------------------------------

| | modules || artifacts |

| conf | number| search|dwnlded|evicted|| number|dwnlded|

---------------------------------------------------------------------

| common | 2 | 0 | 0 | 0 || 2 | 0 |

---------------------------------------------------------------------

ivy-retrieve-common:

[ivy:retrieve] :: retrieving :: org.apache.hadoop#eclipse-plugin [sync]

[ivy:retrieve] confs: [common]

[ivy:retrieve] 2 artifacts copied, 0 already retrieved (419kB/114ms)

[ivy:cachepath] DEPRECATED: 'ivy.conf.file' is deprecated, use 'ivy.settings.file' instead

[ivy:cachepath] :: loading settings :: file = E:\big\Hadoop\hadoop-1.1.2\ivy\ivysettings.xml

compile:

[echo] contrib: eclipse-plugin

[javac] E:\big\Hadoop\hadoop-1.1.2\src\contrib\eclipse-plugin\build.xml:62: warning: 'includeantruntime' was not set, defaulting to build.sysclasspath=last; set to false for repeatable builds

[javac] Compiling 45 source files to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\classes

[javac] Note: Some input files use or override a deprecated API.

[javac] Note: Recompile with -Xlint:deprecation for details.

[javac] Note: Some input files use unchecked or unsafe operations.

[javac] Note: Recompile with -Xlint:unchecked for details.

jar:

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\hadoop-core-1.1.2.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\hadoop-core.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-cli-1.2.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-cli-1.2.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-configuration-1.6.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-configuration-1.6.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-httpclient-3.0.1.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-httpclient-3.0.1.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\jackson-core-asl-1.8.8.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\jackson-core-asl-1.8.8.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-lang-2.4.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-lang-2.4.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\jackson-mapper-asl-1.8.8.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\jackson-mapper-asl-1.8.8.jar

[jar] Building jar: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\hadoop-eclipse-plugin-1.1.2.jar

BUILD SUCCESSFUL

Total time: 10 seconds
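
The 'includeantruntime' warning in the compile step is harmless here, but it can be silenced by setting the attribute explicitly on the javac task in build.xml. A minimal sketch, assuming Ant 1.8 or later (where this attribute exists) and assuming the plugin sources do not need Ant's own classes on the compile classpath:

```xml
<!-- Same javac call as in the compile target, with includeantruntime set explicitly -->
<javac encoding="${build.encoding}" srcdir="${src.dir}" includes="**/*.java"
       destdir="${build.classes}" debug="${javac.debug}"
       deprecation="${javac.deprecation}" includeantruntime="false">
  <classpath refid="classpath"/>
</javac>
```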

Method 2:

Modify the build.xml file in the eclipse-plugin directory:

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<project default="jar" name="eclipse-plugin">

<property name="name" value="${ant.project.name}"></property>

<property name="root" value="${basedir}"></property>

<property name="hadoop.root" location="E:/big/Hadoop/hadoop-1.1.2"></property>

<property name="version" value="1.1.2"></property>

<property name="eclipse.home" location="D:/oracle/get/eclipse"></property>

<property name="build.dir" location="${hadoop.root}/build/contrib/${name}"></property>

<property name="build.classes" location="${build.dir}/classes"></property>

<property name="src.dir" location="${root}/src/java"></property>

<path id="eclipse-sdk-jars">

<fileset dir="${eclipse.home}/plugins/">

<include name="org.eclipse.ui*.jar"></include>

<include name="org.eclipse.jdt*.jar"></include>

<include name="org.eclipse.core*.jar"></include>

<include name="org.eclipse.equinox*.jar"></include>

<include name="org.eclipse.debug*.jar"></include>

<include name="org.eclipse.osgi*.jar"></include>

<include name="org.eclipse.swt*.jar"></include>

<include name="org.eclipse.jface*.jar"></include>

<include name="org.eclipse.team.cvs.ssh2*.jar"></include>

<include name="com.jcraft.jsch*.jar"></include>

</fileset>

</path>

<!-- Override classpath to include Eclipse SDK jars -->

<path id="classpath">

<fileset dir="${hadoop.root}">

<include name="*.jar"></include>

</fileset>

<path refid="eclipse-sdk-jars"></path>

</path>

<target name="compile">

<mkdir dir="${build.dir}/classes"></mkdir>

<javac encoding="ISO-8859-1" srcdir="${src.dir}" includes="**/*.java" destdir="${build.classes}" debug="on" deprecation="off">

<classpath refid="classpath"></classpath>

</javac>

</target>

<!-- Override jar target to specify manifest-->

<target name="jar" depends="compile">

<mkdir dir="${build.dir}/lib"></mkdir>

<copy file="${hadoop.root}/hadoop-core-${version}.jar" tofile="${build.dir}/lib/hadoop-core.jar" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-cli-1.2.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-configuration-1.6.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-httpclient-3.0.1.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/jackson-core-asl-1.8.8.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/commons-lang-2.4.jar" todir="${build.dir}/lib" verbose="true"></copy>

<copy file="${hadoop.root}/lib/jackson-mapper-asl-1.8.8.jar" todir="${build.dir}/lib" verbose="true"></copy>

<jar jarfile="${build.dir}/hadoop-${name}-${version}.jar" manifest="${root}/META-INF/MANIFEST.MF">

<fileset dir="${build.dir}" includes="classes/ lib/"></fileset>

<fileset dir="${root}" includes="resources/ plugin.xml"></fileset>

</jar>

</target>

</project>

Modify MANIFEST.MF under src\contrib\eclipse-plugin\META-INF

in the same way as in Method 1.
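The six `<copy>` tasks in the `jar` target above that pull jars from `${hadoop.root}/lib` could be collapsed into one task with a nested fileset. This is only a sketch of an alternative, not what the author ran; it reuses the property names and jar file names from the build file above and introduces nothing new. The `hadoop-core` copy stays separate because it renames the file via `tofile`.

```xml
<!-- Sketch only: a possible replacement for the six lib/ copies in the "jar" target. -->
<!-- Property names (build.dir, hadoop.root) and jar names are taken from the build file above. -->
<copy todir="${build.dir}/lib" verbose="true">
  <fileset dir="${hadoop.root}/lib">
    <include name="commons-cli-1.2.jar"/>
    <include name="commons-configuration-1.6.jar"/>
    <include name="commons-httpclient-3.0.1.jar"/>
    <include name="commons-lang-2.4.jar"/>
    <include name="jackson-core-asl-1.8.8.jar"/>
    <include name="jackson-mapper-asl-1.8.8.jar"/>
  </fileset>
</copy>
```

One trade-off to be aware of: a `<copy file="...">` task fails the build when the file is missing, whereas a fileset silently skips names that do not match. The explicit per-file form used by the author is therefore the safer choice if the jar versions in your Hadoop distribution might differ.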

To avoid conflicting with Method 1, I saved the modified build file as buildOK.xml.

Build:

Buildfile: E:\big\Hadoop\hadoop-1.1.2\src\contrib\eclipse-plugin\buildOK.xml

compile:

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\classes

[javac] E:\big\Hadoop\hadoop-1.1.2\src\contrib\eclipse-plugin\buildOK.xml:42: warning: 'includeantruntime' was not set, defaulting to build.sysclasspath=last; set to false for repeatable builds

[javac] Compiling 45 source files to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\classes

[javac] Note: Some input files use or override a deprecated API.

[javac] Note: Recompile with -Xlint:deprecation for details.

[javac] Note: Some input files use unchecked or unsafe operations.

[javac] Note: Recompile with -Xlint:unchecked for details.

jar:

[mkdir] Created dir: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\hadoop-core-1.1.2.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\hadoop-core.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-cli-1.2.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-cli-1.2.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-configuration-1.6.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-configuration-1.6.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-httpclient-3.0.1.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-httpclient-3.0.1.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\jackson-core-asl-1.8.8.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\jackson-core-asl-1.8.8.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\commons-lang-2.4.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\commons-lang-2.4.jar

[copy] Copying 1 file to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib

[copy] Copying E:\big\Hadoop\hadoop-1.1.2\lib\jackson-mapper-asl-1.8.8.jar to E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\lib\jackson-mapper-asl-1.8.8.jar

[jar] Building jar: E:\big\Hadoop\hadoop-1.1.2\build\contrib\eclipse-plugin\hadoop-eclipse-plugin-1.1.2.jar

BUILD SUCCESSFUL

Total time: 2 seconds
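The `'includeantruntime' was not set` warning in the log above is harmless, but it can probably be silenced by setting that attribute explicitly on the `<javac>` task. The fragment below is a sketch that assumes the plugin sources do not need Ant's own classes on the compile classpath; if compilation fails after this change, that assumption does not hold and the attribute should be removed again. Apart from the added attribute, everything is copied from the `compile` target in buildOK.xml.

```xml
<!-- Sketch only: the author's <javac> task with includeantruntime set explicitly. -->
<!-- Assumption: the plugin code does not depend on Ant's runtime jars. -->
<javac encoding="ISO-8859-1" srcdir="${src.dir}" includes="**/*.java"
       destdir="${build.classes}" debug="on" deprecation="off"
       includeantruntime="false">
  <classpath refid="classpath"/>
</javac>
```

Setting the attribute to `false` keeps the compile classpath limited to the Hadoop and Eclipse jars declared in the `classpath` path, which is what the warning means by "repeatable builds".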

Heiße KI -Werkzeuge

Undresser.AI Undress

KI-gestützte App zum Erstellen realistischer Aktfotos

AI Clothes Remover

Online-KI-Tool zum Entfernen von Kleidung aus Fotos.

Undress AI Tool

Ausziehbilder kostenlos

Clothoff.io

KI-Kleiderentferner

Video Face Swap

Tauschen Sie Gesichter in jedem Video mühelos mit unserem völlig kostenlosen KI-Gesichtstausch-Tool aus!

Heißer Artikel

Heiße Werkzeuge

Notepad++7.3.1

Einfach zu bedienender und kostenloser Code-Editor

SublimeText3 chinesische Version

Chinesische Version, sehr einfach zu bedienen

Senden Sie Studio 13.0.1

Leistungsstarke integrierte PHP-Entwicklungsumgebung

Dreamweaver CS6

Visuelle Webentwicklungstools

SublimeText3 Mac-Version

Codebearbeitungssoftware auf Gottesniveau (SublimeText3)

Heiße Themen

1393

1393

52

52

1206

1206

24

24

So passen Sie die Hintergrundfarbeinstellungen in Eclipse an

Jan 28, 2024 am 09:08 AM

So passen Sie die Hintergrundfarbeinstellungen in Eclipse an

Jan 28, 2024 am 09:08 AM

Wie stelle ich die Hintergrundfarbe in Eclipse ein? Eclipse ist eine bei Entwicklern beliebte integrierte Entwicklungsumgebung (IDE) und kann für die Entwicklung in einer Vielzahl von Programmiersprachen verwendet werden. Es ist sehr leistungsstark und flexibel und Sie können das Erscheinungsbild der Benutzeroberfläche und des Editors über Einstellungen anpassen. In diesem Artikel wird erläutert, wie Sie die Hintergrundfarbe in Eclipse festlegen, und es werden spezifische Codebeispiele bereitgestellt. 1. Ändern Sie die Hintergrundfarbe des Editors. Öffnen Sie Eclipse und rufen Sie das Menü „Windows“ auf. Wählen Sie „Einstellungen“. Navigieren Sie nach links

PyCharm-Einsteigerhandbuch: Umfassendes Verständnis der Plug-In-Installation!

Feb 25, 2024 pm 11:57 PM

PyCharm-Einsteigerhandbuch: Umfassendes Verständnis der Plug-In-Installation!

Feb 25, 2024 pm 11:57 PM

PyCharm ist eine leistungsstarke und beliebte integrierte Entwicklungsumgebung (IDE) für Python, die eine Fülle von Funktionen und Tools bereitstellt, damit Entwickler Code effizienter schreiben können. Der Plug-In-Mechanismus von PyCharm ist ein leistungsstarkes Tool zur Erweiterung seiner Funktionen. Durch die Installation verschiedener Plug-Ins können PyCharm um verschiedene Funktionen und benutzerdefinierte Funktionen erweitert werden. Daher ist es für PyCharm-Neulinge von entscheidender Bedeutung, die Installation von Plug-Ins zu verstehen und zu beherrschen. In diesem Artikel erhalten Sie eine detaillierte Einführung in die vollständige Installation des PyCharm-Plug-Ins.

![Fehler beim Laden des Plugins in Illustrator [Behoben]](https://img.php.cn/upload/article/000/465/014/170831522770626.jpg?x-oss-process=image/resize,m_fill,h_207,w_330) Fehler beim Laden des Plugins in Illustrator [Behoben]

Feb 19, 2024 pm 12:00 PM

Fehler beim Laden des Plugins in Illustrator [Behoben]

Feb 19, 2024 pm 12:00 PM

Erscheint beim Starten von Adobe Illustrator eine Meldung über einen Fehler beim Laden des Plug-Ins? Bei einigen Illustrator-Benutzern ist dieser Fehler beim Öffnen der Anwendung aufgetreten. Der Meldung folgt eine Liste problematischer Plugins. Diese Fehlermeldung weist darauf hin, dass ein Problem mit dem installierten Plug-In vorliegt, es kann jedoch auch andere Gründe haben, beispielsweise eine beschädigte Visual C++-DLL-Datei oder eine beschädigte Einstellungsdatei. Wenn dieser Fehler auftritt, werden wir Sie in diesem Artikel bei der Behebung des Problems unterstützen. Lesen Sie daher weiter unten weiter. Fehler beim Laden des Plug-Ins in Illustrator Wenn Sie beim Versuch, Adobe Illustrator zu starten, die Fehlermeldung „Fehler beim Laden des Plug-Ins“ erhalten, können Sie Folgendes verwenden: Als Administrator

Profi-Anleitung: Expertenrat und Schritte zur erfolgreichen Installation des Eclipse Lombok-Plug-Ins

Jan 28, 2024 am 09:15 AM

Profi-Anleitung: Expertenrat und Schritte zur erfolgreichen Installation des Eclipse Lombok-Plug-Ins

Jan 28, 2024 am 09:15 AM

Professionelle Anleitung: Expertenrat und Schritte zur Installation des Lombok-Plug-Ins in Eclipse. Es sind spezifische Codebeispiele erforderlich. Zusammenfassung: Lombok ist eine Java-Bibliothek, die das Schreiben von Java-Code durch Annotationen vereinfacht und einige leistungsstarke Tools bereitstellt. In diesem Artikel werden die Leser in die Schritte zur Installation und Konfiguration des Lombok-Plug-Ins in Eclipse eingeführt und einige spezifische Codebeispiele bereitgestellt, damit die Leser das Lombok-Plug-In besser verstehen und verwenden können. Laden Sie zuerst das Lombok-Plug-in herunter, das wir benötigen

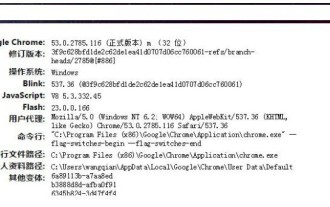

Was ist das Installationsverzeichnis der Chrome-Plug-in-Erweiterung?

Mar 08, 2024 am 08:55 AM

Was ist das Installationsverzeichnis der Chrome-Plug-in-Erweiterung?

Mar 08, 2024 am 08:55 AM

Was ist das Installationsverzeichnis der Chrome-Plug-in-Erweiterung? Unter normalen Umständen lautet das Standardinstallationsverzeichnis von Chrome-Plug-In-Erweiterungen wie folgt: 1. Der Standard-Installationsverzeichnis-Speicherort von Chrome-Plug-Ins in Windows XP: C:\DocumentsandSettings\Benutzername\LocalSettings\ApplicationData\Google\Chrome\UserData\ Default\Extensions2. Chrome in Windows7 Der Standardinstallationsverzeichnisspeicherort des Plug-Ins: C:\Benutzer\Benutzername\AppData\Local\Google\Chrome\User

Teilen Sie drei Lösungen mit, warum der Edge-Browser dieses Plug-in nicht unterstützt

Mar 13, 2024 pm 04:34 PM

Teilen Sie drei Lösungen mit, warum der Edge-Browser dieses Plug-in nicht unterstützt

Mar 13, 2024 pm 04:34 PM

Wenn Benutzer den Edge-Browser verwenden, fügen sie möglicherweise einige Plug-Ins hinzu, um weitere Anforderungen zu erfüllen. Beim Hinzufügen eines Plug-Ins wird jedoch angezeigt, dass dieses Plug-In nicht unterstützt wird. Heute stellt Ihnen der Herausgeber drei Lösungen vor. Methode 1: Versuchen Sie es mit einem anderen Browser. Methode 2: Der Flash Player im Browser ist möglicherweise veraltet oder fehlt, sodass das Plug-in nicht unterstützt wird. Sie können die neueste Version von der offiziellen Website herunterladen. Methode 3: Drücken Sie gleichzeitig die Tasten „Strg+Umschalt+Entf“. Klicken Sie auf „Daten löschen“ und öffnen Sie den Browser erneut.

Aufzeigen von Lösungen für Probleme bei der Ausführung von Eclipse-Code: Unterstützung bei der Behebung verschiedener Ausführungsfehler

Jan 28, 2024 am 09:22 AM

Aufzeigen von Lösungen für Probleme bei der Ausführung von Eclipse-Code: Unterstützung bei der Behebung verschiedener Ausführungsfehler

Jan 28, 2024 am 09:22 AM

Die Lösung für Probleme bei der Ausführung von Eclipse-Code wird aufgezeigt: Sie hilft Ihnen, verschiedene Fehler bei der Ausführung von Code zu beseitigen und erfordert spezifische Codebeispiele. Einführung: Eclipse ist eine häufig verwendete integrierte Entwicklungsumgebung (IDE), die in der Java-Entwicklung weit verbreitet ist. Obwohl Eclipse über leistungsstarke Funktionen und eine benutzerfreundliche Benutzeroberfläche verfügt, ist es unvermeidlich, dass beim Schreiben und Debuggen von Code verschiedene Ausführungsprobleme auftreten. In diesem Artikel werden einige häufig auftretende Probleme bei der Ausführung von Eclipse-Code aufgezeigt und Lösungen bereitgestellt. Bitte beachten Sie, dass dies zum besseren Verständnis der Leser erforderlich ist

Schritt-für-Schritt-Anleitung zum Ändern der Hintergrundfarbe mit Eclipse

Jan 28, 2024 am 08:28 AM

Schritt-für-Schritt-Anleitung zum Ändern der Hintergrundfarbe mit Eclipse

Jan 28, 2024 am 08:28 AM

Bringen Sie Ihnen Schritt für Schritt bei, wie Sie die Hintergrundfarbe in Eclipse ändern. Dazu sind spezifische Codebeispiele erforderlich. Eclipse ist eine sehr beliebte integrierte Entwicklungsumgebung (IDE), die häufig zum Schreiben und Debuggen von Java-Projekten verwendet wird. Standardmäßig ist die Hintergrundfarbe von Eclipse weiß, einige Benutzer möchten jedoch möglicherweise die Hintergrundfarbe nach ihren Wünschen ändern oder die Belastung der Augen verringern. In diesem Artikel erfahren Sie Schritt für Schritt, wie Sie die Hintergrundfarbe in Eclipse ändern, und stellen spezifische Codebeispiele bereit. Schritt 1: Öffnen Sie zuerst Eclipse