Go introduced a tri-color garbage collector in version 1.5. After many improvements, GC pause times in the current version, 1.9, can be extremely short. Shorter pauses mean shorter response times, which makes Go even better suited to writing network services.

This article explains how the tri-color GC in Go is implemented by analyzing the Go runtime source code.

Basic Concepts

Memory Structure

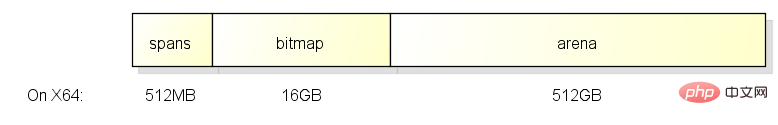

When a Go program starts, it reserves a block of memory at contiguous virtual addresses.

This memory is divided into three areas of 512 MB, 16 GB, and 512 GB. Their roles are as follows:

arena

The arena area is what we usually call the heap. Memory that Go allocates from the heap lives in this area.

Bitmap

The bitmap area records which addresses in the arena hold objects, and which addresses inside an object contain pointers.

One byte (8 bits) in the bitmap area corresponds to four pointer-sized words in the arena, that is, 2 bits per pointer-sized word.

The size of the bitmap area is therefore 512 GB / pointer size (8 bytes) / 4 = 16 GB.

The two bits for each pointer-sized word indicate whether scanning should continue and whether the word contains a pointer.

The mapping between bitmap bytes and the arena starts at the end of the bitmap, so as memory is allocated the two areas grow outward in opposite directions.

Spans

The spans area records which span a given page in the arena belongs to. Spans themselves are described below.

One pointer (8 bytes) in the spans area corresponds to one page in the arena (one page = 8 KB in Go).

The size of the spans area is therefore 512 GB / page size (8 KB) * pointer size (8 bytes) = 512 MB.
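The sizes of the bitmap and spans areas follow directly from the arena size. As a quick check of the arithmetic above, here is a minimal sketch; the constant and function names are illustrative and are not taken from the runtime.

```go
package main

import "fmt"

const (
	arenaSize = 512 << 30 // 512 GB arena
	ptrSize   = 8         // pointer size in bytes on a 64-bit platform
	pageSize  = 8 << 10   // 8 KB page
)

// bitmapSize: one bitmap byte covers four pointer-sized words of the arena.
func bitmapSize(arena uint64) uint64 { return arena / ptrSize / 4 }

// spansSize: one pointer is stored for every arena page.
func spansSize(arena uint64) uint64 { return arena / pageSize * ptrSize }

func main() {
	fmt.Printf("bitmap: %d GB\n", bitmapSize(arenaSize)>>30) // bitmap: 16 GB
	fmt.Printf("spans:  %d MB\n", spansSize(arenaSize)>>20)  // spans:  512 MB
}
```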

When Are Objects Allocated on the Heap?

Many articles and books about Go mention that Go decides automatically which objects go on the stack and which go on the heap.

Put simply, if the contents of an object may still be accessed after the function that created it has returned, the object is allocated on the heap.

Typical situations in which objects are allocated on the heap include:

1. Returning a pointer to the object

2. Passing a pointer to the object to other functions

3. Using the object in a closure that needs to modify it

4. Using new

These are typical cases rather than strict rules: the compiler decides per object, so a value created with new can still stay on the stack if it does not escape.

In C, it is very dangerous for a function to return a pointer to an object on its own stack. In Go this is safe, because such an object is automatically allocated on the heap.

The process of deciding whether an object has to be allocated on the heap is called "escape analysis".
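The difference is easy to see with two small functions. The sketch below uses made-up names (point, newPoint, sum); building it with go build -gcflags=-m makes the compiler print its escape decisions, which may vary between compiler versions and with inlining.

```go
package main

import "fmt"

type point struct{ x, y int }

// newPoint returns a pointer to its local variable, so p outlives the call
// and typically has to be placed on the heap.
func newPoint(x, y int) *point {
	p := point{x, y}
	return &p
}

// sum only uses p inside the function, so p can stay on the stack.
func sum(x, y int) int {
	p := point{x, y}
	return p.x + p.y
}

func main() {
	p := newPoint(1, 2)
	fmt.Println(p.x+p.y, sum(3, 4))
}
```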

GC Bitmap

When marking, the GC needs to know which locations contain pointers. The bitmap area described above, for example, covers the pointer information for the arena.

In addition, the GC needs to know where the stack contains pointers. Because the stack is not part of the arena, the pointer information for the stack is stored in the function information.

Finally, when an object is allocated, the bitmap area has to be filled in according to the type of the object. The source of that pointer information is the type information.

In summary, Go has the following GC bitmaps:

1. Bitmap area: covers the arena and uses 2 bits for each pointer-sized word

2. Function information: covers the stack space of a function and uses 1 bit for each pointer-sized word (located in stackmap.bytedata)

3. Type information: copied into the bitmap area when an object is allocated and uses 1 bit for each pointer-sized word (located in _type.gcdata)

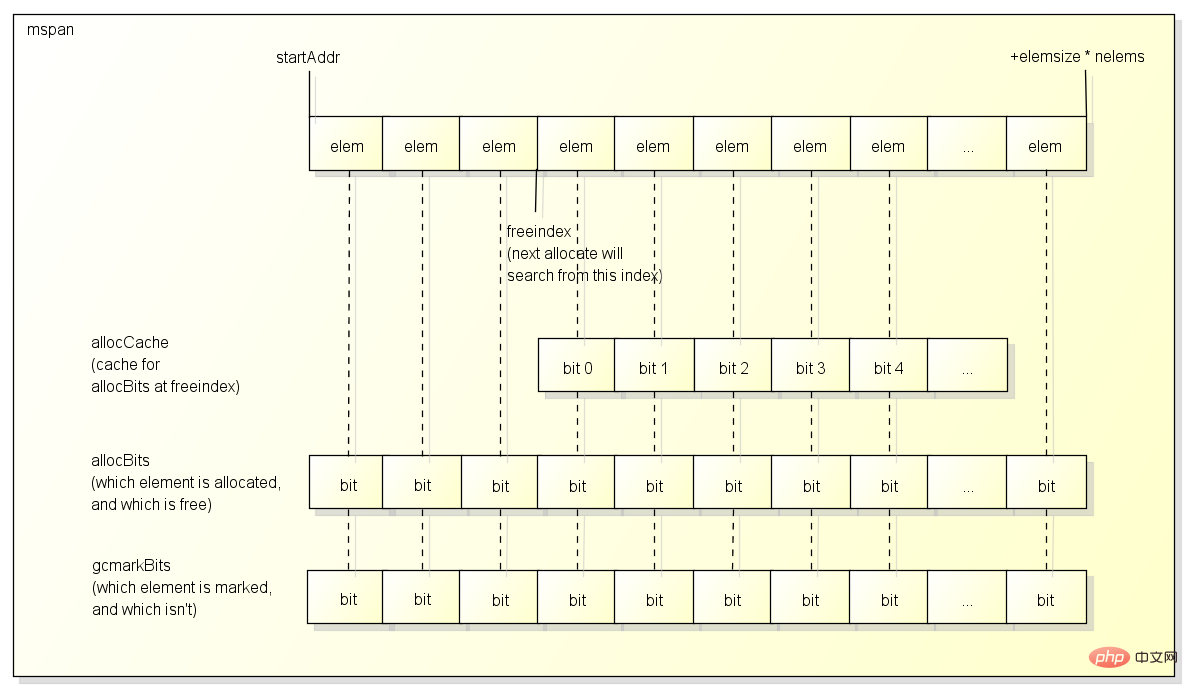

Span

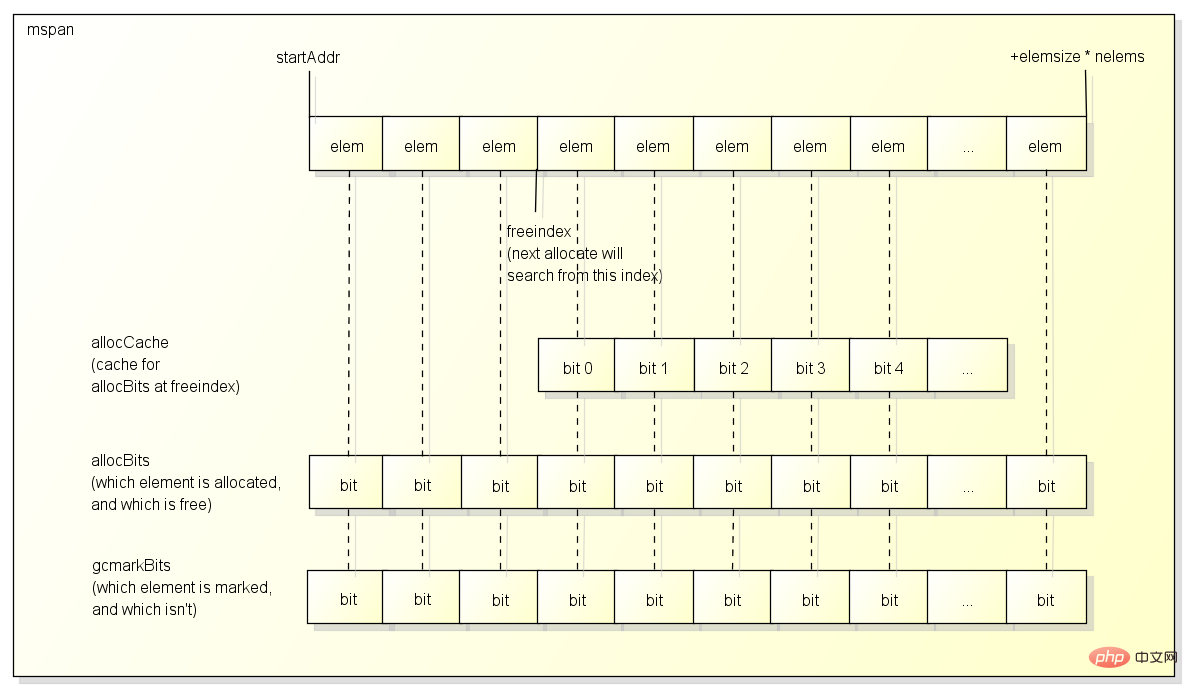

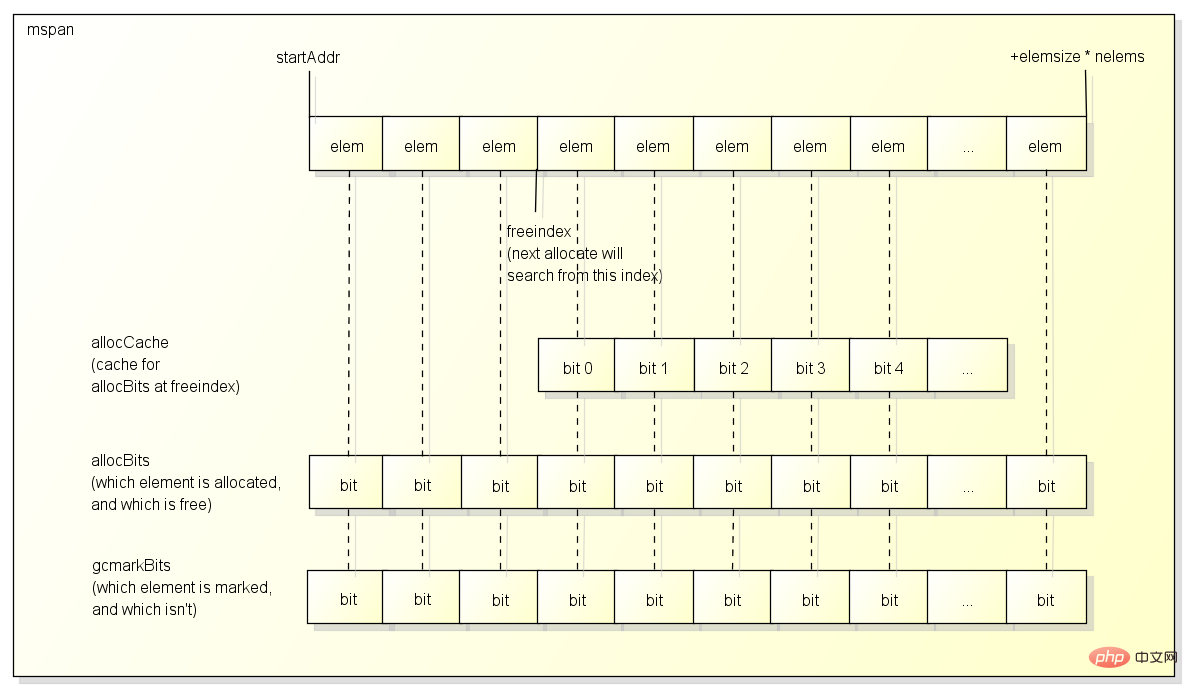

A span is a block used for allocating objects. Its internal structure is as follows.

Normally a span contains several elements of the same size, and each element holds one object, except in two cases:

1. The span holds a large object; in this case the span has only one element

2. The span holds tiny objects that contain no pointers; in this case one element of the span holds several objects

A span has a freeindex that marks where the search should start for the next allocation. After an allocation, freeindex is increased. Every element before freeindex is allocated; elements after freeindex may or may not be allocated.

After each GC, a span may have some of its elements reclaimed. allocBits marks which elements are allocated and which are not.

With freeindex + allocBits, allocation can skip the elements that are already allocated and place the object in an unallocated one. Because reading allocBits on every allocation would be slow, the span has an integer field, allocCache, that caches the part of the bitmap starting at freeindex; the cached bits are the inverse of the original values.

gcmarkBits marks which objects are live during a GC.

Note that the memory for the span structure itself is allocated from the system; the spans area and the bitmap area described above are only indexes.
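The interplay of freeindex, allocBits, and allocCache can be illustrated with a toy model. The sketch below is not the runtime's mspan: toySpan and its methods are hypothetical, the span is limited to 64 elements so that a single uint64 covers it, and math/bits stands in for the runtime's internal bit-counting helper.

```go
package main

import (
	"fmt"
	"math/bits"
)

// toySpan is a hypothetical, simplified stand-in for the runtime's mspan.
type toySpan struct {
	nelems     uint
	freeindex  uint   // where the next search starts
	allocBits  uint64 // bit i set: element i is allocated
	allocCache uint64 // inverted allocBits, shifted so that bit 0 is freeindex
}

func newToySpan(nelems uint, allocBits uint64) *toySpan {
	return &toySpan{nelems: nelems, allocBits: allocBits, allocCache: ^allocBits}
}

// alloc returns the index of the next free element, or false if the span is full.
func (s *toySpan) alloc() (uint, bool) {
	// A set bit in allocCache means "free", so the number of trailing zeros
	// is the number of allocated elements to skip (64 if nothing is free).
	skip := uint(bits.TrailingZeros64(s.allocCache))
	idx := s.freeindex + skip
	if skip == 64 || idx >= s.nelems {
		s.freeindex = s.nelems
		return 0, false
	}
	s.allocCache >>= skip + 1 // keep bit 0 aligned with the new freeindex
	s.freeindex = idx + 1
	s.allocBits |= 1 << idx
	return idx, true
}

func main() {
	// Elements 0, 1 and 3 are already allocated (bit pattern 1011).
	s := newToySpan(8, 0xb)
	for {
		idx, ok := s.alloc()
		if !ok {
			break
		}
		fmt.Println("allocated element", idx) // 2, 4, 5, 6, 7
	}
}
```

Inverting the bitmap is what makes a single trailing-zero count enough to find the next free element.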

Span Types

Depending on element size, spans are divided into 67 types:

// class  bytes/obj  bytes/span  objects  tail waste  max waste
//     1          8        8192     1024           0     87.50%
//     2         16        8192      512           0     43.75%
//     3         32        8192      256           0     46.88%
//     4         48        8192      170          32     31.52%
//     5         64        8192      128           0     23.44%
//     6         80        8192      102          32     19.07%
//     7         96        8192       85          32     15.95%
//     8        112        8192       73          16     13.56%
//     9        128        8192       64           0     11.72%
//    10        144        8192       56         128     11.82%
//    11        160        8192       51          32      9.73%
//    12        176        8192       46          96      9.59%
//    13        192        8192       42         128      9.25%
//    14        208        8192       39          80      8.12%
//    15        224        8192       36         128      8.15%
//    16        240        8192       34          32      6.62%
//    17        256        8192       32           0      5.86%
//    18        288        8192       28         128     12.16%
//    19        320        8192       25         192     11.80%
//    20        352        8192       23          96      9.88%
//    21        384        8192       21         128      9.51%
//    22        416        8192       19         288     10.71%
//    23        448        8192       18         128      8.37%
//    24        480        8192       17          32      6.82%
//    25        512        8192       16           0      6.05%
//    26        576        8192       14         128     12.33%
//    27        640        8192       12         512     15.48%
//    28        704        8192       11         448     13.93%
//    29        768        8192       10         512     13.94%
//    30        896        8192        9         128     15.52%
//    31       1024        8192        8           0     12.40%
//    32       1152        8192        7         128     12.41%
//    33       1280        8192        6         512     15.55%
//    34       1408       16384       11         896     14.00%
//    35       1536        8192        5         512     14.00%
//    36       1792       16384        9         256     15.57%
//    37       2048        8192        4           0     12.45%
//    38       2304       16384        7         256     12.46%
//    39       2688        8192        3         128     15.59%
//    40       3072       24576        8           0     12.47%
//    41       3200       16384        5         384      6.22%
//    42       3456       24576        7         384      8.83%
//    43       4096        8192        2           0     15.60%
//    44       4864       24576        5         256     16.65%
//    45       5376       16384        3         256     10.92%
//    46       6144       24576        4           0     12.48%
//    47       6528       32768        5         128      6.23%
//    48       6784       40960        6         256      4.36%
//    49       6912       49152        7         768      3.37%
//    50       8192        8192        1           0     15.61%
//    51       9472       57344        6         512     14.28%
//    52       9728       49152        5         512      3.64%
//    53      10240       40960        4           0      4.99%
//    54      10880       32768        3         128      6.24%
//    55      12288       24576        2           0     11.45%
//    56      13568       40960        3         256      9.99%
//    57      14336       57344        4           0      5.35%
//    58      16384       16384        1           0     12.49%
//    59      18432       73728        4           0     11.11%
//    60      19072       57344        3         128      3.57%
//    61      20480       40960        2           0      6.87%
//    62      21760       65536        3         256      6.25%
//    63      24576       24576        1           0     11.45%
//    64      27264       81920        3         128     10.00%
//    65      28672       57344        2           0      4.91%
//    66      32768       32768        1           0     12.50%

Take the span of type (class) 1 as an example: each element is 8 bytes, the span itself occupies one page (8 KB), and it can hold 1024 objects in total.

When an object is allocated, its size determines which span type is used. For example, a 16-byte object uses span 2, while 17-byte and 32-byte objects both use span 3, which means that objects of some sizes waste a certain amount of space when allocated.

You may notice that the largest element size above is 32 KB. Where are objects larger than 32 KB allocated? As the allocator code later in this article shows, such large objects are allocated directly from the heap in a span of class 0 that holds a single object; together with the 66 classes listed above, this gives the 67 span types.
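Choosing a span type amounts to rounding the requested size up to the next class size. The sketch below shows the idea with a linear search over the first nine classes of the table; classSizes and sizeClass are illustrative names, and the runtime itself uses precomputed lookup tables rather than a loop.

```go
package main

import "fmt"

// classSizes holds the element sizes of classes 1 to 9 from the table above.
// Index 0 is a placeholder for class 0, which is reserved for large objects.
var classSizes = []uintptr{0, 8, 16, 32, 48, 64, 80, 96, 112, 128}

// sizeClass returns the smallest class whose elements can hold size bytes,
// together with the number of bytes wasted inside the element.
// ok is false if size does not fit any class in this shortened table.
func sizeClass(size uintptr) (class int, waste uintptr, ok bool) {
	for c := 1; c < len(classSizes); c++ {
		if size <= classSizes[c] {
			return c, classSizes[c] - size, true
		}
	}
	return 0, 0, false
}

func main() {
	for _, size := range []uintptr{16, 17, 32} {
		class, waste, _ := sizeClass(size)
		fmt.Printf("%d bytes -> class %d, %d bytes wasted\n", size, class, waste)
	}
}
```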

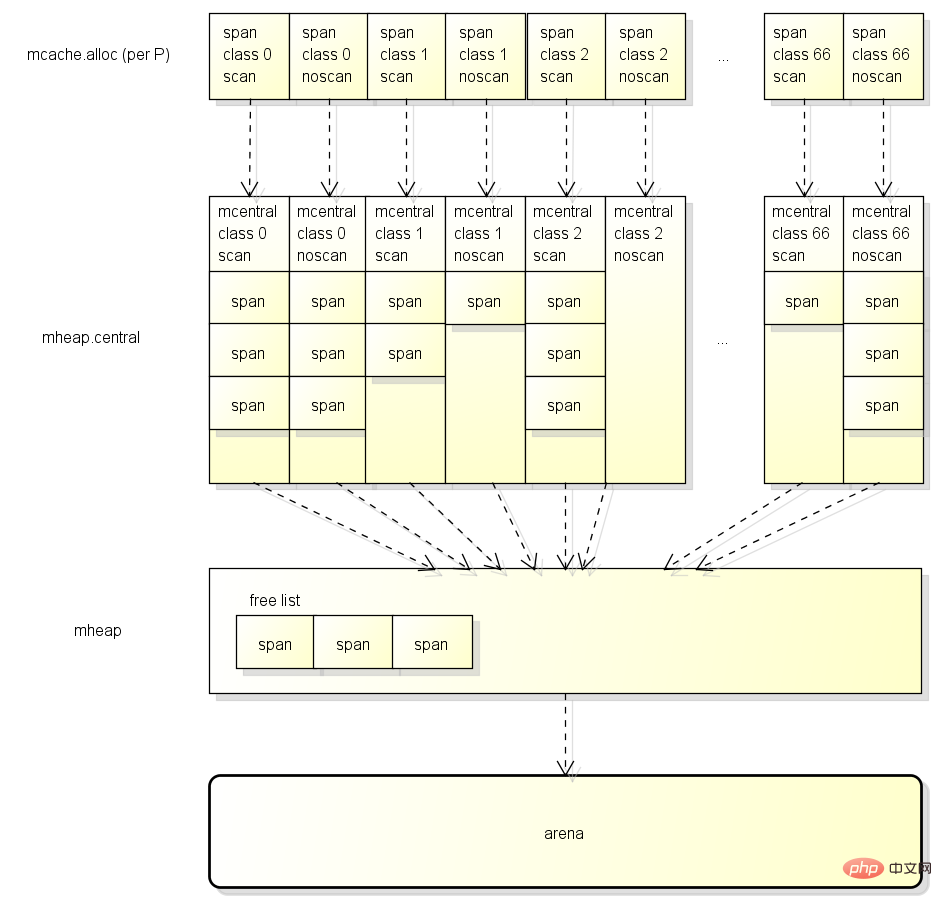

Where Spans Are Kept

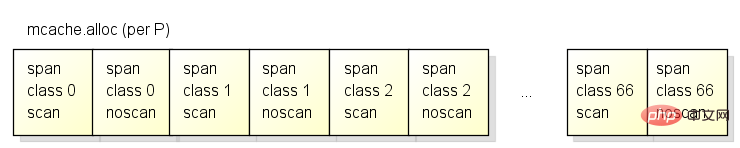

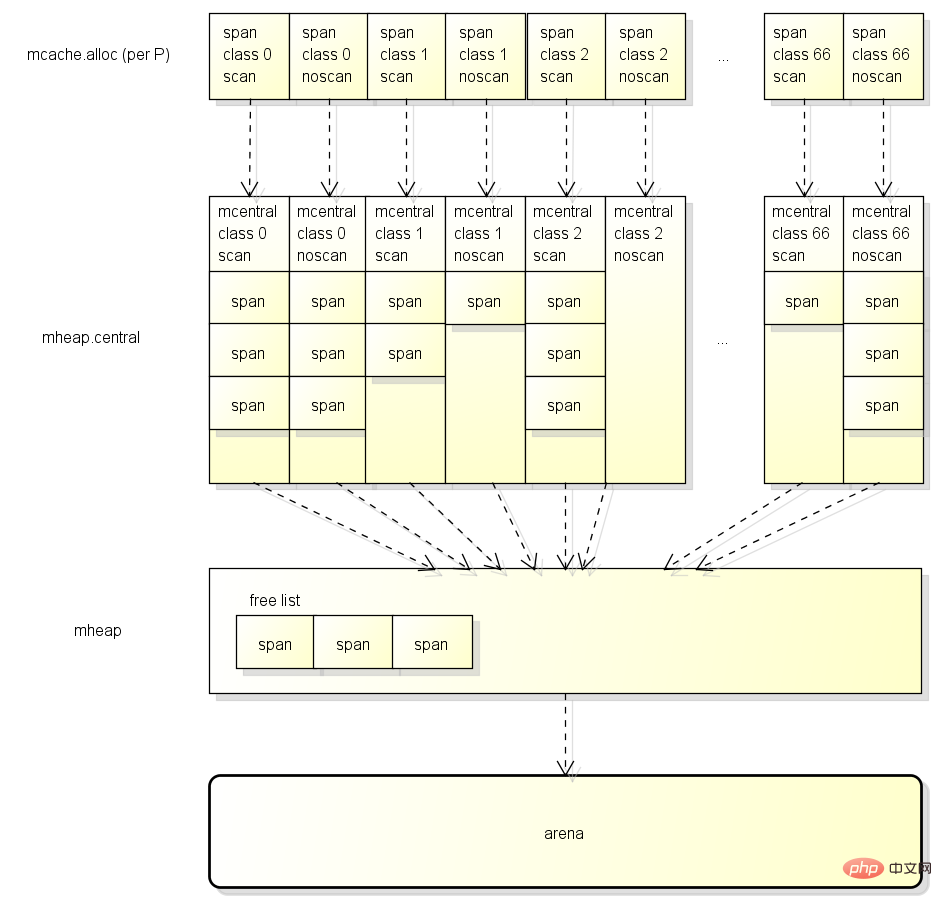

To get better performance when allocating objects, every P has a cache of spans (also called mcache).

Each P caches spans by span type, 67*2=134 spans in total.

The factor of two comes from the distinction between scan and noscan spans: if an object contains pointers, a scan span is used to allocate it; if it contains no pointers, a noscan span is used.

When an object is allocated, a suitable span is looked up first in the cache of the P (mcache): if there is a cached span that is not full, it is used. This step needs no lock.

The overall allocation flow is as follows. First, the allocator checks whether the GC is running; if it is, and the current G has allocated a certain amount of memory, the G has to help the GC with some of its work.

This mechanism is called GC assist, and it prevents memory from being allocated faster than the GC can reclaim it.

Next, the allocator determines whether the object is small or large. A large object is allocated directly from the heap by calling largeAlloc.

A small object is allocated from a span, and an available span is found in three stages. The first stage looks in the cache of the P (mcache).

The second stage looks in the global cache (mcentral), which keeps lists of available spans.

The last stage asks mheap, which also keeps free lists of spans. If that fails as well, the span is allocated from the arena.
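The three stages described above can be sketched with toy types. Everything here is hypothetical (span, heap, central, cache, and the fixed capacity of two elements per fresh span); the point is only the order of the lookups and where locks are taken.

```go
package main

import (
	"fmt"
	"sync"
)

// span is a toy stand-in for mspan: it only tracks how many elements are free.
type span struct{ free int }

// heap plays the role of mheap: the last resort, protected by a global lock.
type heap struct{ mu sync.Mutex }

func (h *heap) allocSpan() *span {
	h.mu.Lock()
	defer h.mu.Unlock()
	return &span{free: 2} // pretend every fresh span holds two elements
}

// central plays the role of mcentral: a locked list of spans with free space.
type central struct {
	mu    sync.Mutex
	spans []*span
	heap  *heap
}

// cacheSpan hands out a span and reports which tier supplied it.
func (c *central) cacheSpan() (*span, string) {
	c.mu.Lock()
	if n := len(c.spans); n > 0 {
		s := c.spans[n-1]
		c.spans = c.spans[:n-1]
		c.mu.Unlock()
		return s, "mcentral"
	}
	c.mu.Unlock()
	return c.heap.allocSpan(), "mheap"
}

// cache plays the role of the per-P mcache; it is used without any lock.
type cache struct {
	cur     *span
	central *central
}

// alloc takes one element and returns the tier that satisfied the request.
func (c *cache) alloc() string {
	tier := "mcache"
	if c.cur == nil || c.cur.free == 0 {
		c.cur, tier = c.central.cacheSpan()
	}
	c.cur.free--
	return tier
}

func main() {
	ce := &central{spans: []*span{{free: 1}}, heap: &heap{}}
	c := &cache{central: ce}
	for i := 0; i < 4; i++ {
		fmt.Println(c.alloc()) // mcentral, mheap, mcache, mheap
	}
}
```

Only the slow paths take a lock, which is why the per-P cache keeps the common case cheap.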

The data types involved in allocating objects include:

g: as mentioned in a previous article, a G is a goroutine

mspan: the block used for allocating objects

When Go allocates an object from the heap, it calls the newobject function:

// implementation of new builtin

// compiler (both frontend and SSA backend) knows the signature

// of this function

func newobject(typ *_type) unsafe.Pointer {

return mallocgc(typ.size, typ, true)

}

newobject calls the mallocgc function:

// Allocate an object of size bytes.

// Small objects are allocated from the per-P cache's free lists.

// Large objects (> 32 kB) are allocated straight from the heap.

func mallocgc(size uintptr, typ *_type, needzero bool) unsafe.Pointer {

if gcphase == _GCmarktermination {

throw("mallocgc called with gcphase == _GCmarktermination")

}

if size == 0 {

return unsafe.Pointer(&zerobase)

}

if debug.sbrk != 0 {

align := uintptr(16)

if typ != nil {

align = uintptr(typ.align)

}

return persistentalloc(size, align, &memstats.other_sys)

}

// Decide whether this G has to assist the GC

// gcBlackenEnabled is turned on during the GC mark phase

// assistG is the G to charge for this allocation, or nil if

// GC is not currently active.

var assistG *g

if gcBlackenEnabled != 0 {

// Charge the current user G for this allocation.

assistG = getg()

if assistG.m.curg != nil {

assistG = assistG.m.curg

}

// Charge the allocation against the G. We'll account

// for internal fragmentation at the end of mallocgc.

assistG.gcAssistBytes -= int64(size)

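// How much GC work to assist with is decided from the size being allocated

// The exact algorithm is described below, in the discussion of the collector

if assistG.gcAssistBytes < 0 {

// [...]

// its size >= maxTinySize.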

//

// SetFinalizer has a special case for objects potentially coming

// from tiny allocator, it such case it allows to set finalizers

// for an inner byte of a memory block.

//

// The main targets of tiny allocator are small strings and

// standalone escaping variables. On a json benchmark

// the allocator reduces number of allocations by ~12% and

// reduces heap size by ~20%.

off := c.tinyoffset

// Align tiny pointer for required (conservative) alignment.

if size&7 == 0 {

off = round(off, 8)

} else if size&3 == 0 {

off = round(off, 4)

} else if size&1 == 0 {

off = round(off, 2)

}

if off+size <= maxTinySize && c.tiny != 0 {

// [...]

if dataSize > typ.size {

// Array allocation. If there are any

// pointers, GC has to scan to the last

// element.

if typ.ptrdata != 0 {

scanSize = dataSize - typ.size + typ.ptrdata

}

} else {

scanSize = typ.ptrdata

}

c.local_scan += scanSize

}

// Memory barrier. Because x86 and x64 do not reorder stores, this is only a compiler barrier here; in assembly it is just a ret

// Ensure that the stores above that initialize x to

// type-safe memory and set the heap bits occur before

// the caller can make x observable to the garbage

// collector. Otherwise, on weakly ordered machines,

// the garbage collector could follow a pointer to x,

// but see uninitialized memory or stale heap bits.

publicationBarrier()

// If a GC is in progress, the newly allocated object must be marked "black" immediately so that it is not reclaimed

// Allocate black during GC.

// All slots hold nil so no scanning is needed.

// This may be racing with GC so do it atomically if there can be

// a race marking the bit.

if gcphase != _GCoff {

gcmarknewobject(uintptr(x), size, scanSize)

}

// Race detector handling (used to detect data races between threads)

if raceenabled {

racemalloc(x, size)

}

// Memory sanitizer handling (used to detect memory problems such as dangerous pointers)

if msanenabled {

msanmalloc(x, size)

}

// Allow the current G to be preempted again

mp.mallocing = 0

releasem(mp)

// Debug tracing

if debug.allocfreetrace != 0 {

tracealloc(x, size, typ)

}

// Record the allocation in the profiler

if rate := MemProfileRate; rate > 0 {

if size < [...]

Next, let's look at how an object is allocated from a span. nextFreeFast is called first to try a fast allocation:

// nextFreeFast returns the next free object if one is quickly available.

// Otherwise it returns 0.

func nextFreeFast(s *mspan) gclinkptr {

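// Find the position of the first non-zero bit, i.e. which element is unallocated

theBit := sys.Ctz64(s.allocCache) // Is there a free object in the allocCache?

// An unallocated element was found

if theBit < 64 {

result := s.freeindex + uintptr(theBit)

if result < s.nelems {

freeidx := result + 1

if freeidx%64 == 0 && freeidx != s.nelems {

return 0

}

s.allocCache >>= uint(theBit + 1)

s.freeindex = freeidx

// Return the address of the element

v := gclinkptr(result*s.elemsize + s.base())

// Increment the count of allocated elements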

s.allocCount++

return v

}

}

return 0

}

If an unallocated element cannot be found quickly after freeindex, nextFree has to be called for more involved handling:

// nextFree returns the next free object from the cached span if one is available.

// Otherwise it refills the cache with a span with an available object and

// returns that object along with a flag indicating that this was a heavy

// weight allocation. If it is a heavy weight allocation the caller must

// determine whether a new GC cycle needs to be started or if the GC is active

// whether this goroutine needs to assist the GC.

func (c *mcache) nextFree(spc spanClass) (v gclinkptr, s *mspan, shouldhelpgc bool) {

// Find the next freeindex and update allocCache

s = c.alloc[spc]

shouldhelpgc = false

freeIndex := s.nextFreeIndex()

// If every element in the span is allocated, a new span is needed

if freeIndex == s.nelems {

// The span is full.

if uintptr(s.allocCount) != s.nelems {

println("runtime: s.allocCount=", s.allocCount, "s.nelems=", s.nelems)

throw("s.allocCount != s.nelems && freeIndex == s.nelems")

}

// Request a new span

systemstack(func() {

c.refill(spc)

})

// Pick up the newly obtained span and flag that the caller should check whether to run a GC

shouldhelpgc = true

s = c.alloc[spc]

freeIndex = s.nextFreeIndex()

}

if freeIndex >= s.nelems {

throw("freeIndex is not valid")

}

// Return the address of the element

v = gclinkptr(freeIndex*s.elemsize + s.base())

// Increment the count of allocated elements

s.allocCount++

if uintptr(s.allocCount) > s.nelems {

println("s.allocCount=", s.allocCount, "s.nelems=", s.nelems)

throw("s.allocCount > s.nelems")

}

return

}

If the span of the given type in mcache is full, refill has to be called to request a new span:

// Gets a span that has a free object in it and assigns it

// to be the cached span for the given sizeclass. Returns this span.

func (c *mcache) refill(spc spanClass) *mspan {

_g_ := getg()

// Prevent the G from being preempted

_g_.m.locks++

// Return the current cached span to the central lists.

s := c.alloc[spc]

// Make sure every element of the current span is allocated

if uintptr(s.allocCount) != s.nelems {

throw("refill of span with free space remaining")

}

// Clear the span's incache flag, unless it is the globally shared empty span (the default value of the span pointers in mcache)

if s != &emptymspan {

s.incache = false

}

// Request a new span from mcentral

// Get a new cached span from the central lists.

s = mheap_.central[spc].mcentral.cacheSpan()

if s == nil {

throw("out of memory")

}

if uintptr(s.allocCount) == s.nelems {

throw("span has no free space")

}

// Install the new span in mcache

c.alloc[spc] = s

// Allow the G to be preempted again

_g_.m.locks--

return s

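}

Requesting a new span from mcentral goes through the cacheSpan function.

mcentral first tries to reuse an existing span from its internal lists; if that fails, it requests one from mheap.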

// Allocate a span to use in an MCache.

func (c *mcentral) cacheSpan() *mspan {

// Have the current G assist with part of the sweep work

// Deduct credit for this span allocation and sweep if necessary.

spanBytes := uintptr(class_to_allocnpages[c.spanclass.sizeclass()]) * _PageSize

deductSweepCredit(spanBytes, 0)

// Lock mcentral, because several Ms (Ps) may access it at the same time

lock(&c.lock)

traceDone := false

if trace.enabled {

traceGCSweepStart()

}

sg := mheap_.sweepgen

retry:

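// mcentral has two lists of spans

// - nonempty: the span is known to have at least one unallocated element

// - empty: the span is not known to have an unallocated element

// The nonempty list is searched first

// sweepgen is increased by 2 on every GC

// - sweepgen == global sweepgen: the span has been swept

// - sweepgen == global sweepgen-1: the span is being swept

// - sweepgen == global sweepgen-2: the span is waiting to be swept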

var s *mspan

for s = c.nonempty.first; s != nil; s = s.next {

// If the span is waiting to be swept, try to atomically change its sweepgen to the global sweepgen-1

if s.sweepgen == sg-2 && atomic.Cas(&s.sweepgen, sg-2, sg-1) {

// On success, move the span to the empty list, sweep it, and jump to havespan

c.nonempty.remove(s)

c.empty.insertBack(s)

unlock(&c.lock)

s.sweep(true)

goto havespan

}

// If the span is being swept by another thread, skip it

if s.sweepgen == sg-1 {

// the span is being swept by background sweeper, skip

continue

}

// The span has already been swept

// Spans in the nonempty list are known to have at least one unallocated element, so it can be used directly

// we have a nonempty span that does not require sweeping, allocate from it

c.nonempty.remove(s)

c.empty.insertBack(s)

unlock(&c.lock)

goto havespan

}

// Search the empty list

for s = c.empty.first; s != nil; s = s.next {

// If the span is waiting to be swept, try to atomically change its sweepgen to the global sweepgen-1

if s.sweepgen == sg-2 && atomic.Cas(&s.sweepgen, sg-2, sg-1) {

// Move the span to the end of the empty list

// we have an empty span that requires sweeping,

// sweep it and see if we can free some space in it

c.empty.remove(s)

// swept spans are at the end of the list

c.empty.insertBack(s)

unlock(&c.lock)

// Try to sweep it

s.sweep(true)

// After sweeping, check whether the span has unallocated objects; if so, it can be used

freeIndex := s.nextFreeIndex()

if freeIndex != s.nelems {

s.freeindex = freeIndex

goto havespan

}

lock(&c.lock)

// the span is still empty after sweep

// it is already in the empty list, so just retry

goto retry

}

// If the span is being swept by another thread, skip it

if s.sweepgen == sg-1 {

// the span is being swept by background sweeper, skip

continue

}

// No span with unallocated objects was found

// already swept empty span,

// all subsequent ones must also be either swept or in process of sweeping

break

}

if trace.enabled {

traceGCSweepDone()

traceDone = true

}

unlock(&c.lock)

// No span with unallocated objects was found, so one has to be allocated from mheap

// Once allocated, it is added to the empty list

// Replenish central list if empty.

s = c.grow()

if s == nil {

return nil

}

lock(&c.lock)

c.empty.insertBack(s)

unlock(&c.lock)

// At this point s is a non-empty span, queued at the end of the empty list,

// c is unlocked.

havespan:

if trace.enabled && !traceDone {

traceGCSweepDone()

}

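// Count the unallocated elements in the span and add the count to mcentral.nmalloc

// Add the total size of the span's unallocated elements to memstats.heap_live

cap := int32((s.npages << _PageShift) / s.elemsize)

// [...]

s.allocCache >>= s.freeindex % 64

return s

}

mcentral uses the grow function to request a new span from mheap: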

// grow allocates a new empty span from the heap and initializes it for c's size class.

func (c *mcentral) grow() *mspan {

// From the type of the mcentral, compute the size of the span to request (divided by 8K = the number of pages) and how many elements it can hold

npages := uintptr(class_to_allocnpages[c.spanclass.sizeclass()])

size := uintptr(class_to_size[c.spanclass.sizeclass()])

n := (npages << _PageShift) / size

// [...]

The function mheap uses to allocate a span is alloc:

func (h *mheap) alloc(npage uintptr, spanclass spanClass, large bool, needzero bool) *mspan {

// Call the alloc_m function on the g0 stack

// For an explanation of systemstack, see the previous article

// Don't do any operations that lock the heap on the G stack.

// It might trigger stack growth, and the stack growth code needs

// to be able to allocate heap.

var s *mspan

systemstack(func() {

s = h.alloc_m(npage, spanclass, large)

})

if s != nil {

if needzero && s.needzero != 0 {

memclrNoHeapPointers(unsafe.Pointer(s.base()), s.npages<<_PageShift)

// [...]

The alloc function calls alloc_m on the g0 stack:

// Allocate a new span of npage pages from the heap for GC'd memory

// and record its size class in the HeapMap and HeapMapCache.

func (h *mheap) alloc_m(npage uintptr, spanclass spanClass, large bool) *mspan {

_g_ := getg()

if _g_ != _g_.m.g0 {

throw("_mheap_alloc not on g0 stack")

}

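// Lock mheap; this is a global lock

lock(&h.lock)

// To keep the heap from growing too fast, sweep and reclaim n pages before allocating n pages

// The busy lists are walked first and then the busyLarge list; see the reclaim and reclaimList functions for details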

// To prevent excessive heap growth, before allocating n pages

// we need to sweep and reclaim at least n pages.

if h.sweepdone == 0 {

// TODO(austin): This tends to sweep a large number of

// spans in order to find a few completely free spans

// (for example, in the garbage benchmark, this sweeps

// ~30x the number of pages its trying to allocate).

// If GC kept a bit for whether there were any marks

// in a span, we could release these free spans

// at the end of GC and eliminate this entirely.

if trace.enabled {

traceGCSweepStart()

}

h.reclaim(npage)

if trace.enabled {

traceGCSweepDone()

}

}

// Add the local statistics in mcache to the global statistics

// transfer stats from cache to global

memstats.heap_scan += uint64(_g_.m.mcache.local_scan)

_g_.m.mcache.local_scan = 0

memstats.tinyallocs += uint64(_g_.m.mcache.local_tinyallocs)

_g_.m.mcache.local_tinyallocs = 0

// Call allocSpanLocked to allocate the span; allocSpanLocked requires that mheap is already locked

s := h.allocSpanLocked(npage, &memstats.heap_inuse)

if s != nil {

// Record span info, because gc needs to be

// able to map interior pointer to containing span.

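// Set the span's sweepgen to the global sweepgen

atomic.Store(&s.sweepgen, h.sweepgen)

// Put it in the global span list; sweepSpans has length 2

// sweepSpans[h.sweepgen/2%2] holds the spans currently in use

// sweepSpans[1-h.sweepgen/2%2] holds the spans waiting to be swept

// Because sweepgen increases by 2 on every GC, the two lists swap roles on every GC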

h.sweepSpans[h.sweepgen/2%2].push(s) // Add to swept in-use list.

// Initialize the span's fields

s.state = _MSpanInUse

s.allocCount = 0

s.spanclass = spanclass

if sizeclass := spanclass.sizeclass(); sizeclass == 0 {

s.elemsize = s.npages << _PageShift

// [...]

Next, the allocSpanLocked function:

// Allocates a span of the given size. h must be locked.

// The returned span has been removed from the

// free list, but its state is still MSpanFree.

func (h *mheap) allocSpanLocked(npage uintptr, stat *uint64) *mspan {

var list *mSpanList

var s *mspan

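// Try to allocate from the free lists in mheap

// Free spans with fewer than _MaxMHeapList pages (128 pages = 1M) are kept in the free lists

// Free spans with more pages than that are kept in the freelarge list

// Try in fixed-size lists up to max.

for i := int(npage); i < len(h.free); i++ {

// [...]

if s.npreleased > 0 {

sysUsed(unsafe.Pointer(s.base()), s.npages<<_PageShift)

// [...]

if s.npages > npage {

// Trim extra and put it back in the heap.

t := (*mspan)(h.spanalloc.alloc())

t.init(s.base()+npage<<_PageShift, s.npages-npage)

s.npages = npage

p := (t.base() - h.arena_start) >> _PageShift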

if p > 0 {

h.spans[p-1] = s

}

h.spans[p] = t

h.spans[p+t.npages-1] = t

t.needzero = s.needzero

s.state = _MSpanManual // prevent coalescing with s

t.state = _MSpanManual

h.freeSpanLocked(t, false, false, s.unusedsince)

s.state = _MSpanFree

}

s.unusedsince = 0

// Fill in the spans area: which addresses correspond to which mspan object

p := (s.base() - h.arena_start) >> _PageShift

for n := uintptr(0); n < npage; n++ {

// [...]

Next, the allocLarge function:

// allocLarge allocates a span of at least npage pages from the treap of large spans.

// Returns nil if no such span currently exists.

func (h *mheap) allocLarge(npage uintptr) *mspan {

// Search treap for smallest span with >= npage pages.

return h.freelarge.remove(npage)

}

freelarge is of type mTreap. Calling remove searches the tree for the smallest span that has at least npage pages and returns it:

// remove searches for, finds, removes from the treap, and returns the smallest

// span that can hold npages. If no span has at least npages return nil.

// This is slightly more complicated than a simple binary tree search

// since if an exact match is not found the next larger node is

// returned.

// If the last node inspected > npagesKey not holding

// a left node (a smaller npages) is the "best fit" node.

func (root *mTreap) remove(npages uintptr) *mspan {

t := root.treap

for t != nil {

if t.spanKey == nil {

throw("treap node with nil spanKey found")

}

if t.npagesKey < npages {

t = t.right

} else if t.left != nil && t.left.npagesKey >= npages {

t = t.left

} else {

result := t.spanKey

root.removeNode(t)

return result

}

}

return nil

}

The function that requests a new span from the arena is the grow function of mheap:

// Try to add at least npage pages of memory to the heap,

// returning whether it worked.

//

// h must be locked.

func (h *mheap) grow(npage uintptr) bool {

// Ask for a big chunk, to reduce the number of mappings

// the operating system needs to track; also amortizes

// the overhead of an operating system mapping.

// Allocate a multiple of 64kB.

npage = round(npage, (64<<10)/_PageSize)

// [...]

s.init(uintptr(v), ask>>_PageShift)

p := (s.base() - h.arena_start) >> _PageShift

for i := p; i < p+s.npages; i++ {

// [...]

Next, the sysAlloc function of mheap:

// sysAlloc allocates the next n bytes from the heap arena. The

// returned pointer is always _PageSize aligned and between

// h.arena_start and h.arena_end. sysAlloc returns nil on failure.

// There is no corresponding free function.

func (h *mheap) sysAlloc(n uintptr) unsafe.Pointer {

// strandLimit is the maximum number of bytes to strand from

// the current arena block. If we would need to strand more

// than this, we fall back to sysAlloc'ing just enough for

// this allocation.

const strandLimit = 16 << 20

if n > h.arena_end-h.arena_alloc {

// If we haven't grown the arena to _MaxMem yet, try

// to reserve some more address space.

p_size := round(n+_PageSize, 256<<20)

// [...]

if h.arena_alloc > h.arena_used {

h.setArenaUsed(h.arena_alloc, true)

}

if p&(_PageSize-1) != 0 {

throw("misrounded allocation in MHeap_SysAlloc")

}

return unsafe.Pointer(p)

}

// Handling for the case where reserving address space failed

reservationFailed:

// If using 64-bit, our reservation is all we have.

if sys.PtrSize != 4 {

return nil

}

// On 32-bit, once the reservation is gone we can

// try to get memory at a location chosen by the OS.

p_size := round(n, _PageSize) + _PageSize

p := uintptr(sysAlloc(p_size, &memstats.heap_sys))

if p == 0 {

return nil

}

if p < h.arena_start || p+p_size-h.arena_start > _MaxMem {

// This shouldn't be possible because _MaxMem is the

// whole address space on 32-bit.

top := uint64(h.arena_start) + _MaxMem

print("runtime: memory allocated by OS (", hex(p), ") not in usable range [", hex(h.arena_start), ",", hex(top), ")\n")

sysFree(unsafe.Pointer(p), p_size, &memstats.heap_sys)

return nil

}

p += -p & (_PageSize - 1)

if p+n > h.arena_used {

h.setArenaUsed(p+n, true)

}

if p&(_PageSize-1) != 0 {

throw("misrounded allocation in MHeap_SysAlloc")

}

return unsafe.Pointer(p)

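}

That is the complete flow for allocating an object. Next, we look at how the GC marks and reclaims objects.

Reclaiming Objects

The Reclamation Flow

The Go GC is a concurrent GC: most of its work runs at the same time as ordinary Go code, which makes the GC flow fairly complex.

The GC has four phases:

Sweep Termination: sweep any spans that have not been swept yet; a new GC cycle can start only after the sweeping from the previous cycle is complete

Mark: scan all root objects and every object reachable from them, marking them so that they are not reclaimed

Mark Termination: finish the marking work and rescan some of the root objects (requires STW)

Sweep: sweep spans according to the mark results

The following figure shows a fairly complete GC flow, with the four phases grouped by color:

During a GC there are two kinds of background tasks (Gs): one for marking and one for sweeping.

Background mark workers are started when needed. The number that can work at the same time is about 25% of the number of Ps, which is the basis for the statement that Go spends 25% of the CPU on GC.

One background sweeper is started when the program starts and is woken up when the sweep phase begins.

At present the whole GC flow stops the world (STW) twice: once at the start of the Mark phase and once in the Mark Termination phase.

The first STW prepares the scanning of root objects and enables the write barrier and mutator assist.

The second STW rescans some of the root objects and disables the write barrier and mutator assist.

Note that not all root scanning requires STW. Scanning the objects on a stack, for example, only requires stopping the G that owns that stack.

Starting with Go 1.8, the write barrier is implemented as a hybrid write barrier, which greatly reduces the duration of the second STW.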

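GC Trigger Conditions

A GC is triggered when certain conditions are met. The trigger conditions are:

gcTriggerAlways: force a GC

gcTriggerHeap: trigger a GC when the allocated memory reaches a certain size

gcTriggerTime: trigger a GC when none has run for a certain time

gcTriggerCycle: request a new GC cycle, skipped if one has already started; runtime.GC(), which triggers a GC manually, uses this condition

The conditions are checked in the test function of gcTrigger.

Of these, gcTriggerHeap and gcTriggerTime are the conditions that fire naturally. The check for gcTriggerHeap is:

return memstats.heap_live >= memstats.gc_trigger

The allocator code analyzed above shows where heap_live is increased. When it reaches gc_trigger, a GC is triggered. So how is gc_trigger determined?

gc_trigger is computed in the gcSetTriggerRatio function with the formula:

trigger = uint64(float64(memstats.heap_marked) * (1 + triggerRatio))

That is, the size marked live in the last cycle multiplied by 1 + triggerRatio gives the heap size at which the next GC is triggered.

triggerRatio is adjusted after every GC. The function that computes it is endCycle, using the following formula: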

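const triggerGain = 0.5

// Goal heap growth ratio; the default is 1.0

goalGrowthRatio := float64(gcpercent) / 100

// Actual heap growth ratio: total size / live size - 1

actualGrowthRatio := float64(memstats.heap_live)/float64(memstats.heap_marked) - 1

// Time spent in the GC mark phase (endCycle is called during Mark Termination)

assistDuration := nanotime() - c.markStartTime

// CPU utilization of the GC mark phase; the goal is 0.25

utilization := gcGoalUtilization

if assistDuration > 0 {

// assistTime is the total time Gs spent assisting the GC with marking

// (nanoseconds spent in mutator assists during this cycle)

// Extra CPU utilization = total assist time / (mark phase duration * number of Ps)

utilization += float64(c.assistTime) / float64(assistDuration*int64(gomaxprocs))

}

// Trigger ratio error = goal growth ratio - old trigger ratio - CPU utilization / goal CPU utilization * (actual growth ratio - old trigger ratio)

// How the parameters affect the result:

// The larger the actual growth ratio, the smaller the error; if it is below 0, the next GC is triggered earlier

// The larger the CPU utilization, the smaller the error; if it is below 0, the next GC is triggered earlier

// The larger the old trigger ratio, the smaller the error; if it is below 0, the next GC is triggered earlier

triggerError := goalGrowthRatio - memstats.triggerRatio - utilization/gcGoalUtilization*(actualGrowthRatio-memstats.triggerRatio)

// Adjust the trigger ratio by the error, applying only half of it each time (gradual adjustment)

triggerRatio := memstats.triggerRatio + triggerGain*triggerError

The "goal heap growth ratio" in the formula can be changed with the GOGC environment variable. The default is 100; increasing it makes GCs less frequent.

Setting GOGC=off turns the GC off completely.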
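To get a feel for the feedback loop, the two formulas can be run on concrete numbers. This is a minimal sketch under simplifying assumptions: nextTriggerRatio and gcTrigger are illustrative names, utilization is passed in directly instead of being measured, and any limits the runtime may apply to the resulting ratio are ignored.

```go
package main

import "fmt"

const (
	triggerGain       = 0.5
	gcGoalUtilization = 0.25
)

// nextTriggerRatio applies the endCycle formula quoted above to plain numbers.
func nextTriggerRatio(gcpercent int, oldRatio, utilization, actualGrowth float64) float64 {
	goalGrowth := float64(gcpercent) / 100
	triggerError := goalGrowth - oldRatio - utilization/gcGoalUtilization*(actualGrowth-oldRatio)
	return oldRatio + triggerGain*triggerError
}

// gcTrigger applies the gcSetTriggerRatio formula quoted above.
func gcTrigger(heapMarked uint64, ratio float64) uint64 {
	return uint64(float64(heapMarked) * (1 + ratio))
}

func main() {
	// GOGC=100, old ratio 0.5, utilization exactly on target,
	// and the heap actually grew by 75% during the cycle.
	ratio := nextTriggerRatio(100, 0.5, 0.25, 0.75)
	fmt.Println(ratio) // 0.625

	// With 100 MB marked live, the next GC starts at about 162 MB.
	fmt.Println(gcTrigger(100<<20, ratio) >> 20) // 162
}
```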

The check for gcTriggerTime is:

lastgc := int64(atomic.Load64(&memstats.last_gc_nanotime))

return lastgc != 0 && t.now-lastgc > forcegcperiod

forcegcperiod is defined as 2 minutes, so a GC is forced if none has run for 2 minutes.

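Definition of the Three Colors (Black, Grey, White)

The best explanation of the "three colors" in tri-color GC that I have read is this article; I strongly recommend reading its explanation first.

The "three colors" can be understood simply as:

Black: the object has been marked in this GC, and the objects it references have been marked too

Grey: the object has been marked in this GC, but the objects it references have not been marked yet

White: the object has not been marked in this GC

Internally, Go objects have no color attribute; the three colors only describe their state:

a white object has its bit in the gcmarkBits of its span set to 0;

a grey object has its bit in gcmarkBits set to 1 and is in the mark queue;

a black object has its bit in gcmarkBits set to 1 and has already been taken off the mark queue and processed.

After a GC completes, gcmarkBits becomes allocBits and a new all-zero bitmap is allocated for gcmarkBits, so black objects turn white again.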
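Since the color is derived rather than stored, it can be written as a function of the two pieces of state just described. The sketch below is purely illustrative; color and colorOf are hypothetical names that do not exist in the runtime.

```go
package main

import "fmt"

type color int

const (
	white color = iota
	grey
	black
)

func (c color) String() string {
	return [...]string{"white", "grey", "black"}[c]
}

// colorOf derives the color from the mark bit of the object and from
// whether the object is still waiting in the mark queue.
func colorOf(markBit, inQueue bool) color {
	switch {
	case !markBit:
		return white
	case inQueue:
		return grey
	default:
		return black
	}
}

func main() {
	fmt.Println(colorOf(false, false), colorOf(true, true), colorOf(true, false))
	// white grey black
}
```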

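Write Barrier

Because the Go GC is concurrent, GC scanning and Go code can run at the same time. The problem is that Go code may change the object graph while the GC is scanning it.

For example, suppose scanning starts with root objects A and B, and B holds a pointer to C. The GC scans A first, then B hands its pointer to C over to A, and then the GC scans B. C is now never scanned.

To avoid this problem, Go enables a write barrier during the GC mark phase.

With the write barrier enabled, when B hands the pointer to C over to A, the GC treats C as live for this cycle,

even if A drops C shortly afterwards; in that case C is reclaimed in the next cycle.

The write barrier applies only to pointers and is enabled only during the mark phase; at other times values are written straight to the target address.

Starting with version 1.8, Go uses a hybrid write barrier. In pseudocode:

writePointer(slot, ptr):
    shade(*slot)
    if any stack is grey:
        shade(ptr)
    *slot = ptr

The hybrid write barrier marks both the "old pointer" stored in the target slot and the "new pointer" being written.

The old pointer is marked because another running thread may at the same moment copy that pointer value into a register or a local variable on the stack.

Copying a pointer into a register or a stack local does not go through the write barrier, so the pointer could go unmarked. Consider this sequence:

[go] b = obj
[go] oldx = nil
[gc] scan oldx...
[go] oldx = b.x // copy b.x to a local variable; no write barrier involved
[go] b.x = ptr // the write barrier should mark the old value of b.x
[gc] scan b...

If the write barrier did not mark the old value, the object that oldx points to would never be scanned.

The new pointer is marked because another running thread may move the pointer somewhere else. Consider this sequence:

[go] a = ptr
[go] b = obj
[gc] scan b...
[go] b.x = a // the write barrier should mark the new value of b.x
[go] a = nil
[gc] scan a...

If the write barrier did not mark the new value, ptr would never be scanned.

With the hybrid write barrier, the GC does not need to rescan the stack of each G after concurrent marking finishes, which shortens the STW in Mark Termination.

Besides the write barrier, every object newly allocated during a GC is immediately marked black, as can be seen in the mallocgc function above.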
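The pseudocode translates almost line by line into a toy model. In the sketch below, object, collector, and the stacksGrey flag are hypothetical simplifications: the real barrier is emitted by the compiler and works on raw memory, whereas this version is an ordinary method that user code would have to call explicitly.

```go
package main

import "fmt"

type object struct {
	name   string
	marked bool
	x      *object
}

// collector is a toy model of the GC state that the barrier needs.
type collector struct {
	barrierOn  bool      // true only during the mark phase
	stacksGrey bool      // true while some stack has not been scanned yet
	queue      []*object // the mark queue of grey objects
}

// shade marks an unmarked object and puts it on the mark queue.
func (gc *collector) shade(o *object) {
	if o == nil || o.marked {
		return
	}
	o.marked = true
	gc.queue = append(gc.queue, o)
}

// writePointer follows the hybrid write barrier pseudocode:
// shade the old value, shade the new value while any stack is grey,
// then perform the actual store.
func (gc *collector) writePointer(slot **object, ptr *object) {
	if gc.barrierOn {
		gc.shade(*slot)
		if gc.stacksGrey {
			gc.shade(ptr)
		}
	}
	*slot = ptr
}

func main() {
	old := &object{name: "old"}
	ptr := &object{name: "ptr"}
	b := &object{name: "b", x: old}

	gc := &collector{barrierOn: true, stacksGrey: true}
	gc.writePointer(&b.x, ptr)
	fmt.Println(old.marked, ptr.marked, b.x.name, len(gc.queue)) // true true ptr 2
}
```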

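Mutator Assist

To keep the heap from growing too fast, a G that allocates memory while a GC is running is asked to help the GC with part of its work.

Gs that run concurrently with the GC are called "mutators"; "mutator assist" is the mechanism by which a G helps the GC with part of its work.

There are two kinds of assist work: marking (Mark) and sweeping (Sweep).

The trigger for assist marking can be seen in the mallocgc function above. When triggered, the G helps scan a "workload" of objects, computed as:

debtBytes * assistWorkPerByte

That is, the size allocated multiplied by the coefficient assistWorkPerByte. assistWorkPerByte is computed in the revise function:

// Scan work still expected = scan work in the heap - scan work already done

scanWorkExpected := int64(memstats.heap_scan) - c.scanWork

if scanWorkExpected < [...]

Unlike assist marking, which is checked on every object allocation, assist sweeping is checked only when a new span is requested.

The trigger for assist sweeping can be seen in the cacheSpan function above. When triggered, the G helps reclaim a "workload" of pages, computed as:

spanBytes * sweepPagesPerByte // not exactly; see the deductSweepCredit function for details

That is, the size allocated multiplied by the coefficient sweepPagesPerByte. sweepPagesPerByte is computed in the gcSetTriggerRatio function:

// Current heap size

heapLiveBasis := atomic.Load64(&memstats.heap_live)

// Heap distance to the GC trigger = heap size at the next GC trigger - current heap size

heapDistance := int64(trigger) - int64(heapLiveBasis)

heapDistance -= 1024 * 1024

if heapDistance < [...]

Root Objects

The first things to be marked in the GC mark phase are the "root objects". Every object reachable from a root object is considered live.

Root objects include global variables and the variables on the stack of each G. The GC scans the root objects first and then every object reachable from them.

Scanning the root objects comprises a series of jobs, which are defined as follows:

Fixed Roots: special scanning jobs

fixedRootFinalizers: scan the finalizer queue

fixedRootFreeGStacks: free the stacks of Gs that have exited

Flush Cache Roots: release all spans held in mcache; requires STW

Data Roots: scan initialized global variables (the data segment)

BSS Roots: scan zero-initialized global variables (the BSS segment)

Span Roots: scan the special objects in each span (finalizer lists)

Stack Roots: scan the stack of each G

The mark phase (Mark) performs "Fixed Roots", "Data Roots", "BSS Roots", "Span Roots", and "Stack Roots".

The mark termination phase (Mark Termination) performs "Fixed Roots" and "Flush Cache Roots".

Mark Queue

The GC mark phase uses a "mark queue" to make sure that every object reachable from the root objects gets marked. The "grey" objects mentioned above are the objects in the mark queue.

For example, if there are three root objects [A, B, C], scanning the roots puts them on the mark queue:

work queue: [A, B, C]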

A background mark worker takes A off the mark queue. If A references D, D is put on the mark queue:

work queue: [B, C, D]

A background mark worker takes B off the mark queue. If B also references D, D is skipped because its bit in gcmarkBits is already 1:

work queue: [C, D]

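If concurrently running Go code allocates an object E, E is marked immediately but does not enter the mark queue (E is known not to reference any other object yet).

The concurrently running Go code then assigns object F to a field of object E. The write barrier marks F and adds it to the mark queue: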

work queue: [C, D, F]

A background mark worker takes C off the mark queue. If C references no other object, nothing needs to be done:

work queue: [D, F]

A background mark worker takes D off the mark queue. If D references X, X is put on the mark queue:

work queue: [F, X]

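A background mark worker takes F off the mark queue. If F references no other object, nothing needs to be done.

A background mark worker takes X off the mark queue. If X references no other object, nothing needs to be done.

Finally the mark queue is empty and marking is complete. The live objects are [A, B, C, D, E, F, X].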

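The real situation is slightly more complicated than described above.

The mark queue is split into a global mark queue and a local mark queue for each P, much like the run queues used for goroutines.

In addition, once the mark queue is empty the world still has to be stopped and the write barrier disabled, and then the queue is checked for emptiness once more.

Source code analysis

A GC triggered by Go starts in the gcStart function: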

// gcStart transitions the GC from _GCoff to _GCmark (if

// !mode.stwMark) or _GCmarktermination (if mode.stwMark) by

// performing sweep termination and GC initialization.

//

// This may return without performing this transition in some cases,

// such as when called on a system stack or with locks held.

func gcStart(mode gcMode, trigger gcTrigger) {

// Check whether the current G can be preempted; if not, do not start a GC

// Since this is called from malloc and malloc is called in

// the guts of a number of libraries that might be holding

// locks, don't attempt to start GC in non-preemptible or

// potentially unstable situations.

mp := acquirem()

if gp := getg(); gp == mp.g0 || mp.locks > 1 || mp.preemptoff != "" {

releasem(mp)

return

}

releasem(mp)

mp = nil

// Concurrently sweep the spans left unswept by the previous GC cycle

// Pick up the remaining unswept/not being swept spans concurrently

//

// This shouldn't happen if we're being invoked in background

// mode since proportional sweep should have just finished

// sweeping everything, but rounding errors, etc, may leave a

// few spans unswept. In forced mode, this is necessary since

// GC can be forced at any point in the sweeping cycle.

//

// We check the transition condition continuously here in case

// this G gets delayed in to the next GC cycle.

for trigger.test() && gosweepone() != ^uintptr(0) {

sweep.nbgsweep++

}

// Take the lock, then re-check the gcTrigger condition; if it no longer holds, do not start a GC

// Perform GC initialization and the sweep termination

// transition.

semacquire(&work.startSema)

// Re-check transition condition under transition lock.

if !trigger.test() {

semrelease(&work.startSema)

return

}

// Record whether the GC was forced; gcTriggerCycle is the trigger used by runtime.GC

// For stats, check if this GC was forced by the user.

work.userForced = trigger.kind == gcTriggerAlways || trigger.kind == gcTriggerCycle

// Check whether a setting that disables concurrent GC was specified

// In gcstoptheworld debug mode, upgrade the mode accordingly.

// We do this after re-checking the transition condition so

// that multiple goroutines that detect the heap trigger don't

// start multiple STW GCs.

if mode == gcBackgroundMode {

if debug.gcstoptheworld == 1 {

mode = gcForceMode

} else if debug.gcstoptheworld == 2 {

mode = gcForceBlockMode

}

}

// Ok, we're doing it! Stop everybody else

semacquire(&worldsema)

// Tracing

if trace.enabled {

traceGCStart()

}

// Start the background mark workers (Gs)

if mode == gcBackgroundMode {

gcBgMarkStartWorkers()

}

// Reset mark-related state

gcResetMarkState()

// Reset parameters

work.stwprocs, work.maxprocs = gcprocs(), gomaxprocs

work.heap0 = atomic.Load64(&memstats.heap_live)

work.pauseNS = 0

work.mode = mode

// Record the start time

now := nanotime()

work.tSweepTerm = now

work.pauseStart = now

// Stop all running Gs and prevent them from running

systemstack(stopTheWorldWithSema)

// !!!!!!!!!!!!!!!!

// The world is stopped (STW)...

// !!!!!!!!!!!!!!!!

// Sweep the spans left unswept by the previous GC cycle, making sure that cycle is complete

// Finish sweep before we start concurrent scan.

systemstack(func() {

finishsweep_m()

})

// Clear sched.sudogcache and sched.deferpool

// clearpools before we start the GC. If we wait they memory will not be

// reclaimed until the next GC cycle.

clearpools()

// Increment the GC cycle count

work.cycles++

// Check whether this is concurrent GC mode

if mode == gcBackgroundMode { // Do as much work concurrently as possible

// Mark that a new GC cycle has started

gcController.startCycle()

work.heapGoal = memstats.next_gc

// Set the GC phase in the global variable to _GCmark

// and then enable the write barrier

// Enter concurrent mark phase and enable

// write barriers.

//

// Because the world is stopped, all Ps will

// observe that write barriers are enabled by

// the time we start the world and begin

// scanning.

//

// Write barriers must be enabled before assists are

// enabled because they must be enabled before

// any non-leaf heap objects are marked. Since

// allocations are blocked until assists can

// happen, we want enable assists as early as

// possible.

setGCPhase(_GCmark)

// Reset the counters of the background mark workers

gcBgMarkPrepare() // Must happen before assist enable.

// Compute the number of root scanning jobs

gcMarkRootPrepare()

// Mark all tiny alloc blocks that are still waiting to be combined

// Mark all active tinyalloc blocks. Since we're

// allocating from these, they need to be black like

// other allocations. The alternative is to blacken

// the tiny block on every allocation from it, which

// would slow down the tiny allocator.

gcMarkTinyAllocs()

// Enable mutator assists

// At this point all Ps have enabled the write

// barrier, thus maintaining the no white to

// black invariant. Enable mutator assists to

// put back-pressure on fast allocating

// mutators.

atomic.Store(&gcBlackenEnabled, 1)

// Record the time at which marking starts

// Assists and workers can start the moment we start

// the world.

gcController.markStartTime = now

// Restart the world

// The background mark workers created earlier start working; once all of them have finished, the GC enters mark termination

// Concurrent mark.

systemstack(startTheWorldWithSema)

// !!!!!!!!!!!!!!!

// The world has been restarted...

// !!!!!!!!!!!!!!!

// Record how long the world was stopped and when the mark phase started

now = nanotime()

work.pauseNS += now - work.pauseStart

work.tMark = now

} else {

// Not concurrent GC mode

// Record the time at which mark termination starts

t := nanotime()

work.tMark, work.tMarkTerm = t, t

work.heapGoal = work.heap0

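// Skip the mark phase and run mark termination directly

// All marking work is then done while the world is stopped

// (the mark phase would set work.markrootDone=true; since it is skipped the value stays false, so mark termination does all the work)

// Mark termination restarts the world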

// Perform mark termination. This will restart the world.

gcMarkTermination(memstats.triggerRatio)

}

semrelease(&work.startSema)

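}

Next we go through the functions called by gcStart one by one. It helps to read this alongside the diagram in "The object reclamation process" above.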

The function gcBgMarkStartWorkers starts the background mark workers, one for each P:

// gcBgMarkStartWorkers prepares background mark worker goroutines.

// These goroutines will not run until the mark phase, but they must

// be started while the work is not stopped and from a regular G

// stack. The caller must hold worldsema.

func gcBgMarkStartWorkers() {

// Background marking is performed by per-P G's. Ensure that

// each P has a background GC G.

for _, p := range &allp {

if p == nil || p.status == _Pdead {

break

}

// Do not start a worker again if one is already running

if p.gcBgMarkWorker == 0 {

go gcBgMarkWorker(p)

// After starting it, wait for the worker to signal bgMarkReady before continuing

notetsleepg(&work.bgMarkReady, -1)

noteclear(&work.bgMarkReady)

}

}

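}

Although a background mark worker is started for every P here, only about 25% of them can work at the same time. This logic lives in findRunnableGCWorker, which an M calls when it looks for a G to run: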

// findRunnableGCWorker returns the background mark worker for _p_ if it

// should be run. This must only be called when gcBlackenEnabled != 0.

func (c *gcControllerState) findRunnableGCWorker(_p_ *p) *g {

if gcBlackenEnabled == 0 {

throw("gcControllerState.findRunnable: blackening not enabled")

}

if _p_.gcBgMarkWorker == 0 {

// The mark worker associated with this P is blocked

// performing a mark transition. We can't run it

// because it may be on some other run or wait queue.

return nil

}

if !gcMarkWorkAvailable(_p_) {

// No work to be done right now. This can happen at

// the end of the mark phase when there are still

// assists tapering off. Don't bother running a worker

// now because it'll just return immediately.

return nil

}

// Atomically decrement the value; return true if the result is greater than or equal to 0, otherwise false

decIfPositive := func(ptr *int64) bool {

if *ptr > 0 {

if atomic.Xaddint64(ptr, -1) >= 0 {

return true

}

// We lost a race

atomic.Xaddint64(ptr, +1)

}

return false

}

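// Decrement dedicatedMarkWorkersNeeded; on success the background mark worker runs in Dedicated mode

// dedicatedMarkWorkersNeeded is 25% of the number of Ps with the fractional part dropped

// See the startCycle function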

if decIfPositive(&c.dedicatedMarkWorkersNeeded) {

// This P is now dedicated to marking until the end of

// the concurrent mark phase.

_p_.gcMarkWorkerMode = gcMarkWorkerDedicatedMode

} else {

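// Decrement fractionalMarkWorkersNeeded; on success the background mark worker runs in Fractional mode

// If the computation above leaves a fractional part (the division is not exact), fractionalMarkWorkersNeeded is 1, otherwise 0

// See the startCycle function

// For example, with 4 Ps there is 1 Dedicated worker; with 5 Ps there is 1 Dedicated worker and 1 Fractional worker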

if !decIfPositive(&c.fractionalMarkWorkersNeeded) {

// No more workers are need right now.

return nil

}

// Use the time already spent by the Fractional-mode worker to decide whether its CPU utilization exceeds the goal; if it does, do not start it

// This P has picked the token for the fractional worker.

// Is the GC currently under or at the utilization goal?

// If so, do more work.

//

// We used to check whether doing one time slice of work

// would remain under the utilization goal, but that has the

// effect of delaying work until the mutator has run for

// enough time slices to pay for the work. During those time

// slices, write barriers are enabled, so the mutator is running slower.

// Now instead we do the work whenever we're under or at the

// utilization work and pay for it by letting the mutator run later.

// This doesn't change the overall utilization averages, but it

// front loads the GC work so that the GC finishes earlier and

// write barriers can be turned off sooner, effectively giving

// the mutator a faster machine.

//

// The old, slower behavior can be restored by setting

// gcForcePreemptNS = forcePreemptNS.

const gcForcePreemptNS = 0

// TODO(austin): We could fast path this and basically

// eliminate contention on c.fractionalMarkWorkersNeeded by

// precomputing the minimum time at which it's worth

// next scheduling the fractional worker. Then Ps

// don't have to fight in the window where we've

// passed that deadline and no one has started the

// worker yet.

//

// TODO(austin): Shorter preemption interval for mark

// worker to improve fairness and give this

// finer-grained control over schedule?

now := nanotime() - gcController.markStartTime

then := now + gcForcePreemptNS

timeUsed := c.fractionalMarkTime + gcForcePreemptNS

if then > 0 && float64(timeUsed)/float64(then) > c.fractionalUtilizationGoal {

// Nope, we'd overshoot the utilization goal

atomic.Xaddint64(&c.fractionalMarkWorkersNeeded, +1)

return nil

}

_p_.gcMarkWorkerMode = gcMarkWorkerFractionalMode

}

// Schedule the background mark worker to run

// Run the background mark worker

gp := _p_.gcBgMarkWorker.ptr()

casgstatus(gp, _Gwaiting, _Grunnable)

if trace.enabled {

traceGoUnpark(gp, 0)

}

return gp

}

The gcResetMarkState function resets mark-related state:

// gcResetMarkState resets global state prior to marking (concurrent

// or STW) and resets the stack scan state of all Gs.

//

// This is safe to do without the world stopped because any Gs created

// during or after this will start out in the reset state.

func gcResetMarkState() {

// This may be called during a concurrent phase, so make sure

// allgs doesn't change.

lock(&allglock)

for _, gp := range allgs {

gp.gcscandone = false // set to true in gcphasework

gp.gcscanvalid = false // stack has not been scanned

gp.gcAssistBytes = 0

}

unlock(&allglock)

work.bytesMarked = 0

work.initialHeapLive = atomic.Load64(&memstats.heap_live)

work.markrootDone = false

}

The stopTheWorldWithSema function stops the whole world. It must run on g0:

// stopTheWorldWithSema is the core implementation of stopTheWorld.

// The caller is responsible for acquiring worldsema and disabling

// preemption first and then should stopTheWorldWithSema on the system

// stack:

//

// semacquire(&worldsema, 0)

// m.preemptoff = "reason"

// systemstack(stopTheWorldWithSema)

//

// When finished, the caller must either call startTheWorld or undo

// these three operations separately:

//

// m.preemptoff = ""

// systemstack(startTheWorldWithSema)

// semrelease(&worldsema)

//

// It is allowed to acquire worldsema once and then execute multiple

// startTheWorldWithSema/stopTheWorldWithSema pairs.

// Other P's are able to execute between successive calls to

// startTheWorldWithSema and stopTheWorldWithSema.

// Holding worldsema causes any other goroutines invoking

// stopTheWorld to block.

func stopTheWorldWithSema() {

_g_ := getg()

// If we hold a lock, then we won't be able to stop another M

// that is blocked trying to acquire the lock.

if _g_.m.locks > 0 {

throw("stopTheWorld: holding locks")

}

lock(&sched.lock)

// Number of Ps that need to stop

sched.stopwait = gomaxprocs

// Set the GC-waiting flag; the scheduler parks when it sees this flag

atomic.Store(&sched.gcwaiting, 1)

// Preempt all running Gs

preemptall()

// Stop the current P

// stop current P

_g_.m.p.ptr().status = _Pgcstop // Pgcstop is only diagnostic.

// Decrement the number of Ps that need to stop (the current P counts as one)

sched.stopwait--

// Retake all Ps in Psyscall status so that they cannot rejoin scheduling

// try to retake all P's in Psyscall status

for i := 0; i < ... > 0

unlock(&sched.lock)

// If there are still Ps that need to stop, wait for them to stop

// wait for remaining P's to stop voluntarily

if wait {

for {

// Wait in a loop, preempting all running Gs again on each round

// wait for 100us, then try to re-preempt in case of any races

if notetsleep(&sched.stopnote, 100*1000) {

noteclear(&sched.stopnote)

break

}

preemptall()

}

}

// Sanity checks on the logic

// sanity checks

bad := ""

if sched.stopwait != 0 {

bad = "stopTheWorld: not stopped (stopwait != 0)"

} else {

for i := 0; i < ...

The finishsweep_m function sweeps the spans left unswept by the previous GC cycle, making sure that cycle is complete:

// finishsweep_m ensures that all spans are swept.

//

// The world must be stopped. This ensures there are no sweeps in

// progress.

//

//go:nowritebarrier

func finishsweep_m() {

// sweepone takes one unswept span and sweeps it

// The details are covered in the sweep phase below

// Sweeping must be complete before marking commences, so

// sweep any unswept spans. If this is a concurrent GC, there

// shouldn't be any spans left to sweep, so this should finish

// instantly. If GC was forced before the concurrent sweep

// finished, there may be spans to sweep.

for sweepone() != ^uintptr(0) {

sweep.npausesweep++

}

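// Once every span has been swept, start a new markbit epoch

// This function is the key to how a span's gcmarkBits and allocBits are allocated and reused; the flow is:

// - the span allocates gcmarkBits and allocBits

// - the span finishes sweeping

// - the old allocBits is no longer used

// - gcmarkBits becomes allocBits

// - a new gcmarkBits is allocated

// - a new markbit epoch starts

// - the span finishes sweeping, as above

// - a new markbit epoch starts

// - bitmaps from two epochs ago are no longer used and can be reused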

nextMarkBitArenaEpoch()

}

The clearpools function clears sched.sudogcache and sched.deferpool so that their memory can be reclaimed:

func clearpools() {

// clear sync.Pools

if poolcleanup != nil {

poolcleanup()

}

// Clear central sudog cache.

// Leave per-P caches alone, they have strictly bounded size.

// Disconnect cached list before dropping it on the floor,

// so that a dangling ref to one entry does not pin all of them.

lock(&sched.sudoglock)

var sg, sgnext *sudog

for sg = sched.sudogcache; sg != nil; sg = sgnext {

sgnext = sg.next

sg.next = nil

}

sched.sudogcache = nil

unlock(&sched.sudoglock)

// Clear central defer pools.

// Leave per-P pools alone, they have strictly bounded size.

lock(&sched.deferlock)

for i := range sched.deferpool {

// disconnect cached list before dropping it on the floor,

// so that a dangling ref to one entry does not pin all of them.

var d, dlink *_defer

for d = sched.deferpool[i]; d != nil; d = dlink {

dlink = d.link

d.link = nil

}

sched.deferpool[i] = nil

}

unlock(&sched.deferlock)

}

startCycle marks the start of a new GC cycle:

// startCycle resets the GC controller's state and computes estimates

// for a new GC cycle. The caller must hold worldsema.

func (c *gcControllerState) startCycle() {

c.scanWork = 0

c.bgScanCredit = 0

c.assistTime = 0

c.dedicatedMarkTime = 0

c.fractionalMarkTime = 0

c.idleMarkTime = 0

// If gc_trigger is very small, fake the value of heap_marked so that triggerRatio is not adjusted wrongly later

// If this is the first GC cycle or we're operating on a very

// small heap, fake heap_marked so it looks like gc_trigger is

// the appropriate growth from heap_marked, even though the

// real heap_marked may not have a meaningful value (on the

// first cycle) or may be much smaller (resulting in a large

// error response).

if memstats.gc_trigger < ... > 0 {

c.fractionalMarkWorkersNeeded = 1

} else {

c.fractionalMarkWorkersNeeded = 0

}

// Reset the per-P statistics of time spent assisting the GC

// Clear per-P state

for _, p := range &allp {

if p == nil {

break

}

p.gcAssistTime = 0

}

// Compute the mutator assist parameters

// See the discussion of the assistWorkPerByte formula above

// Compute initial values for controls that are updated

// throughout the cycle.

c.revise()

if debug.gcpacertrace > 0 {

print("pacer: assist ratio=", c.assistWorkPerByte,

" (scan ", memstats.heap_scan>>20, " MB in ",

work.initialHeapLive>>20, "->",

memstats.next_gc>>20, " MB)",

" workers=", c.dedicatedMarkWorkersNeeded,

"+", c.fractionalMarkWorkersNeeded, "\n")

}

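}

The setGCPhase function updates the global variable that holds the current GC phase and the global variables that say whether the write barrier is on: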

//go:nosplit

func setGCPhase(x uint32) {

atomic.Store(&gcphase, x)

writeBarrier.needed = gcphase == _GCmark || gcphase == _GCmarktermination

writeBarrier.enabled = writeBarrier.needed || writeBarrier.cgo

}

The gcBgMarkPrepare function resets the counters of the background mark workers:

// gcBgMarkPrepare sets up state for background marking.

// Mutator assists must not yet be enabled.

func gcBgMarkPrepare() {

// Background marking will stop when the work queues are empty

// and there are no more workers (note that, since this is

// concurrent, this may be a transient state, but mark

// termination will clean it up). Between background workers

// and assists, we don't really know how many workers there

// will be, so we pretend to have an arbitrarily large number

// of workers, almost all of which are "waiting". While a

// worker is working it decrements nwait. If nproc == nwait,

// there are no workers.

work.nproc = ^uint32(0)

work.nwait = ^uint32(0)

}

The gcMarkRootPrepare function computes the number of root scanning jobs:

// gcMarkRootPrepare queues root scanning jobs (stacks, globals, and

// some miscellany) and initializes scanning-related state.

//

// The caller must have call gcCopySpans().

//

// The world must be stopped.

//

//go:nowritebarrier

func gcMarkRootPrepare() {

// Jobs that release all spans in mcache; they run only during mark termination

if gcphase == _GCmarktermination {

work.nFlushCacheRoots = int(gomaxprocs)

} else {

work.nFlushCacheRoots = 0

}

// Function that computes the number of blocks; rootBlockBytes is 256KB

// Compute how many data and BSS root blocks there are.

nBlocks := func(bytes uintptr) int {

return int((bytes + rootBlockBytes - 1) / rootBlockBytes)

}

work.nDataRoots = 0

work.nBSSRoots = 0

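// data and bss are scanned only once per GC cycle

// In concurrent GC they are scanned by the background mark workers and not during mark termination

// In non-concurrent GC they are scanned during mark termination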

// Only scan globals once per cycle; preferably concurrently.

if !work.markrootDone {

// Compute the number of jobs for scanning initialized global variables (data segment)

for _, datap := range activeModules() {

nDataRoots := nBlocks(datap.edata - datap.data)

if nDataRoots > work.nDataRoots {

work.nDataRoots = nDataRoots

}

}

// Compute the number of jobs for scanning uninitialized, zero-valued global variables (bss segment)

for _, datap := range activeModules() {

nBSSRoots := nBlocks(datap.ebss - datap.bss)

if nBSSRoots > work.nBSSRoots {

work.nBSSRoots = nBSSRoots

}

}

}

// Finalizers in spans and the stacks of the Gs are scanned only once per GC cycle

// Same as above

if !work.markrootDone {

// Compute the number of jobs for scanning finalizers in spans

// On the first markroot, we need to scan span roots.

// In concurrent GC, this happens during concurrent

// mark and we depend on addfinalizer to ensure the

// above invariants for objects that get finalizers

// after concurrent mark. In STW GC, this will happen

// during mark termination.

//

// We're only interested in scanning the in-use spans,

// which will all be swept at this point. More spans

// may be added to this list during concurrent GC, but

// we only care about spans that were allocated before

// this mark phase.

work.nSpanRoots = mheap_.sweepSpans[mheap_.sweepgen/2%2].numBlocks()

// Compute the number of jobs for scanning the stacks of the Gs

// On the first markroot, we need to scan all Gs. Gs

// may be created after this point, but it's okay that

// we ignore them because they begin life without any

// roots, so there's nothing to scan, and any roots

// they create during the concurrent phase will be

// scanned during mark termination. During mark

// termination, allglen isn't changing, so we'll scan

// all Gs.

work.nStackRoots = int(atomic.Loaduintptr(&allglen))

} else {

// We've already scanned span roots and kept the scan

// up-to-date during concurrent mark.

work.nSpanRoots = 0

// The hybrid barrier ensures that stacks can't

// contain pointers to unmarked objects, so on the

// second markroot, there's no need to scan stacks.

work.nStackRoots = 0

if debug.gcrescanstacks > 0 {

// Scan stacks anyway for debugging.

work.nStackRoots = int(atomic.Loaduintptr(&allglen))

}

}

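// Compute the total number of jobs

// Background mark workers atomically increment markrootNext to decide which job to do

// Using a plain counter as a lock-free queue is quite clever, even though the Google engineers are not fond of it (see the analysis of markroot below)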

work.markrootNext = 0

work.markrootJobs = uint32(fixedRootCount + work.nFlushCacheRoots + work.nDataRoots + work.nBSSRoots + work.nSpanRoots + work.nStackRoots)

}

The gcMarkTinyAllocs function marks all tiny alloc blocks that are waiting to be combined:

// gcMarkTinyAllocs greys all active tiny alloc blocks.

//

// The world must be stopped.

func gcMarkTinyAllocs() {

for _, p := range &allp {

if p == nil || p.status == _Pdead {

break

}

c := p.mcache

if c == nil || c.tiny == 0 {

continue

}

// Mark the tiny block in the mcache of each P

// As seen in mallocgc above, tiny is the block currently waiting to be combined with further allocations

_, hbits, span, objIndex := heapBitsForObject(c.tiny, 0, 0)

gcw := &p.gcw

// Mark an object as live and add it to the mark queue (the object becomes grey)

greyobject(c.tiny, 0, 0, hbits, span, gcw, objIndex)

// gcBlackenPromptly says whether local queues are currently disabled; if they are, flush the marking work to the global queue

if gcBlackenPromptly {

gcw.dispose()

}

}

}

The startTheWorldWithSema function restarts the world:

func startTheWorldWithSema() {

_g_ := getg()

// Disable preemption of the G

_g_.m.locks++ // disable preemption because it can be holding p in a local var

// Poll for received network events (fd readable, writable or in error) and add the corresponding Gs to the run queue

gp := netpoll(false) // non-blocking

injectglist(gp)

// Check whether a gc helper needs to be started

add := needaddgcproc()

lock(&sched.lock)

// If a change of gomaxprocs was requested, adjust the number of Ps

// procresize returns a linked list of the Ps that have runnable work

procs := gomaxprocs

if newprocs != 0 {

procs = newprocs

newprocs = 0

}

p1 := procresize(procs)

// Clear the GC-waiting flag

sched.gcwaiting = 0

// Wake up sysmon if it is waiting

if sched.sysmonwait != 0 {

sched.sysmonwait = 0

notewakeup(&sched.sysmonnote)

}

unlock(&sched.lock)

// Wake up the Ps that have runnable work

for p1 != nil {

p := p1

p1 = p1.link.ptr()

if p.m != 0 {

mp := p.m.ptr()

p.m = 0

if mp.nextp != 0 {

throw("startTheWorld: inconsistent mp->nextp")

}

mp.nextp.set(p)

notewakeup(&mp.park)

} else {

// Start M to run P. Do not start another M below.

newm(nil, p)

add = false

}

}

// If there is an idle P and no spinning M, wake up or create an M

// Wakeup an additional proc in case we have excessive runnable goroutines

// in local queues or in the global queue. If we don't, the proc will park itself.

// If we have lots of excessive work, resetspinning will unpark additional procs as necessary.

if atomic.Load(&sched.npidle) != 0 && atomic.Load(&sched.nmspinning) == 0 {

wakep()

}

// Start a gc helper

if add {

// If GC could have used another helper proc, start one now,

// in the hope that it will be available next time.

// It would have been even better to start it before the collection,

// but doing so requires allocating memory, so it's tricky to

// coordinate. This lazy approach works out in practice:

// we don't mind if the first couple gc rounds don't have quite

// the maximum number of procs.

newm(mhelpgc, nil)

}

// Allow the G to be preempted again

_g_.m.locks--

// If preemption was requested for the current G, restore the request

if _g_.m.locks == 0 && _g_.preempt { // restore the preemption request in case we've cleared it in newstack

_g_.stackguard0 = stackPreempt

}

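}

After the world restarts, each M resumes scheduling. The scheduler first uses the findRunnableGCWorker function described above to look for work, after which roughly 25% of the Ps run background mark workers.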

The function run by a background mark worker is gcBgMarkWorker:

func gcBgMarkWorker(_p_ *p) {

gp := getg()

// Structure used to reacquire the P after parking

type parkInfo struct {

m muintptr // Release this m on park.

attach puintptr // If non-nil, attach to this p on park.

}

// We pass park to a gopark unlock function, so it can't be on

// the stack (see gopark). Prevent deadlock from recursively

// starting GC by disabling preemption.

gp.m.preemptoff = "GC worker init"

park := new(parkInfo)

gp.m.preemptoff = ""

// Set the current M and disable preemption

park.m.set(acquirem())

// Set the current P (the P to attach to)

park.attach.set(_p_)

// Notify gcBgMarkStartWorkers that it may continue

// Inform gcBgMarkStartWorkers that this worker is ready.

// After this point, the background mark worker is scheduled

// cooperatively by gcController.findRunnable. Hence, it must

// never be preempted, as this would put it into _Grunnable

// and put it on a run queue. Instead, when the preempt flag

// is set, this puts itself into _Gwaiting to be woken up by

// gcController.findRunnable at the appropriate time.

notewakeup(&work.bgMarkReady)

for {

// Park the current G

// Go to sleep until woken by gcController.findRunnable.

// We can't releasem yet since even the call to gopark

// may be preempted.

gopark(func(g *g, parkp unsafe.Pointer) bool {

park := (*parkInfo)(parkp)

// Allow preemption again

// The worker G is no longer running, so it's

// now safe to allow preemption.

releasem(park.m.ptr())

// Attach to the P

// Store the current G in the P's gcBgMarkWorker field so that findRunnableGCWorker can use it next time

// Do not park if attaching fails

// If the worker isn't attached to its P,

// attach now. During initialization and after

// a phase change, the worker may have been

// running on a different P. As soon as we

// attach, the owner P may schedule the

// worker, so this must be done after the G is

// stopped.

if park.attach != 0 {

p := park.attach.ptr()

park.attach.set(nil)

// cas the worker because we may be

// racing with a new worker starting

// on this P.

if !p.gcBgMarkWorker.cas(0, guintptr(unsafe.Pointer(g))) {

// The P got a new worker.

// Exit this worker.

return false

}

}

return true

}, unsafe.Pointer(park), "GC worker (idle)", traceEvGoBlock, 0)

// Check whether the P's gcBgMarkWorker is still the current G; if not, end this worker

// Loop until the P dies and disassociates this

// worker (the P may later be reused, in which case

// it will get a new worker) or we failed to associate.

if _p_.gcBgMarkWorker.ptr() != gp {

break

}

// Disable preemption of the G

// Disable preemption so we can use the gcw. If the

// scheduler wants to preempt us, we'll stop draining,

// dispose the gcw, and then preempt.

park.m.set(acquirem())

if gcBlackenEnabled == 0 {

throw("gcBgMarkWorker: blackening not enabled")

}

// Record the start time

startTime := nanotime()

decnwait := atomic.Xadd(&work.nwait, -1)

if decnwait == work.nproc {

println("runtime: work.nwait=", decnwait, "work.nproc=", work.nproc)

throw("work.nwait was > work.nproc")

}

// Switch to g0

systemstack(func() {

// Set the G's status to waiting so that its stack can be scanned (two background mark workers can scan each other's stacks)

// Mark our goroutine preemptible so its stack

// can be scanned. This lets two mark workers

// scan each other (otherwise, they would

// deadlock). We must not modify anything on

// the G stack. However, stack shrinking is

// disabled for mark workers, so it is safe to

// read from the G stack.

casgstatus(gp, _Grunning, _Gwaiting)

// Check the mode of the background mark worker

switch _p_.gcMarkWorkerMode {

default:

throw("gcBgMarkWorker: unexpected gcMarkWorkerMode")

case gcMarkWorkerDedicatedMode:

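// In this mode the P should devote itself to marking

// Mark until preempted, crediting background scan work so that mutator assists shrink and waiting Gs are woken up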

gcDrain(&_p_.gcw, gcDrainUntilPreempt|gcDrainFlushBgCredit)

// When preempted, move every G in the local run queue to the global run queue

if gp.preempt {

// We were preempted. This is

// a useful signal to kick

// everything out of the run

// queue so it can run

// somewhere else.

lock(&sched.lock)

for {

gp, _ := runqget(_p_)

if gp == nil {

break

}

globrunqput(gp)

}

unlock(&sched.lock)

}

// Continue marking until there is no more work, crediting background scan work so that mutator assists shrink and waiting Gs are woken up

// Go back to draining, this time

// without preemption.

gcDrain(&_p_.gcw, gcDrainNoBlock|gcDrainFlushBgCredit)

case gcMarkWorkerFractionalMode:

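// In this mode the P should spend only part of its time marking

// Mark until preempted, crediting background scan work so that mutator assists shrink and waiting Gs are woken up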

gcDrain(&_p_.gcw, gcDrainUntilPreempt|gcDrainFlushBgCredit)

case gcMarkWorkerIdleMode:

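// In this mode the P marks only when it is idle

// Mark until preempted or until a certain amount of work is done, crediting background scan work so that mutator assists shrink and waiting Gs are woken up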

gcDrain(&_p_.gcw, gcDrainIdle|gcDrainUntilPreempt|gcDrainFlushBgCredit)

}

// Restore the G's status to running

casgstatus(gp, _Gwaiting, _Grunning)

})

// If local mark queues are disabled, flush to the global mark queue

// If we are nearing the end of mark, dispose

// of the cache promptly. We must do this

// before signaling that we're no longer

// working so that other workers can't observe

// no workers and no work while we have this

// cached, and before we compute done.

if gcBlackenPromptly {

_p_.gcw.dispose()

}

// Accumulate the time spent

// Account for time.

duration := nanotime() - startTime

switch _p_.gcMarkWorkerMode {

case gcMarkWorkerDedicatedMode:

atomic.Xaddint64(&gcController.dedicatedMarkTime, duration)

atomic.Xaddint64(&gcController.dedicatedMarkWorkersNeeded, 1)

case gcMarkWorkerFractionalMode:

atomic.Xaddint64(&gcController.fractionalMarkTime, duration)

atomic.Xaddint64(&gcController.fractionalMarkWorkersNeeded, 1)

case gcMarkWorkerIdleMode:

atomic.Xaddint64(&gcController.idleMarkTime, duration)

}

// Was this the last worker and did we run out

// of work?

incnwait := atomic.Xadd(&work.nwait, +1)

if incnwait > work.nproc {

println("runtime: p.gcMarkWorkerMode=", _p_.gcMarkWorkerMode,

"work.nwait=", incnwait, "work.nproc=", work.nproc)

throw("work.nwait > work.nproc")

}

// Check whether all background mark workers have finished and there is no more work

// If this worker reached a background mark completion

// point, signal the main GC goroutine.

if incnwait == work.nproc && !gcMarkWorkAvailable(nil) {

// Detach from the P

// Make this G preemptible and disassociate it

// as the worker for this P so

// findRunnableGCWorker doesn't try to

// schedule it.

_p_.gcBgMarkWorker.set(nil)

// Allow the G to be preempted

releasem(park.m.ptr())

// Prepare to enter mark termination

gcMarkDone()

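// The worker reattaches to the P before parking

// Because preemption was allowed above, the G may be running on a different P by now

// If reattaching to the P fails, this worker ends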

// Disable preemption and prepare to reattach

// to the P.

//

// We may be running on a different P at this

// point, so we can't reattach until this G is

// parked.

park.m.set(acquirem())

park.attach.set(_p_)

}

}

}

The gcDrain function performs the marking:

// gcDrain scans roots and objects in work buffers, blackening grey

// objects until all roots and work buffers have been drained.

//

// If flags&gcDrainUntilPreempt != 0, gcDrain returns when g.preempt

// is set. This implies gcDrainNoBlock.

//

// If flags&gcDrainIdle != 0, gcDrain returns when there is other work

// to do. This implies gcDrainNoBlock.

//

// If flags&gcDrainNoBlock != 0, gcDrain returns as soon as it is

// unable to get more work. Otherwise, it will block until all

// blocking calls are blocked in gcDrain.

//

// If flags&gcDrainFlushBgCredit != 0, gcDrain flushes scan work

// credit to gcController.bgScanCredit every gcCreditSlack units of

// scan work.

//

//go:nowritebarrier

func gcDrain(gcw *gcWork, flags gcDrainFlags) {

if !writeBarrier.needed {

throw("gcDrain phase incorrect")

}

gp := getg().m.curg

// Whether to return when the preempt flag is seen

preemptible := flags&gcDrainUntilPreempt != 0

// Whether to wait for work when there is none

blocking := flags&(gcDrainUntilPreempt|gcDrainIdle|gcDrainNoBlock) == 0

// Whether to credit background scan work so that mutator assists shrink and waiting Gs are woken up

flushBgCredit := flags&gcDrainFlushBgCredit != 0

// Whether to do only a bounded amount of work

idle := flags&gcDrainIdle != 0

// Record the initial amount of scan work

initScanWork := gcw.scanWork

// After idleCheckThreshold (100000) units of scan work, check whether to return

// idleCheck is the scan work at which to perform the next

// idle check with the scheduler.

idleCheck := initScanWork + idleCheckThreshold

// If the root objects have not all been scanned, scan them first

// Drain root marking jobs.

if work.markrootNext < ... >= work.markrootJobs {

break

}

// Run the root scanning job

markroot(gcw, job)

// In idle mode, return if there is other work

if idle && pollWork() {

goto done

}

}

}

// The root objects are now on the mark queue; consume the mark queue

// If preemptible is set, loop until preempted

// Drain heap marking jobs.

for !(preemptible && gp.preempt) {

// If the global mark queue is empty, hand over part of the local mark queue

// (move wbuf2 if it is not empty, otherwise move half of wbuf1)

// Try to keep work available on the global queue. We used to

// check if there were waiting workers, but it's better to

// just keep work available than to make workers wait. In the

// worst case, we'll do O(log(_WorkbufSize)) unnecessary

// balances.

if work.full == 0 {

gcw.balance()

}

// Get an object from the local mark queue; if there is none, get one from the global mark queue

var b uintptr

if blocking {

// Blocking get

b = gcw.get()

} else {

// Non-blocking get

b = gcw.tryGetFast()

if b == 0 {

b = gcw.tryGet()

}

}

// No object could be obtained: the mark queue is empty, so leave the loop

if b == 0 {

// work barrier reached or tryGet failed.

break

}

// Scan the object that was obtained

scanobject(b, gcw)

// If a certain amount of scan work has accumulated (gcCreditSlack is 2000)

// Flush background scan work credit to the global

// account if we've accumulated enough locally so

// mutator assists can draw on it.

if gcw.scanWork >= gcCreditSlack {

// Add the local scan work to the global total

atomic.Xaddint64(&gcController.scanWork, gcw.scanWork)

// 减少辅助GC的工作量和唤醒等待中的G

if flushBgCredit {

gcFlushBgCredit(gcw.scanWork - initScanWork)

initScanWork = 0

}

idleCheck -= gcw.scanWork

gcw.scanWork = 0

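// In idle mode, once the check threshold is reached, check for other work (Gs) and leave the loop if there is any

if idle && idleCheck <= 0 {

idleCheck += idleCheckThreshold

if pollWork() {

break

}

}

}

}

// In blocking mode, write barriers are not allowed after this

// point because we must preserve the condition that the work

// buffers are empty.

done:

// Add the remaining scan work to the global counter

// Flush remaining scan work credit.

if gcw.scanWork > 0 {

atomic.Xaddint64(&gcController.scanWork, gcw.scanWork)

// Reduce mutator assist work and wake waiting Gs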

if flushBgCredit {

gcFlushBgCredit(gcw.scanWork - initScanWork)

}

gcw.scanWork = 0

}

}

The markroot function performs the root scanning work.
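Each root job passed to markroot is only an index. The function works out what the index means by comparing it against a series of cumulative bases, one per root category. The following is a minimal standalone sketch of that scheme, not runtime code: the `rootCounts` type, the `classify` function and the category strings are hypothetical names introduced for illustration.

```go
package main

import "fmt"

// rootCounts is a hypothetical stand-in for the per-category job counts
// that the runtime keeps in its global work state.
type rootCounts struct {
	fixed, flushCache, data, bss, spans, stacks uint32
}

// classify maps a job index to a category by comparing it against
// cumulative bases, in the same spirit as the switch in markroot.
func classify(c rootCounts, i uint32) string {
	baseFlushCache := c.fixed
	baseData := baseFlushCache + c.flushCache
	baseBSS := baseData + c.data
	baseSpans := baseBSS + c.bss
	baseStacks := baseSpans + c.spans
	end := baseStacks + c.stacks

	switch {
	case i < baseFlushCache:
		return "fixed"
	case i < baseData:
		return "flushCache"
	case i < baseBSS:
		return "data"
	case i < baseSpans:
		return "bss"
	case i < baseStacks:
		return "spans"
	case i < end:
		return "stacks"
	default:
		return "out of range"
	}
}

func main() {
	// Illustrative counts only; real values depend on the running program.
	c := rootCounts{fixed: 2, data: 1, bss: 1, spans: 3, stacks: 4}
	for _, i := range []uint32{0, 2, 3, 4, 7, 10, 11} {
		fmt.Println(i, classify(c, i))
	}
}
```

Because every worker only needs an atomically incremented index to claim a job, the categories never have to be queued separately. The runtime's own markroot follows.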

// markroot scans the i'th root.

//

// Preemption must be disabled (because this uses a gcWork).

//

// nowritebarrier is only advisory here.

//

//go:nowritebarrier

func markroot(gcw *gcWork, i uint32) {

// Work out which kind of job the index corresponds to

// (the Go authors themselves call this approach a bit ridiculous, see the TODO)

// TODO(austin): This is a bit ridiculous. Compute and store

// the bases in gcMarkRootPrepare instead of the counts.

baseFlushCache := uint32(fixedRootCount)

baseData := baseFlushCache + uint32(work.nFlushCacheRoots)

baseBSS := baseData + uint32(work.nDataRoots)

baseSpans := baseBSS + uint32(work.nBSSRoots)

baseStacks := baseSpans + uint32(work.nSpanRoots)

end := baseStacks + uint32(work.nStackRoots)

// Note: if you add a case here, please also update heapdump.go:dumproots.

switch {

// Release all spans in the mcache; requires STW

case baseFlushCache <= i && i < baseData:

flushmcache(int(i - baseFlushCache))

// ...

The scang function is responsible for scanning a G's stack:

// scang blocks until gp's stack has been scanned.

// It might be scanned by scang or it might be scanned by the goroutine itself.

// Either way, the stack scan has completed when scang returns.

func scang(gp *g, gcw *gcWork) {

// Invariant; we (the caller, markroot for a specific goroutine) own gp.gcscandone.

// Nothing is racing with us now, but gcscandone might be set to true left over

// from an earlier round of stack scanning (we scan twice per GC).

// We use gcscandone to record whether the scan has been done during this round.

// Mark the scan as not yet done

gp.gcscandone = false

// See http://golang.org/cl/21503 for justification of the yield delay.

const yieldDelay = 10 * 1000

var nextYield int64

// Loop until the scan is done

// Endeavor to get gcscandone set to true,

// either by doing the stack scan ourselves or by coercing gp to scan itself.

// gp.gcscandone can transition from false to true when we're not looking

// (if we asked for preemption), so any time we lock the status using

// castogscanstatus we have to double-check that the scan is still not done.

loop:

for i := 0; !gp.gcscandone; i++ {

// Check the current status of G

switch s := readgstatus(gp); s {

default:

dumpgstatus(gp)

throw("stopg: invalid status")

// G is dead; it does not need to be scanned

case _Gdead:

// No stack.

gp.gcscandone = true

break loop

// G's stack is being copied; retry on the next iteration

case _Gcopystack:

// Stack being switched. Go around again.

// G is not running; first prevent it from running

case _Grunnable, _Gsyscall, _Gwaiting:

// Claim goroutine by setting scan bit.

// Racing with execution or readying of gp.

// The scan bit keeps them from running

// the goroutine until we're done.

if castogscanstatus(gp, s, s|_Gscan) {

// The atomic status switch succeeded; scan its stack

if !gp.gcscandone {

scanstack(gp, gcw)

gp.gcscandone = true

}

// Restore G's status and leave the loop

restartg(gp)

break loop

}

// G is scanning itself; wait for the scan to finish

case _Gscanwaiting:

// newstack is doing a scan for us right now. Wait.

// G is running

case _Grunning:

// Goroutine running. Try to preempt execution so it can scan itself.

// The preemption handler (in newstack) does the actual scan.

// If a preemption request is already pending, the scan is handled for us once the preemption succeeds

// Optimization: if there is already a pending preemption request

// (from the previous loop iteration), don't bother with the atomics.

if gp.preemptscan && gp.preempt && gp.stackguard0 == stackPreempt {

break

}

// Preempt G; once preempted, G scans its own stack

// Ask for preemption and self scan.

if castogscanstatus(gp, _Grunning, _Gscanrunning) {

if !gp.gcscandone {

gp.preemptscan = true

gp.preempt = true

gp.stackguard0 = stackPreempt

}

casfrom_Gscanstatus(gp, _Gscanrunning, _Grunning)

}

}

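// Back off between attempts: the first wait window is 10 microseconds and later windows are 5 microseconds (yieldDelay is in nanoseconds)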

if i == 0 {

nextYield = nanotime() + yieldDelay

}

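if nanotime() < nextYield {

procyield(10)

} else {

osyield()

nextYield = nanotime() + yieldDelay/2

}

}

// The scan is done; cancel the preemption scan request

gp.preemptscan = false // cancel scan request if no longer needed

}

Once preemptscan is set, a G that is successfully preempted calls scanstack to scan its own stack; this happens in the preemption handler in newstack.

The function that scans a stack is scanstack:

// scanstack scans gp's stack, greying all pointers found on the stack.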

//

// scanstack is marked go:systemstack because it must not be preempted

// while using a workbuf.

//

//go:nowritebarrier

//go:systemstack

func scanstack(gp *g, gcw *gcWork) {

if gp.gcscanvalid {

return

}

if readgstatus(gp)&_Gscan == 0 {

print("runtime:scanstack: gp=", gp, ", goid=", gp.goid, ", gp->atomicstatus=", hex(readgstatus(gp)), "\n")

throw("scanstack - bad status")

}

switch readgstatus(gp) &^ _Gscan {

default:

print("runtime: gp=", gp, ", goid=", gp.goid, ", gp->atomicstatus=", readgstatus(gp), "\n")

throw("mark - bad status")

case _Gdead:

return

case _Grunning:

print("runtime: gp=", gp, ", goid=", gp.goid, ", gp->atomicstatus=", readgstatus(gp), "\n")

throw("scanstack: goroutine not stopped")

case _Grunnable, _Gsyscall, _Gwaiting:

// ok

}

if gp == getg() {

throw("can't scan our own stack")

}

mp := gp.m

if mp != nil && mp.helpgc != 0 {

throw("can't scan gchelper stack")

}

// Shrink the stack if not much of it is being used. During

// concurrent GC, we can do this during concurrent mark.

if !work.markrootDone {

shrinkstack(gp)

}

// Scan the stack.

var cache pcvalueCache

scanframe := func(frame *stkframe, unused unsafe.Pointer) bool {

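// scanframeworker looks up the function information from the code address (pc),

// then finds stackmap.bytedata in that information, which records where the pointers are on the function's stack,

// and calls scanblock to scan the function's stack space; the function's arguments are scanned the same way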

scanframeworker(frame, &cache, gcw)

return true

}

// Walk every call frame, calling scanframe on each

gentraceback(^uintptr(0), ^uintptr(0), 0, gp, 0, nil, 0x7fffffff, scanframe, nil, 0)

// Walk every deferred call frame, calling scanframe on each

tracebackdefers(gp, scanframe, nil)

gp.gcscanvalid = true

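}

The scanblock function is a general-purpose scan function, used for both global variables and stack space. Unlike scanobject, it needs the bitmap to be passed in explicitly.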
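The idea of a caller-supplied bitmap can be shown with a small standalone sketch. It is not the runtime's code: `scanWords` is a hypothetical function, and the mask layout (one bit per word, least significant bit first) is an assumption chosen for the example. The sketch also only collects non-zero candidate words, whereas the runtime goes on to check whether each value points into the heap before greying the object.

```go
package main

import "fmt"

// scanWords is a hypothetical, simplified analogue of a bitmap-driven scan.
// It walks a block of words and returns those that sit in a pointer slot
// (bit set in ptrmask) and hold a non-zero value.
// Assumed layout: bit i%8 of ptrmask[i/8] describes word i.
func scanWords(block []uintptr, ptrmask []uint8) []uintptr {
	var found []uintptr
	for i, w := range block {
		if (ptrmask[i/8]>>(uint(i)%8))&1 == 0 {
			continue // not a pointer slot, skip without looking at the value
		}
		if w != 0 {
			found = append(found, w)
		}
	}
	return found
}

func main() {
	// Words 0, 2 and 3 are pointer slots (mask 0x0d = binary 1101).
	// Word 1 holds plain data; word 2 is a nil pointer.
	block := []uintptr{0x1000, 42, 0, 0x2000}
	mask := []uint8{0x0d}
	fmt.Println(scanWords(block, mask))
}
```

Passing the mask in is what lets one routine serve both globals and stack frames: the caller already knows the layout (from module data or the stack map), so the scan itself does not have to look anything up. The runtime's own scanblock follows.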