Data Warehouse ---- Hive Advanced, Part One


Data Warehouse ---- Hive Advanced, Part Two (table joins, subqueries, client access via JDBC and Thrift Client, user-defined functions)

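I. Importing Data

1. Importing data with the LOAD statement

1) Syntax:

<code>LOAD DATA [LOCAL] INPATH 'filepath' [OVERWRITE] INTO TABLE tablename [PARTITION (partcol1=val1, partcol2=val2 ...)]</code>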

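Where (clauses in square brackets are optional):

LOCAL: the file path is on the local file system. Without LOCAL, the path refers to HDFS, and the files are moved (not copied) into the table's directory.

OVERWRITE: replaces the data already in the table. Without it, the loaded files are added to the existing data.

PARTITION (...): required when loading into a partitioned table, to name the target partition.

2) Examples:

(1) Without partitions: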

Here '/root/data' can be either a directory or a file:

a directory loads every file in that directory into the table;

a file loads only that one file.

(2) With partitions:
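When loading into a partitioned table, the loaded files end up in a per-partition subdirectory named `partcol=value` under the table's directory. The Python sketch below only computes that path, to make the layout concrete. It assumes the default warehouse location `/user/hive/warehouse` (the `hive.metastore.warehouse.dir` setting), the table and partition names are hypothetical, and it ignores Hive's escaping of special characters in partition values.

```python
import posixpath

# Assumption: hive.metastore.warehouse.dir still has its default value.
WAREHOUSE = "/user/hive/warehouse"


def partition_dir(table, partition=None, db="default"):
    """Return the HDFS directory a LOAD into this table/partition targets."""
    if db == "default":
        # Tables in the default database sit directly under the warehouse.
        path = posixpath.join(WAREHOUSE, table)
    else:
        # Tables in other databases sit under <db>.db/.
        path = posixpath.join(WAREHOUSE, db + ".db", table)
    # Each partition column adds one "col=value" level, in declaration order.
    for col, val in (partition or {}).items():
        path = posixpath.join(path, f"{col}={val}")
    return path


# Hypothetical table partitioned by gender:
print(partition_dir("student_part", {"gender": "M"}))
# /user/hive/warehouse/student_part/gender=M
```

A table with two partition columns simply gets two directory levels, one per column.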

2. Importing data with Sqoop

Sqoop commands are run from the operating-system shell, not from the hive> prompt.

1) Importing data from a MySQL database into HDFS:

<code>sqoop import --connect jdbc:mysql://localhost:3306/sfd --username root --password 123 --table student --columns 'sid,sname' -m 1 --target-dir '/sqoop/student'</code>

Where:

--connect: the JDBC URL of the source database

--username: the database user name

--password: the database user's password

--table: the table that holds the source data

--columns: the columns to import, separated by commas

-m 1: run the import with a single map task

--target-dir: the HDFS directory to import the data into

2) Importing data from a MySQL database into Hive:

<code>sqoop import --hive-import --connect jdbc:mysql://localhost:3306/sfd --username root --password 123 --table student --columns 'sid,sname' -m 1 --hive-table stu --where 'sid=1'</code>

Where:

--hive-table stu: import the data into the Hive table named stu

--where: the condition that selects which rows to import

3) Importing data from a MySQL database into Hive with a free-form query:

<code>sqoop import --hive-import --connect jdbc:mysql://localhost:3306/sfd --username root --password 123 -m 1 --query "select * from student where sid='1' and \$CONDITIONS" --target-dir '/sqoop/student1' --hive-table stu</code>
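Item 3 depends on the `$CONDITIONS` token that the option notes which follow describe. Roughly, each map task runs its own copy of the query with `$CONDITIONS` replaced by a condition that selects that task's slice of the rows. With more than one mapper, Sqoop also needs `--split-by` to know which column to divide on. The Python sketch below is a simplified model of the idea, not Sqoop's code: it assumes an integer split column whose minimum and maximum are already known, splits that range evenly, and uses `1 = 1` as the condition for a single mapper. The function names are hypothetical.

```python
def split_conditions(col, lo, hi, num_mappers):
    """Simplified model: one range predicate per map task over [lo, hi]."""
    if num_mappers <= 1:
        # A single map task reads everything, so its condition is always true.
        return ["1 = 1"]
    # Evenly spaced integer boundaries lo = b0 <= b1 <= ... <= bn = hi.
    bounds = [lo + (hi - lo) * i // num_mappers for i in range(num_mappers + 1)]
    conditions = []
    for i in range(num_mappers):
        # Every range is half-open except the last, which must include hi.
        upper = "<=" if i == num_mappers - 1 else "<"
        conditions.append(f"{col} >= {bounds[i]} AND {col} {upper} {bounds[i + 1]}")
    return conditions


def mapper_queries(query, col, lo, hi, num_mappers):
    """The query each map task would run, with $CONDITIONS filled in."""
    if "$CONDITIONS" not in query:
        raise ValueError("a free-form query import must contain $CONDITIONS")
    return [query.replace("$CONDITIONS", f"({c})")
            for c in split_conditions(col, lo, hi, num_mappers)]


for q in mapper_queries("select * from student where $CONDITIONS", "sid", 1, 100, 4):
    print(q)
```

With `-m 1`, as in the commands here, the query runs once with a condition that is always true. With four mappers, each map task would read one quarter of the `sid` range.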

Where:

--query: the query to run. Sqoop requires the literal token $CONDITIONS (upper case) in the query. If the query already has a WHERE clause, append it with and $CONDITIONS; otherwise, add where $CONDITIONS. If the query is wrapped in double quotes, write \$CONDITIONS so that the shell does not expand it. A free-form query import also requires --target-dir.

4) Exporting Hive data to MySQL with Sqoop:

<code>sqoop export --connect jdbc:mysql://localhost:3306/sfd --username root --password 123 -m 1 --table student1 --export-dir '/data'</code>

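Where:

--table: a table that already exists in the MySQL database

--export-dir: the HDFS directory whose files are exported into the MySQL table student1 (to export a Hive table, point it at that table's directory in HDFS)

II. Querying Data in Hive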

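1. Query syntax:

<code>SELECT [ALL | DISTINCT] select_expr, select_expr, ... FROM table_reference [WHERE where_condition] [GROUP BY col_list] [ORDER BY col_list] [CLUSTER BY col_list | [DISTRIBUTE BY col_list] [SORT BY col_list]] [LIMIT number]</code>

Example: querying the student table:

<code>select * from student;</code> (selecting every column does not start a MapReduce job)

<code>select sid from student;</code> (starts a MapReduce job)

<code>select sid,sname,math,english,math+english from student;</code> (if either math or english is NULL, the whole expression math+english is NULL; use nvl(math,0) instead, which returns 0 when math is NULL)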
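A small Python model of the NULL rule in the last example, with `None` standing in for SQL NULL. The names `sql_add` and `nvl` are illustrative stand-ins, not Hive code.

```python
def sql_add(a, b):
    """math + english: NULL if either operand is NULL."""
    if a is None or b is None:
        return None
    return a + b


def nvl(value, default):
    """nvl(value, default): default when value is NULL, otherwise value."""
    return default if value is None else value


# A hypothetical student with no math score:
math_score, english_score = None, 90
print(sql_add(math_score, english_score))          # None, i.e. NULL
print(sql_add(nvl(math_score, 0), english_score))  # 90
```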

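2. Fetch Task for simple queries

As the examples above show, a simple query that does anything other than select every column starts a MapReduce job, which makes it slow. Starting with Hive 0.10.0, Hive supports the Fetch Task feature, which lets such queries read the data directly without MapReduce.

Ways to enable Fetch Task:

a. Option 1: <code>set hive.fetch.task.conversion=more;</code>

b. Option 2: <code>hive --hiveconf hive.fetch.task.conversion=more</code>

c. Option 3: edit hive-site.xml and set the property hive.fetch.task.conversion to more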

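The first two options only affect the current Hive CLI session; after Hive is restarted, simple queries start MapReduce jobs again. The third option, in hive-site.xml, takes effect permanently.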
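The Hive documentation for `hive.fetch.task.conversion` describes which queries can skip MapReduce. With `minimal`, only `SELECT *`, filters on partition columns and `LIMIT` qualify. With `more`, any `SELECT` with filters and `LIMIT` qualifies, as long as the query reads a single table and has no aggregation, DISTINCT, join, subquery or lateral view. The Python function below is a rough sketch of that rule for reasoning about the examples above. It is not Hive's planner, it ignores other settings such as `hive.fetch.task.conversion.threshold`, and its parameters are hypothetical.

```python
def runs_as_fetch_task(conversion, select_star, filter_columns=(),
                       partition_columns=(), needs_shuffle=False):
    """Rough model of hive.fetch.task.conversion (not Hive's planner).

    needs_shuffle stands for anything that requires a MapReduce job whatever
    the setting: aggregation, DISTINCT, joins, subqueries, lateral views.
    """
    if conversion == "none" or needs_shuffle:
        return False
    if conversion == "more":
        # Any column list, filter and LIMIT can be served by a fetch task.
        return True
    if conversion == "minimal":
        # Only SELECT *, filtered (if at all) on partition columns.
        return select_star and set(filter_columns) <= set(partition_columns)
    raise ValueError(f"unknown hive.fetch.task.conversion value: {conversion}")


print(runs_as_fetch_task("minimal", select_star=True))   # select * from student
print(runs_as_fetch_task("minimal", select_star=False))  # select sid from student
print(runs_as_fetch_task("more", select_star=False))     # select sid from student
```

The first two calls mirror the query examples: under `minimal`, `select * from student` avoids MapReduce while `select sid from student` does not. Switching to `more` changes the second case.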

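3. Filtering in queries

1) Filtering with a where clause (string comparisons are case-sensitive).

Where: in a pattern such as <code>'%\\_%'</code>, the underscore is a LIKE wildcard that matches exactly one character, so it must be escaped to match a literal underscore. Inside the HiveQL string literal, \\ stands for a single backslash, so LIKE receives the pattern %\_%, in which \_ matches a literal _.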
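To see why the pattern is written this way, here is a Python model of LIKE matching: `%` matches any run of characters, `_` matches exactly one character, and a backslash makes the next character literal. It translates the pattern into a regular expression. It is a sketch of the matching rules, not Hive's implementation. Conveniently, the Python literal `"%\\_%"` also denotes the four characters `%\_%`, just like the HiveQL literal.

```python
import re


def like(value, pattern):
    """Model of SQL LIKE: % = any run of characters, _ = exactly one, \\ escapes."""
    parts = []
    i = 0
    while i < len(pattern):
        ch = pattern[i]
        if ch == "\\" and i + 1 < len(pattern):
            # Escaped character: match it literally (this is how \_ works).
            parts.append(re.escape(pattern[i + 1]))
            i += 2
            continue
        if ch == "%":
            parts.append(".*")
        elif ch == "_":
            parts.append(".")
        else:
            parts.append(re.escape(ch))
        i += 1
    # LIKE must match the whole value, and comparison is case-sensitive.
    return re.fullmatch("".join(parts), value, flags=re.DOTALL) is not None


print(like("hello_world", "%\\_%"))  # True: contains a literal underscore
print(like("helloworld", "%\\_%"))   # False
print(like("helloworld", "%_%"))     # True: unescaped _ matches any character
```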

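4. Sorting query results

Sorting is ascending by default; for descending order, add desc after the sort column.

Note: to sort by column position (for example, order by 2), first set the property: set hive.groupby.orderby.position.alias=true;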
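The sketch below models ORDER BY using 1-based column positions and `desc`, as in `order by 3 desc, 1` once the property above is set. The rows and column layout are hypothetical, and NULL handling is left out.

```python
def order_by(rows, keys):
    """Sort rows by (position, descending) pairs; positions are 1-based."""
    result = list(rows)
    # Python's sort is stable (also with reverse=True), so sorting by the
    # last key first and the first key last yields a multi-key ordering.
    for position, descending in reversed(keys):
        result.sort(key=lambda row: row[position - 1], reverse=descending)
    return result


# Hypothetical (sid, sname, math) rows:
rows = [(1, "tom", 80), (2, "mary", 95), (3, "jack", 80)]
print(order_by(rows, [(3, True), (1, False)]))  # order by 3 desc, 1
# [(2, 'mary', 95), (1, 'tom', 80), (3, 'jack', 80)]
```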

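III. Hive Built-in Functions

1. Math functions:

<code>round(45.926,2)</code>: rounds half up (45.93). The second argument is the number of digits to keep after the decimal point; a negative value rounds to the left of the decimal point (round(45.926,-1) is 50).

ceil(45.9): rounds up (46)

floor(45.9): rounds down (45)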
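A Python model of these three functions. Hive's `round` rounds half up, whereas Python's built-in `round` rounds half to even (`round(2.5)` is `2`), so the sketch goes through `decimal` instead. `hive_round` is a hypothetical name for this illustration.

```python
import math
from decimal import Decimal, ROUND_HALF_UP


def hive_round(x, d=0):
    """round(x, d): round half up to d decimal places; d < 0 rounds left of the point."""
    value = Decimal(str(x))      # str() keeps the decimal digits as written
    shift = Decimal(10) ** d     # 100 for d=2, 0.1 for d=-1
    rounded = (value * shift).quantize(Decimal(1), rounding=ROUND_HALF_UP) / shift
    return float(rounded)


print(hive_round(45.926, 2))    # 45.93
print(hive_round(45.926, -1))   # 50.0
print(math.ceil(45.9))          # 46
print(math.floor(45.9))         # 45
```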

2. String functions:
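The list below counts string positions from 1, not 0. As a reference point before the list, here is a Python model of `substr`, `lpad` and `rpad`. It follows the behaviour described in the Hive function documentation (`lpad` and `rpad` shorten a string that is already longer than the target length), but it is a sketch that handles only positive start positions and non-empty pad strings.

```python
def substr(s, start, length=None):
    """Hive-style substr with a 1-based, positive start position."""
    begin = start - 1
    if length is None:
        return s[begin:]              # to the end of the string
    return s[begin:begin + length]    # at most `length` characters


def lpad(s, n, pad):
    """Left-pad s with pad to n characters; shorten to n if s is longer."""
    if len(s) >= n:
        return s[:n]
    return (pad * n)[: n - len(s)] + s


def rpad(s, n, pad):
    """Right-pad s with pad to n characters; shorten to n if s is longer."""
    if len(s) >= n:
        return s[:n]
    return s + (pad * n)[: n - len(s)]


print(substr("foobar", 4))       # bar
print(substr("foobar", 4, 1))    # b
print(lpad("abc", 10, "*"))      # *******abc
print(rpad("abc", 10, "*"))      # abc*******
```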

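lower: converts a string to lower case

upper: converts a string to upper case

length: the length of a string

concat('hello','world'): concatenates strings (the result is 'helloworld')

substr(a,b): the substring of a from position b (counting from 1) to the end of the string

substr(a,b,c): the substring of a that starts at position b and is c characters long

trim: removes spaces from both ends of a string

lpad('abc',10,'*'): left-pads 'abc' with '*' to a length of 10

rpad: right-pads a string in the same way

3. Collection and conversion functions: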

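1) Collection functions:

size: the number of elements in an array, or of key-value pairs in a map; for example, size(map(1,'tom',2,'mary')) returns 2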
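A Python model of this subsection's two functions, `size` and the `cast` conversion listed next, with lists and dicts standing in for Hive arrays and maps and `None` for NULL. The cast model covers only a few cases: a string of digits converts, a string that cannot be converted becomes NULL, and a double loses its fractional part. It is a simplified sketch, not Hive's full conversion rules, and `cast_to_bigint` is a hypothetical name.

```python
import math
import re


def size(collection):
    """size(array) or size(map): number of elements or key-value pairs."""
    return len(collection)


def cast_to_bigint(value):
    """Rough model of cast(value as bigint); None plays the role of NULL."""
    if value is None:
        return None
    if isinstance(value, float):
        return math.trunc(value)            # fractional part dropped, toward zero
    text = str(value)
    if re.fullmatch(r"[+-]?\d+", text):     # this model accepts only a sign and digits
        return int(text)
    return None                             # unconvertible strings become NULL


print(size({1: "tom", 2: "mary"}))   # 2
print(cast_to_bigint("1"))           # 1
print(cast_to_bigint("abc"))         # None
```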

2) Conversion functions:

cast: converts a value to another type, for example cast(1 as bigint)

4. Date functions:

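to_date: the date part of a date/time string; for example, to_date('2015-04-02 13:34:12') returns '2015-04-02'

year: the year of a date

month: the month of a date

day: the day of a date

weekofyear: the week of the year in which a date falls

datediff(enddate, startdate): the number of days between two dates (enddate minus startdate)

date_add: adds a number of days to a date

date_sub: subtracts a number of days from a date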
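A Python model of these date functions, taking Hive-style `'yyyy-MM-dd'` or `'yyyy-MM-dd HH:mm:ss'` strings. It assumes `weekofyear` uses ISO-8601 week numbering (weeks start on Monday, and week 1 contains the year's first Thursday), which is consistent with the examples in Hive's function documentation. It is a sketch, not Hive's code.

```python
from datetime import date, timedelta


def _as_date(s):
    # Only the leading 'yyyy-MM-dd' part of the string matters here.
    return date.fromisoformat(s[:10])


def to_date(s):
    return _as_date(s).isoformat()


def year(s):
    return _as_date(s).year


def month(s):
    return _as_date(s).month


def day(s):
    return _as_date(s).day


def weekofyear(s):
    # ISO-8601 week number (assumption, see above).
    return _as_date(s).isocalendar()[1]


def datediff(end, start):
    # Whole days from start to end; negative if end is earlier.
    return (_as_date(end) - _as_date(start)).days


def date_add(s, days):
    return (_as_date(s) + timedelta(days=days)).isoformat()


def date_sub(s, days):
    return (_as_date(s) - timedelta(days=days)).isoformat()


print(to_date("2015-04-02 13:34:12"))        # 2015-04-02
print(datediff("2009-03-01", "2009-02-27"))  # 2
print(date_add("2008-12-31", 1))             # 2009-01-01
```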

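5. Conditional functions:

coalesce(a,b,...): returns the first non-NULL value, scanning from left to right

case...when...: conditional expression

<code>case a when b then c [when d then e]* [else f] end</code>

When a equals b, the result is c; when a equals d, the result is e; otherwise the result is f.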
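A Python model of `coalesce` and the simple `case` form above, with `None` standing in for NULL. As in SQL, a NULL operand never equals a `when` value, so it falls through to `else`. The function names and the job/raise values in the example are hypothetical.

```python
def coalesce(*args):
    """First non-NULL argument, scanning left to right; NULL if all are NULL."""
    for arg in args:
        if arg is not None:
            return arg
    return None


def simple_case(a, whens, default=None):
    """CASE a WHEN b THEN c [WHEN d THEN e]* [ELSE f] END.

    whens is a list of (when_value, then_value) pairs, tried in order.
    """
    if a is not None:
        for when_value, then_value in whens:
            if when_value is not None and a == when_value:
                return then_value
    return default


print(coalesce(None, None, 3, 4))                                             # 3
print(simple_case("MANAGER", [("PRESIDENT", 1000), ("MANAGER", 800)], 400))  # 800
```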

6. Aggregate functions:

count: number of rows

sum: sum

min: minimum

max: maximum

avg: average
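These aggregates skip NULL values, which matters when you compare `count(*)` with `count(column)` or average a column that has gaps. `min` and `max` skip NULLs in the same way. A Python model, with `None` as NULL and hypothetical data:

```python
def present(values):
    """The non-NULL values; aggregates other than count(*) ignore NULLs."""
    return [v for v in values if v is not None]


def count_star(values):
    return len(values)                    # count(*): every row


def count_col(values):
    return len(present(values))           # count(col): non-NULL values only


def sql_sum(values):
    vals = present(values)
    return sum(vals) if vals else None    # all-NULL input gives NULL, not 0


def sql_avg(values):
    vals = present(values)
    return sum(vals) / len(vals) if vals else None


scores = [80, None, 90]                   # hypothetical math column with one NULL
print(count_star(scores), count_col(scores), sql_sum(scores), sql_avg(scores))
# 3 2 170 85.0
```

Note that the average is 85.0, not 170 / 3: the NULL row is left out of both the sum and the count. Wrapping the column in nvl(math,0) would change that.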

7. Table-generating functions:

explode: turns each element of an array, or each entry of a map, into a separate row
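A Python model of `explode` as a generator: an array yields one single-column row per element, and a map yields one (key, value) row per entry. Treating a NULL input as producing no rows is an assumption of this sketch.

```python
def explode(collection):
    """Yield one output row per array element, or one (key, value) row per map entry."""
    if collection is None:
        return  # assumption: NULL input produces no rows
    if isinstance(collection, dict):
        for key, value in collection.items():
            yield (key, value)
    else:
        for element in collection:
            yield (element,)


for row in explode({1: "tom", 2: "mary"}):
    print(row)
# (1, 'tom')
# (2, 'mary')
```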

数据仓库—-hive进阶篇二

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Use ddrescue to recover data on Linux

Mar 20, 2024 pm 01:37 PM

Use ddrescue to recover data on Linux

Mar 20, 2024 pm 01:37 PM

DDREASE is a tool for recovering data from file or block devices such as hard drives, SSDs, RAM disks, CDs, DVDs and USB storage devices. It copies data from one block device to another, leaving corrupted data blocks behind and moving only good data blocks. ddreasue is a powerful recovery tool that is fully automated as it does not require any interference during recovery operations. Additionally, thanks to the ddasue map file, it can be stopped and resumed at any time. Other key features of DDREASE are as follows: It does not overwrite recovered data but fills the gaps in case of iterative recovery. However, it can be truncated if the tool is instructed to do so explicitly. Recover data from multiple files or blocks to a single

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

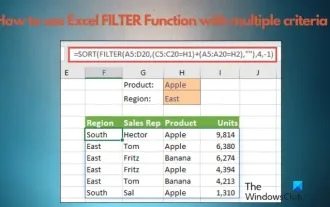

How to use Excel filter function with multiple conditions

Feb 26, 2024 am 10:19 AM

How to use Excel filter function with multiple conditions

Feb 26, 2024 am 10:19 AM

If you need to know how to use filtering with multiple criteria in Excel, the following tutorial will guide you through the steps to ensure you can filter and sort your data effectively. Excel's filtering function is very powerful and can help you extract the information you need from large amounts of data. This function can filter data according to the conditions you set and display only the parts that meet the conditions, making data management more efficient. By using the filter function, you can quickly find target data, saving time in finding and organizing data. This function can not only be applied to simple data lists, but can also be filtered based on multiple conditions to help you locate the information you need more accurately. Overall, Excel’s filtering function is a very practical

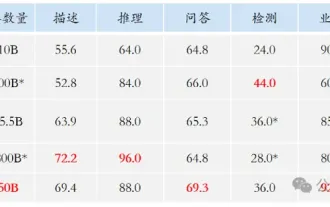

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

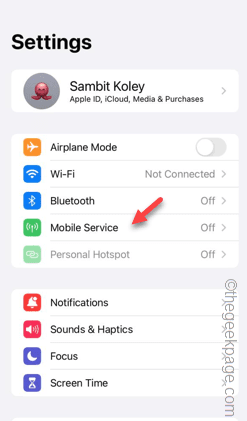

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Facing lag, slow mobile data connection on iPhone? Typically, the strength of cellular internet on your phone depends on several factors such as region, cellular network type, roaming type, etc. There are some things you can do to get a faster, more reliable cellular Internet connection. Fix 1 – Force Restart iPhone Sometimes, force restarting your device just resets a lot of things, including the cellular connection. Step 1 – Just press the volume up key once and release. Next, press the Volume Down key and release it again. Step 2 – The next part of the process is to hold the button on the right side. Let the iPhone finish restarting. Enable cellular data and check network speed. Check again Fix 2 – Change data mode While 5G offers better network speeds, it works better when the signal is weaker

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

I cry to death. The world is madly building big models. The data on the Internet is not enough. It is not enough at all. The training model looks like "The Hunger Games", and AI researchers around the world are worrying about how to feed these data voracious eaters. This problem is particularly prominent in multi-modal tasks. At a time when nothing could be done, a start-up team from the Department of Renmin University of China used its own new model to become the first in China to make "model-generated data feed itself" a reality. Moreover, it is a two-pronged approach on the understanding side and the generation side. Both sides can generate high-quality, multi-modal new data and provide data feedback to the model itself. What is a model? Awaker 1.0, a large multi-modal model that just appeared on the Zhongguancun Forum. Who is the team? Sophon engine. Founded by Gao Yizhao, a doctoral student at Renmin University’s Hillhouse School of Artificial Intelligence.

The U.S. Air Force showcases its first AI fighter jet with high profile! The minister personally conducted the test drive without interfering during the whole process, and 100,000 lines of code were tested for 21 times.

May 07, 2024 pm 05:00 PM

The U.S. Air Force showcases its first AI fighter jet with high profile! The minister personally conducted the test drive without interfering during the whole process, and 100,000 lines of code were tested for 21 times.

May 07, 2024 pm 05:00 PM

Recently, the military circle has been overwhelmed by the news: US military fighter jets can now complete fully automatic air combat using AI. Yes, just recently, the US military’s AI fighter jet was made public for the first time and the mystery was unveiled. The full name of this fighter is the Variable Stability Simulator Test Aircraft (VISTA). It was personally flown by the Secretary of the US Air Force to simulate a one-on-one air battle. On May 2, U.S. Air Force Secretary Frank Kendall took off in an X-62AVISTA at Edwards Air Force Base. Note that during the one-hour flight, all flight actions were completed autonomously by AI! Kendall said - "For the past few decades, we have been thinking about the unlimited potential of autonomous air-to-air combat, but it has always seemed out of reach." However now,

The first robot to autonomously complete human tasks appears, with five fingers that are flexible and fast, and large models support virtual space training

Mar 11, 2024 pm 12:10 PM

The first robot to autonomously complete human tasks appears, with five fingers that are flexible and fast, and large models support virtual space training

Mar 11, 2024 pm 12:10 PM

This week, FigureAI, a robotics company invested by OpenAI, Microsoft, Bezos, and Nvidia, announced that it has received nearly $700 million in financing and plans to develop a humanoid robot that can walk independently within the next year. And Tesla’s Optimus Prime has repeatedly received good news. No one doubts that this year will be the year when humanoid robots explode. SanctuaryAI, a Canadian-based robotics company, recently released a new humanoid robot, Phoenix. Officials claim that it can complete many tasks autonomously at the same speed as humans. Pheonix, the world's first robot that can autonomously complete tasks at human speeds, can gently grab, move and elegantly place each object to its left and right sides. It can autonomously identify objects