Hadoop 2.2 & HBase 0.96 Maven Dependency Summary

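HBase 0.94 does not support Hadoop 2.x very well, so adding the HBase 0.94 jar as a dependency directly may cause problems. Adding an HBase 0.96 dependency the same way does not work either: no single HBase 0.96 jar has been officially published, so Maven cannot find the jar and the build fails.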

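According to material found online, for versions after HBase 0.94 you add the `hbase-client` dependency instead. After some searching, I found that the following dependencies are needed: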

    <dependency>
        <groupId>commons-io</groupId>
        <artifactId>commons-io</artifactId>
        <version>1.3.2</version>
    </dependency>
    <dependency>
        <groupId>commons-logging</groupId>
        <artifactId>commons-logging</artifactId>
        <version>1.1.3</version>
    </dependency>
    <dependency>
        <groupId>log4j</groupId>
        <artifactId>log4j</artifactId>
        <version>1.2.17</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-client</artifactId>
        <version>0.96.1-hadoop2</version>
    </dependency>
    <dependency>
        <groupId>com.google.protobuf</groupId>
        <artifactId>protobuf-java</artifactId>
        <version>2.5.0</version>
    </dependency>
    <dependency>
        <groupId>io.netty</groupId>
        <artifactId>netty</artifactId>
        <version>3.6.6.Final</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-common</artifactId>
        <version>0.96.1-hadoop2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-protocol</artifactId>
        <version>0.96.1-hadoop2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.zookeeper</groupId>
        <artifactId>zookeeper</artifactId>
        <version>3.4.5</version>
    </dependency>
    <dependency>
        <groupId>org.cloudera.htrace</groupId>
        <artifactId>htrace-core</artifactId>
        <version>2.01</version>
    </dependency>
    <dependency>
        <groupId>org.codehaus.jackson</groupId>
        <artifactId>jackson-mapper-asl</artifactId>
        <version>1.9.13</version>
    </dependency>
    <dependency>
        <groupId>org.codehaus.jackson</groupId>
        <artifactId>jackson-core-asl</artifactId>
        <version>1.9.13</version>
    </dependency>
    <dependency>
        <groupId>org.codehaus.jackson</groupId>
        <artifactId>jackson-jaxrs</artifactId>
        <version>1.9.13</version>
    </dependency>
    <dependency>
        <groupId>org.codehaus.jackson</groupId>
        <artifactId>jackson-xc</artifactId>
        <version>1.9.13</version>
    </dependency>
    <dependency>
        <groupId>org.slf4j</groupId>
        <artifactId>slf4j-api</artifactId>
        <version>1.6.4</version>
    </dependency>
    <dependency>
        <groupId>org.slf4j</groupId>
        <artifactId>slf4j-log4j12</artifactId>
        <version>1.6.4</version>
    </dependency>
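
The three HBase artifacts in the list share the version `0.96.1-hadoop2`. One way to keep them in step is a Maven property, sketched below. The property name `hbase.version` is chosen for this illustration and does not come from the original post; only the three HBase entries are shown, and the remaining dependencies stay as listed.

```xml
<properties>
    <!-- hbase.version is a property name chosen for this sketch -->
    <hbase.version>0.96.1-hadoop2</hbase.version>
</properties>

<dependencies>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-client</artifactId>
        <version>${hbase.version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-common</artifactId>
        <version>${hbase.version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-protocol</artifactId>
        <version>${hbase.version}</version>
    </dependency>
</dependencies>
```

With this layout, moving to another 0.96.x build means changing a single line.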

To use the `org.apache.hadoop.hbase.mapreduce` API, you also need to add:
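
The snippet for this step did not survive in the post. As an assumption rather than the author's original: in HBase 0.96 the `org.apache.hadoop.hbase.mapreduce` classes (for example `TableMapReduceUtil`) are packaged in the `hbase-server` module, so a dependency along these lines is the likely candidate. The version is assumed to match the other HBase artifacts.

```xml
<!-- Assumed reconstruction: hbase-server carries the
     org.apache.hadoop.hbase.mapreduce package in HBase 0.96 -->
<dependency>
    <groupId>org.apache.hbase</groupId>
    <artifactId>hbase-server</artifactId>
    <version>0.96.1-hadoop2</version>
</dependency>
```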

Finally, I am also posting the Hadoop dependencies here so that I do not forget them:
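
The Hadoop snippet is also missing from the post, so the author's exact list is unknown. A minimal sketch, assuming Hadoop 2.2.0 (taken from the title) and the aggregate `hadoop-client` artifact, which may not be what the author actually used:

```xml
<!-- Assumed, not from the original post: hadoop-client pulls in the
     common, HDFS, and MapReduce client libraries transitively -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-client</artifactId>
    <version>2.2.0</version>
</dependency>
```

If a project needs finer control, individual artifacts such as `hadoop-common` and `hadoop-hdfs` at the same version can be declared instead.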

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1381

1381

52

52

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid When using Hadoop to process big data, you often encounter some Java exception errors, which may affect the execution of tasks and cause data processing to fail. This article will introduce some common Hadoop errors and provide ways to deal with and avoid them. Java.lang.OutOfMemoryErrorOutOfMemoryError is an error caused by insufficient memory of the Java virtual machine. When Hadoop is

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

With the advent of the big data era, data processing and storage have become more and more important, and how to efficiently manage and analyze large amounts of data has become a challenge for enterprises. Hadoop and HBase, two projects of the Apache Foundation, provide a solution for big data storage and analysis. This article will introduce how to use Hadoop and HBase in Beego for big data storage and query. 1. Introduction to Hadoop and HBase Hadoop is an open source distributed storage and computing system that can

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

As the amount of data continues to increase, traditional data processing methods can no longer handle the challenges brought by the big data era. Hadoop is an open source distributed computing framework that solves the performance bottleneck problem caused by single-node servers in big data processing through distributed storage and processing of large amounts of data. PHP is a scripting language that is widely used in web development and has the advantages of rapid development and easy maintenance. This article will introduce how to use PHP and Hadoop for big data processing. What is HadoopHadoop is

What coin is AMP?

Feb 24, 2024 pm 09:16 PM

What coin is AMP?

Feb 24, 2024 pm 09:16 PM

What is AMP Coin? The AMP token was created by the Synereo team in 2015 as the main trading currency of the Synereo platform. AMP token aims to provide users with a better digital economic experience through multiple functions and uses. Purpose of AMP Token The AMP Token has multiple roles and functions in the Synereo platform. First, as part of the platform’s cryptocurrency reward system, users are able to earn AMP rewards by sharing and promoting content, a mechanism that encourages users to participate more actively in the platform’s activities. AMP tokens can also be used to promote and distribute content on the Synereo platform. Users can increase the visibility of their content on the platform by using AMP tokens to attract more viewers to view and share

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Java big data technology stack: Understand the application of Java in the field of big data, such as Hadoop, Spark, Kafka, etc. As the amount of data continues to increase, big data technology has become a hot topic in today's Internet era. In the field of big data, we often hear the names of Hadoop, Spark, Kafka and other technologies. These technologies play a vital role, and Java, as a widely used programming language, also plays a huge role in the field of big data. This article will focus on the application of Java in large

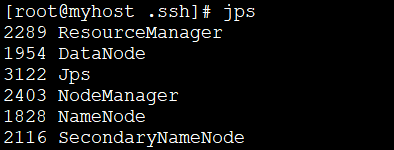

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

1: Install JDK1. Execute the following command to download the JDK1.8 installation package. wget--no-check-certificatehttps://repo.huaweicloud.com/java/jdk/8u151-b12/jdk-8u151-linux-x64.tar.gz2. Execute the following command to decompress the downloaded JDK1.8 installation package. tar-zxvfjdk-8u151-linux-x64.tar.gz3. Move and rename the JDK package. mvjdk1.8.0_151//usr/java84. Configure Java environment variables. echo'

Use PHP to achieve large-scale data processing: Hadoop, Spark, Flink, etc.

May 11, 2023 pm 04:13 PM

Use PHP to achieve large-scale data processing: Hadoop, Spark, Flink, etc.

May 11, 2023 pm 04:13 PM

As the amount of data continues to increase, large-scale data processing has become a problem that enterprises must face and solve. Traditional relational databases can no longer meet this demand. For the storage and analysis of large-scale data, distributed computing platforms such as Hadoop, Spark, and Flink have become the best choices. In the selection process of data processing tools, PHP is becoming more and more popular among developers as a language that is easy to develop and maintain. In this article, we will explore how to leverage PHP for large-scale data processing and how

Data processing engines in PHP (Spark, Hadoop, etc.)

Jun 23, 2023 am 09:43 AM

Data processing engines in PHP (Spark, Hadoop, etc.)

Jun 23, 2023 am 09:43 AM

In the current Internet era, the processing of massive data is a problem that every enterprise and institution needs to face. As a widely used programming language, PHP also needs to keep up with the times in data processing. In order to process massive data more efficiently, PHP development has introduced some big data processing tools, such as Spark and Hadoop. Spark is an open source data processing engine that can be used for distributed processing of large data sets. The biggest feature of Spark is its fast data processing speed and efficient data storage.