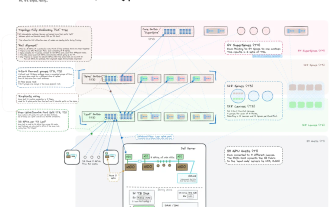

Getting Started with Hadoop: HDFS (Single-Node) Configuration and Deployment (Part 1)


1. Configure SSH

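Install the SSH server and client:

sudo apt-get install openssh-server openssh-client

Verify the installation:

ssh username@192.168.30.128

Enter the password for username when prompted. If output like the following appears, the installation succeeded. (Changing the SSH port is not recommended here: Hadoop connects on the default port 22 and throws errors at startup if the port has been changed, unless the SSH port is also changed in Hadoop's configuration files.)

Welcome to Ubuntu 13.04 (GNU/Linux 3.8.0-34-generic i686)

* Documentation: https://help.ubuntu.com/

New release '13.10' available.

Run 'do-release-upgrade' to upgrade to it.

Last login: Sun Dec 8 10:27:38 2013 from ubuntu.local

Public/private key login (no passphrase):

ssh-keygen -t rsa -P ""

Press Enter at each prompt. This creates id_rsa and id_rsa.pub under /home/username/.ssh/; known_hosts appears in the same directory once you have connected to a host. Then append the public key, id_rsa.pub, to the authorized_keys file in that directory. Be careful to append id_rsa.pub and not the private key id_rsa:

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

Public/private key login (with a passphrase):

ssh-keygen -t rsa

At the prompt "Enter passphrase (empty for no passphrase):", type the passphrase you want to use. The remaining steps are the same as above. (I have not tested this variant.)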
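The passphrase-free key setup above can be collected into one small function. This is a minimal sketch, not the author's script: the function name `setup_passwordless_ssh` and its optional directory argument are assumptions added for illustration, so that the steps can be tried in a scratch directory before touching `~/.ssh`.

```shell
# Sketch: generate an RSA key pair without a passphrase and authorize it.
# $1 is the key directory (hypothetical parameter); it defaults to ~/.ssh.
setup_passwordless_ssh() {
    key_dir="${1:-$HOME/.ssh}"
    mkdir -p "$key_dir" && chmod 700 "$key_dir" || return 1

    # Create the key pair only if none exists, so an existing key is never overwritten.
    if [ ! -f "$key_dir/id_rsa" ]; then
        ssh-keygen -q -t rsa -P "" -f "$key_dir/id_rsa" || return 1
    fi

    # Authorize the PUBLIC key (id_rsa.pub), never the private key.
    cat "$key_dir/id_rsa.pub" >> "$key_dir/authorized_keys"

    # sshd ignores authorized_keys files that other users can write to.
    chmod 600 "$key_dir/authorized_keys"
}
```

Calling `setup_passwordless_ssh` with no argument operates on `~/.ssh`; afterwards `ssh localhost` should log in without asking for a password.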

2. Install the JDK (using OpenJDK; as for why not the Oracle JDK, ask Baidu or Google)

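Install the JDK (openjdk-7 is the newest version at the time of writing):

sudo apt-get install openjdk-7-jdk

Set the environment variables by adding the lines below to the end of ~/.bashrc. The file belongs to your own user, so sudo is not needed:

vim ~/.bashrc

export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-i386
export JRE_HOME=${JAVA_HOME}/jre
export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
export PATH=${JAVA_HOME}/bin:$PATH

JAVA_HOME must point at the JDK that was actually installed. The path above is the usual location of openjdk-7 on 32-bit Ubuntu; check /usr/lib/jvm/ if yours differs.

Apply the changes:

source ~/.bashrc

Check that the JDK is installed:

java -version

Output like the following means it worked:

java version "1.7.0_25"
OpenJDK Runtime Environment (IcedTea 2.3.10) (7u25-2.3.10-1ubuntu0.13.04.2)
OpenJDK Client VM (build 23.7-b01, mixed mode, sharing)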
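Rather than typing the JAVA_HOME path by hand, it can be derived from the `java` binary that is already on the PATH. The sketch below assumes a Linux system where `readlink -f` is available (GNU coreutils); the helper name `java_home_from_binary` is made up for this example.

```shell
# Derive a JAVA_HOME candidate from the full path of a java binary.
# Handles both <home>/bin/java and the JDK 7-style <home>/jre/bin/java.
java_home_from_binary() {
    home="${1%/bin/java}"          # drop the trailing /bin/java
    printf '%s\n' "${home%/jre}"   # drop a trailing /jre if present
}

# Resolve the java on PATH through its symlinks and print the candidate.
if command -v java >/dev/null 2>&1; then
    java_home_from_binary "$(readlink -f "$(command -v java)")"
fi
```

The printed path is only a candidate: confirm that it contains `bin/javac` before exporting it as JAVA_HOME in ~/.bashrc.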

3. Install Hadoop and Configure HDFS

Download Hadoop, then unpack and install it:

tar -zxvf hadoop-1.2.1.tar.gz
(unpacks into the hadoop-1.2.1 directory)

mv hadoop-1.2.1 hadoop
(renames the hadoop-1.2.1 directory to hadoop)

sudo cp -r hadoop /usr/local
(copies hadoop into /usr/local; -r is required to copy a directory, and /usr/local is normally writable only by root)

Configure HDFS by editing three files: hadoop/conf/core-site.xml, hadoop/conf/hdfs-site.xml, and hadoop/conf/mapred-site.xml.

sudo vim /usr/local/hadoop/conf/core-site.xml

Change the contents to:

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://192.168.30.128:9000</value>
  </property>
</configuration>
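If the machine's address changes, the file has to be edited again, so it can be convenient to generate it. The following is a sketch under stated assumptions: `write_core_site` is a hypothetical helper, and its arguments (target directory, host, port) are not part of Hadoop itself. Only the `fs.default.name` property and the `hdfs://host:port` form come from the configuration above.

```shell
# Write a minimal core-site.xml into a conf directory.
# $1 = conf directory, $2 = NameNode host, $3 = port (defaults to 9000).
write_core_site() {
    conf_dir="$1"; host="$2"; port="${3:-9000}"
    cat > "$conf_dir/core-site.xml" <<EOF
<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://${host}:${port}</value>
  </property>
</configuration>
EOF
}
```

For example, `write_core_site /usr/local/hadoop/conf 192.168.30.128` reproduces the file shown above, provided the calling user may write to that directory.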

sudo vim /usr/local/hadoop/conf/hdfs-site.xml

Change the contents to:

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/username/hadoop_tmp</value><!-- this directory must be created -->
    <description>A base for other temporary directories.</description>
  </property>
  <property>
    <name>dfs.name.dir</name>
    <value>/tmp/hadoop/dfs/datalog1,/tmp/hadoop/dfs/datalog2</value>
  </property>
  <property>
    <name>dfs.data.dir</name>
    <value>/tmp/hadoop/dfs/data1,/tmp/hadoop/dfs/data2</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
</configuration>

Note that with a single DataNode only one copy of each block can be stored, so a replication factor of 2 leaves blocks reported as under-replicated; 1 is the usual value for a single-node setup.
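The directories named in hdfs-site.xml can be created up front. This sketch simply hard-codes the paths from the configuration above; the function name `make_hdfs_dirs` and its optional prefix argument are assumptions added so the snippet can be tried under a scratch directory first. Hadoop may create some of these directories itself, so this is a precaution and not a required step for all of them.

```shell
# Create the local directories referenced in hdfs-site.xml.
# $1 is an optional prefix (hypothetical); leave it empty to use the real paths.
make_hdfs_dirs() {
    root="${1:-}"
    for dir in \
        /home/username/hadoop_tmp \
        /tmp/hadoop/dfs/datalog1 /tmp/hadoop/dfs/datalog2 \
        /tmp/hadoop/dfs/data1 /tmp/hadoop/dfs/data2
    do
        mkdir -p "${root}${dir}" || return 1
    done
}
```

Run it as the user that will start Hadoop, so that the daemons own the directories they write to. Keep in mind that anything under /tmp may be cleared on reboot.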

sudo vim /usr/local/hadoop/conf/mapred-site.xml

Change the contents to:

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <property>
    <name>mapred.job.tracker</name>
    <value>192.168.30.128:9001</value>
  </property>
</configuration>
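The article moves straight from configuration to running a job. On a fresh Hadoop 1.x install, the NameNode normally has to be formatted once and the daemons started before any `hadoop fs` command will work. The sketch below uses the standard Hadoop 1.x scripts (`bin/hadoop namenode -format`, `bin/start-all.sh`) and the JDK's `jps`; the `run` wrapper, the `DRY_RUN` variable, and the function name `start_single_node` are assumptions added for illustration. Formatting erases any existing HDFS metadata, so only do it on a new installation.

```shell
# Assumes Hadoop lives where the article installed it.
HADOOP_HOME="${HADOOP_HOME:-/usr/local/hadoop}"

# Print each command; execute it as well unless DRY_RUN=1.
run() {
    echo "+ $*"
    [ "${DRY_RUN:-0}" = "1" ] || "$@"
}

start_single_node() {
    # First run only: initialises the directories listed in dfs.name.dir.
    run "$HADOOP_HOME/bin/hadoop" namenode -format || return 1
    # Starts the HDFS and MapReduce daemons (uses SSH to reach the local host).
    run "$HADOOP_HOME/bin/start-all.sh" || return 1
    # Lists the running Java processes so the daemons can be checked.
    run jps
}
```

`DRY_RUN=1 start_single_node` prints the three commands without executing them. After a real run, `jps` should list NameNode, DataNode, SecondaryNameNode, JobTracker, and TaskTracker.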

4. Run wordcount

Create an input directory in HDFS. Do not create the output directory: the wordcount job fails with an error if it already exists. The commands below are run from Hadoop's bin directory (/usr/local/hadoop/bin).

./hadoop fs -mkdir /input

./hadoop fs -put myword.txt /input

./hadoop jar /usr/local/hadoop/hadoop-examples-1.2.1.jar wordcount /input /output

./hadoop fs -cat /output/part-r-00000
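For a small input file, the job's result can be cross-checked locally. This is a sketch using ordinary Unix tools, not part of Hadoop: the function name `local_wordcount` is made up, and it assumes the example job splits words on whitespace and writes `word<TAB>count` lines sorted by word.

```shell
# Count whitespace-separated words in a file and print "word<TAB>count",
# sorted by word in byte order.
local_wordcount() {
    tr -s '[:space:]' '\n' < "$1" |   # one word per line
        grep -v '^$' |                # drop empty lines left by leading whitespace
        LC_ALL=C sort |               # group identical words together
        uniq -c |                     # prefix each distinct word with its count
        awk '{ print $2 "\t" $1 }'    # reorder to word<TAB>count
}
```

Running `local_wordcount myword.txt` and comparing its output with `./hadoop fs -cat /output/part-r-00000` is a quick way to confirm that the cluster processed the whole file.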

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1375

1375

52

52

A Diffusion Model Tutorial Worth Your Time, from Purdue University

Apr 07, 2024 am 09:01 AM

A Diffusion Model Tutorial Worth Your Time, from Purdue University

Apr 07, 2024 am 09:01 AM

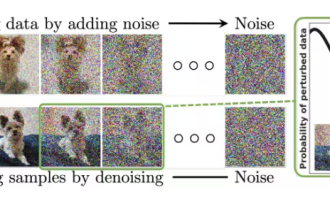

Diffusion can not only imitate better, but also "create". The diffusion model (DiffusionModel) is an image generation model. Compared with the well-known algorithms such as GAN and VAE in the field of AI, the diffusion model takes a different approach. Its main idea is a process of first adding noise to the image and then gradually denoising it. How to denoise and restore the original image is the core part of the algorithm. The final algorithm is able to generate an image from a random noisy image. In recent years, the phenomenal growth of generative AI has enabled many exciting applications in text-to-image generation, video generation, and more. The basic principle behind these generative tools is the concept of diffusion, a special sampling mechanism that overcomes the limitations of previous methods.

Generate PPT with one click! Kimi: Let the 'PPT migrant workers' become popular first

Aug 01, 2024 pm 03:28 PM

Generate PPT with one click! Kimi: Let the 'PPT migrant workers' become popular first

Aug 01, 2024 pm 03:28 PM

Kimi: In just one sentence, in just ten seconds, a PPT will be ready. PPT is so annoying! To hold a meeting, you need to have a PPT; to write a weekly report, you need to have a PPT; to make an investment, you need to show a PPT; even when you accuse someone of cheating, you have to send a PPT. College is more like studying a PPT major. You watch PPT in class and do PPT after class. Perhaps, when Dennis Austin invented PPT 37 years ago, he did not expect that one day PPT would become so widespread. Talking about our hard experience of making PPT brings tears to our eyes. "It took three months to make a PPT of more than 20 pages, and I revised it dozens of times. I felt like vomiting when I saw the PPT." "At my peak, I did five PPTs a day, and even my breathing was PPT." If you have an impromptu meeting, you should do it

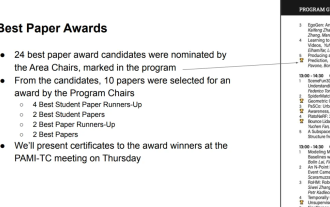

All CVPR 2024 awards announced! Nearly 10,000 people attended the conference offline, and a Chinese researcher from Google won the best paper award

Jun 20, 2024 pm 05:43 PM

All CVPR 2024 awards announced! Nearly 10,000 people attended the conference offline, and a Chinese researcher from Google won the best paper award

Jun 20, 2024 pm 05:43 PM

In the early morning of June 20th, Beijing time, CVPR2024, the top international computer vision conference held in Seattle, officially announced the best paper and other awards. This year, a total of 10 papers won awards, including 2 best papers and 2 best student papers. In addition, there were 2 best paper nominations and 4 best student paper nominations. The top conference in the field of computer vision (CV) is CVPR, which attracts a large number of research institutions and universities every year. According to statistics, a total of 11,532 papers were submitted this year, and 2,719 were accepted, with an acceptance rate of 23.6%. According to Georgia Institute of Technology’s statistical analysis of CVPR2024 data, from the perspective of research topics, the largest number of papers is image and video synthesis and generation (Imageandvideosyn

Understand Linux Bashrc: functions, configuration and usage

Mar 20, 2024 pm 03:30 PM

Understand Linux Bashrc: functions, configuration and usage

Mar 20, 2024 pm 03:30 PM

Understanding Linux Bashrc: Function, Configuration and Usage In Linux systems, Bashrc (BourneAgainShellruncommands) is a very important configuration file, which contains various commands and settings that are automatically run when the system starts. The Bashrc file is usually located in the user's home directory and is a hidden file. Its function is to customize the Bashshell environment for the user. 1. Bashrc function setting environment

From bare metal to a large model with 70 billion parameters, here is a tutorial and ready-to-use scripts

Jul 24, 2024 pm 08:13 PM

From bare metal to a large model with 70 billion parameters, here is a tutorial and ready-to-use scripts

Jul 24, 2024 pm 08:13 PM

We know that LLM is trained on large-scale computer clusters using massive data. This site has introduced many methods and technologies used to assist and improve the LLM training process. Today, what we want to share is an article that goes deep into the underlying technology and introduces how to turn a bunch of "bare metals" without even an operating system into a computer cluster for training LLM. This article comes from Imbue, an AI startup that strives to achieve general intelligence by understanding how machines think. Of course, turning a bunch of "bare metal" without an operating system into a computer cluster for training LLM is not an easy process, full of exploration and trial and error, but Imbue finally successfully trained an LLM with 70 billion parameters. and in the process accumulate

A must-read for technical beginners: Analysis of the difficulty levels of C language and Python

Mar 22, 2024 am 10:21 AM

A must-read for technical beginners: Analysis of the difficulty levels of C language and Python

Mar 22, 2024 am 10:21 AM

Title: A must-read for technical beginners: Difficulty analysis of C language and Python, requiring specific code examples In today's digital age, programming technology has become an increasingly important ability. Whether you want to work in fields such as software development, data analysis, artificial intelligence, or just learn programming out of interest, choosing a suitable programming language is the first step. Among many programming languages, C language and Python are two widely used programming languages, each with its own characteristics. This article will analyze the difficulty levels of C language and Python

AI in use | AI created a life vlog of a girl living alone, which received tens of thousands of likes in 3 days

Aug 07, 2024 pm 10:53 PM

AI in use | AI created a life vlog of a girl living alone, which received tens of thousands of likes in 3 days

Aug 07, 2024 pm 10:53 PM

Editor of the Machine Power Report: Yang Wen The wave of artificial intelligence represented by large models and AIGC has been quietly changing the way we live and work, but most people still don’t know how to use it. Therefore, we have launched the "AI in Use" column to introduce in detail how to use AI through intuitive, interesting and concise artificial intelligence use cases and stimulate everyone's thinking. We also welcome readers to submit innovative, hands-on use cases. Video link: https://mp.weixin.qq.com/s/2hX_i7li3RqdE4u016yGhQ Recently, the life vlog of a girl living alone became popular on Xiaohongshu. An illustration-style animation, coupled with a few healing words, can be easily picked up in just a few days.

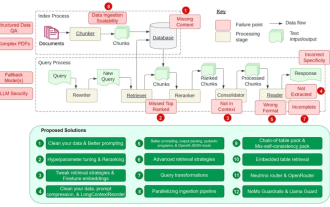

Counting down the 12 pain points of RAG, NVIDIA senior architect teaches solutions

Jul 11, 2024 pm 01:53 PM

Counting down the 12 pain points of RAG, NVIDIA senior architect teaches solutions

Jul 11, 2024 pm 01:53 PM

Retrieval-augmented generation (RAG) is a technique that uses retrieval to boost language models. Specifically, before a language model generates an answer, it retrieves relevant information from an extensive document database and then uses this information to guide the generation process. This technology can greatly improve the accuracy and relevance of content, effectively alleviate the problem of hallucinations, increase the speed of knowledge update, and enhance the traceability of content generation. RAG is undoubtedly one of the most exciting areas of artificial intelligence research. For more details about RAG, please refer to the column article on this site "What are the new developments in RAG, which specializes in making up for the shortcomings of large models?" This review explains it clearly." But RAG is not perfect, and users often encounter some "pain points" when using it. Recently, NVIDIA’s advanced generative AI solution