Writing a Simple MapReduce Program and Running It on Hadoop 2.2.0

After several days of struggling, I finally got Hadoop 2.2.0 configured (for how to deploy Hadoop on Linux, see this blog's post 《在Fedora上部署Hadoop2.2.0伪分布式平台》, "Deploying a Hadoop 2.2.0 Pseudo-Distributed Platform on Fedora"). Today's post is about how to run a MapReduce program of our own on that Hadoop 2.2.0 pseudo-distributed setup. First, here are the Maven packages the program depends on:

[Maven dependency listing, 22 lines, not preserved]

Now for the program itself. The code is as follows:

[Program source listing, 115 lines, not preserved]
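The idea behind a max-temperature job like this one (judging by the class name MaxTemperature) can be sketched in plain Java. This is only an illustration under assumptions, not the author's program: it uses no Hadoop classes, the class MaxTemperatureSketch and its methods are hypothetical, and the input format (one "year temperature" pair per line) is assumed, since the layout of data.txt is not described in this post. The map step emits a (year, temperature) pair for each valid line, the pairs are grouped by year, and the reduce step keeps the maximum of each group.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the map and reduce steps; no Hadoop classes are used.
class MaxTemperatureSketch {

    // "Map" step: turn one input line into a (year, temperature) pair.
    // Assumed line format: "<year> <temperature>", e.g. "1949 111".
    // Returns null for lines that do not match, like a mapper that skips bad records.
    static Map.Entry<String, Integer> map(String line) {
        String[] parts = line.trim().split("\\s+");
        if (parts.length != 2) {
            return null;
        }
        try {
            return new AbstractMap.SimpleEntry<>(parts[0], Integer.parseInt(parts[1]));
        } catch (NumberFormatException e) {
            return null;
        }
    }

    // "Reduce" step: the maximum of all temperatures collected for one year.
    static int reduce(List<Integer> temperatures) {
        int max = Integer.MIN_VALUE;
        for (int t : temperatures) {
            max = Math.max(max, t);
        }
        return max;
    }

    // Runs map on every line, groups the pairs by year, then reduces each group.
    static Map<String, Integer> run(List<String> lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            Map.Entry<String, Integer> pair = map(line);
            if (pair != null) {
                grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
            }
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : grouped.entrySet()) {
            result.put(group.getKey(), reduce(group.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        // Made-up sample records; the last one is malformed and gets skipped.
        List<String> lines = Arrays.asList("1949 111", "1949 78", "1950 0", "1950 22", "bad line here");
        System.out.println(run(lines)); // prints {1949=111, 1950=22}
    }
}
```

In a real job, Hadoop performs the grouping between the two steps and runs the mapper and reducer in separate tasks; the sketch only shows the data flow.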

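Compile the program above and package it into a jar file; it is then ready to be deployed on Hadoop 2.2.0 (this article assumes you already have Hadoop 2.2.0 set up). The deployment steps follow.

First, start Hadoop 2.2.0 with the following commands: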

[Start-up commands, 2 lines, not preserved]

To check whether Hadoop 2.2.0 started successfully, run the following command:

[jps command and its output, 9 lines, not preserved]
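The jps check can also be automated. The sketch below is a hedged illustration, not part of the original post: JpsCheck and missingDaemons are hypothetical names, the sample output and its process IDs are made up, and each jps line is assumed to have the form "<pid> <name>". It reports which of the five required daemons (NameNode, SecondaryNameNode, DataNode, ResourceManager, NodeManager) are absent. Names are compared exactly, because "NameNode" is a substring of "SecondaryNameNode".

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Checks captured jps output for the five daemons of a pseudo-distributed setup.
class JpsCheck {

    static final List<String> REQUIRED = Arrays.asList(
            "NameNode", "SecondaryNameNode", "DataNode", "ResourceManager", "NodeManager");

    // Returns the required daemons that do not appear in the given jps output.
    // Assumes each line looks like "<pid> <name>"; other lines are ignored.
    static List<String> missingDaemons(String jpsOutput) {
        Set<String> running = new HashSet<>();
        for (String line : jpsOutput.split("\\r?\\n")) {
            String[] parts = line.trim().split("\\s+");
            if (parts.length >= 2) {
                running.add(parts[1]);
            }
        }
        List<String> missing = new ArrayList<>();
        for (String name : REQUIRED) {
            if (!running.contains(name)) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // Made-up sample output; real process IDs will differ.
        String healthy = "2081 NameNode\n2231 DataNode\n2416 SecondaryNameNode\n"
                + "2587 ResourceManager\n2693 NodeManager\n3102 Jps\n";
        String broken = "2081 NameNode\n2416 SecondaryNameNode\n"
                + "2587 ResourceManager\n2693 NodeManager\n3102 Jps\n";
        System.out.println(missingDaemons(healthy)); // prints []
        System.out.println(missingDaemons(broken));  // prints [DataNode]
    }
}
```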

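jps is a command that ships with the JDK and lives in the jdk/bin directory. If the processes above show up on your machine (the five processes NameNode, SecondaryNameNode, NodeManager, ResourceManager, and DataNode must all be present!), your Hadoop server has started successfully.

Now run the jar file packaged earlier. Here it is Hadoop.jar, and its absolute path is /home/wyp/IdeaProjects/Hadoop/out/artifacts/Hadoop_jar/Hadoop.jar (if you don't know what an absolute path is, go and read up on it!). Run the following command: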

[Job submission command, 5 lines, not preserved]

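(The above is a single command; I split it across lines only because it is so long. In practice, enter it on one line!) In it, /home/wyp/Downloads/hadoop/bin/hadoop is the absolute path of the hadoop command; if that command is already on your PATH, you can simply type hadoop. com/wyp/hadoop/MaxTemperature is the class containing the program's main function. /user/wyp/data.txt is the absolute path, in the Hadoop file system (HDFS), of the file to be analyzed, i.e. the input (note: this is not a path on your Linux file system!). /user/wyp/result is the absolute path where the results are written (again, an HDFS path, not a Linux one).

/user/wyp/result must not already exist, otherwise the job throws an exception. This is a Hadoop safeguard: you would not want the output of a job that ran for days to be overwritten by accident, would you? So if /user/wyp/result exists, the job fails with an exception, which is a nice touch.

Enter the command above and you should see output similar to the following: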

    13/10/28 15:20:44 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
    13/10/28 15:20:44 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
    13/10/28 15:20:45 WARN mapreduce.JobSubmitter: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.
    13/10/28 15:20:45 WARN mapreduce.JobSubmitter: No job jar file set. User classes may not be found. See Job or Job#setJar(String).
    13/10/28 15:20:45 INFO mapred.FileInputFormat: Total input paths to process : 1
    13/10/28 15:20:46 INFO mapreduce.JobSubmitter: number of splits:2
    13/10/28 15:20:46 INFO Configuration.deprecation: user.name is deprecated. Instead, use mapreduce.job.user.name
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.output.value.class is deprecated. Instead, use mapreduce.job.output.value.class
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.job.name is deprecated. Instead, use mapreduce.job.name
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.input.dir is deprecated. Instead, use mapreduce.input.fileinputformat.inputdir
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.output.dir is deprecated. Instead, use mapreduce.output.fileoutputformat.outputdir
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.output.key.class is deprecated. Instead, use mapreduce.job.output.key.class
    13/10/28 15:20:46 INFO Configuration.deprecation: mapred.working.dir is deprecated. Instead, use mapreduce.job.working.dir
    13/10/28 15:20:46 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1382942307976_0008
    13/10/28 15:20:47 INFO mapred.YARNRunner: Job jar is not present. Not adding any jar to the list of resources.
    13/10/28 15:20:49 INFO impl.YarnClientImpl: Submitted application application_1382942307976_0008 to ResourceManager at /0.0.0.0:8032
    13/10/28 15:20:49 INFO mapreduce.Job: The url to track the job: http://wyp:8088/proxy/application_1382942307976_0008/
    13/10/28 15:20:49 INFO mapreduce.Job: Running job: job_1382942307976_0008
    13/10/28 15:20:59 INFO mapreduce.Job: Job job_1382942307976_0008 running in uber mode : false
    13/10/28 15:20:59 INFO mapreduce.Job: map 0% reduce 0%
    13/10/28 15:21:35 INFO mapreduce.Job: map 100% reduce 0%
    13/10/28 15:21:38 INFO mapreduce.Job: map 0% reduce 0%
    13/10/28 15:21:38 INFO mapreduce.Job: Task Id : attempt_1382942307976_0008_m_000000_0, Status : FAILED
    Error: java.lang.RuntimeException: Error in configuring object
        at org.apache.hadoop.util.ReflectionUtils.setJobConf(ReflectionUtils.java:109)
        at org.apache.hadoop.util.ReflectionUtils.setConf(ReflectionUtils.java:75)
        at org.apache.hadoop.util.ReflectionUtils.newInstance(ReflectionUtils.java:133)
        at org.apache.hadoop.mapred.MapTask.runOldMapper(MapTask.java:425)
        at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)
        at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:162)
        at java.security.AccessController.doPrivileged(Native Method)
        at javax.security.auth.Subject.doAs(Subject.java:415)
        at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1491)
        at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:157)
    Caused by: java.lang.reflect.InvocationTargetException
        at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
        at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)
        at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
        at java.lang.reflect.Method.invoke(Method.java:606)
        at org.apache.hadoop.util.ReflectionUtils.setJobConf(ReflectionUtils.java:106)
        ... 9 more
    Caused by: java.lang.RuntimeException: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class com.wyp.hadoop.MaxTemperatureMapper1 not found
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:1752)
        at org.apache.hadoop.mapred.JobConf.getMapperClass(JobConf.java:1058)
        at org.apache.hadoop.mapred.MapRunner.configure(MapRunner.java:38)
        ... 14 more
    Caused by: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class com.wyp.hadoop.MaxTemperatureMapper1 not found
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:1720)
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:1744)
        ... 16 more
    Caused by: java.lang.ClassNotFoundException: Class com.wyp.hadoop.MaxTemperatureMapper1 not found
        at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:1626)
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:1718)
        ... 17 more
    Container killed by the ApplicationMaster.
    Container killed on request. Exit code is 143

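Surprisingly, the job threw an exception (ClassNotFoundException)! What is going on? Honestly, I don't fully understand it myself! One clue is in the log above: the warning "No job jar file set. User classes may not be found." suggests that no job jar was shipped with the job, which would explain why the map task could not find com.wyp.hadoop.MaxTemperatureMapper1.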

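A Google search turned up someone else's explanation:

In my experience, this is usually caused by one of the following:

(1) You wrote a Java library and packaged it as a jar, then wrote a Hadoop program whose mapper and reducer call into that jar.

(2) You wrote a Hadoop program that calls a third-party Java library.

You then distributed your own jar or the third-party jar to the HADOOP_HOME directory on each TaskTracker, ran your Java program, and got the error above.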
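The ClassNotFoundException in the trace comes down to a lookup by class name that finds nothing on the task's classpath. A minimal plain-Java sketch of such a lookup follows, as an illustration only: ClassLookupSketch and isLoadable are hypothetical names, and the sketch uses the standard Class.forName rather than Hadoop's own Configuration.getClassByName, which is the method that appears in the trace.

```java
// Shows what "class not found" means: a lookup by name against the current classpath.
class ClassLookupSketch {

    // Returns true if the named class can be found by this class's class loader.
    // The class is located but not initialized (second argument is false).
    static boolean isLoadable(String className) {
        try {
            Class.forName(className, false, ClassLookupSketch.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // A JDK class is always reachable.
        System.out.println(isLoadable("java.lang.String")); // prints true
        // The mapper class is only reachable if the jar containing it is on the classpath,
        // which is not the case when this sketch is run on its own.
        System.out.println(isLoadable("com.wyp.hadoop.MaxTemperatureMapper1")); // prints false
    }
}
```

The same name resolves on the machine that submits the job, where Hadoop.jar is on the classpath, and fails inside a task container that never received the jar.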

So how do we fix it? One clumsy approach is to run the following command before running the Hadoop job:

[Command, 2 lines, not preserved]

Here /home/wyp/IdeaProjects/Hadoop/out/artifacts/Hadoop_jar/ is the directory containing the Hadoop.jar file above. Now run the Hadoop job command again:

    [wyp@wyp Hadoop_jar]$ hadoop jar /home/wyp/IdeaProjects/Hadoop/out/artifacts/Hadoop_jar/Hadoop.jar com/wyp/hadoop/MaxTemperature /user/wyp/data.txt /user/wyp/result
    13/10/28 15:34:16 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
    13/10/28 15:34:16 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
    13/10/28 15:34:17 WARN mapreduce.JobSubmitter: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.
    13/10/28 15:34:17 INFO mapred.FileInputFormat: Total input paths to process : 1
    13/10/28 15:34:17 INFO mapreduce.JobSubmitter: number of splits:2
    13/10/28 15:34:17 INFO Configuration.deprecation: user.name is deprecated. Instead, use mapreduce.job.user.name
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.output.value.class is deprecated. Instead, use mapreduce.job.output.value.class
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.job.name is deprecated. Instead, use mapreduce.job.name
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.input.dir is deprecated. Instead, use mapreduce.input.fileinputformat.inputdir
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.output.dir is deprecated. Instead, use mapreduce.output.fileoutputformat.outputdir
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.output.key.class is deprecated. Instead, use mapreduce.job.output.key.class
    13/10/28 15:34:17 INFO Configuration.deprecation: mapred.working.dir is deprecated. Instead, use mapreduce.job.working.dir
    13/10/28 15:34:18 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1382942307976_0009
    13/10/28 15:34:18 INFO impl.YarnClientImpl: Submitted application application_1382942307976_0009 to ResourceManager at /0.0.0.0:8032
    13/10/28 15:34:18 INFO mapreduce.Job: The url to track the job: http://wyp:8088/proxy/application_1382942307976_0009/
    13/10/28 15:34:18 INFO mapreduce.Job: Running job: job_1382942307976_0009
    13/10/28 15:34:26 INFO mapreduce.Job: Job job_1382942307976_0009 running in uber mode : false
    13/10/28 15:34:26 INFO mapreduce.Job: map 0% reduce 0%
    13/10/28 15:34:41 INFO mapreduce.Job: map 50% reduce 0%
    13/10/28 15:34:53 INFO mapreduce.Job: map 100% reduce 0%
    13/10/28 15:35:17 INFO mapreduce.Job: map 100% reduce 100%
    13/10/28 15:35:18 INFO mapreduce.Job: Job job_1382942307976_0009 completed successfully
    13/10/28 15:35:18 INFO mapreduce.Job: Counters: 43
        File System Counters
            FILE: Number of bytes read=144425
            FILE: Number of bytes written=524725
            FILE: Number of read operations=0
            FILE: Number of large read operations=0
            FILE: Number of write operations=0
            HDFS: Number of bytes read=1777598
            HDFS: Number of bytes written=18
            HDFS: Number of read operations=9
            HDFS: Number of large read operations=0
            HDFS: Number of write operations=2
        Job Counters
            Launched map tasks=2
            Launched reduce tasks=1
            Data-local map tasks=2
            Total time spent by all maps in occupied slots (ms)=38057
            Total time spent by all reduces in occupied slots (ms)=24800
        Map-Reduce Framework
            Map input records=13130
            Map output records=13129
            Map output bytes=118161
            Map output materialized bytes=144431
            Input split bytes=182
            Combine input records=0
            Combine output records=0
            Reduce input groups=2
            Reduce shuffle bytes=144431
            Reduce input records=13129
            Reduce output records=2
            Spilled Records=26258
            Shuffled Maps =2
            Failed Shuffles=0
            Merged Map outputs=2
            GC time elapsed (ms)=321
            CPU time spent (ms)=5110
            Physical memory (bytes) snapshot=552824832
            Virtual memory (bytes) snapshot=1228738560
            Total committed heap usage (bytes)=459800576
        Shuffle Errors
            BAD_ID=0
            CONNECTION=0
            IO_ERROR=0
            WRONG_LENGTH=0
            WRONG_MAP=0
            WRONG_REDUCE=0
        File Input Format Counters
            Bytes Read=1777416
        File Output Format Counters
            Bytes Written=18

At this point the program has run successfully. Nice, isn't it? So how do you view the results of the run? Simple: run the following command:

[Command and its output, 11 lines, not preserved]

With that, the MapReduce program you wrote yourself has finally run successfully!
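One practical consequence of the output-path rule described earlier: running the job a second time with the same /user/wyp/result fails until that directory is removed or a different output path is chosen. The sketch below illustrates the kind of guard involved. It is a local-filesystem analogy under assumptions, not Hadoop's implementation: OutputDirGuard and requireAbsent are hypothetical names, and the check runs against a temporary local directory rather than HDFS.

```java
import java.io.File;
import java.nio.file.Files;
import java.nio.file.Path;

// Local-filesystem analogy of "refuse to run if the output directory already exists".
class OutputDirGuard {

    // Throws if the output path already exists; otherwise returns normally.
    static void requireAbsent(Path output) {
        if (Files.exists(output)) {
            throw new IllegalStateException("Output directory " + output + " already exists");
        }
    }

    public static void main(String[] args) {
        // A scratch location under the system temp directory (not an HDFS path).
        File base = new File(System.getProperty("java.io.tmpdir"), "output-guard-" + System.nanoTime());
        Path result = new File(base, "result").toPath();

        requireAbsent(result);        // first run: the path is free, so the check passes
        result.toFile().mkdirs();     // stand-in for the job writing its output
        try {
            requireAbsent(result);    // second run with the same path: the check throws
        } catch (IllegalStateException e) {
            System.out.println("Refused: " + e.getMessage());
        } finally {
            // Clean up the scratch directories.
            result.toFile().delete();
            base.delete();
        }
    }
}
```

Failing early like this costs nothing, whereas silently overwriting could destroy the output of a long-running job.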

The test data used by the program can be downloaded from: http://pan.baidu.com/s/1iSacM

Source: 过往记忆 (http://www.iteblog.com/)

Original post: 编写简单的Mapreduce程序并部署在Hadoop2.2.0上运行 (http://www.iteblog.com/archives/789)

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

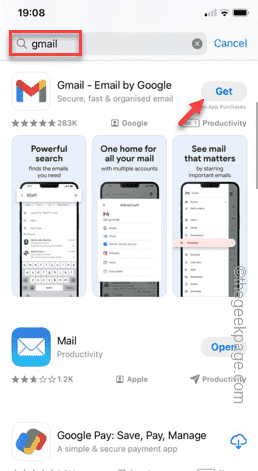

How to make Google Maps the default map in iPhone

Apr 17, 2024 pm 07:34 PM

How to make Google Maps the default map in iPhone

Apr 17, 2024 pm 07:34 PM

The default map on the iPhone is Maps, Apple's proprietary geolocation provider. Although the map is getting better, it doesn't work well outside the United States. It has nothing to offer compared to Google Maps. In this article, we discuss the feasible steps to use Google Maps to become the default map on your iPhone. How to Make Google Maps the Default Map in iPhone Setting Google Maps as the default map app on your phone is easier than you think. Follow the steps below – Prerequisite steps – You must have Gmail installed on your phone. Step 1 – Open the AppStore. Step 2 – Search for “Gmail”. Step 3 – Click next to Gmail app

The easiest way to query the hard drive serial number

Feb 26, 2024 pm 02:24 PM

The easiest way to query the hard drive serial number

Feb 26, 2024 pm 02:24 PM

The hard disk serial number is an important identifier of the hard disk and is usually used to uniquely identify the hard disk and identify the hardware. In some cases, we may need to query the hard drive serial number, such as when installing an operating system, finding the correct device driver, or performing hard drive repairs. This article will introduce some simple methods to help you check the hard drive serial number. Method 1: Use Windows Command Prompt to open the command prompt. In Windows system, press Win+R keys, enter "cmd" and press Enter key to open the command

Clock app missing in iPhone: How to fix it

May 03, 2024 pm 09:19 PM

Clock app missing in iPhone: How to fix it

May 03, 2024 pm 09:19 PM

Is the clock app missing from your phone? The date and time will still appear on your iPhone's status bar. However, without the Clock app, you won’t be able to use world clock, stopwatch, alarm clock, and many other features. Therefore, fixing missing clock app should be at the top of your to-do list. These solutions can help you resolve this issue. Fix 1 – Place the Clock App If you mistakenly removed the Clock app from your home screen, you can put the Clock app back in its place. Step 1 – Unlock your iPhone and start swiping to the left until you reach the App Library page. Step 2 – Next, search for “clock” in the search box. Step 3 – When you see “Clock” below in the search results, press and hold it and

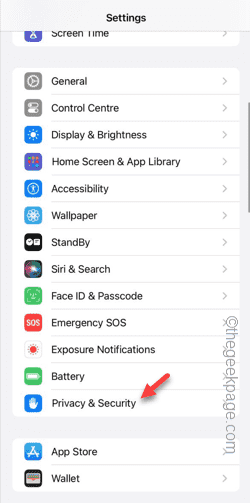

Can't allow access to camera and microphone in iPhone

Apr 23, 2024 am 11:13 AM

Can't allow access to camera and microphone in iPhone

Apr 23, 2024 am 11:13 AM

Are you getting "Unable to allow access to camera and microphone" when trying to use the app? Typically, you grant camera and microphone permissions to specific people on a need-to-provide basis. However, if you deny permission, the camera and microphone will not work and will display this error message instead. Solving this problem is very basic and you can do it in a minute or two. Fix 1 – Provide Camera, Microphone Permissions You can provide the necessary camera and microphone permissions directly in settings. Step 1 – Go to the Settings tab. Step 2 – Open the Privacy & Security panel. Step 3 – Turn on the “Camera” permission there. Step 4 – Inside, you will find a list of apps that have requested permission for your phone’s camera. Step 5 – Open the “Camera” of the specified app

Write a method to calculate power function in C language

Feb 19, 2024 pm 01:00 PM

Write a method to calculate power function in C language

Feb 19, 2024 pm 01:00 PM

How to write exponentiation function in C language Exponentiation (exponentiation) is a commonly used operation in mathematics, which means multiplying a number by itself several times. In C language, we can implement this function by writing a power function. The following will introduce in detail how to write a power function in C language and give specific code examples. Determine the input and output of the function The input of the power function usually contains two parameters: base and exponent, and the output is the calculated result. therefore, we

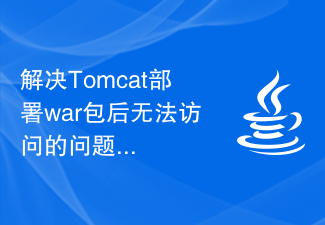

How to solve the problem of inaccessibility after Tomcat deploys war package

Jan 13, 2024 pm 12:07 PM

How to solve the problem of inaccessibility after Tomcat deploys war package

Jan 13, 2024 pm 12:07 PM

How to solve the problem that Tomcat cannot successfully access the war package after deploying it requires specific code examples. As a widely used Java Web server, Tomcat allows developers to package their own developed Web applications into war files for deployment. However, sometimes we may encounter the problem of being unable to successfully access the war package after deploying it. This may be caused by incorrect configuration or other reasons. In this article, we'll provide some concrete code examples that address this dilemma. 1. Check Tomcat service

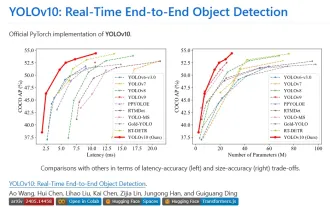

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

1. Introduction Over the past few years, YOLOs have become the dominant paradigm in the field of real-time object detection due to its effective balance between computational cost and detection performance. Researchers have explored YOLO's architectural design, optimization goals, data expansion strategies, etc., and have made significant progress. At the same time, relying on non-maximum suppression (NMS) for post-processing hinders end-to-end deployment of YOLO and adversely affects inference latency. In YOLOs, the design of various components lacks comprehensive and thorough inspection, resulting in significant computational redundancy and limiting the capabilities of the model. It offers suboptimal efficiency, and relatively large potential for performance improvement. In this work, the goal is to further improve the performance efficiency boundary of YOLO from both post-processing and model architecture. to this end

Gunicorn Deployment Guide for Flask Applications

Jan 17, 2024 am 08:13 AM

Gunicorn Deployment Guide for Flask Applications

Jan 17, 2024 am 08:13 AM

How to deploy Flask application using Gunicorn? Flask is a lightweight Python Web framework that is widely used to develop various types of Web applications. Gunicorn (GreenUnicorn) is a Python-based HTTP server used to run WSGI (WebServerGatewayInterface) applications. This article will introduce how to use Gunicorn to deploy Flask applications, with