The memcached threading model

Memcached data structures

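memcached implements multithreading by creating multiple libevent instances: one for the main thread and one for each of the n worker threads. Every thread runs its own libevent instance. The main thread's event loop owns the listening fd: it waits for clients' connection requests, accepts them, and hands each established connection to a worker in round-robin order. The worker threads handle read, write, and other events on the connections that have already been established. This is the "one event loop per thread" model.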

First, let's look at the main data structures.

thread.c

CQ_ITEM wraps the fd of an established connection, as returned by accept in the main thread.

    /* An item in the connection queue. */
    typedef struct conn_queue_item CQ_ITEM;
    struct conn_queue_item {
        int sfd;
        int init_state;
        int event_flags;
        int read_buffer_size;
        int is_udp;
        CQ_ITEM *next;
    };

CQ is a singly linked list that manages CQ_ITEMs.

    /* A connection queue. */
    typedef struct conn_queue CQ;
    struct conn_queue {
        CQ_ITEM *head;
        CQ_ITEM *tail;
        pthread_mutex_t lock;
        pthread_cond_t cond;
    };
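The excerpt shows only the layout of the queue, not how it is used. As a rough sketch of how a mutex-protected singly linked queue of this shape can be pushed to and popped from, consider the following. The `demo_` names are hypothetical and are not memcached's own `cq_push`/`cq_pop`; the item is reduced to an fd plus a link, and the condition variable is left out.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

/* Hypothetical minimal item: only the fd and the link are kept. */
typedef struct demo_item {
    int sfd;
    struct demo_item *next;
} demo_item;

/* Hypothetical queue with the same head/tail/lock shape as CQ. */
typedef struct {
    demo_item *head;
    demo_item *tail;
    pthread_mutex_t lock;
} demo_queue;

static void demo_cq_init(demo_queue *q) {
    assert(q != NULL);
    q->head = NULL;
    q->tail = NULL;
    pthread_mutex_init(&q->lock, NULL);
}

/* Append at the tail; called by the producer (the main thread). */
static void demo_cq_push(demo_queue *q, demo_item *item) {
    item->next = NULL;
    pthread_mutex_lock(&q->lock);
    if (q->tail == NULL)
        q->head = item;
    else
        q->tail->next = item;
    q->tail = item;
    pthread_mutex_unlock(&q->lock);
}

/* Detach from the head; returns NULL when the queue is empty. */
static demo_item *demo_cq_pop(demo_queue *q) {
    pthread_mutex_lock(&q->lock);
    demo_item *item = q->head;
    if (item != NULL) {
        q->head = item->next;
        if (q->head == NULL)
            q->tail = NULL;
    }
    pthread_mutex_unlock(&q->lock);
    return item;
}
```

Pushing two items and popping twice returns them in insertion order; a third pop returns NULL. Keeping both head and tail pointers makes push and pop constant-time, so the lock is held only briefly.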

LIBEVENT_THREAD wraps memcached's per-thread state. As the definition shows, each thread holds a CQ queue, a notification pipe, and a libevent instance (event_base).

    typedef struct {
        pthread_t thread_id;        /* unique ID of this thread */
        struct event_base *base;    /* libevent handle this thread uses */
        struct event notify_event;  /* listen event for notify pipe */
        int notify_receive_fd;      /* receiving end of notify pipe */
        int notify_send_fd;         /* sending end of notify pipe */
        CQ new_conn_queue;          /* queue of new connections to handle */
    } LIBEVENT_THREAD;
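The notification pipe is what lets one thread wake another thread's event loop. The sketch below illustrates the idea with plain POSIX calls. It is an assumption-laden stand-in: `poll()` replaces libevent here, whereas memcached registers `notify_receive_fd` with the thread's `event_base`, and all `demo_` names are hypothetical.

```c
#include <assert.h>
#include <poll.h>
#include <unistd.h>

/* Hypothetical pair mirroring notify_receive_fd / notify_send_fd. */
typedef struct {
    int receive_fd;
    int send_fd;
} demo_notify;

/* Create the pipe; returns 0 on success, -1 on failure. */
static int demo_notify_init(demo_notify *n) {
    int fds[2];
    assert(n != NULL);
    if (pipe(fds) != 0)
        return -1;
    n->receive_fd = fds[0];
    n->send_fd = fds[1];
    return 0;
}

/* Producer side: one byte means "one new item is waiting". */
static int demo_notify_signal(demo_notify *n) {
    return write(n->send_fd, "", 1) == 1 ? 0 : -1;
}

/*
 * Consumer side: wait up to timeout_ms for a wake-up byte and consume it.
 * Returns 1 if a byte was consumed, 0 on timeout, -1 on error.
 */
static int demo_notify_wait(demo_notify *n, int timeout_ms) {
    struct pollfd pfd = { .fd = n->receive_fd, .events = POLLIN };
    int ready = poll(&pfd, 1, timeout_ms);
    if (ready <= 0)
        return ready;
    char buf[1];
    return read(n->receive_fd, buf, 1) == 1 ? 1 : -1;
}
```

Before any signal, `demo_notify_wait` times out and returns 0; after one `demo_notify_signal`, the next wait returns 1 and the pipe is empty again. Because the wake-up arrives as an ordinary readable fd, the receiving thread can wait for it in the same loop that waits for its sockets.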

conn is memcached's wrapper for each network connection.

    typedef struct {
        int sfd;
        int state;
        struct event event;
        short which;
        char *rbuf;
        ... /* many state flags and read/write buffer fields are omitted here */
    } conn;

memcached handles events mainly by setting and switching the state of each connection. The core function is drive_machine, the connection state machine.

Memcached thread processing flow

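In memcached.c, the main function first initializes the main thread's libevent instance, then initializes and starts all the worker threads. Next, the main thread calls server_socket (only the TCP case is analyzed here), which creates the listening socket, binds the address, sets non-blocking mode, and registers a libevent read event for the listening socket. Finally, the main thread calls event_base_loop to accept incoming connection requests.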

    main() {
        /* initialize main thread libevent instance */
        main_base = event_init();
        /* start up worker threads if MT mode */
        thread_init(settings.num_threads, main_base);
        server_socket(settings.port, 0);
        /* enter the event loop */
        event_base_loop(main_base, 0);
    }
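The socket setup attributed to server_socket (create, set non-blocking, bind, listen) can be sketched with plain POSIX calls. This is an illustration, not memcached's implementation: `demo_listen_socket` is a hypothetical name, it binds only to the IPv4 loopback address, and it leaves out the libevent registration step.

```c
#include <assert.h>
#include <fcntl.h>
#include <netinet/in.h>
#include <string.h>
#include <sys/socket.h>
#include <unistd.h>

/*
 * Create a non-blocking TCP listening socket on 127.0.0.1.
 * A port of 0 lets the kernel pick a free port.
 * Returns the fd, or -1 on failure.
 */
static int demo_listen_socket(unsigned short port, int backlog) {
    assert(backlog > 0);

    int sfd = socket(AF_INET, SOCK_STREAM, 0);
    if (sfd == -1)
        return -1;

    /* Non-blocking mode: accept() returns at once instead of waiting. */
    int flags = fcntl(sfd, F_GETFL, 0);
    if (flags < 0 || fcntl(sfd, F_SETFL, flags | O_NONBLOCK) < 0) {
        close(sfd);
        return -1;
    }

    /* Best effort: allow quick restarts on the same port. */
    int reuse = 1;
    setsockopt(sfd, SOL_SOCKET, SO_REUSEADDR, &reuse, sizeof(reuse));

    struct sockaddr_in addr;
    memset(&addr, 0, sizeof(addr));
    addr.sin_family = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_LOOPBACK);
    addr.sin_port = htons(port);

    if (bind(sfd, (struct sockaddr *)&addr, sizeof(addr)) == -1 ||
        listen(sfd, backlog) == -1) {
        close(sfd);
        return -1;
    }
    return sfd;
}
```

With no client connecting, calling `accept` on the returned fd fails immediately rather than blocking. That property is what allows the main thread to call accept from an event callback without stalling its loop.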

Finally, let's look at the core of memcached's network event handling: drive_machine. drive_machine runs in a multithreaded environment; both the main thread and the workers execute it.

    static void drive_machine(conn *c) {
        bool stop = false;
        int sfd, flags = 1;
        socklen_t addrlen;
        struct sockaddr_storage addr;
        int res;
        assert(c != NULL);
        while (!stop) {
            switch(c->state) {
            case conn_listening:
                addrlen = sizeof(addr);
                if ((sfd = accept(c->sfd, (struct sockaddr *)&addr, &addrlen)) == -1) {
                    /* many error-handling cases are omitted here */
                    break;
                }
                if ((flags = fcntl(sfd, F_GETFL, 0))

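drive_machine decides what to do based on the connection's current state. Every read or write event registered with libevent ends up calling back into this core function, so when an event is registered, the corresponding state is also written into the conn structure. When libevent fires the callback, it passes that conn structure along, and it becomes the parameter of this function.

The connection states are enumerated as follows.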

    enum conn_states {
        conn_listening, /** the socket which listens for connections */
        conn_read,      /** reading in a command line */
        conn_write,     /** writing out a simple response */
        conn_nread,     /** reading in a fixed number of bytes */
        conn_swallow,   /** swallowing unnecessary bytes w/o storing */
        conn_closing,   /** closing this connection */
        conn_mwrite,    /** writing out many items sequentially */
    };

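In practice, the conn_listening case is handled by the main thread alone; worker threads never execute this branch. After accept, the main thread calls

    dispatch_conn_new(sfd, conn_read, EV_READ | EV_PERSIST, DATA_BUFFER_SIZE, false);

This function is where the worker threads get notified. Here it is: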

    void dispatch_conn_new(int sfd, int init_state, int event_flags,
                           int read_buffer_size, int is_udp) {
        CQ_ITEM *item = cqi_new();
        int thread = (last_thread + 1) % settings.num_threads;
        last_thread = thread;
        item->sfd = sfd;
        item->init_state = init_state;
        item->event_flags = event_flags;
        item->read_buffer_size = read_buffer_size;
        item->is_udp = is_udp;
        cq_push(&threads[thread].new_conn_queue, item);
        MEMCACHED_CONN_DISPATCH(sfd, threads[thread].thread_id);
        if (write(threads[thread].notify_send_fd, "", 1) != 1) {
            perror("Writing to thread notify pipe");
        }
    }

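As the code shows, the main thread first creates a new CQ_ITEM, then picks a thread by round robin and uses cq_push to put the CQ_ITEM on that thread's CQ queue. How does the chosen worker thread find out? Through

    write(threads[thread].notify_send_fd, "", 1)

which writes one byte into that thread's pipe, so the thread's libevent instance immediately invokes the thread_libevent_process callback. The worker then takes the item off its queue and registers a read event for the connection. When data arrives on that connection, drive_machine is eventually called back as well. In other words, the conn_read case and all the other cases of drive_machine are handled by the workers, while the main thread handles only conn_listening, that is, establishing connections. This multithreaded event mechanism is well worth studying when designing Linux back-end network programs.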
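The dispatch path described above (round-robin pick, enqueue, one-byte wake-up, worker pops the item) can be sketched end to end. This is a simplified illustration under several assumptions: workers block in `read()` instead of running a libevent loop, "handling" a connection is reduced to incrementing a counter, a negative `sfd` serves as a made-up shutdown marker, and every `demo_` name is hypothetical.

```c
#include <assert.h>
#include <pthread.h>
#include <stdlib.h>
#include <unistd.h>

#define DEMO_WORKERS 3

typedef struct demo_node { int sfd; struct demo_node *next; } demo_node;

typedef struct {
    pthread_t tid;
    int notify_receive_fd, notify_send_fd;
    pthread_mutex_t lock;
    demo_node *head, *tail;
    int handled;                /* connections this worker has picked up */
} demo_worker;

static demo_worker demo_workers[DEMO_WORKERS];
static int demo_last_thread = -1;

/* Worker loop: block on the pipe, then pop one item per wake-up byte. */
static void *demo_worker_main(void *arg) {
    demo_worker *w = arg;
    char buf[1];
    while (read(w->notify_receive_fd, buf, 1) == 1) {
        pthread_mutex_lock(&w->lock);
        demo_node *n = w->head;
        if (n != NULL) {
            w->head = n->next;
            if (w->head == NULL) w->tail = NULL;
        }
        pthread_mutex_unlock(&w->lock);
        if (n == NULL) continue;
        int sfd = n->sfd;
        free(n);
        if (sfd < 0) break;     /* hypothetical shutdown marker */
        w->handled++;           /* real code would register a read event for sfd */
    }
    return NULL;
}

/* Main-thread side: pick the next worker, enqueue, then wake it up.
 * Returns the chosen worker index, or -1 if the wake-up write failed. */
static int demo_dispatch(int sfd) {
    int thread = (demo_last_thread + 1) % DEMO_WORKERS;
    demo_worker *w = &demo_workers[thread];
    demo_node *n = malloc(sizeof(*n));
    assert(n != NULL);
    demo_last_thread = thread;
    n->sfd = sfd;
    n->next = NULL;
    pthread_mutex_lock(&w->lock);
    if (w->tail == NULL) w->head = n; else w->tail->next = n;
    w->tail = n;
    pthread_mutex_unlock(&w->lock);
    return write(w->notify_send_fd, "", 1) == 1 ? thread : -1;
}

/* Create one pipe and one thread per worker. */
static void demo_start_workers(void) {
    for (int i = 0; i < DEMO_WORKERS; i++) {
        demo_worker *w = &demo_workers[i];
        int fds[2];
        if (pipe(fds) != 0) abort();
        w->notify_receive_fd = fds[0];
        w->notify_send_fd = fds[1];
        w->head = w->tail = NULL;
        w->handled = 0;
        pthread_mutex_init(&w->lock, NULL);
        if (pthread_create(&w->tid, NULL, demo_worker_main, w) != 0) abort();
    }
}

/* Send one shutdown marker per worker, then wait for all of them to exit. */
static void demo_stop_workers(void) {
    for (int i = 0; i < DEMO_WORKERS; i++)
        demo_dispatch(-1);
    for (int i = 0; i < DEMO_WORKERS; i++)
        pthread_join(demo_workers[i].tid, NULL);
}
```

After `demo_start_workers()`, four calls to `demo_dispatch` go to workers 0, 1, 2, and 0 in turn. Once `demo_stop_workers()` returns, the `handled` counters read 2, 1, and 1. Since the item is pushed before the byte is written, a worker that wakes up always finds at least one item in its queue.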

Original article: memcached的线程模型. Thanks to the original author for sharing.

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1386

1386

52

52

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

Comprehensively surpassing DPO: Chen Danqi's team proposed simple preference optimization SimPO, and also refined the strongest 8B open source model

Jun 01, 2024 pm 04:41 PM

Comprehensively surpassing DPO: Chen Danqi's team proposed simple preference optimization SimPO, and also refined the strongest 8B open source model

Jun 01, 2024 pm 04:41 PM

In order to align large language models (LLMs) with human values and intentions, it is critical to learn human feedback to ensure that they are useful, honest, and harmless. In terms of aligning LLM, an effective method is reinforcement learning based on human feedback (RLHF). Although the results of the RLHF method are excellent, there are some optimization challenges involved. This involves training a reward model and then optimizing a policy model to maximize that reward. Recently, some researchers have explored simpler offline algorithms, one of which is direct preference optimization (DPO). DPO learns the policy model directly based on preference data by parameterizing the reward function in RLHF, thus eliminating the need for an explicit reward model. This method is simple and stable

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

At the forefront of software technology, UIUC Zhang Lingming's group, together with researchers from the BigCode organization, recently announced the StarCoder2-15B-Instruct large code model. This innovative achievement achieved a significant breakthrough in code generation tasks, successfully surpassing CodeLlama-70B-Instruct and reaching the top of the code generation performance list. The unique feature of StarCoder2-15B-Instruct is its pure self-alignment strategy. The entire training process is open, transparent, and completely autonomous and controllable. The model generates thousands of instructions via StarCoder2-15B in response to fine-tuning the StarCoder-15B base model without relying on expensive manual annotation.

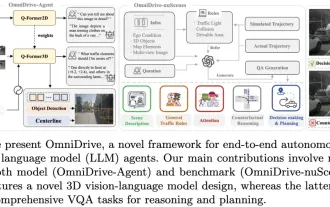

LLM is all done! OmniDrive: Integrating 3D perception and reasoning planning (NVIDIA's latest)

May 09, 2024 pm 04:55 PM

LLM is all done! OmniDrive: Integrating 3D perception and reasoning planning (NVIDIA's latest)

May 09, 2024 pm 04:55 PM

Written above & the author’s personal understanding: This paper is dedicated to solving the key challenges of current multi-modal large language models (MLLMs) in autonomous driving applications, that is, the problem of extending MLLMs from 2D understanding to 3D space. This expansion is particularly important as autonomous vehicles (AVs) need to make accurate decisions about 3D environments. 3D spatial understanding is critical for AVs because it directly impacts the vehicle’s ability to make informed decisions, predict future states, and interact safely with the environment. Current multi-modal large language models (such as LLaVA-1.5) can often only handle lower resolution image inputs (e.g.) due to resolution limitations of the visual encoder, limitations of LLM sequence length. However, autonomous driving applications require

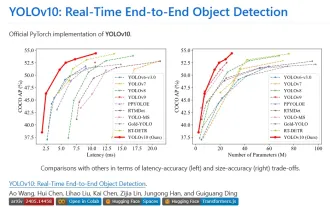

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

1. Introduction Over the past few years, YOLOs have become the dominant paradigm in the field of real-time object detection due to its effective balance between computational cost and detection performance. Researchers have explored YOLO's architectural design, optimization goals, data expansion strategies, etc., and have made significant progress. At the same time, relying on non-maximum suppression (NMS) for post-processing hinders end-to-end deployment of YOLO and adversely affects inference latency. In YOLOs, the design of various components lacks comprehensive and thorough inspection, resulting in significant computational redundancy and limiting the capabilities of the model. It offers suboptimal efficiency, and relatively large potential for performance improvement. In this work, the goal is to further improve the performance efficiency boundary of YOLO from both post-processing and model architecture. to this end

C++ Concurrent Programming: How to avoid thread starvation and priority inversion?

May 06, 2024 pm 05:27 PM

C++ Concurrent Programming: How to avoid thread starvation and priority inversion?

May 06, 2024 pm 05:27 PM

To avoid thread starvation, you can use fair locks to ensure fair allocation of resources, or set thread priorities. To solve priority inversion, you can use priority inheritance, which temporarily increases the priority of the thread holding the resource; or use lock promotion, which increases the priority of the thread that needs the resource.