Hadoop Pig Job Name


By default, the Hadoop job name of a Pig script is the script name, but you can set it yourself in the script.

On the command line:

<code>set job.name 'myselfJobName'</code>

http://jagaranandhadoop.blogspot.com/2011/07/hadoop-pig-job-name-in-commandline.html

http://pig.apache.org/docs/r0.9.2/cmds.html#set
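Below is a minimal sketch of the same setting placed inside a script file. The script name `daily_report.pig`, the input and output paths, and the record schema are hypothetical placeholders that do not come from the original post. Only the `set job.name` line matters here. In a script, the statement ends with a semicolon like other Pig statements. The value must be wrapped in plain ASCII single quotes, because typographic quotes such as ‘ ’ are not valid Pig string delimiters.

```pig
-- daily_report.pig (hypothetical script name and paths, for illustration only)
-- Without the next line, the Hadoop job would be named after the script.
-- Note the plain ASCII single quotes around the value.
set job.name 'myselfJobName';

-- Hypothetical input: tab-separated (user, bytes) records
logs = LOAD 'input/logs' USING PigStorage('\t') AS (user:chararray, bytes:long);

-- Total bytes transferred per user
by_user = GROUP logs BY user;
totals = FOREACH by_user GENERATE group AS user, SUM(logs.bytes) AS total_bytes;

STORE totals INTO 'output/daily_report' USING PigStorage('\t');
```

To run it, use something like `pig daily_report.pig`, or `pig -x local daily_report.pig` for local mode. On a cluster, the resulting MapReduce job should then appear as `myselfJobName` in the JobTracker web UI instead of under the script name.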

Original article: Hadoop Pig Job Name. Thanks to the original author for sharing.



Hot Topics

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid When using Hadoop to process big data, you often encounter some Java exception errors, which may affect the execution of tasks and cause data processing to fail. This article will introduce some common Hadoop errors and provide ways to deal with and avoid them. Java.lang.OutOfMemoryErrorOutOfMemoryError is an error caused by insufficient memory of the Java virtual machine. When Hadoop is

How to reproduce the RCE vulnerability of unauthorized access to the XXL-JOB API interface

May 12, 2023 am 09:37 AM

How to reproduce the RCE vulnerability of unauthorized access to the XXL-JOB API interface

May 12, 2023 am 09:37 AM

XXL-JOB Description XXL-JOB is a lightweight distributed task scheduling platform. Its core design goals are rapid development, easy learning, lightweight, and easy expansion. The source code is now open and connected to the online product lines of many companies, ready to use out of the box. 1. Vulnerability details The core issue of this vulnerability is GLUE mode. XXL-JOB supports multi-language and script tasks through "GLUE mode". The task features of this mode are as follows: ●Multi-language support: supports Java, Shell, Python, NodeJS, PHP, PowerShell... and other types. ●WebIDE: Tasks are maintained in the dispatch center in source code mode and support online development and maintenance through WebIDE. ●Dynamic effective: user online communication

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

With the advent of the big data era, data processing and storage have become more and more important, and how to efficiently manage and analyze large amounts of data has become a challenge for enterprises. Hadoop and HBase, two projects of the Apache Foundation, provide a solution for big data storage and analysis. This article will introduce how to use Hadoop and HBase in Beego for big data storage and query. 1. Introduction to Hadoop and HBase Hadoop is an open source distributed storage and computing system that can

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

As the amount of data continues to increase, traditional data processing methods can no longer handle the challenges brought by the big data era. Hadoop is an open source distributed computing framework that solves the performance bottleneck problem caused by single-node servers in big data processing through distributed storage and processing of large amounts of data. PHP is a scripting language that is widely used in web development and has the advantages of rapid development and easy maintenance. This article will introduce how to use PHP and Hadoop for big data processing. What is HadoopHadoop is

php提交表单通过后,弹出的对话框怎样在当前页弹出,该如何解决

Jun 13, 2016 am 10:23 AM

php提交表单通过后,弹出的对话框怎样在当前页弹出,该如何解决

Jun 13, 2016 am 10:23 AM

php提交表单通过后,弹出的对话框怎样在当前页弹出php提交表单通过后,弹出的对话框怎样在当前页弹出而不是在空白页弹出?想实现这样的效果:而不是空白页弹出:------解决方案--------------------如果你的验证用PHP在后端,那么就用Ajax;仅供参考:HTML code

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Java big data technology stack: Understand the application of Java in the field of big data, such as Hadoop, Spark, Kafka, etc. As the amount of data continues to increase, big data technology has become a hot topic in today's Internet era. In the field of big data, we often hear the names of Hadoop, Spark, Kafka and other technologies. These technologies play a vital role, and Java, as a widely used programming language, also plays a huge role in the field of big data. This article will focus on the application of Java in large

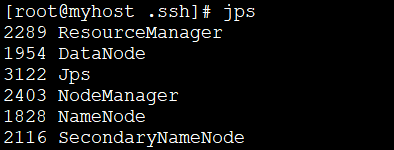

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

1: Install JDK1. Execute the following command to download the JDK1.8 installation package. wget--no-check-certificatehttps://repo.huaweicloud.com/java/jdk/8u151-b12/jdk-8u151-linux-x64.tar.gz2. Execute the following command to decompress the downloaded JDK1.8 installation package. tar-zxvfjdk-8u151-linux-x64.tar.gz3. Move and rename the JDK package. mvjdk1.8.0_151//usr/java84. Configure Java environment variables. echo'

Introduction to the three core components of hadoop

Mar 13, 2024 pm 05:54 PM

Introduction to the three core components of hadoop

Mar 13, 2024 pm 05:54 PM

The three core components of Hadoop are: Hadoop Distributed File System (HDFS), MapReduce and Yet Another Resource Negotiator (YARN).