[转载]MapReduce的模式、算法和用例

转载自:http://yangguan.org/mapreduce-patterns-algorithms-and-use-cases/翻译自:http://highlyscalable.wordpress.com/2012/02/01/mapreduce-patterns/在这篇文章里总结了几种网上或者论文中常见的MapReduce模式和算法,并系统化的解释了这些技术的不同

转载自:http://yangguan.org/mapreduce-patterns-algorithms-and-use-cases/ 翻译自:http://highlyscalable.wordpress.com/2012/02/01/mapreduce-patterns/ 在这篇文章里总结了几种网上或者论文中常见的MapReduce模式和算法,并系统化的解释了这些技术的不同之处。所有描述性的文字和代码都使用了标准hadoop的MapReduce模型,包括Mappers, Reduces, Combiners, Partitioners,和 sorting。如下图所示。

基本MapReduce模式

计数与求和

问题陈述:?有许多文档,每个文档都有一些字段组成。需要计算出每个字段在所有文档中的出现次数或者这些字段的其他什么统计值。例如,给定一个log文件,其中的每条记录都包含一个响应时间,需要计算出平均响应时间。 解决方案: 让我们先从简单的例子入手。在下面的代码片段里,Mapper每遇到指定词就把频次记1,Reducer一个个遍历这些词的集合然后把他们的频次加和。class Mapper

method Map(docid id, doc d)

for all term t in doc d do

Emit(term t, count 1)

class Reducer

method Reduce(term t, counts [c1, c2,...])

sum = 0

for all count c in [c1, c2,...] do

sum = sum + c

Emit(term t, count sum)

这种方法的缺点显而易见,Mapper提交了太多无意义的计数。它完全可以通过先对每个文档中的词进行计数从而减少传递给Reducer的数据量:

class Mapper

method Map(docid id, doc d)

H = new AssociativeArray

for all term t in doc d do

H{t} = H{t} + 1

for all term t in H do

Emit(term t, count H{t})class Mapper method Map(docid id, doc d) for all term t in doc d do Emit(term t, count 1) class Combiner method Combine(term t, [c1, c2,...]) sum = 0 for all count c in [c1, c2,...] do sum = sum + c Emit(term t, count sum) class Reducer method Reduce(term t, counts [c1, c2,...]) sum = 0 for all count c in [c1, c2,...] do sum = sum + c Emit(term t, count sum)

应用:

Log 分析, 数据查询整理归类

问题陈述: 有一系列条目,每个条目都有几个属性,要把具有同一属性值的条目都保存在一个文件里,或者把条目按照属性值分组。 最典型的应用是倒排索引。 解决方案: 解决方案很简单。 在 Mapper 中以每个条目的所需属性值作为 key,其本身作为值传递给 Reducer。 Reducer 取得按照属性值分组的条目,然后可以处理或者保存。如果是在构建倒排索引,那么 每个条目相当于一个词而属性值就是词所在的文档ID。应用:

倒排索引, ETL过滤 (文本查找),解析和校验

问题陈述: 假设有很多条记录,需要从其中找出满足某个条件的所有记录,或者将每条记录传换成另外一种形式(转换操作相对于各条记录独立,即对一条记录的操作与其他记录无关)。像文本解析、特定值抽取、格式转换等都属于后一种用例。 解决方案: 非常简单,在Mapper 里逐条进行操作,输出需要的值或转换后的形式。应用:

日志分析,数据查询,ETL,数据校验分布式任务执行

问题陈述: 大型计算可以分解为多个部分分别进行然后合并各个计算的结果以获得最终结果。 解决方案:? 将数据切分成多份作为每个 Mapper 的输入,每个Mapper处理一份数据,执行同样的运算,产生结果,Reducer把多个Mapper的结果组合成一个。案例研究: 数字通信系统模拟

像 WiMAX 这样的数字通信模拟软件通过系统模型来传输大量的随机数据,然后计算传输中的错误几率。 每个 Mapper 处理样本 1/N ?的数据,计算出这部分数据的错误率,然后在 Reducer 里计算平均错误率。应用:

工程模拟,数字分析,性能测试排序

问题陈述: 有许多条记录,需要按照某种规则将所有记录排序或是按照顺序来处理记录。 解决方案:?简单排序很好办 – Mappers 将待排序的属性值为键,整条记录为值输出。 不过实际应用中的排序要更加巧妙一点, 这就是它之所以被称为MapReduce 核心的原因(“核心”是说排序?因为证明Hadoop计算能力的实验是大数据排序?还是说Hadoop的处理过程中对key排序的环节?)。在实践中,常用组合键来实现二次排序和分组。 MapReduce 最初只能够对键排序, 但是也有技术利用可以利用Hadoop 的特性来实现按值排序。想了解的话可以看?这篇博客。 按照BigTable的概念,使用 MapReduce来对最初数据而非中间数据排序,也即保持数据的有序状态更有好处,必须注意这一点。换句话说,在数据插入时排序一次要比在每次查询数据的时候排序更高效。应用:

ETL,数据分析非基本 MapReduce 模式

迭代消息传递 (图处理)

问题陈述: 假设一个实体网络,实体之间存在着关系。 需要按照与它比邻的其他实体的属性计算出一个状态。这个状态可以表现为它和其它节点之间的距离, 存在特定属性的邻接点的迹象, 邻域密度特征等等。 解决方案: ?网络存储为系列节点的结合,每个节点包含有其所有邻接点ID的列表。按照这个概念,MapReduce 迭代进行,每次迭代中每个节点都发消息给它的邻接点。邻接点根据接收到的信息更新自己的状态。当满足了某些条件的时候迭代停止,如达到了最大迭代次数(网络半径)或两次连续的迭代几乎没有状态改变。从技术上来看,Mapper 以每个邻接点的ID为键发出信息,所有的信息都会按照接受节点分组,reducer 就能够重算各节点的状态然后更新那些状态改变了的节点。下面展示了这个算法:class Mapper

method Map(id n, object N)

Emit(id n, object N)

for all id m in N.OutgoingRelations do

Emit(id m, message getMessage(N))

class Reducer

method Reduce(id m, [s1, s2,...])

M = null

messages = []

for all s in [s1, s2,...] do

if IsObject(s) then

M = s

else // s is a message

messages.add(s)

M.State = calculateState(messages)

Emit(id m, item M)

案例研究: 沿分类树的有效性传递

问题陈述: 这个问题来自于真实的电子商务应用。将各种货物分类,这些类别可以组成一个树形结构,比较大的分类(像男人、女人、儿童)可以再分出小分类(像男裤或女装),直到不能再分为止(像男式蓝色牛仔裤)。这些不能再分的基层类别可以是有效(这个类别包含有货品)或者已无效的(没有属于这个分类的货品)。如果一个分类至少含有一个有效的子分类那么认为这个分类也是有效的。我们需要在已知一些基层分类有效的情况下找出分类树上所有有效的分类。 解决方案: 这个问题可以用上一节提到的框架来解决。我们咋下面定义了名为 getMessage和 calculateState 的方法:class N

State in {True = 2, False = 1, null = 0},

initialized 1 or 2 for end-of-line categories, 0 otherwise

method getMessage(object N)

return N.State

method calculateState(state s, data [d1, d2,...])

return max( [d1, d2,...] )案例研究:广度优先搜索

问题陈述:需要计算出一个图结构中某一个节点到其它所有节点的距离。 解决方案:?Source源节点给所有邻接点发出值为0的信号,邻接点把收到的信号再转发给自己的邻接点,每转发一次就对信号值加1:class N

State is distance,

initialized 0 for source node, INFINITY for all other nodes

method getMessage(N)

return N.State + 1

method calculateState(state s, data [d1, d2,...])

min( [d1, d2,...] )案例研究:网页排名和 Mapper 端数据聚合

这个算法由Google提出,使用权威的PageRank算法,通过连接到一个网页的其他网页来计算网页的相关性。真实算法是相当复杂的,但是核心思想是权重可以传播,也即通过一个节点的各联接节点的权重的均值来计算节点自身的权重。class N State is PageRank method getMessage(object N) return N.State / N.OutgoingRelations.size() method calculateState(state s, data [d1, d2,...]) return ( sum([d1, d2,...]) )

class Mapper

method Initialize

H = new AssociativeArray

method Map(id n, object N)

p = N.PageRank / N.OutgoingRelations.size()

Emit(id n, object N)

for all id m in N.OutgoingRelations do

H{m} = H{m} + p

method Close

for all id n in H do

Emit(id n, value H{n})

class Reducer

method Reduce(id m, [s1, s2,...])

M = null

p = 0

for all s in [s1, s2,...] do

if IsObject(s) then

M = s

else

p = p + s

M.PageRank = p

Emit(id m, item M)应用:

图分析,网页索引值去重 (对唯一项计数)

问题陈述:?记录包含值域F和值域 G,要分别统计相同G值的记录中不同的F值的数目 (相当于按照 G分组). 这个问题可以推而广之应用于分面搜索(某些电子商务网站称之为Narrow Search) Record 1: F=1, G={a, b}

Record 2: F=2, G={a, d, e}

Record 3: F=1, G={b}

Record 4: F=3, G={a, b}

Result:

a -> 3 // F=1, F=2, F=3

b -> 2 // F=1, F=3

d -> 1 // F=2

e -> 1 // F=2class Mapper

method Map(null, record [value f, categories [g1, g2,...]])

for all category g in [g1, g2,...]

Emit(record [g, f], count 1)

class Reducer

method Reduce(record [g, f], counts [n1, n2, ...])

Emit(record [g, f], null ) class Mapper

method Map(record [f, g], null)

Emit(value g, count 1)

class Reducer

method Reduce(value g, counts [n1, n2,...])

Emit(value g, sum( [n1, n2,...] ) )class Mapper

method Map(null, record [value f, categories [g1, g2,...] )

for all category g in [g1, g2,...]

Emit(value f, category g)

class Reducer

method Initialize

H = new AssociativeArray : category -> count

method Reduce(value f, categories [g1, g2,...])

[g1', g2',..] = ExcludeDuplicates( [g1, g2,..] )

for all category g in [g1', g2',...]

H{g} = H{g} + 1

method Close

for all category g in H do

Emit(category g, count H{g})应用:

日志分析,用户计数互相关

问题陈述:有多个各由若干项构成的组,计算项两两共同出现于一个组中的次数。假如项数是N,那么应该计算N*N。 这种情况常见于文本分析(条目是单词而元组是句子),市场分析(购买了此物的客户还可能购买什么)。如果N*N小到可以容纳于一台机器的内存,实现起来就比较简单了。 配对法 第一种方法是在Mapper中给所有条目配对,然后在Reducer中将同一条目对的计数加和。但这种做法也有缺点:- 使用 combiners 带来的的好处有限,因为很可能所有项对都是唯一的

- 不能有效利用内存

class Mapper method Map(null, items [i1, i2,...] ) for all item i in [i1, i2,...] for all item j in [i1, i2,...] Emit(pair [i j], count 1) class Reducer method Reduce(pair [i j], counts [c1, c2,...]) s = sum([c1, c2,...]) Emit(pair[i j], count s)

- 中间结果的键数量相对较少,因此减少了排序消耗。

- 可以有效利用 combiners。

- 可在内存中执行,不过如果没有正确执行的话也会带来问题。

- 实现起来比较复杂。

- 一般来说, “stripes” 比 “pairs” 更快

class Mapper

method Map(null, items [i1, i2,...] )

for all item i in [i1, i2,...]

H = new AssociativeArray : item -> counter

for all item j in [i1, i2,...]

H{j} = H{j} + 1

Emit(item i, stripe H)

class Reducer

method Reduce(item i, stripes [H1, H2,...])

H = new AssociativeArray : item -> counter

H = merge-sum( [H1, H2,...] )

for all item j in H.keys()

Emit(pair [i j], H{j})应用:

文本分析,市场分析References:

- Lin J. Dyer C. Hirst G.?Data Intensive Processing MapReduce

用MapReduce 表达关系模式

在这部分我们会讨论一下怎么使用MapReduce来进行主要的关系操作。筛选(Selection)

class Mapper

method Map(rowkey key, tuple t)

if t satisfies the predicate

Emit(tuple t, null)投影(Projection)

投影只比筛选稍微复杂一点,在这种情况下我们可以用Reducer来消除可能的重复值。class Mapper

method Map(rowkey key, tuple t)

tuple g = project(t) // extract required fields to tuple g

Emit(tuple g, null)

class Reducer

method Reduce(tuple t, array n) // n is an array of nulls

Emit(tuple t, null)合并(Union)

两个数据集中的所有记录都送入Mapper,在Reducer里消重class Mapper

method Map(rowkey key, tuple t)

Emit(tuple t, null)

class Reducer

method Reduce(tuple t, array n) // n is an array of one or two nulls

Emit(tuple t, null)交集(Intersection)

将两个数据集中需要做交叉的记录输入Mapper,Reducer 输出出现了两次的记录。因为每条记录都有一个主键,在每个数据集中只会出现一次,所以这样做是可行的。class Mapper

method Map(rowkey key, tuple t)

Emit(tuple t, null)

class Reducer

method Reduce(tuple t, array n) // n is an array of one or two nulls

if n.size() = 2

Emit(tuple t, null)差异(Difference)

假设有两个数据集R和S,我们要找出R与S的差异。Mapper将所有的元组做上标记,表明他们来自于R还是S,Reducer只输出那些存在于R中而不在S中的记录。class Mapper

method Map(rowkey key, tuple t)

Emit(tuple t, string t.SetName) // t.SetName is either 'R' or 'S'

class Reducer

method Reduce(tuple t, array n) // array n can be ['R'], ['S'], ['R' 'S'], or ['S', 'R']

if n.size() = 1 and n[1] = 'R'

Emit(tuple t, null)分组聚合(GroupBy and Aggregation)

分组聚合可以在如下的一个MapReduce中完成。Mapper抽取数据并将之分组聚合,Reducer 中对收到的数据再次聚合。典型的聚合应用比如求和与最值可以以流的方式进行计算,因而不需要同时保有所有的值。但是另外一些情景就必须要两阶段MapReduce,前面提到过的惟一值模式就是一个这种类型的例子。class Mapper

method Map(null, tuple [value GroupBy, value AggregateBy, value ...])

Emit(value GroupBy, value AggregateBy)

class Reducer

method Reduce(value GroupBy, [v1, v2,...])

Emit(value GroupBy, aggregate( [v1, v2,...] ) ) // aggregate() : sum(), max(),...连接(Joining)

MapperReduce框架可以很好地处理连接,不过在面对不同的数据量和处理效率要求的时候还是有一些技巧。在这部分我们会介绍一些基本方法,在后面的参考文档中还列出了一些关于这方面的专题文章。分配后连接 (Reduce端连接,排序-合并连接)

这个算法按照键K来连接数据集R和L。Mapper 遍历R和L中的所有元组,以K为键输出每一个标记了来自于R还是L的元组,Reducer把同一个K的数据分装入两个容器(R和L),然后嵌套循环遍历两个容器中的数据以得到交集,最后输出的每一条结果都包含了R中的数据、L中的数据和K。这种方法有以下缺点:- Mapper要输出所有的数据,即使一些key只会在一个集合中出现。

- Reducer 要在内存中保有一个key的所有数据,如果数据量打过了内存,那么就要缓存到硬盘上,这就增加了硬盘IO的消耗。

class Mapper

method Map(null, tuple [join_key k, value v1, value v2,...])

Emit(join_key k, tagged_tuple [set_name tag, values [v1, v2, ...] ] )

class Reducer

method Reduce(join_key k, tagged_tuples [t1, t2,...])

H = new AssociativeArray : set_name -> values

for all tagged_tuple t in [t1, t2,...] // separate values into 2 arrays

H{t.tag}.add(t.values)

for all values r in H{'R'} // produce a cross-join of the two arrays

for all values l in H{'L'}

Emit(null, [k r l] )复制链接Replicated Join (Mapper端连接, Hash 连接)

在实际应用中,将一个小数据集和一个大数据集连接是很常见的(如用户与日志记录)。假定要连接两个集合R和L,其中R相对较小,这样,可以把R分发给所有的Mapper,每个Mapper都可以载入它并以连接键来索引其中的数据,最常用和有效的索引技术就是哈希表。之后,Mapper遍历L,并将其与存储在哈希表中的R中的相应记录连接,。这种方法非常高效,因为不需要对L中的数据排序,也不需要通过网络传送L中的数据,但是R必须足够小到能够分发给所有的Mapper。class Mapper

method Initialize

H = new AssociativeArray : join_key -> tuple from R

R = loadR()

for all [ join_key k, tuple [r1, r2,...] ] in R

H{k} = H{k}.append( [r1, r2,...] )

method Map(join_key k, tuple l)

for all tuple r in H{k}

Emit(null, tuple [k r l] )参考:

- Join Algorithms using Map/Reduce

- Optimizing Joins in a MapReduce Environment

应用于机器学习和数学方面的 MapReduce 算法

- C. T. Chu?et al?provides an excellent?description?of ?machine learning algorithms for MapReduce in the article?Map-Reduce for Machine Learning on Multicore.

- FFT using MapReduce: ?http://www.slideshare.net/hortonworks/large-scale-math-with-hadoop-mapreduce

- MapReduce for integer factorization:?http://www.javiertordable.com/files/MapreduceForIntegerFactorization.pdf

- Matrix multiplication with MapReduce:?http://csl.skku.edu/papers/CS-TR-2010-330.pdf?and?http://www.norstad.org/matrix-multiply/index.html

原文地址:[转载]MapReduce的模式、算法和用例, 感谢原作者分享。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1386

1386

52

52

CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Mar 26, 2024 pm 12:41 PM

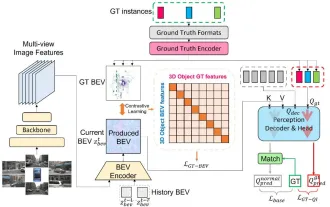

CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Mar 26, 2024 pm 12:41 PM

Written above & the author’s personal understanding: At present, in the entire autonomous driving system, the perception module plays a vital role. The autonomous vehicle driving on the road can only obtain accurate perception results through the perception module. The downstream regulation and control module in the autonomous driving system makes timely and correct judgments and behavioral decisions. Currently, cars with autonomous driving functions are usually equipped with a variety of data information sensors including surround-view camera sensors, lidar sensors, and millimeter-wave radar sensors to collect information in different modalities to achieve accurate perception tasks. The BEV perception algorithm based on pure vision is favored by the industry because of its low hardware cost and easy deployment, and its output results can be easily applied to various downstream tasks.

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explore the underlying principles and algorithm selection of the C++sort function

Apr 02, 2024 pm 05:36 PM

Explore the underlying principles and algorithm selection of the C++sort function

Apr 02, 2024 pm 05:36 PM

The bottom layer of the C++sort function uses merge sort, its complexity is O(nlogn), and provides different sorting algorithm choices, including quick sort, heap sort and stable sort.

Do Not Disturb Mode Not Working in iPhone: Fix

Apr 24, 2024 pm 04:50 PM

Do Not Disturb Mode Not Working in iPhone: Fix

Apr 24, 2024 pm 04:50 PM

Even answering calls in Do Not Disturb mode can be a very annoying experience. As the name suggests, Do Not Disturb mode turns off all incoming call notifications and alerts from emails, messages, etc. You can follow these solution sets to fix it. Fix 1 – Enable Focus Mode Enable focus mode on your phone. Step 1 – Swipe down from the top to access Control Center. Step 2 – Next, enable “Focus Mode” on your phone. Focus Mode enables Do Not Disturb mode on your phone. It won't cause any incoming call alerts to appear on your phone. Fix 2 – Change Focus Mode Settings If there are some issues in the focus mode settings, you should fix them. Step 1 – Open your iPhone settings window. Step 2 – Next, turn on the Focus mode settings

Can artificial intelligence predict crime? Explore CrimeGPT's capabilities

Mar 22, 2024 pm 10:10 PM

Can artificial intelligence predict crime? Explore CrimeGPT's capabilities

Mar 22, 2024 pm 10:10 PM

The convergence of artificial intelligence (AI) and law enforcement opens up new possibilities for crime prevention and detection. The predictive capabilities of artificial intelligence are widely used in systems such as CrimeGPT (Crime Prediction Technology) to predict criminal activities. This article explores the potential of artificial intelligence in crime prediction, its current applications, the challenges it faces, and the possible ethical implications of the technology. Artificial Intelligence and Crime Prediction: The Basics CrimeGPT uses machine learning algorithms to analyze large data sets, identifying patterns that can predict where and when crimes are likely to occur. These data sets include historical crime statistics, demographic information, economic indicators, weather patterns, and more. By identifying trends that human analysts might miss, artificial intelligence can empower law enforcement agencies

Improved detection algorithm: for target detection in high-resolution optical remote sensing images

Jun 06, 2024 pm 12:33 PM

Improved detection algorithm: for target detection in high-resolution optical remote sensing images

Jun 06, 2024 pm 12:33 PM

01 Outlook Summary Currently, it is difficult to achieve an appropriate balance between detection efficiency and detection results. We have developed an enhanced YOLOv5 algorithm for target detection in high-resolution optical remote sensing images, using multi-layer feature pyramids, multi-detection head strategies and hybrid attention modules to improve the effect of the target detection network in optical remote sensing images. According to the SIMD data set, the mAP of the new algorithm is 2.2% better than YOLOv5 and 8.48% better than YOLOX, achieving a better balance between detection results and speed. 02 Background & Motivation With the rapid development of remote sensing technology, high-resolution optical remote sensing images have been used to describe many objects on the earth’s surface, including aircraft, cars, buildings, etc. Object detection in the interpretation of remote sensing images

Application of algorithms in the construction of 58 portrait platform

May 09, 2024 am 09:01 AM

Application of algorithms in the construction of 58 portrait platform

May 09, 2024 am 09:01 AM

1. Background of the Construction of 58 Portraits Platform First of all, I would like to share with you the background of the construction of the 58 Portrait Platform. 1. The traditional thinking of the traditional profiling platform is no longer enough. Building a user profiling platform relies on data warehouse modeling capabilities to integrate data from multiple business lines to build accurate user portraits; it also requires data mining to understand user behavior, interests and needs, and provide algorithms. side capabilities; finally, it also needs to have data platform capabilities to efficiently store, query and share user profile data and provide profile services. The main difference between a self-built business profiling platform and a middle-office profiling platform is that the self-built profiling platform serves a single business line and can be customized on demand; the mid-office platform serves multiple business lines, has complex modeling, and provides more general capabilities. 2.58 User portraits of the background of Zhongtai portrait construction

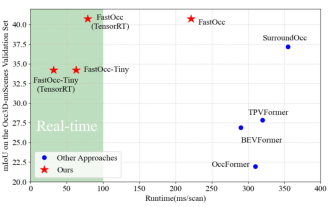

Add SOTA in real time and skyrocket! FastOcc: Faster inference and deployment-friendly Occ algorithm is here!

Mar 14, 2024 pm 11:50 PM

Add SOTA in real time and skyrocket! FastOcc: Faster inference and deployment-friendly Occ algorithm is here!

Mar 14, 2024 pm 11:50 PM

Written above & The author’s personal understanding is that in the autonomous driving system, the perception task is a crucial component of the entire autonomous driving system. The main goal of the perception task is to enable autonomous vehicles to understand and perceive surrounding environmental elements, such as vehicles driving on the road, pedestrians on the roadside, obstacles encountered during driving, traffic signs on the road, etc., thereby helping downstream modules Make correct and reasonable decisions and actions. A vehicle with self-driving capabilities is usually equipped with different types of information collection sensors, such as surround-view camera sensors, lidar sensors, millimeter-wave radar sensors, etc., to ensure that the self-driving vehicle can accurately perceive and understand surrounding environment elements. , enabling autonomous vehicles to make correct decisions during autonomous driving. Head