The whole process of deploying YOLO to an iPhone or a terminal in practice

The long-awaited detection classic has a new release: YOLOv5. However, YOLOv5 does not yet have complete materials, so the most important thing for now is to understand YOLOv4. Doing so pays off across object detection and can bring large improvements in certain scenarios. Today we analyze YOLOv4. In the next issue, we will practice deploying YOLOv5 to an Apple phone, or running real-time detection through a camera on a terminal.

1. Technology Review

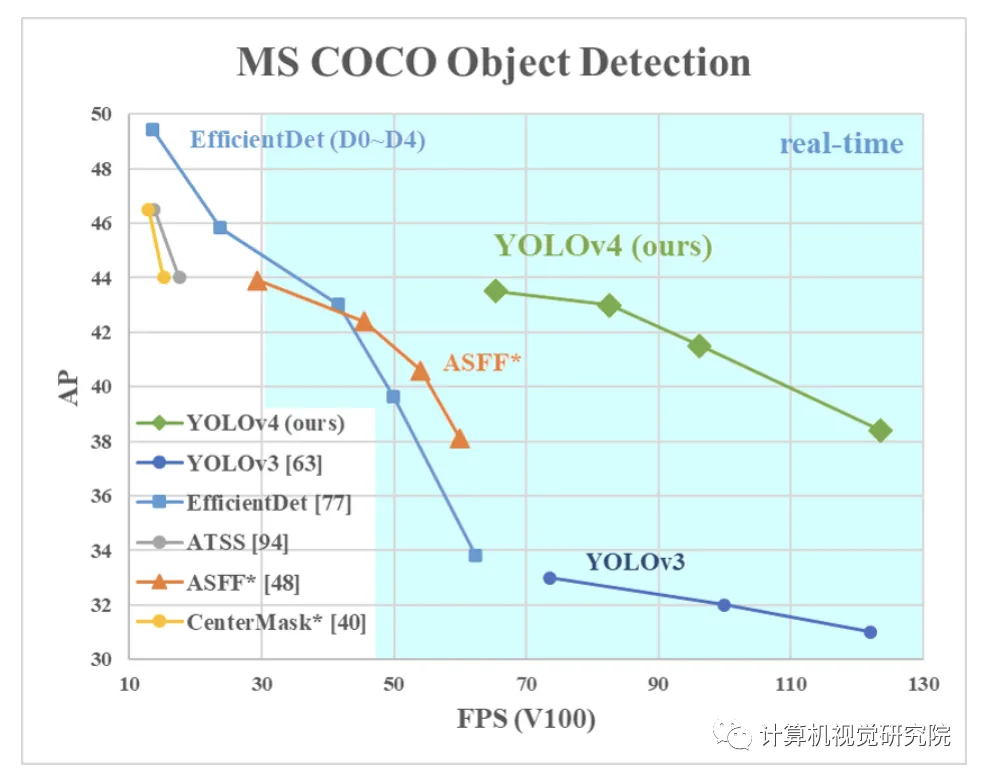

A large number of features are said to improve the accuracy of convolutional neural networks (CNNs). Combinations of these features need to be tested in practice on large datasets, and the results need theoretical justification. Some features work only on certain models, on certain problems, or on small datasets, while others, such as batch normalization and residual connections, apply to most models, tasks, and datasets. The paper assumes that these universal features include Weighted Residual Connections (WRC), Cross-Stage-Partial connections (CSP), Cross mini-Batch Normalization (CmBN), Self-Adversarial Training (SAT), and Mish activation. It uses the following new features: WRC, CSP, CmBN, SAT, Mish activation, Mosaic data augmentation, DropBlock regularization, and CIoU loss, and combines some of them to achieve 43.5% AP (65.7% AP50) on the MS COCO dataset at a real-time speed of about 65 FPS on a Tesla V100.

2. Analysis of the innovations

Mosaic data augmentation

Four images are combined into a single training image, which effectively increases the mini-batch size without changing it explicitly. This builds on CutMix, which mixes two images.
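
The idea of combining four images can be sketched in a few lines. This is a minimal illustration, not the YOLOv4 implementation: it assumes four images of the same size, a fixed 2x2 layout with no random scaling or cropping, and boxes given as `(x1, y1, x2, y2)` in pixels. The function name `mosaic` and the list-of-rows image format are hypothetical choices made for this example.

```python
def mosaic(images, boxes_per_image):
    """Tile four same-sized images (lists of rows) into one 2x2 mosaic.

    Returns the mosaic and all boxes shifted into mosaic coordinates.
    Simplification: fixed layout, no random scaling or cropping.
    """
    if len(images) != 4 or len(boxes_per_image) != 4:
        raise ValueError("mosaic needs exactly four images and four box lists")
    h, w = len(images[0]), len(images[0][0])
    canvas = [[0] * (2 * w) for _ in range(2 * h)]
    merged_boxes = []
    # Tile offsets (dx, dy): top-left, top-right, bottom-left, bottom-right.
    offsets = [(0, 0), (w, 0), (0, h), (w, h)]
    for img, boxes, (dx, dy) in zip(images, boxes_per_image, offsets):
        for y in range(h):
            canvas[dy + y][dx:dx + w] = img[y]
        for x1, y1, x2, y2 in boxes:
            merged_boxes.append((x1 + dx, y1 + dy, x2 + dx, y2 + dy))
    return canvas, merged_boxes


if __name__ == "__main__":
    tiles = [[[k] * 2 for _ in range(2)] for k in (1, 2, 3, 4)]
    labels = [[(0, 0, 1, 1)], [], [(0, 0, 2, 2)], []]
    image, boxes = mosaic(tiles, labels)
    for row in image:
        print(row)
    print(boxes)
```

Because every training sample now contains objects from four source images, each mini-batch exposes the network to four times as many contexts, which is the sense in which the mini-batch is "increased".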

Self-Adversarial Training

For a given image, the network runs backpropagation to update the image instead of its own weights, perturbing the image, and then trains on the perturbed image. The same mechanism is the main approach to image stylization, where the network updates the image in reverse to stylize it.

Self-Adversarial Training (SAT) is also a new data augmentation technique, and it operates in two forward-backward stages. In the first stage, the neural network alters the original image instead of the network weights. In this way the network executes an adversarial attack on itself, altering the original image to create the deception that there is no desired object in the image. In the second stage, the network is trained to detect an object in this modified image in the normal way.
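
The two stages can be illustrated with a toy model. The sketch below is an assumption-heavy stand-in, not the YOLOv4 training code: the "detector" is a single logistic unit over a flattened image, the label is always "object present", and stage 1 uses a signed-gradient step (in the style of FGSM) as the perturbation. The names `objectness` and `sat_step` and the parameters `eps` and `lr` are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def objectness(weights, image):
    """Toy detector: probability that the object is present."""
    return sigmoid(sum(w * x for w, x in zip(weights, image)))


def sat_step(weights, image, eps=0.05, lr=0.5):
    """One self-adversarial step for the label 'object present'."""
    # Stage 1: keep the weights fixed and change the image so the loss rises.
    # For loss = -log(p), d(loss)/d(x_i) = (p - 1) * w_i.
    p = objectness(weights, image)
    adv_image = []
    for w, x in zip(weights, image):
        grad = (p - 1.0) * w
        step = eps if grad > 0 else -eps if grad < 0 else 0.0
        adv_image.append(min(1.0, max(0.0, x + step)))  # stay in [0, 1]
    # Stage 2: ordinary gradient descent on the weights, on the altered image.
    # d(loss)/d(w_i) = (p_adv - 1) * x_i.
    p_adv = objectness(weights, adv_image)
    new_weights = [w - lr * (p_adv - 1.0) * x
                   for w, x in zip(weights, adv_image)]
    return new_weights, adv_image


if __name__ == "__main__":
    w = [0.5, -0.3, 0.8]
    x = [0.4, 0.6, 0.5]
    new_w, adv = sat_step(w, x)
    print(objectness(w, x), objectness(w, adv), objectness(new_w, adv))
```

Stage 1 lowers the predicted objectness on the altered image, and stage 2 raises it again by updating the weights, so the model learns from an example it has just made harder for itself.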

Cross mini-Batch Normalization

CmBN is a modified version of CBN, defined as Cross mini-Batch Normalization (CmBN), as shown in the figure below. It collects statistics only between the mini-batches within a single batch.
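
One possible reading of "statistics only between the mini-batches within a single batch" is sketched below. It is a simplification under stated assumptions: activations are scalars, the learnable scale and shift are omitted, and each mini-batch is normalized with the mean and variance accumulated over the mini-batches seen so far in the same batch. The function name `cmbn_normalize` is hypothetical, and the exact accumulation rule in the paper may differ.

```python
def cmbn_normalize(batch, eps=1e-5):
    """Normalize mini-batches with statistics accumulated inside one batch.

    batch: list of mini-batches, each a list of scalar activations.
    The accumulators are local, so nothing carries over to the next batch.
    """
    count, total, total_sq = 0, 0.0, 0.0
    normalized = []
    for mini_batch in batch:
        # Accumulate sums over the mini-batches seen so far in this batch.
        count += len(mini_batch)
        total += sum(mini_batch)
        total_sq += sum(v * v for v in mini_batch)
        mean = total / count
        var = max(total_sq / count - mean * mean, 0.0)
        normalized.append([(v - mean) / (var + eps) ** 0.5
                           for v in mini_batch])
    return normalized


if __name__ == "__main__":
    print(cmbn_normalize([[1.0, 3.0], [5.0, 7.0]]))
```

The second mini-batch is normalized with statistics from all four values rather than only its own two, which is what distinguishes this from plain per-mini-batch batch normalization.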

Modified SAM

SAM is changed from spatial-wise attention to point-wise attention, and the modified PAN replaces the shortcut addition (add) with concatenation (concat).
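
The difference between the two attention styles and the two fusion styles can be shown on a tiny feature map stored as nested lists indexed `[channel][row][column]`. This is only a sketch: the convolution layers of the real modules are replaced by trivial stand-ins (a channel average for spatial attention, the value itself for point-wise attention), and all function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def spatial_attention(feat):
    """SAM style: one gate per pixel, shared by every channel."""
    channels = len(feat)
    height, width = len(feat[0]), len(feat[0][0])
    # Assumption: a channel average stands in for pooling + convolution.
    gate = [[sigmoid(sum(feat[c][y][x] for c in range(channels)) / channels)
             for x in range(width)] for y in range(height)]
    return [[[feat[c][y][x] * gate[y][x] for x in range(width)]
             for y in range(height)] for c in range(channels)]


def pointwise_attention(feat):
    """Modified SAM: a separate gate for every channel and pixel."""
    # Assumption: sigmoid of the value stands in for convolution + sigmoid.
    return [[[v * sigmoid(v) for v in row] for row in channel]
            for channel in feat]


def fuse_add(a, b):
    """Original PAN shortcut: element-wise sum, channel count unchanged."""
    return [[[va + vb for va, vb in zip(ra, rb)] for ra, rb in zip(ca, cb)]
            for ca, cb in zip(a, b)]


def fuse_concat(a, b):
    """Modified PAN shortcut: stack the maps along the channel axis."""
    return a + b


if __name__ == "__main__":
    a = [[[1.0, 2.0]], [[3.0, 4.0]]]      # 2 channels, 1x2 pixels
    b = [[[10.0, 20.0]], [[30.0, 40.0]]]
    print(len(fuse_add(a, b)), len(fuse_concat(a, b)))
```

Concatenation doubles the channel count and leaves it to the following layer to learn how to combine the two sources, whereas addition fixes the combination in advance.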

Experiment

Take data augmentation as an example. Although it increases training time, it gives the model better generalization and robustness. Common augmentation methods include:

- Image perturbation

- Change brightness, contrast, saturation, hue

- Add noise

- Random scaling

- Random crop

- Flip

- Rotation

- Random erase

- Cutout

- MixUp

- CutMix
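
A few of the listed methods are simple enough to sketch directly. The functions below are illustrative only: images are single-channel lists of rows, patch positions are passed in explicitly instead of being sampled at random, and the function names are hypothetical rather than taken from any library.

```python
def horizontal_flip(image):
    """Flip: mirror every row."""
    return [row[::-1] for row in image]


def cutout(image, top, left, size, fill=0):
    """Cutout: blank a square patch of the image."""
    out = [row[:] for row in image]
    for y in range(max(top, 0), min(top + size, len(out))):
        for x in range(max(left, 0), min(left + size, len(out[0]))):
            out[y][x] = fill
    return out


def mixup(image_a, image_b, lam):
    """MixUp: blend two images; labels are blended with the same lam."""
    return [[lam * pa + (1.0 - lam) * pb for pa, pb in zip(ra, rb)]
            for ra, rb in zip(image_a, image_b)]


def cutmix(image_a, image_b, top, left, size):
    """CutMix: paste a square patch of image_b into image_a.

    Returns the mixed image and the fraction of pixels kept from image_a,
    which is the weight given to image_a's label.
    """
    out = [row[:] for row in image_a]
    h, w = len(out), len(out[0])
    pasted = 0
    for y in range(max(top, 0), min(top + size, h)):
        for x in range(max(left, 0), min(left + size, w)):
            out[y][x] = image_b[y][x]
            pasted += 1
    return out, 1.0 - pasted / (h * w)


if __name__ == "__main__":
    a = [[1, 1, 1, 1] for _ in range(4)]
    b = [[9, 9, 9, 9] for _ in range(4)]
    mixed, weight_a = cutmix(a, b, 0, 0, 2)
    print(mixed, weight_a)
```

Cutout removes information, MixUp blends whole images, and CutMix replaces a region with content from another image while adjusting the label weight by area.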

The experiments show that a large number of tricks were used, making YOLOv4 a veritable kaleidoscope of object detection techniques. The tables below are from experiments on classification networks:

CSPResNeXt-50 classifier accuracy

CSPDarknet-53 classifier accuracy

On the YOLOv4 detection network, four losses (GIoU, CIoU, DIoU, MSE), label smoothing, a cosine learning rate schedule, genetic-algorithm hyperparameter selection, Mosaic data augmentation, and other methods were compared. The following table shows the ablation results on the YOLOv4 detection network:
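
Three of the compared techniques have compact standard definitions, sketched below for reference. These follow the commonly published formulas (CIoU as IoU minus a normalized centre-distance term and an aspect-ratio term, cosine annealing, and uniform label smoothing) and are not taken from the YOLOv4 code base. Boxes are assumed to be `(x1, y1, x2, y2)`, and the function names and the `eps` guard are choices made for this example.

```python
import math


def ciou_loss(box, gt, eps=1e-9):
    """CIoU loss for two boxes given as (x1, y1, x2, y2)."""
    # Intersection over union.
    iw = max(0.0, min(box[2], gt[2]) - max(box[0], gt[0]))
    ih = max(0.0, min(box[3], gt[3]) - max(box[1], gt[1]))
    inter = iw * ih
    w, h = box[2] - box[0], box[3] - box[1]
    gw, gh = gt[2] - gt[0], gt[3] - gt[1]
    iou = inter / (w * h + gw * gh - inter + eps)
    # Squared centre distance over squared diagonal of the enclosing box.
    rho2 = ((box[0] + box[2] - gt[0] - gt[2]) ** 2
            + (box[1] + box[3] - gt[1] - gt[3]) ** 2) / 4.0
    cw = max(box[2], gt[2]) - min(box[0], gt[0])
    ch = max(box[3], gt[3]) - min(box[1], gt[1])
    c2 = cw * cw + ch * ch + eps
    # Aspect-ratio consistency term and its trade-off weight.
    v = (4.0 / math.pi ** 2) * (math.atan(gw / (gh + eps))
                                - math.atan(w / (h + eps))) ** 2
    alpha = v / (1.0 - iou + v + eps)
    return 1.0 - (iou - rho2 / c2 - alpha * v)


def cosine_lr(step, total_steps, base_lr, min_lr=0.0):
    """Cosine annealing from base_lr down to min_lr."""
    cos_term = 1.0 + math.cos(math.pi * step / total_steps)
    return min_lr + 0.5 * (base_lr - min_lr) * cos_term


def smooth_labels(one_hot, smoothing=0.1):
    """Label smoothing: spread a little probability over every class."""
    k = len(one_hot)
    return [y * (1.0 - smoothing) + smoothing / k for y in one_hot]


if __name__ == "__main__":
    print(ciou_loss((0, 0, 2, 2), (1, 1, 3, 3)))
    print([round(cosine_lr(s, 100, 0.1), 4) for s in (0, 50, 100)])
    print(smooth_labels([0, 1, 0, 0]))
```

Unlike plain IoU, the CIoU loss stays informative for boxes that do not overlap at all, because the centre-distance term still changes as the prediction moves toward the target.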

CSPResNeXt50-PANet-SPP, 512x512

Using models with different pretrained weights for training:

Different mini-batch size results:

Finally, a comparison of results on the COCO dataset across three GPU series (Maxwell, Pascal, and Volta):

Most exciting of all is the comparison with other frameworks on the COCO dataset (speed and accuracy):

The above is the detailed content of The whole process of deploying yolov to iPhone or terminal practice. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1377

1377

52

52

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

Abandon the encoder-decoder architecture and use the diffusion model for edge detection, which is more effective. The National University of Defense Technology proposed DiffusionEdge

Feb 07, 2024 pm 10:12 PM

Abandon the encoder-decoder architecture and use the diffusion model for edge detection, which is more effective. The National University of Defense Technology proposed DiffusionEdge

Feb 07, 2024 pm 10:12 PM

Current deep edge detection networks usually adopt an encoder-decoder architecture, which contains up and down sampling modules to better extract multi-level features. However, this structure limits the network to output accurate and detailed edge detection results. In response to this problem, a paper on AAAI2024 provides a new solution. Thesis title: DiffusionEdge:DiffusionProbabilisticModelforCrispEdgeDetection Authors: Ye Yunfan (National University of Defense Technology), Xu Kai (National University of Defense Technology), Huang Yuxing (National University of Defense Technology), Yi Renjiao (National University of Defense Technology), Cai Zhiping (National University of Defense Technology) Paper link: https ://ar

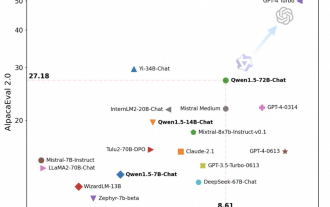

Tongyi Qianwen is open source again, Qwen1.5 brings six volume models, and its performance exceeds GPT3.5

Feb 07, 2024 pm 10:15 PM

Tongyi Qianwen is open source again, Qwen1.5 brings six volume models, and its performance exceeds GPT3.5

Feb 07, 2024 pm 10:15 PM

In time for the Spring Festival, version 1.5 of Tongyi Qianwen Model (Qwen) is online. This morning, the news of the new version attracted the attention of the AI community. The new version of the large model includes six model sizes: 0.5B, 1.8B, 4B, 7B, 14B and 72B. Among them, the performance of the strongest version surpasses GPT3.5 and Mistral-Medium. This version includes Base model and Chat model, and provides multi-language support. Alibaba’s Tongyi Qianwen team stated that the relevant technology has also been launched on the Tongyi Qianwen official website and Tongyi Qianwen App. In addition, today's release of Qwen 1.5 also has the following highlights: supports 32K context length; opens the checkpoint of the Base+Chat model;

Large models can also be sliced, and Microsoft SliceGPT greatly increases the computational efficiency of LLAMA-2

Jan 31, 2024 am 11:39 AM

Large models can also be sliced, and Microsoft SliceGPT greatly increases the computational efficiency of LLAMA-2

Jan 31, 2024 am 11:39 AM

Large language models (LLMs) typically have billions of parameters and are trained on trillions of tokens. However, such models are very expensive to train and deploy. In order to reduce computational requirements, various model compression techniques are often used. These model compression techniques can generally be divided into four categories: distillation, tensor decomposition (including low-rank factorization), pruning, and quantization. Pruning methods have been around for some time, but many require recovery fine-tuning (RFT) after pruning to maintain performance, making the entire process costly and difficult to scale. Researchers from ETH Zurich and Microsoft have proposed a solution to this problem called SliceGPT. The core idea of this method is to reduce the embedding of the network by deleting rows and columns in the weight matrix.

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

Updated Point Transformer: more efficient, faster and more powerful!

Jan 17, 2024 am 08:27 AM

Updated Point Transformer: more efficient, faster and more powerful!

Jan 17, 2024 am 08:27 AM

Original title: PointTransformerV3: Simpler, Faster, Stronger Paper link: https://arxiv.org/pdf/2312.10035.pdf Code link: https://github.com/Pointcept/PointTransformerV3 Author unit: HKUSHAILabMPIPKUMIT Paper idea: This article is not intended to be published in Seeking innovation within the attention mechanism. Instead, it focuses on leveraging the power of scale to overcome existing trade-offs between accuracy and efficiency in the context of point cloud processing. Draw inspiration from recent advances in 3D large-scale representation learning,

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

What? Is Zootopia brought into reality by domestic AI? Exposed together with the video is a new large-scale domestic video generation model called "Keling". Sora uses a similar technical route and combines a number of self-developed technological innovations to produce videos that not only have large and reasonable movements, but also simulate the characteristics of the physical world and have strong conceptual combination capabilities and imagination. According to the data, Keling supports the generation of ultra-long videos of up to 2 minutes at 30fps, with resolutions up to 1080p, and supports multiple aspect ratios. Another important point is that Keling is not a demo or video result demonstration released by the laboratory, but a product-level application launched by Kuaishou, a leading player in the short video field. Moreover, the main focus is to be pragmatic, not to write blank checks, and to go online as soon as it is released. The large model of Ke Ling is already available in Kuaiying.

LLaVA-1.6, which catches up with Gemini Pro and improves reasoning and OCR capabilities, is too powerful

Feb 01, 2024 pm 04:51 PM

LLaVA-1.6, which catches up with Gemini Pro and improves reasoning and OCR capabilities, is too powerful

Feb 01, 2024 pm 04:51 PM

In April last year, researchers from the University of Wisconsin-Madison, Microsoft Research, and Columbia University jointly released LLaVA (Large Language and Vision Assistant). Although LLaVA is only trained with a small multi-modal instruction data set, it shows very similar inference results to GPT-4 on some samples. Then in October, they launched LLaVA-1.5, which refreshed the SOTA in 11 benchmarks with simple modifications to the original LLaVA. The results of this upgrade are very exciting, bringing new breakthroughs to the field of multi-modal AI assistants. The research team announced the launch of LLaVA-1.6 version, targeting reasoning, OCR and