The first high-level language for GPUs: massive parallelism that feels like writing Python, already at 8,500 stars

After nearly 10 years of sustained research into the foundations of computer science, a long-held dream has been realized: running a high-level language on GPUs.

Last weekend, a programming language called Bend sparked heated discussions in the open source community, and the number of stars on GitHub has exceeded 8,500.

GitHub: https://github.com/HigherOrderCO/Bend

As a massively parallel, high-level programming language, Bend is still at the research stage, but its ideas have already surprised people. With Bend you can write parallel code for multi-core CPUs and GPUs without being a C/CUDA expert with 10 years of experience. It just feels like Python!

Yes, Bend adopts a Python-like syntax.

Unlike low-level alternatives such as CUDA and Metal, Bend has the feel and features of expressive languages such as Python and Haskell: fast object allocation, higher-order functions with full closure support, and unrestricted recursion. Yet it runs on massively parallel hardware with near-linear speedup based on core count, powered by the HVM2 runtime.

The main contributor to the project, Victor Taelin, is from Brazil. He shared the main features and development ideas of Bend on the X platform.

First of all, Bend is not aimed at modern machine learning algorithms. Those algorithms are highly regular (matrix multiplication), work on pre-allocated memory, and are usually already implemented as well-tuned CUDA kernels.

Bend's big advantage lies in practical applications, because "real applications" usually don't have the budget for dedicated GPU kernels. Ask yourself: who has ever built a website in CUDA? And even if someone tried, it would not be feasible because:

1. A real application would need to import functions from many different libraries, and CUDA kernels cannot be written for them;

2. Real applications have dynamic functions and closures;

3. Real applications dynamically and unpredictably allocate large amounts of memory.

Bend is a new attempt at this problem and can be quite fast in some cases, but writing a large language model in it is definitely not feasible today.

The author compared the old approach with the new one using the same algorithm, a tree-based bitonic sort that involves JSON allocation and manipulation. Node.js took 3.5 seconds (Apple M3 Max) and Bend took 0.5 seconds (NVIDIA RTX 4090).

Yes, Bend currently needs an entire GPU to beat Node.js on a single core. On the other hand, this is a nascent approach being compared with a JIT compiler that a big company (Google) has been optimizing for 16 years. There is plenty of room to improve.

How to use

On GitHub, the author briefly introduces the usage process of Bend.

First, install Rust. If you want to use the C runtime, install a C compiler (such as GCC or Clang); if you want to use the CUDA runtime, install the CUDA toolkit (CUDA and nvcc) version 12.x. Bend currently supports only NVIDIA GPUs.

Then, install HVM2 and Bend:

    cargo +nightly install hvm
    cargo +nightly install bend-lang

Finally, write a Bend file and run it with one of the following commands:

    bend run <file.bend>    # uses the Rust interpreter (sequential)
    bend run-c <file.bend>  # uses the C interpreter (parallel)
    bend run-cu <file.bend> # uses the CUDA interpreter (massively parallel)

You can also use gen-c and gen-cu to compile Bend into a standalone C/CUDA file for the best performance. However, gen-c and gen-cu are still in their infancy and are far less mature than state-of-the-art compilers such as GCC and GHC.

Parallel Programming in Bend

To write parallel programs in Bend, it helps to see what can and cannot run in parallel. For example, the expression:

(((1 + 2) + 3) + 4)

cannot be run in parallel because + 4 depends on + 3, which in turn depends on (1+2). And the expression:

((1 + 2) + (3 + 4))

can be run in parallel because (1+2) and (3+4) are independent. The only condition for Bend to parallelize a program is that the program's own logic allows it: anything that can run in parallel will run in parallel.
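The difference between the two expressions can be made concrete by measuring the longest chain of dependent additions. The following Python sketch is only an illustration: representing an addition as a nested 2-tuple and the helper names `critical_path`, `count_adds`, and `evaluate` are assumptions made for this example, not part of Bend.

```python
# Illustrative sketch: an addition is modelled as a 2-tuple (left, right),
# and a plain int is a leaf. This encoding is an assumption for the example.

def critical_path(expr):
    """Longest chain of additions that must run one after another."""
    if isinstance(expr, int):
        return 0
    left, right = expr
    return 1 + max(critical_path(left), critical_path(right))

def count_adds(expr):
    """Total number of additions in the expression."""
    if isinstance(expr, int):
        return 0
    left, right = expr
    return 1 + count_adds(left) + count_adds(right)

def evaluate(expr):
    """Sequential evaluation, used only to check both forms agree."""
    if isinstance(expr, int):
        return expr
    left, right = expr
    return evaluate(left) + evaluate(right)

chain = (((1, 2), 3), 4)     # (((1 + 2) + 3) + 4)
balanced = ((1, 2), (3, 4))  # ((1 + 2) + (3 + 4))

for name, expr in [("chain", chain), ("balanced", balanced)]:
    print(name, "adds:", count_adds(expr), "critical path:", critical_path(expr))
```

Both expressions perform three additions and produce the same result, but the chained form needs three sequential steps while the balanced form needs only two, because its two inner additions are independent.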

Let’s look at a more complete code example:

    # Sorting Network = just rotate trees!
    def sort(d, s, tree):
      switch d:
        case 0:
          return tree
        case _:
          (x,y) = tree
          lft = sort(d-1, 0, x)
          rgt = sort(d-1, 1, y)
          return rots(d, s, lft, rgt)

    # Rotates sub-trees (Blue/Green Box)
    def rots(d, s, tree):
      switch d:
        case 0:
          return tree
        case _:
          (x,y) = tree
          return down(d, s, warp(d-1, s, x, y))

    (...)

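This file implements a bitonic sorter with immutable tree rotations. It is not the kind of algorithm most people would expect to run fast on a GPU. However, because it uses a divide-and-conquer approach, which is inherently parallel, Bend runs it in a multi-threaded fashion. Some speed benchmarks:

- CPU, Apple M3 Max, 1 thread: 12.15 seconds

- CPU, Apple M3 Max, 16 threads: 0.96 seconds

- GPU, NVIDIA RTX 4090, 16k threads: 0.21 seconds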
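The speedups implied by these timings can be checked with a few lines of Python. The dictionary keys and variable names below are made up for this example; the numbers are the three timings listed above.

```python
# Timings in seconds, copied from the benchmark list above.
SINGLE = "CPU, Apple M3 Max, 1 thread"
MULTI = "CPU, Apple M3 Max, 16 threads"
GPU = "GPU, NVIDIA RTX 4090, 16k threads"

timings = {SINGLE: 12.15, MULTI: 0.96, GPU: 0.21}

# Speedup relative to the single-threaded CPU run.
baseline = timings[SINGLE]
speedups = {name: baseline / seconds for name, seconds in timings.items()}

for name, factor in speedups.items():
    print(f"{name}: {factor:.1f}x")
```

The GPU run comes out a little under 58 times faster than the single-threaded run, which matches the roughly 57x figure quoted for this benchmark when the ratio is truncated.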

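That is a 57x speedup obtained by doing nothing. No threads were spawned by hand, and no locks or mutexes were managed explicitly. We simply asked Bend to run our program on an RTX, and that was all.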
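For readers unfamiliar with bitonic sorting, here is a minimal Python sketch of the textbook list-based formulation. It is not the author's tree-rotation implementation (whose source is elided above), it runs sequentially, and the function names `bitonic_sort` and `bitonic_merge` are chosen for this example. What it does show is the divide-and-conquer shape: the two recursive calls in each function work on disjoint halves and are therefore independent of each other.

```python
def bitonic_merge(xs, ascending):
    """Sort a bitonic sequence (power-of-two length) in the given direction."""
    n = len(xs)
    if n <= 1:
        return list(xs)
    half = n // 2
    # Compare-exchange element i with element i + half.
    small = [min(xs[i], xs[i + half]) for i in range(half)]
    large = [max(xs[i], xs[i + half]) for i in range(half)]
    first, second = (small, large) if ascending else (large, small)
    # The two halves are independent and could be merged in parallel.
    return bitonic_merge(first, ascending) + bitonic_merge(second, ascending)

def bitonic_sort(xs, ascending=True):
    """Bitonic sort for lists whose length is a power of two."""
    n = len(xs)
    if n & (n - 1):
        raise ValueError("length must be a power of two")
    if n <= 1:
        return list(xs)
    half = n // 2
    # Sort the halves in opposite directions to form a bitonic sequence.
    # These two calls are independent and could run in parallel.
    left = bitonic_sort(xs[:half], True)
    right = bitonic_sort(xs[half:], False)
    return bitonic_merge(left + right, ascending)

print(bitonic_sort([3, 7, 4, 8, 6, 2, 1, 5]))
```

The sequence of comparisons does not depend on the data, which is why this family of algorithms maps naturally onto parallel hardware.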

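Bend is not limited to a specific paradigm such as tensors or matrices. Any concurrent system, from shaders to Erlang-like actor models, can be emulated on Bend. For example, to render images in real time, we could simply allocate an immutable tree on each frame: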

    # given a shader, returns a square image
    def render(depth, shader):
      bend d = 0, i = 0:
        when d < depth:
          color = (fork(d+1, i*2+0), fork(d+1, i*2+1))
        else:
          width = depth / 2
          color = shader(i % width, i / width)
      return color

    # given a position, returns a color
    # for this demo, it just busy loops
    def demo_shader(x, y):
      bend i = 0:
        when i < 5000:
          color = fork(i + 1)
        else:
          color = 0x000001
      return color

    # renders a 256x256 image using demo_shader
    def main:
      return render(16, demo_shader)
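For readers who know Python but not Bend, the `bend` block in `render` can be read as a recursive function in which each `fork` is a recursive call with updated state. The sketch below is a rough, sequential Python analogue under that reading. The inner function `go`, the helper `count_leaves`, and `toy_shader` are names invented for this example, and Python gives none of the automatic parallelism that Bend provides.

```python
def render(depth, shader):
    """Build a binary tree of the given depth whose leaves are shader results."""
    def go(d, i):
        # Plays the role of `bend d = 0, i = 0:`; each call to go is a `fork`.
        if d < depth:
            return (go(d + 1, i * 2 + 0), go(d + 1, i * 2 + 1))
        width = depth // 2  # mirrors `width = depth / 2` in the listing above
        return shader(i % width, i // width)
    return go(0, 0)

def count_leaves(tree):
    """Count the leaf values in a nested 2-tuple tree."""
    if isinstance(tree, tuple):
        return count_leaves(tree[0]) + count_leaves(tree[1])
    return 1

def toy_shader(x, y):
    """Hypothetical stand-in for a shader: encodes the position as one int."""
    return x + 10 * y

image = render(4, toy_shader)
print(count_leaves(image))
```

The two recursive calls at each level share no state, so the tree of depth `depth` exposes 2 to the power `depth` independent leaf computations. That independence is the property Bend relies on to spread work across cores.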

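And it would actually work. Even involved algorithms parallelize well on Bend. Long-distance communication is performed by global beta-reduction (as per the Interaction Calculus), and is synchronized correctly and efficiently by HVM2's atomic linker.

Finally, the author notes that Bend is only a first version and that little effort has yet gone into a proper compiler. Raw performance can be expected to improve substantially with each release. For now, the interpreters already offer a glimpse of what massively parallel programming looks like from the perspective of a high-level, Python-like language.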

The above is the detailed content of The first GPU high-level language, massive parallelism is like writing Python, has received 8500 stars. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1422

1422

52

52

1316

1316

25

25

1267

1267

29

29

1239

1239

24

24

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

What? Is Zootopia brought into reality by domestic AI? Exposed together with the video is a new large-scale domestic video generation model called "Keling". Sora uses a similar technical route and combines a number of self-developed technological innovations to produce videos that not only have large and reasonable movements, but also simulate the characteristics of the physical world and have strong conceptual combination capabilities and imagination. According to the data, Keling supports the generation of ultra-long videos of up to 2 minutes at 30fps, with resolutions up to 1080p, and supports multiple aspect ratios. Another important point is that Keling is not a demo or video result demonstration released by the laboratory, but a product-level application launched by Kuaishou, a leading player in the short video field. Moreover, the main focus is to be pragmatic, not to write blank checks, and to go online as soon as it is released. The large model of Ke Ling is already available in Kuaiying.

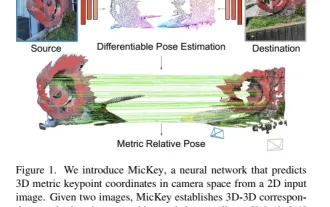

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

Project link written in front: https://nianticlabs.github.io/mickey/ Given two pictures, the camera pose between them can be estimated by establishing the correspondence between the pictures. Typically, these correspondences are 2D to 2D, and our estimated poses are scale-indeterminate. Some applications, such as instant augmented reality anytime, anywhere, require pose estimation of scale metrics, so they rely on external depth estimators to recover scale. This paper proposes MicKey, a keypoint matching process capable of predicting metric correspondences in 3D camera space. By learning 3D coordinate matching across images, we are able to infer metric relative