New work by Bengio et al.: Attention can be regarded as RNN. The new model is comparable to Transformer, but is super memory-saving.

- Paper address: https://arxiv.org/pdf/2405.13956

- Paper title: Attention as an RNN

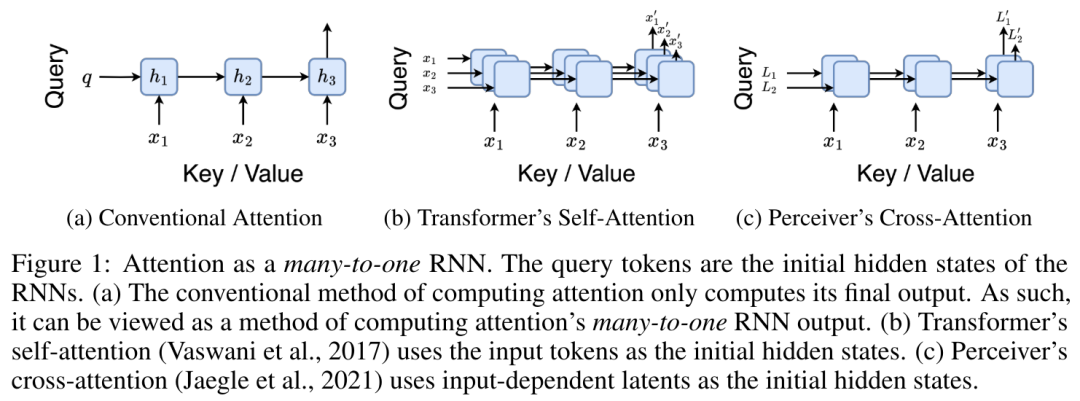

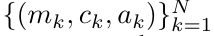

Specifically, the researchers first examined the attention mechanism in Transformer, the component responsible for the quadratic growth in Transformer's computational complexity. The study shows that the attention mechanism can be viewed as a special type of recurrent neural network (RNN) with the ability to efficiently compute many-to-one RNN outputs. Using this RNN formulation of attention, the study demonstrates that popular attention-based models such as Transformer and Perceiver can be considered RNN variants.

However, unlike traditional RNNs such as LSTM and GRU, these attention-based models cannot be updated efficiently with new tokens.

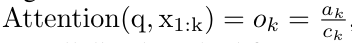

To solve this problem, the research introduces a new attention formulation based on the parallel prefix scan algorithm. This formulation can efficiently compute the many-to-many RNN output of attention, thereby enabling efficient updates.

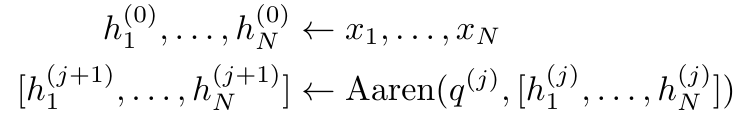

Based on this new attention formulation, the study proposes Aaren ([A]ttention [a]s a [re]current neural [n]etwork), a computationally efficient module that can not only be trained in parallel like a Transformer but can also be updated as efficiently as an RNN.

Experimental results show that Aaren performs comparably to Transformer on 38 datasets covering four common sequential data settings: reinforcement learning, event prediction, time series classification, and time series prediction, while being more efficient in both time and memory.

To address these problems, the authors propose an efficient attention-based module that exploits GPU parallelism while still supporting efficient updates.

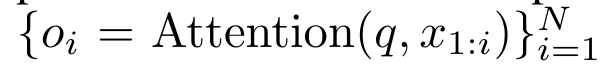

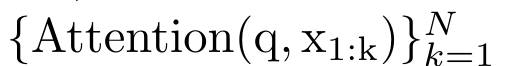

First, the authors show in Section 3.1 that attention can be viewed as a type of RNN with the special ability to efficiently compute the output of many-to-one RNNs (Figure 1a). Leveraging the RNN form of attention, the authors further illustrate that popular attention-based models, such as Transformer (Figure 1b) and Perceiver (Figure 1c), can be considered RNNs. However, unlike traditional RNNs, these models cannot efficiently update themselves with new tokens, which limits their potential in sequential problems where data arrives as a stream.

To solve this problem, in Section 3.2 the authors introduce an efficient method for computing attention as a many-to-many RNN, based on the parallel prefix scan algorithm. Building on this, Section 3.3 introduces Aaren, a computationally efficient module that can not only be trained in parallel (like Transformer) but can also be updated efficiently with new tokens at inference time, requiring only constant memory for inference (like a traditional RNN).

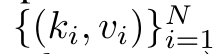

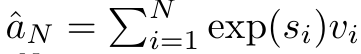

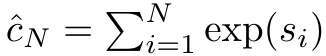

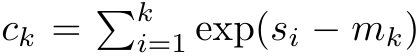

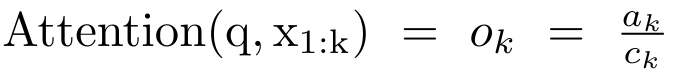

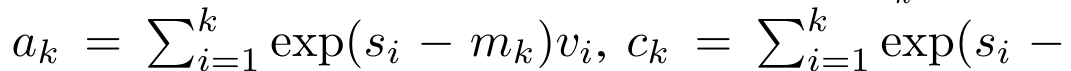

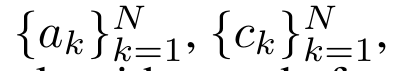

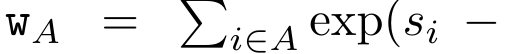

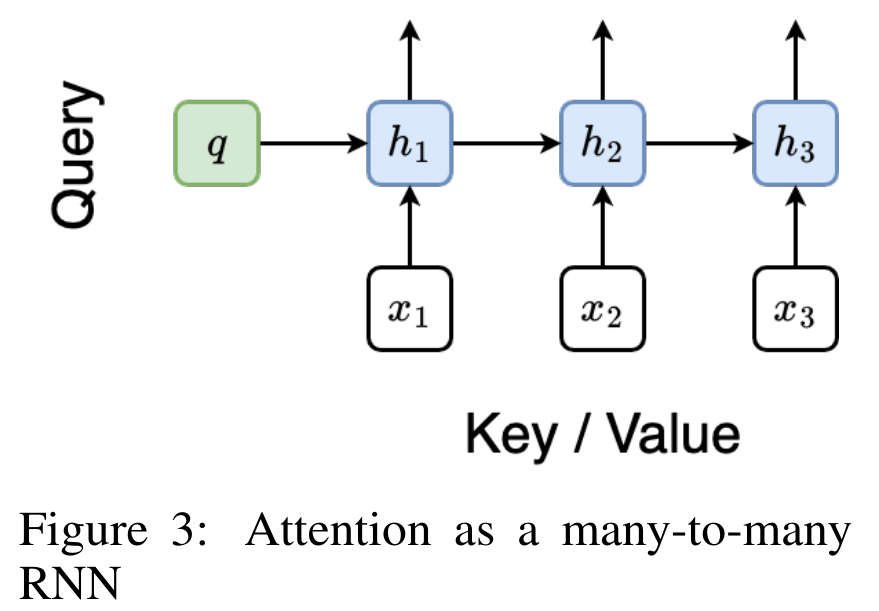

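The numerator is $\hat{a}_N = \sum_{i=1}^{N} \exp(s_i)\, v_i$ and the denominator is $\hat{c}_N = \sum_{i=1}^{N} \exp(s_i)$, where $s_i$ is the score between the query and the $i$-th key.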

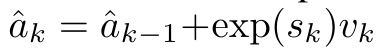

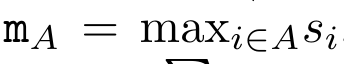

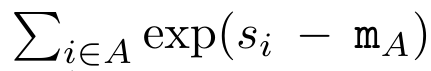

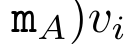

$\hat{a}_k$ and $\hat{c}_k$ can be computed iteratively as rolling sums for $k = 1, \ldots, N$. In practice, however, this implementation is unstable: it suffers from numerical problems caused by limited-precision representation and potentially very small or very large exponentials (i.e., $\exp(s)$). To alleviate this problem, the authors rewrite the recurrence using the cumulative maximum term $m_k = \max_{i \in \{1, \ldots, k\}} s_i$ to compute $a_k = \sum_{i=1}^{k} \exp(s_i - m_k)\, v_i$ and $c_k = \sum_{i=1}^{k} \exp(s_i - m_k)$.

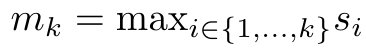

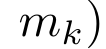

Notably, the end result is the same, $o_N = \hat{a}_N / \hat{c}_N = a_N / c_N$. The recurrent computation of $m_k$, $a_k$ and $c_k$ is as follows:

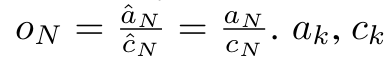

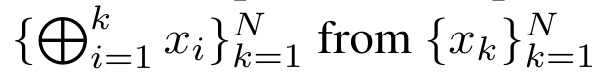

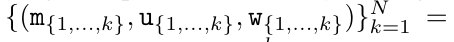
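The numerically stable recurrence described above can be sketched in a few lines of plain Python. This is only an illustrative sketch, not the authors' code: the function names `softmax_attention` and `recurrent_attention` are hypothetical, scores are assumed to be precomputed query-key dot products, and vectors are represented as plain lists.

```python
import math


def softmax_attention(scores, values):
    """Reference: conventional attention output for a single query."""
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]


def recurrent_attention(scores, values):
    """Many-to-one attention computed token by token with constant-size state."""
    dim = len(values[0])
    m = -math.inf      # running maximum m_k
    a = [0.0] * dim    # rescaled numerator a_k
    c = 0.0            # rescaled denominator c_k
    for s, v in zip(scores, values):
        m_new = max(m, s)
        scale_old = math.exp(m - m_new)  # exp(-inf) is 0.0 on the first step
        scale_new = math.exp(s - m_new)
        a = [a_d * scale_old + v_d * scale_new for a_d, v_d in zip(a, v)]
        c = c * scale_old + scale_new
        m = m_new
    return [a_d / c for a_d in a]


if __name__ == "__main__":
    # A score of 1000 would overflow a naive exp(s) rolling sum.
    scores = [0.5, 1000.0, -3.0]
    values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    print(recurrent_attention(scores, values))
    print(softmax_attention(scores, values))
```

Because the state carried between steps is just `(m, a, c)`, its size does not depend on how many tokens have been processed.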

. To this end, the authors utilize the parallel prefix scan algorithm (see Algorithm 1), a parallel computing method that computes N prefixes from N consecutive data points via the correlation operator ⊕.This algorithm can efficiently calculate

. To this end, the authors utilize the parallel prefix scan algorithm (see Algorithm 1), a parallel computing method that computes N prefixes from N consecutive data points via the correlation operator ⊕.This algorithm can efficiently calculate

,

,  is for efficiency To calculate

is for efficiency To calculate  , you can calculate

, you can calculate  and

and  through a parallel scan algorithm, and then combine a_k and c_k to calculate

through a parallel scan algorithm, and then combine a_k and c_k to calculate  .

.

,

,

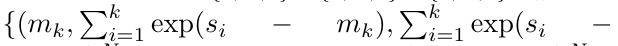

. The input to the parallel scan algorithm is

. The input to the parallel scan algorithm is  . The algorithm recursively applies the operator ⊕ and works as follows:

. The algorithm recursively applies the operator ⊕ and works as follows:

, where

,

,

.

.

. Also known as

. Also known as  . Combining the last two values of the output tuple,

. Combining the last two values of the output tuple,  is retrieved resulting in an efficient parallel method of computing attention as a many-to-many RNN (Figure 3).

is retrieved resulting in an efficient parallel method of computing attention as a many-to-many RNN (Figure 3).
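As a rough illustration of the scan-based formulation, the sketch below implements an associative operator on `(m, u, w)` tuples (running maximum, rescaled denominator, rescaled numerator) and a Hillis-Steele-style inclusive scan. The names `combine`, `hillis_steele_scan` and `prefix_attention` are hypothetical, and the scan variant is chosen here for brevity; it is not claimed to match the paper's Algorithm 1 line by line. The rounds run sequentially in this Python version, but every update within a round is independent and could be executed in parallel.

```python
import math


def combine(left, right):
    """Associative operator on tuples (m, u, w) covering two index sets."""
    m_l, u_l, w_l = left
    m_r, u_r, w_r = right
    m = max(m_l, m_r)
    scale_l = math.exp(m_l - m)  # rescale the left block to the new maximum
    scale_r = math.exp(m_r - m)  # rescale the right block to the new maximum
    u = u_l * scale_l + u_r * scale_r
    w = [x * scale_l + y * scale_r for x, y in zip(w_l, w_r)]
    return (m, u, w)


def hillis_steele_scan(items, op):
    """Inclusive prefix scan in O(log N) rounds."""
    out = list(items)
    step = 1
    while step < len(out):
        # Each position combines with the partial result `step` places earlier.
        out = [out[i] if i < step else op(out[i - step], out[i])
               for i in range(len(out))]
        step *= 2
    return out


def prefix_attention(scores, values):
    """Return the attention output o_k for every prefix 1..k."""
    leaves = [(s, 1.0, list(v)) for s, v in zip(scores, values)]
    prefixes = hillis_steele_scan(leaves, combine)
    return [[w_d / u for w_d in w] for (_, u, w) in prefixes]


if __name__ == "__main__":
    scores = [0.1, 2.0, -1.5, 0.7]
    values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 4.0]]
    for k, o_k in enumerate(prefix_attention(scores, values), start=1):
        print(k, o_k)
```

The last element of the returned list equals ordinary softmax attention over the full sequence, while the earlier elements are the outputs a causal model would need for each shorter prefix.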

Unlike in Transformer, where the query is one of the tokens input to attention, in Aaren the query token q is learned through backpropagation during training.

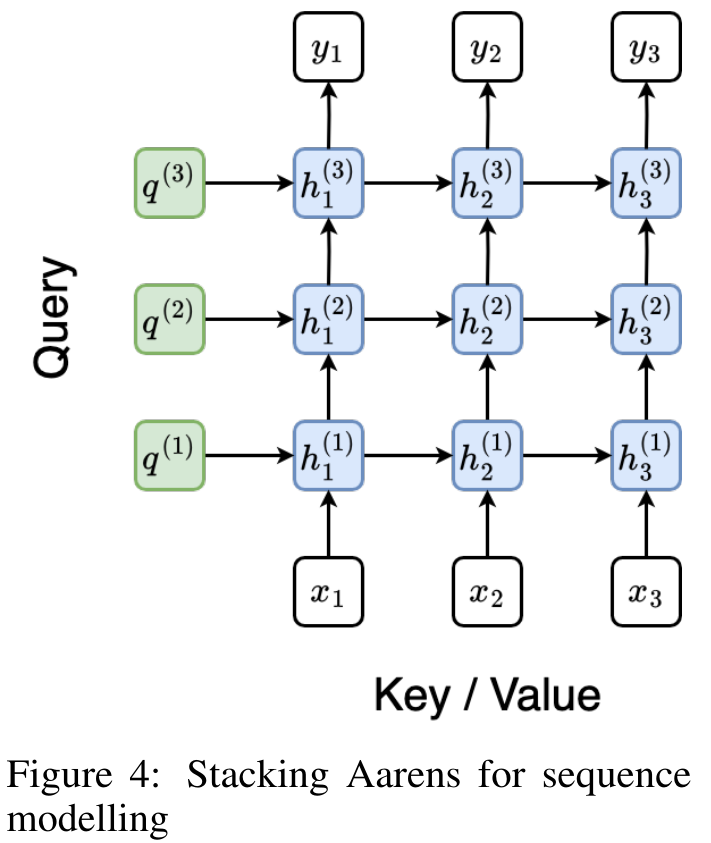

The following figure shows an example of a stacked Aaren model whose input context tokens are $x_{1:3}$ and whose outputs are $y_{1:3}$. Notably, since Aaren uses the attention mechanism in its RNN form, stacking Aarens is equivalent to stacking RNNs. Aarens can therefore also be updated efficiently with new tokens: the iterative computation of $y_k$ requires only constant computation, since it depends only on $h_{k-1}$ and $x_k$.

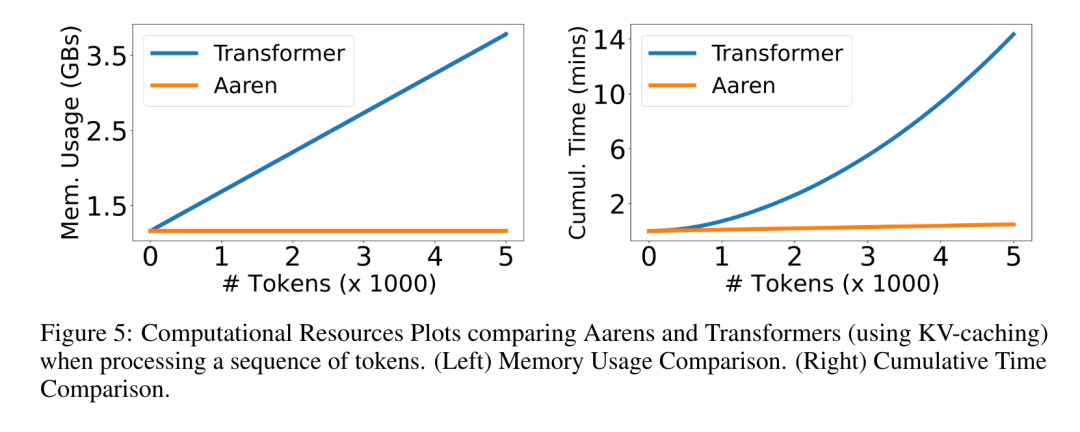
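To make the streaming behaviour concrete, here is a minimal sketch of a stack of attention cells that are updated one token at a time. It is an assumption-laden illustration rather than the paper's implementation: the class `StreamingAttentionCell`, the helper `stack_step`, and the use of plain linear key and value projections are all hypothetical, and the fixed query vector stands in for the query that Aaren would learn by backpropagation.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class StreamingAttentionCell:
    """One layer whose state (m, c, a) has a fixed size regardless of sequence length."""

    def __init__(self, query, key_weights, value_weights):
        self.query = query                  # stands in for the learned query q
        self.key_weights = key_weights      # hypothetical key projection
        self.value_weights = value_weights  # hypothetical value projection
        self.m = -math.inf                  # running maximum of the scores
        self.c = 0.0                        # rescaled denominator
        self.a = [0.0] * len(value_weights)  # rescaled numerator

    def step(self, x):
        """Consume one token and return the attention output over all tokens so far."""
        key = matvec(self.key_weights, x)
        value = matvec(self.value_weights, x)
        s = sum(q * k for q, k in zip(self.query, key))
        m_new = max(self.m, s)
        old = math.exp(self.m - m_new)
        new = math.exp(s - m_new)
        self.a = [a * old + v * new for a, v in zip(self.a, value)]
        self.c = self.c * old + new
        self.m = m_new
        return [a / self.c for a in self.a]


def stack_step(cells, x):
    """Feed one new token through a stack of cells, layer by layer."""
    h = x
    for cell in cells:
        h = cell.step(h)
    return h


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]
    cells = [StreamingAttentionCell([1.0, 0.0], identity, identity) for _ in range(2)]
    for token in ([1.0, 2.0], [3.0, 4.0], [0.5, -1.0]):
        print(stack_step(cells, token))
```

Each call to `stack_step` touches only the per-layer state, so no earlier tokens need to be stored, in contrast to a KV cache that grows with the sequence.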

Transformer-based models require linear memory (when using a KV cache) and need to store all previous tokens, including those in the intermediate Transformer layers. Aaren-based models, by contrast, require only constant memory and do not need to store previous tokens, which makes Aaren significantly more computationally efficient than Transformer.

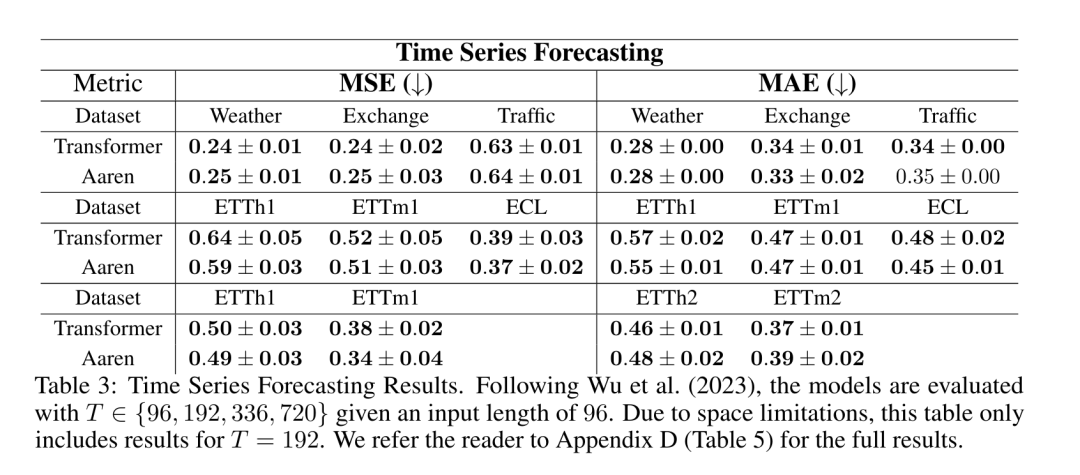

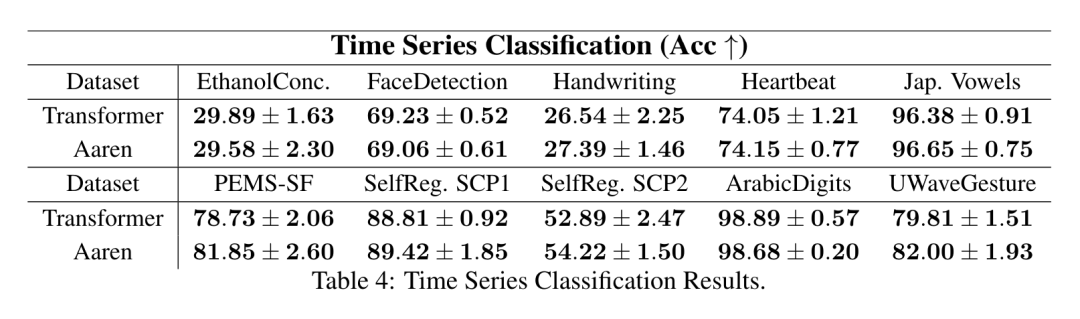

The goal of the experiments is to compare Aaren and Transformer in terms of performance and the resources (time and memory) required. For a comprehensive comparison, the authors performed evaluations on four problems: reinforcement learning, event prediction, time series prediction, and time series classification.

The authors first compare the performance of Aaren and Transformer in reinforcement learning. Reinforcement learning is popular in interactive environments such as robotics, recommendation engines, and traffic control.

The results in Table 1 show that Aaren performs comparably to Transformer across all 12 datasets and 4 environments. However, unlike Transformer, Aaren is also an RNN and can therefore process new environment interactions efficiently with constant computation, making it better suited to reinforcement learning.

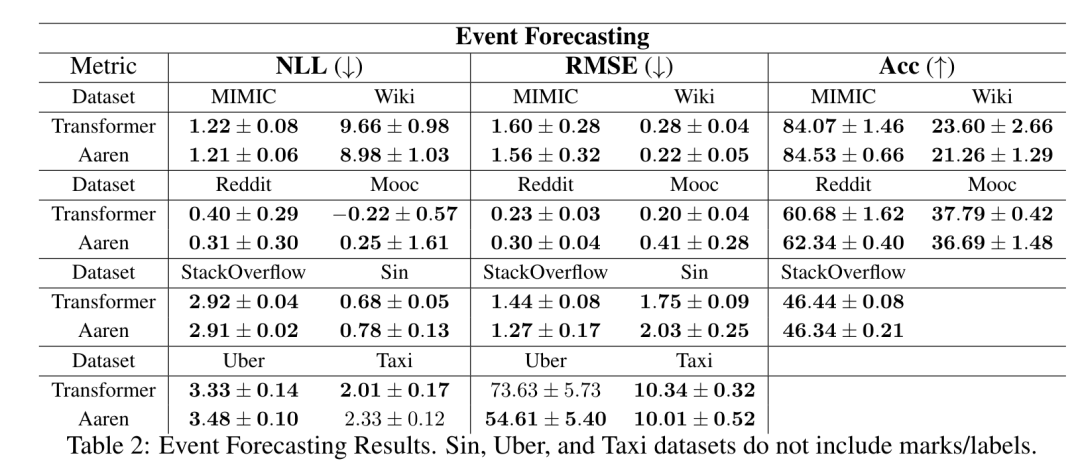

Next, the authors compare the performance of Aaren and Transformer in event prediction. Event prediction is popular in many real-world settings, such as finance (e.g., transactions), healthcare (e.g., patient observations), and e-commerce (e.g., purchases).

The results in Table 2 show that Aaren performs comparably to Transformer on all datasets. Aaren's ability to process new inputs efficiently is particularly useful in event prediction settings, where events arrive in irregular streams.
