Simple and universal: 3× lossless training acceleration for visual backbones, Tsinghua's EfficientTrain++ accepted to TPAMI 2024


The author of the paper, Wang Yulin, is a direct-entry PhD student (class of 2019) in the Department of Automation, Tsinghua University, advised by Academician Wu Cheng and Associate Professor Huang Gao. His main research interests include efficient deep learning and computer vision. He has published first-author papers in journals and conferences such as TPAMI, NeurIPS, ICLR, ICCV, CVPR, and ECCV, and has received the Baidu Scholarship, Microsoft Scholar, CCF-CV Academic Emerging Award, ByteDance Scholarship, and other honors. Personal homepage: wyl.cool.

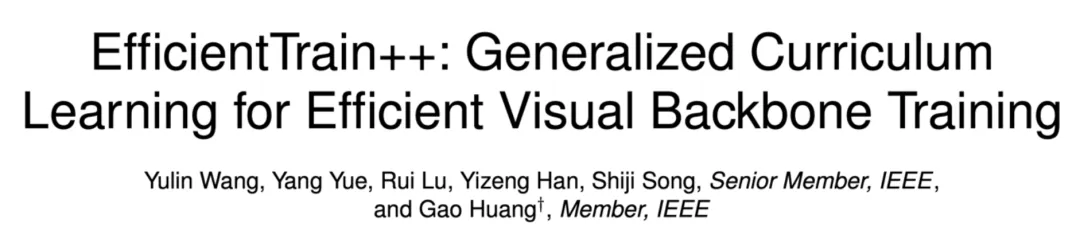

This article introduces a paper recently accepted by IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI): EfficientTrain++: Generalized Curriculum Learning for Efficient Visual Backbone Training.

- Paper link: https://arxiv.org/pdf/2405.08768

- Code and pre-trained models (open source): https://github.com/LeapLabTHU/EfficientTrain

- Conference version of the paper (ICCV 2023): https://arxiv.org/pdf/2211.09703

However, "scaling" often brings prohibitively high model training overhead, which significantly hinders the further development and industrial application of vision foundation models.

To solve this problem, a research team at Tsinghua University proposed a generalized curriculum learning algorithm: EfficientTrain++. The core idea is to extend the traditional curriculum learning paradigm of "selecting and using data from easy to difficult to train the model progressively" to one that "performs no selection along the data dimension and always uses all training data, but gradually reveals the easy-to-difficult features or patterns of each data sample during training."

EfficientTrain++ has several important highlights:

- Plug-and-play 1.5−3.0× lossless training acceleration for visual backbones. Neither upstream nor downstream model performance is lost, and the measured speedup is consistent with the theoretical results.

- General across training data scales (such as ImageNet-1K/22K; the gains on 22K are even more pronounced).

- General across supervised and self-supervised learning (such as MAE).

- General across training budgets (e.g., corresponding to 0-300 or more epochs).

- General across ViT, ConvNet, and other network architectures (more than twenty models of different sizes and types are tested in the paper, with consistent gains).

- For smaller models, in addition to accelerating training, it can also significantly improve performance (for example, without extra information and without extra training overhead, the authors obtain an 81.3% DeiT-S on ImageNet-1K, comparable to the original Swin-Tiny).

- Specialized practical efficiency optimizations for two challenging but common practical situations: 1) the CPU or hard disk is not powerful enough, so data preprocessing cannot keep up with the GPU; 2) large-scale parallel training, such as using 64 or more GPUs to train large models on ImageNet-22K.

Next, let's take a look at the details of the study.

1. Research Motivation

In recent years, the rapid development of large-scale foundation models has driven progress in artificial intelligence and deep learning. In computer vision, representative works such as Vision Transformer (ViT), CLIP, SAM, and DINOv2 have shown that scaling up the size of neural networks and the scale of training data can significantly push the performance boundaries of important visual tasks such as recognition, detection, and segmentation.

However, large foundation models often have high training overhead. Figure 1 gives two typical examples. With 8 NVIDIA V100 or higher-performance GPUs, a single training run of GPT-3 or ViT-G would take years or even decades. Such training costs are hard to afford for both academia and industry, and often only a few top institutions can spend the resources needed to advance deep learning. An urgent question is therefore: how can the training efficiency of large deep learning models be improved effectively?

Figure 1 Example: High training overhead of large deep learning foundation models

For computer vision models, a classic idea is curriculum learning, shown in Figure 2, which imitates the progressive, highly structured way humans learn: during training, the model starts with the "simplest" training data, and progressively more difficult data is introduced.

Figure 2 Classic Curriculum Learning Paradigm (Picture Source: "A Survey on Curriculum Learning", TPAMI'22)


However, although the motivation is natural, curriculum learning has not been widely adopted as a general method for training visual backbones. The main reason is two key bottlenecks, shown in Figure 3. First, designing an effective training curriculum is not easy. Distinguishing "simple" from "difficult" samples often requires additional pre-trained models, more complex AutoML algorithms, reinforcement learning, and so on, which generalizes poorly. Second, the way curriculum learning models the problem is itself somewhat unreasonable. Visual data drawn from a natural distribution is highly diverse. Figure 3 (bottom) gives an example with parrot pictures randomly selected from ImageNet: the training data contains parrots in different poses, at different distances from the camera, from different viewpoints and against different backgrounds, as well as varied interactions between parrots and people or objects. Separating such diverse data using only a one-dimensional "simple" versus "difficult" indicator is a rather coarse and far-fetched way to model it.

Figure 3 Two key bottlenecks that hinder large-scale application of curriculum learning in training visual backbones

2. Method Introduction

Motivated by the above challenges, the paper proposes a generalized curriculum learning paradigm. The core idea is to extend the traditional curriculum learning paradigm of "selecting and using data from easy to difficult to train the model progressively" to one that "performs no selection along the data dimension and always uses all training data, but gradually reveals the easy-to-difficult features or patterns of each data sample during training", thereby avoiding the limitations and sub-optimal designs caused by the data selection paradigm, as shown in Figure 4.

Figure 4 Traditional curriculum learning (sample dimension) vs. generalized curriculum learning (feature dimension)


This paradigm is mainly motivated by an interesting phenomenon: when a visual model is trained in the natural way, although it has access to all the information in the data at all times, it always naturally learns to recognize some simpler discriminative features (patterns) first, and then gradually learns to recognize more difficult discriminative features on that basis. Moreover, this behavior is fairly universal: "relatively simple" discriminative features can be readily identified in both the frequency domain and the spatial domain. The paper designs a series of experiments to demonstrate these findings, as described below.

From a frequency-domain perspective, "low-frequency features" are "relatively simple" for the model. In Figure 5, the authors train a DeiT-S model on standard ImageNet-1K training data, apply low-pass filters of different bandwidths to the validation set so that only the low-frequency components of the validation images are kept, and report the accuracy of DeiT-S on this low-pass-filtered validation data throughout training. The resulting accuracy curves over the course of training are shown on the right side of Figure 5.

An interesting phenomenon emerges: in the early stages of training, using only low-pass-filtered validation data does not significantly reduce accuracy, and the point at which each curve separates from the accuracy curve on the normal validation set moves gradually to the right as the filter bandwidth increases. This shows that although the model always has access to both the low- and high-frequency parts of the training data, its learning naturally begins by focusing only on low-frequency information, and the ability to recognize higher-frequency features is acquired gradually later in training (see the original paper for more evidence of this phenomenon).
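The low-pass filtering applied to the validation images in this experiment can be sketched as follows. This is a minimal NumPy illustration, not the authors' code: the function name `low_pass_filter`, the `radius` parameter, and the choice of an ideal circular mask are assumptions made for the example.

```python
import numpy as np

def low_pass_filter(image: np.ndarray, radius: float) -> np.ndarray:
    """Zero out spatial frequencies farther than `radius` from the DC component.

    `image` is H x W or H x W x C. The output has the same shape as the input;
    only its frequency content changes. The circular mask is an illustrative choice.
    """
    h, w = image.shape[:2]
    # Centered 2D spectrum: after fftshift the DC term sits at (h // 2, w // 2).
    spectrum = np.fft.fftshift(np.fft.fft2(image, axes=(0, 1)), axes=(0, 1))
    yy, xx = np.ogrid[:h, :w]
    mask = (yy - h // 2) ** 2 + (xx - w // 2) ** 2 <= radius ** 2
    if image.ndim == 3:
        mask = mask[..., None]  # broadcast the mask over the channel axis
    filtered = np.fft.ifft2(np.fft.ifftshift(spectrum * mask, axes=(0, 1)), axes=(0, 1))
    # The input is real, so any imaginary part is numerical residue.
    return filtered.real
```

A larger `radius` keeps more high-frequency detail, which corresponds to a wider filter bandwidth in the experiment above.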

Figure 5 From a frequency domain perspective, the model naturally tends to learn to identify low-frequency features first


This finding raises an interesting question: can we design a training curriculum that starts by providing the model with only the low-frequency information of the visual input and then gradually introduces high-frequency information?

Figure 6 investigates this idea: low-pass filtering is applied to the training data only during an early training phase of a given length, and the rest of the training process is left unchanged. The results show that, although the final performance improvement is limited, the final accuracy of the model is largely preserved even when only low-frequency components are provided for a considerable portion of early training. This agrees with the observation in Figure 5 that "the model mainly focuses on learning to recognize low-frequency features in the early stages of training".

This finding prompted the authors to think about training efficiency: since the model needs only the low-frequency components of the data in the early stages of training, and these components contain less information than the original data, can the model learn from the low-frequency components alone at a lower computational cost than processing the original input?

Figure 6 Providing only low-frequency components to the model for a long period of early training does not significantly affect the final performance


In fact, this idea is entirely feasible. As shown on the left side of Figure 7, the authors introduce a cropping operation in the Fourier spectrum of the image, which crops out the low-frequency part and maps it back to pixel space. This low-frequency cropping operation accurately preserves the low-frequency information while reducing the size of the input image, so the computational cost of learning from the input can be reduced substantially.
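A minimal NumPy sketch of such a low-frequency cropping operation is given below. It follows the description above (crop the centered Fourier spectrum, then invert it) but is not taken from the authors' repository; the function name `low_freq_crop`, the `bandwidth` parameter, and the intensity rescaling are assumptions of this example.

```python
import numpy as np

def low_freq_crop(image: np.ndarray, bandwidth: int) -> np.ndarray:
    """Crop the central `bandwidth` x `bandwidth` window of the 2D spectrum
    and map it back to pixel space.

    `image` is H x W or H x W x C; the result is bandwidth x bandwidth (x C).
    """
    h, w = image.shape[:2]
    if not 0 < bandwidth <= min(h, w):
        raise ValueError("bandwidth must be in (0, min(H, W)]")
    # Centered spectrum: the DC term sits at (h // 2, w // 2) after fftshift.
    spectrum = np.fft.fftshift(np.fft.fft2(image, axes=(0, 1)), axes=(0, 1))
    top = h // 2 - bandwidth // 2
    left = w // 2 - bandwidth // 2
    # Keep only the low-frequency window; DC lands at (bandwidth // 2, bandwidth // 2).
    cropped = spectrum[top:top + bandwidth, left:left + bandwidth]
    small = np.fft.ifft2(np.fft.ifftshift(cropped, axes=(0, 1)), axes=(0, 1))
    # Rescale so the mean intensity matches the original image, and drop the
    # small imaginary residue left by the unpaired boundary frequency.
    return small.real * (bandwidth * bandwidth) / (h * w)
```

For instance, calling `low_freq_crop(img, 128)` on a 224×224 image returns a 128×128 input, so the network processes fewer pixels per sample.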

Applying this low-frequency cropping to the model input in the early stages of training significantly reduces the overall training cost. Because the information needed for learning is retained as far as possible, the final model still shows almost no performance loss; the experimental results are shown in the lower right corner of Figure 7.
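To get a rough feel for the saving, the sketch below estimates the training cost of a staged schedule relative to training at full resolution throughout. It assumes that per-sample cost is proportional to the number of input pixels, which is only an approximation (self-attention in ViTs, for example, grows faster than linearly in the token count). The function name and the stage values are illustrative and are not taken from the paper.

```python
def relative_training_cost(stages, full_size=224):
    """Estimate training cost relative to full-resolution training.

    `stages` is a list of (fraction_of_training, input_size) pairs whose
    fractions sum to 1. Cost per sample is assumed proportional to input_size**2.
    """
    total_fraction = sum(fraction for fraction, _ in stages)
    if abs(total_fraction - 1.0) > 1e-9:
        raise ValueError("stage fractions must sum to 1")
    return sum(fraction * (size / full_size) ** 2 for fraction, size in stages)


# Hypothetical schedule: 40% of training at 160x160, the rest at 224x224.
cost = relative_training_cost([(0.4, 160), (0.6, 224)])
print(f"relative cost: {cost:.3f}, estimated speedup: {1 / cost:.2f}x")
```

Under this assumption, even a short low-resolution phase gives a measurable saving, and the saving grows as more of the training runs at smaller input sizes.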

Figure 7 Low-frequency cropping: Make the model efficiently learn only from low-frequency information


Beyond frequency-domain operations, "relatively simple" features can also be found from the perspective of spatial-domain transformations. For example, the natural image information in raw visual input that has not undergone strong data augmentation or distortion is often "simpler" and easier for the model to learn, because it comes from the real-world distribution, whereas the additional information and invariances introduced by preprocessing techniques such as data augmentation are often harder for the model to learn (a typical example is given on the left side of Figure 8).

In fact, existing research has also observed that data augmentation mainly plays its role in the later stages of training (e.g., "Improving Auto-Augment via Augmentation-Wise Weight Sharing", NeurIPS'20).

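Along this dimension, the generalized curriculum learning paradigm can be realized simply by varying the strength of data augmentation, so that in the early stages of training the model is given only the natural image information in the training data that is easier to learn. The right side of Figure 8 uses RandAugment as a representative example to validate this idea. RandAugment comprises a series of common spatial-domain data augmentation transformations (e.g., random rotation, sharpness adjustment, affine transformation, exposure adjustment).

It can be observed that starting training with weaker data augmentation effectively improves the final performance of the model, and that this technique is compatible with low-frequency cropping.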

Figure 8 Finding features that are "easier to learn" for the model from a spatial-domain perspective: a data augmentation view

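Up to this point, the paper has proposed the core framework and hypotheses of generalized curriculum learning, and has demonstrated its rationality and effectiveness by revealing two key phenomena in the frequency and spatial domains. Building on this, the paper carries out a series of systematic work, listed below. Due to space limitations, please refer to the original paper for more details.

- Combining the two core findings in the frequency and spatial domains, it proposes and refines a specially designed optimization algorithm, and establishes a unified, integrated EfficientTrain++ generalized curriculum learning scheme.

- It discusses how to implement the low-frequency cropping operation efficiently on real hardware, and compares, both theoretically and experimentally, the differences and connections between two feasible ways of extracting low-frequency information: low-frequency cropping and image downsampling.

- It develops specialized practical efficiency optimizations for two challenging but common practical situations: 1) the CPU or hard disk is not powerful enough, so data preprocessing cannot keep up with the GPU; 2) large-scale parallel training, such as using 64 or more GPUs to train large models on ImageNet-22K.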

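The resulting EfficientTrain++ generalized curriculum learning scheme is shown in Figure 9. EfficientTrain++ dynamically adjusts the bandwidth of the frequency-domain low-frequency cropping and the strength of the spatial-domain data augmentation according to the percentage of the total training compute that has been consumed.

Notably, as a plug-and-play method, EfficientTrain++ can be applied directly to a variety of visual backbones and diverse training scenarios without further hyperparameter tuning or search, and its gains are stable and significant.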
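The scheduling idea described above, in which both the cropping bandwidth and the augmentation strength are driven by the fraction of total training compute consumed, can be sketched as follows. The stage boundaries, bandwidth values, maximum magnitude, and the linear ramp are hypothetical placeholders chosen for illustration; the actual schedule is the one in Figure 9 and the original paper.

```python
from bisect import bisect_right

# Hypothetical stage table: (fraction of total compute at which the stage starts,
# low-frequency cropping bandwidth in pixels). These are NOT the paper's values.
BANDWIDTH_STAGES = [(0.0, 96), (0.3, 128), (0.6, 160), (0.8, 224)]

def curriculum_state(compute_used, stages=BANDWIDTH_STAGES, max_magnitude=9.0):
    """Return (bandwidth, augmentation_magnitude) for the current training progress.

    `compute_used` is the fraction of the total training compute consumed so far,
    in [0, 1]. Bandwidth follows a step schedule; augmentation magnitude ramps up
    linearly (an assumed shape) from 0 to `max_magnitude`.
    """
    if not 0.0 <= compute_used <= 1.0:
        raise ValueError("compute_used must be in [0, 1]")
    starts = [start for start, _ in stages]
    # Index of the last stage whose start is <= compute_used.
    index = bisect_right(starts, compute_used) - 1
    bandwidth = stages[index][1]
    magnitude = max_magnitude * compute_used
    return bandwidth, magnitude


for progress in (0.0, 0.5, 1.0):
    print(progress, curriculum_state(progress))
```

In a training loop, the returned bandwidth would set the size used for low-frequency cropping of each batch, and the magnitude would be passed to the augmentation policy (for example, RandAugment).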

Figure 9 The unified, integrated generalized curriculum learning scheme: EfficientTrain++

3. Experimental Results

As a plug-and-play method, EfficientTrain++ reduces the actual training cost of a variety of visual backbones on ImageNet-1K by about 1.5×, with essentially no loss in performance or even a gain.

Figure 10 ImageNet-1K results: EfficientTrain++ on a variety of visual backbones

The gains of EfficientTrain++ hold across different training budgets. At strictly matched performance, the training speedup for DeiT/Swin on ImageNet-1K is about 2-3×.

Figure 11 ImageNet-1K results: EfficientTrain++ under different training budgets

On ImageNet-22K, EfficientTrain++ achieves 2-3× pre-training acceleration without performance loss.

Figure 12 ImageNet-22K results: EfficientTrain++ on larger-scale training data

For smaller models, EfficientTrain++ can significantly raise the achievable performance ceiling.

Figure 13 ImageNet-1K results: EfficientTrain++ can significantly raise the performance ceiling of smaller models

EfficientTrain++ is also effective for self-supervised learning algorithms (such as MAE).

Figure 14 EfficientTrain++ can be applied to self-supervised learning (such as MAE)

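Models trained with EfficientTrain++ also lose no performance on downstream tasks such as object detection, instance segmentation, and semantic segmentation.

Figure 15 Results on COCO object detection, COCO instance segmentation, and ADE20K semantic segmentation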
