Meta launches 'Chameleon' to challenge GPT-4o: a 34B-parameter mixed-modal model trained on 10 trillion tokens sets a new SOTA

The emergence of GPT-4o once again created a new paradigm for multi-modal model development!

Why do you say that?

OpenAI calls it the first "native" multi-modal model, which means that GPT-4o is different from all previous models.

Traditional multi-modal foundation models usually use a dedicated "encoder" or "decoder" for each modality, which keeps the modalities separate.

However, this approach limits the model’s ability to effectively fuse cross-modal information.

GPT-4o is the first model trained end-to-end across text, visual, and audio modalities, with all inputs and outputs handled by a single neural network.

And now, the industry’s first model that dares to challenge GPT-4o has appeared!

Recently, researchers at Meta released Chameleon, a "mixed-modal foundation model".

Paper address: https://arxiv.org/pdf/2405.09818

Like GPT-4o, Chameleon adopts a unified Transformer architecture and is trained on a mixture of text, images, and code.

Images are discretely "tokenized" in much the same way as text, so the model can reason over and generate interleaved sequences of text and images.

With this "early fusion" approach, all modalities are mapped into a common representation space from the start, so the model can process text and images seamlessly.

Chameleon generated multi-modal content

At the same time, such a design poses significant technical challenges for model training.

In this regard, the Meta research team has introduced a series of architectural innovations and training technologies.

The results show that on text-only tasks, the 34-billion-parameter Chameleon (trained on 10 trillion multi-modal tokens) performs on par with Gemini-Pro.

On visual question answering and image captioning benchmarks, it sets a new SOTA, with performance close to GPT-4V.

However, both GPT-4o and Chameleon are early explorations of a new generation of "native" end-to-end multi-modal foundation models.

At the GTC 2024 conference, Jensen Huang described an important step toward the ultimate vision of AGI: interoperability among modalities.

Is the next open source GPT-4o coming?

The release of Chameleon is simply the fastest response to GPT-4o.

Some netizens remarked that it is just tokens in, tokens out, which seems almost impossible to explain.

Others said that with such solid research released right after the birth of GPT-4o, OSS (open source software) will catch up.

However, the modalities Chameleon can currently generate are mainly images and text. The speech capabilities of GPT-4o are missing.

Netizens said that all that remains is to add another modality (audio), expand the training data set, and "cook" for a while, and we will get GPT-4o...?

Meta’s Director of Product Management said, “I’m very proud to support this team. Let’s take a step toward bringing GPT-4o closer to the open source community.”

Maybe it won’t be long before we get an open source version of GPT-4o.

Next, let’s take a look at the technical details of the Chameleon model.

Technical Architecture

Meta first states in the Chameleon paper that many recently released models still do not carry "multimodality" all the way through.

Although these models adopt an end-to-end training method, they still model different modalities separately, using separate encoders or decoders.

As mentioned at the beginning, this approach limits the model's ability to fuse cross-modal information and makes it difficult to generate truly multi-modal documents containing arbitrary forms of information.

To address this shortcoming, Meta proposed Chameleon, a family of "mixed-modal" foundation models capable of generating content in which text and images are arbitrarily interleaved.

Chameleon’s generation results, with text and images interleaved

A "mixed-modal" foundation model here means that Chameleon is not only trained end-to-end from scratch, but also interleaves and mixes information from all modalities during training and processes it with a unified architecture.

How to mix information from all modalities and represent it in the same model architecture?

The answer is still "token".

As long as it is all expressed as tokens, all modal information can be mapped into the same vector space, allowing Transformer to process it seamlessly.

However, this approach will bring technical challenges in terms of optimization stability and model scalability.

To solve these problems, the paper adapts the model architecture and uses several training techniques, including QK normalization and z-loss.

At the same time, the paper also proposes a method of fine-tuning plain text LLM into a multi-modal model.

Image tokenizer

To represent all modalities as tokens, you first need a powerful tokenizer.

To this end, the Chameleon team developed a new image tokenizer based on an earlier Meta paper. Using a codebook of size 8192, it encodes a 512×512 image into 1024 discrete tokens.

The text tokenizer is a BPE tokenizer trained with sentencepiece, the open source library developed by Google. Its vocabulary of 65,536 tokens includes the 8,192 image codebook tokens.
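To make the idea of a single token stream concrete, here is a minimal sketch of how image codes and text ids could share one sequence. Only the three sizes (512×512 image, 1024 tokens per image, codebook of 8192) come from the article. The id offset, the begin/end-of-image marker ids, and the square token grid are assumptions made for illustration and are not taken from the paper.

```python
# Sizes quoted in the article.
IMAGE_SIZE = 512          # pixels per side of the input image
TOKENS_PER_IMAGE = 1024   # discrete tokens produced per image
CODEBOOK_SIZE = 8192      # number of entries in the image codebook

# Hypothetical vocabulary layout (not from the paper): image codes occupy one
# contiguous id range, bracketed by begin/end-of-image marker ids.
IMAGE_ID_OFFSET = 50_000
BOI_ID = 49_998
EOI_ID = 49_999


def grid_side(tokens_per_image: int) -> int:
    """Side length of the token grid, assuming the tokens form a square."""
    side = round(tokens_per_image ** 0.5)
    if side * side != tokens_per_image:
        raise ValueError("token count is not a perfect square")
    return side


def image_codes_to_ids(codes: list[int]) -> list[int]:
    """Shift codebook indices into the shared vocabulary and add markers."""
    if len(codes) != TOKENS_PER_IMAGE:
        raise ValueError(f"expected {TOKENS_PER_IMAGE} codes, got {len(codes)}")
    if any(not 0 <= c < CODEBOOK_SIZE for c in codes):
        raise ValueError("code outside the codebook range")
    return [BOI_ID] + [IMAGE_ID_OFFSET + c for c in codes] + [EOI_ID]


def interleave(segments: list[tuple[str, list[int]]]) -> list[int]:
    """Flatten ("text", ids) and ("image", codes) segments into one sequence."""
    sequence: list[int] = []
    for kind, values in segments:
        if kind == "text":
            sequence.extend(values)
        elif kind == "image":
            sequence.extend(image_codes_to_ids(values))
        else:
            raise ValueError(f"unknown segment kind: {kind}")
    return sequence
```

Under the square-grid assumption, `grid_side(1024)` is 32, so each image token would correspond to a 16×16 pixel patch of the 512×512 input. Once flattened, the Transformer sees only integer ids and does not need a separate path per modality.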

Pre-training

To fully unlock the potential of "mixed modalities", the training data also mixes different modalities: the model is shown plain text, text-image pairs, and multi-modal documents in which text and images are interleaved.

Plain text data includes all pre-training data used by Llama 2 and CodeLlama, totaling 2.9 trillion tokens.

Text-image pairs contain some public data, totaling 1.4 billion pairs and 1.5 trillion tokens.

For the interleaved text-and-image data, the paper specifically emphasizes that it does not include data from Meta products and is drawn entirely from public sources, for a total of 400 billion tokens.

Chameleon’s pre-training is conducted in two separate stages, accounting for 80% and 20% of training respectively.

In the first stage, the model learns from the data above in an unsupervised way. At the start of the second stage, the weight of the first-stage data is reduced by 50% and higher-quality data is mixed in, so that the model continues learning.
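The figures above can be combined into a quick back-of-the-envelope calculation. The sketch below uses only numbers quoted in the article. It assumes that the 80%/20% split applies to the token budget and that "10 trillion tokens" is the total number of tokens seen in training. The shares it prints are shares of unique tokens, not the sampling ratios actually used.

```python
# Token counts quoted in the article, in billions of tokens.
DATA_BILLIONS = {
    "text_only": 2_900,
    "text_image_pairs": 1_500,
    "interleaved": 400,
}
TRAINING_BUDGET_BILLIONS = 10_000  # "10 trillion tokens" from the article
STAGE_SPLIT = {"stage_1": 0.8, "stage_2": 0.2}  # assumed to be a token split

# Unique tokens available across the three data sources.
unique_total = sum(DATA_BILLIONS.values())

# Share of each source in the unique data (not the sampling ratio).
shares = {name: count / unique_total for name, count in DATA_BILLIONS.items()}

# How many times the unique data would be seen under the assumed budget.
passes_over_data = TRAINING_BUDGET_BILLIONS / unique_total

# Token budget per stage under the assumed 80/20 split.
stage_budgets = {
    stage: fraction * TRAINING_BUDGET_BILLIONS
    for stage, fraction in STAGE_SPLIT.items()
}

for name, share in shares.items():
    print(f"{name}: {share:.1%} of unique tokens")
print(f"passes over the data: {passes_over_data:.2f}")
print(f"stage budgets (billions): {stage_budgets}")
```

Under these assumptions the three sources add up to 4.8 trillion unique tokens, so a 10-trillion-token run would pass over the data roughly twice.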

When the model is scaled beyond 8B parameters and 1T tokens, obvious instability appears in the later stages of training.

Since all modalities share the model weights, each modality seems to have a tendency to increase its norms and "compete" with the other modalities.

This does not cause much trouble early in training, but as training progresses and values exceed the range that bf16 can represent, the loss diverges.

The researchers attribute this to the translation invariance of the softmax function, a phenomenon also known as "logit drift".
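The translation invariance is easy to check numerically. The sketch below is plain Python with illustrative values. It shows that adding a constant to every logit leaves the softmax output unchanged, while the log of the normalizer Z grows by exactly that constant. Because the output, and hence the cross-entropy loss, cannot see the shift, nothing stops the logits from drifting upward.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def log_normalizer(logits: list[float]) -> float:
    """log Z = log(sum(exp(logit))), computed stably."""
    peak = max(logits)
    return peak + math.log(sum(math.exp(x - peak) for x in logits))


logits = [1.0, 2.0, 3.0]        # illustrative values
shift = 100.0
shifted = [x + shift for x in logits]

probs = softmax(logits)
probs_shifted = softmax(shifted)

# The probabilities are identical, so the loss cannot penalize the shift ...
max_prob_gap = max(abs(a - b) for a, b in zip(probs, probs_shifted))

# ... while log Z has moved by exactly the shift.
log_z_gap = log_normalizer(shifted) - log_normalizer(logits)

print(f"largest probability difference: {max_prob_gap:.2e}")
print(f"change in log Z: {log_z_gap:.1f}")
```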

Therefore, the paper proposes some architectural adjustments and optimization methods to ensure stability:

- QK normalization (query-key normalization): apply layer norm to the query and key vectors in the attention module, thereby directly controlling the norm growth of the softmax input.

- Introduce dropout after the attention and feed-forward layers.

- Use z-loss regularization in the loss function.
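The first and third measures in the list can be sketched in a few lines of plain Python. This is a simplified illustration and not the paper's implementation: the layer norm has no learnable gain or bias, attention is single-head, and the z-loss coefficient is an illustrative default that the article does not give.

```python
import math


def layer_norm(vec: list[float], eps: float = 1e-5) -> list[float]:
    """Layer norm without learnable gain/bias (a simplification)."""
    mean = sum(vec) / len(vec)
    var = sum((x - mean) ** 2 for x in vec) / len(vec)
    return [(x - mean) / math.sqrt(var + eps) for x in vec]


def attention_logits(queries, keys, qk_norm: bool = True):
    """Scaled dot-product attention logits, optionally with QK normalization."""
    if qk_norm:
        queries = [layer_norm(q) for q in queries]
        keys = [layer_norm(k) for k in keys]
    scale = 1.0 / math.sqrt(len(queries[0]))
    return [[scale * sum(a * b for a, b in zip(q, k)) for k in keys]
            for q in queries]


def z_loss(logits: list[float], coeff: float = 1e-5) -> float:
    """Penalty coeff * (log Z)^2; coeff here is an illustrative assumption."""
    peak = max(logits)
    log_z = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return coeff * log_z ** 2


q = [1.0, 2.0, 3.0, 4.0]        # illustrative query vector
k = [0.5, -1.0, 2.0, 0.0]       # illustrative key vector
q_big = [1000.0 * x for x in q]  # same direction, 1000x the norm

normed = attention_logits([q], [k])[0][0]
normed_big = attention_logits([q_big], [k])[0][0]
raw = attention_logits([q], [k], qk_norm=False)[0][0]
raw_big = attention_logits([q_big], [k], qk_norm=False)[0][0]

print(f"with QK-norm:    {normed:.4f} vs {normed_big:.4f}")
print(f"without QK-norm: {raw:.4f} vs {raw_big:.4f}")
```

With QK normalization the logit barely changes when the query norm grows a thousandfold, whereas the raw logit grows with it. The z-loss term complements this on the output side: unlike the cross-entropy loss, it does increase when all logits shift upward together.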

In addition to the data source and architecture, the paper also generously disclosed the scale of computing power used in pre-training.

The hardware is NVIDIA A100 GPUs with 80GB of memory. The 7B version was trained on 1024 GPUs in parallel for about 860,000 GPU hours. The 34B model used three times as many GPUs and more than 4.28 million GPU hours.
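For a rough sense of scale, the GPU-hour figures can be converted into wall-clock time. This is a minimal sketch that assumes every GPU ran for the full duration of the job, and that reads "three times" as 3 × 1024 = 3072 GPUs for the 34B model. Actual schedules may have differed.

```python
def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Wall-clock days if all GPUs run concurrently for the whole job."""
    return gpu_hours / num_gpus / 24


GPUS_7B = 1024
GPUS_34B = 3 * GPUS_7B            # assumed reading of "three times"

GPU_HOURS_7B = 860_000            # "about 860,000" in the article
GPU_HOURS_34B = 4_280_000         # "over 4.28 million" in the article

days_7b = wall_clock_days(GPU_HOURS_7B, GPUS_7B)
days_34b = wall_clock_days(GPU_HOURS_34B, GPUS_34B)
compute_ratio = GPU_HOURS_34B / GPU_HOURS_7B

print(f"7B:  about {days_7b:.0f} days on {GPUS_7B} GPUs")
print(f"34B: about {days_34b:.0f} days on {GPUS_34B} GPUs")
print(f"34B used about {compute_ratio:.1f}x the GPU hours of 7B")
```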

As the company that open sourced Llama 2, Meta’s research team is really generous. Compared with GPT-4o, which doesn’t even have a technical report, this paper, full of data and useful information, can be called "the most benevolent and righteous".

Comprehensively surpassing Llama 2

In the experimental evaluation, the researchers cover three areas: human evaluation, safety testing, and benchmark evaluation.

Benchmark Evaluation

Chameleon-34B uses four times more tokens than Llama 2 for training and achieves impressive results on a variety of single-modal benchmarks.

For text-only generation, the researchers compared the text-only capabilities of the pre-trained (non-SFT) model with other leading text-only LLMs.

The assessment content includes common sense reasoning, reading comprehension, mathematical problems and world knowledge areas. The assessment results are shown in the table below.

- Common Sense Reasoning and Reading Comprehension

Compared with Llama 2, Chameleon-7B and Chameleon-34B are more competitive. In fact, the 34B model even surpasses Llama-2 70B on 5 of 8 tasks and performs on par with Mixtral-8x7B.

- Mathematics and World Knowledge

Despite being trained on additional modalities, both Chameleon models demonstrate strong mathematical skills.

On GSM8k, Chameleon-7B performs better than the Llama 2 model of corresponding parameter scale, and its performance is equivalent to Mistral-7B.

In addition, Chameleon-34B performs better than Llama 2-70B at maj@1 (61.4 vs 56.8) and Mixtral-8x7B at maj@32 (77.0 vs 75.1).

Likewise, on the MATH benchmark, Chameleon-7B outperforms Llama 2 and is on par with Mistral-7B at maj@4, while Chameleon-34B outperforms Llama 2-70B and approaches the performance of Mixtral-8x7B at maj@4 (24.7 vs 28.4).

Overall, the performance of Chameleon exceeds Llama 2 in all aspects and is close to Mistral-7B/8x7B on some tasks.
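The maj@k numbers quoted above refer to majority voting: the model samples k answers per problem, and the most frequent answer is scored against the reference. The following is a minimal sketch of that metric. The function names and the toy data are hypothetical, and tie-breaking by first appearance is an assumption that evaluation harnesses may handle differently.

```python
from collections import Counter


def majority_answer(samples: list[str]) -> str:
    """Most frequent answer; ties go to the answer that appeared first."""
    return Counter(samples).most_common(1)[0][0]


def maj_at_k(problems: list[tuple[list[str], str]], k: int) -> float:
    """Percentage of problems whose majority answer over k samples is correct.

    Each problem is (sampled_answers, reference_answer).
    """
    correct = 0
    for samples, reference in problems:
        if len(samples) < k:
            raise ValueError(f"need at least {k} samples per problem")
        if majority_answer(samples[:k]) == reference:
            correct += 1
    return 100.0 * correct / len(problems)


# Toy data: two problems with four sampled answers each.
toy_problems = [
    (["18", "18", "20", "18"], "18"),
    (["7", "9", "9", "9"], "7"),
]

score_at_1 = maj_at_k(toy_problems, k=1)
score_at_4 = maj_at_k(toy_problems, k=4)
print(f"maj@1 = {score_at_1:.1f}, maj@4 = {score_at_4:.1f}")
```

With k = 1 the metric reduces to scoring a single sample, which is why maj@1 and maj@32 can rank models differently.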

For image-to-text tasks, the researchers evaluated two specific tasks: visual question answering and image captioning.

Chameleon beat models such as Flamingo and Llava-1.5 to reach SOTA on visual question answering and image captioning, while on text-only tasks its performance is comparable to first-tier models such as Mixtral 8x7B and Gemini Pro.

Human evaluation and safety testing

To further evaluate the quality of the multi-modal content the model generates, the paper also introduces human evaluation experiments in addition to benchmarks, and finds that Chameleon-34B is preferred over both Gemini Pro and GPT-4V.

Against GPT-4V and Gemini Pro, human judges gave Chameleon preference rates of 51.6% and 60.4% respectively.

The following figure shows the performance comparison of Chameleon and baseline models in understanding and generating content for a diverse set of prompts from human annotators.

Each question is answered by three different human annotators, with the majority vote being the final answer.

To understand the quality of the human annotators and whether the questions were well designed, the researchers also examined the degree of agreement between different annotators.
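The aggregation and agreement check described above can be sketched as follows. This is a hypothetical illustration: the label values and the three agreement levels are assumptions made for the example and are not the paper's exact protocol.

```python
from collections import Counter
from typing import Optional


def aggregate(labels: list[str]) -> tuple[Optional[str], str]:
    """Return (final_label, agreement_level) for one question's annotations."""
    top_label, top_count = Counter(labels).most_common(1)[0]
    if top_count == len(labels):
        return top_label, "unanimous"
    if 2 * top_count > len(labels):
        return top_label, "majority"
    return None, "no agreement"


# Toy annotations: three annotators per question.
annotations = [
    ["fulfills", "fulfills", "fulfills"],
    ["fulfills", "partially", "fulfills"],
    ["fulfills", "partially", "does not"],
]

results = [aggregate(labels) for labels in annotations]
level_counts = Counter(level for _, level in results)
print(results)
print(dict(level_counts))
```

Counting how often questions fall into each level gives a quick read on whether the annotators, and the questions themselves, are reliable.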

Table 5 reports safety testing on 20,000 crowdsourced prompts and 445 red-team interactions designed to provoke the model into producing unsafe content.

Compared with Gemini and GPT-4V, Chameleon is very competitive when handling prompts that require interleaved, mixed-modal responses.

As the example shows, when completing a question-answering task, Chameleon can not only understand text + image input but also add appropriate "pictures" to its output.

Moreover, the images Chameleon generates usually fit the context, which makes this interleaved output very attractive to users.

Contribution team

At the end of the paper, the contributors who participated in this research are also listed.

The list covers pre-training, alignment and safety, inference and evaluation, and contributors to all projects.

Among them, * indicates a joint author, † indicates a key contributor, ‡ indicates the workflow leader, and ♯ indicates the project leader.

The above is the detailed content of Meta launches 'Chameleon' to challenge GPT-4o, 34B parameters lead the multi-modal revolution! 10 trillion token training refreshes SOTA. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1421

1421

52

52

1315

1315

25

25

1266

1266

29

29

1239

1239

24

24

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

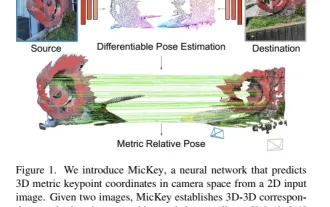

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

Project link written in front: https://nianticlabs.github.io/mickey/ Given two pictures, the camera pose between them can be estimated by establishing the correspondence between the pictures. Typically, these correspondences are 2D to 2D, and our estimated poses are scale-indeterminate. Some applications, such as instant augmented reality anytime, anywhere, require pose estimation of scale metrics, so they rely on external depth estimators to recover scale. This paper proposes MicKey, a keypoint matching process capable of predicting metric correspondences in 3D camera space. By learning 3D coordinate matching across images, we are able to infer metric relative

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving