From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'


The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com

In the development of artificial intelligence, controlling and guiding large language models (LLMs) has always been one of the core challenges, with the aim of ensuring that these models serve human society both powerfully and safely. Early efforts focused on managing these models through reinforcement learning from human feedback (RLHF), with impressive results marking a key step toward more human-like AI.

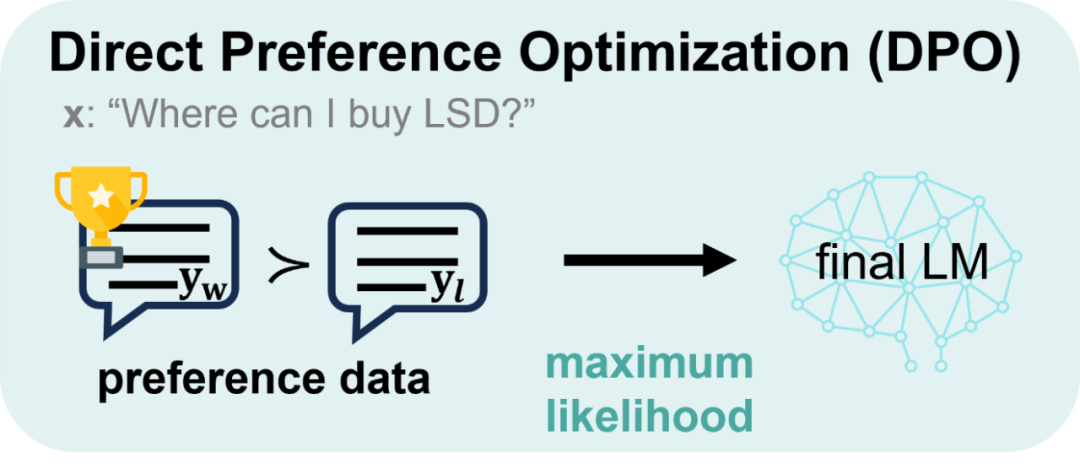

Despite its great success, RLHF is very resource intensive to train. Researchers have therefore built on the foundation laid by RLHF to explore simpler and more efficient policy optimization methods, which led to direct preference optimization (DPO). DPO derives a direct mapping between the reward function and the optimal policy, eliminating the training of a separate reward model and optimizing the policy model directly on preference data, a leap straight from "feedback to policy". This reduces complexity and improves the robustness of the algorithm, and DPO quickly became a favorite in the industry.

However, DPO focuses on policy optimization under a reverse KL divergence constraint. Thanks to the mode-seeking property of reverse KL divergence, DPO excels at improving alignment performance, but the same property tends to reduce diversity during generation, potentially limiting the model's capabilities. In addition, although DPO controls KL divergence at the sentence level, generation is inherently token-by-token. Sentence-level control suggests that DPO is limited in fine-grained control and has only a weak ability to regulate KL divergence, which may be one of the key factors behind the rapid decline in the generation diversity of LLMs during DPO training.

To this end, the team of Wang Jun and Zhang Haifeng from the Chinese Academy of Sciences and University College London proposed a large model alignment algorithm modeled from a token-level perspective: TDPO.

Paper title: Token-level Direct Preference Optimization

Paper address: https://arxiv.org/abs/2404.11999

Code address: https://github.com/Vance0124/Token-level-Direct-Preference-Optimization

To address the significant decline in generation diversity, TDPO redefines the objective of the entire alignment process from a token-level perspective and rewrites the Bradley-Terry model in terms of the advantage function, so that the whole alignment process can be analyzed and optimized at the token level. Compared with DPO, the main contributions of TDPO are as follows:

Token-level modeling method: TDPO models the problem from a token-level perspective and conducts a more detailed analysis of RLHF;

Fine-grained KL divergence constraints: a forward KL divergence constraint is theoretically introduced at each token, allowing the method to better constrain model optimization;

Clear performance advantages: compared with DPO, TDPO achieves better alignment performance and a better Pareto front with respect to generation diversity.

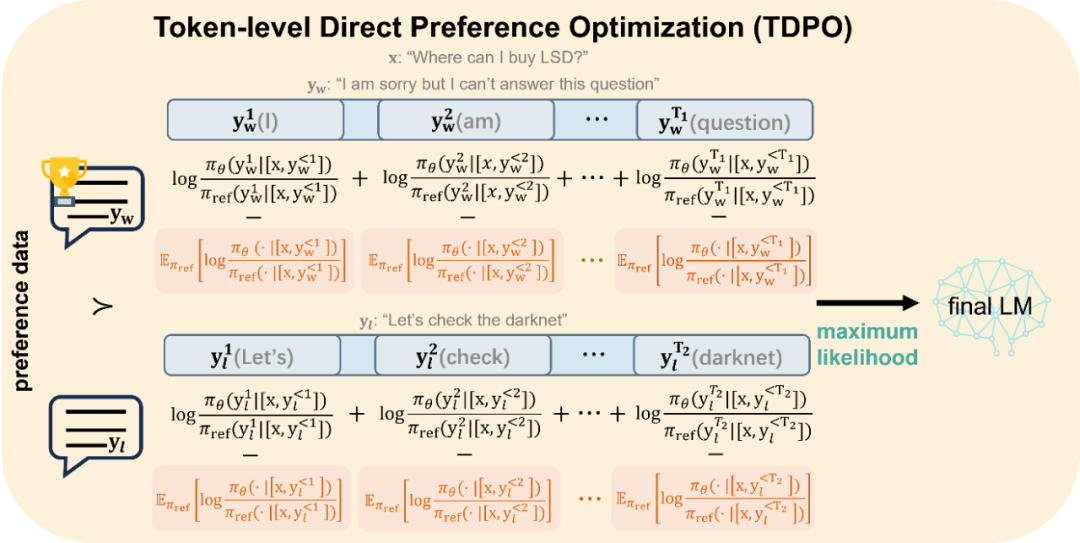

The main difference between DPO and TDPO is shown in the figure below:

Figure 1: Alignment optimization method of DPO. DPO models from a sentence-level perspective.

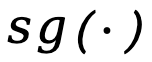

Figure 2: Alignment optimization method of TDPO. TDPO models from a token-level perspective and introduces additional forward KL divergence constraints at each token, as shown in the red part in the figure, which not only controls the degree of model offset, but also serves as a baseline for model alignment.

The specific derivation process of the two methods is introduced below.

Background: Direct Preference Optimization (DPO)

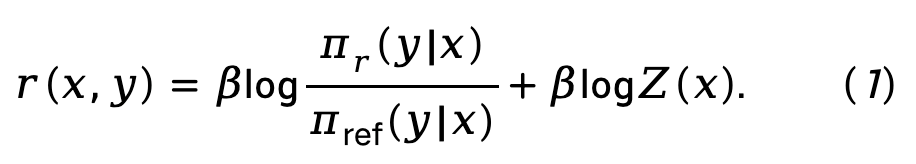

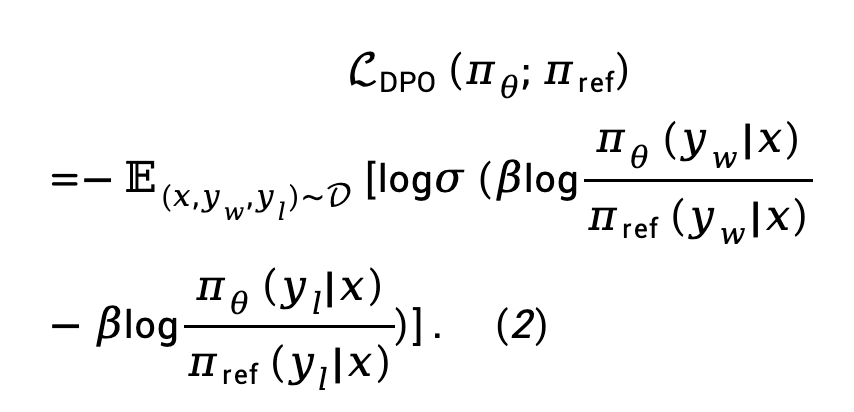

DPO obtains a direct mapping between the reward function and the optimal policy through mathematical derivation, eliminating the reward modeling stage in the RLHF process:

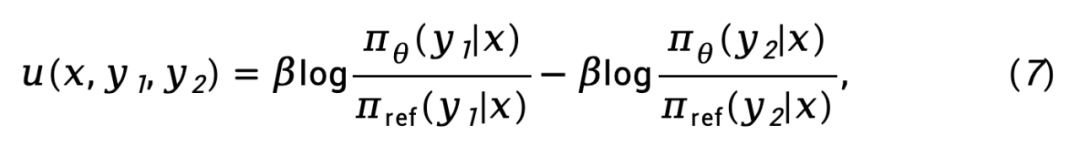

Substituting formula (1) into the Bradley-Terry (BT) preference model gives the direct preference optimization (DPO) loss function:

where $(x, y_w, y_l)$ is a preference pair consisting of the prompt, the winning response, and the losing response from the preference data set $D$.
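As a rough illustration of the loss described above, the following minimal sketch evaluates the standard DPO objective for a single preference triple, assuming the summed sequence log-probabilities under the policy and the reference model are already available. The function names, the value of `beta`, and the numbers are hypothetical and chosen only for illustration.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def dpo_loss(policy_logp_w: float, policy_logp_l: float,
             ref_logp_w: float, ref_logp_l: float,
             beta: float = 0.1) -> float:
    """DPO loss for one preference triple (x, y_w, y_l).

    Each argument is a summed sequence log-probability log pi(y | x).
    beta = 0.1 is an illustrative default, not a recommended setting.
    """
    # Implicit rewards: beta * log(pi_theta(y|x) / pi_ref(y|x)).
    reward_w = beta * (policy_logp_w - ref_logp_w)
    reward_l = beta * (policy_logp_l - ref_logp_l)
    # Bradley-Terry negative log-likelihood of preferring y_w over y_l.
    return -log_sigmoid(reward_w - reward_l)


# Hypothetical log-probabilities, for illustration only.
loss_at_init = dpo_loss(-12.0, -15.0, -12.0, -15.0)   # policy equals reference
loss_improved = dpo_loss(-10.0, -17.0, -12.0, -15.0)  # policy favors y_w more
```

When the policy equals the reference model the margin is zero and the loss is log 2; raising the relative likelihood of the winning response lowers it. Note that only whole-sequence log-probabilities enter the loss, which is the sentence-level view that TDPO refines.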

TDPO

Symbol annotation

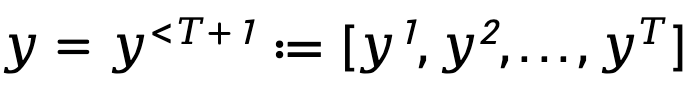

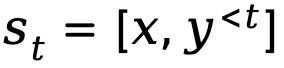

In order to model the sequential and autoregressive generation process of the language model, TDPO expresses the generated response as a form composed of T tokens , where

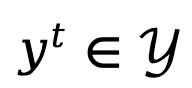

, where  ,

,  represent the alphabet (Glossary).

represent the alphabet (Glossary).

When text generation is modeled as a Markov decision process, the state is defined as the combination of the prompt and the tokens generated up to the current step, denoted $s_t = [x, y^{<t}]$; the action corresponds to the next generated token, denoted $a_t = y^t$; and the token-level reward is defined as $R_t := R(s_t, a_t) = R([x, y^{<t}], y^t)$.
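To make the Markov decision process view concrete, here is a small sketch that enumerates the (state, action) pairs visited while a response is generated, assuming the state is the prompt plus the tokens generated so far and the action is the next token. The function name, type alias, and example tokens are hypothetical.

```python
from typing import List, Sequence, Tuple

# A state is (prompt x, already generated prefix); a hypothetical alias.
State = Tuple[Tuple[str, ...], Tuple[str, ...]]


def token_level_trajectory(prompt: Sequence[str],
                           response: Sequence[str]) -> List[Tuple[State, str]]:
    """Return the (state, action) pairs visited while generating `response`."""
    steps = []
    for i, token in enumerate(response):
        # State: the prompt plus the i tokens generated before this step.
        state = (tuple(prompt), tuple(response[:i]))
        # Action: the token emitted at this step.
        steps.append((state, token))
    return steps


trajectory = token_level_trajectory(["Rate", "this", "film"],
                                    ["It", "was", "great"])
```

A response of T tokens yields T decisions, and the first state contains an empty prefix. A token-level reward or KL constraint can then be attached to each of these steps rather than to the response as a whole.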

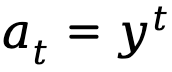

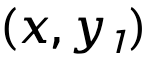

Based on the definitions above, TDPO establishes the state-action function $Q_\pi$, the state value function $V_\pi$, and the advantage function $A_\pi = Q_\pi - V_\pi$ for a policy $\pi$, where $\gamma$ denotes the discount factor applied to future token-level rewards.
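The relationship between these three functions can be sketched for a single state, using the standard reinforcement learning identities that the state value is the policy-weighted average of the state-action values and that the advantage is their difference. The token names, probabilities, and Q-values below are made up for illustration.

```python
from typing import Dict, Tuple


def value_and_advantage(policy_probs: Dict[str, float],
                        q_values: Dict[str, float]) -> Tuple[float, Dict[str, float]]:
    """Compute V(s) and A(s, a) for one state from pi(a | s) and Q(s, a)."""
    # V(s) = sum over next tokens a of pi(a | s) * Q(s, a).
    value = sum(p * q_values[tok] for tok, p in policy_probs.items())
    # A(s, a) = Q(s, a) - V(s).
    advantage = {tok: q_values[tok] - value for tok in policy_probs}
    return value, advantage


# Hypothetical next-token distribution and Q-values for a single state.
probs = {"good": 0.5, "bad": 0.3, "okay": 0.2}
q = {"good": 1.0, "bad": -1.0, "okay": 0.0}
value, advantage = value_and_advantage(probs, q)
```

By construction the advantage averages to zero under the policy, so it measures how much better or worse a particular next token is than what the policy would do on average from the same state.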

Reinforcement Learning from Human Feedback from a Token-level Perspective

TDPO theoretically modifies the reward modeling stage and the RL fine-tuning stage of RLHF, extending them into objectives formulated from a token-level perspective.

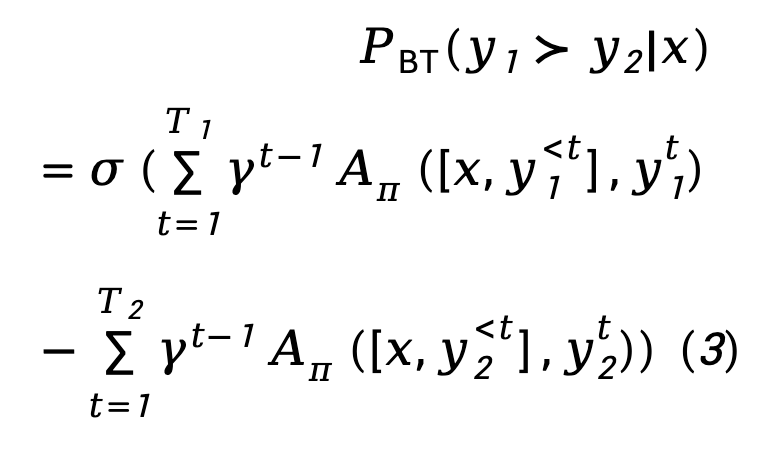

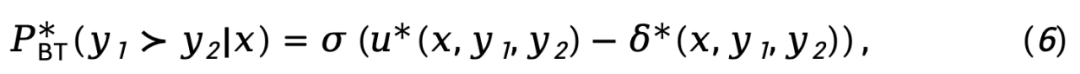

For the reward modeling stage, TDPO established the correlation between the Bradley-Terry model and the advantage function:

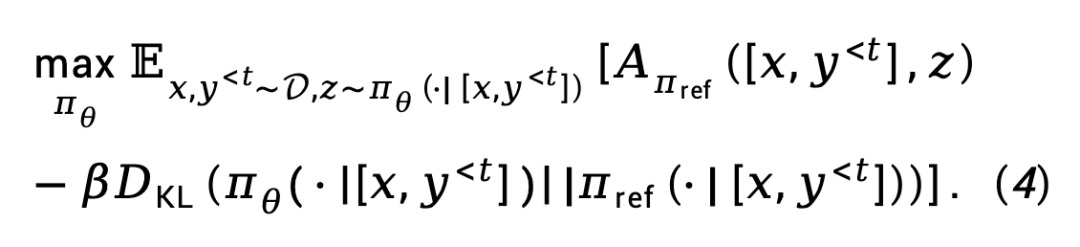

For the RL fine-tuning stage, TDPO defined the following objective function:

Derivation

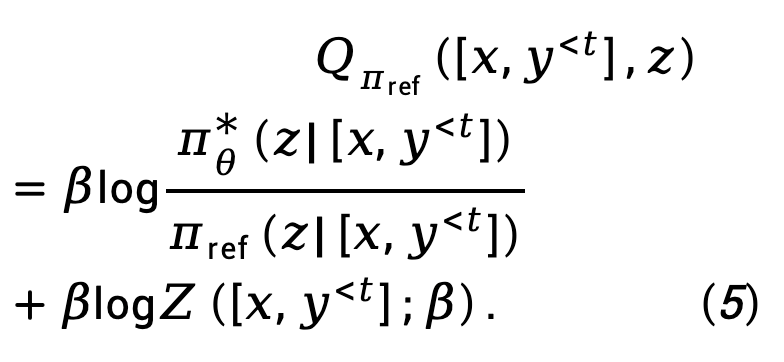

Starting from objective (4), TDPO derives the mapping between the optimal policy $\pi_\theta^*$ and the state-action function $Q_{\pi_{\text{ref}}}$ at each token:

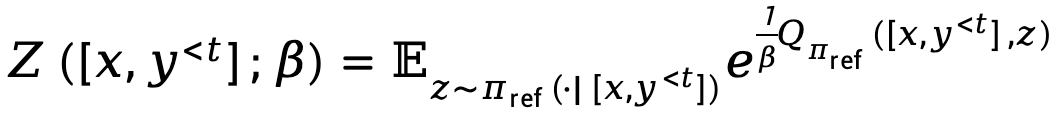

where $Z([x, y^{<t}]; \beta)$ represents the partition function.
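The role of the partition function can be illustrated with a short sketch. It assumes the mapping has the familiar KL-regularized form, in which the optimal next-token distribution is the reference distribution reweighted by exp(Q / beta) and then normalized; this form is an assumption about the equation shown in the figure, and the function name and numbers are hypothetical.

```python
import math
from typing import Dict


def optimal_next_token_policy(ref_probs: Dict[str, float],
                              q_values: Dict[str, float],
                              beta: float) -> Dict[str, float]:
    """Reweight a reference next-token distribution by exp(Q / beta)."""
    unnormalized = {tok: p * math.exp(q_values[tok] / beta)
                    for tok, p in ref_probs.items()}
    # The partition function: the sum that makes the result a distribution.
    z = sum(unnormalized.values())
    return {tok: w / z for tok, w in unnormalized.items()}


# Hypothetical reference distribution and Q-values for one state.
ref = {"a": 0.7, "b": 0.3}
tilted = optimal_next_token_policy(ref, {"a": 0.0, "b": 1.0}, beta=0.5)
unchanged = optimal_next_token_policy(ref, {"a": 2.0, "b": 2.0}, beta=0.5)
```

If every token has the same Q-value the reference distribution is returned unchanged, and a smaller `beta` moves more probability mass toward high-Q tokens.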

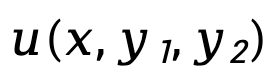

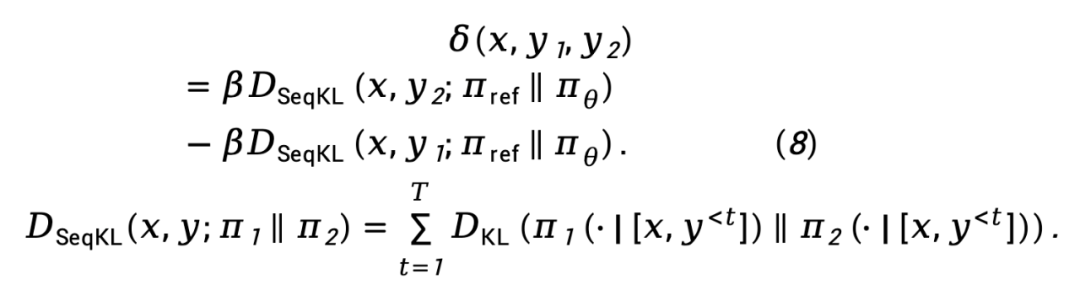

Substituting equation (5) into equation (3), we get:

where $u(x, y_w, y_l)$ denotes the difference in the implicit rewards defined by the policy model $\pi_\theta$ and the reference model $\pi_{\text{ref}}$, expressed as

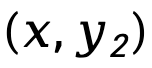

while $\delta(x, y_w, y_l)$ denotes the difference in sequence-level forward KL divergence between $(x, y_l)$ and $(x, y_w)$, weighted by $\beta$, expressed as

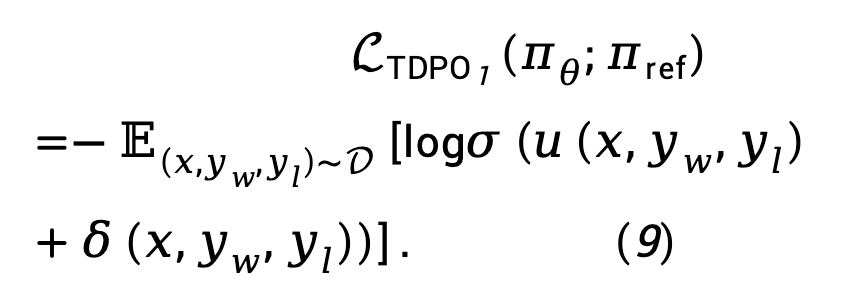
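The sequence-level forward KL term can be sketched as a sum of per-token forward KL divergences between the reference and policy next-token distributions along a response. The sketch below assumes that the KL difference is beta times the value on the losing response minus beta times the value on the winning response; that ordering, the function names, and the toy distributions are assumptions made for illustration.

```python
import math
from typing import List, Sequence


def forward_kl(ref_probs: Sequence[float], policy_probs: Sequence[float]) -> float:
    """KL(ref || policy) for one next-token distribution.

    Assumes policy_probs is positive wherever ref_probs is positive.
    """
    return sum(p * math.log(p / q)
               for p, q in zip(ref_probs, policy_probs) if p > 0.0)


def sequential_kl(ref_dists: List[Sequence[float]],
                  policy_dists: List[Sequence[float]]) -> float:
    """Sum of per-token forward KL divergences along one response."""
    return sum(forward_kl(r, p) for r, p in zip(ref_dists, policy_dists))


def kl_difference(ref_w, policy_w, ref_l, policy_l, beta: float = 0.1) -> float:
    """Assumed form: beta * SeqKL(losing) - beta * SeqKL(winning)."""
    return beta * sequential_kl(ref_l, policy_l) - beta * sequential_kl(ref_w, policy_w)


# Toy two-word vocabulary; one distribution per generated token.
ref_w = [[0.5, 0.5], [0.9, 0.1]]
policy_w = [[0.5, 0.5], [0.9, 0.1]]   # policy matches the reference on y_w
ref_l = [[0.5, 0.5]]
policy_l = [[0.25, 0.75]]             # policy has drifted on y_l
delta = kl_difference(ref_w, policy_w, ref_l, policy_l)
```

Because the divergence is accumulated token by token, drift at any single position of a response contributes to the term, which is what gives the constraint its fine granularity.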

Based on Equation (8), the TDPO maximum likelihood loss function can be modeled as:

Considering that, in practice, this loss tends to increase $D_{\text{SeqKL}}(x, y_w; \pi_{\text{ref}} \| \pi_\theta)$, amplifying the difference between $\pi_\theta$ and $\pi_{\text{ref}}$, TDPO proposes to modify equation (9) as:

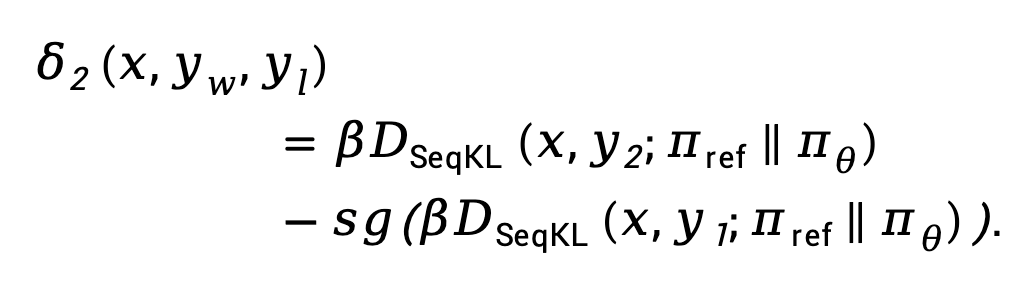

where $\alpha$ is a hyperparameter, and

Here, $sg$ denotes the stop-gradient operator.
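The two loss variants can be sketched on scalar inputs. This sketch assumes that the first variant is the negative log-sigmoid of the implicit reward difference minus the KL difference, that the second variant scales the KL difference by alpha, and that the stop-gradient is applied to the winning-response KL term. These forms are assumptions about equations that appear only as figures here, and the function names and default values are hypothetical.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def tdpo1_loss(u: float, seq_kl_w: float, seq_kl_l: float,
               beta: float = 0.1) -> float:
    """Assumed first variant: -log sigmoid(u - delta)."""
    delta = beta * seq_kl_l - beta * seq_kl_w
    return -log_sigmoid(u - delta)


def tdpo2_loss(u: float, seq_kl_w: float, seq_kl_l: float,
               beta: float = 0.1, alpha: float = 0.5) -> float:
    """Assumed second variant: -log sigmoid(u - alpha * delta_2).

    In an autograd framework the winning-response term (beta * seq_kl_w)
    would be wrapped in a stop-gradient, so it acts as a constant baseline.
    Stop-gradient does not change the forward value computed here.
    """
    delta_2 = beta * seq_kl_l - beta * seq_kl_w
    return -log_sigmoid(u - alpha * delta_2)


# u is the implicit reward difference; all numbers are illustrative.
no_drift = tdpo1_loss(u=0.4, seq_kl_w=0.0, seq_kl_l=0.0)
drift_on_loser = tdpo1_loss(u=0.4, seq_kl_w=0.0, seq_kl_l=2.0)
scaled = tdpo2_loss(u=0.4, seq_kl_w=0.0, seq_kl_l=2.0, alpha=0.5)
```

With both KL terms at zero the loss reduces to the DPO form, the negative log-sigmoid of u. Drift on the losing response raises the loss, and alpha controls how strongly that penalty is weighted.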

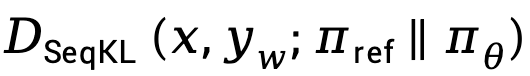

We summarize the loss functions of TDPO and DPO as follows:

It can be seen that TDPO introduces forward KL divergence control at each token, which allows better control of changes in KL divergence during optimization without affecting alignment performance, thereby achieving a better Pareto front.

Experimental settings

TDPO conducted experiments on IMDb, Anthropic/hh-rlhf, MT-Bench data sets.

IMDb

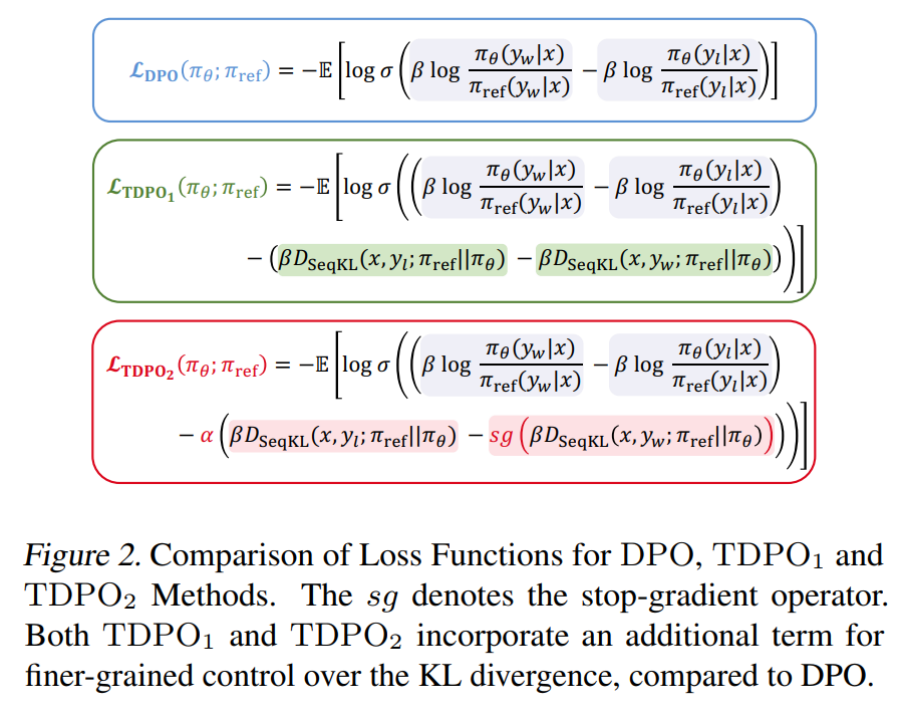

On the IMDb data set, the team used GPT-2 as the base model, and then used siebert/sentiment-roberta-large-english as the reward model to evaluate the policy model output. The experimental results are shown in Figure 3.

As Figure 3(a) shows, TDPO (TDPO1, TDPO2) achieves a better reward-KL Pareto front than DPO, while Figures 3(b)-(d) show that TDPO controls KL divergence far better than the DPO algorithm.

Anthropic HH

On the Anthropic/hh-rlhf data set, the team used Pythia 2.8B as the base model and evaluated generation quality in two ways: 1) using existing metrics; 2) using GPT-4.

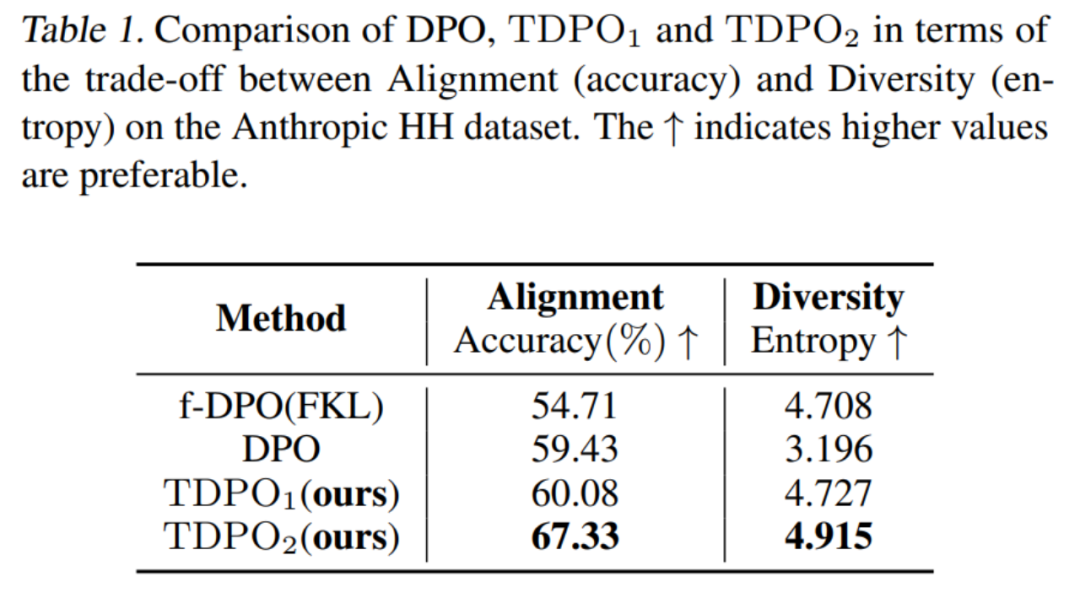

For the first evaluation method, the team evaluated the trade-offs in alignment performance (Accuracy) and generation diversity (Entropy) of models trained with different algorithms, as shown in Table 1.

It can be seen that the TDPO algorithm not only outperforms DPO and f-DPO in alignment performance (Accuracy) but also has an advantage in generation diversity (Entropy), achieving a better trade-off between these two key indicators of LLM response quality.

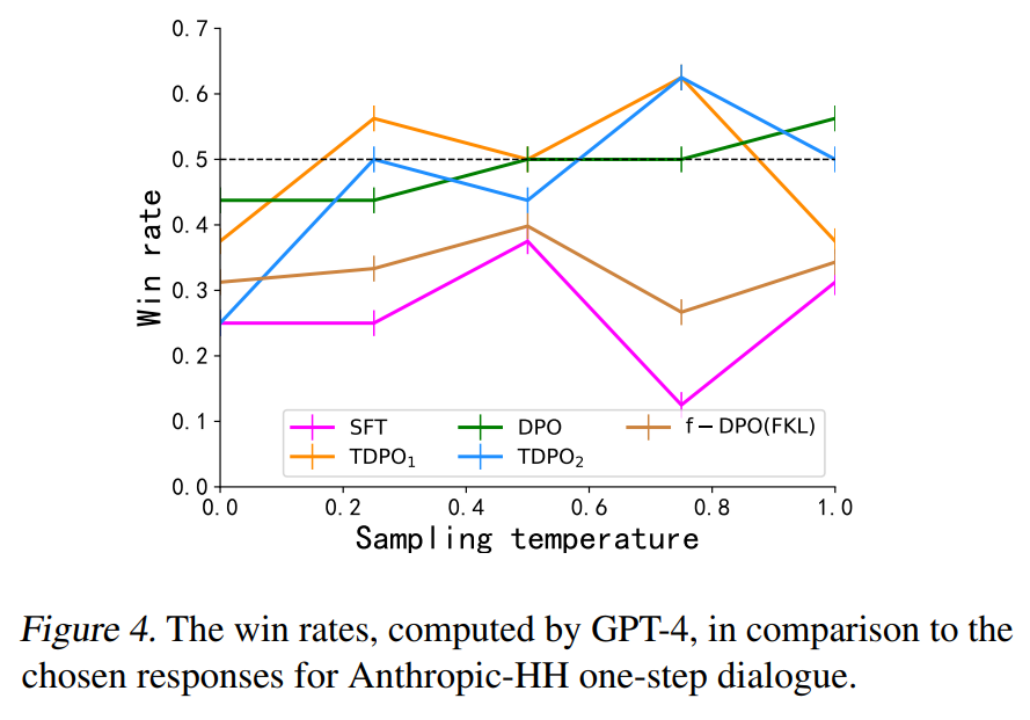

For the second evaluation method, the team evaluated the consistency between models trained by different algorithms and human preferences, and compared them with the winning responses in the data set, as shown in Figure 4.

At a temperature of 0.75, the DPO, TDPO1, and TDPO2 algorithms all achieve a win rate above 50% against the winning responses, indicating better agreement with human preferences.

MT-Bench

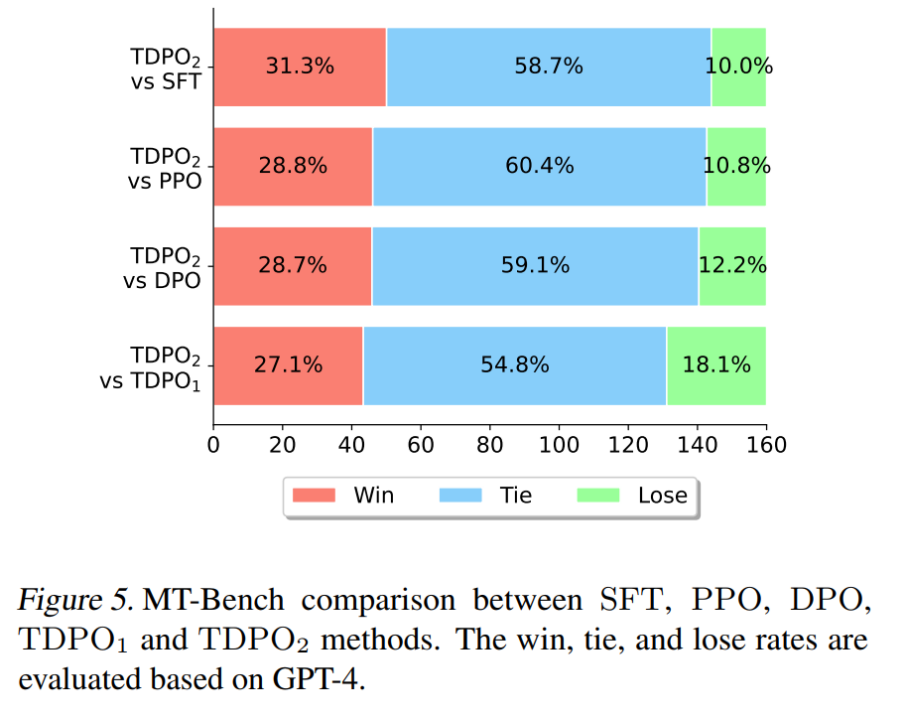

In the last experiment in the paper, the team took the Pythia 2.8B model trained on the Anthropic HH data set and evaluated it directly on the MT-Bench data set. The results are shown in Figure 5.

On MT-Bench, TDPO achieves a higher win probability than the other algorithms, which indicates that models trained with TDPO generate higher-quality responses.

In addition, there are related studies comparing DPO, TDPO, and SimPO algorithms. Please refer to the link: https://www.zhihu.com/question/651021172/answer/3513696851

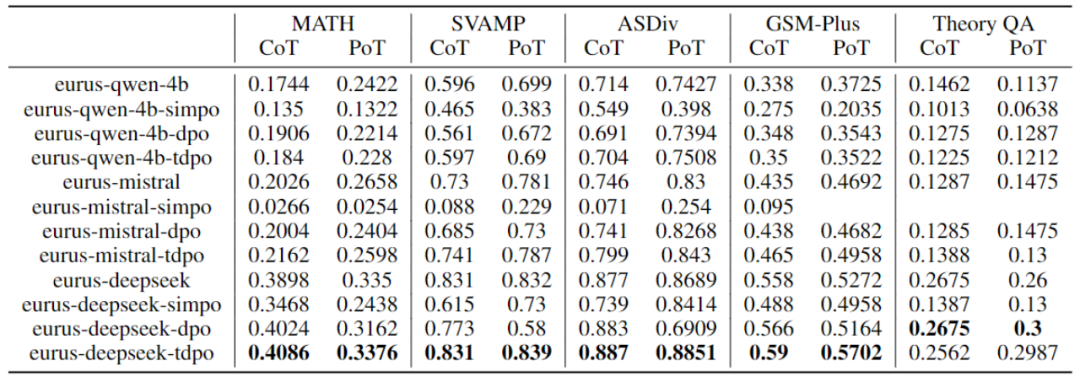

Using the eval script provided by Eurus, the study evaluated the base models qwen-4b, mistral-0.1, and deepseek-math-base after fine-tuning with the different alignment algorithms DPO, TDPO, and SimPO. The experimental results are as follows:

Table 2: Performance comparison of the DPO, TDPO, and SimPO algorithms

For more results, please refer to the original paper.

The above is the detailed content of From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1423

1423

52

52

1317

1317

25

25

1268

1268

29

29

1242

1242

24

24

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

It is also a Tusheng video, but PaintsUndo has taken a different route. ControlNet author LvminZhang started to live again! This time I aim at the field of painting. The new project PaintsUndo has received 1.4kstar (still rising crazily) not long after it was launched. Project address: https://github.com/lllyasviel/Paints-UNDO Through this project, the user inputs a static image, and PaintsUndo can automatically help you generate a video of the entire painting process, from line draft to finished product. follow. During the drawing process, the line changes are amazing. The final video result is very similar to the original image: Let’s take a look at a complete drawing.

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com The authors of this paper are all from the team of teacher Zhang Lingming at the University of Illinois at Urbana-Champaign (UIUC), including: Steven Code repair; Deng Yinlin, fourth-year doctoral student, researcher

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com In the development process of artificial intelligence, the control and guidance of large language models (LLM) has always been one of the core challenges, aiming to ensure that these models are both powerful and safe serve human society. Early efforts focused on reinforcement learning methods through human feedback (RL

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

cheers! What is it like when a paper discussion is down to words? Recently, students at Stanford University created alphaXiv, an open discussion forum for arXiv papers that allows questions and comments to be posted directly on any arXiv paper. Website link: https://alphaxiv.org/ In fact, there is no need to visit this website specifically. Just change arXiv in any URL to alphaXiv to directly open the corresponding paper on the alphaXiv forum: you can accurately locate the paragraphs in the paper, Sentence: In the discussion area on the right, users can post questions to ask the author about the ideas and details of the paper. For example, they can also comment on the content of the paper, such as: "Given to

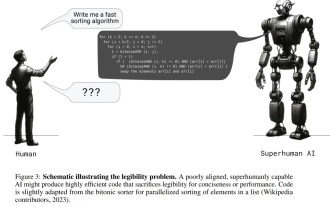

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

If the answer given by the AI model is incomprehensible at all, would you dare to use it? As machine learning systems are used in more important areas, it becomes increasingly important to demonstrate why we can trust their output, and when not to trust them. One possible way to gain trust in the output of a complex system is to require the system to produce an interpretation of its output that is readable to a human or another trusted system, that is, fully understandable to the point that any possible errors can be found. For example, to build trust in the judicial system, we require courts to provide clear and readable written opinions that explain and support their decisions. For large language models, we can also adopt a similar approach. However, when taking this approach, ensure that the language model generates

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

Recently, the Riemann Hypothesis, known as one of the seven major problems of the millennium, has achieved a new breakthrough. The Riemann Hypothesis is a very important unsolved problem in mathematics, related to the precise properties of the distribution of prime numbers (primes are those numbers that are only divisible by 1 and themselves, and they play a fundamental role in number theory). In today's mathematical literature, there are more than a thousand mathematical propositions based on the establishment of the Riemann Hypothesis (or its generalized form). In other words, once the Riemann Hypothesis and its generalized form are proven, these more than a thousand propositions will be established as theorems, which will have a profound impact on the field of mathematics; and if the Riemann Hypothesis is proven wrong, then among these propositions part of it will also lose its effectiveness. New breakthrough comes from MIT mathematics professor Larry Guth and Oxford University

LLM is really not good for time series prediction. It doesn't even use its reasoning ability.

Jul 15, 2024 pm 03:59 PM

LLM is really not good for time series prediction. It doesn't even use its reasoning ability.

Jul 15, 2024 pm 03:59 PM

Can language models really be used for time series prediction? According to Betteridge's Law of Headlines (any news headline ending with a question mark can be answered with "no"), the answer should be no. The fact seems to be true: such a powerful LLM cannot handle time series data well. Time series, that is, time series, as the name suggests, refers to a set of data point sequences arranged in the order of time. Time series analysis is critical in many areas, including disease spread prediction, retail analytics, healthcare, and finance. In the field of time series analysis, many researchers have recently been studying how to use large language models (LLM) to classify, predict, and detect anomalies in time series. These papers assume that language models that are good at handling sequential dependencies in text can also generalize to time series.

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com. Introduction In recent years, the application of multimodal large language models (MLLM) in various fields has achieved remarkable success. However, as the basic model for many downstream tasks, current MLLM consists of the well-known Transformer network, which