Li Feifei's team's new work: brain-controlled robots do housework, giving brain-computer interfaces the ability to learn with few samples

Use your brain, not your hands.

In the future, you may be able to get a robot to do your housework just by thinking about it. NOIR, a system recently proposed by the team of Wu Jiajun and Li Feifei at Stanford University, lets users control robots to complete everyday tasks through a non-invasive electroencephalography (EEG) device.

NOIR decodes your EEG signals into commands drawn from a library of robot skills. It can already complete tasks such as cooking sukiyaki, ironing clothes, grating cheese, playing tic-tac-toe, and even petting a robot dog. This modular system has strong learning capabilities and can handle the complex, varied tasks of daily life.

The brain-robot interface (BRI) is a landmark achievement of human art, science, and engineering. We have seen it in countless works of science fiction and creative art, such as "The Matrix" and "Avatar", but truly realizing a BRI is not easy: it requires breakthrough scientific research to create a robotic system that works in perfect coordination with humans.

One key component of such a system is the ability of machines to communicate with humans. In human-robot collaboration and robot learning, humans convey their intentions through actions, button presses, gaze, facial expressions, language, and so on. Communicating with robots directly through neural signals is the most exciting but also the most challenging prospect.

Recently, a multidisciplinary team led by Wu Jiajun and Li Feifei of Stanford University proposed NOIR (Neural Signal Operated Intelligent Robots), a general-purpose intelligent BRI system.

Paper address: https://openreview.net/pdf?id=eyykI3UIHa

Project website: https://noir-corl.github.io/

The system is based on non-invasive electroencephalography (EEG). Its central principle is hierarchical shared autonomy: humans define high-level goals, and the robot achieves them by executing low-level motion commands. The system incorporates new advances in neuroscience, robotics, and machine learning to improve on previous methods. The team summarizes its contributions as follows.

First, NOIR is versatile: it can be used for diverse tasks and is accessible to different communities. The study shows that NOIR can complete as many as 20 everyday activities, whereas previous BRI systems were usually designed for one or a few tasks, or existed only in simulation. In addition, the general population can use NOIR with minimal training.

Second, the "I" in NOIR means that the robot system is intelligent and adaptive. The robot is equipped with a diverse repertoire of skills that let it perform low-level actions without intensive human supervision. Using parameterized skill primitives such as Pick(obj-A) or MoveTo(x, y), the robot can naturally receive, interpret, and execute the behavioral goals humans specify.
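To make the idea of a parameterized primitive concrete, here is a minimal Python sketch of a skill library in which each skill is a name plus a fixed parameter list, and a human-selected plan is simply a sequence of calls. The skill names Pick and MoveTo come from the text; the `Skill` class, the `run_plan` helper, and the string-returning stub controllers are hypothetical stand-ins for real robot controllers, not NOIR's code.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Skill:
    """A parameterized primitive such as Pick(obj) or MoveTo(x, y)."""
    name: str
    param_names: Tuple[str, ...]
    run: Callable[..., str]  # hypothetical stub; a real controller would move the robot

    def __call__(self, *args) -> str:
        # Reject calls whose arguments do not match the skill's parameter list.
        if len(args) != len(self.param_names):
            raise ValueError(f"{self.name} expects {self.param_names}, got {args}")
        return self.run(*args)


# Hypothetical library: the stubs only describe what a low-level controller would do.
SKILLS: Dict[str, Skill] = {
    "Pick": Skill("Pick", ("obj",), lambda obj: f"grasp {obj}"),
    "MoveTo": Skill("MoveTo", ("x", "y"), lambda x, y: f"move end effector to ({x}, {y})"),
}


def run_plan(plan: List[Tuple[str, tuple]]) -> List[str]:
    """Execute (skill name, arguments) pairs in order: the human chooses them, the robot runs them."""
    return [SKILLS[name](*args) for name, args in plan]


log = run_plan([("MoveTo", (0.4, 0.1)), ("Pick", ("cup",))])
print(log)  # ['move end effector to (0.4, 0.1)', 'grasp cup']
```

Because the human only ever supplies a skill name and its arguments, the controller behind each entry can be swapped without changing how goals are communicated.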

In addition, NOIR can learn what humans want to achieve during collaboration. The study shows that by leveraging recent advances in foundation models, the system can adapt even from very limited data, which significantly improves its efficiency.

NOIR's key technical contributions include a modular workflow that decodes neural signals to infer human intent. Decoding a person's intended goal from neural signals is extremely challenging. To make it tractable, the team breaks human intent into three components: the object to be manipulated (What), the way to interact with it (How), and the location of the interaction (Where). Their work shows that these components can be decoded from different types of neural signals. The decomposed signals map naturally onto parameterized robot skills and can be communicated to the robot effectively.

Three human subjects successfully used NOIR to complete 20 household activities involving tabletop or mobile manipulation (including making sukiyaki, ironing clothes, playing tic-tac-toe, and petting a robot dog), controlling the robot through their brain signals alone!

Experiments show that using humans as teachers for few-shot robot learning significantly improves NOIR's efficiency. This approach of building intelligent robotic systems through collaboration driven by human brain signals has great potential for developing vital assistive technologies that improve quality of life, especially for people with disabilities.

NOIR System

The research addresses three challenges: 1. How can a general-purpose BRI system suited to a wide range of tasks be built? 2. How can the relevant communication signals be decoded from the human brain? 3. How can the robot's intelligence and adaptability be improved for more efficient collaboration? Figure 2 gives an overview of the system.

In this system, humans act as planning agents that perceive, plan, and communicate behavioral goals to the robot, while the robot uses predefined primitive skills to achieve those goals.

To achieve the overall goal of a general-purpose BRI system, these two parts must be designed to work together. To this end, the team proposed a new brain-signal decoding workflow and equipped the robot with a library of parameterized primitive skills. Finally, the team used few-shot imitation learning to make the robot's learning more efficient.

Brain: Modular decoding workflow

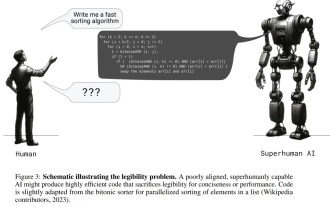

As shown in Figure 3, human intent is decomposed into three components: the object to be manipulated (What), the way to interact with the object (How), and the location of the interaction (Where).

Decoding specific user intentions from EEG signals is not easy, but it can be accomplished through steady-state visual evoked potentials (SSVEP) and motor imagery. Briefly, the process includes:

Selecting an object via steady-state visual evoked potentials (SSVEP)

Selecting the skill and its parameters via motor imagery (MI)

Confirming or interrupting a selection by tightening a muscle
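The What/How/Where steps and the confirm/interrupt signal can be pictured as a small state machine that consumes signals which have already been decoded. This is a simplified sketch under stated assumptions: the event names (`ssvep`, `mi_skill`, `mi_param`, `muscle`) and the `Intent` class are hypothetical, and NOIR's actual decoders and interface are considerably more involved.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass
class Intent:
    what: str               # object, chosen via SSVEP
    how: str                # skill, chosen via motor imagery
    where: Tuple[int, int]  # skill parameter (e.g. an image point), chosen via motor imagery


def decode_intent(events: Iterable[Tuple[str, object]]) -> Optional[Intent]:
    """Assemble a confirmed What/How/Where intent from already-decoded events.

    Hypothetical event kinds: ("ssvep", obj), ("mi_skill", skill),
    ("mi_param", (x, y)), ("muscle", "confirm") and ("muscle", "interrupt").
    """
    what = how = where = None
    for kind, value in events:
        if kind == "muscle" and value == "interrupt":
            what = how = where = None        # discard the partial selection and start over
        elif kind == "ssvep" and what is None:
            what = value                     # step 1: pick the object
        elif kind == "mi_skill" and what is not None and how is None:
            how = value                      # step 2: pick the skill
        elif kind == "mi_param" and how is not None:
            where = value                    # step 3: set (or refine) the parameter
        elif kind == "muscle" and value == "confirm" and where is not None:
            return Intent(what, how, where)  # fully specified and confirmed
    return None                              # stream ended before a confirmation


events = [
    ("ssvep", "pot"), ("mi_skill", "Pick"),
    ("muscle", "interrupt"),                 # the user changes their mind
    ("ssvep", "cup"), ("mi_skill", "Pick"),
    ("mi_param", (120, 80)), ("muscle", "confirm"),
]
print(decode_intent(events))  # Intent(what='cup', how='Pick', where=(120, 80))
```

Keeping each stage separate means a decoding error in one stage can be undone with an interrupt instead of restarting the whole task.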

Robot: Parameterized primitive skills

Parameterized primitive skills can be combined and reused across tasks to produce complex and diverse behaviors. They are also intuitive to humans. Neither the human nor the agent needs to understand how these skills are controlled, so they can be implemented with any method, as long as the implementation is robust and adapts to diverse tasks.

The team used two robots in the experiments: a Franka Emika Panda arm for tabletop manipulation tasks and a PAL Tiago robot for mobile manipulation tasks. The paper tabulates the primitive skills available to each robot.

Using Robot Learning for Efficient BRI

The modular decoding workflow and primitive skill library described above form the foundation of NOIR, but the system's efficiency can be improved further. During collaboration, the robot should learn the user's preferences in selecting items, skills, and parameters, so that in the future it can predict the goals the user wants to achieve, increase automation, and make decoding simpler. Because the position, pose, arrangement, and instances of items may differ from one execution to the next, the learning must generalize. In addition, the learning algorithms must be highly sample-efficient, because collecting human data is expensive.

The team adopted two methods for this: retrieval-based few-shot item and skill selection, and one-shot skill parameter learning.

Retrieval-based few-shot item and skill selection. This method learns latent representations of observed states. Given a newly observed state, it finds the most similar stored state in the latent space and the action associated with it. Figure 4 gives an overview of the method.

During task execution, data points consisting of images and the human-selected "item-skill" pairs are recorded. The images are first encoded by a pre-trained R3M model to extract features useful for robot manipulation, then passed through several trainable fully connected layers. These layers are trained with contrastive learning using a triplet loss, which pulls images with the same item-skill label closer together in the latent space. The learned image embeddings and their item-skill labels are stored in memory.
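The contrastive objective described above can be illustrated with the standard triplet margin loss on embedding vectors. In this NumPy sketch the embeddings are plain arrays standing in for R3M features passed through the trainable layers, the `margin` of 1.0 is an arbitrary choice, and triplets are sampled at random from the item-skill labels; the paper's exact architecture, margin, and sampling scheme are not given here, and no training loop is shown.

```python
import numpy as np


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Mean triplet margin loss over rows of (batch, dim) embedding arrays.

    Zero once each anchor is closer to its positive (same item-skill label)
    than to its negative (different label) by at least `margin`.
    """
    d_pos = np.linalg.norm(anchor - positive, axis=1)
    d_neg = np.linalg.norm(anchor - negative, axis=1)
    return float(np.mean(np.maximum(0.0, d_pos - d_neg + margin)))


def sample_triplets(labels, rng):
    """For each sample, draw a random positive and a random negative index by label."""
    labels = np.asarray(labels)
    idx = np.arange(len(labels))
    anchors, positives, negatives = [], [], []
    for i, lab in enumerate(labels):
        pos = np.flatnonzero((labels == lab) & (idx != i))
        neg = np.flatnonzero(labels != lab)
        if len(pos) and len(neg):  # skip samples with no valid partner
            anchors.append(i)
            positives.append(rng.choice(pos))
            negatives.append(rng.choice(neg))
    return np.array(anchors), np.array(positives), np.array(negatives)


# Hypothetical usage: `emb` stands in for R3M features after the trainable layers.
rng = np.random.default_rng(0)
labels = ["cup|Pick", "cup|Pick", "pot|Pour", "pot|Pour"]
emb = rng.standard_normal((4, 8))
a, p, n = sample_triplets(labels, rng)
print(triplet_loss(emb[a], emb[p], emb[n]))
```

In a real setup this loss would be minimized with respect to the fully connected layers' weights, for example with an autodiff framework.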

At test time, the model retrieves the nearest stored data point in the latent space and suggests its associated item-skill pair to the human.
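The test-time step amounts to a nearest-neighbor lookup over the stored embeddings. A minimal sketch, assuming Euclidean distance and embeddings already produced by the trained encoder; the `RetrievalMemory` class and the example labels are hypothetical.

```python
import numpy as np


class RetrievalMemory:
    """Stores (embedding, item-skill label) pairs; suggests the label of the nearest one."""

    def __init__(self):
        self._keys = []
        self._labels = []

    def add(self, embedding, label):
        self._keys.append(np.asarray(embedding, dtype=float))
        self._labels.append(label)

    def suggest(self, query):
        """Return the label of the stored embedding closest (Euclidean) to `query`, or None."""
        if not self._keys:
            return None
        keys = np.stack(self._keys)
        dists = np.linalg.norm(keys - np.asarray(query, dtype=float), axis=1)
        return self._labels[int(np.argmin(dists))]


# Hypothetical 2-D embeddings; real ones would come from the trained encoder.
memory = RetrievalMemory()
memory.add([0.0, 0.0], ("pasta", "Pick"))
memory.add([5.0, 5.0], ("pot", "Pour"))
print(memory.suggest([4.5, 5.2]))  # ('pot', 'Pour')
```

The suggestion is only a proposal; the human can still accept or override it through the decoding workflow.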

One-shot skill parameter learning. Parameter selection demands a great deal of human effort, because it requires precise cursor control through motor imagery (MI). To reduce this effort, the team proposed a learning algorithm that, given an item-skill pair, predicts the parameters and uses them as the starting point for cursor control. Suppose the user has already pinpointed the exact keypoint for grasping a cup handle: should they have to specify this parameter again in the future? Recent foundation models such as DINOv2 have made great progress and can find corresponding semantic keypoints, removing the need to specify the parameter again.

Compared with previous work, the proposed algorithm is one-shot and predicts specific 2D points rather than semantic regions. As shown in Figure 4, given a training image (360 × 240) and a parameter selection (x, y), the model predicts the semantically corresponding points in different test images. Specifically, the team used a pre-trained DINOv2 model to obtain semantic features.
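One simple way to transfer a single annotated point is nearest-neighbor matching of patch features by cosine similarity. The sketch below assumes both images have already been converted into (h, w, d) feature grids, for example by a pretrained backbone such as DINOv2, and that pixel coordinates map proportionally onto the grid; `transfer_keypoint` is a hypothetical helper, and this plain matching may differ from the algorithm the team actually uses.

```python
import numpy as np


def transfer_keypoint(train_feats, test_feats, xy, image_size=(360, 240)):
    """Map a pixel (x, y) chosen in a training image to the best-matching pixel in a test image.

    train_feats, test_feats: (h, w, d) grids of patch features, assumed to come from a
    pretrained backbone such as DINOv2. image_size: (width, height) of both images.
    """
    width, height = image_size
    h, w, _ = train_feats.shape
    # Find the training-image patch that contains the selected pixel.
    col = min(int(xy[0] * w / width), w - 1)
    row = min(int(xy[1] * h / height), h - 1)
    query = train_feats[row, col]
    query = query / (np.linalg.norm(query) + 1e-12)

    th, tw, d = test_feats.shape
    flat = test_feats.reshape(-1, d)
    flat = flat / (np.linalg.norm(flat, axis=1, keepdims=True) + 1e-12)
    best = int(np.argmax(flat @ query))  # highest cosine similarity
    r, c = divmod(best, tw)
    # Report the centre of the best-matching test patch in pixel coordinates.
    return ((c + 0.5) * width / tw, (r + 0.5) * height / th)


# Toy check: the test "image" is the training grid shifted by one patch down and right.
train = np.eye(16).reshape(4, 4, 16)
test = np.roll(train, shift=(1, 1), axis=(0, 1))
print(transfer_keypoint(train, test, (50, 30)))  # (135.0, 90.0)
```

The predicted point would then seed the MI-controlled cursor, so the user only has to make small corrections rather than steer from scratch.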

Experiments and results

Tasks. The tasks selected for the experiments come from the BEHAVIOR and Activities of Daily Living benchmarks and reflect everyday human needs to a certain extent. Figure 1 shows the experimental tasks: 16 tabletop tasks and 4 mobile manipulation tasks.

Examples of the experimental process include making a sandwich and caring for a COVID-19 patient.

Experimental process. During the experiment, the user stayed in an isolated room, remained still, watched the robot on the screen, and relied solely on brain signals to communicate with the robot.

System performance. Table 1 summarizes the system performance under two metrics: the number of attempts before success and the time to complete the task upon success.

Despite the long horizons and difficulty of these tasks, NOIR achieved very encouraging results: on average, a task took only 1.83 attempts to complete.

Decoding accuracy. The accuracy of brain-signal decoding is key to NOIR's success. Table 2 summarizes decoding accuracy at each stage. SSVEP decoding based on canonical correlation analysis (CCA) reaches a high accuracy of 81.2%, which means item selection is generally accurate.
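For reference, the textbook CCA approach to SSVEP works as follows: each candidate object flickers at its own frequency, sine and cosine reference signals are generated at that frequency and its harmonics, and the frequency whose references have the highest canonical correlation with the multichannel EEG is selected. This NumPy sketch assumes that standard setup; the channel count, window length, number of harmonics, and frequencies in the synthetic example are arbitrary and not taken from the paper.

```python
import numpy as np


def max_canonical_corr(X, Y):
    """Largest canonical correlation between the columns of X and Y (rows are samples)."""
    qx, _ = np.linalg.qr(X - X.mean(axis=0))
    qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    # Canonical correlations are the singular values of Qx^T Qy.
    s = np.linalg.svd(qx.T @ qy, compute_uv=False)
    return float(min(s[0], 1.0))


def reference_signals(freq, n_samples, fs, n_harmonics=2):
    """Sine/cosine references at `freq` and its harmonics, shape (n_samples, 2 * n_harmonics)."""
    t = np.arange(n_samples) / fs
    cols = []
    for k in range(1, n_harmonics + 1):
        cols.append(np.sin(2 * np.pi * k * freq * t))
        cols.append(np.cos(2 * np.pi * k * freq * t))
    return np.column_stack(cols)


def classify_ssvep(eeg, freqs, fs, n_harmonics=2):
    """Return (index of best-matching flicker frequency, per-frequency scores) for (samples, channels) EEG."""
    n = eeg.shape[0]
    scores = [max_canonical_corr(eeg, reference_signals(f, n, fs, n_harmonics)) for f in freqs]
    return int(np.argmax(scores)), scores


# Synthetic example: 2 s of 4-channel "EEG" dominated by a 10 Hz response plus noise.
fs = 250
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(0)
eeg = np.sin(2 * np.pi * 10 * t[:, None] + np.array([0.0, 0.5, 1.0, 1.5]))
eeg += 0.5 * rng.standard_normal(eeg.shape)
best, scores = classify_ssvep(eeg, [8.0, 10.0, 12.0, 15.0], fs)
print(best)  # 1, i.e. the 10 Hz target
```

Since the winning frequency identifies the flickering object, this is how an SSVEP decoder answers the "What" question.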

Item and skill selection results. Can the proposed robot learning algorithms improve NOIR's efficiency? The researchers first evaluated item and skill selection learning. To do so, they collected an offline dataset for the MakePasta task, with 15 training samples for each item-skill pair. A prediction for an image counts as correct only when both the item and the skill are predicted correctly. The results are shown in Table 3.
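This scoring rule is stricter than per-label accuracy: a prediction earns credit only if the item and the skill are both right. A small sketch of that metric; the function name and sample labels are illustrative, not taken from the paper.

```python
from typing import Sequence, Tuple


def joint_accuracy(predictions: Sequence[Tuple[str, str]],
                   targets: Sequence[Tuple[str, str]]) -> float:
    """Fraction of samples whose predicted item AND skill both match the ground truth."""
    if len(predictions) != len(targets) or not targets:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(p_item == t_item and p_skill == t_skill
               for (p_item, p_skill), (t_item, t_skill) in zip(predictions, targets))
    return hits / len(targets)


# Illustrative labels only: the second prediction has the right item but the wrong skill.
preds = [("pot", "Pick"), ("pasta", "Pour"), ("pot", "Pour")]
truth = [("pot", "Pick"), ("pasta", "Pick"), ("pot", "Pour")]
print(joint_accuracy(preds, truth))  # 0.6666666666666666
```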

A simple image-classification model using ResNet achieves an average accuracy of 0.31, whereas the new method, built on a pre-trained ResNet backbone, reaches a much higher 0.73, highlighting the importance of contrastive learning and retrieval-based learning.

Results of one-shot parameter learning. The researchers compared the new algorithm with several baselines on a pre-collected dataset. Table 4 gives the MSE of the predictions.

They also demonstrated the effectiveness of the parameter learning algorithm in actual task execution on the SetTable task. Figure 5 shows the human effort saved in controlling cursor movement.
