Large language model evaluation metrics

What are the most widely used and reliable metrics for evaluating large language models?

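The most widely used automatic metrics for evaluating text produced by large language models (LLMs) are reference-based metrics, which compare the model's output with human-written reference texts. They are used especially in machine translation and summarization: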

- BLEU (Bilingual Evaluation Understudy): BLEU measures how closely a generated text matches one or more reference texts. It computes modified (clipped) n-gram precision for n = 1 to 4 and combines the four precisions with a geometric mean. It then multiplies the result by a brevity penalty so that overly short outputs are not rewarded.

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation): ROUGE measures how much of the reference content appears in the generated text. ROUGE-N computes n-gram recall, most often with n = 1 or 2. ROUGE-L scores the generated text by its longest common subsequence (LCS) with the reference. Many implementations report an F-measure rather than recall alone.

- METEOR (Metric for Evaluation of Translation with Explicit Ordering): METEOR aligns the generated text with the reference at the word level. It combines unigram precision and recall, weighted toward recall, with a fragmentation penalty for matches that appear out of order. Besides exact matches, it can match word stems, synonyms, and, in later versions, paraphrases.

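- NIST (named after the U.S. National Institute of Standards and Technology): NIST is a variant of BLEU for machine translation. Instead of treating all n-grams equally, it weights each matched n-gram by its information content, so rarer n-grams count for more. It also averages the n-gram scores arithmetically rather than geometrically and uses a modified brevity penalty.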
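
The BLEU computation described above can be sketched in Python. This is a minimal sentence-level version built on several assumptions. Inputs are already tokenized into word lists, all n-gram orders get equal weight, and no smoothing is applied, so the score drops to zero whenever some n-gram order has no match at all. The names `ngrams` and `bleu` are illustrative helpers, not a library API.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, references, max_n=4):
    """Sentence-level BLEU sketch: uniform weights, no smoothing.

    candidate: list of tokens; references: list of token lists.
    """
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand_counts = ngrams(candidate, n)
        # Clip each candidate n-gram count at its maximum count in any single reference.
        max_ref_counts = Counter()
        for ref in references:
            for gram, count in ngrams(ref, n).items():
                max_ref_counts[gram] = max(max_ref_counts[gram], count)
        clipped = sum(min(count, max_ref_counts[gram]) for gram, count in cand_counts.items())
        total = sum(cand_counts.values())
        if clipped == 0 or total == 0:
            return 0.0  # unsmoothed BLEU is zero if any order has no matches
        # Geometric mean of the precisions = exp of the mean of their logs.
        log_precision_sum += math.log(clipped / total) / max_n

    # Brevity penalty against the reference length closest to the candidate
    # length (ties go to the shorter reference).
    c = len(candidate)
    r = min((abs(len(ref) - c), len(ref)) for ref in references)[1]
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(log_precision_sum)
```

In practice BLEU is usually reported at the corpus level, where clipped counts and lengths are summed over all sentences before the precisions and brevity penalty are computed. Maintained tools such as sacreBLEU do this and also standardize tokenization, so that scores can be compared across papers.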

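These metrics are well established in the NLP community. They provide a quantitative, reproducible measure of how closely an LLM's output matches reference texts on tasks such as machine translation, summarization, and other forms of text generation. Because they reward surface overlap with the references, however, they can undervalue correct outputs that are phrased differently.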
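
The two ROUGE variants mentioned in the list above, ROUGE-N and ROUGE-L, can be sketched as follows. This is a simplified illustration that assumes pre-tokenized input, a single reference, and no stemming or stopword handling. The function names are hypothetical. Reference implementations such as Google's `rouge-score` package add their own tokenization and optional Porter stemming.

```python
from collections import Counter


def rouge_n_recall(candidate, reference, n=2):
    """ROUGE-N recall: overlapping n-grams divided by n-grams in the reference."""
    def grams(tokens):
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    cand, ref = grams(candidate), grams(reference)
    overlap = sum((cand & ref).values())  # Counter '&' keeps the minimum count per n-gram
    total = sum(ref.values())
    return overlap / total if total else 0.0


def lcs_length(a, b):
    """Length of the longest common subsequence, via row-by-row dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            # Extend a match diagonally, or carry over the best score from above/left.
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate, reference, beta=1.0):
    """ROUGE-L F-measure built from LCS-based precision and recall."""
    lcs = lcs_length(candidate, reference)
    if lcs == 0:
        return 0.0
    p, r = lcs / len(candidate), lcs / len(reference)
    return (1 + beta**2) * p * r / (r + beta**2 * p)
```

Take "the cat sat on the mat" scored against the reference "the cat lay on the mat". It recovers 5 of the 6 reference unigrams but only 3 of the 5 reference bigrams. This shows how higher-order ROUGE-N is more sensitive to local word changes than unigram recall.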

How do different evaluation metrics capture the performance of LLMs across various NLP tasks?

Different evaluation metrics capture the performance of LLMs across various NLP tasks in different ways:

- BLEU: BLEU is primarily used to evaluate machine translation output. Unigram matches indicate whether the translation contains the right words (adequacy). Matches of longer n-grams reward correct local word order, which loosely tracks fluency.

- ROUGE: ROUGE is most often used to evaluate summarization and other generation tasks. Because it is recall-oriented, it measures how much of the reference content the generated text covers, which makes it a proxy for informativeness. It does not directly measure coherence.

- METEOR: METEOR was designed for machine translation but is also used for other generation tasks, such as image captioning. It balances precision and recall, matches stems and synonyms, and penalizes fragmented word order. As a result, it tends to correlate better with human judgments than BLEU on individual sentences.

- NIST: NIST is designed for evaluating machine translation output. It differs from BLEU mainly in how it weights matches. Informative, less frequent n-grams contribute more to the score than common ones such as "of the", so a system gains less credit for matching frequent function-word sequences.
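
The information weighting that distinguishes NIST from BLEU can be sketched as follows, based on the metric's published definition. An n-gram's weight is log2 of how often its (n-1)-word prefix occurs in the reference data, divided by how often the full n-gram occurs. For unigrams, the total word count is the numerator. An n-gram that almost always follows its prefix therefore carries little information. This sketch computes only the weights and assumes tokenized references and n-grams up to length 5. The full metric also sums these weights over matched n-grams and applies NIST's own brevity penalty, both omitted here. The function name is illustrative.

```python
import math
from collections import Counter


def nist_info_weights(references, max_n=5):
    """Compute NIST-style information weights for n-grams in tokenized references.

    Info(w1..wn) = log2(count(w1..w_{n-1}) / count(w1..wn)), with counts taken
    over all references; for unigrams the numerator is the total word count.
    """
    counts = Counter()
    total_words = 0
    for ref in references:
        total_words += len(ref)
        for n in range(1, max_n + 1):
            for i in range(len(ref) - n + 1):
                counts[tuple(ref[i:i + n])] += 1

    weights = {}
    for gram, count in counts.items():
        # Prefix count: total words for unigrams, otherwise the (n-1)-gram count.
        prefix_count = total_words if len(gram) == 1 else counts[gram[:-1]]
        weights[gram] = math.log2(prefix_count / count)
    return weights
```

Consider the references "the cat sat" and "the dog sat". The frequent word "the" gets weight log2(3), while the rarer "cat" gets log2(6). The bigram "cat sat" gets weight 0 because "cat" is always followed by "sat" in this data.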

What are the limitations and challenges associated with current evaluation methods for LLMs?

Current evaluation methods for LLMs have several limitations and challenges:

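- Subjectivity: Human evaluation, often treated as the gold standard, is subjective and can be inconsistent across annotators. Automatic metrics inherit part of this problem because they depend on human-written references, which represent only a few of the many acceptable outputs.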

- Lack of diversity: Most evaluation metrics focus on a limited set of evaluation criteria, such as fluency, accuracy, and informativeness. This can overlook other important aspects of LLM performance, such as bias, fairness, and social impact.

- Difficulty in capturing qualitative aspects: Evaluation metrics are primarily quantitative and may not fully capture the qualitative aspects of LLM performance, such as creativity, style, and tone.

- Limited generalization: Evaluation metrics are often task-specific and may not generalize well to different NLP tasks or domains.

These limitations and challenges highlight the need for developing more comprehensive and robust evaluation methods for LLMs that can better capture their capabilities and societal impact.

The above is the detailed content of Large language model evaluation metrics. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Meta's Llama 3.2: A Leap Forward in Multimodal and Mobile AI Meta recently unveiled Llama 3.2, a significant advancement in AI featuring powerful vision capabilities and lightweight text models optimized for mobile devices. Building on the success o

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

The article reviews top AI voice generators like Google Cloud, Amazon Polly, Microsoft Azure, IBM Watson, and Descript, focusing on their features, voice quality, and suitability for different needs.

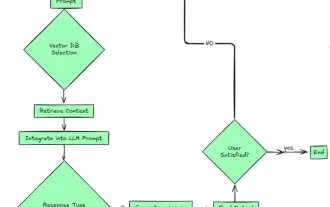

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

2024 witnessed a shift from simply using LLMs for content generation to understanding their inner workings. This exploration led to the discovery of AI Agents – autonomous systems handling tasks and decisions with minimal human intervention. Buildin

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

This week's AI landscape: A whirlwind of advancements, ethical considerations, and regulatory debates. Major players like OpenAI, Google, Meta, and Microsoft have unleashed a torrent of updates, from groundbreaking new models to crucial shifts in le