What is a Load Balancer & How Does It Distribute Incoming Requests?

In web applications and distributed systems, load balancers play a central role in performance, high availability, and scalability. This guide covers what a load balancer does, how it distributes traffic, how to configure one with Nginx, and how health checks, session persistence, and SSL/TLS termination fit in, followed by common issues and troubleshooting techniques. Whether you're a beginner learning the basics or an experienced developer tuning your system architecture, it should give you a working picture of load balancing.

What is a Load Balancer?

A load balancer is a hardware device or software component that distributes network or application traffic across multiple servers. By spreading requests across the pool, it keeps any single server from being overwhelmed, which improves the reliability and performance of your application.

Purpose and Functionality

Acting as a traffic cop for your application, a load balancer sits between clients and a pool of servers and decides which server handles each incoming request, so that no single server bears too much demand. Spreading the workload this way helps to:

- Improve application responsiveness

- Increase availability and reliability

- Prevent server overload

- Facilitate scaling of applications

How Load Balancers Distribute Traffic

Load balancers use various algorithms to determine how to distribute incoming requests. Some common methods include:

- Round Robin: Requests are distributed sequentially to each server in turn.

- Least Connections: Traffic is sent to the server with the fewest active connections.

- IP Hash: A hash of the client's IP address determines which server receives the request, so a given client keeps reaching the same server as long as the server pool does not change.

- Weighted Round Robin: Servers are assigned different weights based on their capabilities, influencing the distribution of requests.
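The four methods above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular load balancer implements them: the function names are made up for this example, the weighted variant simply repeats servers in the cycle (production balancers usually interleave them more smoothly), and the IP hash uses SHA-256 only because it is stable across runs.

```python
import hashlib
import itertools
from typing import Dict, Iterator, List


def round_robin(servers: List[str]) -> Iterator[str]:
    """Yield servers in order, wrapping around forever."""
    return itertools.cycle(servers)


def weighted_round_robin(weights: Dict[str, int]) -> Iterator[str]:
    """Naive weighting: each server appears `weight` times per cycle."""
    expanded = [name for name, weight in weights.items() for _ in range(weight)]
    return itertools.cycle(expanded)


def least_connections(active: Dict[str, int]) -> str:
    """Pick the server with the fewest active connections (first one wins ties)."""
    return min(active, key=active.get)


def ip_hash(client_ip: str, servers: List[str]) -> str:
    """Map a client IP to a server using a stable hash of the address."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


if __name__ == "__main__":
    pool = ["server1", "server2", "server3"]
    rr = round_robin(pool)
    print([next(rr) for _ in range(4)])
    print(least_connections({"server1": 12, "server2": 4, "server3": 9}))
    print(ip_hash("203.0.113.7", pool))
```

Note that with this simple modulo mapping, adding or removing a server changes the result for many clients, which is why IP hash only keeps a client on the same server while the pool is stable.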

Client Request

|

Load Balancer Receives Request

|

Select Appropriate Server (Based on Algorithm)

|

Forward Request to Selected Server

|

Server Processes Request

|

Send Response Back to Client

Basic Configuration

Let’s set up a simple load balancer using Nginx, a popular open-source web server and reverse proxy that can also act as a load balancer.

Install Nginx:

```bash
sudo apt-get update
sudo apt-get install nginx
```

Configure Nginx as a Load Balancer:

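Edit the nginx.conf file (typically /etc/nginx/nginx.conf on Debian and Ubuntu) so that its http block includes the following upstream and server definitions:

```nginx
http {
    # Pool of backend servers; Nginx uses round robin by default.
    upstream backend {
        server server1.example.com;
        server server2.example.com;
        server server3.example.com;
    }

    server {
        listen 80;

        location / {
            # Forward every request to a server chosen from the pool.
            proxy_pass http://backend;
        }
    }
}
```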

Test the Load Balancer:

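- Check the configuration with sudo nginx -t, then restart Nginx so it picks up the change: sudo service nginx restart

- Send several requests to your load balancer’s IP address. Each backend's access log should show the requests being distributed across server1.example.com, server2.example.com, and server3.example.com.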
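One way to check the distribution from the client side is to send a batch of requests and count which backend answered each one. The sketch below assumes each backend identifies itself in an `X-Backend` response header; that header is hypothetical and would have to be added to the backends yourself, and the URL is a placeholder for your load balancer's address.

```python
import urllib.request
from collections import Counter
from typing import Callable


def tally_backends(fetch: Callable[[], str], requests: int) -> Counter:
    """Call `fetch` repeatedly and count how often each backend name comes back."""
    return Counter(fetch() for _ in range(requests))


def fetch_backend_name(url: str = "http://localhost/") -> str:
    """Hypothetical fetcher: assumes backends set an X-Backend response header."""
    with urllib.request.urlopen(url, timeout=5) as response:
        return response.headers.get("X-Backend", "unknown")


if __name__ == "__main__":
    # With three equally weighted backends and round robin,
    # the three counts should come out roughly equal.
    print(tally_backends(fetch_backend_name, 30))
```

Keeping the counting separate from the fetching means the same tally can be run against a stub function when no load balancer is available.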

Configuration Process

- Choose Your Load Balancer: Select a hardware device or software solution based on your needs.

- Define Backend Servers: Specify the pool of servers that will receive traffic.

- Configure Listening Ports: Set up the ports on which the load balancer will receive incoming traffic.

- Set Up Routing Rules: Define how traffic should be distributed to backend servers.

- Configure Health Checks: Implement checks to ensure backend servers are operational.

Essential Configuration Settings

- Load Balancing Algorithm: Choose the method for distributing traffic (e.g., round robin, least connections).

- Session Persistence: Decide if and how to maintain user sessions on specific servers.

- SSL/TLS Settings: Configure certificates and encryption settings if terminating SSL/TLS at the load balancer.

- Logging and Monitoring: Set up logging to track performance and troubleshoot issues.
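To make the settings above concrete, they can be pictured as one configuration object. This is only a sketch: the class names, field names, and defaults (including the `/health` path) are invented for illustration and do not correspond to any particular load balancer's configuration format.

```python
from dataclasses import dataclass, field
from typing import List, Optional

ALGORITHMS = {"round_robin", "least_connections", "ip_hash", "weighted_round_robin"}


@dataclass
class HealthCheck:
    path: str = "/health"            # hypothetical endpoint on each backend
    interval_seconds: float = 5.0    # how often to probe
    failures_before_down: int = 3    # consecutive failures before removal


@dataclass
class LoadBalancerConfig:
    backends: List[str]                      # pool of servers receiving traffic
    listen_port: int = 80                    # port for incoming traffic
    algorithm: str = "round_robin"           # routing rule
    sticky_sessions: bool = False            # session persistence on or off
    tls_certificate: Optional[str] = None    # set only when terminating TLS here
    health_check: HealthCheck = field(default_factory=HealthCheck)

    def validate(self) -> None:
        """Raise ValueError if the configuration is obviously unusable."""
        if not self.backends:
            raise ValueError("at least one backend server is required")
        if not 1 <= self.listen_port <= 65535:
            raise ValueError("listen_port must be between 1 and 65535")
        if self.algorithm not in ALGORITHMS:
            raise ValueError(f"unknown algorithm: {self.algorithm}")


if __name__ == "__main__":
    config = LoadBalancerConfig(
        backends=["server1.example.com", "server2.example.com"],
        algorithm="least_connections",
    )
    config.validate()
    print(config)
```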

Server Health Checks

- Periodic Probes: The load balancer sends requests to backend servers at regular intervals.

- Response Evaluation: It assesses the responses to determine if the server is healthy.

- Customizable Checks: Health checks can be as simple as a ping or as complex as requesting a specific page and evaluating the content.

Handling Failed Health Checks

When a server fails a health check:

- The load balancer removes it from the pool of active servers.

- Traffic is redirected to healthy servers.

- The load balancer continues to check the failed server and reintroduces it to the pool when it passes health checks again.
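The remove-and-reintroduce cycle described above can be sketched as a small state tracker. The class and its `fall` and `rise` thresholds are assumptions made for this example: a server leaves rotation after `fall` consecutive failed probes and returns after `rise` consecutive successful ones, which avoids flapping on a single bad response. The `probe` callable stands in for whatever check you use, from a ping to a page request.

```python
from typing import Callable, Dict, List


class HealthTracker:
    """Tracks which backends are in rotation based on consecutive probe results."""

    def __init__(self, servers: List[str], probe: Callable[[str], bool],
                 fall: int = 3, rise: int = 2) -> None:
        self.servers = list(servers)
        self.probe = probe    # returns True when a server responds correctly
        self.fall = fall      # consecutive failures before removal
        self.rise = rise      # consecutive successes before reintroduction
        self.healthy: Dict[str, bool] = {s: True for s in self.servers}
        self.streak: Dict[str, int] = {s: 0 for s in self.servers}

    def check_all(self) -> None:
        """Run one round of probes; call this at a regular interval."""
        for server in self.servers:
            passed = self.probe(server)
            if passed == self.healthy[server]:
                self.streak[server] = 0     # result agrees with current state
                continue
            self.streak[server] += 1        # result contradicts current state
            threshold = self.rise if passed else self.fall
            if self.streak[server] >= threshold:
                self.healthy[server] = passed
                self.streak[server] = 0

    def in_rotation(self) -> List[str]:
        """Servers that should currently receive traffic."""
        return [s for s in self.servers if self.healthy[s]]
```

A routing algorithm would then choose only from `in_rotation()`, so traffic moves to healthy servers without any change on the client side.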

Session Persistence

Session persistence, also known as sticky sessions, ensures that a client's requests are routed to the same backend server for the duration of a session.

When to Use Session Persistence

- Stateful Applications: When your application maintains state on the server.

- Shopping Carts: To ensure a user's cart remains consistent during their session.

- Progressive Workflows: For multi-step processes where state needs to be maintained.

When to Avoid Session Persistence

- Stateless Applications: When your application doesn't rely on server-side state.

- Highly Dynamic Content: For applications where any server can handle any request equally well.

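- When Scaling is a Priority: Sticky sessions can complicate scaling and server maintenance, because existing clients stay pinned to their original servers when new ones are added, and taking a server out of service disrupts the sessions tied to it.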
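Cookie-based stickiness is one common way to implement session persistence, and the idea can be sketched as follows. The cookie name and the `StickyRouter` class are hypothetical, and the cookie here stores the server name in plain text for readability; a real deployment would more likely use an opaque or signed identifier.

```python
import itertools
from typing import Dict, List, Optional, Tuple

COOKIE_NAME = "lb_server"  # hypothetical cookie name


class StickyRouter:
    """Round robin for new clients, pinned server for returning ones."""

    def __init__(self, servers: List[str]) -> None:
        self.servers = list(servers)
        self._next = itertools.cycle(self.servers)

    def route(self, cookies: Dict[str, str]) -> Tuple[str, Optional[str]]:
        """Return (server, cookie value to set); the second item is None
        when the client already carries a cookie for a known server."""
        pinned = cookies.get(COOKIE_NAME)
        if pinned in self.servers:
            return pinned, None
        server = next(self._next)   # new client, or its server left the pool
        return server, server
```

If the pinned server is no longer in the pool, the client is assigned a new one and whatever state lived only on the old server is lost, which is the scaling and maintenance cost noted above.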

SSL/TLS Termination

SSL/TLS termination is the process of decrypting encrypted traffic at the load balancer before passing it to backend servers.

Importance of SSL/TLS Termination

- Reduced Server Load: Offloads the computationally expensive task of encryption/decryption from application servers.

- Centralized SSL Management: Simplifies certificate management by centralizing it at the load balancer.

- Enhanced Security: Allows the load balancer to inspect and filter HTTPS traffic.

Configuring SSL/TLS Termination

- Install SSL certificates on the load balancer.

- Configure the load balancer to listen on HTTPS ports (usually 443).

- Set up backend communication, which can be either encrypted or unencrypted, depending on your security requirements.
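As a rough illustration of these steps, the sketch below uses Python's standard `ssl` module to build the server-side TLS context a terminating proxy would listen with, plus the headers it might add so backends still know the original client address and scheme. The file paths passed to `build_tls_context` are placeholders, the function names are invented for this example, and `X-Forwarded-For` and `X-Forwarded-Proto` are widely used conventions rather than something every load balancer sets by default.

```python
import ssl
from typing import Dict


def build_tls_context(cert_file: str, key_file: str) -> ssl.SSLContext:
    """Server-side TLS context for the load balancer's HTTPS listener."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    context.minimum_version = ssl.TLSVersion.TLSv1_2   # refuse older protocols
    context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context


def forwarding_headers(client_ip: str, prior_forwarded_for: str = "") -> Dict[str, str]:
    """Headers to add when passing a decrypted request to a backend."""
    if prior_forwarded_for:
        chain = f"{prior_forwarded_for}, {client_ip}"
    else:
        chain = client_ip
    return {"X-Forwarded-For": chain, "X-Forwarded-Proto": "https"}
```

Without headers like these, a backend behind a terminating load balancer sees every request as plain HTTP coming from the load balancer's own address.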

Common Issues and Troubleshooting

- Uneven Load Distribution: Some servers receiving disproportionately more traffic than others.

- Session Persistence Problems: Users losing their session data or being routed to incorrect servers.

- SSL Certificate Issues: Expired or misconfigured certificates causing connection problems.

- Health Check Failures: Overly aggressive or poorly configured health checks marking healthy servers as down.

- Performance Bottlenecks: The load balancer itself becoming a bottleneck under high traffic.

Troubleshooting Techniques

- Log Analysis: Examine load balancer and server logs to identify patterns or anomalies.

- Monitoring Tools: Use comprehensive monitoring solutions to track performance metrics and identify issues.

- Testing: Regularly perform load testing to ensure your setup can handle expected traffic volumes.

- Configuration Review: Periodically review and optimize load balancer settings.

- Network Analysis: Use tools like tcpdump or Wireshark to analyze network traffic for issues.
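For the uneven-distribution case in particular, log analysis often comes down to counting requests per backend and comparing each share with what an even split would give. The helper below is a minimal sketch under that assumption; the function name and the 25% default tolerance are arbitrary, and it does not apply as written if servers are deliberately weighted differently.

```python
from typing import Dict, List


def overloaded_servers(request_counts: Dict[str, int],
                       tolerance: float = 0.25) -> List[str]:
    """Return servers whose share of requests exceeds an even share
    by more than `tolerance` (0.25 means 25% above the even share)."""
    total = sum(request_counts.values())
    if total == 0:
        return []
    fair_share = 1 / len(request_counts)
    return [name for name, count in request_counts.items()
            if count / total > fair_share * (1 + tolerance)]


if __name__ == "__main__":
    # Counts would normally be extracted from load balancer or backend logs.
    print(overloaded_servers({"server1": 700, "server2": 150, "server3": 150}))
```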

Conclusion

Load balancers are indispensable tools in modern system architecture, providing the foundation for scalable, reliable, and high-performance applications. By distributing traffic efficiently, facilitating scaling, and improving fault tolerance, load balancers play a crucial role in ensuring optimal user experiences.

As you implement and manage load balancers in your infrastructure, remember that the key to success lies in understanding your application's specific needs, choosing the right type of load balancer, and continually monitoring and optimizing your setup. With the knowledge gained from this guide, you're well-equipped to leverage load balancers effectively in your system architecture.

The above is the detailed content of What is a Load Balancer & How Does It Distribute Incoming Requests?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1422

1422

52

52

1316

1316

25

25

1267

1267

29

29

1239

1239

24

24

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

JavaScript is the cornerstone of modern web development, and its main functions include event-driven programming, dynamic content generation and asynchronous programming. 1) Event-driven programming allows web pages to change dynamically according to user operations. 2) Dynamic content generation allows page content to be adjusted according to conditions. 3) Asynchronous programming ensures that the user interface is not blocked. JavaScript is widely used in web interaction, single-page application and server-side development, greatly improving the flexibility of user experience and cross-platform development.

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

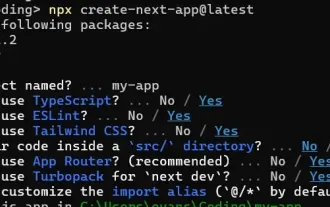

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.