With today's AI advancements, it's easy to set up a generative AI model on your own computer and build a chatbot around it.

In this article we will see how you can set up a chatbot on your system using Ollama and Next.js.

Let's start by setting up Ollama on our system. Visit ollama.com and download it for your OS. This makes the ollama command available in the terminal/command prompt.

Check the installed Ollama version with the command ollama -v.

Check out the list of available models on the Ollama library page.

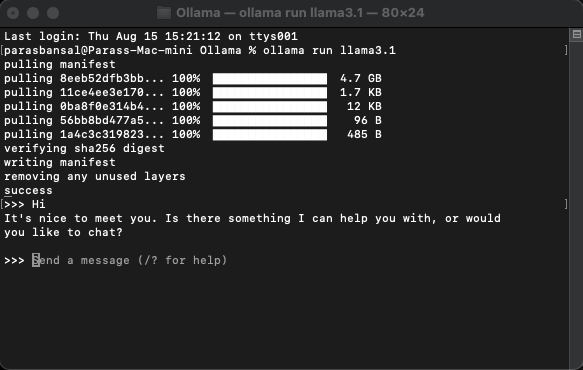

To download and run a model, run the command ollama run followed by the model name.

Example: ollama run llama3.1 or ollama run gemma2

You will be able to chat with the model right in the terminal.
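The terminal chat is served by a local Ollama process that, by default, also listens for HTTP requests on port 11434, and that is what the Next.js app ends up talking to. As a rough sketch of what such a request looks like, the hypothetical helper below (buildChatRequest is not part of any library) assembles a non-streaming call to Ollama's /api/chat endpoint. The default address is an assumption about your setup.

```typescript
// One chat turn, in the shape Ollama's /api/chat endpoint accepts.
type ChatMessage = { role: "system" | "user" | "assistant"; content: string };

// Assumption: Ollama is running locally on its default port.
const OLLAMA_BASE_URL = "http://localhost:11434";

// Hypothetical helper: builds the URL and fetch options for a chat request.
function buildChatRequest(model: string, messages: ChatMessage[]) {
  return {
    url: `${OLLAMA_BASE_URL}/api/chat`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      // stream: false asks for a single JSON reply instead of a stream of chunks.
      body: JSON.stringify({ model, messages, stream: false }),
    },
  };
}

// Usage (needs a running Ollama and a pulled model):
//   const req = buildChatRequest("llama3.1", [{ role: "user", content: "Hello" }]);
//   const res = await fetch(req.url, req.init);
//   const data = await res.json(); // the reply text is in data.message.content
```

We won't call this endpoint by hand in the rest of the article; the packages installed next do it for us.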

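Inside your Next.js project, a few npm packages need to be installed to use Ollama.

To install these dependencies, run npm i ai ollama ollama-ai-provider. The page below also imports react-markdown, so install it too (npm i react-markdown) if it is not already in your project.

Under src/app there is a file named page.tsx.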

Let's remove everything in it and start with the basic functional component:

src/app/page.tsx

    export default function Home() {
      return (
        <main className="flex min-h-screen flex-col items-center justify-start p-24">
          {/* Code here... */}
        </main>
      );
    }

Let's start by importing the useChat hook from ai/react and the Markdown component from react-markdown:

    "use client";

    import { useChat } from "ai/react";
    import Markdown from "react-markdown";

Because we are using a hook, we need to convert this page to a client component, which is what the "use client" directive at the top of the file does.

Tip: To limit client component usage, you can create a separate component for the chat and render it in page.tsx.

In the component, get messages, input, handleInputChange and handleSubmit from the useChat hook.

const { messages, input, handleInputChange, handleSubmit } = useChat();

In JSX, create an input form to get the user input in order to initiate conversation.

    <form onSubmit={handleSubmit} className="w-full px-3 py-2">
      <input
        className="w-full px-3 py-2 border border-gray-700 bg-transparent rounded-lg text-neutral-200"
        value={input}
        placeholder="Ask me anything..."
        onChange={handleInputChange}
      />
    </form>

The good thing about this is that we don't need to write the handler or maintain state for the input value; the useChat hook provides both.
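To make it clearer what the hook is taking off our hands, here is a deliberately simplified model of the local state involved. This is not the library's implementation; ChatState, changeInput and submit are hypothetical names used only for illustration, and the real hook additionally sends the messages to the API and streams the reply in.

```typescript
type Role = "user" | "assistant";
type Message = { role: Role; content: string };
type ChatState = { input: string; messages: Message[] };

// Roughly what handleInputChange does: mirror the text field into state.
function changeInput(state: ChatState, value: string): ChatState {
  return { ...state, input: value };
}

// Roughly what handleSubmit does locally: append the user's message
// and clear the text field. An empty input is ignored in this sketch.
function submit(state: ChatState): ChatState {
  if (state.input === "") return state;
  return {
    input: "",
    messages: [...state.messages, { role: "user", content: state.input }],
  };
}
```

Without the hook, we would have to wire up something like this ourselves with useState, plus the network request and the streaming updates.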

We can display the messages by looping through the messages array. Each message is an object, so we render its content field:

    messages.map((m, i) => (<div key={i}>{m.content}</div>))

The styled version based on the role of the sender looks like this:

    <div className="min-h-[50vh] h-[50vh] max-h-[50vh] overflow-y-auto p-4">
      <div className="min-h-full flex-1 flex flex-col justify-end gap-2 w-full pb-4">
        {messages.length ? (
          messages.map((m, i) => {
            return m.role === "user" ? (
              <div key={i} className="w-full flex flex-col gap-2 items-end">
                <span className="px-2">You</span>
                <div className="flex flex-col items-center px-4 py-2 max-w-[90%] bg-orange-700/50 rounded-lg text-neutral-200 whitespace-pre-wrap">
                  <Markdown>{m.content}</Markdown>
                </div>
              </div>
            ) : (
              <div key={i} className="w-full flex flex-col gap-2 items-start">
                <span className="px-2">AI</span>
                <div className="flex flex-col max-w-[90%] px-4 py-2 bg-indigo-700/50 rounded-lg text-neutral-200 whitespace-pre-wrap">
                  <Markdown>{m.content}</Markdown>
                </div>
              </div>
            );
          })
        ) : (
          <div className="text-center flex-1 flex items-center justify-center text-neutral-500 text-4xl">
            <h1>Local AI Chat</h1>
          </div>
        )}
      </div>
    </div>
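The two branches above differ only in the label, the alignment and the bubble colour. If the duplication bothers you, one possible refactor is to compute those pieces from the role. The helper below is a sketch with a hypothetical name (bubbleFor), and it slightly simplifies the original classes by using the same bubble layout for both roles.

```typescript
type BubbleStyle = { label: string; rowClass: string; bubbleClass: string };

// Hypothetical helper: derives the parts that change with the sender's role.
function bubbleFor(role: string): BubbleStyle {
  const isUser = role === "user";
  return {
    label: isUser ? "You" : "AI",
    // User messages sit on the right, everything else on the left.
    rowClass: `w-full flex flex-col gap-2 ${isUser ? "items-end" : "items-start"}`,
    // Same bubble shape for both roles; only the background colour changes.
    bubbleClass:
      "flex flex-col max-w-[90%] px-4 py-2 rounded-lg text-neutral-200 whitespace-pre-wrap " +
      (isUser ? "bg-orange-700/50" : "bg-indigo-700/50"),
  };
}
```

Inside messages.map you could then call bubbleFor(m.role) once and render a single JSX branch using label, rowClass and bubbleClass.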

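Let's take a look at the overall shape of the whole file. The message list and the input form from the snippets above go inside main:

src/app/page.tsx

    "use client";

    import { useChat } from "ai/react";
    import Markdown from "react-markdown";

    export default function Home() {
      const { messages, input, handleInputChange, handleSubmit } = useChat();

      return (
        <main className="flex min-h-screen flex-col items-center justify-start p-24">
          {/* Message list and input form from the snippets above */}
        </main>
      );
    }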

With this, the frontend part is complete. Now let's handle the API.

Let's start by creating route.ts inside src/app/api/chat.

Following the Next.js naming convention, this file handles the requests sent to the localhost:3000/api/chat endpoint.
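To illustrate the convention, here is a small sketch of how a route handler's file path maps to its URL path. It is a simplification written for this article (routeForFile is a hypothetical name, not a Next.js API) and it ignores dynamic segments and route groups.

```typescript
// Hypothetical helper: maps an App Router route file to the URL path it serves.
// Returns null for files that are not route handlers.
function routeForFile(filePath: string): string | null {
  const segments = filePath.split("/");
  // The src directory is optional in a Next.js project.
  if (segments[0] === "src") segments.shift();
  // Route handlers live under the app directory.
  if (segments.shift() !== "app") return null;
  // Only files named route.ts or route.js are treated as route handlers.
  const file = segments.pop();
  if (file !== "route.ts" && file !== "route.js") return null;
  // The remaining folder names form the URL path.
  return "/" + segments.join("/");
}

// routeForFile("src/app/api/chat/route.ts") gives "/api/chat"
```

So the folder names under app decide the URL, and the file name route.ts marks it as an API handler rather than a page.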

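src/app/api/chat/route.ts

    import { createOllama } from "ollama-ai-provider";
    import { streamText } from "ai";

    // Provider instance; with no options it talks to the local Ollama server.
    const ollama = createOllama();

    export async function POST(req: Request) {
      // useChat sends the conversation so far as messages.
      const { messages } = await req.json();

      const result = await streamText({
        model: ollama("llama3.1"), // use a model you have already downloaded
        messages,
      });

      // Stream the generated text back to the client.
      return result.toDataStreamResponse();
    }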

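The code above uses the Ollama provider and the Vercel AI SDK to stream the model's output back as the response. On the client, useChat posts to /api/chat by default, so no extra wiring is needed.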
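The handler trusts whatever arrives in the request body. If you want it to be a bit more defensive, one option is to check the shape of messages before passing it to the model. The sketch below is an optional addition, not part of the original project; parseChatBody and isChatMessage are hypothetical names, and the list of accepted roles is a simplifying assumption.

```typescript
type Role = "system" | "user" | "assistant";
type ChatMessage = { role: Role; content: string };

// Assumption: only these roles are expected from the chat UI.
const ROLES: readonly string[] = ["system", "user", "assistant"];

// Type guard: true when the value has a known role and a string content.
function isChatMessage(value: unknown): value is ChatMessage {
  if (typeof value !== "object" || value === null) return false;
  const m = value as Record<string, unknown>;
  const role = m["role"];
  return (
    typeof role === "string" &&
    ROLES.indexOf(role) !== -1 &&
    typeof m["content"] === "string"
  );
}

// Hypothetical helper: returns the messages if the body is well formed, else null.
function parseChatBody(body: unknown): ChatMessage[] | null {
  if (typeof body !== "object" || body === null) return null;
  const messages = (body as Record<string, unknown>)["messages"];
  if (!Array.isArray(messages) || !messages.every(isChatMessage)) return null;
  return messages;
}
```

In the route you could call parseChatBody(await req.json()) and return a response with status 400 when it gives back null.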

Run npm run dev to start the server in development mode.

Open the browser and go to localhost:3000 to see the results.

If everything is configured properly, you will be able to talk to your very own chatbot.

You can find the source code here: https://github.com/parasbansal/ai-chat

Let me know in the comments if you have any questions; I'll try to answer them.
