The multimodal model evaluation framework lmms-eval is released! Comprehensive coverage, low cost, zero contamination


The AIxiv column is a column where academic and technical content is published on this site. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com

As research on large models deepens, extending them to more modalities has become a hot topic in academia and industry. Recently released closed-source models such as GPT-4o and Claude 3.5 already have strong image understanding capabilities, and open-source models such as LLaVA-NeXT, MiniCPM, and InternVL are steadily closing the gap with their closed-source counterparts.

In this era of "80,000 kilograms per mu" and "one SoTA every 10 days", a multi-modal evaluation framework that is easy to use, transparent in its standards, and reproducible has become increasingly important, yet building one is not easy.

To address these problems, researchers from Nanyang Technological University's LMMs-Lab have jointly open-sourced LMMs-Eval, an evaluation framework designed specifically for large multi-modal models (LMMs) that offers a one-stop, efficient solution for evaluating them.

Code repository: https://github.com/EvolvingLMMs-Lab/lmms-eval

Official homepage: https://lmms-lab.github.io/

Paper address: https://arxiv.org/abs/2407.12772

Leaderboard address: https://huggingface.co/spaces/lmms-lab/LiveBench

Since its release in March 2024, the LMMs-Eval framework has received collaborative contributions from the open-source community, companies, and universities. It now has 1.1K stars on GitHub and more than 30 contributors, and covers more than 80 datasets and more than 10 models, with the numbers still growing.

Standardized assessment framework

In order to provide a standardized assessment platform, LMMs-Eval includes the following features:

Unified interface: LMMs-Eval builds on and extends the text evaluation framework lm-evaluation-harness. By defining a unified interface for models, datasets, and evaluation metrics, it makes it easy for users to add new multi-modal models and datasets.

One-Click Launch: LMMs-Eval hosts over 80 (and growing) datasets on HuggingFace, carefully transformed from the original sources, including all variants, versions, and splits. Users do not need to make any preparations. With just one command, multiple data sets and models will be automatically downloaded and tested, and the results will be available in a few minutes.

Transparent and reproducible: LMMs-Eval has a built-in unified logging tool. Each question answered by the model and whether it is correct or not will be recorded, ensuring reproducibility and transparency. It also facilitates comparison of the advantages and disadvantages of different models.
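To make the "unified interface" and "per-question logging" ideas concrete, here is a minimal sketch in Python. It is not the LMMs-Eval API: the names `Sample`, `EvalModel`, `ConstantModel`, `exact_match`, and `evaluate` are hypothetical and exist only for illustration. The sketch shows how a single model interface lets one evaluation loop serve any model, and how recording every answer alongside its correctness makes a run inspectable afterwards.

```python
from __future__ import annotations

import json
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class Sample:
    """One evaluation item (hypothetical schema): question, image reference, reference answer."""
    question: str
    image_path: str
    answer: str


class EvalModel(ABC):
    """Hypothetical unified model interface: a model only has to implement generate()."""

    @abstractmethod
    def generate(self, question: str, image_path: str) -> str:
        ...


class ConstantModel(EvalModel):
    """Toy stand-in for a real multi-modal model; it ignores its inputs."""

    def __init__(self, reply: str) -> None:
        self.reply = reply

    def generate(self, question: str, image_path: str) -> str:
        return self.reply


def exact_match(prediction: str, answer: str) -> bool:
    """Hypothetical metric: case-insensitive exact match."""
    return prediction.strip().lower() == answer.strip().lower()


def evaluate(model: EvalModel, samples: list[Sample]) -> tuple[float, list[dict]]:
    """Run the model on every sample and keep a per-sample log of the outcome."""
    log = []
    for sample in samples:
        prediction = model.generate(sample.question, sample.image_path)
        log.append({
            "question": sample.question,
            "prediction": prediction,
            "answer": sample.answer,
            "correct": exact_match(prediction, sample.answer),
        })
    accuracy = sum(entry["correct"] for entry in log) / len(log) if log else 0.0
    return accuracy, log


samples = [
    Sample("What animal is shown?", "cat.png", "cat"),
    Sample("What color is the car?", "car.png", "red"),
]
accuracy, log = evaluate(ConstantModel("Cat"), samples)
print(accuracy)
print(json.dumps(log, indent=2))
```

Because the loop depends only on `generate()`, swapping in another model means writing one small class, and the per-sample log can be diffed between models to see exactly which questions they disagree on.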

The vision of LMMs-Eval is that future multi-modal models will no longer need to write their own data processing, inference, and submission code. With multi-modal test sets as numerous and scattered as they are today, writing such code for each model is unrealistic, and the resulting scores are difficult to compare directly with those of other models. By plugging into LMMs-Eval, model trainers can focus on improving and optimizing the model itself rather than spending time on evaluation and on aligning results.

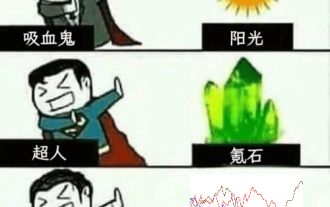

The "Impossible Triangle" of Evaluation

The ultimate goal of LMMs-Eval is to find a method for evaluating LMMs that offers (1) wide coverage, (2) low cost, and (3) zero data leakage. However, even with LMMs-Eval, the author team found it difficult, or even impossible, to achieve all three at the same time.

As shown in the figure, once the number of evaluation datasets grew beyond 50, a comprehensive evaluation across all of them became very time-consuming. These benchmarks are also susceptible to contamination during training. To address this, LMMs-Eval proposes LMMs-Eval-Lite, which balances wide coverage with low cost, and LiveBench, which combines low cost with zero data leakage.

LMMs-Eval-Lite: Wide coverage lightweight evaluation

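When evaluating large models, the sheer number of parameters and test tasks often drives up the time and cost of evaluation significantly, so people frequently fall back on smaller datasets or evaluate on only a few specific datasets. However, such limited evaluation often leaves gaps in the understanding of a model's capabilities. To balance evaluation diversity against evaluation cost, LMMs-Eval proposes LMMs-Eval-Lite.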

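LiveBench: Dynamic testing of LMMs

Traditional benchmarks focus on static evaluation with fixed questions and answers. As multi-modal research advances, open-source models often outscore commercial models such as GPT-4V in benchmark comparisons, yet fall behind in actual user experience. Dynamic, user-driven evaluations such as Chatbot Arena and WildVision are increasingly popular for model evaluation, but they require collecting thousands of user preferences, which makes evaluation very costly. The core idea of LiveBench is to evaluate model performance on a continuously updated dataset, achieving zero contamination while keeping costs low. The author team collects evaluation data from the web and has built a pipeline that automatically gathers the latest global information from websites such as news outlets and community forums. To ensure the information is timely and reliable, the team selected sources from more than 60 news outlets, including CNN, the BBC, Japan's Asahi Shimbun, and China's Xinhua News Agency, as well as forums such as Reddit. The specific steps are as follows:

- Capture screenshots of the homepage and remove ads and non-news elements.

- Design question-and-answer sets using the most powerful multi-modal models currently available, such as GPT4-V, Claude-3-Opus, and Gemini-1.5-Pro. To ensure accuracy and relevance, the questions are reviewed and revised by another model.

- The final Q&A set is manually reviewed. About 500 questions are collected each month, of which 100 to 300 are kept as the final LiveBench question set.

- Scoring follows the criteria of LLaVA-Wilder and Vibe-Eval: a judge model assigns a score in the range [1, 10] based on the provided reference answer. The default judge model is GPT-4o, with Claude-3-Opus and Gemini 1.5 Pro as alternatives. The final reported results are based on these scores converted into an accuracy metric ranging from 0 to 100.

- In the future, multi-modal models will also be shown on a dynamically updated leaderboard, refreshed every month with the latest evaluation data and results.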
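The scoring step in the list above turns per-question judge scores in [1, 10] into a reported number on a 0 to 100 scale. The article does not give the exact conversion, so the sketch below assumes the simplest possible mapping: average the judge scores and multiply by 10. The function name `to_percentage` is hypothetical, and the real LiveBench conversion may differ.

```python
from statistics import mean


def to_percentage(judge_scores: list[int]) -> float:
    """Convert judge scores in [1, 10] to a 0-100 scale.

    Assumption (not stated in the article): a linear rescale, mean * 10.
    """
    if not judge_scores:
        raise ValueError("at least one judge score is required")
    for score in judge_scores:
        if not 1 <= score <= 10:
            raise ValueError(f"judge score out of range [1, 10]: {score}")
    return mean(judge_scores) * 10.0


# Four questions scored by the judge model
example_scores = [8, 6, 10, 4]
print(to_percentage(example_scores))  # → 70.0
```

Note that under this assumed mapping the lowest possible result is 10 rather than 0, since the judge never scores below 1; a different rescale would be needed if the reported metric is meant to reach 0.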

The above is the detailed content of The multimodal model evaluation framework lmms-eval is released! Comprehensive coverage, low cost, zero pollution. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1421

1421

52

52

1315

1315

25

25

1266

1266

29

29

1239

1239

24

24

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

It is also a Tusheng video, but PaintsUndo has taken a different route. ControlNet author LvminZhang started to live again! This time I aim at the field of painting. The new project PaintsUndo has received 1.4kstar (still rising crazily) not long after it was launched. Project address: https://github.com/lllyasviel/Paints-UNDO Through this project, the user inputs a static image, and PaintsUndo can automatically help you generate a video of the entire painting process, from line draft to finished product. follow. During the drawing process, the line changes are amazing. The final video result is very similar to the original image: Let’s take a look at a complete drawing.

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com The authors of this paper are all from the team of teacher Zhang Lingming at the University of Illinois at Urbana-Champaign (UIUC), including: Steven Code repair; Deng Yinlin, fourth-year doctoral student, researcher

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com In the development process of artificial intelligence, the control and guidance of large language models (LLM) has always been one of the core challenges, aiming to ensure that these models are both powerful and safe serve human society. Early efforts focused on reinforcement learning methods through human feedback (RL

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

cheers! What is it like when a paper discussion is down to words? Recently, students at Stanford University created alphaXiv, an open discussion forum for arXiv papers that allows questions and comments to be posted directly on any arXiv paper. Website link: https://alphaxiv.org/ In fact, there is no need to visit this website specifically. Just change arXiv in any URL to alphaXiv to directly open the corresponding paper on the alphaXiv forum: you can accurately locate the paragraphs in the paper, Sentence: In the discussion area on the right, users can post questions to ask the author about the ideas and details of the paper. For example, they can also comment on the content of the paper, such as: "Given to

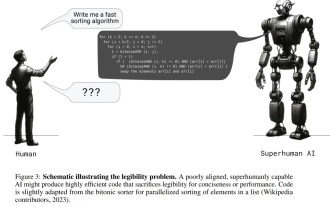

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

If the answer given by the AI model is incomprehensible at all, would you dare to use it? As machine learning systems are used in more important areas, it becomes increasingly important to demonstrate why we can trust their output, and when not to trust them. One possible way to gain trust in the output of a complex system is to require the system to produce an interpretation of its output that is readable to a human or another trusted system, that is, fully understandable to the point that any possible errors can be found. For example, to build trust in the judicial system, we require courts to provide clear and readable written opinions that explain and support their decisions. For large language models, we can also adopt a similar approach. However, when taking this approach, ensure that the language model generates

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

Recently, the Riemann Hypothesis, known as one of the seven major problems of the millennium, has achieved a new breakthrough. The Riemann Hypothesis is a very important unsolved problem in mathematics, related to the precise properties of the distribution of prime numbers (primes are those numbers that are only divisible by 1 and themselves, and they play a fundamental role in number theory). In today's mathematical literature, there are more than a thousand mathematical propositions based on the establishment of the Riemann Hypothesis (or its generalized form). In other words, once the Riemann Hypothesis and its generalized form are proven, these more than a thousand propositions will be established as theorems, which will have a profound impact on the field of mathematics; and if the Riemann Hypothesis is proven wrong, then among these propositions part of it will also lose its effectiveness. New breakthrough comes from MIT mathematics professor Larry Guth and Oxford University

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com. Introduction In recent years, the application of multimodal large language models (MLLM) in various fields has achieved remarkable success. However, as the basic model for many downstream tasks, current MLLM consists of the well-known Transformer network, which

LLM is really not good for time series prediction. It doesn't even use its reasoning ability.

Jul 15, 2024 pm 03:59 PM

LLM is really not good for time series prediction. It doesn't even use its reasoning ability.

Jul 15, 2024 pm 03:59 PM

Can language models really be used for time series prediction? According to Betteridge's Law of Headlines (any news headline ending with a question mark can be answered with "no"), the answer should be no. The fact seems to be true: such a powerful LLM cannot handle time series data well. Time series, that is, time series, as the name suggests, refers to a set of data point sequences arranged in the order of time. Time series analysis is critical in many areas, including disease spread prediction, retail analytics, healthcare, and finance. In the field of time series analysis, many researchers have recently been studying how to use large language models (LLM) to classify, predict, and detect anomalies in time series. These papers assume that language models that are good at handling sequential dependencies in text can also generalize to time series.