Web Scraping Made Easy: Parse Any HTML Page with Puppeteer

Imagine building an e-commerce platform where we can easily fetch product data in real-time from major stores like eBay, Amazon, and Flipkart. Sure, there’s Shopify and similar services, but let's be honest—it can feel a bit cumbersome to buy a subscription just for a project. So, I thought, why not scrape these sites and store the products directly in our database? It would be an efficient and cost-effective way to get products for our e-commerce projects.

What is Web Scraping?

Web scraping means extracting data from websites by parsing the HTML of web pages to read and collect content. It usually involves automating a browser or sending HTTP requests to the site, and then analyzing the HTML structure to retrieve specific pieces of information such as text, links, or images. Puppeteer is one library commonly used for scraping websites.
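To make the "analyze the HTML structure" step concrete, here is a minimal sketch that pulls link targets and link text out of an HTML string. The function name `extractLinks` and the sample markup are made up for illustration, and the regular expression is a deliberate simplification: real pages generally need a proper HTML parser or a browser such as the one Puppeteer drives.

```javascript
// Minimal illustration: collect { href, text } pairs from anchor tags in an HTML string.
// The regular expression only handles simple, double-quoted href attributes.
function extractLinks(html) {
  const links = [];
  const pattern = /<a\s[^>]*href="([^"]*)"[^>]*>(.*?)<\/a>/gis;
  for (const match of html.matchAll(pattern)) {
    links.push({ href: match[1], text: match[2].trim() });
  }
  return links;
}

// Hypothetical markup standing in for a downloaded page.
const sampleHtml = `
  <ul>
    <li><a href="/shoes">Shoes</a></li>
    <li><a class="nav" href="/shirts">Shirts</a></li>
  </ul>`;

console.log(extractLinks(sampleHtml));
```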

What is Puppeteer?

Puppeteer is a Node.js library that provides a high-level API for controlling headless Chrome or Chromium browsers. Headless Chrome is a version of Chrome that runs without a UI, which makes it perfect for running things in the background.

We can automate various tasks using Puppeteer, such as:

- Web Scraping: Extracting content from websites involves interacting with the page's HTML and JavaScript. We typically retrieve the content by targeting the CSS selectors.

- PDF Generation: Converting web pages into PDFs programmatically is ideal when you want to directly generate a PDF from a web page, rather than taking a screenshot and then converting the screenshot to a PDF. (P.S. Apologies if you already have workarounds for this).

- Automated Testing: Running tests on web pages by simulating user actions like clicking buttons, filling out forms, and taking screenshots. This eliminates the tedious process of manually going through long forms to ensure everything is in place.
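As a rough sketch of the PDF generation task listed above, the function below opens a URL and writes it to a PDF with `page.pdf`. The name `savePageAsPdf`, the output path, and the choice of `networkidle2` and A4 are assumptions made for illustration. It takes an already launched browser as a parameter so it can be reused across several pages.

```javascript
// Sketch: render a web page straight to a PDF file.
// `browser` is expected to be the object returned by puppeteer.launch().
async function savePageAsPdf(browser, url, outputPath) {
  const page = await browser.newPage();
  try {
    // Wait until network activity has mostly settled before printing.
    await page.goto(url, { waitUntil: "networkidle2" });
    // Write the PDF to disk; printBackground keeps CSS background colors and images.
    await page.pdf({ path: outputPath, format: "A4", printBackground: true });
    return outputPath;
  } finally {
    // Close the tab even if navigation or printing fails.
    await page.close();
  }
}
```

To try it, launch a browser with `puppeteer.launch()`, call `savePageAsPdf(browser, "https://example.com", "page.pdf")`, and close the browser afterwards.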

How to get started with Puppeteer?

First, install the library using the package manager of your choice.

Using npm:

npm i puppeteer # Downloads compatible Chrome during installation.
npm i puppeteer-core # Alternatively, install as a library, without downloading Chrome.

Using yarn:

yarn add puppeteer # Downloads compatible Chrome during installation.
yarn add puppeteer-core # Alternatively, install as a library, without downloading Chrome.

Using pnpm:

pnpm add puppeteer # Downloads compatible Chrome during installation.
pnpm add puppeteer-core # Alternatively, install as a library, without downloading Chrome.
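The practical difference between the two packages shows up at launch time: since puppeteer-core does not download a browser, it has to be pointed at one that is already installed, for example through the `executablePath` launch option. The helper below is a hypothetical sketch of that decision. `buildLaunchOptions`, `useCore`, and `chromePath` are names invented here, not part of Puppeteer.

```javascript
// Hypothetical helper: build the options object passed to launch().
// With puppeteer-core, a path to an installed Chrome/Chromium is required.
function buildLaunchOptions({ useCore, chromePath }) {
  if (useCore) {
    if (!chromePath) {
      throw new Error("puppeteer-core needs the path to an installed Chrome or Chromium");
    }
    return { executablePath: chromePath, headless: true };
  }
  // The full puppeteer package can fall back to the Chrome it downloaded.
  return { headless: true };
}

console.log(buildLaunchOptions({ useCore: false }));
console.log(buildLaunchOptions({ useCore: true, chromePath: "/usr/bin/chromium" }));
```

The resulting object would then be passed to `require("puppeteer").launch(...)` or `require("puppeteer-core").launch(...)`. The path `/usr/bin/chromium` is only an example and differs between systems.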

Example to demonstrate the use of Puppeteer

Here is an example of how to scrape a website. (P.S. I used this code to retrieve products from the Myntra website for my e-commerce project.)

const puppeteer = require("puppeteer");
const CategorySchema = require("./models/Category");

// Define the scrape function as a named async function
const scrape = async () => {
  // Launch a new browser instance
  const browser = await puppeteer.launch({ headless: false });

  // Open a new page
  const page = await browser.newPage();

  // Navigate to the target URL and wait until the DOM is fully loaded
  await page.goto('https://www.myntra.com/mens-sport-wear?rawQuery=mens%20sport%20wear', { waitUntil: 'domcontentloaded' });

  // Wait for additional time to ensure all content is loaded
  await new Promise((resolve) => setTimeout(resolve, 25000));

  // Extract product details from the page
  const items = await page.evaluate(() => {
    // Select all product elements
    const elements = document.querySelectorAll('.product-base');
    const elementsArray = Array.from(elements);

    // Map each element to an object with the desired properties
    const results = elementsArray.map((element) => {
      const image = element.querySelector(".product-imageSliderContainer img")?.getAttribute("src");
      return {
        image: image ?? null,
        brand: element.querySelector(".product-brand")?.textContent,
        title: element.querySelector(".product-product")?.textContent,
        discountPrice: element.querySelector(".product-price .product-discountedPrice")?.textContent,
        actualPrice: element.querySelector(".product-price .product-strike")?.textContent,
        discountPercentage: element.querySelector(".product-price .product-discountPercentage")?.textContent?.split(' ')[0]?.slice(1, -1),
        total: 20, // Placeholder value, adjust as needed
        available: 10, // Placeholder value, adjust as needed
        ratings: Math.round((Math.random() * 5) * 10) / 10 // Random rating for demonstration
      };
    });

    return results; // Return the list of product details
  });

  // Close the browser
  await browser.close();

  // Prepare the data for saving
  const data = {
    category: "mens-sport-wear",
    subcategory: "Mens",
    list: items
  };

  // Create a new Category document and save it to the database
  // Since we want to store product information in our e-commerce store, we use a schema and save it to the database.
  // If you don't need to save the data, you can omit this step.
  const category = new CategorySchema(data);
  console.log(category);
  await category.save();

  // Return the scraped items
  return items;
};

// Export the scrape function as the default export
module.exports = scrape;
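One possible refinement of the script above, offered as a sketch rather than as the original approach: instead of sleeping for a fixed 25 seconds, wait until the product cards actually exist with `page.waitForSelector`, and release the page in a `finally` block so a failure does not leave it open. The function name `scrapeWhenReady` and the 30-second timeout are assumptions. The selectors are the ones used in the script and may change whenever the site changes its markup.

```javascript
// Sketch: wait for the product cards instead of a fixed delay,
// and always close the page, even if scraping throws.
// `browser` is expected to be the object returned by puppeteer.launch().
async function scrapeWhenReady(browser, url, selector = ".product-base") {
  const page = await browser.newPage();
  try {
    await page.goto(url, { waitUntil: "domcontentloaded" });
    // Resolves as soon as a matching element exists; rejects after 30 seconds.
    await page.waitForSelector(selector, { timeout: 30000 });
    // $$eval runs the callback inside the page with all matching elements.
    return await page.$$eval(selector, (cards) =>
      cards.map((card) => ({
        brand: card.querySelector(".product-brand")?.textContent ?? null,
        title: card.querySelector(".product-product")?.textContent ?? null,
      }))
    );
  } finally {
    await page.close();
  }
}
```

The caller stays responsible for the browser itself: launch it with `puppeteer.launch()`, pass it in, and call `browser.close()` in its own `finally` block.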

Explanation:

- In this code, we are using Puppeteer to scrape product data from a website. After extracting the details, we create a schema (CategorySchema) to structure and save this data into our database. This step is particularly useful if we want to integrate the scraped products into our e-commerce store. If storing the data in a database is not required, you can omit the schema-related code.

- Before scraping, it's important to understand the HTML structure of the page and identify which CSS selectors contain the content you want to extract.

- In my case, I used the relevant CSS selectors identified on the Myntra website to extract the content I was targeting.
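The values read through these selectors are plain strings, so they usually need a little cleanup before they are stored. The sketch below assumes raw text shaped like "Rs. 1,299" for prices and "(40% OFF)" for the discount. These formats are assumptions that have not been checked against the live site, and `parsePrice` and `parseDiscount` are hypothetical helper names.

```javascript
// Assumed raw formats (not verified against the live site):
//   price:    "Rs. 1,299"
//   discount: "(40% OFF)"

// Convert a price string to a number, or null when no number is present.
function parsePrice(text) {
  const match = text ? text.match(/\d[\d,]*(\.\d+)?/) : null;
  return match ? Number(match[0].replace(/,/g, "")) : null;
}

// Pull the numeric percentage out of a discount label, or null when absent.
function parseDiscount(text) {
  const match = text ? text.match(/(\d+)\s*%/) : null;
  return match ? Number(match[1]) : null;
}

console.log(parsePrice("Rs. 1,299"));
console.log(parseDiscount("(40% OFF)"));
// The chained string operations used in the scraper give the same digits as a string:
console.log("(40% OFF)".split(" ")[0].slice(1, -1));
```

Matching on the digits is a little more forgiving than `split` and `slice`, which depend on the exact position of the parenthesis and the percent sign.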

The above is the detailed content of Web Scraping Made Easy: Parse Any HTML Page with Puppeteer. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1422

1422

52

52

1316

1316

25

25

1268

1268

29

29

1241

1241

24

24

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

JavaScript is the cornerstone of modern web development, and its main functions include event-driven programming, dynamic content generation and asynchronous programming. 1) Event-driven programming allows web pages to change dynamically according to user operations. 2) Dynamic content generation allows page content to be adjusted according to conditions. 3) Asynchronous programming ensures that the user interface is not blocked. JavaScript is widely used in web interaction, single-page application and server-side development, greatly improving the flexibility of user experience and cross-platform development.

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

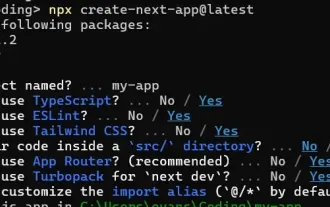

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.