Choosing Between C# and JavaScript for Web Scraping

How C# and JavaScript differ for web scraping

C# is a compiled, statically typed language with mature libraries for scraping, such as HttpClient for HTTP requests and HtmlAgilityPack for HTML parsing. These make it practical to implement complex crawling logic, and the tooling offers strong debugging and error handling. With modern .NET, C# also runs on Windows, Linux, and macOS. The learning curve can be relatively steep, however, and it assumes some programming background.

JavaScript, by contrast, is a scripting language that runs directly in the browser with no extra installation, and its DOM API makes it convenient to work with page elements. Outside the browser, Node.js libraries such as Puppeteer (browser automation) and Cheerio (HTML parsing) further simplify scraping. The main cost is JavaScript's asynchronous programming model, which takes some time to learn.
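As a rough illustration of the asynchronous model mentioned above, the following sketch runs an async task over a list of URLs while keeping only a few requests in flight at once. The helper name mapWithLimit and the fake fetchPage function are assumptions made for this example; they are not part of any library.

```javascript
// Run `worker` over `items`, with at most `limit` calls in flight at a time.
// Results are returned in the same order as the input items.
async function mapWithLimit(items, limit, worker) {
  const results = new Array(items.length);
  let next = 0; // index of the next item that has not been claimed yet

  // Each runner keeps claiming the next unclaimed item until none are left.
  async function runner() {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index], index);
    }
  }

  const runnerCount = Math.min(limit, items.length);
  await Promise.all(Array.from({ length: runnerCount }, () => runner()));
  return results;
}

// Hypothetical stand-in for a real HTTP request, so the sketch runs offline.
async function fetchPage(url) {
  await new Promise((resolve) => setTimeout(resolve, 10));
  return `<html><title>${url}</title></html>`;
}

// Example usage (the URLs are placeholders):
// mapWithLimit(['https://example.com/1', 'https://example.com/2'], 2, fetchPage)
//   .then((pages) => console.log(pages.length));
```

Limiting concurrency this way is one common design choice for keeping a scraper polite toward the target site; a real crawler would replace fetchPage with an actual HTTP call.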

Summary of C# vs JavaScript for web scraping

Differences in language and environment

C#: Requires the .NET runtime; suited to desktop and server-side applications. JavaScript: Built into the browser; suited to front-end code and, through Node.js, server-side scripts.

Scraping tools and libraries

C#: HttpClient for requests, commonly combined with HtmlAgilityPack for parsing. JavaScript: An HTTP library such as Axios for requests, with Cheerio for parsing.
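A minimal sketch of the JavaScript pairing above, assuming the axios and cheerio packages are installed from npm. The function name, the URL in the usage comment, and the h2 selector are placeholders chosen for illustration.

```javascript
// Fetch a page with Axios and extract heading text with Cheerio.
// Assumes `npm install axios cheerio`; the packages are loaded inside the
// function so that merely defining it does not require them.
async function fetchHeadings(url, selector = 'h2') {
  const axios = require('axios');
  const cheerio = require('cheerio');

  // axios resolves with a response object; `data` holds the response body.
  const { data: html } = await axios.get(url);

  // cheerio.load parses the HTML and returns a jQuery-like query function.
  const $ = cheerio.load(html);

  // Collect the trimmed text of every element matching the selector.
  return $(selector)
    .map((_, element) => $(element).text().trim())
    .get();
}

// Example usage (placeholder URL):
// fetchHeadings('https://example.com/').then((headings) => console.log(headings));
```

This only works for content present in the initial HTML response; anything rendered later by scripts needs a browser-driven approach.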

Execution environment and restrictions

C#: Runs on a server or desktop, so browser restrictions do not apply. JavaScript: When run in the browser, it is restricted by the same-origin policy and similar safeguards; under Node.js these browser restrictions do not apply.
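For reference, two URLs share an origin only when their scheme, host, and port all match. The check below is a small sketch using the standard URL class (available in browsers and in Node.js); the helper name isSameOrigin is made up for this example.

```javascript
// Two URLs are same-origin when scheme, host, and port are all equal.
// The URL class normalizes default ports, so ":443" on https is dropped.
function isSameOrigin(a, b) {
  return new URL(a).origin === new URL(b).origin;
}

console.log(isSameOrigin('https://example.com/a', 'https://example.com:443/b')); // true
console.log(isSameOrigin('https://example.com/', 'http://example.com/'));        // false (scheme differs)
console.log(isSameOrigin('https://example.com/', 'https://api.example.com/'));   // false (host differs)
```

A script running inside a page on one origin generally cannot read responses from another origin unless that server opts in, which is why in-browser scraping across sites is limited.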

Processing dynamic content

Both need extra tooling for pages rendered by JavaScript, such as Selenium to drive a real browser. JavaScript has a natural advantage here because it runs natively in the browser environment.

Summary

Choose based on project requirements, development environment and resources.

Which one is better for crawling complex dynamic web pages, C# or JavaScript?

For crawling complex dynamic web pages, C# and JavaScript each have their own advantages, but C# combined with tools such as Selenium is usually more suitable.

JavaScript: As a front-end scripting language, JavaScript runs in the browser and naturally supports dynamic content. However, scripts running inside a page are limited by the browser's same-origin policy, and running JavaScript on a server or desktop requires Node.js, usually together with a browser automation tool such as Puppeteer.

C#: Combined with a library such as Selenium WebDriver, C# can drive a real browser and process JavaScript-rendered content, including logging in, clicking, and scrolling. This approach can capture dynamic page data more completely, and C#'s strong typing and rich library support also help development efficiency and stability.

Therefore, when complex dynamic web pages need to be crawled, C# combined with a tool such as Selenium is the recommended choice.

What technologies and tools are needed for web scraping with C#?

Web scraping with C# requires the following technologies and tools:

HttpClient or WebClient class: used to send HTTP requests and obtain web page content. HttpClient is more flexible and suits complex HTTP requests; it is the recommended choice in current .NET, where WebClient is a legacy API marked obsolete.

HTML parsing library: such as HtmlAgilityPack, used to parse the retrieved HTML document and extract the required data. HtmlAgilityPack supports XPath queries natively; CSS selectors are available through add-on packages such as Fizzler.

Regular expressions: used to match and extract specific text in HTML documents. Take care over their accuracy and efficiency, since patterns written against raw markup break easily when the page changes.

Selenium WebDriver: for scenarios that need to simulate browser behavior (such as logging in or processing JavaScript-rendered content), Selenium WebDriver can be used to simulate user operations.

JSON parsing library: such as Json.NET (Newtonsoft.Json) or the built-in System.Text.Json, used to parse JSON data, which is very useful when processing data returned by APIs.

Exception handling and concurrency: to improve the stability and throughput of the program, write exception handling code and consider async/await or multithreading to process multiple requests concurrently.

Proxy and User-Agent settings: some websites apply anti-crawling measures, so you may need to configure a proxy and a custom User-Agent to simulate different access environments.

Combined, these technologies and tools make it possible to implement web scraping in C# efficiently.
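The exception handling and User-Agent points above are not specific to C#. As a minimal sketch in JavaScript (assuming Node.js 18 or later, where fetch is built in), the function below sends a custom User-Agent header and retries failed requests with exponential backoff. The function name, the default User-Agent string, and the retry settings are assumptions for illustration; in C# the corresponding pieces would be an HttpClient request wrapped in try/catch.

```javascript
// Fetch a URL with a custom User-Agent, retrying on failure.
// `fetchImpl` defaults to the global fetch and exists only so the function
// can be exercised with a stub; all defaults here are illustrative.
async function fetchWithRetry(url, {
  retries = 3,                        // extra attempts after the first one
  baseDelayMs = 500,                  // delay doubles after each failure
  userAgent = 'example-scraper/0.1',  // hypothetical User-Agent string
  fetchImpl = fetch,
} = {}) {
  let lastError;
  for (let attempt = 0; attempt <= retries; attempt++) {
    try {
      const response = await fetchImpl(url, {
        headers: { 'User-Agent': userAgent },
      });
      // Treat non-2xx responses as errors. Note that this naive sketch
      // retries every failure, including ones that will never succeed (404).
      if (!response.ok) throw new Error(`HTTP ${response.status}`);
      return await response.text();
    } catch (err) {
      lastError = err;
      if (attempt < retries) {
        const delay = baseDelayMs * 2 ** attempt;
        await new Promise((resolve) => setTimeout(resolve, delay));
      }
    }
  }
  throw lastError; // every attempt failed
}

// Example usage (placeholder URL):
// fetchWithRetry('https://example.com/').then((html) => console.log(html.length));
```

Proxy configuration is left out on purpose, because how a proxy is set depends on the HTTP client in use.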

How to crawl dynamic web pages with C# combined with Selenium?


1. Environment preparation:

Make sure that the C# development environment is installed.

Install Selenium WebDriver (for example, the Selenium.WebDriver NuGet package), which is used to drive the browser.

Download and set up a browser driver, such as ChromeDriver, and make sure its version matches the installed browser.

2. Usage steps:

Import the Selenium namespaces, such as OpenQA.Selenium (WebDriver types) and OpenQA.Selenium.Support.UI (WebDriverWait).

Initialize WebDriver, set up the browser driver, and open the target web page.

Use the methods provided by Selenium to simulate user actions, such as clicking, typing, and scrolling, to trigger dynamically loaded content or to log in.

Parse the web page source code and extract the required data.

Close the browser and WebDriver instance.

By combining C# with Selenium, you can crawl dynamic web page content and handle complex interactions. Because a real browser is used, the traffic resembles that of an ordinary visitor more closely, although websites can still detect automated browsers.
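The usage steps above can be sketched as follows. This sketch uses Selenium's JavaScript binding (the selenium-webdriver package for Node.js) rather than C#, and assumes that package plus Chrome and a matching driver are installed; the C# binding follows the same sequence of steps. The function name, URL, and CSS selector are placeholders.

```javascript
// Open a page in Chrome, wait for dynamically loaded elements, and
// return their text. Assumes `npm install selenium-webdriver` and a
// local Chrome installation with a compatible driver.
async function scrapeDynamicPage(url, selector, timeoutMs = 10000) {
  // Loaded here so that defining the function does not require the package.
  const { Builder, By, until } = require('selenium-webdriver');

  // Initialize WebDriver and start a browser session.
  const driver = await new Builder().forBrowser('chrome').build();
  try {
    // Open the target page.
    await driver.get(url);

    // Wait until at least one matching element has been added to the DOM.
    await driver.wait(until.elementLocated(By.css(selector)), timeoutMs);

    // Extract the text of every matching element.
    const elements = await driver.findElements(By.css(selector));
    const texts = [];
    for (const element of elements) {
      texts.push(await element.getText());
    }
    return texts;
  } finally {
    // Always close the browser and end the WebDriver session.
    await driver.quit();
  }
}

// Example usage (placeholder URL and selector):
// scrapeDynamicPage('https://example.com/', '.item').then((texts) => console.log(texts));
```

Placing quit() in a finally block ensures that the browser process is closed even when the wait times out or an element lookup fails.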

Conclusion

In summary, C# and JavaScript each have their own advantages and disadvantages in web crawling. The choice of language depends on specific needs and development environment.

The above is the detailed content of Choosing Between C# and JavaScript for Web Scraping. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1421

1421

52

52

1316

1316

25

25

1266

1266

29

29

1239

1239

24

24

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

JavaScript is the cornerstone of modern web development, and its main functions include event-driven programming, dynamic content generation and asynchronous programming. 1) Event-driven programming allows web pages to change dynamically according to user operations. 2) Dynamic content generation allows page content to be adjusted according to conditions. 3) Asynchronous programming ensures that the user interface is not blocked. JavaScript is widely used in web interaction, single-page application and server-side development, greatly improving the flexibility of user experience and cross-platform development.

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

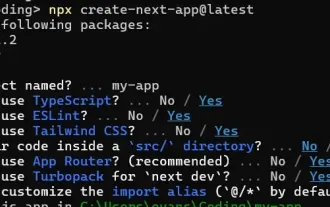

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.