Understanding Node.js Streams: What, Why, and How to Use Them

Node.js Streams are an essential feature for handling large amounts of data efficiently. Unlike APIs that buffer a whole payload before handing it over, streams process data in chunks, which makes them well suited to large files and real-time data. In this article, we'll dive deep into what Node.js Streams are, why they’re useful, how to implement them, and the four stream types, with examples and use cases.

What are Node.js Streams?

In simple terms, a stream is a sequence of data being moved from one point to another over time. You can think of it as a conveyor belt where data flows piece by piece instead of all at once.

Node.js Streams work similarly; they allow you to read and write data in chunks (instead of all at once), making them highly memory-efficient.

Streams in Node.js are built on top of EventEmitter, making them event-driven. A few important events include:

- data: Emitted when data is available for consumption.

- end: Emitted when no more data is available to consume.

- error: Emitted when an error occurs during reading or writing.

Why Use Streams?

Streams offer several advantages over traditional methods like fs.readFile() or fs.writeFile() for handling I/O:

- Memory Efficiency: You can handle very large files without consuming large amounts of memory, as data is processed in chunks.

- Performance: Processing can begin as soon as the first chunk arrives instead of waiting for the entire read or write to complete, which lowers latency and keeps the program responsive.

- Real-Time Data Processing: Streams enable the processing of real-time data like live video/audio or large datasets from APIs.

Types of Streams in Node.js

There are four types of streams in Node.js:

- Readable Streams: For reading data.

- Writable Streams: For writing data.

- Duplex Streams: Streams that are both readable and writable, such as a network socket.

- Transform Streams: A type of Duplex Stream where the output is a modified version of the input (e.g., data compression).

Let’s go through each type with examples.

1. Readable Streams

Readable streams are used to read data chunk by chunk. For example, when reading a large file, using a readable stream allows us to read small chunks of data into memory instead of loading the entire file.

Example: Reading a File Using Readable Stream

    const fs = require('fs');

    // Create a readable stream
    const readableStream = fs.createReadStream('largefile.txt', { encoding: 'utf8' });

    // Listen for data events and process chunks
    readableStream.on('data', (chunk) => {
      console.log('Chunk received:', chunk);
    });

    // Listen for the end event when no more data is available
    readableStream.on('end', () => {
      console.log('No more data.');
    });

    // Handle error event
    readableStream.on('error', (err) => {
      console.error('Error reading the file:', err);
    });

Explanation:

- fs.createReadStream() creates a stream that reads the file in chunks (64 KiB each by default).

- The data event is triggered each time a chunk is ready, and the end event is triggered when there is no more data to read.
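Readable streams are also async iterables, so the same chunk-by-chunk reading can be written with for await...of instead of event listeners. The following is a minimal sketch; the helper name countBytes is hypothetical and not part of Node.js.

```javascript
const fs = require('fs');

// Hypothetical helper: counts the bytes in a file without loading it all at once.
async function countBytes(filePath) {
  let total = 0;
  // Each iteration yields one chunk (a Buffer, because no encoding is set).
  for await (const chunk of fs.createReadStream(filePath)) {
    total += chunk.length;
  }
  return total;
}
```

Calling countBytes('largefile.txt') returns a promise for the file size. If the file cannot be read, the loop throws and the promise rejects, so a single try/catch around the call can replace a separate error listener.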

2. Writable Streams

Writable streams are used to write data chunk by chunk. Instead of writing all data at once, you can stream it into a file or another writable destination.

Example: Writing Data Using Writable Stream

    const fs = require('fs');

    // Create a writable stream
    const writableStream = fs.createWriteStream('output.txt');

    // Write chunks to the writable stream
    writableStream.write('Hello, World!\n');
    writableStream.write('Streaming data...\n');

    // End the stream (required so the data is flushed and the finish event fires)
    writableStream.end('Done writing.\n');

    // Listen for the finish event
    writableStream.on('finish', () => {
      console.log('Data has been written to output.txt');
    });

    // Handle error event
    writableStream.on('error', (err) => {
      console.error('Error writing to the file:', err);
    });

Explanation:

- fs.createWriteStream() creates a writable stream.

- Data is written to the stream using the write() method.

- The end() method signals that no more data will be written (it can also write one final chunk), and the finish event is emitted once all data has been flushed.
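When many chunks are written in a loop, the return value of write() matters: it returns false once the stream's internal buffer is full, and the drain event signals when it is safe to continue. The sketch below shows one way to respect that signal; the helper name writeLines is hypothetical.

```javascript
const { once } = require('events');

// Hypothetical helper: writes every line, pausing whenever the stream's buffer is full.
async function writeLines(writable, lines) {
  for (const line of lines) {
    // write() returns false once the internal buffer reaches highWaterMark.
    if (!writable.write(line + '\n')) {
      await once(writable, 'drain'); // wait until the buffer has emptied
    }
  }
  writable.end();
  await once(writable, 'finish'); // resolves when all data has been flushed
}
```

For example, writeLines(fs.createWriteStream('output.txt'), ['Hello, World!', 'Streaming data...']) writes both lines and resolves once the stream has finished. Because events.once() rejects when the stream emits error, failures surface as a rejected promise.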

3. Duplex Streams

Duplex streams can both read and write data. A typical example of a duplex stream is a network socket, where you can send and receive data simultaneously.

Example: Duplex Stream

    const { Duplex } = require('stream');

    const duplexStream = new Duplex({
      // Writable side: called for each chunk written to the stream
      write(chunk, encoding, callback) {
        console.log(`Writing: ${chunk.toString()}`);
        callback();
      },
      // Readable side: called when a consumer asks for data
      read(size) {
        this.push('More data');
        this.push(null); // End the readable side
      }
    });

    // Write to the duplex stream
    duplexStream.write('Hello Duplex!\n');

    // Read from the duplex stream
    duplexStream.on('data', (chunk) => {
      console.log(`Read: ${chunk}`);
    });

Explanation:

- We define a write method for the writable side and a read method for the readable side.

- The two sides operate independently: data written to the stream does not appear on the readable side unless the implementation forwards it.

4. Transform Streams

Transform streams modify the data as it passes through the stream. For example, a transform stream could compress, encrypt, or manipulate data.

Example: Transform Stream (Uppercasing Text)

    const { Transform } = require('stream');

    // Create a transform stream that converts data to uppercase
    const transformStream = new Transform({
      transform(chunk, encoding, callback) {
        this.push(chunk.toString().toUpperCase());
        callback();
      }
    });

    // Pipe input to transform stream and then output the result
    process.stdin.pipe(transformStream).pipe(process.stdout);

Explanation:

- Data input from stdin is transformed to uppercase by the transform method and then output to stdout.
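The transform callback also accepts the transformed chunk as its second argument, which is equivalent to calling this.push() and then callback(). The sketch below uses that form and feeds the transform from an in-memory source so it can run without stdin; the names createUppercase and uppercaseAll are hypothetical.

```javascript
const { Readable, Transform } = require('stream');

// Hypothetical factory: returns a fresh uppercase Transform each time,
// since a stream instance cannot be reused after it has ended.
function createUppercase() {
  return new Transform({
    transform(chunk, encoding, callback) {
      // Passing the result to callback() pushes it and signals completion.
      callback(null, chunk.toString().toUpperCase());
    },
  });
}

// Hypothetical helper: runs an array of strings through the transform and joins the output.
async function uppercaseAll(parts) {
  let result = '';
  for await (const chunk of Readable.from(parts).pipe(createUppercase())) {
    result += chunk.toString();
  }
  return result;
}
```

Each element of the array becomes one chunk, so the transform function runs once per element.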

Piping Streams

One of the key features of Node.js streams is their ability to be piped. Piping allows you to chain streams together, passing the output of one stream as the input to another.

Example: Piping a Readable Stream into a Writable Stream

    const fs = require('fs');

    // Create a readable stream for the input file
    const readableStream = fs.createReadStream('input.txt');

    // Create a writable stream for the output file
    const writableStream = fs.createWriteStream('output.txt');

    // Pipe the readable stream into the writable stream
    readableStream.pipe(writableStream);

    // Handle errors (pipe() does not forward them, so each stream needs its own handler)
    readableStream.on('error', (err) => {
      console.error('Read error:', err);
      writableStream.destroy(); // pipe() leaves the destination open after a read error
    });
    writableStream.on('error', (err) => console.error('Write error:', err));

Explanation:

- The pipe() method connects the readable stream to the writable stream, sending data chunks directly from input.txt to output.txt.
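Because pipe() does not forward errors from one stream to the next, longer chains are often written with pipeline(), which destroys every stream in the chain if any of them fails. The sketch below chains three streams using the promise-based pipeline from stream/promises (available in Node.js 15 and later) and the built-in zlib.createGzip() transform; the helper name gzipFile and its parameters are hypothetical.

```javascript
const fs = require('fs');
const zlib = require('zlib');
const { pipeline } = require('stream/promises');

// Hypothetical helper: compresses sourcePath into destPath chunk by chunk.
async function gzipFile(sourcePath, destPath) {
  await pipeline(
    fs.createReadStream(sourcePath), // readable: raw file contents
    zlib.createGzip(),               // transform: compresses each chunk
    fs.createWriteStream(destPath)   // writable: compressed output
  );
}
```

A call such as gzipFile('input.txt', 'input.txt.gz') resolves once the compressed file has been fully written and rejects if any stage fails, for instance when the input file does not exist.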

Practical Use Cases of Node.js Streams

- Reading and Writing Large Files: Instead of reading an entire file into memory, streams allow you to process the file in small chunks.

- Real-Time Data Processing: Streams are ideal for real-time applications such as audio/video processing, chat applications, or live data feeds.

- HTTP Requests/Responses: HTTP requests and responses in Node.js are streams, making it easy to process incoming data or send data progressively.
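As an illustration of the HTTP use case, the response object passed to an http.createServer() handler is a writable stream, so a file can be sent to the client chunk by chunk. This is a minimal sketch rather than a production server; the factory name createFileServer and the fixed content type are assumptions made for the example.

```javascript
const fs = require('fs');
const http = require('http');
const { pipeline } = require('stream');

// Hypothetical factory: builds a server that streams one file to every client.
function createFileServer(filePath) {
  return http.createServer((req, res) => {
    res.setHeader('Content-Type', 'text/plain; charset=utf-8');
    // res is a writable stream, so the file is sent chunk by chunk.
    pipeline(fs.createReadStream(filePath), res, (err) => {
      if (err) {
        console.error('Failed to send file:', err.message);
      }
    });
  });
}
```

Calling createFileServer('largefile.txt').listen(3000) would serve the file on port 3000 (the port is an arbitrary choice). If reading fails, pipeline() destroys the response, so the client sees a dropped connection rather than an error page; a real server would likely want to handle that case explicitly.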

Conclusion

Node.js streams provide a powerful and efficient way to handle I/O operations by working with data in chunks. Whether you are reading large files, piping data between sources, or transforming data on the fly, streams offer a memory-efficient and performant solution. Understanding how to use readable, writable, duplex, and transform streams can significantly improve your application's performance and scalability.

The above is the detailed content of Understanding Node.js Streams: What, Why, and How to Use Them. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1423

1423

52

52

1317

1317

25

25

1268

1268

29

29

1243

1243

24

24

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

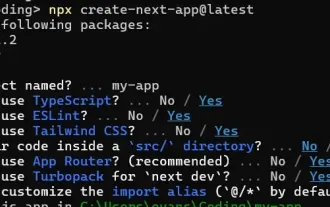

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.

JavaScript and the Web: Core Functionality and Use Cases

Apr 18, 2025 am 12:19 AM

JavaScript and the Web: Core Functionality and Use Cases

Apr 18, 2025 am 12:19 AM

The main uses of JavaScript in web development include client interaction, form verification and asynchronous communication. 1) Dynamic content update and user interaction through DOM operations; 2) Client verification is carried out before the user submits data to improve the user experience; 3) Refreshless communication with the server is achieved through AJAX technology.