Reverse engineering GraphQL persistedQuery extension

GraphQL is a query language for fetching deeply nested, structured data from a website's backend, loosely similar to MongoDB queries.

The request is usually a POST to some general /graphql endpoint with a body like this:
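As a rough illustration, a standard (non-persisted) GraphQL body can be built as in the sketch below. The query text, field names, operation name, and variables are hypothetical and not taken from any real site.

```python
import json

# Hypothetical query text, for illustration only.
QUERY = """
query PropertyDetails($id: ID!) {
  property(id: $id) {
    address { street city }
    price
  }
}
"""


def build_graphql_body(query: str, variables: dict, operation_name: str) -> str:
    """Serialize a plain GraphQL-over-HTTP POST body (full query text included)."""
    return json.dumps({
        "operationName": operation_name,
        "query": query,
        "variables": variables,
    })


body = build_graphql_body(QUERY, {"id": "12345"}, "PropertyDetails")
print(body)
```

Note that the full query text travels with every request, which is exactly the overhead discussed next.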

However, with large data structures this becomes inefficient: the client sends a large query in every POST body, even though the query text is almost always the same and only changes when the website is updated, and POST responses are generally not cached. To address this, an extension called “persisted queries” was developed. This isn’t an anti-scraping secret; the extension is publicly documented.

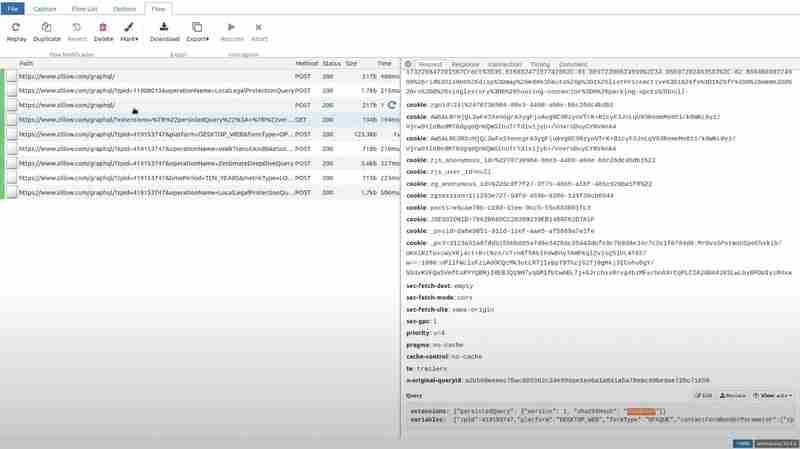

TL;DR: the client computes the SHA-256 hash of the query text and sends only that hash. The whole request can then often fit into the query string of a GET request, which makes it easily cacheable. Below is an example request from Zillow:
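A minimal sketch of how such a GET request is put together, assuming the commonly documented layout of the persistedQuery extension (a `version` field and a `sha256Hash` field). The endpoint, query text, and operation name are hypothetical placeholders, not Zillow's actual values.

```python
import hashlib
import json
from urllib.parse import urlencode


def persisted_query_url(endpoint, query, variables, operation_name):
    """Build a GET URL that carries only the SHA-256 hash of the query text."""
    sha = hashlib.sha256(query.encode("utf-8")).hexdigest()
    params = {
        "operationName": operation_name,
        # Compact JSON keeps the query string short.
        "variables": json.dumps(variables, separators=(",", ":")),
        "extensions": json.dumps(
            {"persistedQuery": {"version": 1, "sha256Hash": sha}},
            separators=(",", ":"),
        ),
    }
    return endpoint + "?" + urlencode(params)


# Hypothetical endpoint and query, for illustration only.
url = persisted_query_url(
    "https://example.com/graphql", "query Ping { ping }", {}, "Ping"
)
print(url)
```

Because the URL depends only on the hash and the variables, identical requests produce identical URLs, which is what makes them cacheable.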

As you can see, it’s just some metadata about the persistedQuery extension, the hash of the query, and variables to be embedded in the query.

Here’s another request from expedia.com, sent as a POST, but with the same extension:

This primarily optimizes website performance, but it creates several challenges for web scraping:

- GET requests are usually more prone to being blocked.

- The full query is hidden: we don’t know the query text, so if the website responds with a “Persisted query not found” error (asking us to send the query in full, not just the hash), we can’t send it.

- Once the website changes even slightly, clients start requesting a new query. Even though the old query might still work, the server will soon forget its ID/hash, and a request with this hash will never work again, since you can’t “remind” the server of the full query text.
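A scraper can at least detect this failure mode. The sketch below checks a response body for the error, assuming the server reports it the way Apollo-style servers commonly do (an `errors` entry with the message `PersistedQueryNotFound` or the code `PERSISTED_QUERY_NOT_FOUND`). Other servers may word it differently, and the function name is made up for this example.

```python
import json


def is_persisted_query_not_found(response_text: str) -> bool:
    """Return True if a GraphQL response reports an unknown persisted-query hash."""
    try:
        payload = json.loads(response_text)
    except ValueError:
        return False  # not JSON at all (e.g. an HTML block page)

    # Batched responses arrive as a list; normalise to a list of results.
    results = payload if isinstance(payload, list) else [payload]
    for result in results:
        if not isinstance(result, dict):
            continue
        for error in result.get("errors") or []:
            if not isinstance(error, dict):
                continue
            ext = error.get("extensions")
            code = ext.get("code") if isinstance(ext, dict) else None
            if (error.get("message") == "PersistedQueryNotFound"
                    or code == "PERSISTED_QUERY_NOT_FOUND"):
                return True
    return False
```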

For these reasons, you might find yourself needing to extract the whole query text. You could dig through the website's JavaScript and, if you’re lucky, find the query text there in full, but it is often constructed dynamically from multiple fragments.

We figured out a better way that does not touch the client-side JavaScript at all. Instead, we simulate the situation where the client uses a hash that the server does not know. To do that, we intercept the (valid) request sent by the browser in flight and change the hash to a bogus one before passing it on to the server.

A perfect tool exists for exactly this use case: mitmproxy, an open-source intercepting proxy that captures the traffic of your own devices, websites, or apps and lets you modify it with simple Python scripts.

Download mitmproxy, and prepare a Python script like this:

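    import json

    def request(flow):
        """mitmproxy hook, called for every intercepted request."""
        try:
            dat = json.loads(flow.request.text)
            # Replace the real hash with any bogus hex string.
            dat[0]["extensions"]["persistedQuery"]["sha256Hash"] = "0d9e"
            flow.request.text = json.dumps(dat)
        except (ValueError, TypeError, KeyError, IndexError):
            # Not a batched persisted-query JSON body; leave the request untouched.
            pass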

This defines a hook that mitmproxy will run on every request: it tries to load the request's JSON body, modifies the hash to an arbitrary value, and writes the updated JSON as a new body of the request.
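The script above expects a batched JSON body (it indexes `dat[0]`), so it skips single-operation bodies and GET requests that carry the hash in the query string. A possible variant that covers those cases is sketched below. It assumes the hash sits in an `extensions` query parameter for GET requests, and the helper names `spoil_hash` and `spoil_body` are made up for this example.

```python
import json

BOGUS_HASH = "0d9e"  # any bogus hex string, as in the script above


def spoil_hash(extensions) -> bool:
    """Overwrite the persisted-query hash in place; return True if one was found."""
    pq = extensions.get("persistedQuery") if isinstance(extensions, dict) else None
    if isinstance(pq, dict) and "sha256Hash" in pq:
        pq["sha256Hash"] = BOGUS_HASH
        return True
    return False


def spoil_body(text):
    """Return the rewritten JSON body, or None if it holds no persisted-query hash."""
    data = json.loads(text)
    operations = data if isinstance(data, list) else [data]
    changed = False
    for op in operations:
        if isinstance(op, dict) and spoil_hash(op.get("extensions")):
            changed = True
    return json.dumps(data) if changed else None


def request(flow):
    """mitmproxy hook covering both POST bodies and GET query strings."""
    try:
        if flow.request.method == "GET" and "extensions" in flow.request.query:
            ext = json.loads(flow.request.query["extensions"])
            if spoil_hash(ext):
                flow.request.query["extensions"] = json.dumps(ext)
        elif flow.request.method == "POST":
            new_body = spoil_body(flow.request.text)
            if new_body is not None:
                flow.request.text = new_body
    except (ValueError, TypeError):
        pass  # not JSON, or no body; leave the request untouched
```

Keeping the rewriting logic in plain functions makes it possible to test it without running the proxy, and unrelated JSON requests pass through byte-for-byte unchanged.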

We also need to route our browser's requests through mitmproxy. For this purpose, we are going to use a browser extension called FoxyProxy, which is available for both Firefox and Chrome.

Just add a route with these settings:

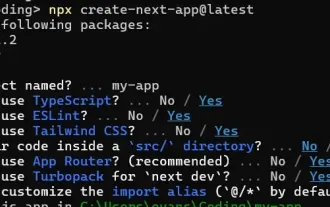

Now we can run mitmproxy with this script: mitmweb -s script.py

This will open a browser tab where you can watch all the intercepted requests in real-time.

If you open the relevant request in mitmweb and look at the request section, you will see that a garbage value has replaced the hash.

Now, if you visit Zillow, trigger that same request, and look at the response section, you will see that the client receives the PersistedQueryNotFound error.

Zillow's front end reacts by resending the whole query as a POST request.

We extract the query and hash directly from this POST request. To ensure that the Zillow server does not forget about this hash, we periodically run this POST request with the exact same query and hash. This will ensure that the scraper continues to work even when the server's cache is cleaned or reset or the website changes.
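The periodic refresh could look roughly like the sketch below. It assumes the captured POST uses the usual layout (full `query` text alongside the `persistedQuery` extension). The endpoint and function names are hypothetical, and a real site will most likely also need the headers and cookies captured from the browser.

```python
import hashlib
import json
import urllib.request


def hash_matches(query: str, sha256_hash: str) -> bool:
    """Check that the captured hash really is the SHA-256 of the captured query text."""
    return hashlib.sha256(query.encode("utf-8")).hexdigest() == sha256_hash


def build_registration_body(query, sha256_hash, variables, operation_name):
    """Rebuild the POST body that sends the full query together with its hash."""
    return {
        "operationName": operation_name,
        "query": query,
        "variables": variables,
        "extensions": {"persistedQuery": {"version": 1, "sha256Hash": sha256_hash}},
    }


def refresh_persisted_query(endpoint, body, timeout=30):
    """Send the full query so the server keeps (or re-learns) the hash."""
    req = urllib.request.Request(
        endpoint,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status
```

Calling `refresh_persisted_query` from a scheduled job (cron, or a simple loop with a sleep) is one way to run it periodically. Checking `hash_matches` first is a cheap sanity check, since a server that verifies hashes would reject a mismatched pair.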

Conclusion

Persisted queries are a powerful optimization tool for GraphQL APIs, enhancing website performance by minimizing payload sizes and enabling GET request caching. However, they also pose significant challenges for web scraping, primarily due to the reliance on server-stored hashes and the potential for those hashes to become invalid.

Using mitmproxy to intercept and manipulate GraphQL requests offers an efficient way to reveal the full query text without delving into complex client-side JavaScript. By forcing the server to respond with a PersistedQueryNotFound error, we can capture the full query payload and use it for scraping. Periodically running the extracted query keeps the scraper functional, even when server-side cache resets occur or the website evolves.
