Building a Web Crawler in Node.js to Discover AI-Powered JavaScript Repos on GitHub

GitHub is a treasure trove of innovative projects, especially in the ever-evolving world of artificial intelligence. But sifting through the countless repositories to find those that combine AI and JavaScript? That’s like finding gems in a vast sea of code. Enter our Node.js web crawler—a script that automates the search, extracting repository details like name, URL, and description.

In this tutorial, we’ll build a crawler that taps into GitHub, hunting down repositories that work with AI and JavaScript. Let’s dive into the code and start mining those gems.

Part 1: Setting Up the Project

Initialize the Node.js Project

Begin by creating a new directory for your project and initializing it with npm:

mkdir github-ai-crawler
cd github-ai-crawler
npm init -y

Next, install the necessary dependencies:

npm install axios cheerio

- axios: For making HTTP requests to GitHub.

- cheerio: For parsing and manipulating HTML with a jQuery-like API.

Part 2: Understanding GitHub’s Search

GitHub provides a powerful search feature accessible via URL queries. For example, you can search for JavaScript repositories related to AI with this query:

https://github.com/search?q=ai+language:javascript&type=repositories

Our crawler will mimic this search, parse the results, and extract relevant details.
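As a minimal sketch, the same search URL can be assembled from its parts with Node's built-in URL and URLSearchParams classes instead of being written by hand. The helper name buildSearchUrl and its parameters are illustrative assumptions, not part of the original script. URLSearchParams percent-encodes the colon in the query, which is an equivalent encoding of the same search.

```javascript
// Hypothetical helper (not from the original article): builds a GitHub
// repository search URL from a keyword and a language qualifier.
function buildSearchUrl(keyword, language) {
  const url = new URL('https://github.com/search');
  url.search = new URLSearchParams({
    q: `${keyword} language:${language}`, // a space is serialized as "+"
    type: 'repositories',
  }).toString();
  return url.toString();
}

const exampleSearchUrl = buildSearchUrl('ai', 'javascript');
console.log(exampleSearchUrl);
```

Building the URL this way keeps the keyword and language as separate inputs, so the crawler can be pointed at a different topic without editing a long string.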

Part 3: Writing the Crawler Script

Create a file named crawler.js in your project directory and start coding.

Step 1: Import Dependencies

const axios = require('axios');
const cheerio = require('cheerio');

We’re using axios to fetch GitHub’s search results and cheerio to parse the HTML.

Step 2: Define the Search URL

const SEARCH_URL = 'https://github.com/search?q=ai+language:javascript&type=repositories';

This URL targets repositories related to AI and written in JavaScript.

Step 3: Fetch and Parse the HTML

const fetchRepositories = async () => {
    try {
        // Fetch the search results page
        const { data } = await axios.get(SEARCH_URL);
        const $ = cheerio.load(data); // Load the HTML into cheerio

        // Extract repository details
        const repositories = [];
        $('.repo-list-item').each((_, element) => {
            // Use only the first link in the item (assumed to be the repository link);
            // .find('a').text() alone would concatenate the text of every link
            const link = $(element).find('a').first();
            const repoName = link.text().trim();
            const repoUrl = `https://github.com${link.attr('href')}`;
            const repoDescription = $(element).find('.mb-1').text().trim();

            repositories.push({
                name: repoName,
                url: repoUrl,
                description: repoDescription,
            });
        });

        return repositories;
    } catch (error) {
        console.error('Error fetching repositories:', error.message);
        return [];
    }
};

Here’s what’s happening:

- Fetching HTML: The axios.get method retrieves the search results page.

- Parsing with Cheerio: We use Cheerio to navigate the DOM, targeting elements with classes like .repo-list-item. These class names depend on GitHub's markup and will need updating if the page structure changes.

- Extracting Details: For each repository, we extract the name, URL, and description.
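One detail in the extraction step is worth a small sketch: if a result item has no link, attr('href') returns undefined and the template string produces a URL ending in "undefined". The helper below is a hypothetical addition (the name toAbsoluteUrl is an assumption, not part of the original script) that resolves an href against the GitHub base URL with Node's built-in URL class and returns null when there is nothing usable.

```javascript
const GITHUB_BASE_URL = 'https://github.com';

// Hypothetical helper: turns a relative href such as "/owner/repo" into an
// absolute URL, or returns null when the href is missing or malformed.
function toAbsoluteUrl(href) {
  if (typeof href !== 'string' || href.trim() === '') {
    return null;
  }
  try {
    return new URL(href.trim(), GITHUB_BASE_URL).toString();
  } catch (error) {
    return null; // the URL constructor throws on input it cannot parse
  }
}

// "/octocat/hello-world" is a made-up path used only for illustration.
console.log(toAbsoluteUrl('/octocat/hello-world'));
console.log(toAbsoluteUrl(undefined));
```

Inside the crawler, the result of attr('href') could be passed through a helper like this, and items that yield null could be skipped rather than pushed into the results.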

Step 4: Display the Results

Finally, call the function and log the results:

(async () => {
    const repositories = await fetchRepositories();
    console.log('AI-Powered JavaScript Repositories:');
    console.log(repositories);
})();

Part 4: Running the Crawler

Save your script and run it with Node.js:

node crawler.js

You’ll see a list of AI-related JavaScript repositories, each with its name, URL, and description, neatly displayed in your terminal.

Part 5: Enhancing the Crawler

Want to take it further? Here are some ideas:

- Pagination: Add support for fetching multiple pages of search results by modifying the URL with &p=2, &p=3, etc.

- Filtering: Filter repositories by stars or forks to prioritize popular projects.

- Saving Data: Save the results to a file or database for further analysis.
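The first two ideas can be sketched with plain JavaScript. Both helpers below are illustrative assumptions rather than part of the original script: buildPageUrls appends the p parameter for each page, and filterByStars assumes each repository object carries a numeric stars field, which the crawler shown earlier does not extract.

```javascript
// Hypothetical helper: returns the search URL for pages 1..pageCount
// by setting the "p" query parameter on a copy of the base URL.
function buildPageUrls(searchUrl, pageCount) {
  const urls = [];
  for (let page = 1; page <= pageCount; page += 1) {
    const url = new URL(searchUrl);
    url.searchParams.set('p', String(page));
    urls.push(url.toString());
  }
  return urls;
}

// Hypothetical helper: keeps repositories with at least minStars stars,
// most-starred first. Entries without a numeric `stars` field are dropped.
function filterByStars(repositories, minStars) {
  return repositories
    .filter((repo) => Number.isFinite(repo.stars) && repo.stars >= minStars)
    .sort((a, b) => b.stars - a.stars);
}

const pageUrls = buildPageUrls(
  'https://github.com/search?q=ai+language:javascript&type=repositories',
  3
);
console.log(pageUrls);

// Made-up sample data, used only to show the shape filterByStars expects.
const sampleRepositories = [
  { name: 'alpha', stars: 120 },
  { name: 'beta', stars: 15 },
  { name: 'gamma', stars: 900 },
];
console.log(filterByStars(sampleRepositories, 100));
```

When looping over several pages, it is sensible to pause between requests so the crawler does not hit GitHub with a burst of traffic.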

Example for saving to a JSON file:

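const fs = require('fs');

// Write the results to a JSON file (the default file name is illustrative)
const saveToFile = (repositories, filePath = 'repositories.json') => {
    fs.writeFileSync(filePath, JSON.stringify(repositories, null, 2));
};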

The Beauty of Automation

With this crawler, you’ve automated the tedious task of finding relevant repositories on GitHub. No more manual browsing or endless clicking—your script does the hard work, presenting the results in seconds.


The above is the detailed content of Building a Web Crawler in Node.js to Discover AI-Powered JavaScript Repos on GitHub. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1662

1662

14

14

1419

1419

52

52

1312

1312

25

25

1262

1262

29

29

1235

1235

24

24

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

JavaScript is the cornerstone of modern web development, and its main functions include event-driven programming, dynamic content generation and asynchronous programming. 1) Event-driven programming allows web pages to change dynamically according to user operations. 2) Dynamic content generation allows page content to be adjusted according to conditions. 3) Asynchronous programming ensures that the user interface is not blocked. JavaScript is widely used in web interaction, single-page application and server-side development, greatly improving the flexibility of user experience and cross-platform development.

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

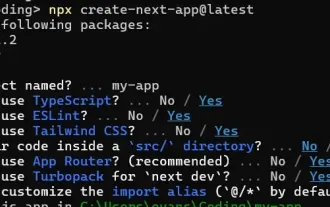

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing