Web scraping and analysing foreign languages data

Recently I decided that I would like to do a quick web scraping and data analysis project. Because my brain likes to come up with big ideas that would take lots of time, I decided to challenge myself to come up with something simple that could viably be done in a few hours.

Here's what I came up with:

As my undergrad degree was originally in Foreign Languages (French and Spanish), I thought it'd be fun to web scrape some language-related data. I wanted to use the BeautifulSoup library, which can parse static html but isn't able to deal with dynamic web pages that need onclick events to reveal the whole dataset (i.e. clicking through to the next page of data if the page is paginated).

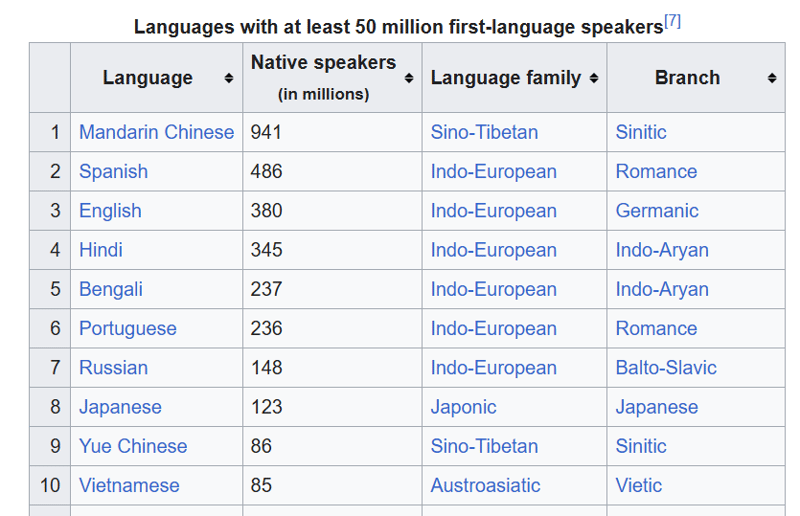

I decided on the Wikipedia page listing languages by number of native speakers.

I wanted to do the following:

- Get the html for the page and output to a .txt file

- Use Beautiful Soup to parse the html file and extract the table data

- Write the table to a .csv file

- Come up with 10 questions I wanted to answer for this dataset using data analysis

- Answer those questions using pandas and a Jupyter Notebook

I split the project into these steps for separation of concerns, but I also wanted to avoid making multiple unnecessary requests to Wikipedia by rerunning the script. Saving the html file and then working with it in a separate script means that you don't need to keep re-requesting the data, as you already have it.

Project Link

The link to my github repo for this project is: https://github.com/gabrielrowan/Foreign-Languages-Analysis

Getting the html

First, I retrieved the html and wrote it to a file. After working with C# and C++, it's always a novelty to me how short and concise Python code is.

```python
import requests

url = 'https://en.wikipedia.org/wiki/List_of_languages_by_number_of_native_speakers'

# download the page once and save the raw html for later parsing
response = requests.get(url)
html = response.text

with open("languages_html.txt", "w", encoding="utf-8") as file:
    file.write(html)
```
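
The idea of not re-requesting the page can also be built into the script itself. The following is a minimal sketch rather than the code from the repo: `load_html` and `fetch_with_requests` are hypothetical helper names, and the 30 second timeout is an arbitrary choice. It only downloads the page when the saved file doesn't exist yet.

```python
from pathlib import Path


def fetch_with_requests(url: str) -> str:
    """Download a page and return its html, raising on HTTP errors."""
    import requests  # imported here so the caching logic has no hard dependency

    response = requests.get(url, timeout=30)
    response.raise_for_status()
    return response.text


def load_html(url: str, cache_path: Path, fetch=fetch_with_requests) -> str:
    """Return the saved html if present; otherwise fetch it once and save it."""
    if cache_path.exists():
        return cache_path.read_text(encoding="utf-8")
    html = fetch(url)
    cache_path.write_text(html, encoding="utf-8")
    return html
```

Calling `load_html(url, Path("languages_html.txt"))` repeatedly would then hit Wikipedia at most once, as long as the file stays in place.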

Parsing the html

To parse the html with Beautiful Soup and select the table I was interested in, I did:

```python
from bs4 import BeautifulSoup

with open("languages_html.txt", "r", encoding="utf-8") as file:
    soup = BeautifulSoup(file, 'html.parser')

# get table
top_languages_table = soup.select_one('.wikitable.sortable.static-row-numbers')
```
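
To show what that selector is doing, here is a small sketch on a made-up html snippet (the two tables below are hypothetical, not Wikipedia's markup). A selector written as `.a.b.c` with no spaces matches a single element that carries all three classes, and `select_one` returns `None` if nothing matches, so it's worth checking before using the result.

```python
from bs4 import BeautifulSoup

# A tiny hypothetical page with two tables; only the second has all three classes.
sample_html = """
<table class="wikitable"><tr><th>Other</th></tr></table>
<table class="wikitable sortable static-row-numbers">
  <tr><th>Language</th></tr>
  <tr><td>Spanish</td></tr>
</table>
"""

soup = BeautifulSoup(sample_html, "html.parser")

# ".wikitable.sortable.static-row-numbers" requires all three classes on one element.
table = soup.select_one(".wikitable.sortable.static-row-numbers")

# select_one returns None when nothing matches, so fail early with a clear message.
if table is None:
    raise ValueError("Expected table not found; the page markup may have changed")

first_header = table.find("th").text.strip()
```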

Then, I got the table header text to get the column names for my pandas dataframe:

```python
# get column names
columns = top_languages_table.find_all("th")
column_titles = [column.text.strip() for column in columns]
```

After that, I created the dataframe, set the column names, retrieved each table row and wrote each row to the dataframe:

```python
import pandas as pd

# get table rows
table_data = top_languages_table.find_all("tr")

# define dataframe
df = pd.DataFrame(columns=column_titles)

# get table data, skipping the header row
for row in table_data[1:]:
    row_data = row.find_all('td')
    # use a separate name for each cell so the loop variable isn't shadowed
    row_data_txt = [cell.text.strip() for cell in row_data]
    print(row_data_txt)
    df.loc[len(df)] = row_data_txt
```

Note - without using strip() there were \n characters in the text which weren't needed.
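
Here is a self-contained sketch of the same idea on a made-up two-row table (hypothetical data, not the Wikipedia table). It shows the trailing \n that strip() removes, and as an alternative to appending one row at a time it collects all the rows in a list and builds the dataframe in one go.

```python
import pandas as pd
from bs4 import BeautifulSoup

# Hypothetical miniature table; each cell ends with a newline, as scraped cells often do.
sample_html = (
    "<table>"
    "<tr><th>Language\n</th><th>Branch\n</th></tr>"
    "<tr><td>Spanish\n</td><td>Romance\n</td></tr>"
    "<tr><td>German\n</td><td>Germanic\n</td></tr>"
    "</table>"
)

table = BeautifulSoup(sample_html, "html.parser").find("table")
rows = table.find_all("tr")

# The raw cell text still carries the newline before strip() is applied.
raw_cell = rows[1].find("td").text

column_titles = [th.text.strip() for th in rows[0].find_all("th")]

# Collect every data row first, then create the dataframe once.
records = [[td.text.strip() for td in row.find_all("td")] for row in rows[1:]]
df = pd.DataFrame(records, columns=column_titles)
```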

Last, I wrote the dataframe to a .csv.
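
Writing the file is a single pandas call. A minimal sketch with placeholder rows follows; it writes to an in-memory buffer so it runs anywhere, but passing a file name such as "languages.csv" (a hypothetical name) to `to_csv` works the same way.

```python
import io

import pandas as pd

# Placeholder rows standing in for the scraped table.
df = pd.DataFrame({"Language": ["Spanish", "French"], "Branch": ["Romance", "Romance"]})

# index=False leaves out pandas' row index so the file holds only the table columns.
buffer = io.StringIO()
df.to_csv(buffer, index=False)

# Read it back to confirm the round trip keeps the same columns and values.
buffer.seek(0)
round_trip = pd.read_csv(buffer)
```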

Analysing the data

In advance, I'd come up with these questions that I wanted to answer from the data:

- What is the total number of native speakers across all languages in the dataset?

- How many different types of language family are there?

- What is the total number of native speakers per language family?

- What are the top 3 most common language families?

- Create a pie chart showing the top 3 most common language families

- What is the most commonly occurring language family - branch pair?

- Which languages are Sino-Tibetan in the table?

- Display a bar chart of the native speakers of all Romance and Germanic languages

- What percentage of total native speakers is represented by the top 5 languages?

- Which branch has the most native speakers, and which has the least?
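
Several of these questions need the speaker counts as numbers, and scraped cells come through as text. The sketch below shows one way to handle that. It assumes columns named "Language family" and "Native Speakers" and uses made-up placeholder values, not the scraped figures.

```python
import pandas as pd

# Illustrative placeholder rows, NOT the scraped figures.
df = pd.DataFrame(
    {
        "Language": ["A", "B", "C", "D", "E", "F"],
        "Language family": ["X", "X", "Y", "Y", "Z", "Z"],
        "Native Speakers": ["1,000", "500", "250", "125", "75", "50"],
    }
)

# Strip thousands separators, then convert the text to numbers.
df["Native Speakers"] = pd.to_numeric(
    df["Native Speakers"].str.replace(",", "", regex=False)
)

# Total native speakers across all rows.
total_speakers = df["Native Speakers"].sum()

# Number of distinct language families.
family_count = df["Language family"].nunique()

# Share of the total represented by the five largest languages, as a percentage.
top_five_share = df["Native Speakers"].nlargest(5).sum() / total_speakers * 100
```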

The Results

While I won't go into the code to answer all of these questions, I will go into the two that involved charts.

Display a bar chart of the native speakers of all Romance and Germanic languages

First, I created a dataframe that only included rows where the branch name was 'Romance' or 'Germanic'.
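
A filter like this can be sketched with `isin`. This is an illustration with placeholder rows rather than the notebook's code, and "Branch" is an assumed column name.

```python
import pandas as pd

# Illustrative placeholder rows; "Branch" is an assumed column name.
df = pd.DataFrame(
    {
        "Language": ["Spanish", "German", "Mandarin Chinese", "French"],
        "Branch": ["Romance", "Germanic", "Sinitic", "Romance"],
    }
)

# isin builds a boolean mask that is True where the branch is in the list.
romance_germanic = df[df["Branch"].isin(["Romance", "Germanic"])]
```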


Then I specified the x axis, y axis and the colour of the bars that I wanted, and plotted the bar chart.
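
As a rough sketch of that step (not the notebook's code), pandas can draw the chart directly from the dataframe. The column names, the colour "teal" and the values below are all assumptions for illustration.

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; this line isn't needed inside a Jupyter Notebook

import pandas as pd

# Illustrative placeholder values, not the scraped figures.
romance_germanic = pd.DataFrame(
    {"Language": ["Spanish", "English", "French"], "Native Speakers": [3.0, 2.0, 1.0]}
)

# x and y name the columns to plot; color sets the colour of the bars.
ax = romance_germanic.plot.bar(
    x="Language", y="Native Speakers", color="teal", legend=False
)
ax.set_ylabel("Native Speakers")
```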

Create a pie chart showing the top 3 most common language families

To create the pie chart, I retrieved the top 3 most common language families and put these in a dataframe.

To do this, I got the total sum of native speakers per language family, sorted the totals in descending order, and extracted the top 3 entries.
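
Those three steps map onto a short pandas chain. The sketch below uses assumed column names and placeholder numbers rather than the real data.

```python
import pandas as pd

# Illustrative placeholder rows, not the scraped figures.
df = pd.DataFrame(
    {
        "Language family": ["X", "X", "Y", "Z", "W"],
        "Native Speakers": [100, 50, 120, 30, 10],
    }
)

top_families = (
    df.groupby("Language family")["Native Speakers"]
    .sum()                          # total speakers per family
    .sort_values(ascending=False)   # largest totals first
    .head(3)                        # keep the top 3 families
    .reset_index()                  # turn the result back into a dataframe
)
```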


Then I put the data in a pie chart, specifying the y axis of 'Native Speakers' and a legend, which creates colour coded labels for each language family shown in the chart.
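
A pie chart along these lines can be sketched with pandas' built-in plotting. Again this is an illustration under assumptions: the column names and values are placeholders, and setting the family names as the index is one way to get them used as the wedge and legend labels.

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; this line isn't needed inside a Jupyter Notebook

import pandas as pd

# Illustrative placeholder totals, not the scraped figures.
top_families = pd.DataFrame(
    {"Language family": ["X", "Y", "Z"], "Native Speakers": [150, 120, 30]}
).set_index("Language family")

# y picks the column that sizes the wedges; legend=True adds colour coded labels.
ax = top_families.plot.pie(y="Native Speakers", legend=True)
ax.set_ylabel("")  # hide the default axis label next to the pie
```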


The code and responses for the rest of the questions can be found in the notebook in the GitHub repo linked above. I used markdown in the notebook to write the questions and their answers.

Next Time:

For my next iteration of a web scraping & data analysis project, I'd like to make things more complicated with:

- Web scraping a dynamic page where more data is revealed on click/scroll

- Analysing a much bigger dataset, potentially one that needs some data cleaning work before analysis

Final thoughts

Even though it was a quick one, I enjoyed doing this project. It reminded me how useful short, manageable projects can be for getting the practice reps in. Plus, extracting data from the internet and creating charts from it, even with a small dataset, is fun.
