How Can I Efficiently Select Random Rows from a Large PostgreSQL Table?

Selecting random rows from a very large PostgreSQL table can be expensive. This article looks at two common approaches, explains where they fall short on large tables, and then presents an index-based alternative along with a few related options.

Method 1: Filter by random value

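SELECT * FROM big WHERE random() < 0.01;

This method evaluates random() once per row and keeps each row with a probability of about 1%. The number of rows returned therefore varies from run to run, and the query requires a full table scan, which is slow for large tables. (The table is called big here to match the optimized query further down; table itself is a reserved word and cannot be used as an unquoted table name.)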

Method 2: Sort by random values and limit the results

SELECT * FROM big ORDER BY random() LIMIT 1000;

This method assigns a random sort key to every row and returns the first n rows, so it yields exactly n rows as long as the table has at least that many. It does not avoid the full table scan, though: every row still has to be read and ranked, so on a large table it is generally no faster than the first method and is often slower.

Optimization solutions for large data sets

For tables with a very large number of rows (for example, 500 million), the following approach avoids scanning the whole table. The constants in the query are placeholders and must be adjusted to the actual ID range of the table:

    WITH params AS (
       SELECT 1       AS min_id           -- minimum ID (less than or equal to the current minimum ID)
            , 5100000 AS id_span          -- rounded. (max_id - min_id + buffer)
       )
    SELECT *
    FROM  (
       SELECT p.min_id + trunc(random() * p.id_span)::integer AS id
       FROM   params p
            , generate_series(1, 1100) g  -- 1000 + buffer
       GROUP  BY 1                        -- remove duplicates
       ) r
    JOIN   big USING (id)
    LIMIT  1000;                          -- trim surplus rows

This query uses the index on the ID column for efficient retrieval. It generates slightly more random numbers than needed within the ID space, removes duplicates with GROUP BY, and joins the result to the main table. Generated IDs that fall into gaps simply find no match in the join, which is why a buffer is added, and LIMIT then trims the result to the required number of rows.

Other considerations

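Gaps in the ID space:

This approach depends on the ID column having relatively few gaps. Every generated ID that falls into a gap is lost in the join, so the more gaps there are, the larger the buffer in generate_series() has to be to still end up with enough rows.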
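
To judge how large the buffer needs to be, it can help to compare the number of rows with the width of the ID range. The queries below are a minimal sketch, assuming the table is named big with an integer id column as in the query above; the column aliases (row_count, id_span, gap_ratio, estimated_rows) are illustrative names only.

```sql
-- Exact figures. This reads the whole table, so run it rarely on a large table.
SELECT count(*)              AS row_count,
       min(id)               AS min_id,
       max(id)               AS max_id,
       max(id) - min(id) + 1 AS id_span,
       -- share of the ID range that is not backed by a row
       round(1 - count(*)::numeric / (max(id) - min(id) + 1), 4) AS gap_ratio
FROM   big;

-- Cheap alternative for the row count: the planner's estimate,
-- which is only as fresh as the last ANALYZE or autovacuum run.
SELECT reltuples::bigint AS estimated_rows
FROM   pg_class
WHERE  oid = 'big'::regclass;
```

A gap_ratio close to 0 means a small buffer is enough; a larger value suggests generating proportionally more candidate IDs.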

Materialized view:

If you need to access the same random sample repeatedly, consider storing it in a materialized view. The sample is then computed once, and you refresh the view whenever a new sample is needed.
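
As a rough sketch of this idea, the random pick can be wrapped in a materialized view. The view name mv_random_pick is hypothetical, and the constants 1, 5100000 and 1100 are the same placeholder values used in the query above (minimum ID, ID span, and number of candidates including the buffer).

```sql
-- Store one random sample of up to 1000 rows from big.
CREATE MATERIALIZED VIEW mv_random_pick AS
SELECT b.*
FROM  (
   SELECT 1 + trunc(random() * 5100000)::integer AS id
   FROM   generate_series(1, 1100)   -- 1000 + buffer
   GROUP  BY 1                       -- remove duplicates
   ) r
JOIN   big b USING (id)
LIMIT  1000;

-- Reading the stored sample is cheap and returns the same rows every time.
SELECT * FROM mv_random_pick;

-- Draw a fresh sample by re-running the underlying query.
REFRESH MATERIALIZED VIEW mv_random_pick;
```

This trades freshness for speed: the sample stays fixed between refreshes, which is usually what you want when several queries should see the same random subset.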

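TABLESAMPLE SYSTEM (PostgreSQL 9.5 and later):

The TABLESAMPLE clause, introduced in PostgreSQL 9.5, returns approximately a specified percentage of a table's rows. The SYSTEM method samples whole data blocks rather than individual rows, which makes it very fast, but the result is less evenly random and the number of rows returned is only approximate.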
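
The statements below illustrate the syntax, again assuming a table named big. The percentage 0.02 is an arbitrary example value (about 1000 rows for a table of roughly 5 million rows), and the last statement assumes the optional tsm_system_rows contrib extension is available on the server.

```sql
-- Block-level sampling: the argument is a percentage between 0 and 100.
SELECT * FROM big TABLESAMPLE SYSTEM (0.02);

-- Row-level sampling: more evenly random, but it scans the whole table.
SELECT * FROM big TABLESAMPLE BERNOULLI (0.02);

-- A seed makes the sample repeatable as long as the table is unchanged.
SELECT * FROM big TABLESAMPLE SYSTEM (0.02) REPEATABLE (42);

-- Ask for a row count instead of a percentage (contrib extension).
CREATE EXTENSION IF NOT EXISTS tsm_system_rows;
SELECT * FROM big TABLESAMPLE SYSTEM_ROWS (1000);
```

Because SYSTEM and SYSTEM_ROWS pick whole blocks, rows that are stored close together tend to appear together in the sample; BERNOULLI avoids this at the cost of a full scan.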

The above is the detailed content of How Can I Efficiently Select Random Rows from a Large PostgreSQL Table?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

When might a full table scan be faster than using an index in MySQL?

Apr 09, 2025 am 12:05 AM

When might a full table scan be faster than using an index in MySQL?

Apr 09, 2025 am 12:05 AM

Full table scanning may be faster in MySQL than using indexes. Specific cases include: 1) the data volume is small; 2) when the query returns a large amount of data; 3) when the index column is not highly selective; 4) when the complex query. By analyzing query plans, optimizing indexes, avoiding over-index and regularly maintaining tables, you can make the best choices in practical applications.

Can I install mysql on Windows 7

Apr 08, 2025 pm 03:21 PM

Can I install mysql on Windows 7

Apr 08, 2025 pm 03:21 PM

Yes, MySQL can be installed on Windows 7, and although Microsoft has stopped supporting Windows 7, MySQL is still compatible with it. However, the following points should be noted during the installation process: Download the MySQL installer for Windows. Select the appropriate version of MySQL (community or enterprise). Select the appropriate installation directory and character set during the installation process. Set the root user password and keep it properly. Connect to the database for testing. Note the compatibility and security issues on Windows 7, and it is recommended to upgrade to a supported operating system.

Explain InnoDB Full-Text Search capabilities.

Apr 02, 2025 pm 06:09 PM

Explain InnoDB Full-Text Search capabilities.

Apr 02, 2025 pm 06:09 PM

InnoDB's full-text search capabilities are very powerful, which can significantly improve database query efficiency and ability to process large amounts of text data. 1) InnoDB implements full-text search through inverted indexing, supporting basic and advanced search queries. 2) Use MATCH and AGAINST keywords to search, support Boolean mode and phrase search. 3) Optimization methods include using word segmentation technology, periodic rebuilding of indexes and adjusting cache size to improve performance and accuracy.

Difference between clustered index and non-clustered index (secondary index) in InnoDB.

Apr 02, 2025 pm 06:25 PM

Difference between clustered index and non-clustered index (secondary index) in InnoDB.

Apr 02, 2025 pm 06:25 PM

The difference between clustered index and non-clustered index is: 1. Clustered index stores data rows in the index structure, which is suitable for querying by primary key and range. 2. The non-clustered index stores index key values and pointers to data rows, and is suitable for non-primary key column queries.

MySQL: Simple Concepts for Easy Learning

Apr 10, 2025 am 09:29 AM

MySQL: Simple Concepts for Easy Learning

Apr 10, 2025 am 09:29 AM

MySQL is an open source relational database management system. 1) Create database and tables: Use the CREATEDATABASE and CREATETABLE commands. 2) Basic operations: INSERT, UPDATE, DELETE and SELECT. 3) Advanced operations: JOIN, subquery and transaction processing. 4) Debugging skills: Check syntax, data type and permissions. 5) Optimization suggestions: Use indexes, avoid SELECT* and use transactions.

Can mysql and mariadb coexist

Apr 08, 2025 pm 02:27 PM

Can mysql and mariadb coexist

Apr 08, 2025 pm 02:27 PM

MySQL and MariaDB can coexist, but need to be configured with caution. The key is to allocate different port numbers and data directories to each database, and adjust parameters such as memory allocation and cache size. Connection pooling, application configuration, and version differences also need to be considered and need to be carefully tested and planned to avoid pitfalls. Running two databases simultaneously can cause performance problems in situations where resources are limited.

The relationship between mysql user and database

Apr 08, 2025 pm 07:15 PM

The relationship between mysql user and database

Apr 08, 2025 pm 07:15 PM

In MySQL database, the relationship between the user and the database is defined by permissions and tables. The user has a username and password to access the database. Permissions are granted through the GRANT command, while the table is created by the CREATE TABLE command. To establish a relationship between a user and a database, you need to create a database, create a user, and then grant permissions.

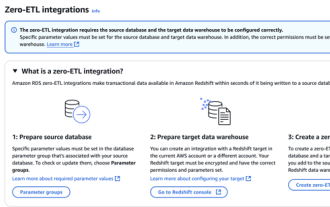

RDS MySQL integration with Redshift zero ETL

Apr 08, 2025 pm 07:06 PM

RDS MySQL integration with Redshift zero ETL

Apr 08, 2025 pm 07:06 PM

Data Integration Simplification: AmazonRDSMySQL and Redshift's zero ETL integration Efficient data integration is at the heart of a data-driven organization. Traditional ETL (extract, convert, load) processes are complex and time-consuming, especially when integrating databases (such as AmazonRDSMySQL) with data warehouses (such as Redshift). However, AWS provides zero ETL integration solutions that have completely changed this situation, providing a simplified, near-real-time solution for data migration from RDSMySQL to Redshift. This article will dive into RDSMySQL zero ETL integration with Redshift, explaining how it works and the advantages it brings to data engineers and developers.