Prompting Vision Language Models

Vision Language Models (VLMs): A Deep Dive into Multimodal Prompting

VLMs represent a significant leap forward in multimodal data processing, seamlessly integrating text and visual inputs. Unlike LLMs, which operate solely on text, VLMs handle both modalities, enabling tasks requiring visual and textual understanding. This opens doors to applications like Visual Question Answering (VQA) and Image Captioning. This post explores effective prompting techniques for VLMs to harness their visual comprehension capabilities.

Table of Contents:

- Introduction
- Prompting VLMs
  - Zero-Shot Prompting
  - Few-Shot Prompting
  - Chain of Thought Prompting
  - Object Detection Guided Prompting
- Conclusion
- References

Introduction:

VLMs build upon LLMs, adding visual processing as an extra modality. Training typically involves aligning image and text representations within a shared vector space, often using cross-attention mechanisms [1, 2, 3, 4]. This allows for convenient text-based interaction and querying of images. VLMs excel at bridging the gap between textual and visual data, handling tasks beyond the scope of text-only models. For a deeper understanding of VLM architecture, refer to Sebastian Raschka's article on multimodal LLMs.

Prompting VLMs:

As with LLMs, VLMs can be prompted with a range of techniques, now with images included in the prompt. This post covers zero-shot, few-shot, and chain-of-thought prompting, along with object detection integration. The experiments use OpenAI's GPT-4o-mini as the VLM.

Code and resources are available on GitHub [link omitted].

Data Used:

Five permissively licensed images from Unsplash [links omitted] were used, with captions derived from the image URLs.

Zero-Shot Prompting:

Zero-shot prompting provides only a task description and the image(s). The VLM sees no examples and relies solely on that description to generate its output, which makes this the minimal-information approach. Its benefit is that a well-crafted prompt can yield decent results without task-specific training data, unlike earlier methods that required large datasets for image classification or captioning.

OpenAI's API accepts images as Base64-encoded data URLs [2]. The request has the same structure as a text-only LLM prompt, with the Base64-encoded image added to the user message:

    {
        "role": "system",
        "content": "You are a helpful assistant that can analyze images and provide captions."
    },
    {
        "role": "user",
        "content": [
            {
                "type": "text",
                "text": "Please analyze the following image:"
            },
            {
                "type": "image_url",
                "image_url": {
                    "url": "data:image/jpeg;base64,{base64_image}",
                    "detail": "auto"
                }
            }
        ]
    }

Here {base64_image} is a placeholder for the encoded image data, and the "detail" field accepts "low", "high", or "auto". Multiple images can be included in the same user message. Helper functions for Base64 encoding, prompt construction, and parallel API calls are implemented in the accompanying code. The results show that zero-shot prompting produces detailed captions.
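The encoding and prompt-construction helpers mentioned above are not reproduced in this post, so the following is only a minimal sketch of what they might look like. The function names `encode_image` and `build_zero_shot_messages`, and the `mime_type` parameter, are hypothetical and not taken from the original code.

```python
import base64


def encode_image(image_bytes: bytes) -> str:
    """Return the Base64 text form of raw image bytes."""
    return base64.b64encode(image_bytes).decode("utf-8")


def build_zero_shot_messages(image_bytes_list, detail="auto", mime_type="image/jpeg"):
    """Build a chat `messages` list: one system turn, then one user turn that
    holds the task text followed by one image part per input image.

    Hypothetical helper; the prompt strings are copied from the request above.
    """
    content = [{"type": "text", "text": "Please analyze the following image:"}]
    for image_bytes in image_bytes_list:
        content.append(
            {
                "type": "image_url",
                "image_url": {
                    # Data URL: MIME type, then the Base64 payload.
                    "url": f"data:{mime_type};base64,{encode_image(image_bytes)}",
                    "detail": detail,
                },
            }
        )
    return [
        {
            "role": "system",
            "content": "You are a helpful assistant that can analyze images and provide captions.",
        },
        {"role": "user", "content": content},
    ]
```

With the official OpenAI Python client, the returned list would typically be passed as the `messages` argument of `client.chat.completions.create(model="gpt-4o-mini", messages=...)`. The image bytes can come from `open(path, "rb").read()`.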

Few-Shot Prompting:

Few-shot prompting supplies examples of the task as context, which helps the model infer the expected output. With three example images in the prompt, the generated captions are more concise than those from zero-shot prompting. This highlights how the choice of examples shapes the style and level of detail of the VLM's output.
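One plausible way to lay out a few-shot request is sketched below. It assumes each example is sent as an earlier user turn (the image) followed by an assistant turn (its caption); the original code may instead pack all examples into a single user message. The names `image_part` and `build_few_shot_messages`, and the task wording, are hypothetical.

```python
def image_part(base64_image, detail="auto"):
    """Wrap Base64 image text as an image_url content part."""
    return {
        "type": "image_url",
        "image_url": {
            "url": f"data:image/jpeg;base64,{base64_image}",
            "detail": detail,
        },
    }


def build_few_shot_messages(examples, query_image_b64):
    """Build messages from (base64_image, caption) example pairs.

    Each example becomes a user turn plus an assistant turn, so the model
    sees the caption style it is expected to imitate for the final image.
    """
    task = "Please write a caption for the following image:"
    messages = [
        {
            "role": "system",
            "content": "You are a helpful assistant that can analyze images and provide captions.",
        }
    ]
    for example_b64, caption in examples:
        messages.append(
            {"role": "user", "content": [{"type": "text", "text": task}, image_part(example_b64)]}
        )
        messages.append({"role": "assistant", "content": caption})
    # The query image comes last and has no answer attached.
    messages.append(
        {"role": "user", "content": [{"type": "text", "text": task}, image_part(query_image_b64)]}
    )
    return messages
```

Because the example captions act as style templates, short captions in `examples` tend to pull the output toward short captions, which matches the effect described above.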

Chain of Thought Prompting:

Chain of Thought (CoT) prompting [9] breaks a complex problem into simpler intermediate steps. Applied to VLMs, it lets the model reason over both the image and the text. CoT traces are created with OpenAI's o1 model and used as few-shot examples. The results show that the VLM reasons through intermediate steps before generating the final caption.
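As a rough illustration, the sketch below feeds reasoning traces in as few-shot examples and then pulls the final caption out of the model's reply. The instruction text, the 'Caption:' marker convention, and the function names are all assumptions made for this example. The traces used in the original experiments are not shown in this post.

```python
COT_TASK = (
    "Reason step by step about the image: name the main objects, then the "
    "setting, then how they relate. End with one line starting with 'Caption:'."
)


def image_turn(base64_image, detail="auto"):
    """Build a user turn holding a short request and one image."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": "Please caption this image."},
            {
                "type": "image_url",
                "image_url": {
                    "url": f"data:image/jpeg;base64,{base64_image}",
                    "detail": detail,
                },
            },
        ],
    }


def build_cot_messages(trace_examples, query_image_b64):
    """trace_examples: (base64_image, reasoning_trace) pairs, where each
    trace is assumed to end with a 'Caption:' line."""
    messages = [{"role": "system", "content": COT_TASK}]
    for example_b64, trace in trace_examples:
        messages.append(image_turn(example_b64))
        messages.append({"role": "assistant", "content": trace})
    messages.append(image_turn(query_image_b64))
    return messages


def extract_caption(response_text):
    """Return the text after the last 'Caption:' marker, or the whole
    stripped response when the marker is missing."""
    marker = "Caption:"
    index = response_text.rfind(marker)
    if index == -1:
        return response_text.strip()
    return response_text[index + len(marker):].strip()
```

Keeping the reasoning and the caption in one reply, separated by a fixed marker, makes the intermediate steps available for inspection while still giving downstream code a single line to use.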

Object Detection Guided Prompting:

Object detection can supply additional guidance for VLM prompting. An open-vocabulary object detection model, OWL-ViT [11], is used here. First, the VLM identifies the high-level objects in the image. These object names are then used as text prompts for OWL-ViT, which generates bounding boxes. Finally, the image annotated with those boxes is passed back to the VLM for captioning. While the impact is limited for simple images, this technique is valuable for complex tasks such as document understanding.
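The three steps above can be sketched as a small orchestration function. To stay self-contained, the sketch takes the VLM calls, the detector, and the box-drawing routine as plain callables rather than calling any real library. The parameter names, the `min_score` threshold, and the detection tuple layout are all hypothetical.

```python
from typing import Callable, List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)
Detection = Tuple[str, float, Box]       # (label, score, box)


def detection_guided_caption(
    image: bytes,
    propose_labels: Callable[[bytes], List[str]],
    detect: Callable[[bytes, List[str]], List[Detection]],
    annotate: Callable[[bytes, List[Detection]], bytes],
    caption: Callable[[bytes], str],
    min_score: float = 0.1,
) -> Tuple[str, List[Detection]]:
    """Run the three-step pipeline and return (caption, kept detections)."""
    # Step 1: the VLM names the high-level objects it sees.
    labels = propose_labels(image)
    # Step 2: the open-vocabulary detector localizes those labels;
    # low-confidence boxes are dropped before drawing.
    detections = [d for d in detect(image, labels) if d[1] >= min_score]
    # Step 3: draw the boxes and caption the annotated image.
    annotated = annotate(image, detections)
    return caption(annotated), detections
```

In a real setup, `detect` would wrap an OWL-ViT inference call and `annotate` would draw rectangles with an imaging library. Passing them in as arguments keeps the control flow testable with simple stand-ins.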

Conclusion:

VLMs offer powerful capabilities for tasks requiring both visual and textual understanding. This post explored zero-shot, few-shot, chain-of-thought, and object-detection-guided prompting, and showed how each one shapes the VLM's output. Other prompting techniques remain to be explored. Additional resources on VLM prompting are available [13].

References:

[1-13] [References omitted].

The above is the detailed content of Prompting Vision Language Models. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1359

1359

52

52

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

How to Use DALL-E 3: Tips, Examples, and Features

Mar 09, 2025 pm 01:00 PM

How to Use DALL-E 3: Tips, Examples, and Features

Mar 09, 2025 pm 01:00 PM

DALL-E 3: A Generative AI Image Creation Tool Generative AI is revolutionizing content creation, and DALL-E 3, OpenAI's latest image generation model, is at the forefront. Released in October 2023, it builds upon its predecessors, DALL-E and DALL-E 2

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

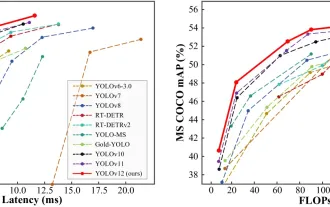

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Sora vs Veo 2: Which One Creates More Realistic Videos?

Mar 10, 2025 pm 12:22 PM

Sora vs Veo 2: Which One Creates More Realistic Videos?

Mar 10, 2025 pm 12:22 PM

Google's Veo 2 and OpenAI's Sora: Which AI video generator reigns supreme? Both platforms generate impressive AI videos, but their strengths lie in different areas. This comparison, using various prompts, reveals which tool best suits your needs. T

Google's GenCast: Weather Forecasting With GenCast Mini Demo

Mar 16, 2025 pm 01:46 PM

Google's GenCast: Weather Forecasting With GenCast Mini Demo

Mar 16, 2025 pm 01:46 PM

Google DeepMind's GenCast: A Revolutionary AI for Weather Forecasting Weather forecasting has undergone a dramatic transformation, moving from rudimentary observations to sophisticated AI-powered predictions. Google DeepMind's GenCast, a groundbreak

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

The article discusses AI models surpassing ChatGPT, like LaMDA, LLaMA, and Grok, highlighting their advantages in accuracy, understanding, and industry impact.(159 characters)

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.