The Math Behind In-Context Learning

In-context learning (ICL), a key feature of modern large language models (LLMs), lets transformers adapt to a new task from examples within the input prompt, without updating any weights. Few-shot prompting, which supplies several task examples, is the standard way to demonstrate the desired behavior. But how do transformers achieve this adaptation? This article explores potential mechanisms behind ICL.

The core of ICL is: given example pairs (x, y), can attention mechanisms learn an algorithm that maps a new query x to its output y?

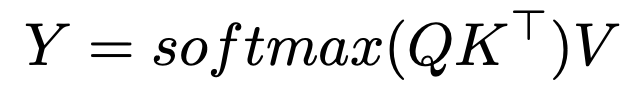

Softmax Attention and Nearest Neighbor Search

The softmax attention formula is:

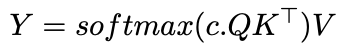

Introducing an inverse temperature parameter c, which scales the similarity scores before the softmax, changes how attention is allocated.

As c approaches infinity, the attention weights become a one-hot vector that selects only the most similar token, which is effectively a nearest neighbor search. With finite c, attention resembles Gaussian kernel smoothing (the match is exact when all keys have the same norm). This suggests ICL might implement a nearest neighbor algorithm on input-output pairs.
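The effect of the inverse temperature can be checked numerically. The following is a minimal sketch, not a transformer implementation: it assumes the example inputs act as keys, the example outputs as values, and dot-product similarity as the score. The function names and the toy data are hypothetical, chosen only for illustration. The keys are unit-norm, so the softmax weights should coincide with normalized Gaussian kernel weights.

```python
import numpy as np

def softmax_attention_weights(keys, query, c):
    """Softmax over dot-product scores scaled by the inverse temperature c."""
    scores = c * (keys @ query)
    scores = scores - scores.max()  # subtract the max for numerical stability
    weights = np.exp(scores)
    return weights / weights.sum()

def gaussian_kernel_weights(keys, query, c):
    """Normalized Gaussian kernel weights exp(-c/2 * ||key - query||^2)."""
    sq_dists = np.sum((keys - query) ** 2, axis=1)
    log_w = -0.5 * c * sq_dists
    log_w = log_w - log_w.max()  # same stabilization as above
    weights = np.exp(log_w)
    return weights / weights.sum()

# Toy in-context examples (assumed data): unit-norm inputs x_i with outputs y_i.
keys = np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]])
values = np.array([1.0, 2.0, 3.0])
query = np.array([0.8, 0.6])  # closest to the first key

for c in (0.0, 1.0, 10.0, 100.0):
    w = softmax_attention_weights(keys, query, c)
    print(f"c={c:6.1f}  weights={np.round(w, 3)}  prediction={w @ values:.3f}")
```

At c = 0 every example gets equal weight, so the prediction is the plain average of the outputs. As c grows, the weight concentrates on the first key and the prediction approaches that example's output, which is the nearest neighbor behavior described above.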

Implications and Further Research

Understanding how transformers learn algorithms (like nearest neighbor) opens doors for AutoML. Hollmann et al. demonstrated training a transformer on synthetic datasets to learn the entire AutoML pipeline, predicting optimal models and hyperparameters from new data in a single pass.

Anthropic's 2022 research suggests "induction heads" as a mechanism. These pairs of attention heads copy and complete patterns; for example, given "...A, B...A", they predict "B" based on prior context.

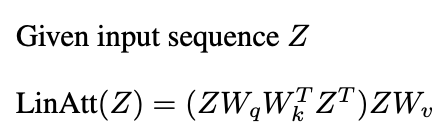

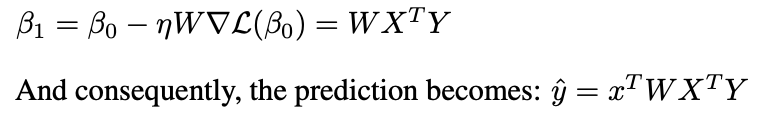
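The copy-and-complete behavior attributed to induction heads can be mimicked in plain Python. This is only a caricature of the input-output pattern, assuming exact token matching; it is not the attention circuit itself, and the function name `induction_predict` is hypothetical.

```python
def induction_predict(tokens):
    """Predict the next token by copying what followed the most recent
    earlier occurrence of the current (last) token. Returns None when
    the current token has not appeared before."""
    if not tokens:
        return None
    current = tokens[-1]
    # Scan earlier positions from right to left for a matching token.
    for i in range(len(tokens) - 2, -1, -1):
        if tokens[i] == current:
            return tokens[i + 1]  # copy the token that followed the match
    return None

print(induction_predict(["A", "B", "C", "A"]))  # prints B
```

In a transformer, the "find the earlier match" step and the "copy what came next" step are thought to be split across two cooperating heads; the loop above collapses both into one lookup.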

Recent studies (Garg et al. 2022, von Oswald et al. 2023) link transformers' ICL to gradient descent. Linear attention, which omits the softmax operation, resembles preconditioned gradient descent (PGD): one layer of linear attention performs one PGD step.
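The correspondence between linear attention and a gradient step can be verified on a small linear regression problem. The sketch below makes several assumptions not stated in the article: a least-squares loss (1/2n)·‖Xw − y‖², a starting point w = 0, a fixed preconditioner matrix P, and attention scores of the form x_queryᵀ P x_i with the outputs y_i as values. All function and variable names are hypothetical.

```python
import numpy as np

def pgd_one_step_prediction(X, y, x_query, P):
    """Predict with the weights obtained after one preconditioned
    gradient step from w = 0 on the loss (1/2n) * ||X w - y||^2."""
    n = X.shape[0]
    grad_at_zero = -(X.T @ y) / n  # gradient of the loss at w = 0
    w1 = -P @ grad_at_zero         # w1 = 0 - P @ grad
    return float(x_query @ w1)

def linear_attention_prediction(X, y, x_query, P):
    """Linear attention without softmax: the score for example i is
    x_query^T P x_i, and the output is the score-weighted mean of y_i."""
    n = X.shape[0]
    scores = X @ P.T @ x_query     # entry i equals x_query^T P x_i
    return float(scores @ y) / n

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 3))        # in-context inputs x_i
y = X @ np.array([1.0, -2.0, 0.5]) # in-context outputs y_i (noise-free)
x_query = rng.normal(size=3)
P = rng.normal(size=(3, 3))        # arbitrary preconditioner for the check

print(pgd_one_step_prediction(X, y, x_query, P))
print(linear_attention_prediction(X, y, x_query, P))
```

The two printed values agree up to floating-point error, because both expressions reduce to (1/n) Σ y_i · x_queryᵀ P x_i. Setting P to a multiple of the identity recovers a plain gradient descent step with that multiple as the learning rate.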

Conclusion

Attention mechanisms can implement learning algorithms, enabling ICL by learning from demonstration pairs. While the interplay of multiple attention layers and MLPs is complex, research sheds light on ICL's mechanics. This article offers a high-level overview of these insights.

Further Reading:

- In-context Learning and Induction Heads

- What Can Transformers Learn In-Context? A Case Study of Simple Function Classes

- Transformers Learn In-Context by Gradient Descent

- Transformers learn to implement preconditioned gradient descent for in-context learning

Acknowledgment

This article is inspired by Fall 2024 graduate coursework at the University of Michigan. Any errors are solely the author's.

The above is the detailed content of The Math Behind In-Context Learning. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Meta's Llama 3.2: A Leap Forward in Multimodal and Mobile AI Meta recently unveiled Llama 3.2, a significant advancement in AI featuring powerful vision capabilities and lightweight text models optimized for mobile devices. Building on the success o

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

The article reviews top AI voice generators like Google Cloud, Amazon Polly, Microsoft Azure, IBM Watson, and Descript, focusing on their features, voice quality, and suitability for different needs.

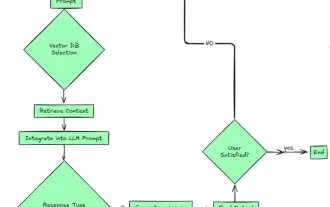

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

2024 witnessed a shift from simply using LLMs for content generation to understanding their inner workings. This exploration led to the discovery of AI Agents – autonomous systems handling tasks and decisions with minimal human intervention. Buildin

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

This week's AI landscape: A whirlwind of advancements, ethical considerations, and regulatory debates. Major players like OpenAI, Google, Meta, and Microsoft have unleashed a torrent of updates, from groundbreaking new models to crucial shifts in le