Groq LPU Inference Engine Tutorial

Experience the speed of Groq's Language Processing Unit (LPU) Inference Engine and say goodbye to lengthy ChatGPT wait times! This tutorial demonstrates how Groq drastically reduces response times, from a potential 40 seconds to a mere 2 seconds.

We'll cover:

- Understanding the Groq LPU Inference Engine.

- Comparing OpenAI and Groq API features and architecture.

- Utilizing Groq online and locally.

- Integrating the Groq API into VSCode.

- Working with the Groq Python API.

- Building context-aware AI applications using Groq API and LlamaIndex.

New to large language models (LLMs)? Consider our "Developing Large Language Models" skill track for foundational knowledge on fine-tuning and building LLMs from scratch.

Groq LPU Inference Engine: A Deep Dive

Groq's LPU Inference Engine is a processing system designed for computationally intensive, sequential workloads, especially LLM response generation. It is built to significantly improve the speed of text processing and generation.

Compared to CPUs and GPUs, the LPU boasts superior computing power, resulting in dramatically faster word prediction and text generation. It also effectively mitigates memory bottlenecks, a common GPU limitation with LLMs.

Groq's LPU tackles challenges like compute density, memory bandwidth, latency, and throughput, outperforming both GPUs and TPUs. For instance, it achieves over 310 tokens per second per user on Llama-3 70B. Learn more about the LPU architecture in the Groq ISCA 2022 research paper.
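
To put the quoted throughput in perspective, here is a back-of-the-envelope calculation in Python. It is only a sketch: it assumes a constant decode rate and ignores network and queueing latency. The 500-token response length and the 20 tokens-per-second comparison rate are illustrative assumptions, not measurements from this tutorial.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady decode rate (ignores network latency)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


LPU_RATE = 310.0       # tokens/s per user, the Llama-3 70B figure quoted above
SLOWER_RATE = 20.0     # hypothetical slower backend, for comparison only
RESPONSE_TOKENS = 500  # assumed response length

if __name__ == "__main__":
    fast = generation_seconds(RESPONSE_TOKENS, LPU_RATE)
    slow = generation_seconds(RESPONSE_TOKENS, SLOWER_RATE)
    print(f"{RESPONSE_TOKENS} tokens at {LPU_RATE:.0f} tok/s: {fast:.1f} s")
    print(f"{RESPONSE_TOKENS} tokens at {SLOWER_RATE:.0f} tok/s: {slow:.1f} s")
```

Under these assumptions, a 500-token answer takes roughly 1.6 seconds at 310 tokens per second, compared with 25 seconds at the hypothetical slower rate.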

OpenAI vs. Groq API: A Performance Comparison

Currently, Groq LLMs are accessible via groq.com, the Groq Cloud API, Groq Playground, and third-party platforms like Poe. This section compares the features and models of OpenAI and Groq Cloud, and benchmarks the speed of API calls made with curl.

OpenAI: Offers a broad range of features and models, including:

- Embedding models.

- Text generation models (GPT-4o, GPT-4 Turbo).

- Code interpreter and file search.

- Model fine-tuning capabilities.

- Image generation models.

- Audio models (transcription, translation, text-to-speech).

- Vision models (image understanding).

- Function calling.

OpenAI's API is known for its speed and decreasing costs. The sample curl command below took approximately 13 seconds to return:

    curl -X POST https://api.openai.com/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $OPENAI_API_KEY" \
      -d '{
        "model": "gpt-4o",
        "messages": [
          { "role": "system", "content": "You are a helpful assistant." },
          { "role": "user", "content": "How do I get better at programming?" }
        ]
      }'

Groq: While newer to the market, Groq offers:

- Text generation models (Llama 3 70B, Gemma 7B, Mixtral 8x7B).

- Transcription and translation (Whisper Large V3 - not publicly available).

- OpenAI API compatibility.

- Function calling.

Groq Cloud's significantly faster response time is evident in this curl example, which sends the same prompt to Groq's OpenAI-compatible endpoint. It returned in approximately 2 seconds, a 6.5x speed advantage over the 13-second OpenAI call:

    curl -X POST https://api.groq.com/openai/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $GROQ_API_KEY" \
      -d '{
        "model": "llama3-70b-8192",
        "messages": [
          { "role": "system", "content": "You are a helpful assistant." },
          { "role": "user", "content": "How do I get better at programming?" }
        ]
      }'
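
The timing comparison can also be scripted. The sketch below uses only the Python standard library to send the same chat payload to both providers and measure wall-clock time. The helper names (`build_request`, `timed_call`) and the custom `User-Agent` value are hypothetical; the model IDs are the ones used in this tutorial and may no longer be available. A single request is a rough indicator, not a rigorous benchmark.

```python
import json
import os
import time
import urllib.request

OPENAI_URL = "https://api.openai.com/v1/chat/completions"
GROQ_URL = "https://api.groq.com/openai/v1/chat/completions"  # OpenAI-compatible path


def build_request(url, api_key, model, prompt):
    """Build a chat-completions POST request; the payload shape is shared by both APIs."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
            # Assumption: an explicit user agent, in case a gateway rejects urllib's default.
            "User-Agent": "latency-sketch/0.1",
        },
        method="POST",
    )


def timed_call(request, timeout=120.0):
    """Send the request and return (elapsed_seconds, reply_text)."""
    start = time.perf_counter()
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    elapsed = time.perf_counter() - start
    return elapsed, body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    prompt = "How do I get better at programming?"
    targets = [
        ("OpenAI", OPENAI_URL, "OPENAI_API_KEY", "gpt-4o"),
        ("Groq", GROQ_URL, "GROQ_API_KEY", "llama3-70b-8192"),
    ]
    for name, url, key_var, model in targets:
        api_key = os.environ.get(key_var)
        if not api_key:
            print(f"{name}: {key_var} is not set, skipping")
            continue
        seconds, _ = timed_call(build_request(url, api_key, model, prompt))
        print(f"{name} ({model}): {seconds:.1f} s")
```

Because the two models differ, the measured gap reflects both the serving hardware and the model; averaging several runs gives a steadier number.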

Utilizing Groq: Cloud and Local Access

Groq Cloud provides an AI playground for testing models and APIs. Account creation is required. The playground allows you to select models (e.g., llama3-70b-8192) and input prompts.

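For local access, generate an API key in the API Keys section of Groq Cloud. Jan AI is a desktop application that runs LLMs locally and can also connect to hosted providers (OpenAI, Anthropic, Cohere, MistralAI, Groq). After installing and launching Jan AI, enter your Groq API key in its settings. The model itself still runs on Groq Cloud; only the chat client is local.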

Note: Free Groq Cloud plans have rate limits.
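
One common way to cope with rate limits is to retry with exponential backoff. The sketch below is a generic pattern, not Groq-specific guidance: `RateLimitError` is a hypothetical stand-in for whatever your HTTP client raises on an HTTP 429 response, and the attempt count and delays are assumed values.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for an HTTP 429 (Too Many Requests) error."""


def call_with_backoff(call, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Run call(); on RateLimitError wait 1x, 2x, 4x... base_delay (plus jitter) and retry."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Exponential delay with random jitter so parallel clients do not retry in lockstep.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
```

The `sleep` parameter is injectable so the retry logic can be exercised in tests without actually waiting.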

VSCode Integration and Groq Python API

Integrate Groq into VSCode using the CodeGPT extension. Configure your Groq API key within CodeGPT to leverage Groq's speed for AI-powered coding assistance.

The Groq Python API offers features like streaming and asynchronous chat completion. This section provides examples using DataCamp's DataLab (or a similar Jupyter Notebook environment). Remember to set your GROQ_API_KEY environment variable.
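
As a minimal sketch of those two features, the code below shows a streaming call and an asynchronous call with the `groq` package (installed with `pip install groq`). The helper names (`build_messages`, `join_stream`, `stream_completion`, `async_completion`) are hypothetical, and the model ID is the one mentioned in this tutorial, which may have changed since. The client classes are imported inside the functions so the helpers can be read and tested without the package installed.

```python
import asyncio
import os

MODEL = "llama3-70b-8192"  # model ID from this tutorial; availability may change


def build_messages(prompt):
    """Chat history in the OpenAI-style message format."""
    return [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": prompt},
    ]


def join_stream(chunks):
    """Concatenate the text deltas of a streamed chat completion."""
    parts = []
    for chunk in chunks:
        if not chunk.choices:
            continue  # skip chunks that carry no choices
        delta = chunk.choices[0].delta.content
        if delta:  # the final chunk usually has no text
            parts.append(delta)
    return "".join(parts)


def stream_completion(prompt):
    """Streaming: tokens arrive incrementally instead of in one response."""
    from groq import Groq  # third-party package: pip install groq

    client = Groq()  # reads GROQ_API_KEY from the environment
    stream = client.chat.completions.create(
        model=MODEL, messages=build_messages(prompt), stream=True
    )
    return join_stream(stream)


async def async_completion(prompt):
    """Async: the event loop stays free while the request is in flight."""
    from groq import AsyncGroq

    client = AsyncGroq()
    response = await client.chat.completions.create(
        model=MODEL, messages=build_messages(prompt)
    )
    return response.choices[0].message.content


if __name__ == "__main__" and os.environ.get("GROQ_API_KEY"):
    print(stream_completion("Explain streaming in one sentence."))
    print(asyncio.run(async_completion("Explain async I/O in one sentence.")))
```

In an interactive application you would typically print each delta as it arrives rather than joining them at the end; `join_stream` collects them here only to keep the sketch short.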

Building Context-Aware Applications with LlamaIndex

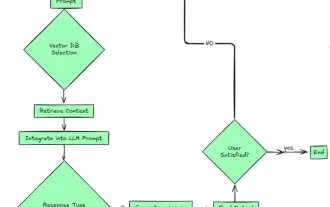

This section demonstrates building a context-aware ChatPDF application using Groq API and LlamaIndex. This involves loading text from a PDF, creating embeddings, storing them in a vector store, and building a RAG chat engine with history.
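
The retrieval flow described above can be illustrated with a toy, standard-library-only sketch. This is not LlamaIndex code: word counts stand in for real embeddings, an in-memory list stands in for the vector store, and PDF text extraction and the final LLM call are omitted. All names (`chunk_text`, `embed`, `ToyVectorStore`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text, size=40):
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text):
    """Toy embedding: lowercase word counts (a real app would use an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """In-memory stand-in for a vector store."""

    def __init__(self):
        self.items = []  # list of (chunk, vector) pairs

    def add(self, chunk):
        self.items.append((chunk, embed(chunk)))

    def search(self, query, k=2):
        """Return the k chunks most similar to the query."""
        query_vec = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(query_vec, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(store, history, question, k=2):
    """Combine retrieved context and chat history into one prompt for the LLM."""
    context = "\n".join(store.search(question, k))
    past = "\n".join(f"{role}: {text}" for role, text in history)
    return f"Context:\n{context}\n\nHistory:\n{past}\n\nQuestion: {question}"


if __name__ == "__main__":
    store = ToyVectorStore()
    for chunk in ["The LPU is built for sequential workloads.", "Bananas are rich in potassium."]:
        store.add(chunk)
    history = [("user", "What is Groq?"), ("assistant", "A company that builds the LPU.")]
    print(build_prompt(store, history, "What workloads is the LPU built for?", k=1))
```

In the real application, LlamaIndex handles chunking, embedding, storage, and history for you, and the assembled prompt is sent to a Groq-hosted model; the sketch only shows how the pieces relate.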

Conclusion

Groq's LPU Inference Engine significantly accelerates LLM performance. This tutorial explored Groq Cloud, local integration (Jan AI, VSCode), the Python API, and building context-aware applications. Consider exploring LLM fine-tuning as a next step in your learning.
