Running OLMo-2 Locally with Gradio and LangChain

OLMo 2: A Powerful Open-Source LLM for Accessible AI

The field of Natural Language Processing (NLP) has advanced rapidly, particularly with large language models (LLMs). While proprietary models have historically dominated, open-source alternatives are quickly closing the gap. OLMo 2 is a significant step forward: it is competitive with open-weight models such as Llama 3.1 on English academic benchmarks while remaining fully transparent and accessible. This article explores OLMo 2's training, performance, and practical application.

Key Learning Points:

- Grasp the importance of open-source LLMs and OLMo 2's contribution to AI research.

- Understand OLMo 2's architecture, training methods, and benchmark results.

- Differentiate between open-weight, partially open, and fully open models.

- Learn to run OLMo 2 locally using Gradio and LangChain.

- Build a chatbot application using OLMo 2 with Python code examples.

(This article is part of the Data Science Blogathon.)

Table of Contents:

- The Demand for Open-Source LLMs

- Understanding OLMo 2

- OLMo 2's Training Methodology

- Openness Levels in LLMs

- Exploring and Running OLMo 2 Locally

- Building a Chatbot with OLMo 2

- Conclusion

The Demand for Open-Source LLMs

The initial dominance of proprietary LLMs raised concerns about accessibility, transparency, and bias. Open-source LLMs address these issues by fostering collaboration and allowing for scrutiny, modification, and improvement. This open approach is vital for advancing the field and ensuring equitable access to LLM technology.

The OLMo project from the Allen Institute for AI (AI2) exemplifies this commitment. OLMo 2 goes beyond releasing model weights: AI2 also provides the training data, code, training recipes, intermediate checkpoints, and instruction-tuned models. This comprehensive release promotes reproducibility and further innovation.

Understanding OLMo 2

OLMo 2 significantly improves upon its predecessor, OLMo-0424. Its 7B and 13B models perform on par with or better than similarly sized fully open models, and even rival open-weight models such as Llama 3.1 on English academic benchmarks. This is a notable result given their comparatively lower training compute (FLOPs).

Key improvements include:

- Substantial Performance Gains: OLMo-2 (7B and 13B) shows marked improvement over earlier OLMo models, indicating advancements in architecture, data, or training methodology.

- Competitive with MAP-Neo-7B: OLMo-2, particularly the 13B version, achieves scores comparable to MAP-Neo-7B, a strong baseline among fully open models.

OLMo 2's Training Methodology

OLMo 2's architecture builds upon the original OLMo, incorporating refinements for improved stability and performance. The training process comprises two stages:

- Foundation Training: Utilizes the OLMo-Mix-1124 dataset (approximately 3.9 trillion tokens from diverse open sources) to establish a robust foundation for language understanding.

- Refinement and Specialization: Uses the Dolmino-Mix-1124 dataset, a curated mix of high-quality web data and domain-specific data (academic content, Q&A forums, instruction data, and math workbooks), to refine the model's knowledge and skills. "Model souping", which averages the weights of several checkpoints, further improves the final checkpoint.
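Model souping, in its simplest form, is a uniform average of matching parameters across checkpoints that share the same architecture. The sketch below illustrates only that arithmetic; it is not AI2's training code, and the exact checkpoints and weighting used for OLMo 2 are not specified here. As a simplifying assumption, each checkpoint is a hypothetical dictionary mapping parameter names to flat lists of floats rather than real tensors.

```python
from typing import Dict, List

# Hypothetical checkpoint format: parameter name -> flat list of floats.
# Real checkpoints hold tensors, but the averaging arithmetic is the same.
StateDict = Dict[str, List[float]]


def soup(checkpoints: List[StateDict]) -> StateDict:
    """Uniformly average matching parameters across checkpoints (a "model soup")."""
    if not checkpoints:
        raise ValueError("need at least one checkpoint")
    reference = checkpoints[0]
    for ckpt in checkpoints[1:]:
        # Souping only makes sense for checkpoints of the same architecture.
        if ckpt.keys() != reference.keys():
            raise ValueError("checkpoints must share the same parameter names")
        for name, values in ckpt.items():
            if len(values) != len(reference[name]):
                raise ValueError(f"shape mismatch for parameter {name!r}")
    n = len(checkpoints)
    averaged: StateDict = {}
    for name in reference:
        # zip(*...) lines up the same element position across all checkpoints.
        columns = zip(*(ckpt[name] for ckpt in checkpoints))
        averaged[name] = [sum(column) / n for column in columns]
    return averaged
```

Uniform averaging is the simplest variant; weighted or selectively chosen soups follow the same pattern with different coefficients.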

Openness Levels in LLMs

Since OLMo-2 is a fully open model, let's clarify the distinctions between different levels of model openness:

- Open-Weight Models: Only the model weights are released.

- Partially Open Models: Release some additional information beyond weights, but not a complete picture of the training process.

- Fully Open Models: Provide complete transparency, including weights, training data, code, recipes, and checkpoints. This allows for full reproducibility.

A table summarizing the key differences is provided below.

| Feature | Open-Weight Models | Partially Open Models | Fully Open Models |
|---|---|---|---|
| Weights | Released | Released | Released |
| Training Data | Typically Not | Partially Available | Fully Available |
| Training Code | Typically Not | Partially Available | Fully Available |
| Training Recipe | Typically Not | Partially Available | Fully Available |
| Reproducibility | Limited | Moderate | Full |
| Transparency | Low | Medium | High |
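To make the taxonomy concrete, the table can be encoded as a small classification function. This is a hypothetical sketch: the artifact labels (`training_data`, `checkpoints`, and so on) are names chosen for illustration, checkpoints are included because the fully open definition lists them, and the function simplifies the table by treating each artifact as either released or not, whereas real releases may publish an artifact only in part.

```python
from enum import Enum
from typing import Set


class Openness(Enum):
    OPEN_WEIGHT = "open-weight"
    PARTIALLY_OPEN = "partially open"
    FULLY_OPEN = "fully open"


# Illustrative labels for artifacts released in addition to the weights.
EXTRA_ARTIFACTS = frozenset(
    {"training_data", "training_code", "training_recipe", "checkpoints"}
)


def classify(released: Set[str]) -> Openness:
    """Map the set of released artifacts to an openness level."""
    if "weights" not in released:
        # Every category in the comparison releases weights.
        raise ValueError("a release without weights fits none of the three levels")
    extras = EXTRA_ARTIFACTS & released
    if extras == EXTRA_ARTIFACTS:
        return Openness.FULLY_OPEN
    if extras:
        return Openness.PARTIALLY_OPEN
    return Openness.OPEN_WEIGHT
```

For example, a release of weights plus training code classifies as partially open, while only a release of every listed artifact counts as fully open.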

Exploring and Running OLMo 2 Locally

OLMo 2 is readily accessible. AI2 publishes instructions for downloading the model and data, together with the training code and evaluation metrics. The simplest way to run OLMo 2 locally is with Ollama: after installing it, run `ollama run olmo2:7b` in your terminal, which downloads the 7B model (if needed) and starts an interactive session. The Python libraries used below can be installed with `pip install langchain-ollama gradio`.
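Ollama also serves a local HTTP API, which LangChain's Ollama integration uses under the hood. As a quick check that the model responds before adding LangChain and Gradio, the sketch below calls the `/api/generate` endpoint using only the Python standard library. It assumes Ollama is listening on its default address (`http://localhost:11434`) and that `olmo2:7b` has been pulled; `build_request` and `ask` are illustrative helper names, not part of any library.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint; change it if Ollama runs elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "olmo2:7b") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "olmo2:7b", timeout: float = 120.0) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    req = build_request(prompt, model)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, Ollama replies with one JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

With the server running, `ask("What does a fully open model release?")` returns the completion as a string. Disabling streaming trades incremental output for a single reply that is easy to parse.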

Building a Chatbot with OLMo 2

The following Python code builds a simple chatbot with OLMo 2, Gradio, and LangChain. It assumes Ollama is running locally and the olmo2:7b model has already been downloaded:

```python
import gradio as gr
from langchain_core.prompts import ChatPromptTemplate
from langchain_ollama.llms import OllamaLLM

# Prompt that asks the model to reason step by step before answering.
template = """Question: {question}

Answer: Let's think step by step."""
prompt = ChatPromptTemplate.from_template(template)

# Use the same tag that was pulled with `ollama run olmo2:7b`.
model = OllamaLLM(model="olmo2:7b")

# Pipeline: fill in the prompt, then send it to the local OLMo 2 model.
chain = prompt | model


def generate_response(history, question):
    """Answer `question` and append both turns to the chat history."""
    answer = chain.invoke({"question": question})
    history.append({"role": "user", "content": question})
    history.append({"role": "assistant", "content": answer})
    # Return the updated history, plus an empty string to clear the textbox.
    return history, ""


with gr.Blocks() as iface:
    # type="messages" makes the Chatbot use role/content dictionaries.
    chatbot = gr.Chatbot(type="messages")
    with gr.Row():
        with gr.Column():
            txt = gr.Textbox(show_label=False, placeholder="Type your question here...")
    # On Enter, pass (history, question) in and update the chat and the textbox.
    txt.submit(generate_response, [chatbot, txt], [chatbot, txt])

iface.launch()
```

This code provides a basic chatbot interface; more sophisticated applications can be built on this foundation.
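The chain in the chatbot example forwards only the latest question, so the model does not see earlier turns. One way to give it conversational context is to flatten the messages-format history into a transcript. The sketch below assumes a simple `User:`/`Assistant:` transcript layout (an illustrative choice, not OLMo 2's official chat template) and takes the model as any callable from prompt string to text, so it can be tested without Ollama; `build_prompt` and `respond` are hypothetical helper names.

```python
from typing import Callable, Dict, List

# A Gradio "messages" entry; extra keys (if any) are ignored.
Message = Dict[str, str]


def build_prompt(history: List[Message], question: str) -> str:
    """Flatten earlier turns plus the new question into one text prompt."""
    lines = []
    for msg in history:
        speaker = "User" if msg["role"] == "user" else "Assistant"
        lines.append(f"{speaker}: {msg['content']}")
    lines.append(f"User: {question}")
    lines.append("Assistant:")  # cue the model to answer as the assistant
    return "\n".join(lines)


def respond(
    history: List[Message], question: str, llm: Callable[[str], str]
) -> List[Message]:
    """Query `llm` with the full transcript and return a new, extended history."""
    answer = llm(build_prompt(history, question))
    # Build a new list instead of mutating the caller's history.
    return history + [
        {"role": "user", "content": question},
        {"role": "assistant", "content": answer},
    ]
```

To use it in the Gradio app, `generate_response` could return `respond(history, question, model.invoke), ""`, where `model` is the `OllamaLLM` instance. For multi-turn chat that applies the model's own chat template, the `ChatOllama` class in `langchain_ollama` is an alternative worth evaluating.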

Conclusion

OLMo 2 represents a significant contribution to the open-source LLM ecosystem. Its strong performance, combined with its full transparency, makes it a valuable tool for researchers and developers. While not universally superior across all tasks, its open nature fosters collaboration and accelerates progress in the field of accessible and transparent AI.

Key Takeaways:

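- OLMo 2's 13B model performs strongly on English academic benchmarks, matching or exceeding comparably sized fully open models.

- Full openness (weights, data, code, recipes, and checkpoints) lets researchers reproduce, study, and build on the model, which accelerates the development of better models.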

- The chatbot example showcases the ease of integration with LangChain and Gradio.


Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Meta's Llama 3.2: A Leap Forward in Multimodal and Mobile AI Meta recently unveiled Llama 3.2, a significant advancement in AI featuring powerful vision capabilities and lightweight text models optimized for mobile devices. Building on the success o

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist

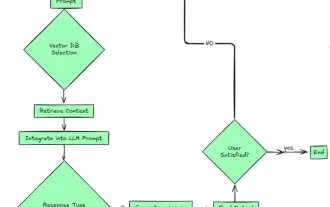

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

2024 witnessed a shift from simply using LLMs for content generation to understanding their inner workings. This exploration led to the discovery of AI Agents – autonomous systems handling tasks and decisions with minimal human intervention. Buildin

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

The article reviews top AI voice generators like Google Cloud, Amazon Polly, Microsoft Azure, IBM Watson, and Descript, focusing on their features, voice quality, and suitability for different needs.

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

This week's AI landscape: A whirlwind of advancements, ethical considerations, and regulatory debates. Major players like OpenAI, Google, Meta, and Microsoft have unleashed a torrent of updates, from groundbreaking new models to crucial shifts in le