How to Fine-Tune Phi-4 Locally?

This guide demonstrates how to fine-tune Microsoft's Phi-4 large language model (LLM) for specialized tasks using Low-Rank Adaptation (LoRA) adapters and Hugging Face tooling. Focusing on a specific domain lets you tune Phi-4 for applications such as customer support or medical advice. Because LoRA trains only small adapter matrices while the base weights stay frozen, fine-tuning is faster and less resource-intensive than updating the full model.

Key Learning Outcomes:

- Fine-tune Microsoft Phi-4 using LoRA adapters for targeted applications.

- Configure and load Phi-4 efficiently with 4-bit quantization.

- Prepare and transform datasets for fine-tuning with Hugging Face and the unsloth library.

- Optimize model performance using Hugging Face's SFTTrainer.

- Monitor GPU usage and save/upload fine-tuned models to Hugging Face for deployment.

Prerequisites:

Before starting, ensure you have:

- Python 3.8

- PyTorch (with CUDA support for GPU acceleration)

- unsloth library

- Hugging Face transformers and datasets libraries

Install necessary libraries using:

pip install unsloth
pip install --force-reinstall --no-cache-dir --no-deps git+https://github.com/unslothai/unsloth.git

Fine-Tuning Phi-4: A Step-by-Step Approach

This section details the fine-tuning process, from setup to deployment on Hugging Face.

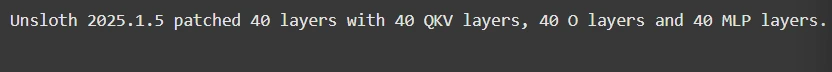

Step 1: Model Setup

This involves loading the model and importing essential libraries:

from unsloth import FastLanguageModel
import torch

max_seq_length = 2048  # maximum sequence length used for training
load_in_4bit = True    # load the base weights with 4-bit quantization

# Load the base model and its tokenizer.
model, tokenizer = FastLanguageModel.from_pretrained(
    model_name="unsloth/Phi-4",
    max_seq_length=max_seq_length,
    load_in_4bit=load_in_4bit,
)

# Attach LoRA adapters to the attention and MLP projection layers.
model = FastLanguageModel.get_peft_model(
    model,
    r=16,
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"],
    lora_alpha=16,
    lora_dropout=0,
    bias="none",
    use_gradient_checkpointing="unsloth",
    random_state=3407,
)
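To make the r and lora_alpha settings more concrete, the sketch below compares the number of trainable LoRA parameters with the size of the frozen weight matrix they adapt. The 5120 x 5120 layer shape is an illustrative assumption rather than a value read from the model, and the helper name lora_param_count is hypothetical.

```python
def lora_param_count(d_out: int, d_in: int, r: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    LoRA learns two small matrices, B (d_out x r) and A (r x d_in), and adds
    their scaled product to the frozen weight: W + (alpha / r) * B @ A.
    """
    return r * (d_out + d_in)


# Assumed layer shape, for illustration only.
d_out, d_in = 5120, 5120
# Values taken from the get_peft_model call in this guide.
r, lora_alpha = 16, 16

full = d_out * d_in
lora = lora_param_count(d_out, d_in, r)
scaling = lora_alpha / r  # factor applied to the low-rank update

print(f"frozen weight params  : {full:,}")
print(f"LoRA trainable params : {lora:,} ({100 * lora / full:.3f}% of the layer)")
print(f"update scaling alpha/r: {scaling}")
```

With lora_alpha equal to r, the low-rank update is applied at a scale of 1, so changing r alone changes capacity without rescaling the update.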

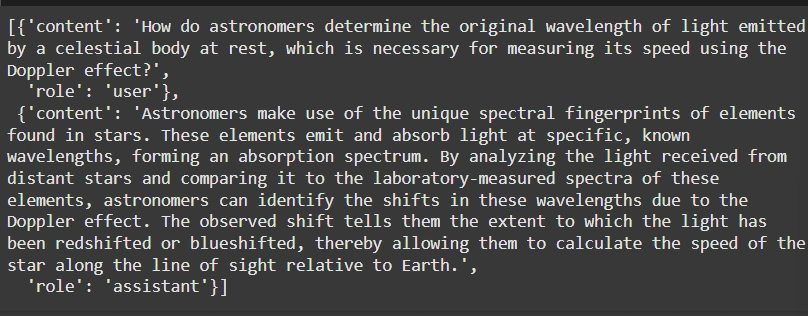
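As a rough illustration of why load_in_4bit matters on a single GPU, the following back-of-the-envelope sketch compares weight storage at 16-bit and 4-bit precision. It assumes about 14.7 billion parameters for Phi-4 and ignores quantization metadata, activations, optimizer state, and the LoRA adapters, so the figures are loose lower bounds, not measurements.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed to store the weights alone, in GiB."""
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / (1024 ** 3)


PHI4_PARAMS = 14.7e9  # assumed parameter count, not read from the model

fp16_gib = weight_memory_gib(PHI4_PARAMS, 16)
int4_gib = weight_memory_gib(PHI4_PARAMS, 4)

print(f"16-bit weights: ~{fp16_gib:.1f} GiB")
print(f" 4-bit weights: ~{int4_gib:.1f} GiB")
```

Actual usage during training will be higher than the 4-bit figure, which is why Step 4 monitors GPU memory directly.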

Step 2: Dataset Preparation

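We'll use the FineTome-100k dataset, which is stored in ShareGPT format. unsloth's standardize_sharegpt converts it to the role/content message format used by Hugging Face chat templates, and get_chat_template applies the Phi-4 template to the tokenizer: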

from datasets import load_dataset
from unsloth.chat_templates import standardize_sharegpt, get_chat_template

dataset = load_dataset("mlabonne/FineTome-100k", split="train")
dataset = standardize_sharegpt(dataset)
tokenizer = get_chat_template(tokenizer, chat_template="phi-4")

def formatting_prompts_func(examples):
    # Render each conversation into a single training string.
    texts = [
        tokenizer.apply_chat_template(convo, tokenize=False, add_generation_prompt=False)
        for convo in examples["conversations"]
    ]
    return {"text": texts}

dataset = dataset.map(formatting_prompts_func, batched=True)
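The conversion step can be pictured with a small pure-Python sketch. It assumes ShareGPT turns are dicts with "from" and "value" keys and maps them to "role" and "content"; the function name to_role_content and the role mapping are illustrative and are not unsloth's actual implementation.

```python
# Assumed mapping from ShareGPT speaker names to chat-template roles.
ROLE_MAP = {"system": "system", "human": "user", "gpt": "assistant"}


def to_role_content(conversation):
    """Convert ShareGPT-style turns into role/content messages."""
    return [
        {"role": ROLE_MAP.get(turn["from"], turn["from"]), "content": turn["value"]}
        for turn in conversation
    ]


sharegpt_example = [
    {"from": "human", "value": "What is LoRA?"},
    {"from": "gpt", "value": "A parameter-efficient fine-tuning method."},
]

messages = to_role_content(sharegpt_example)
for message in messages:
    print(message)
```

Messages in this shape are what tokenizer.apply_chat_template expects as input in the formatting function above.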

Step 3: Model Fine-tuning

Fine-tune using Hugging Face's SFTTrainer:

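from trl import SFTTrainer
from transformers import TrainingArguments, DataCollatorForSeq2Seq
from unsloth import is_bfloat16_supported
from unsloth.chat_templates import train_on_responses_only

trainer = SFTTrainer(
    # ... (Trainer configuration as in the original response) ...
)

# Compute the loss on assistant responses only. The two strings are the
# markers that open user and assistant turns in the Phi-4 chat template.
trainer = train_on_responses_only(
    trainer,
    instruction_part="<|im_start|>user<|im_sep|>",
    response_part="<|im_start|>assistant<|im_sep|>",
)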
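Training on responses only amounts to masking the labels of every token that is not part of an assistant reply. The sketch below shows that idea on toy token IDs; it is a simplified illustration rather than unsloth's implementation, and the function name mask_non_response and the hand-written is_response flags are assumptions.

```python
IGNORE_INDEX = -100  # label value that PyTorch's cross-entropy loss ignores by default


def mask_non_response(token_ids, is_response):
    """Keep labels for response tokens and mask out everything else."""
    return [
        token if keep else IGNORE_INDEX
        for token, keep in zip(token_ids, is_response)
    ]


# Toy example: the first three tokens are the user prompt,
# the last three are the assistant reply.
token_ids = [11, 12, 13, 21, 22, 23]
is_response = [False, False, False, True, True, True]

labels = mask_non_response(token_ids, is_response)
print(labels)
```

Masked positions contribute nothing to the loss, so the model is only optimized to reproduce the assistant side of each conversation.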

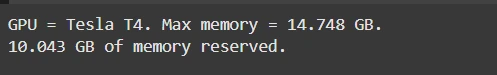

Step 4: GPU Usage Monitoring

Monitor GPU memory usage:

import torch
# ... (GPU monitoring code as in the original response) ...
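Since the monitoring code is elided above, here is one possible sketch of a memory report, not the original author's code. It uses torch.cuda.get_device_properties and torch.cuda.max_memory_reserved when a CUDA device is visible; the helper summarize_memory is a hypothetical name introduced for illustration.

```python
def summarize_memory(reserved_bytes: int, total_bytes: int) -> dict:
    """Convert raw byte counts into GiB and a percentage of total memory."""
    gib = 1024 ** 3
    return {
        "reserved_gib": round(reserved_bytes / gib, 3),
        "total_gib": round(total_bytes / gib, 3),
        "percent_used": round(100 * reserved_bytes / total_bytes, 2),
    }


try:
    import torch
except ImportError:  # allow the helper to be used without PyTorch installed
    torch = None

if torch is not None and torch.cuda.is_available():
    props = torch.cuda.get_device_properties(0)
    # Peak memory reserved by PyTorch's caching allocator on the current device.
    stats = summarize_memory(torch.cuda.max_memory_reserved(), props.total_memory)
    print(f"{props.name}: {stats}")
else:
    print("No CUDA device visible; skipping GPU memory report.")
```

Calling the report once before training and once after gives a rough picture of how much memory the fine-tuning run itself required.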

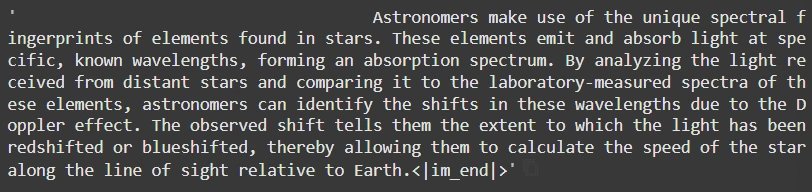

Step 5: Inference

Generate responses with the fine-tuned model by formatting a prompt with the chat template and calling the model's generate method.
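A minimal inference sketch, under the assumption that model and tokenizer are the objects produced in the earlier steps, might look like the following. The function name generate_reply and the default max_new_tokens value are illustrative choices, and the exact return type of apply_chat_template can vary between transformers versions.

```python
def generate_reply(model, tokenizer, user_message, max_new_tokens=64):
    """Generate one assistant reply for a single user message.

    With unsloth, FastLanguageModel.for_inference(model) is typically
    called once before generation to enable its faster inference path.
    """
    messages = [{"role": "user", "content": user_message}]

    # Render the conversation with the chat template and tokenize it.
    input_ids = tokenizer.apply_chat_template(
        messages,
        tokenize=True,
        add_generation_prompt=True,  # append the marker that opens the assistant turn
        return_tensors="pt",
    ).to(model.device)

    output_ids = model.generate(
        input_ids=input_ids,
        max_new_tokens=max_new_tokens,
        use_cache=True,
    )

    # Drop the prompt tokens and decode only the newly generated ones.
    new_tokens = output_ids[0][input_ids.shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Usage would be along the lines of generate_reply(model, tokenizer, "Explain LoRA in one sentence.") after training has finished.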

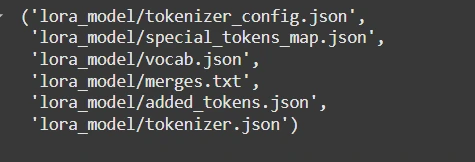

Step 6: Saving and Uploading

Save the fine-tuned LoRA adapters and tokenizer locally, or push them to the Hugging Face Hub.

Remember to replace <your_hf_token> with your actual Hugging Face token.
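One way to sketch the save-and-upload step is shown below. It relies on the save_pretrained and push_to_hub methods that Hugging Face models and tokenizers expose; the function name save_and_maybe_push, the output directory, and reading the token from an HF_TOKEN environment variable are assumptions made for illustration.

```python
import os


def save_and_maybe_push(model, tokenizer, output_dir="phi4-lora-adapter",
                        repo_id=None, token=None):
    """Save adapters and tokenizer locally; push to the Hub if repo_id is given.

    Returns True when an upload was attempted, False when only saved locally.
    """
    model.save_pretrained(output_dir)
    tokenizer.save_pretrained(output_dir)

    if repo_id is None:
        return False

    # Prefer an explicit token, otherwise fall back to the environment.
    token = token or os.environ.get("HF_TOKEN")
    if not token:
        raise ValueError("A Hugging Face token is required to push to the Hub.")

    model.push_to_hub(repo_id, token=token)
    tokenizer.push_to_hub(repo_id, token=token)
    return True
```

Keeping the token in an environment variable rather than in the script avoids committing it to version control by accident.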

Conclusion:

This guide walked through fine-tuning Phi-4 with LoRA adapters and Hugging Face tooling: loading the model in 4-bit, preparing a chat dataset, training with SFTTrainer, monitoring GPU memory, and saving or uploading the result for deployment.

The above is the detailed content of How to Fine-Tune Phi-4 Locally?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Meta's Llama 3.2: A Leap Forward in Multimodal and Mobile AI Meta recently unveiled Llama 3.2, a significant advancement in AI featuring powerful vision capabilities and lightweight text models optimized for mobile devices. Building on the success o

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

The article reviews top AI voice generators like Google Cloud, Amazon Polly, Microsoft Azure, IBM Watson, and Descript, focusing on their features, voice quality, and suitability for different needs.

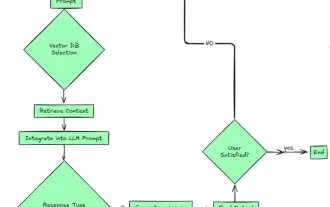

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

2024 witnessed a shift from simply using LLMs for content generation to understanding their inner workings. This exploration led to the discovery of AI Agents – autonomous systems handling tasks and decisions with minimal human intervention. Buildin

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

This week's AI landscape: A whirlwind of advancements, ethical considerations, and regulatory debates. Major players like OpenAI, Google, Meta, and Microsoft have unleashed a torrent of updates, from groundbreaking new models to crucial shifts in le