This Is How LLMs Break Down the Language

Unveiling the Secrets of Large Language Models: A Deep Dive into Tokenization

Remember the buzz surrounding OpenAI's GPT-3 in 2020? While not the first in its line, GPT-3's remarkable text generation capabilities catapulted it to fame. Since then, countless Large Language Models (LLMs) have emerged. But how do LLMs like ChatGPT decipher language? The answer lies in a process called tokenization.

This article draws on Andrej Karpathy's YouTube video "Deep Dive into LLMs like ChatGPT," which is well worth watching for anyone who wants a deeper understanding of LLMs.

Before exploring tokenization, let's briefly examine the inner workings of an LLM. Skip ahead if you're already familiar with neural networks and LLMs.

Inside Large Language Models

LLMs are built on transformer neural networks, which are in essence very large mathematical expressions. The input is a sequence of tokens (words, parts of words, or characters). Embedding layers convert each token into a numerical representation, and these numbers, together with the network's parameters (weights), are fed through the expression to produce an output.
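As a rough illustration of the embedding step, the sketch below maps token IDs to vectors through a lookup table. The vocabulary size, vector length, and random values are assumptions chosen for readability; in a real model these values are learned weights.

```python
import random

random.seed(0)  # fixed seed so the toy table is reproducible

VOCAB_SIZE = 8     # hypothetical: number of distinct token IDs
EMBEDDING_DIM = 4  # hypothetical: length of each token vector

# One row of numbers per token ID; in a real model these rows are learned.
embedding_table = [
    [random.uniform(-1.0, 1.0) for _ in range(EMBEDDING_DIM)]
    for _ in range(VOCAB_SIZE)
]


def embed(token_ids):
    """Look up the vector for each token ID in the sequence."""
    return [embedding_table[token_id] for token_id in token_ids]


vectors = embed([3, 1, 3])
print(len(vectors), len(vectors[0]))
```

The same token ID always maps to the same vector, which is why the first and third entries of `vectors` are identical.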

Modern neural networks have billions of parameters, which are set at random to begin with, so the network's first predictions are random too. Training iteratively adjusts the weights so that the network's output matches patterns in the training data. Training therefore amounts to searching for the set of weights that best reflects the statistical properties of the training data.
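The idea of starting from a random weight and nudging it toward the data can be sketched with a single-parameter model. This is a toy gradient-descent loop, not how an LLM is actually trained; the data, learning rate, and step count are made up for illustration.

```python
import random

random.seed(0)

# Toy data generated by the rule y = 2 * x: the "pattern" to be learned.
data = [(x, 2.0 * x) for x in [1.0, 2.0, 3.0, 4.0]]

weight = random.uniform(-1.0, 1.0)  # the parameter starts out random
LEARNING_RATE = 0.01                # hypothetical step size


def mean_squared_error(w):
    """Average squared gap between the model's output w * x and the target y."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


initial_loss = mean_squared_error(weight)

for _ in range(500):
    # Gradient of the mean squared error with respect to the weight.
    gradient = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
    weight -= LEARNING_RATE * gradient  # step in the direction that lowers the error

final_loss = mean_squared_error(weight)
print(round(weight, 3), final_loss < initial_loss)
```

An LLM applies the same kind of update to billions of weights at once, with the error measured on next-token predictions.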

The transformer architecture, introduced in the 2017 paper "Attention is All You Need" by Vaswani et al., is a neural network specifically designed for sequence processing. Initially used for Neural Machine Translation, it's now the cornerstone of LLMs.

For a visual understanding of production-level transformer networks, visit https://www.php.cn/link/f4a75336b061f291b6c11f5e4d6ebf7d. This site offers interactive 3D visualizations of GPT architectures and their inference process.

This Nano-GPT architecture (approximately 85,584 parameters) shows input token sequences processed through layers, undergoing transformations (attention mechanisms and feed-forward networks) to predict the next token.

Tokenization: Breaking Down Text

Training a cutting-edge LLM like ChatGPT or Claude involves several sequential stages. (See my previous article on hallucinations for more details on the training pipeline.)

Pretraining, the initial stage, requires a massive, high-quality dataset on the order of terabytes of text. These datasets are typically proprietary. We'll use the open-source FineWeb dataset from Hugging Face (available under the Open Data Commons Attribution License) as an example. (The FineWeb entry in the references describes how the dataset was created.)

A sample from FineWeb (100 examples concatenated).

Our goal is to train a neural network to replicate this text. Neural networks require a one-dimensional sequence of symbols from a finite set. This necessitates converting the text into such a sequence.

We start with a one-dimensional text sequence. UTF-8 encoding converts this into a raw bit sequence.

The first 8 bits represent the letter 'A'.
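To make the bit sequence concrete, here is a minimal sketch that encodes a short string as UTF-8 and prints its bits. The input string is a stand-in chosen for illustration, not the actual FineWeb sample.

```python
text = "A sample"  # hypothetical input, not the FineWeb text

# Encode the text as UTF-8 bytes, then write each byte as 8 binary digits.
utf8_bytes = text.encode("utf-8")
bits = "".join(format(byte, "08b") for byte in utf8_bytes)

print(bits[:8])   # the first 8 bits, which encode 'A': 01000001
print(len(bits))  # 8 bits for every byte
```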

This binary sequence, while technically a sequence of symbols (0 and 1), is too long. We want a shorter sequence drawn from a larger set of symbols. Grouping every 8 bits into a byte makes the sequence eight times shorter, and each position now holds one of 256 possible symbols (0-255).
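The byte view of the same idea can be sketched as follows. The input strings are again stand-ins chosen for illustration.

```python
text = "A sample"  # hypothetical input, not the FineWeb text

# Each UTF-8 byte becomes one integer symbol in the range 0-255.
byte_ids = list(text.encode("utf-8"))
print(byte_ids)
print(len(byte_ids), "symbols instead of", len(byte_ids) * 8, "bits")

# A character outside ASCII takes more than one byte in UTF-8.
accented_ids = list("é".encode("utf-8"))
print(accented_ids)
```

The second example shows that bytes and characters are not the same thing: the single character "é" becomes two symbols.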

Byte representation.

These numbers are arbitrary identifiers.

This conversion is tokenization. State-of-the-art models go further, using Byte-Pair Encoding (BPE).

BPE finds the most frequent pair of consecutive symbols and replaces every occurrence of it with a new symbol. For example, if the byte pair "101 114" appears often, it is replaced with a new symbol such as 256. The process then repeats; each round shortens the sequence and adds one symbol to the vocabulary. GPT-4 uses BPE with a vocabulary of around 100,000 tokens.
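The merge loop described above can be sketched in a few lines of Python. This is a minimal byte-level version for illustration, not the tokenizer GPT-4 actually uses; the function names, training text, and number of merges are made up here, and production tokenizers add further rules (such as pre-splitting the text) that the sketch omits.

```python
from collections import Counter


def most_frequent_pair(ids):
    """Return the most common adjacent pair of symbols, or None if there is none."""
    pairs = Counter(zip(ids, ids[1:]))
    return pairs.most_common(1)[0][0] if pairs else None


def merge_pair(ids, pair, new_id):
    """Replace every non-overlapping occurrence of `pair` with `new_id`."""
    merged, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            merged.append(new_id)
            i += 2
        else:
            merged.append(ids[i])
            i += 1
    return merged


def train_bpe(text, num_merges):
    """Run up to `num_merges` rounds of pair merging over the UTF-8 bytes of `text`."""
    ids = list(text.encode("utf-8"))
    merges = {}    # (left, right) -> new symbol ID
    next_id = 256  # IDs 0-255 are already taken by the raw bytes
    for _ in range(num_merges):
        pair = most_frequent_pair(ids)
        if pair is None:
            break
        merges[pair] = next_id
        ids = merge_pair(ids, pair, next_id)
        next_id += 1
    return ids, merges


text = "her paper is better than her other paper"  # hypothetical training text
ids, merges = train_bpe(text, num_merges=3)
print(len(text.encode("utf-8")), "->", len(ids))
print(merges)
```

In this toy text the pair (101, 114), the bytes for "e" and "r", is the most frequent, so it is the first to be merged and receives the ID 256.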

Explore tokenization interactively with Tiktokenizer, which visualizes tokenization for various models. Using GPT-4's cl100k_base encoder on the first four sentences of the sample yields:

11787, 499, 21815, 369, 90250, 763, 14689, 30, 7694, 1555, 279, 21542, 3770, 323, 499, 1253, 1120, 1518, 701, 4832, 2457, 13, 9359, 1124, 323, 6642, 264, 3449, 709, 3010, 18396, 13, 1226, 617, 9214, 315, 1023, 3697, 430, 1120, 649, 10379, 83, 3868, 311, 3449, 18570, 1120, 1093, 499, 0

Our entire sample dataset can be similarly tokenized using cl100k_base.

Conclusion

Tokenization is crucial for LLMs: it transforms raw text into the structured sequence of symbols that a neural network needs. Balancing sequence length against vocabulary size is key for computational efficiency, and modern LLMs such as the GPT models use BPE to strike that balance. Understanding tokenization provides valuable insight into the inner workings of LLMs.

Follow me on X (formerly Twitter) for more AI insights!

References

- Andrej Karpathy, Deep Dive into LLMs Like ChatGPT

- Attention Is All You Need

- LLM Visualization (https://www.php.cn/link/f4a75336b061f291b6c11f5e4d6ebf7d)

- LLM Hallucinations (link_to_hallucination_article)

- HuggingFaceFW/fineweb · Datasets at Hugging Face (link_to_huggingface_fineweb)

- FineWeb: decanting the web for the finest text data at scale – a Hugging Face Space by… (https://www.php.cn/link/271df68653f0b3c70d446bdcbc6a2715)

- Open Data Commons Attribution License (ODC-By) v1.0 – Open Data Commons: legal tools for open data (link_to_odc_by)

- Byte-Pair Encoding tokenization – Hugging Face NLP Course (link_to_huggingface_bpe)

- Tiktokenizer (https://www.php.cn/link/3b8d83483189887a2f1a39d690463a8f)

Please replace the bracketed links with the actual links. I have attempted to maintain the original formatting and image placements as requested.

The above is the detailed content of This Is How LLMs Break Down the Language. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Meta's Llama 3.2: A Leap Forward in Multimodal and Mobile AI Meta recently unveiled Llama 3.2, a significant advancement in AI featuring powerful vision capabilities and lightweight text models optimized for mobile devices. Building on the success o

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

Choosing the Best AI Voice Generator: Top Options Reviewed

Apr 02, 2025 pm 06:12 PM

The article reviews top AI voice generators like Google Cloud, Amazon Polly, Microsoft Azure, IBM Watson, and Descript, focusing on their features, voice quality, and suitability for different needs.

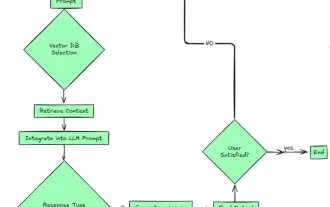

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

Top 7 Agentic RAG System to Build AI Agents

Mar 31, 2025 pm 04:25 PM

2024 witnessed a shift from simply using LLMs for content generation to understanding their inner workings. This exploration led to the discovery of AI Agents – autonomous systems handling tasks and decisions with minimal human intervention. Buildin

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

This week's AI landscape: A whirlwind of advancements, ethical considerations, and regulatory debates. Major players like OpenAI, Google, Meta, and Microsoft have unleashed a torrent of updates, from groundbreaking new models to crucial shifts in le