Complex Reasoning in LLMs: Why Do Smaller Models Struggle?

This research paper, "Not All LLM Reasoners Are Created Equal," examines the limitations of large language models (LLMs) in complex reasoning tasks, particularly those requiring multi-step problem-solving. Although LLMs score well on standard grade-school math benchmarks, their performance degrades significantly on interconnected questions where the answer to one problem is needed to solve the next, a setting referred to as "compositional reasoning."

The study, conducted by researchers from Mila, Google DeepMind, and Microsoft Research, reveals a surprising weakness in smaller, more cost-efficient LLMs. These models handle simpler tasks well but struggle with the "second-hop reasoning" needed to solve chained problems. The authors attribute this not to data leakage but to an inability to keep track of context and logically connect the parts of a problem. Instruction tuning, a common technique for improving performance, yields inconsistent benefits for smaller models and sometimes leads to overfitting.

Key Findings:

- Smaller LLMs exhibit a significant "reasoning gap" when tackling compositional problems.

- Performance drops dramatically when solving interconnected questions.

- Instruction tuning yields inconsistent improvements in smaller models.

- This reasoning limitation restricts the reliability of smaller LLMs in real-world applications.

- Even specialized math models struggle with compositional reasoning.

- More effective training methods are needed to enhance multi-step reasoning capabilities.

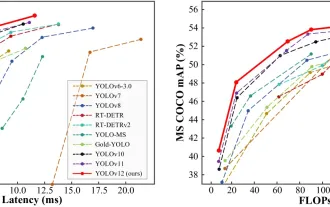

The paper uses a compositional Grade-School Math (GSM) test to illustrate this gap. The test involves two linked questions, where the answer to the first (Q1) becomes a variable (X) in the second (Q2). The results show that most models perform far worse on the compositional task than predicted by their performance on individual questions. Larger, more powerful models like GPT-4o demonstrate superior reasoning abilities, while smaller, cost-effective models, even those specialized in math, show a substantial performance decline.
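To make the Q1 → X → Q2 structure concrete, here is a minimal sketch of how one compositional item could be assembled. The class and function names (`Question`, `ChainedQuestion`, `compose`), the prompt wording ("Let X be the answer to Q1."), and the toy questions are hypothetical illustrations, not the paper's actual templates or data.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Question:
    text: str
    answer: int  # ground-truth numeric answer


@dataclass
class ChainedQuestion:
    template: str  # question text that refers to the unknown "X"
    solve: Callable[[int], int]  # ground-truth answer as a function of X


def compose(q1: Question, q2: ChainedQuestion) -> tuple[str, int]:
    """Build one compositional prompt and its expected final answer (hypothetical format)."""
    prompt = (
        f"Q1: {q1.text}\n"
        "Let X be the answer to Q1.\n"
        f"Q2: {q2.template}"
    )
    # The correct Q2 answer depends on the correct Q1 answer being carried over as X.
    return prompt, q2.solve(q1.answer)


# Hypothetical toy questions, not taken from the benchmark.
q1 = Question("Sam has 3 boxes with 4 apples in each. How many apples does Sam have?", 12)
q2 = ChainedQuestion(
    "A baker uses X eggs per batch and bakes 5 batches. How many eggs does she use?",
    lambda x: 5 * x,
)

prompt, expected = compose(q1, q2)
print(prompt)
print(expected)  # 60
```

A model that answers Q1 correctly but mishandles X in Q2 still gets the final answer wrong, which is exactly the second-hop failure the study highlights.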

A graph comparing open-source and closed-source LLMs highlights this reasoning gap. Smaller, cost-effective models consistently show larger negative reasoning gaps, meaning they do worse on compositional tasks than their single-question accuracy would predict. GPT-4o, for example, shows a minimal gap, while models such as Phi-3-mini-4k-IT fall well short.
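A minimal sketch of how a reasoning gap like this could be computed, assuming the gap is defined as compositional accuracy minus the accuracy you would expect if solving Q1 and solving Q2 were independent events (the product of the two single-question accuracies). The function names and all numbers below are hypothetical and are not results from the paper.

```python
def accuracy(outcomes: list[bool]) -> float:
    """Fraction of items answered correctly."""
    return sum(outcomes) / len(outcomes)


def reasoning_gap(q1: list[bool], q2: list[bool], comp: list[bool]) -> float:
    """Compositional accuracy minus the accuracy expected if the two hops were independent."""
    expected = accuracy(q1) * accuracy(q2)
    return accuracy(comp) - expected


# Hypothetical outcomes for 10 items (illustrative only).
q1_results = [True] * 8 + [False] * 2    # 80% on Q1 asked alone
q2_results = [True] * 8 + [False] * 2    # 80% on Q2 asked alone
comp_results = [True] * 5 + [False] * 5  # 50% on the chained pairs

gap = reasoning_gap(q1_results, q2_results, comp_results)
print(f"{gap:+.2f}")  # -0.14
```

Under this assumption, a model with 80% accuracy on each question alone would be expected to reach about 64% on the chained pairs; scoring 50% instead gives a negative gap of roughly 14 points.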

Further analysis reveals that the reasoning gap is not solely due to benchmark leakage. The issues stem from overfitting to benchmarks, distraction by irrelevant context, and a failure to transfer information effectively between subtasks.
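One way to probe the "failure to transfer information between subtasks" is to look at where each chained attempt goes wrong. The sketch below uses a hypothetical four-way taxonomy (not the paper's own analysis) on a toy pair whose Q1 answer is X = 12 and whose Q2 asks for 5 × X; the `classify` function and the sample predictions are illustrative assumptions.

```python
from collections import Counter
from typing import Callable


def classify(pred_x: int, pred_y: int, true_x: int, solve_q2: Callable[[int], int]) -> str:
    """Label one chained attempt by where it went wrong (hypothetical taxonomy)."""
    if pred_x == true_x:
        # First hop right: any remaining error happened while solving Q2.
        return "correct" if pred_y == solve_q2(true_x) else "second_hop_error"
    # First hop wrong: check whether the model at least carried its own X into Q2.
    if pred_y == solve_q2(pred_x):
        return "propagated_error"
    return "compound_error"


def solve_q2(x: int) -> int:
    return 5 * x  # toy Q2: X eggs per batch, 5 batches


# Hypothetical (predicted X, predicted Q2 answer) pairs for a pair whose true X is 12.
attempts = [(12, 60), (12, 55), (10, 50), (10, 40)]
counts = Counter(classify(x, y, 12, solve_q2) for x, y in attempts)
print(counts)
```

A high share of `second_hop_error` (first answer right, second answer wrong) would point to the transfer problem rather than to weak single-step arithmetic.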

The study concludes that improving compositional reasoning will require new training approaches. Instruction tuning and math specialization offer some benefits but are insufficient to close the reasoning gap, so alternative methods, such as code-based reasoning, may be needed before smaller, more cost-effective LLMs can reliably handle complex, multi-step reasoning tasks.
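As an illustration of the general idea behind code-based reasoning, the model writes a program whose execution produces the answer, so the intermediate value from the first hop becomes an explicit variable instead of a number restated in prose. The sketch below is a hypothetical solution to a toy two-hop problem (Q1: 3 boxes of 4 apples; Q2: X eggs per batch, 5 batches); it is not the paper's prompt format or a method it evaluates in this exact form.

```python
def solve_q1() -> int:
    # Toy Q1: 3 boxes with 4 apples in each.
    boxes, apples_per_box = 3, 4
    return boxes * apples_per_box


def solve_q2(x: int) -> int:
    # Toy Q2: X eggs per batch, 5 batches.
    batches = 5
    return x * batches


# The first-hop result is passed explicitly as an argument to the second hop.
x = solve_q1()
answer = solve_q2(x)
print(x, answer)  # 12 60
```

The design choice here is that the dependency between sub-problems is encoded in the program's data flow, which an interpreter executes exactly, rather than left to the model to track across free-form text.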

The above is the detailed content of Complex Reasoning in LLMs: Why do Smaller Models Struggle?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1384

1384

52

52

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

The article discusses AI models surpassing ChatGPT, like LaMDA, LLaMA, and Grok, highlighting their advantages in accuracy, understanding, and industry impact.(159 characters)

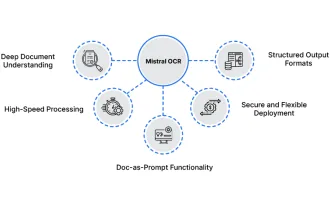

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

Mistral OCR: Revolutionizing Retrieval-Augmented Generation with Multimodal Document Understanding Retrieval-Augmented Generation (RAG) systems have significantly advanced AI capabilities, enabling access to vast data stores for more informed respons

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.