How to Fine-tune LLMs to 1.58 bits? - Analytics Vidhya

Exploring the Efficiency of 1.58-bit Quantized LLMs

Large Language Models (LLMs) are rapidly increasing in size and complexity, leading to escalating computational costs and energy consumption. Quantization, a technique that reduces the precision of model parameters, offers a promising solution. This article looks at BitNet, an architecture that represents each weight with roughly 1.58 bits, and at whether existing pre-trained LLMs can be fine-tuned down to that precision.

The Challenge of Quantization

Traditional LLMs utilize 16-bit (FP16) or 32-bit (FP32) floating-point precision. Quantization reduces this precision to lower-bit formats (e.g., 8-bit, 4-bit), resulting in memory savings and faster computation. However, this often comes at the expense of accuracy. The key challenge lies in minimizing the performance trade-off inherent in extreme precision reduction.
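To make the memory savings concrete, the sketch below estimates weight storage at several bit widths. The 8-billion parameter count is an illustrative assumption, and the estimate covers weights only: it ignores activations, any layers kept in higher precision, and packing overhead.

```python
def weight_memory_gb(num_params: int, bits_per_param: float) -> float:
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

NUM_PARAMS = 8_000_000_000  # assumption: an 8B-parameter model

# Bit widths mentioned in the text, plus the 1.58-bit ternary case.
for name, bits in [("FP32", 32), ("FP16", 16), ("8-bit", 8), ("4-bit", 4), ("1.58-bit", 1.58)]:
    print(f"{name:>8}: {weight_memory_gb(NUM_PARAMS, bits):6.2f} GB")
```

Under these assumptions, going from FP16 to 1.58 bits shrinks the weights by roughly a factor of ten.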

BitNet: A Novel Approach

BitNet introduces a 1.58-bit LLM architecture in which each weight takes one of the three ternary values {-1, 0, 1}. Three possible values carry log2(3) ≈ 1.58 bits of information, which is where the name comes from. The architecture is built on the BitLinear layer, which replaces the standard linear layers in the model's Multi-Head Attention and Feed-Forward Networks. Because rounding weights to ternary values is not differentiable, BitNet trains with the Straight-Through Estimator (STE).
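A minimal sketch of how full-precision weights can be mapped to ternary values. It assumes absmean scaling (divide by the mean absolute weight, round, then clip to [-1, 1]). The function name and the sample weights are hypothetical, and plain Python lists stand in for tensors.

```python
def ternary_quantize(weights, eps=1e-8):
    """Map a flat list of floats to values in {-1, 0, 1} plus one shared scale."""
    scale = sum(abs(w) for w in weights) / len(weights) + eps  # mean |w|
    quantized = [max(-1, min(1, round(w / scale))) for w in weights]
    return quantized, scale

weights = [0.9, -0.05, 0.4, -1.2, 0.02, -0.6]  # illustrative values
q, scale = ternary_quantize(weights)
print(q)      # [1, 0, 1, -1, 0, -1]
print(scale)  # the scale is kept so outputs can be rescaled after the matmul
```

Weights that are small relative to the scale become 0, and everything else keeps only its sign.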

Straight-Through Estimator (STE)

The STE is what makes training with discrete weights possible. In the forward pass the weights are quantized. In the backward pass the quantization step is treated as if it were the identity function, so gradients propagate through it to the underlying full-precision weights.
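The idea can be illustrated with a single weight and a hand-written gradient. This is a toy sketch under stated assumptions (one scalar weight, squared-error loss, fixed scale of 1.0, hypothetical function names), not BitNet's training code.

```python
def quantize(w, scale=1.0):
    """Round w/scale to the nearest value in {-1, 0, 1}, then rescale."""
    return scale * max(-1, min(1, round(w / scale)))

def ste_step(w_latent, x, target, lr=0.1):
    """One SGD step on loss = (quantize(w) * x - target)^2 using the STE."""
    w_q = quantize(w_latent)             # forward pass uses the discrete weight
    error = w_q * x - target
    grad_wrt_w_q = 2.0 * error * x       # exact gradient w.r.t. the quantized weight
    # STE: treat d(w_q)/d(w_latent) as 1, so the same gradient updates the latent weight.
    return w_latent - lr * grad_wrt_w_q

w = 0.2  # latent full-precision weight; it initially quantizes to 0
for _ in range(5):
    w = ste_step(w, x=1.0, target=1.0)

print(w, quantize(w))
```

The true derivative of rounding is zero almost everywhere, so without the STE the latent weight would never move. With it, small updates accumulate until the weight crosses the rounding threshold and the quantized value flips from 0 to 1.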

Fine-tuning from Pre-trained Models

While BitNet demonstrates impressive results when training from scratch, the resource requirements for pre-training are substantial. This article explores the feasibility of fine-tuning existing pre-trained models (e.g., Llama3 8B) to 1.58 bits. This approach faces challenges, as quantization can lead to information loss. The authors address this by employing dynamic lambda scheduling and exploring alternative quantization methods (per-row, per-column, per-group).
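The granularity options differ in how many weights share one quantization scale. The sketch below compares a single per-tensor scale with per-row, per-column, and per-group scales on a tiny matrix. The helper names, the absmean scale, and the definition of a group as a contiguous slice of a row are assumptions made for illustration.

```python
def absmean(values, eps=1e-8):
    """Mean absolute value, used here as the quantization scale."""
    return sum(abs(v) for v in values) / len(values) + eps

def ternary(values, scale):
    """Round each value/scale to the nearest element of {-1, 0, 1}."""
    return [max(-1, min(1, round(v / scale))) for v in values]

def per_row_scales(matrix):
    return [absmean(row) for row in matrix]

def per_column_scales(matrix):
    return [absmean(col) for col in zip(*matrix)]

def per_group_scales(matrix, group_size):
    """One scale per contiguous group of `group_size` values within each row."""
    return [
        [absmean(row[i:i + group_size]) for i in range(0, len(row), group_size)]
        for row in matrix
    ]

W = [
    [0.5, -0.5, 4.0, -4.0],  # row with large weights
    [0.1, 0.1, -0.1, -0.1],  # row with small weights
]

tensor_scale = absmean([v for row in W for v in row])
row_scales = per_row_scales(W)

# With one shared scale, the small row collapses to zeros...
small_row_shared = ternary(W[1], tensor_scale)
# ...while its own per-row scale preserves the sign pattern.
small_row_own = ternary(W[1], row_scales[1])
print(small_row_shared, small_row_own)
```

Finer-grained scales preserve more of the original weight structure, at the cost of storing more scale values.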

Optimization Strategies

The research highlights the importance of careful optimization during fine-tuning. Dynamic lambda scheduling, which gradually introduces quantization during training, proves crucial in mitigating information loss and improving convergence. Experiments with different lambda scheduling functions (linear, exponential, sigmoid) are conducted to find the optimal approach.
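One way to picture lambda scheduling is as a blend between the full-precision weight and its quantized version, with lambda rising from 0 to 1 as training progresses. The three schedule shapes below are assumed forms chosen to match the names in the text. The constants `k` and the function names are hypothetical, not values taken from the research.

```python
import math

def linear_lambda(progress):
    """Ramp from 0 to 1 over the first half of training, then hold at 1."""
    return min(2.0 * progress, 1.0)

def exponential_lambda(progress, k=5.0):
    """Start slowly and rise sharply near the end: (e^(k*p) - 1) / (e^k - 1)."""
    return (math.exp(k * progress) - 1.0) / (math.exp(k) - 1.0)

def sigmoid_lambda(progress, k=10.0):
    """S-shaped ramp centred on the midpoint of training."""
    return 1.0 / (1.0 + math.exp(-k * (progress - 0.5)))

def blend(w, w_quantized, lam):
    """Effective weight: full precision at lam=0, fully quantized at lam=1."""
    return w + lam * (w_quantized - w)

total_steps = 10
for step in range(0, total_steps + 1, 5):
    p = step / total_steps  # training progress in [0, 1]
    print(step, linear_lambda(p), exponential_lambda(p), sigmoid_lambda(p))
```

With lambda near 0 the model still behaves like the pre-trained network, so early training is not disrupted by a sudden jump to ternary weights.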

Experimental Results and Analysis

The study presents comprehensive experimental results, comparing the performance of fine-tuned 1.58-bit models against various baselines. The results demonstrate that while some performance gaps remain compared to full-precision models, the efficiency gains are substantial. The impact of model size and the choice of datasets are also analyzed.

Hugging Face Integration

The fine-tuned models are made accessible through Hugging Face, enabling easy integration into various applications. The article provides code examples demonstrating how to load and utilize these models.

Conclusion

BitNet represents a significant advancement in LLM efficiency. Fine-tuning to 1.58 bits presents challenges, and some performance gap relative to full-precision models remains, but the research suggests that fine-tuned models can approach the performance of higher-precision models at drastically reduced computational cost and energy consumption. This opens up possibilities for deploying large-scale LLMs on resource-constrained devices and for reducing the environmental impact of AI.


The above is the detailed content of How to Fine-tune LLMs to 1.58 bits? - Analytics Vidhya. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1377

1377

52

52

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

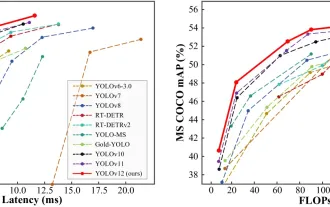

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Google's GenCast: Weather Forecasting With GenCast Mini Demo

Mar 16, 2025 pm 01:46 PM

Google's GenCast: Weather Forecasting With GenCast Mini Demo

Mar 16, 2025 pm 01:46 PM

Google DeepMind's GenCast: A Revolutionary AI for Weather Forecasting Weather forecasting has undergone a dramatic transformation, moving from rudimentary observations to sophisticated AI-powered predictions. Google DeepMind's GenCast, a groundbreak

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

The article discusses AI models surpassing ChatGPT, like LaMDA, LLaMA, and Grok, highlighting their advantages in accuracy, understanding, and industry impact.(159 characters)

o1 vs GPT-4o: Is OpenAI's New Model Better Than GPT-4o?

Mar 16, 2025 am 11:47 AM

o1 vs GPT-4o: Is OpenAI's New Model Better Than GPT-4o?

Mar 16, 2025 am 11:47 AM

OpenAI's o1: A 12-Day Gift Spree Begins with Their Most Powerful Model Yet December's arrival brings a global slowdown, snowflakes in some parts of the world, but OpenAI is just getting started. Sam Altman and his team are launching a 12-day gift ex