Google's SigLIP: A Significant Momentum in CLIP's Framework

Google's SigLIP: A Superior Image Classification Model

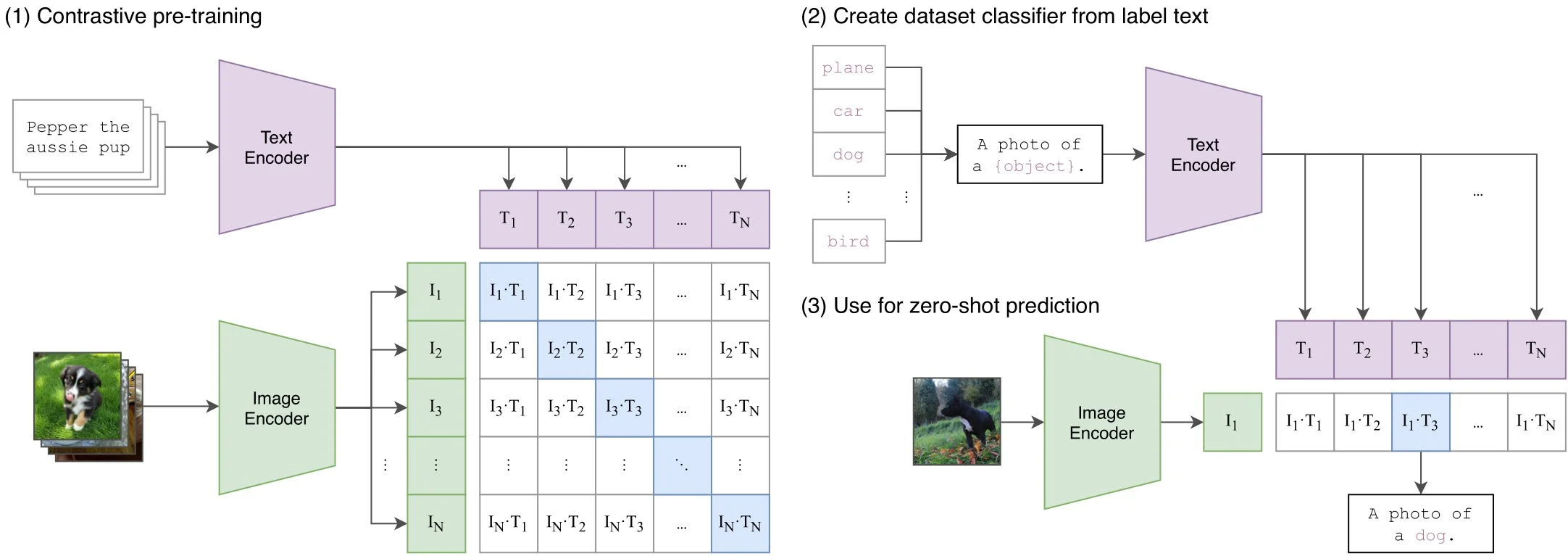

Image classification is a core computer vision task, and newer models keep improving its accuracy. Zero-shot classification and image-text pair analysis are two prominent applications. Google's SigLIP model stands out with strong benchmark results. It is an image-text embedding model built on the CLIP framework, but trained with a pairwise sigmoid loss in place of CLIP's softmax-based contrastive loss.

SigLIP processes image-text pairs, generating vector representations and match probabilities. The key differentiator is the sigmoid loss function: it scores each image-text pair independently as a match or a non-match, rather than normalizing over all pairs in a batch to find the overall best match as CLIP's softmax loss does. This keeps the model efficient in smaller training setups while remaining scalable.
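To make the per-pair idea concrete, here is a minimal sketch of a pairwise sigmoid loss in plain Python. It is an illustration, not SigLIP's training code: the function names, the toy 2-dimensional embeddings, and the `temperature` and `bias` values are all assumptions chosen for readability.

```python
import math

def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    return min(x, 0.0) - math.log1p(math.exp(-abs(x)))

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def pairwise_sigmoid_loss(image_embs, text_embs, temperature=10.0, bias=-10.0):
    """Treat every (image, text) pair as its own binary match problem.

    Pair (i, j) is a positive when i == j and a negative otherwise.
    temperature and bias are illustrative values, not tuned ones.
    """
    n = len(image_embs)
    total = 0.0
    for i, img in enumerate(image_embs):
        for j, txt in enumerate(text_embs):
            label = 1.0 if i == j else -1.0
            logit = temperature * dot(img, txt) + bias
            total += log_sigmoid(label * logit)
    return -total / n

# Toy, already-normalized embeddings (hypothetical data).
images = [[1.0, 0.0], [0.0, 1.0]]
aligned_texts = [[1.0, 0.0], [0.0, 1.0]]   # text i matches image i
shuffled_texts = [[0.0, 1.0], [1.0, 0.0]]  # text i matches the other image

aligned_loss = pairwise_sigmoid_loss(images, aligned_texts)
shuffled_loss = pairwise_sigmoid_loss(images, shuffled_texts)
```

Each term in the double loop depends only on its own pair, so no normalization across the batch is needed. With the toy data above, the aligned pairing yields a lower loss than the shuffled one.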

Key Features and Capabilities:

- Multimodal Model: Combines image and text processing for enhanced accuracy.

- Vision Transformer Encoder: Divides images into patches for efficient vector embedding.

- Transformer Encoder for Text: Converts text sequences into dense embeddings.

- Zero-Shot Classification: Classifies images without prior training on specific labels.

- Image-Text Similarity Scores: Provides scores reflecting the similarity between images and their descriptions.

- Scalable Architecture: Handles large datasets efficiently thanks to the sigmoid loss function.

Model Architecture:

SigLIP employs a CLIP-like architecture but with crucial modifications. The image undergoes processing via a vision transformer encoder, while text is handled by a transformer encoder. This multimodal approach allows for both image-based and text-based input, enabling diverse applications.

The model's contrastive learning framework aligns image and text representations, improving overall performance.

Performance and Scalability:

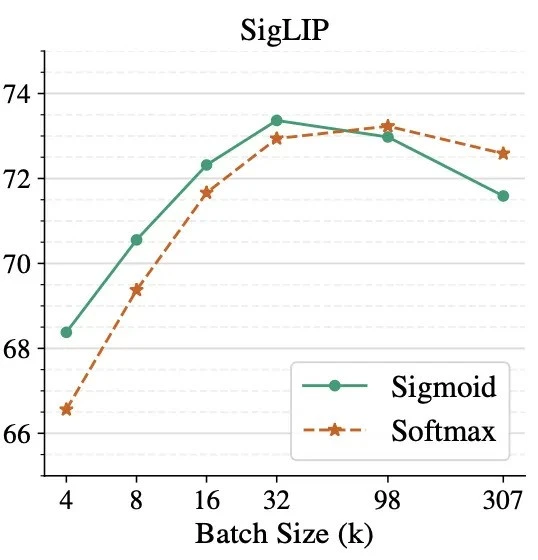

The sigmoid loss function allows for significant scaling improvements compared to CLIP. While further optimization is ongoing (e.g., with SoViT-400m), SigLIP already shows promising results.

Inference with SigLIP:

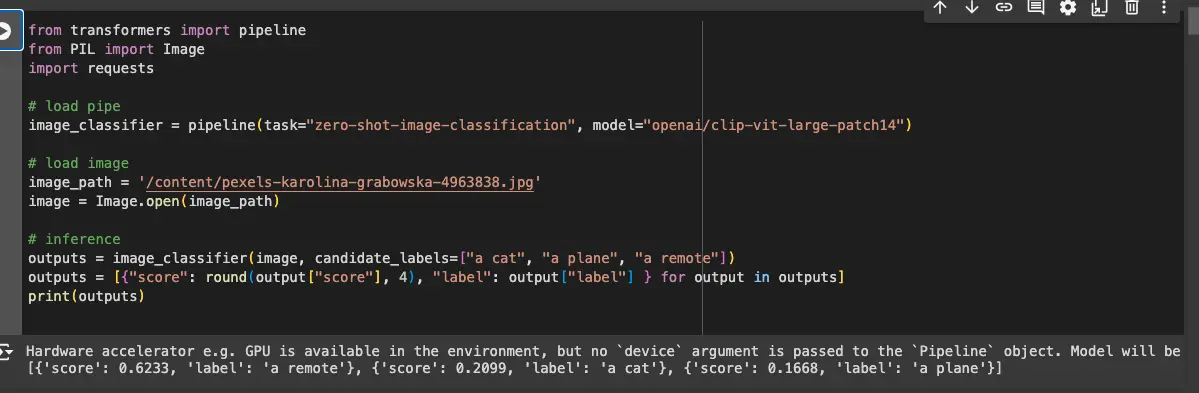

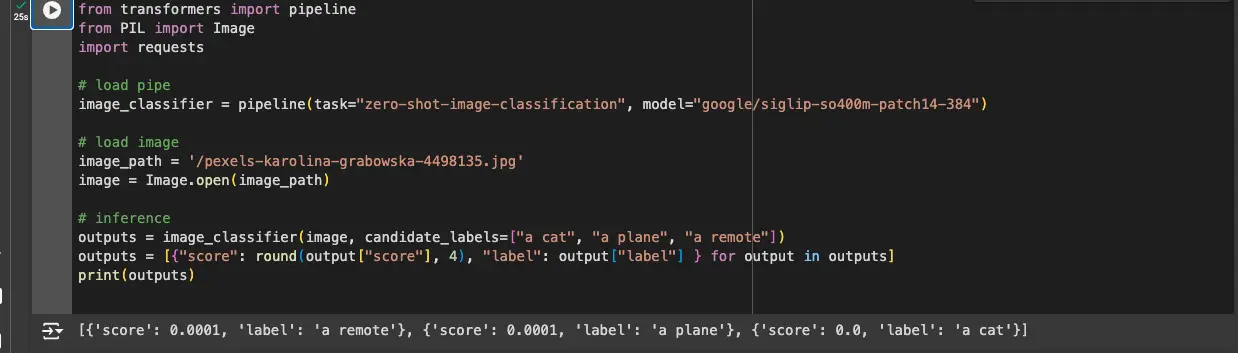

Here's a simplified guide to running inference:

- Import Libraries: Use `transformers`, `PIL`, and `requests`.

- Load the Model: Employ the `pipeline` function from `transformers` to load the pre-trained `google/siglip-so400m-patch14-384` model.

- Prepare the Image: Load the image using PIL from a local path or URL via `requests`.

- Perform Inference: Use the loaded model to obtain the `logits` (scores) for the image against candidate labels.
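The steps above can be sketched roughly as follows. This is one possible arrangement, assuming the `zero-shot-image-classification` pipeline task from `transformers`; the helper names `load_image`, `classify`, and `best_match` are made up for this example, and the heavy imports are done inside the functions so the small helper can be used without loading the model.

```python
import io

def load_image(source: str):
    """Open an image from a URL (via requests) or from a local path."""
    import requests
    from PIL import Image

    if source.startswith(("http://", "https://")):
        response = requests.get(source, timeout=30)
        response.raise_for_status()
        return Image.open(io.BytesIO(response.content))
    return Image.open(source)

def classify(source, candidate_labels,
             model_name="google/siglip-so400m-patch14-384"):
    """Score one image against candidate labels with the pre-trained model.

    Returns the pipeline output: a list of dicts with "score" and "label".
    """
    from transformers import pipeline

    classifier = pipeline(task="zero-shot-image-classification",
                          model=model_name)
    image = load_image(source)
    return classifier(image, candidate_labels=candidate_labels)

def best_match(results):
    """Pick the highest-scoring entry from the pipeline output."""
    return max(results, key=lambda r: r["score"])
```

A call might look like `best_match(classify("path/to/image.jpg", ["a cat", "a dog"]))`, where the path and labels are placeholders. The first call downloads the model weights, so it needs network access and some disk space.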

SigLIP vs. CLIP:

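The key advantage of SigLIP lies in its sigmoid loss function. CLIP's softmax normalizes scores across the candidate labels so that they sum to 1, which forces a confident-looking pick even when the image's class isn't among the labels. SigLIP scores each label independently, so every label can receive a low score when none of them fits, giving more accurate and nuanced results.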
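A small numeric sketch shows the difference. The logits below are hypothetical values for three candidate labels that all fit the image poorly; they do not come from a real model.

```python
import math

def softmax(logits):
    """Normalize logits into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(x):
    """Map a single logit to an independent probability."""
    return 1.0 / (1.0 + math.exp(-x))

# Hypothetical logits: none of the three labels is a good match.
logits = [-4.0, -5.0, -6.0]

softmax_probs = softmax(logits)               # forced to sum to 1
sigmoid_probs = [sigmoid(x) for x in logits]  # each label judged alone

print("softmax:", [round(p, 3) for p in softmax_probs])
print("sigmoid:", [round(p, 3) for p in sigmoid_probs])
```

Softmax still gives the first label most of the probability mass, because it only compares the labels with each other. The sigmoid scores all stay close to zero, which signals that no label matches.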

Applications:

SigLIP's capabilities extend to various applications:

- Image Search: Building search engines based on text descriptions.

- Image Captioning: Generating captions for images.

- Visual Question Answering: Answering questions about images.
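As an illustration of the image search use case, the sketch below ranks images by cosine similarity between a text query embedding and precomputed image embeddings. The file names and 3-dimensional vectors are invented for the example; in practice the embeddings would come from the model's text and image encoders and have far more dimensions.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def search(query_emb, image_index, top_k=2):
    """Return the top_k (name, score) pairs, best match first."""
    scored = [(name, cosine_similarity(query_emb, emb))
              for name, emb in image_index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]

# Hypothetical precomputed image embeddings.
image_index = {
    "beach.jpg": [0.9, 0.1, 0.0],
    "forest.jpg": [0.1, 0.9, 0.1],
    "city.jpg": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of a text query such as "a sunny beach".
query_emb = [0.8, 0.2, 0.1]

results = search(query_emb, image_index)
```

Because text and images share one embedding space, the same ranking function works for text-to-image and image-to-image queries.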

Conclusion:

Google's SigLIP represents a significant advancement in image classification. Its sigmoid loss function and efficient architecture lead to improved accuracy and scalability, making it a powerful tool for various computer vision tasks.

Key Takeaways:

- SigLIP utilizes a sigmoid loss function for superior zero-shot classification performance.

- Its multimodal approach enhances accuracy and versatility.

- It's highly scalable and suitable for large-scale applications.

Frequently Asked Questions:

- Q1: What's the core difference between SigLIP and CLIP? A1: SigLIP employs a sigmoid loss function for improved accuracy in zero-shot classification.

- Q2: What are SigLIP's primary applications? A2: Image classification, captioning, retrieval, and visual question answering.

- Q3: How does SigLIP handle zero-shot classification? A3: By comparing images to provided text labels, even without prior training on those labels.

- Q4: Why is the sigmoid loss function beneficial? A4: It allows for independent evaluation of image-text pairs, leading to more accurate predictions.

(Note: Replace "https://www.php.cn/https://www.php.cn/https://www.php.cn/link/2bec63f5d312303621583b97ff7c68bf/2bec63f5d312303621583b97ff7c68bf/2bec63f5d312303621583b97ff7c68bf" placeholders with actual https://www.php.cn/https://www.php.cn/https://www.php.cn/link/2bec63f5d312303621583b97ff7c68bf/2bec63f5d312303621583b97ff7c68bf/2bec63f5d312303621583b97ff7c68bfs to the resources.)

The above is the detailed content of Google's SigLIP: A Significant Momentum in CLIP's Framework. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1382

1382

52

52

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

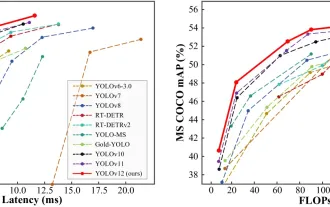

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

The article discusses AI models surpassing ChatGPT, like LaMDA, LLaMA, and Grok, highlighting their advantages in accuracy, understanding, and industry impact.(159 characters)

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

Mistral OCR: Revolutionizing Retrieval-Augmented Generation with Multimodal Document Understanding Retrieval-Augmented Generation (RAG) systems have significantly advanced AI capabilities, enabling access to vast data stores for more informed respons