Classes and Objects in PHP5_PHP教程

第 1 页 第一节 面向对象编程 [1]

第 1 页 第一节 面向对象编程 [1] 第 2 页 第二节 对象模型 [2]

第 2 页 第二节 对象模型 [2] 第 3 页 第三节 定义一个类 [3]

第 3 页 第三节 定义一个类 [3] 第 4 页 第四节 构造函数和析构函数 [4]

第 4 页 第四节 构造函数和析构函数 [4] 第 5 页 第五节 克隆 [5]

第 5 页 第五节 克隆 [5] 第 6 页 第六节 访问属性和方法 [6]

第 6 页 第六节 访问属性和方法 [6] 第 7 页 第七节 类的静态成员 [7]

第 7 页 第七节 类的静态成员 [7] 第 8 页 第八节 访问方式 [8]

第 8 页 第八节 访问方式 [8] 第 9 页 第九节 绑定 [9]

第 9 页 第九节 绑定 [9] 第 10 页 第十节 抽象方法和抽象类 [10]

第 10 页 第十节 抽象方法和抽象类 [10] 第 11 页 第十一节 重载 [11]

第 11 页 第十一节 重载 [11] 第 12 页 第十二节 类的自动加载 [12]

第 12 页 第十二节 类的自动加载 [12] 第 13 页 第十三节 对象串行化 [13]

第 13 页 第十三节 对象串行化 [13] 第 14 页 第十四节 命名空间 [14]

第 14 页 第十四节 命名空间 [14] 第 15 页 第十五节 Zend引擎的发展 [15]

第 15 页 第十五节 Zend引擎的发展 [15]

|

|

作者:Leon Atkinson 翻译:Haohappy 面向对象编程被设计来为大型软件项目提供解决方案,尤其是多人合作的项目. 当源代码增长到一万行甚至更多的时候,每一个更动都可能导致不希望的副作用. 这种情况发生于模块间结成秘密联盟的时候,就像第一次世界大战前的欧洲. |

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1381

1381

52

52

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Comprehensively surpassing DPO: Chen Danqi's team proposed simple preference optimization SimPO, and also refined the strongest 8B open source model

Jun 01, 2024 pm 04:41 PM

Comprehensively surpassing DPO: Chen Danqi's team proposed simple preference optimization SimPO, and also refined the strongest 8B open source model

Jun 01, 2024 pm 04:41 PM

In order to align large language models (LLMs) with human values and intentions, it is critical to learn human feedback to ensure that they are useful, honest, and harmless. In terms of aligning LLM, an effective method is reinforcement learning based on human feedback (RLHF). Although the results of the RLHF method are excellent, there are some optimization challenges involved. This involves training a reward model and then optimizing a policy model to maximize that reward. Recently, some researchers have explored simpler offline algorithms, one of which is direct preference optimization (DPO). DPO learns the policy model directly based on preference data by parameterizing the reward function in RLHF, thus eliminating the need for an explicit reward model. This method is simple and stable

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

At the forefront of software technology, UIUC Zhang Lingming's group, together with researchers from the BigCode organization, recently announced the StarCoder2-15B-Instruct large code model. This innovative achievement achieved a significant breakthrough in code generation tasks, successfully surpassing CodeLlama-70B-Instruct and reaching the top of the code generation performance list. The unique feature of StarCoder2-15B-Instruct is its pure self-alignment strategy. The entire training process is open, transparent, and completely autonomous and controllable. The model generates thousands of instructions via StarCoder2-15B in response to fine-tuning the StarCoder-15B base model without relying on expensive manual annotation.

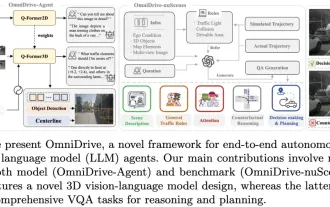

LLM is all done! OmniDrive: Integrating 3D perception and reasoning planning (NVIDIA's latest)

May 09, 2024 pm 04:55 PM

LLM is all done! OmniDrive: Integrating 3D perception and reasoning planning (NVIDIA's latest)

May 09, 2024 pm 04:55 PM

Written above & the author’s personal understanding: This paper is dedicated to solving the key challenges of current multi-modal large language models (MLLMs) in autonomous driving applications, that is, the problem of extending MLLMs from 2D understanding to 3D space. This expansion is particularly important as autonomous vehicles (AVs) need to make accurate decisions about 3D environments. 3D spatial understanding is critical for AVs because it directly impacts the vehicle’s ability to make informed decisions, predict future states, and interact safely with the environment. Current multi-modal large language models (such as LLaVA-1.5) can often only handle lower resolution image inputs (e.g.) due to resolution limitations of the visual encoder, limitations of LLM sequence length. However, autonomous driving applications require

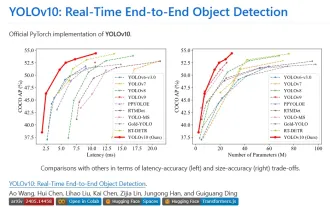

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

1. Introduction Over the past few years, YOLOs have become the dominant paradigm in the field of real-time object detection due to its effective balance between computational cost and detection performance. Researchers have explored YOLO's architectural design, optimization goals, data expansion strategies, etc., and have made significant progress. At the same time, relying on non-maximum suppression (NMS) for post-processing hinders end-to-end deployment of YOLO and adversely affects inference latency. In YOLOs, the design of various components lacks comprehensive and thorough inspection, resulting in significant computational redundancy and limiting the capabilities of the model. It offers suboptimal efficiency, and relatively large potential for performance improvement. In this work, the goal is to further improve the performance efficiency boundary of YOLO from both post-processing and model architecture. to this end

Tsinghua University took over and YOLOv10 came out: the performance was greatly improved and it was on the GitHub hot list

Jun 06, 2024 pm 12:20 PM

Tsinghua University took over and YOLOv10 came out: the performance was greatly improved and it was on the GitHub hot list

Jun 06, 2024 pm 12:20 PM

The benchmark YOLO series of target detection systems has once again received a major upgrade. Since the release of YOLOv9 in February this year, the baton of the YOLO (YouOnlyLookOnce) series has been passed to the hands of researchers at Tsinghua University. Last weekend, the news of the launch of YOLOv10 attracted the attention of the AI community. It is considered a breakthrough framework in the field of computer vision and is known for its real-time end-to-end object detection capabilities, continuing the legacy of the YOLO series by providing a powerful solution that combines efficiency and accuracy. Paper address: https://arxiv.org/pdf/2405.14458 Project address: https://github.com/THU-MIG/yo

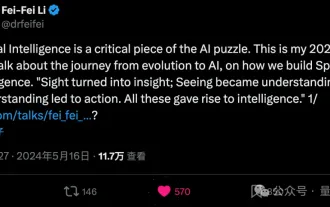

Li Feifei reveals the entrepreneurial direction of 'spatial intelligence': visualization turns into insight, seeing becomes understanding, and understanding leads to action

Jun 01, 2024 pm 02:55 PM

Li Feifei reveals the entrepreneurial direction of 'spatial intelligence': visualization turns into insight, seeing becomes understanding, and understanding leads to action

Jun 01, 2024 pm 02:55 PM

After Stanford's Feifei Li started his business, he unveiled the new concept "spatial intelligence" for the first time. This is not only her entrepreneurial direction, but also the "North Star" that guides her. She considers it "the key puzzle piece to solve the artificial intelligence problem." Visualization leads to insight; seeing leads to understanding; understanding leads to action. Based on Li Feifei's 15-minute TED talk, which is fully open to the public, it starts from the origin of life evolution hundreds of millions of years ago, to how humans are not satisfied with what nature has given them and develops artificial intelligence, to how to build spatial intelligence in the next step. Nine years ago, Li Feifei introduced the newly born ImageNet to the world on the same stage - one of the starting points for this round of deep learning explosion. She herself also encouraged netizens: If you watch both videos, you will be able to understand the computer vision of the past 10 years.

Beating GPT-4o in seconds, beating Llama 3 70B in 22B, Mistral AI opens its first code model

Jun 01, 2024 pm 06:32 PM

Beating GPT-4o in seconds, beating Llama 3 70B in 22B, Mistral AI opens its first code model

Jun 01, 2024 pm 06:32 PM

French AI unicorn MistralAI, which is targeting OpenAI, has made a new move: Codestral, the first large code model, was born. As an open generative AI model designed specifically for code generation tasks, Codestral helps developers write and interact with code by sharing instructions and completion API endpoints. Codestral's proficiency in coding and English allows software developers to design advanced AI applications. The parameter size of Codestral is 22B, it complies with the new MistralAINon-ProductionLicense, and can be used for research and testing purposes, but commercial use is prohibited. Currently, the model is available for download on HuggingFace. download link