Using PHP to process a TXT file and import massive data into a database

There is a TXT file containing 100,000 records in the following format:

Column 1 Column 2 Column 3 Column 4 Column 5

a 00003131 0 0 adductive#1 adducting#1 adducent#1

a 00003356 0 0 nascent#1

a 00003553 0 0 emerging#2 emergent#2

a 00003700 0.25 0 dissilient#1

…… (the remaining records follow in the same format, 100,000 in total) ……

The requirement is to import these records into a database. The data table has the following structure:

word_id: auto-increment

word: one word per row. A TXT record such as 【adductive#1 adducting#1 adducent#1】 lists three words, so it must be converted into 3 SQL records

value: the third column minus the fourth column; if the result is 0, the record is skipped and not inserted into the data table
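The conversion rule above can be sketched for a single record as follows. This is a minimal illustration, not the article's script: the helper name `parse_record` and the returned array keys are hypothetical, and the third input line is made up to show a record with several words and a non-zero value.

```php
<?php
// Hypothetical helper: turn one TXT line into zero or more table rows.
function parse_record(string $line): array
{
    // Split on whitespace into at most 5 parts; the 5th part keeps the whole word list.
    $cols = preg_split('/\s+/', trim($line), 5);
    if (count($cols) < 5) {
        return []; // blank or malformed line
    }

    // value = third column minus fourth column
    $value = (float) $cols[2] - (float) $cols[3];
    if ($value == 0) {
        return []; // records whose value is 0 are skipped
    }

    $rows = [];
    foreach (preg_split('/\s+/', $cols[4]) as $entry) {
        // "dissilient#1" becomes "dissilient"
        $word = explode('#', $entry)[0];
        $rows[] = ['word' => $word, 'value' => $value];
    }
    return $rows;
}

print_r(parse_record("a 00003700 0.25 0 dissilient#1"));         // one row
print_r(parse_record("a 00003356 0 0 nascent#1"));               // skipped, value is 0
print_r(parse_record("a 00009999 0.5 0.125 foo#1 bar#2 baz#1")); // made-up line, three rows
```

Keeping the per-record logic in one small function makes it easy to test on a handful of lines before running the full import.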

[php]

<?php
ini_set('memory_limit', '-1'); // Lift the memory limit before loading the file, otherwise an error is reported

$file  = 'words.txt'; // TXT source file with 100,000 records
$lines = file_get_contents($file);
$line  = explode("\n", $lines);

$i   = 0;
$sql = "INSERT INTO words_sentiment (word,senti_type,senti_value,word_type) VALUES ";

foreach ($line as $key => $li)
{
    // Split on whitespace into at most 5 columns, so the fifth column keeps the whole word list
    $arr = preg_split('/\s+/', trim($li), 5);
    if (count($arr) < 5)
    {
        continue; // skip blank or malformed lines
    }

    $senti_value = $arr[2] - $arr[3];
    if ($senti_value != 0)
    {
        if ($i >= 20000 && $i < 25000) // Import in batches to avoid failure
        {
            $mm = explode(" ", $arr[4]);
            foreach ($mm as $m) // [adductive#1 adducting#1 adducent#1]: this TXT record becomes 3 SQL records
            {
                $nn   = explode("#", $m);
                $word = $nn[0];
                // A word may contain a single quote (such as jack's), so it is wrapped in escaped double quotes
                $sql .= "(\"$word\",1,$senti_value,2),";
            }
        }
        $i++;
    }
}

//echo $i;
$sql = substr($sql, 0, -1); // Remove the trailing comma
//echo $sql;

// Import in batches of 5,000 records, each taking about 40 seconds;
// importing too many at once exceeds max_execution_time and fails
file_put_contents('20000-25000.txt', $sql);
?>
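Instead of editing the `$i` range by hand for each batch, the rows can be split into batches automatically. The following is a sketch under assumptions, not the article's method: the helper name `build_batch_inserts` and the row layout (`word`, `value`) are hypothetical, and it emits `?` placeholders so that words containing quotes need no manual escaping when the statement is run through a prepared statement.

```php
<?php
// Hypothetical helper: build one multi-row INSERT per batch, with "?" placeholders.
function build_batch_inserts(array $rows, int $batchSize): array
{
    $statements = [];
    foreach (array_chunk($rows, $batchSize) as $batch) {
        // One "(?,1,?,2)" group per row; senti_type = 1 and word_type = 2 as in the script above.
        $groups = implode(',', array_fill(0, count($batch), '(?,1,?,2)'));
        $sql = 'INSERT INTO words_sentiment (word,senti_type,senti_value,word_type) VALUES ' . $groups;

        // Flatten the batch into the parameter list, in placeholder order.
        $params = [];
        foreach ($batch as $row) {
            $params[] = $row['word'];
            $params[] = $row['value'];
        }
        $statements[] = ['sql' => $sql, 'params' => $params];
    }
    return $statements;
}

$rows = [
    ['word' => "jack's",   'value' => 0.25],
    ['word' => 'emerging', 'value' => -0.5],
    ['word' => 'nascent',  'value' => 0.125],
];
foreach (build_batch_inserts($rows, 2) as $stmt) {
    echo $stmt['sql'], "\n";
    // With a PDO connection in $pdo (an assumption, not shown here):
    // $pdo->prepare($stmt['sql'])->execute($stmt['params']);
}
```

A batch size of 5,000 would match the batches described in the script's comments; the value 2 here only keeps the demo output short.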

1. When importing massive data, pay attention to PHP's resource limits. You can raise them temporarily, for example with ini_set('memory_limit', '-1'); otherwise an error like the following is reported:

Allowed memory size of 33554432 bytes exhausted (tried to allocate 16 bytes)

2. PHP functions for working with TXT files:

file_get_contents(), file_put_contents()

3. When importing a large amount of data, it is best to import in batches to reduce the chance of failure.

4. Before a mass import, test the script several times on a small sample, for example 100 records.

5. Even when importing in batches, if PHP's memory_limit is still too low, the script cannot run.

(It is recommended to raise memory_limit in php.ini rather than with a temporary ini_set() call.)
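One way to ease the memory pressure described in notes 1 and 5 is to read the file line by line rather than loading it whole with file_get_contents(). The sketch below is an alternative to the article's approach, not part of it: the generator name `read_lines` is hypothetical, and a small temporary file stands in for words.txt.

```php
<?php
// Hypothetical helper: yield the file one line at a time instead of loading it whole.
function read_lines(string $path): Generator
{
    $handle = fopen($path, 'r');
    if ($handle === false) {
        throw new RuntimeException("Cannot open $path");
    }
    try {
        while (($line = fgets($handle)) !== false) {
            yield rtrim($line, "\r\n"); // strip the line ending only
        }
    } finally {
        fclose($handle); // always release the file handle
    }
}

// Demo with a small temporary file standing in for words.txt.
$tmp = tempnam(sys_get_temp_dir(), 'words');
file_put_contents($tmp, "a 00003356 0 0 nascent#1\na 00003700 0.25 0 dissilient#1\n");

$count = 0;
foreach (read_lines($tmp) as $line) {
    $count++; // parse the line and append it to the current batch here
}
echo $count, " lines read\n";
unlink($tmp);
```

Because only one line is held in memory at a time, memory use no longer grows with the size of the source file, although the SQL string for each batch still has to fit within memory_limit.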

http://www.bkjia.com/PHPjc/477529.html

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 brings several new features, security improvements, and performance improvements with healthy amounts of feature deprecations and removals. This guide explains how to install PHP 8.4 or upgrade to PHP 8.4 on Ubuntu, Debian, or their derivati

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

Visual Studio Code, also known as VS Code, is a free source code editor — or integrated development environment (IDE) — available for all major operating systems. With a large collection of extensions for many programming languages, VS Code can be c

Explain JSON Web Tokens (JWT) and their use case in PHP APIs.

Apr 05, 2025 am 12:04 AM

Explain JSON Web Tokens (JWT) and their use case in PHP APIs.

Apr 05, 2025 am 12:04 AM

JWT is an open standard based on JSON, used to securely transmit information between parties, mainly for identity authentication and information exchange. 1. JWT consists of three parts: Header, Payload and Signature. 2. The working principle of JWT includes three steps: generating JWT, verifying JWT and parsing Payload. 3. When using JWT for authentication in PHP, JWT can be generated and verified, and user role and permission information can be included in advanced usage. 4. Common errors include signature verification failure, token expiration, and payload oversized. Debugging skills include using debugging tools and logging. 5. Performance optimization and best practices include using appropriate signature algorithms, setting validity periods reasonably,

PHP Program to Count Vowels in a String

Feb 07, 2025 pm 12:12 PM

PHP Program to Count Vowels in a String

Feb 07, 2025 pm 12:12 PM

A string is a sequence of characters, including letters, numbers, and symbols. This tutorial will learn how to calculate the number of vowels in a given string in PHP using different methods. The vowels in English are a, e, i, o, u, and they can be uppercase or lowercase. What is a vowel? Vowels are alphabetic characters that represent a specific pronunciation. There are five vowels in English, including uppercase and lowercase: a, e, i, o, u Example 1 Input: String = "Tutorialspoint" Output: 6 explain The vowels in the string "Tutorialspoint" are u, o, i, a, o, i. There are 6 yuan in total

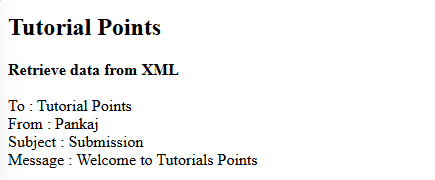

How do you parse and process HTML/XML in PHP?

Feb 07, 2025 am 11:57 AM

How do you parse and process HTML/XML in PHP?

Feb 07, 2025 am 11:57 AM

This tutorial demonstrates how to efficiently process XML documents using PHP. XML (eXtensible Markup Language) is a versatile text-based markup language designed for both human readability and machine parsing. It's commonly used for data storage an

Explain late static binding in PHP (static::).

Apr 03, 2025 am 12:04 AM

Explain late static binding in PHP (static::).

Apr 03, 2025 am 12:04 AM

Static binding (static::) implements late static binding (LSB) in PHP, allowing calling classes to be referenced in static contexts rather than defining classes. 1) The parsing process is performed at runtime, 2) Look up the call class in the inheritance relationship, 3) It may bring performance overhead.

What are PHP magic methods (__construct, __destruct, __call, __get, __set, etc.) and provide use cases?

Apr 03, 2025 am 12:03 AM

What are PHP magic methods (__construct, __destruct, __call, __get, __set, etc.) and provide use cases?

Apr 03, 2025 am 12:03 AM

What are the magic methods of PHP? PHP's magic methods include: 1.\_\_construct, used to initialize objects; 2.\_\_destruct, used to clean up resources; 3.\_\_call, handle non-existent method calls; 4.\_\_get, implement dynamic attribute access; 5.\_\_set, implement dynamic attribute settings. These methods are automatically called in certain situations, improving code flexibility and efficiency.

MySQL: Simple Concepts for Easy Learning

Apr 10, 2025 am 09:29 AM

MySQL: Simple Concepts for Easy Learning

Apr 10, 2025 am 09:29 AM

MySQL is an open source relational database management system. 1) Create database and tables: Use the CREATEDATABASE and CREATETABLE commands. 2) Basic operations: INSERT, UPDATE, DELETE and SELECT. 3) Advanced operations: JOIN, subquery and transaction processing. 4) Debugging skills: Check syntax, data type and permissions. 5) Optimization suggestions: Use indexes, avoid SELECT* and use transactions.