Method to read TXT files and import massive data into a database with PHP

There is a TXT file containing 100,000 records that needs to be imported into the database. A few points to keep in mind:

1. PHP reads and writes TXT files with file_get_contents() and file_put_contents().
2. When importing a large amount of data, import it in batches to reduce the chance of failure.
3. Before the mass import, test the script several times on a small sample, for example 100 records.
4. Even with batching, if PHP's memory_limit is still too low, the script cannot run. It is better to raise memory_limit in php.ini than with a temporary ini_set() statement.

Each record has five columns (Column 1, Column 2, Column 3, Column 4, Column 5), and the file continues in this format for all 100,000 lines. In the target table, word_id is an auto-increment column; the remaining columns are word, senti_type, senti_value and word_type, as used in the INSERT statement below. Sample records:

a 00003131 0 0 adductive#1 adducting#1 adducent#1

a 00003356 0 0 nascent#1

a 00003553 0 0 emerging#2 emergent#2

a 00003700 0.25 0 dissilient#1

The fifth column, word, can hold several space-separated words. A record with [adductive#1 adducting#1 adducent#1] must therefore be converted into 3 SQL records, one per word.

The value is the third column minus the fourth column; if it equals 0, the record is skipped and not inserted into the table.
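The two rules above can be expressed as a small function that turns one TXT line into zero or more table rows. This is a minimal sketch, assuming columns are separated by spaces or tabs and that every token from the fifth column on is a separate word; the name `parse_record` is hypothetical, and the fixed values 1 and 2 for senti_type and word_type are copied from the INSERT statement in the script below.

```php
<?php
// Hypothetical helper: convert one TXT record into rows for words_sentiment.
// Returns a list of [word, senti_type, senti_value, word_type] arrays;
// an empty list means the record is skipped.
function parse_record(string $line): array
{
    // Split on any run of spaces or tabs (assumed column separator).
    $cols = preg_split('/\s+/', trim($line));
    if ($cols === false || count($cols) < 5) {
        return []; // blank or incomplete line
    }
    // value = third column - fourth column
    $value = (float)$cols[2] - (float)$cols[3];
    if ($value == 0) {
        return []; // rule: records whose value is 0 are not inserted
    }
    $rows = [];
    // Every word from the fifth column on becomes its own SQL record.
    foreach (array_slice($cols, 4) as $word) {
        $rows[] = [$word, 1, $value, 2];
    }
    return $rows;
}

// value = 0 - 0 = 0, so this prints an empty array
print_r(parse_record('a 00003131 0 0 adductive#1 adducting#1 adducent#1'));
// value = 0.25 - 0, so this prints one row for dissilient#1
print_r(parse_record('a 00003700 0.25 0 dissilient#1'));
```

Applied to the four sample records, only the last one produces a row; the other three have a value of 0 and are skipped.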

<?php
ini_set('memory_limit', '-1');//Do not limit memory size (set before reading the file), otherwise an error will be reported
$file = 'words.txt';//TXT source file of 100,000 records
$lines = file_get_contents($file);
$line = explode("\n", $lines);//Split the file into lines
$i = 0;
$sql = "INSERT INTO words_sentiment (word,senti_type,senti_value,word_type) VALUES ";
foreach ($line as $key => $li)
{
    $arr = preg_split('/\s+/', trim($li));//Split on spaces or tabs; trim() also drops a trailing \r
    if (count($arr) < 5) continue;//Skip blank or incomplete lines
    $senti_value = (float)$arr[2] - (float)$arr[3];//value = third column - fourth column
    if ($senti_value != 0)
    {
        if ($i >= 20000 && $i < 25000)//Import in batches to avoid failure: this run handles records 20000-24999
        {
            foreach (array_slice($arr, 4) as $word)//[adductive#1 adducting#1 adducent#1]: one TXT record becomes 3 SQL records
            {
                $word = addslashes($word);//Escape quotes and backslashes inside the word
                $sql .= "(\"$word\",1,$senti_value,2),";//word may contain single quotes (such as jack's), so it is wrapped in double quotes (note the escaping)
            }
        }
        $i++;
    }
}
//echo $i;
$sql = substr($sql, 0, -1);//Remove the last comma
//echo $sql;
file_put_contents('20000-25000.txt', $sql);//Batch import, 5,000 records at a time (about 40 seconds per batch); importing too much at once exceeds max_execution_time and fails
?>
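The script handles a single batch per run: it keeps only records 20000 to 24999 and writes them to one file, so the window has to be edited before each run. A sketch of an alternative, assuming the rows have already been parsed into [word, senti_type, senti_value, word_type] arrays, splits all rows into fixed-size batches with array_chunk() and writes one INSERT statement per batch file. The function names `build_insert` and `write_batches` and the `prefix-N.sql` file naming are hypothetical, and batches here count SQL rows rather than TXT records.

```php
<?php
// Hypothetical helper: build one multi-row INSERT for words_sentiment.
// Each row is [word, senti_type, senti_value, word_type].
function build_insert(array $rows): string
{
    $values = [];
    foreach ($rows as [$word, $type, $value, $wordType]) {
        // addslashes() escapes quotes and backslashes, so a word like jack's
        // is safe inside the double-quoted SQL string (MySQL default escaping).
        $values[] = sprintf('("%s",%d,%s,%d)', addslashes($word), $type, $value, $wordType);
    }
    return 'INSERT INTO words_sentiment (word,senti_type,senti_value,word_type) VALUES '
        . implode(',', $values) . ';';
}

// Hypothetical helper: split all rows into batches of $batchSize and write
// one SQL file per batch, returning the list of file names written.
function write_batches(array $rows, int $batchSize, string $prefix): array
{
    $files = [];
    foreach (array_chunk($rows, $batchSize) as $n => $batch) {
        $name = sprintf('%s-%d.sql', $prefix, $n);
        file_put_contents($name, build_insert($batch));
        $files[] = $name;
    }
    return $files;
}
```

For example, write_batches($rows, 5000, 'words') would write words-0.sql, words-1.sql and so on, each of which can be imported separately. addslashes() relies on MySQL treating the backslash as an escape character, which is the default unless the NO_BACKSLASH_ESCAPES SQL mode is set; when inserting directly from PHP, prepared statements through PDO avoid manual escaping altogether.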

When importing massive data, also pay attention to PHP's limits. They can be raised temporarily in the script (the ini_set('memory_limit', '-1') call above does this for memory); otherwise an error like the following is reported when the 32 MB limit is reached:

Allowed memory size of 33554432 bytes exhausted (tried to allocate 16 bytes)
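The script above needs the memory limit lifted because file_get_contents() loads all 100,000 lines at once and explode() then builds a second copy as an array. A sketch of a lower-memory alternative reads the file one line at a time with fopen() and fgets() inside a generator, so only the current line is held in memory. The helper names `read_lines` and `count_nonzero` are hypothetical; `count_nonzero` only demonstrates the loop by counting records whose value is not 0.

```php
<?php
// Hypothetical helper: yield the lines of a file one at a time instead of
// loading the whole file with file_get_contents(), so memory use stays
// roughly constant regardless of how many records the file holds.
function read_lines(string $path): Generator
{
    $fh = fopen($path, 'r');
    if ($fh === false) {
        throw new RuntimeException("Cannot open $path");
    }
    try {
        while (($line = fgets($fh)) !== false) {
            yield rtrim($line, "\r\n"); // strip the line ending (\n or \r\n)
        }
    } finally {
        fclose($fh); // runs once the loop finishes or the generator is destroyed
    }
}

// Example use: count records whose value (third column - fourth column) is not 0.
function count_nonzero(string $path): int
{
    $count = 0;
    foreach (read_lines($path) as $line) {
        $cols = preg_split('/\s+/', trim($line));
        if (count($cols) >= 5 && (float)$cols[2] - (float)$cols[3] != 0) {
            $count++;
        }
    }
    return $count;
}
```

The same foreach loop can replace the explode("\n", ...) loop in the script, with the batching and SQL-building logic left unchanged.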

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1359

1359

52

52

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 brings several new features, security improvements, and performance improvements with healthy amounts of feature deprecations and removals. This guide explains how to install PHP 8.4 or upgrade to PHP 8.4 on Ubuntu, Debian, or their derivati

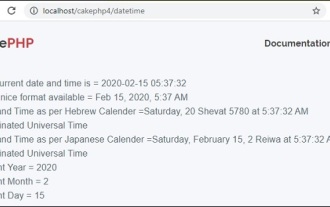

CakePHP Date and Time

Sep 10, 2024 pm 05:27 PM

CakePHP Date and Time

Sep 10, 2024 pm 05:27 PM

To work with date and time in cakephp4, we are going to make use of the available FrozenTime class.

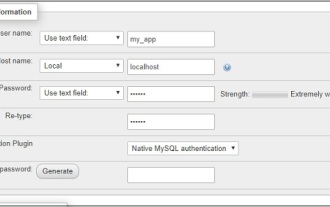

CakePHP Working with Database

Sep 10, 2024 pm 05:25 PM

CakePHP Working with Database

Sep 10, 2024 pm 05:25 PM

Working with database in CakePHP is very easy. We will understand the CRUD (Create, Read, Update, Delete) operations in this chapter.

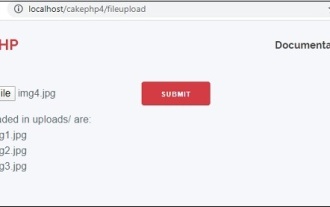

CakePHP File upload

Sep 10, 2024 pm 05:27 PM

CakePHP File upload

Sep 10, 2024 pm 05:27 PM

To work on file upload we are going to use the form helper. Here, is an example for file upload.

Discuss CakePHP

Sep 10, 2024 pm 05:28 PM

Discuss CakePHP

Sep 10, 2024 pm 05:28 PM

CakePHP is an open-source framework for PHP. It is intended to make developing, deploying and maintaining applications much easier. CakePHP is based on a MVC-like architecture that is both powerful and easy to grasp. Models, Views, and Controllers gu

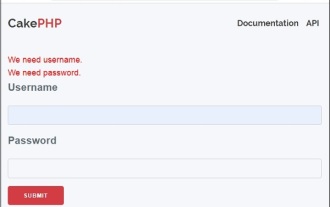

CakePHP Creating Validators

Sep 10, 2024 pm 05:26 PM

CakePHP Creating Validators

Sep 10, 2024 pm 05:26 PM

Validator can be created by adding the following two lines in the controller.

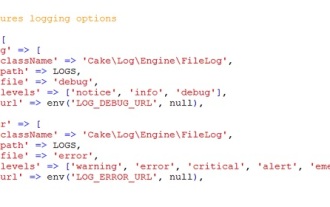

CakePHP Logging

Sep 10, 2024 pm 05:26 PM

CakePHP Logging

Sep 10, 2024 pm 05:26 PM

Logging in CakePHP is a very easy task. You just have to use one function. You can log errors, exceptions, user activities, action taken by users, for any background process like cronjob. Logging data in CakePHP is easy. The log() function is provide

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

Visual Studio Code, also known as VS Code, is a free source code editor — or integrated development environment (IDE) — available for all major operating systems. With a large collection of extensions for many programming languages, VS Code can be c