Section 2: Object Model [2]

PHP5 has a single-inheritance, access-restricted, overloadable object model. Inheritance, discussed in detail later in this chapter, involves parent-child relationships between classes. In addition, PHP supports access restrictions on properties and methods: you can declare members as private, disallowing access from outside the class. Finally, PHP allows a subclass to overload (that is, redefine) members from its parent class.
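The three traits above can be seen together in a small sketch. The class names Animal and Dog are hypothetical and chosen only for illustration; they do not come from the tutorial.

```php
<?php
// Hypothetical classes for illustration only.
class Animal
{
    // private: visible only inside Animal, not in subclasses or outside code
    private $name;

    public function __construct($name)
    {
        $this->name = $name;
    }

    public function getName()
    {
        return $this->name;
    }

    public function speak()
    {
        return $this->name . ' makes a sound';
    }
}

// Single inheritance: a class extends exactly one parent.
class Dog extends Animal
{
    // Redefines the parent's speak(). It goes through the public getter
    // because $name is private to Animal.
    public function speak()
    {
        return $this->getName() . ' barks';
    }
}

$dog = new Dog('Rex');
echo $dog->speak(), "\n"; // prints "Rex barks"
```

Reading $dog->name from outside the class, or from inside Dog, would fail, which is the encapsulation that private is meant to provide.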

// haohappy note: PHP4 has no private, only public. private allows better encapsulation.

PHP5's object model treats objects differently from every other data type: objects are passed by reference. PHP does not require you to explicitly pass and return objects by reference. The handle-based object model is explained in detail at the end of this chapter. It is the most important new feature in PHP5.
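As a minimal sketch of this behavior, the function below changes the caller's object even though its parameter is not declared with &. The names Counter, increment, and makeCounter are hypothetical.

```php
<?php
// Hypothetical class and function names, for illustration only.
class Counter
{
    public $count = 0;
}

// No & on the parameter: the function still receives a handle
// to the caller's object, not a copy of it.
function increment(Counter $c)
{
    $c->count++;
}

// Returning an object also hands back the handle.
function makeCounter()
{
    return new Counter();
}

$counter = makeCounter();
increment($counter);
increment($counter);
echo $counter->count, "\n"; // prints 2
```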

Besides providing a more direct object model, the handle-based system has additional advantages: improved efficiency, lower memory use, and greater flexibility.

In previous versions of PHP, scripts copied objects by default. PHP5 now copies only the handle, which takes less time. The improvement in script execution efficiency comes from avoiding unnecessary copies. Although the object system adds complexity, it also brings gains in execution efficiency. At the same time, less copying means less memory use, which leaves more memory for other operations and further improves efficiency.

// haohappy note: Being handle-based means two variables can point to the same object in memory, which reduces both copying and memory usage.
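A short sketch of two variables sharing one object follows. The Point class is hypothetical. The clone keyword is not mentioned in this section; it appears here only as a contrast, since it is how PHP5 makes an explicit copy when one is actually wanted.

```php
<?php
// Hypothetical class for illustration only.
class Point
{
    public $x = 0;
}

$a = new Point();
$b = $a;        // copies the handle only; $a and $b refer to one object
$b->x = 5;      // visible through $a as well

$c = clone $a;  // explicit copy: $c is a separate object
$c->x = 9;      // does not affect $a

var_dump($a === $b); // bool(true): same object
var_dump($a === $c); // bool(false): different objects
echo $a->x, "\n";    // prints 5
```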

Zend Engine 2 also offers more flexibility. One welcome development is support for destructors: a class method that runs just before the object is destroyed. This also helps memory use, because PHP knows exactly when no references to an object remain and can free its memory for other uses.
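The link between destructors and reference tracking can be sketched as follows. The TempResource class and its static $log array are hypothetical; the log exists only to record the order of events. The sketch assumes the standard engine behavior of running __destruct() as soon as the last reference to an object is released.

```php
<?php
// Hypothetical class; the static $log only records the order of events.
class TempResource
{
    public static $log = array();

    private $label;

    public function __construct($label)
    {
        $this->label = $label;
        self::$log[] = "open {$this->label}";
    }

    // Runs when the last reference to the object goes away.
    public function __destruct()
    {
        self::$log[] = "close {$this->label}";
    }
}

$r = new TempResource('A');
$alias = $r;     // second handle to the same object
unset($r);       // one reference remains, so the destructor does not run yet
TempResource::$log[] = 'after first unset';
$alias = null;   // last reference released; the destructor runs here
TempResource::$log[] = 'after second release';

echo implode("\n", TempResource::$log), "\n";
```

The "close A" entry lands between the two marker entries, which shows that the object is destroyed only once no handle to it remains.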

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Detailed explanation of C++ function inheritance: How to use 'base class pointer' and 'derived class pointer' in inheritance?

May 01, 2024 pm 10:27 PM

Detailed explanation of C++ function inheritance: How to use 'base class pointer' and 'derived class pointer' in inheritance?

May 01, 2024 pm 10:27 PM

In function inheritance, use "base class pointer" and "derived class pointer" to understand the inheritance mechanism: when the base class pointer points to the derived class object, upward transformation is performed and only the base class members are accessed. When a derived class pointer points to a base class object, a downward cast is performed (unsafe) and must be used with caution.

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving

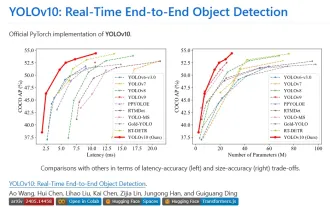

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

1. Introduction Over the past few years, YOLOs have become the dominant paradigm in the field of real-time object detection due to its effective balance between computational cost and detection performance. Researchers have explored YOLO's architectural design, optimization goals, data expansion strategies, etc., and have made significant progress. At the same time, relying on non-maximum suppression (NMS) for post-processing hinders end-to-end deployment of YOLO and adversely affects inference latency. In YOLOs, the design of various components lacks comprehensive and thorough inspection, resulting in significant computational redundancy and limiting the capabilities of the model. It offers suboptimal efficiency, and relatively large potential for performance improvement. In this work, the goal is to further improve the performance efficiency boundary of YOLO from both post-processing and model architecture. to this end

Open-Sora comprehensive open source upgrade: supports 16s video generation and 720p resolution

Apr 25, 2024 pm 02:55 PM

Open-Sora comprehensive open source upgrade: supports 16s video generation and 720p resolution

Apr 25, 2024 pm 02:55 PM

Open-Sora has been quietly updated in the open source community. It now supports video generation up to 16 seconds, with resolutions up to 720p, and can handle text-to-image, text-to-video, image-to-video, and video-to-video of any aspect ratio. and the generation needs of infinitely long videos. Let's try it out. Generate a horizontal screen Christmas snow scene, post to B site and then generate a vertical screen, and use Douyin to generate a 16-second long video. Now everyone can have a screenwriting addiction. How to play? Guidance GitHub: https://github.com/hpcaitech/Open-Sora What’s even cooler is that Open-Sora is still all open source, including the latest model architecture, the latest model weights, multi-time/resolution/long-term

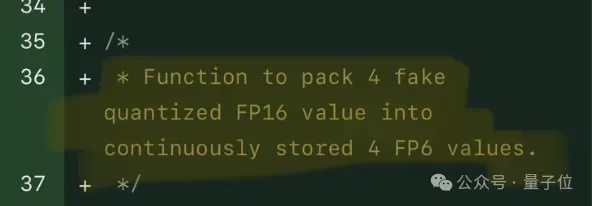

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

FP8 and lower floating point quantification precision are no longer the "patent" of H100! Lao Huang wanted everyone to use INT8/INT4, and the Microsoft DeepSpeed team started running FP6 on A100 without official support from NVIDIA. Test results show that the new method TC-FPx's FP6 quantization on A100 is close to or occasionally faster than INT4, and has higher accuracy than the latter. On top of this, there is also end-to-end large model support, which has been open sourced and integrated into deep learning inference frameworks such as DeepSpeed. This result also has an immediate effect on accelerating large models - under this framework, using a single card to run Llama, the throughput is 2.65 times higher than that of dual cards. one