In-depth analysis of Java memory model: locks

Lock release-get the established happens before relationship

Lock is the most important synchronization mechanism in Java concurrent programming. In addition to allowing mutually exclusive execution of critical sections, locks also allow the thread that releases the lock to send messages to the thread that acquires the same lock.

The following is a sample code for lock release-acquisition:

class MonitorExample {

int a = 0;

public synchronized void writer() { //1

a++; //2

} //3

public synchronized void reader() { //4

int i = a; //5

……

} //6

} Assume that thread A executes the writer() method, and then thread B executes the reader() method. According to the happens before rule, the happens before relationship contained in this process can be divided into two categories:

According to the program order rule, 1 happens before 2, 2 happens before 3; 4 happens before 5, 5 happens before 6.

According to monitor lock rules, 3 happens before 4.

According to the transitivity of happens before, 2 happens before 5.

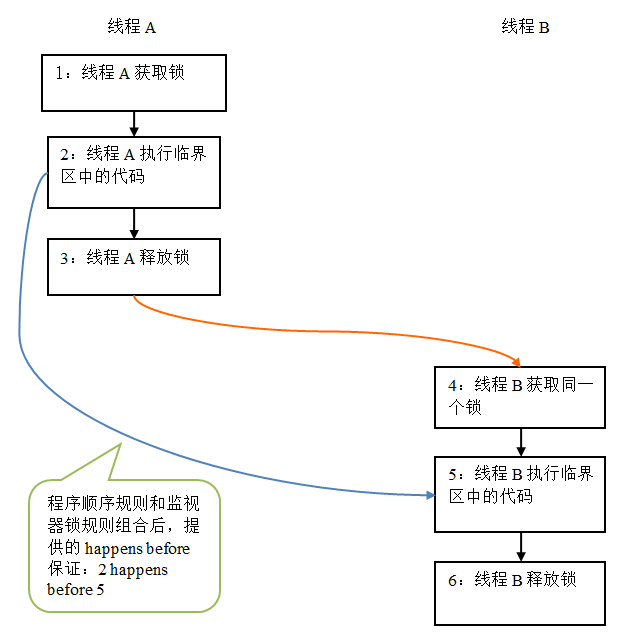

The graphical representation of the above happens before relationship is as follows:

In the above figure, the two nodes linked by each arrow represent a happens before relationship. Black arrows represent program order rules; orange arrows represent monitor lock rules; and blue arrows represent the happens-before guarantees provided by combining these rules.

The above figure shows that after thread A releases the lock, thread B subsequently acquires the same lock. In the picture above, 2 happens before 5. Therefore, all shared variables visible to thread A before releasing the lock will immediately become visible to thread B after thread B acquires the same lock.

Memory semantics of lock release and acquisition

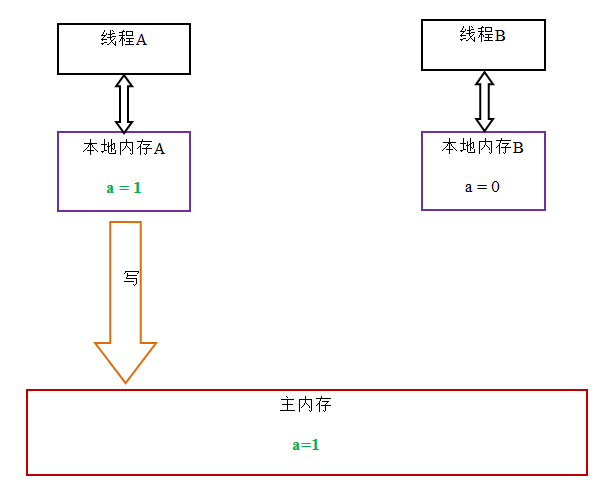

When a thread releases the lock, JMM will refresh the shared variables in the local memory corresponding to the thread to the main memory. Taking the above MonitorExample program as an example, after thread A releases the lock, the status diagram of the shared data is as follows:

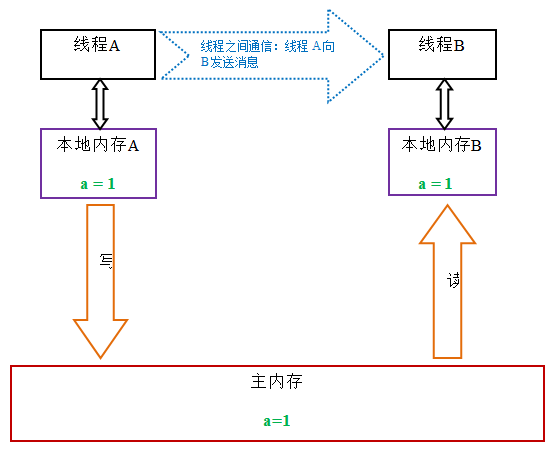

When a thread acquires the lock, JMM will The internal setting is invalid. As a result, the critical section code protected by the monitor must read the shared variables from main memory. The following is a schematic diagram of the status of lock acquisition:

Comparing the memory semantics of lock release-acquisition and the memory semantics of volatile write-read, it can be seen that: lock release and volatile write have The same memory semantics; lock acquisition and volatile read have the same memory semantics.

The following is a summary of the memory semantics of lock release and lock acquisition:

Thread A releases a lock. In essence, thread A issues a (thread A) message about modifications to shared variables.

Thread B acquires a lock. In essence, thread B receives a message sent by a previous thread (modification of shared variables before releasing the lock).

Thread A releases the lock, and then thread B acquires the lock. This process is essentially thread A sending a message to thread B through main memory.

Implementation of lock memory semantics

This article will use the source code of ReentrantLock to analyze the specific implementation mechanism of lock memory semantics.

Please see the following sample code:

class ReentrantLockExample {

int a = 0;

ReentrantLock lock = new ReentrantLock();

public void writer() {

lock.lock(); //获取锁

try {

a++;

} finally {

lock.unlock(); //释放锁

}

}

public void reader () {

lock.lock(); //获取锁

try {

int i = a;

……

} finally {

lock.unlock(); //释放锁

}

}

}In ReentrantLock, call the lock() method to obtain the lock; call the unlock() method to release the lock.

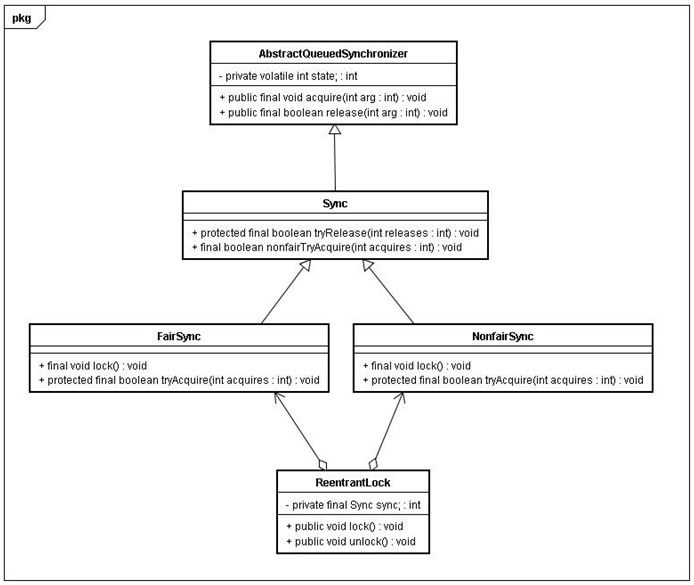

The implementation of ReentrantLock relies on the java synchronizer framework AbstractQueuedSynchronizer (referred to as AQS in this article). AQS uses an integer volatile variable (named state) to maintain the synchronization state. We will see soon that this volatile variable is the key to the implementation of ReentrantLock memory semantics. The following is the class diagram of ReentrantLock (only the parts relevant to this article are drawn):

ReentrantLock is divided into fair lock and unfair lock. We first analyze the fair lock.

When using fair lock, the method calling trace of the locking method lock() is as follows:

ReentrantLock : lock()

FairSync : lock()

AbstractQueuedSynchronizer : acquire(int arg)

ReentrantLock: tryAcquire(int acquires)

The locking actually starts in step 4. The following is the source code of this method:

protected final boolean tryAcquire(int acquires) {

final Thread current = Thread.currentThread();

int c = getState(); //获取锁的开始,首先读volatile变量state

if (c == 0) {

if (isFirst(current) &&

compareAndSetState(0, acquires)) {

setExclusiveOwnerThread(current);

return true;

}

}

else if (current == getExclusiveOwnerThread()) {

int nextc = c + acquires;

if (nextc < 0)

throw new Error("Maximum lock count exceeded");

setState(nextc);

return true;

}

return false;

}We can see from the above source code that adding The lock method first reads the volatile variable state.

When using fair lock, the method calling trace of the unlock method unlock() is as follows:

ReentrantLock: unlock()

AbstractQueuedSynchronizer: release(int arg)

Sync: tryRelease(int releases )

In step 3, the lock actually starts to be released. The following is the source code of this method:

protected final boolean tryRelease(int releases) {

int c = getState() - releases;

if (Thread.currentThread() != getExclusiveOwnerThread())

throw new IllegalMonitorStateException();

boolean free = false;

if (c == 0) {

free = true;

setExclusiveOwnerThread(null);

}

setState(c); //释放锁的最后,写volatile变量state

return free;

}从上面的源代码我们可以看出,在释放锁的最后写volatile变量state。

公平锁在释放锁的最后写volatile变量state;在获取锁时首先读这个volatile变量。根据volatile的happens-before规则,释放锁的线程在写volatile变量之前可见的共享变量,在获取锁的线程读取同一个volatile变量后将立即变的对获取锁的线程可见。

现在我们分析非公平锁的内存语义的实现。

非公平锁的释放和公平锁完全一样,所以这里仅仅分析非公平锁的获取。

使用公平锁时,加锁方法lock()的方法调用轨迹如下:

ReentrantLock : lock()

NonfairSync : lock()

AbstractQueuedSynchronizer : compareAndSetState(int expect, int update)

在第3步真正开始加锁,下面是该方法的源代码:

protected final boolean compareAndSetState(int expect, int update) {

return unsafe.compareAndSwapInt(this, stateOffset, expect, update);

}该方法以原子操作的方式更新state变量,本文把java的compareAndSet()方法调用简称为CAS。JDK文档对该方法的说明如下:如果当前状态值等于预期值,则以原子方式将同步状态设置为给定的更新值。此操作具有 volatile 读和写的内存语义。

这里我们分别从编译器和处理器的角度来分析,CAS如何同时具有volatile读和volatile写的内存语义。

前文我们提到过,编译器不会对volatile读与volatile读后面的任意内存操作重排序;编译器不会对volatile写与volatile写前面的任意内存操作重排序。组合这两个条件,意味着为了同时实现volatile读和volatile写的内存语义,编译器不能对CAS与CAS前面和后面的任意内存操作重排序。

下面我们来分析在常见的intel x86处理器中,CAS是如何同时具有volatile读和volatile写的内存语义的。

下面是sun.misc.Unsafe类的compareAndSwapInt()方法的源代码:

public final native boolean compareAndSwapInt(Object o, long offset,

int expected,

int x);可以看到这是个本地方法调用。这个本地方法在openjdk中依次调用的c++代码为:unsafe.cpp,atomic.cpp和atomicwindowsx86.inline.hpp。这个本地方法的最终实现在openjdk的如下位置:openjdk-7-fcs-src-b147-27jun2011\openjdk\hotspot\src\oscpu\windowsx86\vm\ atomicwindowsx86.inline.hpp(对应于windows操作系统,X86处理器)。下面是对应于intel x86处理器的源代码的片段:

// Adding a lock prefix to an instruction on MP machine

// VC++ doesn't like the lock prefix to be on a single line

// so we can't insert a label after the lock prefix.

// By emitting a lock prefix, we can define a label after it.

#define LOCK_IF_MP(mp) __asm cmp mp, 0 \

__asm je L0 \

__asm _emit 0xF0 \

__asm L0:

inline jint Atomic::cmpxchg (jint exchange_value, volatile jint* dest, jint compare_value) {

// alternative for InterlockedCompareExchange

int mp = os::is_MP();

__asm {

mov edx, dest

mov ecx, exchange_value

mov eax, compare_value

LOCK_IF_MP(mp)

cmpxchg dword ptr [edx], ecx

}

}如上面源代码所示,程序会根据当前处理器的类型来决定是否为cmpxchg指令添加lock前缀。如果程序是在多处理器上运行,就为cmpxchg指令加上lock前缀(lock cmpxchg)。反之,如果程序是在单处理器上运行,就省略lock前缀(单处理器自身会维护单处理器内的顺序一致性,不需要lock前缀提供的内存屏障效果)。

intel的手册对lock前缀的说明如下:

确保对内存的读-改-写操作原子执行。在Pentium及Pentium之前的处理器中,带有lock前缀的指令在执行期间会锁住总线,使得其他处理器暂时无法通过总线访问内存。很显然,这会带来昂贵的开销。从Pentium 4,Intel Xeon及P6处理器开始,intel在原有总线锁的基础上做了一个很有意义的优化:如果要访问的内存区域(area of memory)在lock前缀指令执行期间已经在处理器内部的缓存中被锁定(即包含该内存区域的缓存行当前处于独占或以修改状态),并且该内存区域被完全包含在单个缓存行(cache line)中,那么处理器将直接执行该指令。由于在指令执行期间该缓存行会一直被锁定,其它处理器无法读/写该指令要访问的内存区域,因此能保证指令执行的原子性。这个操作过程叫做缓存锁定(cache locking),缓存锁定将大大降低lock前缀指令的执行开销,但是当多处理器之间的竞争程度很高或者指令访问的内存地址未对齐时,仍然会锁住总线。

禁止该指令与之前和之后的读和写指令重排序。

把写缓冲区中的所有数据刷新到内存中。

上面的第2点和第3点所具有的内存屏障效果,足以同时实现volatile读和volatile写的内存语义。

经过上面的这些分析,现在我们终于能明白为什么JDK文档说CAS同时具有volatile读和volatile写的内存语义了。

现在对公平锁和非公平锁的内存语义做个总结:

公平锁和非公平锁释放时,最后都要写一个volatile变量state。

公平锁获取时,首先会去读这个volatile变量。

非公平锁获取时,首先会用CAS更新这个volatile变量,这个操作同时具有volatile读和volatile写的内存语义。

从本文对ReentrantLock的分析可以看出,锁释放-获取的内存语义的实现至少有下面两种方式:

利用volatile变量的写-读所具有的内存语义。

利用CAS所附带的volatile读和volatile写的内存语义。

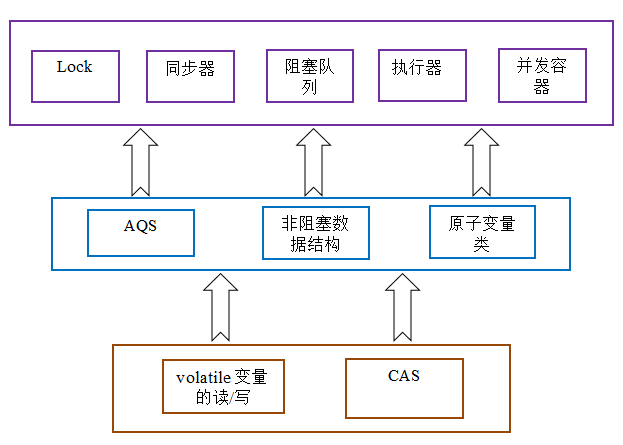

concurrent包的实现

由于java的CAS同时具有 volatile 读和volatile写的内存语义,因此Java线程之间的通信现在有了下面四种方式:

A线程写volatile变量,随后B线程读这个volatile变量。

A线程写volatile变量,随后B线程用CAS更新这个volatile变量。

A线程用CAS更新一个volatile变量,随后B线程用CAS更新这个volatile变量。

A线程用CAS更新一个volatile变量,随后B线程读这个volatile变量。

Java的CAS会使用现代处理器上提供的高效机器级别原子指令,这些原子指令以原子方式对内存执行读-改-写操作,这是在多处理器中实现同步的关键(从本质上来说,能够支持原子性读-改-写指令的计算机器,是顺序计算图灵机的异步等价机器,因此任何现代的多处理器都会去支持某种能对内存执行原子性读-改-写操作的原子指令)。同时,volatile变量的读/写和CAS可以实现线程之间的通信。把这些特性整合在一起,就形成了整个concurrent包得以实现的基石。如果我们仔细分析concurrent包的源代码实现,会发现一个通用化的实现模式:

首先,声明共享变量为volatile;

然后,使用CAS的原子条件更新来实现线程之间的同步;

同时,配合以volatile的读/写和CAS所具有的volatile读和写的内存语义来实现线程之间的通信。

AQS,非阻塞数据结构和原子变量类(java.util.concurrent.atomic包中的类),这些concurrent包中的基础类都是使用这种模式来实现的,而concurrent包中的高层类又是依赖于这些基础类来实现的。从整体来看,concurrent包的实现示意图如下:

以上就是Java内存模型深度解析:锁的内容,更多相关内容请关注PHP中文网(www.php.cn)!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Square Root in Java

Aug 30, 2024 pm 04:26 PM

Square Root in Java

Aug 30, 2024 pm 04:26 PM

Guide to Square Root in Java. Here we discuss how Square Root works in Java with example and its code implementation respectively.

Perfect Number in Java

Aug 30, 2024 pm 04:28 PM

Perfect Number in Java

Aug 30, 2024 pm 04:28 PM

Guide to Perfect Number in Java. Here we discuss the Definition, How to check Perfect number in Java?, examples with code implementation.

Random Number Generator in Java

Aug 30, 2024 pm 04:27 PM

Random Number Generator in Java

Aug 30, 2024 pm 04:27 PM

Guide to Random Number Generator in Java. Here we discuss Functions in Java with examples and two different Generators with ther examples.

Armstrong Number in Java

Aug 30, 2024 pm 04:26 PM

Armstrong Number in Java

Aug 30, 2024 pm 04:26 PM

Guide to the Armstrong Number in Java. Here we discuss an introduction to Armstrong's number in java along with some of the code.

Weka in Java

Aug 30, 2024 pm 04:28 PM

Weka in Java

Aug 30, 2024 pm 04:28 PM

Guide to Weka in Java. Here we discuss the Introduction, how to use weka java, the type of platform, and advantages with examples.

Smith Number in Java

Aug 30, 2024 pm 04:28 PM

Smith Number in Java

Aug 30, 2024 pm 04:28 PM

Guide to Smith Number in Java. Here we discuss the Definition, How to check smith number in Java? example with code implementation.

Java Spring Interview Questions

Aug 30, 2024 pm 04:29 PM

Java Spring Interview Questions

Aug 30, 2024 pm 04:29 PM

In this article, we have kept the most asked Java Spring Interview Questions with their detailed answers. So that you can crack the interview.

Break or return from Java 8 stream forEach?

Feb 07, 2025 pm 12:09 PM

Break or return from Java 8 stream forEach?

Feb 07, 2025 pm 12:09 PM

Java 8 introduces the Stream API, providing a powerful and expressive way to process data collections. However, a common question when using Stream is: How to break or return from a forEach operation? Traditional loops allow for early interruption or return, but Stream's forEach method does not directly support this method. This article will explain the reasons and explore alternative methods for implementing premature termination in Stream processing systems. Further reading: Java Stream API improvements Understand Stream forEach The forEach method is a terminal operation that performs one operation on each element in the Stream. Its design intention is