JavaScript high-performance animation and page rendering

No setTimeout, No setInterval

If you have to use setTimeout or setInterval to implement an animation, the only valid reason is that you need precise control over it. But at this point modern browsers, including mobile browsers, are widespread enough that you have good reason to use more efficient methods when implementing animations.

What is efficient

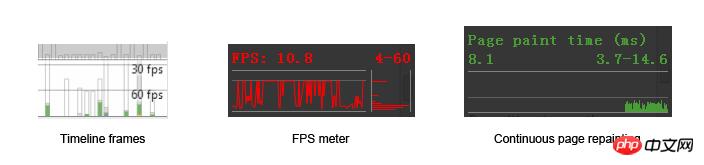

Every time a frame changes, the page is drawn by the system (GPU or CPU). But this kind of drawing differs from drawing in PC games: its maximum frequency is limited by the refresh rate of the monitor (not the graphics card), so in most cases the highest drawing frequency is 60 frames per second (hereinafter fps), matching a 60Hz display. 60fps is the ideal state, and in everyday page performance testing it is an important indicator: the closer to it, the better. Chrome's debugging tools include several tools for measuring the current frame rate:

In what follows, we will use these tools to watch the performance of our pages in real time.

60fps is both motivation and pressure because it means we only have 16.7 milliseconds (1000 / 60) to draw each frame. If you use setTimeout or setInterval (hereinafter collectively referred to as timer) to control drawing, problems arise.

First of all, a timer's delay calculation is not accurate enough. The delay relies on the browser's built-in clock, and the clock's accuracy depends on how often it is updated (the timer resolution). In IE8 and earlier versions of IE the update interval is 15.6 milliseconds. Suppose you set a setTimeout delay of 16.7ms: the clock has to tick twice, at 15.6 milliseconds each, before the callback fires. That means an extra, unrequested delay of 15.6 x 2 - 16.7 = 14.5 milliseconds.

           16.7ms

DELAY: |------------|

CLOCK: |----------|----------|

          15.6ms     15.6ms

So even if you set the setTimeout delay to 0ms, it will not fire immediately. The current timer resolution in Chrome and IE9+ is 4ms (if you are on a laptop running on battery rather than mains power, the browser falls back to the system timer interval to save resources, which means less frequent updates).
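The arithmetic above can be captured in a few lines. This is a simplified model, not a description of how any browser is actually implemented: it assumes a timer can only fire on a clock tick, so the requested delay is rounded up to a whole number of ticks and is never shorter than one tick. The name `effectiveDelay` and its parameters are made up for this illustration.

```javascript
// Simplified model (an assumption for illustration): a timer can only fire
// on a clock tick, so the requested delay is rounded up to a whole number
// of ticks, with a minimum of one tick.
function effectiveDelay(requestedMs, resolutionMs) {
  const ticks = Math.max(1, Math.ceil(requestedMs / resolutionMs));
  return ticks * resolutionMs;
}

// A 16.7ms timeout on a clock that updates every 15.6ms needs two ticks.
const actualDelay = effectiveDelay(16.7, 15.6); // about 31.2ms
const extraDelay = actualDelay - 16.7;          // about 14.5ms

console.log(actualDelay.toFixed(1), extraDelay.toFixed(1));
```

Under the same model, a 0ms timeout on a 4ms clock still waits one full tick, which is the behavior described in the paragraph above.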

Taking a step back: even if the timer resolution could reach 16.7ms, it would still face the asynchronous queue problem. Because it is asynchronous, the callback passed to setTimeout is not executed immediately but is added to a waiting queue. The problem is that if synchronous code needs to run while the delay is pending, that code does not line up behind the timer's callback; it runs immediately. Take the following code:

function runForSeconds(s) {

var start = +new Date();

while (start + s * 1000 > (+new Date())) {}

}

document.body.addEventListener("click", function () {

runForSeconds(10);

}, false);

setTimeout(function () {

console.log("Done!");

}, 1000 * 3);

If someone clicks the body during the 3-second wait, will the callback still fire on time at the 3-second mark? Of course not: it has to wait for the 10-second task to finish. Synchronous code always takes precedence over asynchronous callbacks:

Waiting for the 3-second delay: | 1s | 2s | 3s |--->console.log("Done!");

After 2 seconds: |----1s----|----2s----| |--->console.log("Done!");

After clicking the body

What you might expect: |----1s----|----2s----|----3s----|--->console.log("Done!")--->|------------------10s----------------|

What actually happens: |----1s----|----2s----|------------------10s----------------|--->console.log("Done!");

John Resig has three articles about timer performance and accuracy: 1. Accuracy of JavaScript Time, 2. Analyzing Timer Performance, 3. How JavaScript Timers Work. They show some of the problems timers have across browsers and operating systems.

Taking another step back: even if the timer resolution could reach 16.7ms and asynchronous callbacks were never delayed, animation controlled by a timer would still be unsatisfactory. That is the subject of the next section.

Vertical synchronization problem

Please allow me to introduce another constant 60 - the screen refresh rate 60Hz.

What is the relationship between 60Hz and 60fps? None. fps is the rate at which the GPU renders images, while Hz is the rate at which the monitor refreshes the screen. For a static picture you can say its fps is 0 frames per second, but you can never say that the screen's refresh rate is 0Hz; the refresh rate does not change with the image content. Whether in a game or a browser, when we talk about dropped frames we mean that the GPU is rendering less often, for example falling to 30fps or even 20fps. Thanks to persistence of vision, the picture we see still appears to move coherently.

Continuing from the previous section, assume that no timer is ever delayed or disturbed by synchronous code, and that we even shorten the delay to 16ms. What happens then?


Frame loss occurred at 22 seconds

If the delay is shortened further, even more frames are lost:

The real situation is much more complicated than the scenario imagined above. Even if you could pick a fixed delay that avoids frame loss on a 60Hz screen, what about monitors with other refresh rates? Different devices, and even the same device in different battery states, have different screen refresh rates.
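The frame loss described above can be reproduced with a small simulation. It is an idealized sketch: timers are assumed to fire exactly on schedule, each callback's work is assumed to be instantaneous, and the function name `countDroppedUpdates` is invented for this example. Whenever two timer callbacks land inside the same refresh interval, only the last one reaches the screen and the earlier update is lost.

```javascript
// Count timer callbacks that never reach the screen because another callback
// fires inside the same refresh interval (idealized model, see assumptions above).
function countDroppedUpdates(timerIntervalMs, refreshRateHz, durationMs) {
  const updatesPerRefresh = new Map();
  for (let t = timerIntervalMs; t < durationMs; t += timerIntervalMs) {
    // Index of the refresh interval this callback falls into.
    const refreshIndex = Math.floor((t * refreshRateHz) / 1000);
    updatesPerRefresh.set(refreshIndex, (updatesPerRefresh.get(refreshIndex) || 0) + 1);
  }
  let dropped = 0;
  for (const count of updatesPerRefresh.values()) {
    dropped += count - 1; // only the last update in an interval is displayed
  }
  return dropped;
}

console.log("16ms timer on 60Hz:", countDroppedUpdates(16, 60, 1000));
console.log("10ms timer on 60Hz:", countDroppedUpdates(10, 60, 1000));
```

In this model a 16ms timer loses 2 updates per second on a 60Hz screen and a 10ms timer loses 39. Changing `refreshRateHz` shows why no single fixed delay works across displays.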

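The above also ignores the time the screen itself takes to refresh. The problem arises from the mismatch between the rate at which the GPU renders frames and the rate at which the screen refreshes: if the GPU renders a frame in less time than the monitor takes to refresh one, then before the monitor has finished drawing one image, the next image rendered by the GPU has already arrived and overwritten the previous one. The result is screen tearing: the top half of the screen shows the previous image and the bottom half shows the next one:

In PC games the fix is to enable vertical synchronization (v-sync), which makes the GPU compromise: it must render between two screen refreshes and wait for the vertical sync signal from the screen. But this has a price too: it lowers the GPU's output rate and therefore the frame rate. That is why games that need high frame rates (racing, first-person shooters) can feel "stuttery" with v-sync on: frames are being dropped.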

requestAnimationFrame

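We won't cover how to use requestAnimationFrame (hereinafter rAF) here; for details see MDN: Window.requestAnimationFrame(). Let's look instead at the problems rAF solves.

From the previous section we can identify two factors in achieving smooth animation:

Frame timing: when the new frame is ready

Frame budget: how long it takes to render the new frame

This native API frees us from agonizing over how often to refresh (in fact, rAF does not care how long it is until the next screen refresh either). When we call it, we tell the browser two things: 1. we need a new frame; 2. when you render that new frame, run the callback I passed you.

So it solves the first problem described above: the timing of the new frame.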
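Although usage is left to MDN, a minimal sketch makes the timing point concrete. The callback receives a timestamp from the browser, and the animation derives its state from elapsed time rather than from a guessed delay. The helper names `positionAt` and `animate`, the `applyPosition` callback, and the element id `box` are all hypothetical.

```javascript
// Position of an element moving `distancePx` over `durationMs`,
// given the elapsed time. Clamped so the element stops at the end.
function positionAt(elapsedMs, durationMs, distancePx) {
  const progress = Math.min(Math.max(elapsedMs / durationMs, 0), 1);
  return progress * distancePx;
}

// Drive the animation from the timestamp that rAF passes to the callback.
// `applyPosition` is a hypothetical callback that writes the value to the DOM.
function animate(applyPosition, durationMs, distancePx) {
  let startTime = null;
  function frame(timestamp) {
    if (startTime === null) startTime = timestamp;
    const elapsed = timestamp - startTime;
    applyPosition(positionAt(elapsed, durationMs, distancePx));
    if (elapsed < durationMs) requestAnimationFrame(frame);
  }
  requestAnimationFrame(frame);
}

// Browser-only usage; skipped where rAF or the DOM is unavailable.
if (typeof requestAnimationFrame === "function" && typeof document !== "undefined") {
  const box = document.getElementById("box"); // hypothetical element id
  if (box) {
    animate((x) => { box.style.transform = "translateX(" + x + "px)"; }, 1000, 300);
  }
}
```

Because the position depends only on elapsed time, the animation covers the same distance in the same time whether the display refreshes at 60Hz or at some other rate.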

What about the second problem? There it cannot help. Compare the following two pages:

DEMO

DEMO-FIXED

Comparing the source of the two pages, you will find only one difference:

// animation loop

function update(timestamp) {

for(var m = 0; m < movers.length; m++) {

// DEMO version

//movers[m].style.left = ((Math.sin(movers[m].offsetTop + timestamp/1000)+1) * 500) + 'px';

// FIXED version

movers[m].style.left = ((Math.sin(m + timestamp/1000)+1) * 500) + 'px';

}

rAF(update);

};

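rAF(update);

The DEMO version is slow because, while setting each object's left value, it also reads that object's offsetTop. This is a very expensive reflow operation (for other expensive operations that trigger reflow, see: How (not) to trigger a layout in WebKit). You can see this in Chrome's debugging tools (some of the features in the screenshots are only available in Chrome Canary).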

The uncorrected version

Most of the time is clearly spent on rendering, whereas in the corrected version:

Rendering time is greatly reduced
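The FIXED version avoids the problem by not reading offsetTop at all. When the layout value really is needed, another common approach (not taken from the demo) is to do all the reads first and all the writes afterwards, so the browser does not have to recompute layout on every iteration. The sketch below assumes `movers` is an array of elements as in the demo; the function name `updateMovers` is invented.

```javascript
// Phase 1 reads every layout value; phase 2 performs every style write.
// Keeping reads and writes apart avoids forcing a layout on each iteration.
function updateMovers(movers, timestamp) {
  const tops = movers.map((mover) => mover.offsetTop); // reads only
  movers.forEach((mover, i) => {                       // writes only
    mover.style.left = ((Math.sin(tops[i] + timestamp / 1000) + 1) * 500) + "px";
  });
}
```

In a page this would be called from the rAF callback in place of the loop body, for example `updateMovers(movers, timestamp)` inside `update`.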

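But if your callback really is expensive, rAF can still do something for you. For example, when it finds that it cannot sustain 60fps, it lowers the rate to 30fps, which at least keeps the frame rate stable and the animation coherent.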
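To check what rate a page actually achieves, the timestamps passed to the rAF callback can be averaged. This is only a rough sketch for experimentation, with invented names (`averageFps`, `measureFps`); the frame-rate tools in Chrome mentioned earlier remain the more reliable measurement.

```javascript
// Average frames per second over a list of rAF timestamps (in milliseconds).
function averageFps(timestamps) {
  if (timestamps.length < 2) return 0;
  const elapsedMs = timestamps[timestamps.length - 1] - timestamps[0];
  if (elapsedMs <= 0) return 0;
  return ((timestamps.length - 1) * 1000) / elapsedMs;
}

// Collect timestamps for `sampleCount` frames, then report the average rate.
function measureFps(sampleCount, report) {
  const timestamps = [];
  function frame(timestamp) {
    timestamps.push(timestamp);
    if (timestamps.length < sampleCount) {
      requestAnimationFrame(frame);
    } else {
      report(averageFps(timestamps));
    }
  }
  requestAnimationFrame(frame);
}

// Browser-only usage; skipped where rAF is unavailable.
if (typeof requestAnimationFrame === "function") {
  measureFps(60, (fps) => console.log("average fps:", fps.toFixed(1)));
}
```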

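Using rAF to defer code

Nothing is a silver bullet. Faced with the situation above, we still need to organize and optimize our code.

Look at the following code: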

function jank(second) {

var start = +new Date();

while (start + second * 1000 > (+new Date())) {}

}

p.style.backgroundColor = "red";

// some long run task

jank(5);

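p.style.backgroundColor = "blue";

No matter which browser you run this code in, you will never see p turn red; the page typically freezes for 5 seconds and then the container turns blue. This is because only one thread is ever running in the browser (a fair way to think about it, since the JS engine and the UI engine are mutually exclusive). Although you told the browser that p's background color should be red, it is still executing the script at that moment and cannot invoke the UI thread.

With that premise, let's look at this code: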

var p = document.getElementById("foo");

var currentWidth = p.innerWidth;

p.style.backgroundColor = "blue";

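// do some "long running" task, like sorting data

This time we not only need to update the background color but also read the container's width. Its execution order can be pictured as follows:

When we request a property such as innerWidth, the browser assumes we need it right away, so it immediately applies the pending style changes to the container (normally the browser batches changes and waits for the right moment to repaint them all at once, to save work) and returns the computed result. This is usually expensive.

But what if we don't need the result immediately?

The code above has two shortcomings:

The code that updates the background color runs too early. From the previous example we know that even if we tell the browser here to update the background color, it must wait at least until the JS has finished running before it can invoke the UI thread;

If the long-running code that follows starts asynchronous work, such as setTimeout, an Ajax request, or a web worker, it should be started as early as possible.

In summary, if we don't urgently need innerWidth, we can use rAF to defer that part of the code: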

requestAnimationFrame(function(){

var el = document.getElementById("foo");

var currentWidth = el.innerWidth;

el.style.backgroundColor = "blue";

// ...

});

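// do some "long running" task, like sorting data

As you can see, even though no animation is involved here, we can still use rAF to optimize our code. The execution order becomes:

Here rAF is used to defer code to the next frame.

Sometimes we need to defer code even further, like this:

Or suppose we want an effect to happen in two steps: 1. p's display becomes block; 2. p's top value decreases so that it moves to some position. If both operations go into the same frame, the browser applies both changes to the container at once, within that single frame. So we need two frames to separate the two operations: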

requestAnimationFrame(function(){

el.style.display = "block";

requestAnimationFrame(function(){

// fire off a CSS transition on its `top` property

el.style.top = "300px";

});

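});

Writing it this way is a little inelegant. Kyle Simpson has an open-source project, h5ive, that wraps this usage and exposes an API. The implementation is very simple; here is an excerpt: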

function qID(){

var id;

do {

id = Math.floor(Math.random() * 1E9);

} while (id in q_ids);

return id;

}

function queue(cb) {

var qid = qID();

q_ids[qid] = rAF(function(){

delete q_ids[qid];

cb.apply(publicAPI,arguments);

});

return qid;

}

function queueAfter(cb) {

var qid;

qid = queue(function(){

// do our own rAF call here because we want to re-use the same `qid` for both frames

q_ids[qid] = rAF(function(){

delete q_ids[qid];

cb.apply(publicAPI,arguments);

});

});

return qid;

}

Usage:

// queue for the next frame

id1 = aFrame.queue(function(){

text = document.createTextNode("##");

body.appendChild(text);

});

// queue for the frame after next

id2 = aFrame.queueAfter(function(){

text = document.createTextNode("!!");

body.appendChild(text);

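});

Using rAF to decouple code

Let's start with a bug Twitter ran into in 2011.

At the time Twitter had added a new feature: "infinite scroll". When the page scrolls to the bottom, more tweets are loaded: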

$(window).bind('scroll', function () {

if (nearBottomOfPage()) {

// load more tweets ...

}

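});

But after this feature went live, a serious bug was found: after scrolling to the bottom a few times, scrolling became extremely slow.

Investigation showed that a single statement was responsible: $details.find(".details-pane-outer");

That still was not the real culprit. The real cause was that they had upgraded jQuery from 1.4.2 to 1.4.4. One important change in that jQuery upgrade was that Sizzle's context-based selectors were all switched to querySelectorAll, whereas the previous implementation used getElementsByClassName. querySelectorAll performs well in most cases, but it loses out when selecting elements by class name. Two benchmark comparisons show this: 1. querySelectorAll v getElementsByClassName 2. jQuery Simple Selector

From this bug, John Resig drew a very important piece of advice (actually two, but today we only take the one relevant to our topic):

It’s a very, very, bad idea to attach handlers to the window scroll event.

What he means is that events like scroll and resize fire very frequently. If you put too much code in their handlers, scrolling is delayed and the page may even become unresponsive. So this kind of code should be pulled out and placed in a timer that checks at intervals whether scrolling has happened and then acts accordingly. For example: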

var didScroll = false;

$(window).scroll(function() {

didScroll = true;

});

setInterval(function() {

if ( didScroll ) {

didScroll = false;

// Check your page position and then

// Load in more results

}

}, 250);

This approach is similar to how Nicholas breaks a long-running loop into "chunks":

// For details, see his book Professional JavaScript for Web Developers

// or this blog post of his: http://www.php.cn/

function chunk(array, process, context){

var items = array.concat(); //clone the array

setTimeout(function(){

var item = items.shift();

process.call(context, item);

if (items.length > 0){

setTimeout(arguments.callee, 100);

}

}, 100);

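}

The principle is the same: to improve performance and keep the browser from freezing, long-running code is broken into small pieces so that the browser has time to respond to other requests.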
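A variation on the same idea is to process as many items as fit into a time budget before yielding, instead of one item per timer. The sketch below is an illustration rather than code from the article or the book: the name `processInChunks`, the `budgetMs` parameter, and the injectable `schedule` function are all assumptions made for the example.

```javascript
// Process `items` in chunks, yielding to the browser between chunks.
// `schedule` decides when the next chunk runs; it could wrap setTimeout,
// or be requestAnimationFrame itself in a browser.
function processInChunks(items, processItem, budgetMs, schedule) {
  const queue = items.slice(); // work on a copy, like chunk() above
  function runChunk() {
    const start = Date.now();
    // Always handle at least one item, then continue while budget remains.
    do {
      processItem(queue.shift());
    } while (queue.length > 0 && Date.now() - start < budgetMs);
    if (queue.length > 0) schedule(runChunk);
  }
  if (queue.length > 0) schedule(runChunk);
}

// Example call (left as a comment because `rows` and `renderRow` are hypothetical):
// processInChunks(rows, renderRow, 8, (fn) => setTimeout(fn, 0));
```

A budget well under the 16.7ms frame time leaves the browser room to paint between chunks; the right value depends on the page and has to be measured.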

Back to rAF: it can accomplish the same thing. Suppose the original scroll code looks like this:

function onScroll() {

update();

}

function update() {

// assume domElements has been declared

for(var i = 0; i < domElements.length; i++) {

// read offset of DOM elements

// to determine visibility - a reflow

// then apply some CSS classes

// to the visible items - a repaint

}

}

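window.addEventListener('scroll', onScroll, false);

This is a typical anti-pattern: every scroll event iterates over all the elements, and every iteration triggers reflow and repaint. What we need to do next is decouple this expensive code from update.

First we still need a handler for the scroll event, to record the scroll position so that other functions can query it: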

var latestKnownScrollY = 0;

function onScroll() {

latestKnownScrollY = window.scrollY;

}

Next, put all the separated repaint and reflow operations into an update function and call it via rAF:

function update() {

requestAnimationFrame(update);

var currentScrollY = latestKnownScrollY;

// read offset of DOM elements

// and compare to the currentScrollY value

// then apply some CSS classes

// to the visible items

}

// kick off

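requestAnimationFrame(update);

The decoupling goal has now been met, but some optimization is still needed. For example, update must not be allowed to run forever; we need a flag to control its execution: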

var latestKnownScrollY = 0,

ticking = false;

function onScroll() {

latestKnownScrollY = window.scrollY;

requestTick();

}

function requestTick() {

if(!ticking) {

requestAnimationFrame(update);

}

ticking = true;

}

We only ever need one pending rAF request, and update must not repeat indefinitely, so we also need an exit:

function update() {

// reset the tick so we can

// capture the next onScroll

ticking = false;

var currentScrollY = latestKnownScrollY;

// read offset of DOM elements

// and compare to the currentScrollY value

// then apply some CSS classes

// to the visible items

}

// kick off - no longer needed! Woo.

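// update();

Understanding layers

Kyle Simpson says:

Rule of thumb: don’t do in JS what you can do in CSS.

As described above, even with rAF there are still many inconveniences. Another option is CSS animation: although the UI thread and the JS thread in the browser are mutually exclusive, this does not hold for CSS animations.

We won't discuss how to use CSS animations here; instead, let's look at the principle by which they improve browser performance.

First, look at the carousel on the Taobao home page:

I want to ask a question: why is this animation implemented with a 3D transform when a 2D translate would clearly do?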

I am not a Taobao employee, but my first guess is that this is the translate3d hack. Simply put, if you add -webkit-transform: translateZ(0); or -webkit-transform: translate3d(0,0,0); to an element, you are telling the browser to render that element's layer on the GPU, which improves speed and performance compared with regular CPU rendering. (I'm fairly sure this enables hardware acceleration in Chrome, but there is no guarantee on other platforms. From what I have found, it also applies in most browsers, such as Firefox and Safari.)

But this statement is not quite accurate: at least in current versions of Chrome, it is not a hack, because by default every web page goes through the GPU when rendering. So is it still necessary? Yes. Before getting to the principle, you first need to understand the concept of a layer.

HTML is converted into a DOM tree in the browser, and each node of the DOM tree is converted into a RenderObject. Multiple RenderObjects may correspond to one or more RenderLayers. The browser's rendering process is as follows:

Take the DOM and split it into multiple layers (RenderLayer)

Rasterize each layer and draw it independently into a bitmap

Upload these bitmaps to the GPU as textures

Composite the layers to produce the final screen image (ultimate layer).

This is similar to 3D rendering in games: although we see a three-dimensional character, its skin is "pasted" and "pieced together" from different images. A web page has one more step: although the final page is composed of multiple bitmap layers, what we see is only a flattened copy, so ultimately there is just one layer. Of course, some layers cannot be merged, such as Flash. Taking a playback page on iQiyi (http://www.php.cn/) as an example, we can use Chrome's Layers panel (not enabled by default; it must be turned on manually) to view all the layers on the page:

We can see that the page is composed of the following layers:

OK, then here comes the question.

Suppose I now want to change the style of a container (think of it as one step of an animation) and, in the worst case, change its width and height. Why is changing width and height the worst case? Changing an object's style usually involves the following four steps:

Any change to a property causes the browser to recalculate the container's style. If you change the container's size or position (reflow), the first thing affected is the container's size and position (along with the positions of adjacent nodes under its parent, and so on), and then the browser has to repaint the container. But if you only change the background color or other properties unrelated to size, you skip the position calculation in the first step. In other words, the earlier (higher up) in the waterfall chart a property change starts, the greater its impact and the lower the efficiency. Reflow and repaint cause the bitmaps of every layer containing an affected node to be redrawn, and the process above runs again and again, reducing efficiency.
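The "earlier in the waterfall, greater the cost" rule can be written as a small lookup. The table below is a deliberately tiny, non-exhaustive sample, and the stage each property triggers can differ between browsers and versions; transform and opacity in particular are only compositor-only when the element has its own layer. The names `FIRST_STAGE` and `earliestStage` are invented for this sketch.

```javascript
// Earliest rendering stage that a change to each CSS property invalidates.
// A small illustrative sample; real behavior varies by browser and version.
const FIRST_STAGE = new Map([
  ["width", "layout"], ["height", "layout"], ["left", "layout"],
  ["top", "layout"], ["margin", "layout"],
  ["background-color", "paint"], ["color", "paint"], ["box-shadow", "paint"],
  ["transform", "composite"], ["opacity", "composite"],
]);
const STAGE_ORDER = ["layout", "paint", "composite"];

// Given the properties an animation step changes, return the earliest stage
// the browser has to redo. Unknown properties are treated as "layout" to be safe.
function earliestStage(changedProperties) {
  let earliest = STAGE_ORDER.length - 1;
  for (const prop of changedProperties) {
    const stage = FIRST_STAGE.get(prop) || "layout";
    earliest = Math.min(earliest, STAGE_ORDER.indexOf(stage));
  }
  return STAGE_ORDER[earliest];
}

console.log(earliestStage(["width", "opacity"]));      // layout
console.log(earliestStage(["background-color"]));      // paint
console.log(earliestStage(["transform", "opacity"]));  // composite
```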

To minimize the cost, it is of course best to leave only the compositing step. Suppose that changing a container's style affects only the container itself and requires no redrawing; wouldn't it be better to make the change directly by altering the properties of its texture on the GPU? This is achievable, provided the element has its own layer.

This is the principle behind the hardware acceleration hack above, and behind CSS animations: give the element its own layer, rather than having it share a layer with most of the other elements on the page.
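From script, the same principle means moving an element by writing its transform rather than its left or top. A minimal sketch, assuming the unprefixed `transform` property is available (the article's examples use the `-webkit-` prefix) and using invented helper names:

```javascript
// Build a translate3d() value. Animating `transform` lets the compositor move
// an element that has its own layer without re-running layout or paint.
function translate3d(xPx, yPx) {
  return "translate3d(" + xPx + "px, " + yPx + "px, 0)";
}

// Move `el` horizontally by writing `transform` instead of `left`.
function moveTo(el, xPx) {
  el.style.transform = translate3d(xPx, 0);
}
```

For example, `moveTo(document.getElementById("foo"), 120)` sets the element's transform to `translate3d(120px, 0px, 0)`. Whether this actually skips layout and paint depends on the browser promoting the element to its own layer.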

What kind of elements can create their own layer? In Chrome, at least one of the following conditions must be met:

Layer has 3D or perspective transform CSS properties (properties with 3D elements)

Layer is used by

Layer is used by a

Layer is used for a composited plugin (such as Flash)

Layer uses a CSS animation for its opacity or uses an animated webkit transform (CSS animation)

Layer uses accelerated CSS filters (CSS filter)

Layer with a composited descendant has information that needs to be in the composited layer tree, such as a clip or reflection (a descendant element is an independent layer)

Layer has a sibling with a lower z-index which has a compositing layer (in other words the layer is rendered on top of a composited layer) (a sibling of the element is an independent layer)

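You can also tick the Show composited layer borders option under the Rendering tab of Chrome's developer tools. The layers on the page will then be outlined with borders. To verify our idea, look at the following code: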

<html>

<head>

<style type="text/css">

p {

-webkit-animation-duration: 5s;

-webkit-animation-name: slide;

-webkit-animation-iteration-count: infinite;

-webkit-animation-direction: alternate;

width: 200px;

height: 200px;

margin: 100px;

background-color: skyblue;

}

@-webkit-keyframes slide {

from {

-webkit-transform: rotate(0deg);

}

to {

-webkit-transform: rotate(120deg);

}

}

</style>

</head>

<body>

<p id="foo">I am a strange root.</p>

</body>

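</html>

A screenshot of the timeline while it runs:

You can see that the element has its own layer, and that no reflow or repaint is triggered during the animation.

Finally, look at the Taobao home page again; the carousel is not the only thing with its own layer:

But too many layers are not necessarily a good thing. If you are interested, read this article: Jank Busting Apple’s Home Page. It looks at the problems that arose when Apple's home page had too many layers.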
