Example of using Python Selenium to crawl content and store it in a MySQL database (with diagrams)

This article introduces code for crawling content with Python Selenium and storing it in a MySQL database, for readers who need a reference.

An earlier article described how to crawl CSDN blog summaries and other information. Data crawled with a Selenium crawler is usually stored in TXT files, but that makes data processing and analysis difficult. This article crawls my personal blog information with Selenium and stores it in a MySQL database so the data can be analyzed: for example, finding which time periods have the most posts, using WordCloud to analyze article topics, or ranking articles by read count.

This is a basic article, and I hope it helps. Please forgive any errors or omissions. The next article will briefly explain the data analysis process.

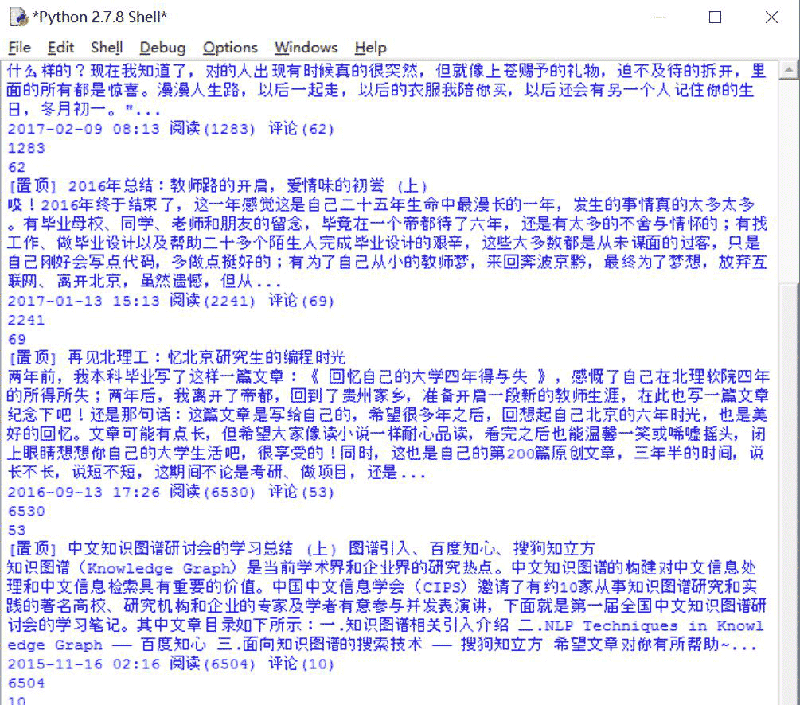

1. Crawling results

The crawled address is: http://blog.csdn.net/Eastmount

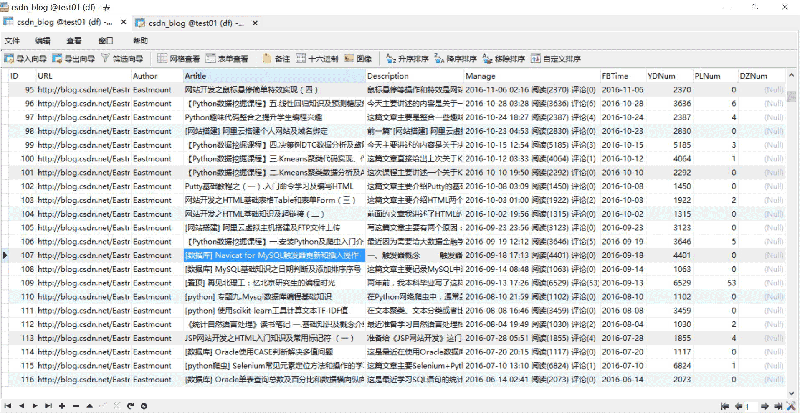

The crawled results, stored in the MySQL database, are shown below:

The complete code is as follows:

# coding=utf-8
from selenium import webdriver
from selenium.webdriver.common.keys import Keys
import selenium.webdriver.support.ui as ui
import re
import time
import os
import codecs
import MySQLdb

# Open the Firefox browser and set the page-load wait time
driver = webdriver.Firefox()
wait = ui.WebDriverWait(driver,10)

# Get the total page count shown at the bottom of each blogger's page
def getPage():
    print 'getPage'
    texts = driver.find_element_by_xpath("//p[@id='papelist']").text
    print 'Pager text:', texts
    m = re.findall(r'(\w*[0-9]+)\w*',texts)  # find the numbers with a regular expression
    print 'Total pages: ' + str(m[1])
    return int(m[1])
#主函数

def main():

#获取txt文件总行数

count = len(open("Blog_URL.txt",'rU').readlines())

print count

n = 0

urlfile = open("Blog_URL.txt",'r')

#循环获取每个博主的文章摘信息

while n < count: #这里爬取2个人博客信息,正常情况count个博主信息

url = urlfile.readline()

url = url.strip("\n")

print url

driver.get(url)

#获取总页码

allPage = getPage()

print u'页码总数为:', allPage

time.sleep(2)

#数据库操作结合

try:

conn=MySQLdb.connect(host='localhost',user='root',

passwd='123456',port=3306, db='test01')

cur=conn.cursor() #数据库游标

#报错:UnicodeEncodeError: 'latin-1' codec can't encode character

conn.set_character_set('utf8')

cur.execute('SET NAMES utf8;')

cur.execute('SET CHARACTER SET utf8;')

cur.execute('SET character_set_connection=utf8;')

#具体内容处理

m = 1 #第1页

while m <= allPage:

ur = url + "/article/list/" + str(m)

print ur

driver.get(ur)

#标题

article_title = driver.find_elements_by_xpath("//p[@class='article_title']")

for title in article_title:

#print url

con = title.text

con = con.strip("\n")

#print con + '\n'

#摘要

article_description = driver.find_elements_by_xpath("//p[@class='article_description']")

for description in article_description:

con = description.text

con = con.strip("\n")

#print con + '\n'

#信息

article_manage = driver.find_elements_by_xpath("//p[@class='article_manage']")

for manage in article_manage:

con = manage.text

con = con.strip("\n")

#print con + '\n'

num = 0

print u'长度', len(article_title)

while num < len(article_title):

#插入数据 8个值

sql = '''insert into csdn_blog

(URL,Author,Artitle,Description,Manage,FBTime,YDNum,PLNum)

values(%s, %s, %s, %s, %s, %s, %s, %s)'''

Artitle = article_title[num].text

Description = article_description[num].text

Manage = article_manage[num].text

print Artitle

print Description

print Manage

#获取作者

Author = url.split('/')[-1]

#获取阅读数和评论数

mode = re.compile(r'\d+\.?\d*')

YDNum = mode.findall(Manage)[-2]

PLNum = mode.findall(Manage)[-1]

print YDNum

print PLNum

#获取发布时间

end = Manage.find(u' 阅读')

FBTime = Manage[:end]

cur.execute(sql, (url, Author, Artitle, Description, Manage,FBTime,YDNum,PLNum))

num = num + 1

else:

print u'数据库插入成功'

m = m + 1

#异常处理

except MySQLdb.Error,e:

print "Mysql Error %d: %s" % (e.args[0], e.args[1])

finally:

cur.close()

conn.commit()

conn.close()

n = n + 1

else:

urlfile.close()

print 'Load Over'

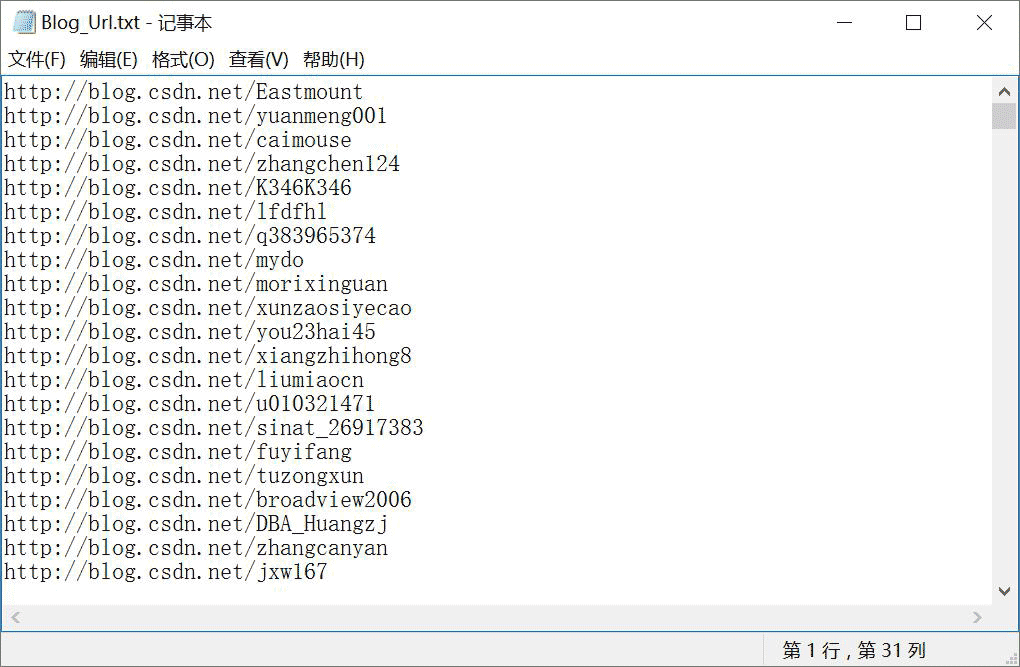

main()Place the blog address URL of the user that needs to be crawled in the Blog_Url.txt file, as shown in the figure below. Note that here, the author has pre-written a URL code to crawl all CSDN experts, which has been omitted here to access other people's resources to increase reading volume.
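As a small sketch of the file-reading step, the URL list can be loaded in one pass inside a `with` block, which closes the file automatically and skips blank lines. The helper name `read_blog_urls` is hypothetical, and the sketch uses Python 3 syntax rather than the Python 2 of the listing above.

```python
import os
import tempfile


def read_blog_urls(path):
    """Return the non-empty lines of a URL list file, stripped of whitespace."""
    with open(path, "r", encoding="utf-8") as url_file:
        return [line.strip() for line in url_file if line.strip()]


# Demonstration with a temporary file standing in for Blog_URL.txt.
with tempfile.TemporaryDirectory() as tmp_dir:
    demo_path = os.path.join(tmp_dir, "Blog_URL.txt")
    with open(demo_path, "w", encoding="utf-8") as demo_file:
        demo_file.write("http://blog.csdn.net/Eastmount\n\n")
    urls = read_blog_urls(demo_path)

print(urls)
```

Looping over the returned list with `for url in urls` would also remove the need for a separate line count and a manual counter.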

The analysis process is as follows.

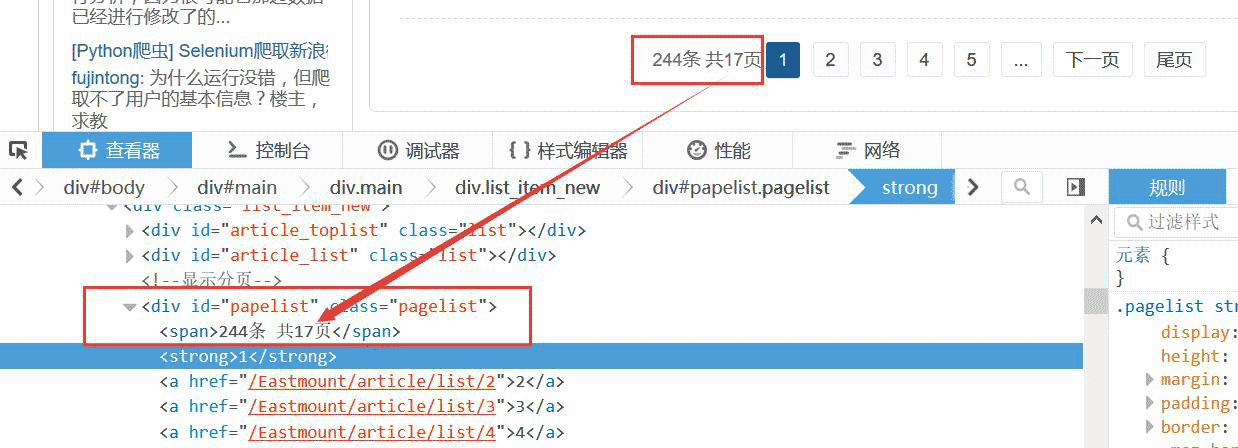

1. Get the blogger's total page count

First read the blogger's address from Blog_URL.txt, then open it and get the total page count. The code is as follows:

# Get the total page count shown at the bottom of each blogger's page
def getPage():
    print 'getPage'
    texts = driver.find_element_by_xpath("//p[@id='papelist']").text
    print 'Pager text:', texts
    m = re.findall(r'(\w*[0-9]+)\w*',texts)  # find the numbers with a regular expression
    print 'Total pages: ' + str(m[1])
    return int(m[1])

For example, the total page count is 17, as shown in the figure below:

Paging on the blog works by URL concatenation, which is convenient. For example: http://blog.csdn.net/Eastmount/article/list/2

So the crawler only needs to: 1. get the total page count; 2. crawl the information on each page; 3. build the URL of the next page in a loop; 4. crawl again.

Alternatively, you can click "Next Page" to move forward. When there is no "Next Page" link, paging stops, the crawl of that blog ends, and the next blogger is crawled.
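The paging steps above can be sketched without a browser. This is a minimal illustration in Python 3 syntax, not the article's exact code: the function names and the sample pager text are hypothetical, and it uses the simpler pattern `\d+` while keeping the listing's assumption that the second number in the pager text is the page count.

```python
import re


def parse_total_pages(pager_text):
    """Return the second integer found in the pager text, or 0 if absent."""
    numbers = re.findall(r"\d+", pager_text)
    if len(numbers) < 2:
        return 0
    return int(numbers[1])


def list_page_urls(blog_url, total_pages):
    """Build the /article/list/N URL for every page from 1 to total_pages."""
    base = blog_url.rstrip("/")
    return [f"{base}/article/list/{page}" for page in range(1, total_pages + 1)]


# Hypothetical pager text: an item count followed by a page count.
sample_pager = "246 items, 17 pages in total"
total = parse_total_pages(sample_pager)
page_urls = list_page_urls("http://blog.csdn.net/Eastmount", total)
print(total, page_urls[1])
```

Returning 0 when fewer than two numbers are found gives the caller a simple signal that the pager could not be read.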

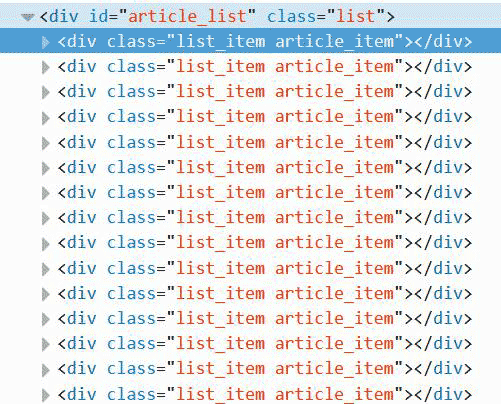

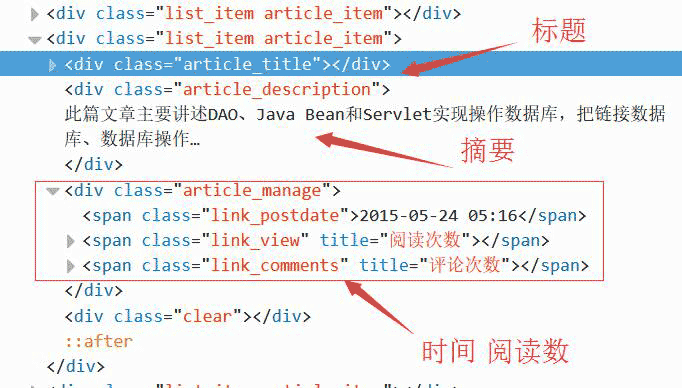

Next, inspect the elements and analyze each blog page. Crawling with BeautifulSoup reports a "Forbidden" error. Inspection shows that each article is made up of a single element, as shown below, so you only need to locate it.

Once the element is located, its content can be crawled. Here you need to locate the title, the summary, and the time.

The code is shown below. Note that the while loop reads the three values together, because they correspond to one another by index.

# Titles
article_title = driver.find_elements_by_xpath("//p[@class='article_title']")
for title in article_title:
    con = title.text
    con = con.strip("\n")
    print con + '\n'

# Summaries
article_description = driver.find_elements_by_xpath("//p[@class='article_description']")
for description in article_description:
    con = description.text
    con = con.strip("\n")
    print con + '\n'

# Info line (publish time, read count, comment count)
article_manage = driver.find_elements_by_xpath("//p[@class='article_manage']")
for manage in article_manage:
    con = manage.text
    con = con.strip("\n")
    print con + '\n'

num = 0
print u'Count:', len(article_title)
while num < len(article_title):
    Artitle = article_title[num].text
    Description = article_description[num].text
    Manage = article_manage[num].text
    print Artitle, Description, Manage
    num = num + 1  # advance the index, otherwise the loop never ends

4. Special string handling

The code below gets the blogger name after the last / in the URL, the time string, and the read count:

# Get the blogger name
url = "http://blog.csdn.net/Eastmount"
print url.split('/')[-1]
# Output: Eastmount

# Get the numbers
name = "2015-09-08 18:06 阅读(909) 评论(0)"
print name
import re
mode = re.compile(r'\d+\.?\d*')
print mode.findall(name)
# Output: ['2015', '09', '08', '18', '06', '909', '0']
print mode.findall(name)[-2]
# Output: 909

# Get the time
end = name.find(r' 阅读')
print name[:end]
# Output: 2015-09-08 18:06

import time, datetime
a = time.strptime(name[:end],'%Y-%m-%d %H:%M')
print a
# Output: time.struct_time(tm_year=2015, tm_mon=9, tm_mday=8, tm_hour=18, tm_min=6,
#         tm_sec=0, tm_wday=1, tm_yday=251, tm_isdst=-1)
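The same string handling can be gathered into one function that returns typed values. This is a sketch in Python 3 syntax; the function name `parse_manage` is hypothetical, and it assumes the info string always has the layout of the sample above (a timestamp, then the " 阅读" reads label, then the comments label).

```python
import re
from datetime import datetime


def parse_manage(manage_text):
    """Split an info string into publish time, read count, and comment count."""
    numbers = re.findall(r"\d+", manage_text)
    read_count = int(numbers[-2])
    comment_count = int(numbers[-1])
    # The timestamp is everything before the " 阅读" (reads) label.
    end = manage_text.find(" 阅读")
    published = datetime.strptime(manage_text[:end], "%Y-%m-%d %H:%M")
    return published, read_count, comment_count


published, reads, comments = parse_manage("2015-09-08 18:06 阅读(909) 评论(0)")
print(published.isoformat(), reads, comments)
```

Converting the counts to `int` and the time to `datetime` before the insert keeps the values in line with the integer and datetime column types used for storage.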

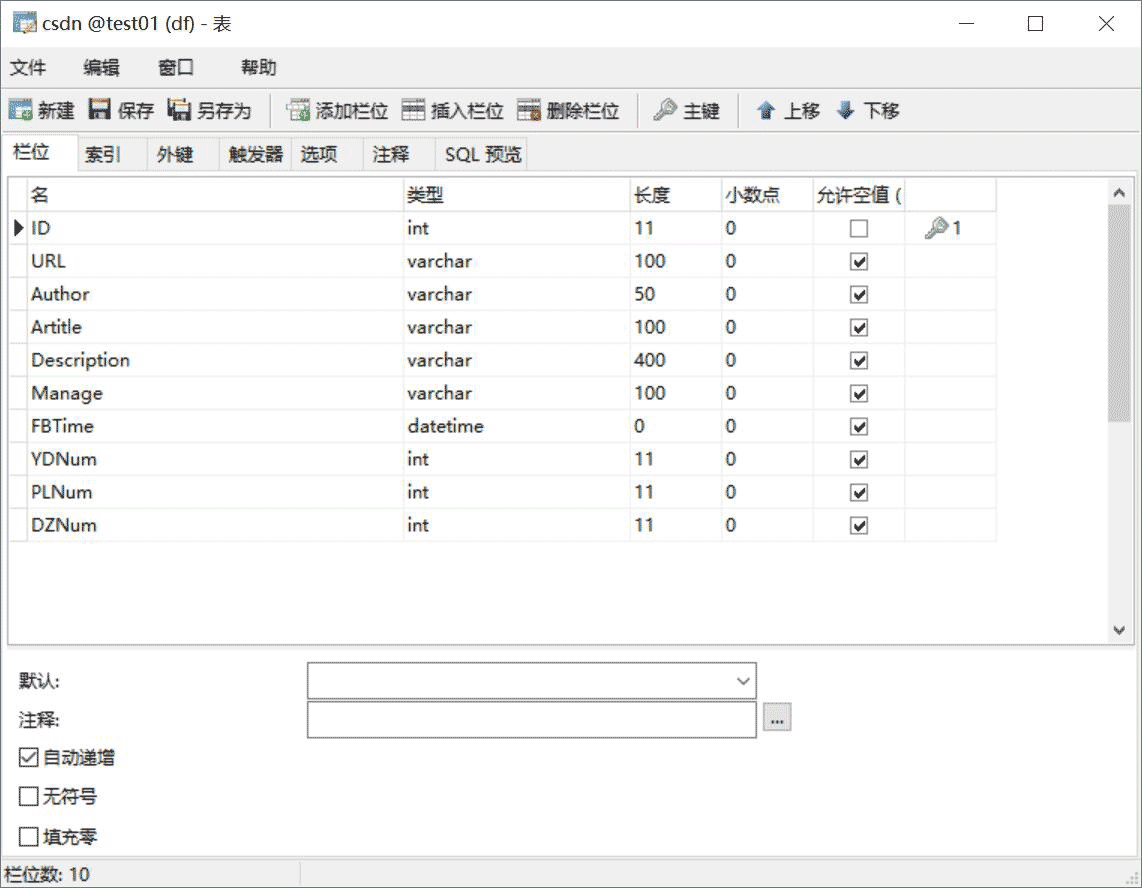

3. Database operations

The SQL statement that creates the table is as follows:

CREATE TABLE `csdn` (
  `ID` int(11) NOT NULL AUTO_INCREMENT,
  `URL` varchar(100) COLLATE utf8_bin DEFAULT NULL,
  `Author` varchar(50) COLLATE utf8_bin DEFAULT NULL COMMENT 'author',
  `Artitle` varchar(100) COLLATE utf8_bin DEFAULT NULL COMMENT 'title',
  `Description` varchar(400) COLLATE utf8_bin DEFAULT NULL COMMENT 'summary',
  `Manage` varchar(100) COLLATE utf8_bin DEFAULT NULL COMMENT 'info',
  `FBTime` datetime DEFAULT NULL COMMENT 'publish date',
  `YDNum` int(11) DEFAULT NULL COMMENT 'read count',
  `PLNum` int(11) DEFAULT NULL COMMENT 'comment count',
  `DZNum` int(11) DEFAULT NULL COMMENT 'like count',
  PRIMARY KEY (`ID`)
) ENGINE=InnoDB AUTO_INCREMENT=9371 DEFAULT CHARSET=utf8 COLLATE=utf8_bin;

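The result is displayed as shown in the figure below:

For calling MySQL from Python, the following article is recommended:

Python Topic 9: MySQL Database Programming Basics (python专题九.Mysql数据库编程基础知识)

The core code is as follows: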

# coding:utf-8
import MySQLdb

try:
    conn=MySQLdb.connect(host='localhost',user='root',passwd='123456',port=3306, db='test01')
    cur=conn.cursor()

    # Insert data
    sql = '''insert into student values(%s, %s, %s)'''
    cur.execute(sql, ('yxz','111111', '10'))

    # Query data
    print u'\nInserted data:'
    cur.execute('select * from student')
    for data in cur.fetchall():
        print '%s %s %s' % data
    cur.close()
    conn.commit()
    conn.close()
except MySQLdb.Error,e:
    print "Mysql Error %d: %s" % (e.args[0], e.args[1])
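The insert-then-query pattern can be tried without a MySQL server. The sketch below swaps in `sqlite3` from the standard library purely so that it runs anywhere; it is not the MySQLdb code above. Note that sqlite3 uses `?` placeholders where MySQLdb uses `%s`, and that the column names of the `student` table are assumptions made for illustration. Python 3 syntax is used.

```python
import sqlite3

# sqlite3 (standard library) stands in for MySQL here so the sketch runs
# without a database server; it uses "?" placeholders where MySQLdb uses "%s".
conn = sqlite3.connect(":memory:")
try:
    cur = conn.cursor()
    # Hypothetical three-column table mirroring the student example.
    cur.execute("CREATE TABLE student (name TEXT, password TEXT, age TEXT)")
    # Values are passed separately from the SQL text, never formatted into it.
    cur.execute("INSERT INTO student VALUES (?, ?, ?)", ("yxz", "111111", "10"))
    conn.commit()
    cur.execute("SELECT * FROM student")
    rows = cur.fetchall()
finally:
    conn.close()

print(rows)
```

Passing the values as a separate tuple, as both versions do, lets the driver handle quoting and escaping of crawled text such as titles and summaries.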

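Note that during crawling, some blogs use the newer site layout, and the page count cannot be obtained from them.

For example: http://blog.csdn.net/michaelzhou224

In that case a small change is needed to skip these links and save them to a file. The core code is as follows: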

# Get the total page count shown at the bottom of each blogger's page
def getPage():
    print 'getPage'
    #texts = driver.find_element_by_xpath("//p[@id='papelist']").text
    texts = driver.find_element_by_xpath("//p[@class='pagelist']").text
    print 'testsss'
    print u'Pager text:', texts
    if texts=="":
        print u'Page count is 0, site error'
        return 0
    m = re.findall(r'(\w*[0-9]+)\w*',texts)  # find the numbers with a regular expression
    print u'Total pages: ' + str(m[1])
    return int(m[1])

The main function is modified as follows:

    error = codecs.open("Blog_Error.txt", 'a', 'utf-8')

    # Loop over the bloggers and fetch each one's article summaries
    while n < count:  # 2 bloggers were crawled here; normally this covers all count bloggers
        url = urlfile.readline()
        url = url.strip("\n")
        print url
        driver.get(url+"/article/list/1")
        #print driver.page_source
        # Get the total page count
        allPage = getPage()
        print u'Total pages:', allPage
        # Record the failure and move on, otherwise the program stalls here
        if allPage==0:
            error.write(url + "\r\n")
            print u'Bad URL'
            n = n + 1  # count this blogger before skipping, so the loop still ends at count
            continue   # skip to the next blogger
        time.sleep(2)

        # Database operations
        try:
            .....
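The skip-and-log idea can be isolated from the browser and the database. In this sketch (Python 3 syntax) the function name `crawl_all`, the `fake_pages` lookup standing in for `getPage()`, and the in-memory log standing in for Blog_Error.txt are all hypothetical.

```python
import io


def crawl_all(urls, get_total_pages, error_log):
    """Visit each URL once; record URLs with no page count and move on."""
    crawled = []
    for url in urls:  # a for loop advances even when an entry is skipped
        total_pages = get_total_pages(url)
        if total_pages == 0:
            error_log.write(url + "\n")
            continue
        crawled.append((url, total_pages))
    return crawled


# Hypothetical stand-in for getPage(): the second blog reports no pager.
fake_pages = {
    "http://blog.csdn.net/Eastmount": 17,
    "http://blog.csdn.net/michaelzhou224": 0,
}
log = io.StringIO()  # stands in for Blog_Error.txt
result = crawl_all(list(fake_pages), fake_pages.get, log)
print(result, log.getvalue().split())
```

Iterating with `for` instead of a manual counter means a skipped URL cannot leave the counter behind, which is the risk when `continue` is used inside a `while n < count` loop.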
