Learning and using Java thread pool principles (picture)

Technical background of thread pool

In Object-oriented programming, creating and destroying objects is very time-consuming, becauseCreate an objectTo obtain memory resources or other more resources. This is especially true in Java, where the virtual machine will try to keep track of every object so that it can be garbage collected after the object is destroyed. So one way to improve the efficiency of service programs is to reduce the number of object creation and destruction as much as possible, especially the creation and destruction of some resource-intensive objects. How to use existing objects to serve is a key issue that needs to be solved. In fact, this is the reason for the emergence of some "pooled resource" technologies.

For example, many common components commonly seen in Android are generally inseparable from the concept of "pool", such as various image loading libraries, network request libraries, even Meaasge in Android's messaging mechanism should use Meaasge. obtain() is the object in the Meaasge pool used, so this concept is very important. The thread pool technology introduced in this article is also consistent with this idea.

Advantages of thread pool:

- Reuse threads in the thread pool to reduce performance overhead caused by object creation and destruction;

- It can effectively control the maximum number of concurrent threads, improve system resource utilization, and avoid excessive resource competition and congestion;

- It can perform simple management of multi-threads, making the use of threads simple and efficient.

- Thread pool framework

The thread pool in java is implemented through the Executor framework. The Executor framework includes classes: Executor, Executors, ExecutorService , ThreadPoolExecutor, C

allable and the use of Future and FutureTask, etc.

: All thread pool interfaces have only one method. public interface Executor {

void execute(Runnable command);

}

: Adding Executor behavior is the most direct interface of the Executor implementation class.

Executors: Provides a series of factory methods for creating thread pools, and the returned thread pools all implement the ExecutorService interface.

ThreadPoolExecutor: The specific implementation class of the thread pool. Various thread pools in general use are implemented based on this class. Construction method

is as follows:public ThreadPoolExecutor(int corePoolSize,

int maximumPoolSize,

long keepAliveTime,

TimeUnit unit,

BlockingQueue<Runnable> workQueue) {

this(corePoolSize, maximumPoolSize, keepAliveTime, unit, workQueue,

Executors.defaultThreadFactory(), defaultHandler);

}

- corePoolSize

: The number of core threads in the thread pool, and the number of threads running in the thread pool will always be It will not exceed corePoolSize and can always survive by default. You can set allowCoreThreadTimeOut to True. At this time, the number of core threads is 0. At this time, keepAliveTime controls the timeout of all threads.

maximumPoolSize - : The maximum number of threads allowed by the thread pool;

- : Refers to Is the timeout for the idle thread to end;

- : Is an enumeration indicating the unit of keepAliveTime;

- : Represents the BlockingQueue

- : Blocking Queue (BlockingQueue) is a tool mainly used to control thread synchronization under java.util.concurrent. If the BlockQueue is empty, the operation of fetching things from the BlockingQueue will be blocked and enter the waiting state, and will not be awakened until something enters the BlockingQueue. Similarly, if the BlockingQueue is full, any operation that attempts to store something in it will also be blocked and enter a waiting state. It will not be awakened to continue the operation until there is space in the BlockingQueue.

Blocking queues are often used in producer and consumer scenarios. The producer is the thread that adds elements to the queue, and the consumer is the thread that takes elements from the queue. The blocking queue is a container where the producer stores elements, and the consumer only takes elements from the container. Specific implementation classes include LinkedBlockingQueue, ArrayBlockingQueued, etc. Generally, blocking and awakening are implemented internally through Lock and Condition (display lock (Lock) and Condition learning and use).

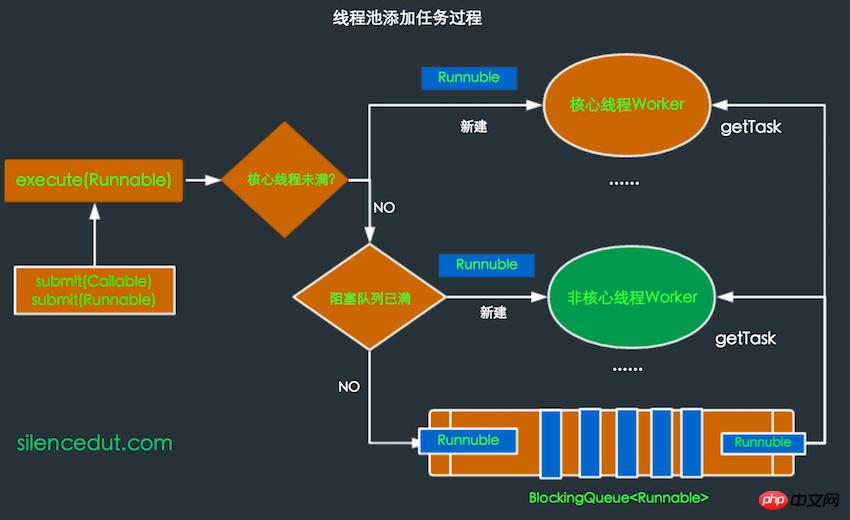

The working process of the thread pool is as follows:

When the thread pool is first created, there is no thread in it. The task queue is passed in as a parameter. However, even if there are tasks in the queue, the thread pool will not execute them immediately.

- When the execute() method is called to add a task, the thread pool will make the following judgment:

- If the thread is running If the number is less than corePoolSize, then create a thread immediately to run the task;

- If the number of running threads is greater than or equal to corePoolSize, then put the task into the queue;

如果这时候队列满了,而且正在运行的线程数量小于 maximumPoolSize,那么还是要创建非核心线程立刻运行这个任务;

如果队列满了,而且正在运行的线程数量大于或等于 maximumPoolSize,那么线程池会抛出异常RejectExecutionException。

当一个线程完成任务时,它会从队列中取下一个任务来执行。

当一个线程无事可做,超过一定的时间(keepAliveTime)时,线程池会判断,如果当前运行的线程数大于 corePoolSize,那么这个线程就被停掉。所以线程池的所有任务完成后,它最终会收缩到 corePoolSize 的大小。

线程池的创建和使用

生成线程池采用了工具类Executors的静态方法,以下是几种常见的线程池。

SingleThreadExecutor:单个后台线程 (其缓冲队列是无界的)

public static ExecutorService newSingleThreadExecutor() {

return new FinalizableDelegatedExecutorService (

new ThreadPoolExecutor(1, 1,

0L, TimeUnit.MILLISECONDS,

new LinkedBlockingQueue<Runnable>()));

}创建一个单线程的线程池。这个线程池只有一个核心线程在工作,也就是相当于单线程串行执行所有任务。如果这个唯一的线程因为异常结束,那么会有一个新的线程来替代它。此线程池保证所有任务的执行顺序按照任务的提交顺序执行。

FixedThreadPool:只有核心线程的线程池,大小固定 (其缓冲队列是无界的) 。

public static ExecutorService newFixedThreadPool(int nThreads) {

return new ThreadPoolExecutor(nThreads, nThreads,

0L, TimeUnit.MILLISECONDS,

new LinkedBlockingQueue<Runnable>());

}创建固定大小的线程池。每次提交一个任务就创建一个线程,直到线程达到线程池的最大大小。线程池的大小一旦达到最大值就会保持不变,如果某个线程因为执行异常而结束,那么线程池会补充一个新线程。

CachedThreadPool:无界线程池,可以进行自动线程回收。

public static ExecutorService newCachedThreadPool() {

return new ThreadPoolExecutor(0,Integer.MAX_VALUE,

60L, TimeUnit.SECONDS,

new SynchronousQueue<Runnable>());

}如果线程池的大小超过了处理任务所需要的线程,那么就会回收部分空闲(60秒不执行任务)的线程,当任务数增加时,此线程池又可以智能的添加新线程来处理任务。此线程池不会对线程池大小做限制,线程池大小完全依赖于操作系统(或者说JVM)能够创建的最大线程大小。SynchronousQueue是一个是缓冲区为1的阻塞队列。

ScheduledThreadPool:核心线程池固定,大小无限的线程池。此线程池支持定时以及周期性执行任务的需求。

public static ExecutorService newScheduledThreadPool(int corePoolSize) {

return new ScheduledThreadPool(corePoolSize,

Integer.MAX_VALUE,

DEFAULT_KEEPALIVE_MILLIS, MILLISECONDS,

new DelayedWorkQueue());

}创建一个周期性执行任务的线程池。如果闲置,非核心线程池会在DEFAULT_KEEPALIVEMILLIS时间内回收。

线程池最常用的提交任务的方法有两种:

execute:

ExecutorService.execute(Runnable runable);

submit:

FutureTask task = ExecutorService.submit(Runnable runnable); FutureTask<T> task = ExecutorService.submit(Runnable runnable,T Result); FutureTask<T> task = ExecutorService.submit(Callable<T> callable);

submit(Callable callable)的实现,submit(Runnable runnable)同理。

public <T> Future<T> submit(Callable<T> task) {

if (task == null) throw new NullPointerException();

FutureTask<T> ftask = newTaskFor(task);

execute(ftask);

return ftask;

}可以看出submit开启的是有返回结果的任务,会返回一个FutureTask对象,这样就能通过get()方法得到结果。submit最终调用的也是execute(Runnable runable),submit只是将Callable对象或Runnable封装成一个FutureTask对象,因为FutureTask是个Runnable,所以可以在execute中执行。关于Callable对象和Runnable怎么封装成FutureTask对象,见Callable和Future、FutureTask的使用。

线程池实现的原理

如果只讲线程池的使用,那这篇博客没有什么大的价值,充其量也就是熟悉Executor相关API的过程。线程池的实现过程没有用到Synchronized关键字,用的都是Volatile,Lock和同步(阻塞)队列,Atomic相关类,FutureTask等等,因为后者的性能更优。理解的过程可以很好的学习源码中并发控制的思想。

在开篇提到过线程池的优点是可总结为以下三点:

线程复用

控制最大并发数

管理线程

1.线程复用过程

理解线程复用原理首先应了解线程生命周期。

在线程的生命周期中,它要经过新建(New)、就绪(Runnable)、运行(Running)、阻塞(Blocked)和死亡(Dead)5种状态。

Thread通过new来新建一个线程,这个过程是是初始化一些线程信息,如线程名,id,线程所属group等,可以认为只是个普通的对象。调用Thread的start()后Java虚拟机会为其创建方法调用栈和程序计数器,同时将hasBeenStarted为true,之后调用start方法就会有异常。

处于这个状态中的线程并没有开始运行,只是表示该线程可以运行了。至于该线程何时开始运行,取决于JVM里线程调度器的调度。当线程获取cpu后,run()方法会被调用。不要自己去调用Thread的run()方法。之后根据CPU的调度在就绪——运行——阻塞间切换,直到run()方法结束或其他方式停止线程,进入dead状态。

所以实现线程复用的原理应该就是要保持线程处于存活状态(就绪,运行或阻塞)。接下来来看下ThreadPoolExecutor是怎么实现线程复用的。

在ThreadPoolExecutor主要Worker类来控制线程的复用。看下Worker类简化后的代码,这样方便理解:

private final class Worker implements Runnable {

final Thread thread;

Runnable firstTask;

Worker(Runnable firstTask) {

this.firstTask = firstTask;

this.thread = getThreadFactory().newThread(this);

}

public void run() {

runWorker(this);

}

final void runWorker(Worker w) {

Runnable task = w.firstTask;

w.firstTask = null;

while (task != null || (task = getTask()) != null){

task.run();

}

}Worker是一个Runnable,同时拥有一个thread,这个thread就是要开启的线程,在新建Worker对象时同时新建一个Thread对象,同时将Worker自己作为参数传入TThread,这样当Thread的start()方法调用时,运行的实际上是Worker的run()方法,接着到runWorker()中,有个while循环,一直从getTask()里得到Runnable对象,顺序执行。getTask()又是怎么得到Runnable对象的呢?

依旧是简化后的代码:

private Runnable getTask() {

if(一些特殊情况) {

return null;

}

Runnable r = workQueue.take();

return r;

}这个workQueue就是初始化ThreadPoolExecutor时存放任务的BlockingQueue队列,这个队列里的存放的都是将要执行的Runnable任务。因为BlockingQueue是个阻塞队列,BlockingQueue.take()得到如果是空,则进入等待状态直到BlockingQueue有新的对象被加入时唤醒阻塞的线程。所以一般情况Thread的run()方法就不会结束,而是不断执行从workQueue里的Runnable任务,这就达到了线程复用的原理了。

2.控制最大并发数

那Runnable是什么时候放入workQueue?Worker又是什么时候创建,Worker里的Thread的又是什么时候调用start()开启新线程来执行Worker的run()方法的呢?有上面的分析看出Worker里的runWorker()执行任务时是一个接一个,串行进行的,那并发是怎么体现的呢?

很容易想到是在execute(Runnable runnable)时会做上面的一些任务。看下execute里是怎么做的。

execute:

简化后的代码

public void execute(Runnable command) {

if (command == null)

throw new NullPointerException();

int c = ctl.get();

// 当前线程数 < corePoolSize

if (workerCountOf(c) < corePoolSize) {

// 直接启动新的线程。

if (addWorker(command, true))

return;

c = ctl.get();

}

// 活动线程数 >= corePoolSize

// runState为RUNNING && 队列未满

if (isRunning(c) && workQueue.offer(command)) {

int recheck = ctl.get();

// 再次检验是否为RUNNING状态

// 非RUNNING状态 则从workQueue中移除任务并拒绝

if (!isRunning(recheck) && remove(command))

reject(command);// 采用线程池指定的策略拒绝任务

// 两种情况:

// 1.非RUNNING状态拒绝新的任务

// 2.队列满了启动新的线程失败(workCount > maximumPoolSize)

} else if (!addWorker(command, false))

reject(command);

}addWorker:

简化后的代码

private boolean addWorker(Runnable firstTask, boolean core) {

int wc = workerCountOf(c);

if (wc >= (core ? corePoolSize : maximumPoolSize)) {

return false;

}

w = new Worker(firstTask);

final Thread t = w.thread;

t.start();

}根据代码再来看上面提到的线程池工作过程中的添加任务的情况:

* 如果正在运行的线程数量小于 corePoolSize,那么马上创建线程运行这个任务; * 如果正在运行的线程数量大于或等于 corePoolSize,那么将这个任务放入队列; * 如果这时候队列满了,而且正在运行的线程数量小于 maximumPoolSize,那么还是要创建非核心线程立刻运行这个任务; * 如果队列满了,而且正在运行的线程数量大于或等于 maximumPoolSize,那么线程池会抛出异常RejectExecutionException。

这就是Android的AsyncTask在并行执行是在超出最大任务数是抛出RejectExecutionException的原因所在,详见基于最新版本的AsyncTask源码解读及AsyncTask的黑暗面

通过addWorker如果成功创建新的线程成功,则通过start()开启新线程,同时将firstTask作为这个Worker里的run()中执行的第一个任务。

虽然每个Worker的任务是串行处理,但如果创建了多个Worker,因为共用一个workQueue,所以就会并行处理了。

所以根据corePoolSize和maximumPoolSize来控制最大并发数。大致过程可用下图表示。

上面的讲解和图来可以很好的理解的这个过程。

如果是做Android开发的,并且对Handler原理比较熟悉,你可能会觉得这个图挺熟悉,其中的一些过程和Handler,Looper,Meaasge使用中,很相似。Handler.send(Message)相当于execute(Runnuble),Looper中维护的Meaasge队列相当于BlockingQueue,只不过需要自己通过同步来维护这个队列,Looper中的loop()函数循环从Meaasge队列取Meaasge和Worker中的runWork()不断从BlockingQueue取Runnable是同样的道理。

3.管理线程

通过线程池可以很好的管理线程的复用,控制并发数,以及销毁等过程,线程的复用和控制并发上面已经讲了,而线程的管理过程已经穿插在其中了,也很好理解。

在ThreadPoolExecutor有个ctl的AtomicInteger变量。通过这一个变量保存了两个内容:

所有线程的数量

每个线程所处的状态

其中低29位存线程数,高3位存runState,通过位运算来得到不同的值。

private final AtomicInteger ctl = new AtomicInteger(ctlOf(RUNNING, 0));

//得到线程的状态

private static int runStateOf(int c) {

return c & ~CAPACITY;

}

//得到Worker的的数量

private static int workerCountOf(int c) {

return c & CAPACITY;

}

// 判断线程是否在运行

private static boolean isRunning(int c) {

return c < SHUTDOWN;

}这里主要通过shutdown和shutdownNow()来分析线程池的关闭过程。首先线程池有五种状态来控制任务添加与执行。主要介绍以下三种:

RUNNING状态:线程池正常运行,可以接受新的任务并处理队列中的任务;

SHUTDOWN状态:不再接受新的任务,但是会执行队列中的任务;

STOP状态:不再接受新任务,不处理队列中的任务

shutdown This method will set the runState to SHUTDOWN and terminate all idle threads. The threads that are still working will not be affected, so the tasks in the queue will be executed. The shutdownNow method sets runState to STOP. The difference from the shutdown method is that this method will terminate all threads, so the tasks in the queue will not be executed.

Summary

Through the analysis of the ThreadPoolExecutor source code, we have an overall understanding of the creation of the thread pool, the addition of tasks, and the execution of tasks. If you are familiar with these processes, it will be easier to use the thread pool. .

The usage of concurrency control and producer-consumer model task processing learned from it will be of great help in understanding or solving other related problems in the future. For example, the Handler mechanism in Android, and the Messager queue in Looper can also be processed by a BlookQueue. This is what I gain from reading the source code.

The above is the detailed content of Learning and using Java thread pool principles (picture). For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1384

1384

52

52

Perfect Number in Java

Aug 30, 2024 pm 04:28 PM

Perfect Number in Java

Aug 30, 2024 pm 04:28 PM

Guide to Perfect Number in Java. Here we discuss the Definition, How to check Perfect number in Java?, examples with code implementation.

Weka in Java

Aug 30, 2024 pm 04:28 PM

Weka in Java

Aug 30, 2024 pm 04:28 PM

Guide to Weka in Java. Here we discuss the Introduction, how to use weka java, the type of platform, and advantages with examples.

Smith Number in Java

Aug 30, 2024 pm 04:28 PM

Smith Number in Java

Aug 30, 2024 pm 04:28 PM

Guide to Smith Number in Java. Here we discuss the Definition, How to check smith number in Java? example with code implementation.

Java Spring Interview Questions

Aug 30, 2024 pm 04:29 PM

Java Spring Interview Questions

Aug 30, 2024 pm 04:29 PM

In this article, we have kept the most asked Java Spring Interview Questions with their detailed answers. So that you can crack the interview.

Break or return from Java 8 stream forEach?

Feb 07, 2025 pm 12:09 PM

Break or return from Java 8 stream forEach?

Feb 07, 2025 pm 12:09 PM

Java 8 introduces the Stream API, providing a powerful and expressive way to process data collections. However, a common question when using Stream is: How to break or return from a forEach operation? Traditional loops allow for early interruption or return, but Stream's forEach method does not directly support this method. This article will explain the reasons and explore alternative methods for implementing premature termination in Stream processing systems. Further reading: Java Stream API improvements Understand Stream forEach The forEach method is a terminal operation that performs one operation on each element in the Stream. Its design intention is

TimeStamp to Date in Java

Aug 30, 2024 pm 04:28 PM

TimeStamp to Date in Java

Aug 30, 2024 pm 04:28 PM

Guide to TimeStamp to Date in Java. Here we also discuss the introduction and how to convert timestamp to date in java along with examples.

Java Program to Find the Volume of Capsule

Feb 07, 2025 am 11:37 AM

Java Program to Find the Volume of Capsule

Feb 07, 2025 am 11:37 AM

Capsules are three-dimensional geometric figures, composed of a cylinder and a hemisphere at both ends. The volume of the capsule can be calculated by adding the volume of the cylinder and the volume of the hemisphere at both ends. This tutorial will discuss how to calculate the volume of a given capsule in Java using different methods. Capsule volume formula The formula for capsule volume is as follows: Capsule volume = Cylindrical volume Volume Two hemisphere volume in, r: The radius of the hemisphere. h: The height of the cylinder (excluding the hemisphere). Example 1 enter Radius = 5 units Height = 10 units Output Volume = 1570.8 cubic units explain Calculate volume using formula: Volume = π × r2 × h (4

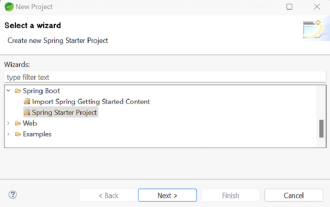

How to Run Your First Spring Boot Application in Spring Tool Suite?

Feb 07, 2025 pm 12:11 PM

How to Run Your First Spring Boot Application in Spring Tool Suite?

Feb 07, 2025 pm 12:11 PM

Spring Boot simplifies the creation of robust, scalable, and production-ready Java applications, revolutionizing Java development. Its "convention over configuration" approach, inherent to the Spring ecosystem, minimizes manual setup, allo