In the previous article, "Node entry scenario: crawler", we introduced the simplest Node crawler implementation. This article goes one step further and discusses how to get past the login step and crawl the data in the login area of a site.

Theoretical basis


HTTP is a stateless protocol. The client and server do not maintain a long-lived connection, so how can the server tell which requests come from the same client when every request and response is independent? The following mechanism comes to mind easily:

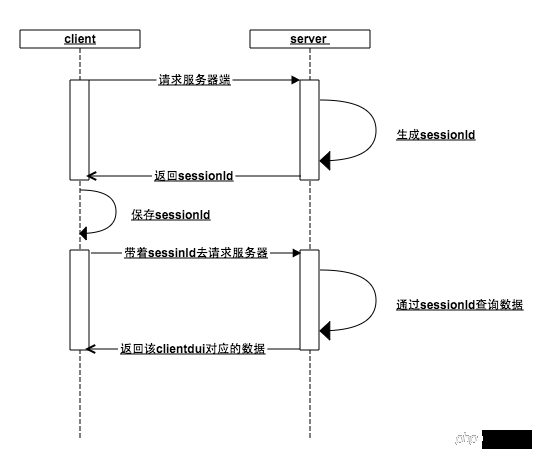

Session

The core of this mechanism is the session ID (sessionId):

1. When the client requests the server and the server finds that no sessionId was passed in, it concludes that this client is new, generates a sessionId for it, stores it in memory, and returns it to the client.
2. On later requests the client sends that sessionId along. As long as the sessionId is still in the server's memory at that moment (and user data has been saved with it in memory), the server can return the data belonging to that client based on the unique sessionId.
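The steps above can be sketched with an in-memory table. This is only a minimal illustration, not how any particular framework implements sessions; the names `sessions`, `handleRequest`, and `login` are hypothetical.

```javascript
const crypto = require('crypto');

// In-memory session table: sessionId -> data saved for that client.
const sessions = new Map();

// Hypothetical handler: receives the sessionId sent by the client (or undefined)
// and returns the sessionId to send back together with the stored data.
function handleRequest(sessionId) {
  if (!sessionId || !sessions.has(sessionId)) {
    // Unknown client, or the server lost the session: start over with a new id.
    const newId = crypto.randomBytes(16).toString('hex');
    sessions.set(newId, {});
    return { sessionId: newId, isNew: true, data: sessions.get(newId) };
  }
  return { sessionId: sessionId, isNew: false, data: sessions.get(sessionId) };
}

// Hypothetical login step: attach user data to an existing session.
function login(sessionId, user) {
  sessions.get(sessionId).user = user;
}

const first = handleRequest(undefined);        // new client, gets a sessionId
login(first.sessionId, 'alice');               // user data is saved in memory
const second = handleRequest(first.sessionId); // recognized by its sessionId
console.log(second.isNew, second.data.user);   // false alice
const third = handleRequest('unknown-id');     // id not in memory: start over
console.log(third.isNew);                      // true
```

Because the table lives in the process memory, restarting the server loses every session, which is the "start over" case.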

If either the client or the server loses the sessionId, the steps above are repeated: neither side recognizes the other anymore, and everything starts over.

How the browser does it


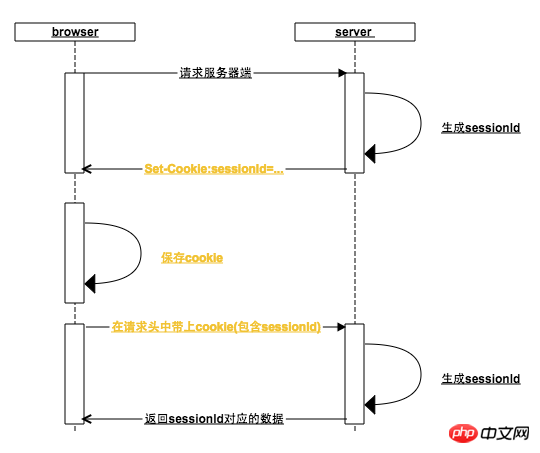

What the browser does:

1. On the first request to the server, the browser has no sessionId in its cookies yet.

2. The browser sets cookies according to the Set-Cookie fields in the server's response headers, which is why the server puts the generated sessionId into Set-Cookie. When the browser receives Set-Cookie, it stores a local cookie keyed by the domain name of the requested address. By default the server usually sets no expiry on the sessionId cookie, so it expires when the browser is closed. This is why a session lasts from opening the browser to closing it. (Some websites offer a "stay logged in" option and set cookies that do not expire for a long time.)

3. When the browser next sends a request to the backend, the cookie in the request header already contains the sessionId. If the user has gone through the login interface before, the user data can be queried based on the sessionId.
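To make the expiry behavior concrete, here is a small sketch that parses a Set-Cookie value and checks whether it describes a session cookie (no Expires and no Max-Age attribute). The function name `parseSetCookie` and the sample cookie names and values are made up for illustration; real browsers apply more rules than this.

```javascript
// Parse one Set-Cookie header value into its name, value and attributes.
function parseSetCookie(header) {
  const parts = header.split(';').map(function (p) { return p.trim(); });
  const first = parts[0];
  const eq = first.indexOf('=');
  const cookie = {
    name: first.slice(0, eq),
    value: first.slice(eq + 1),
    attributes: {}
  };
  parts.slice(1).forEach(function (part) {
    if (!part) return;
    const i = part.indexOf('=');
    const key = (i === -1 ? part : part.slice(0, i)).toLowerCase();
    // Flag attributes such as HttpOnly have no value; store them as true.
    cookie.attributes[key] = i === -1 ? true : part.slice(i + 1);
  });
  // Without Expires or Max-Age the cookie only lives until the browser closes.
  cookie.isSessionCookie =
    !('expires' in cookie.attributes) && !('max-age' in cookie.attributes);
  return cookie;
}

const sessionCookie = parseSetCookie('session_id=abc123; Path=/; HttpOnly');
const rememberMe = parseSetCookie('remember_token=xyz; Max-Age=1209600; Path=/');
console.log(sessionCookie.name, sessionCookie.isSessionCookie); // session_id true
console.log(rememberMe.name, rememberMe.isSessionCookie);       // remember_token false
```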

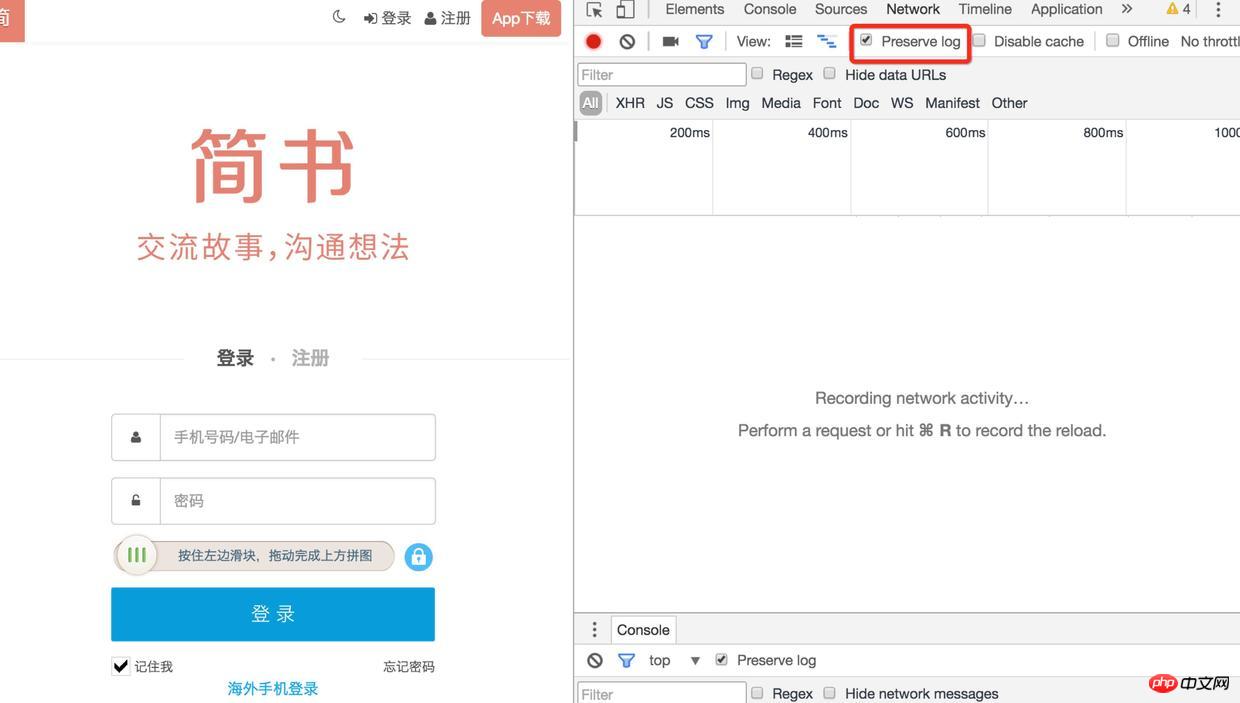

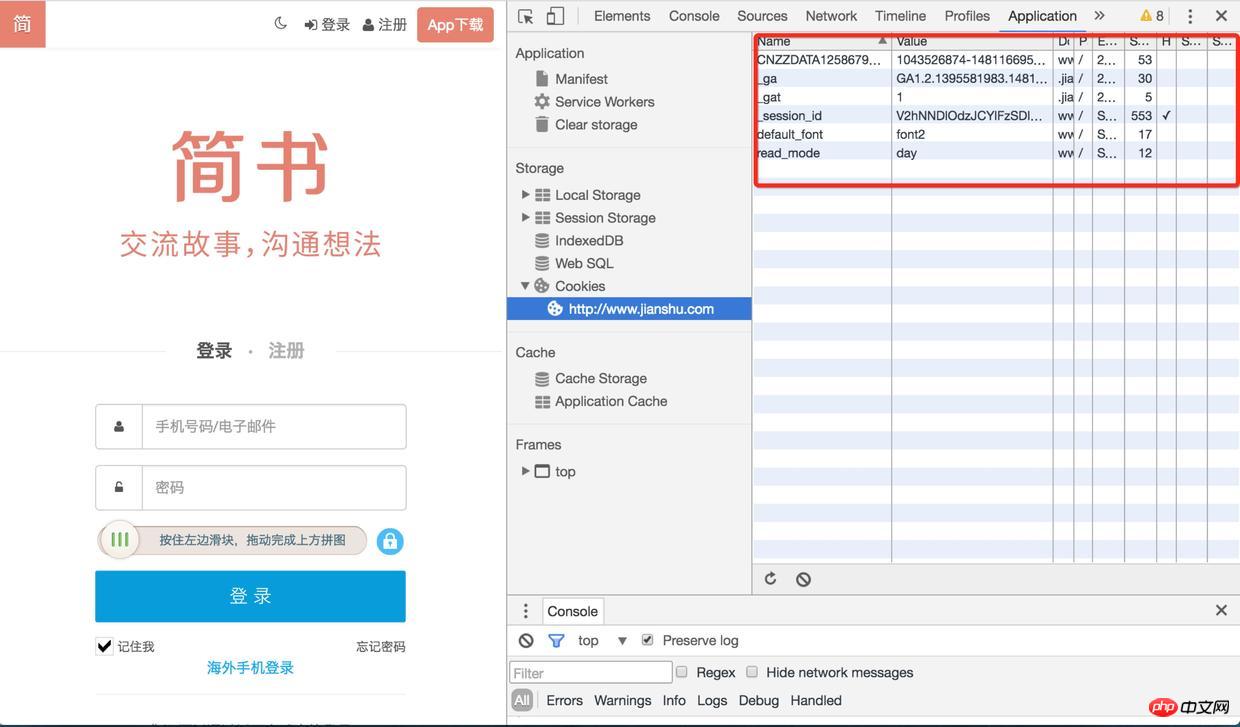

Seeing is believing, so here is an example:

1). First open the login page in Chrome, find all the cookies under http://www.jianshu.com in the Application panel, then go to the Network panel and check Preserve log (otherwise you will not be able to see earlier log entries after the page is redirected).

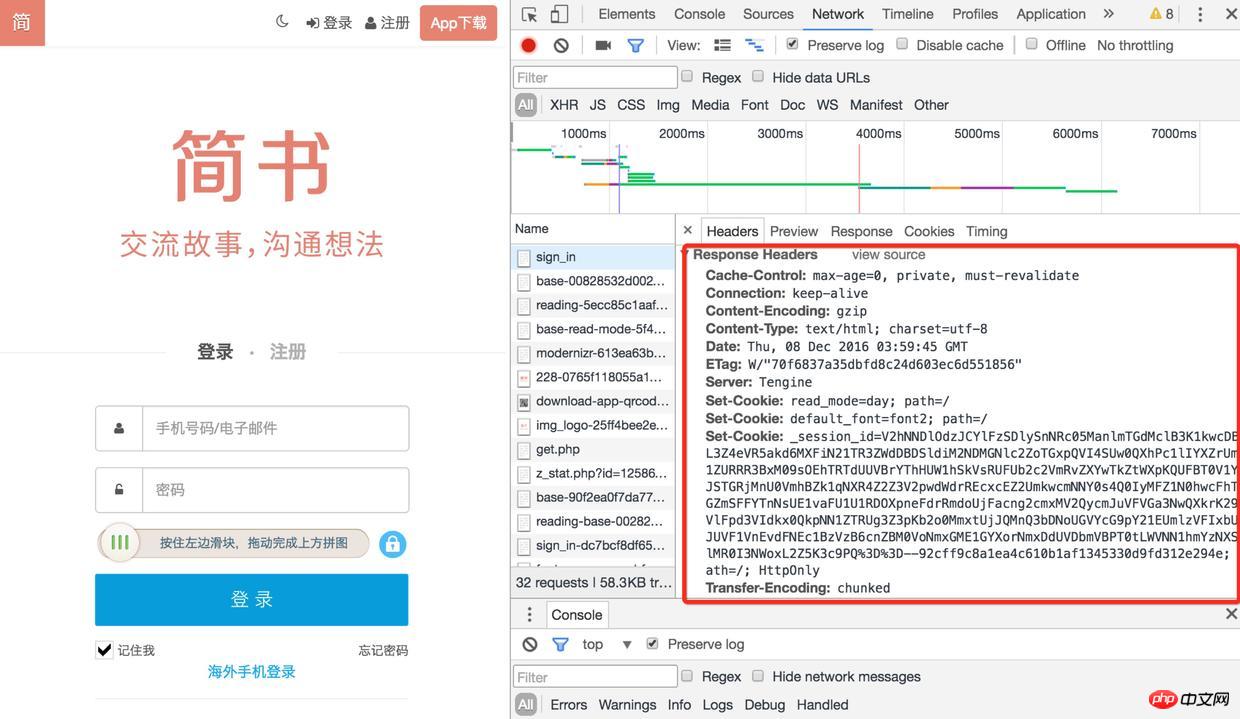

2). Then refresh the page and find the sign-in request. Its response headers contain many Set-Cookie fields.

3). When you check the cookies again, the session-id has been saved. The next time you request other interfaces (such as fetching the verification code or logging in), this session-id will be sent along. After logging in, the user's information will also be associated with this session-id.

We need to simulate how the browser works in order to crawl the data in a website's login area.

I picked a website without a verification code to test with. If there is a verification code, verification code recognition is involved (logins whose verification codes are impressively complex are not considered). The next section explains this.

```javascript
var superagent = require('superagent');

// Partial browser request headers
var browserMsg = {
    "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/54.0.2840.71 Safari/537.36",
    "Content-Type": "application/x-www-form-urlencoded"
};

// Call the login interface to obtain the cookie.
// `url.login_url` is assumed to be defined elsewhere (it is not shown in this article).
function getLoginCookie(userid, pwd) {
    userid = userid.toUpperCase();
    return new Promise(function (resolve, reject) {
        superagent.post(url.login_url).set(browserMsg).send({
            userid: userid,
            pwd: pwd,
            timezoneOffset: '0'
        }).redirects(0).end(function (err, response) {
            // With redirects disabled, a 3xx reply may be reported as an error,
            // so only reject when there is no response at all
            if (!response) {
                return reject(err);
            }
            // Get the cookie
            var cookie = response.headers["set-cookie"];
            resolve(cookie);
        });
    });
}
```

You need to capture a request in Chrome and copy some of its request headers, because the server may validate them. For example, on the website I experimented with, I did not send a User-Agent at first. The server detected that the request did not come from a browser and returned a string of error messages, so I later set the User-Agent and pretended to be a Chrome browser.

superagent is a client-side HTTP request library. It makes it easy to send requests and handle cookies (calling http.request yourself and working with the header fields is less convenient: after obtaining the set-cookie, you have to assemble it into a properly formatted cookie yourself). redirects(0) simply disables following redirects.
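For comparison, this is roughly what the manual assembly looks like when you work with Node's built-in http module, where `response.headers['set-cookie']` is an array of strings. The helper name `toCookieHeader` and the sample cookie values are hypothetical; the sketch keeps only the `name=value` part of each entry and ignores attributes such as Path or Expires.

```javascript
// Turn the "set-cookie" array from a response into one Cookie request header value.
function toCookieHeader(setCookieHeaders) {
  return (setCookieHeaders || [])
    .map(function (line) { return line.split(';')[0].trim(); }) // keep name=value only
    .filter(function (pair) { return pair.length > 0; })
    .join('; ');
}

// Sample values standing in for response.headers['set-cookie'].
const setCookie = [
  'session_id=abc123; Path=/; HttpOnly',
  'locale=en; Path=/'
];
const cookieHeader = toCookieHeader(setCookie);
console.log(cookieHeader); // session_id=abc123; locale=en
```

The resulting string can then be passed as the `Cookie` entry of the `headers` option on the next request.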

```javascript
var cheerio = require('cheerio');

// `url.target_url` is assumed to be defined elsewhere (it is not shown in this article).
function getData(cookie) {
    return new Promise(function (resolve, reject) {
        // Pass in the cookie
        superagent.get(url.target_url).set("Cookie", cookie).set(browserMsg).end(function (err, res) {
            if (err) {
                return reject(err);
            }
            var $ = cheerio.load(res.text);
            resolve({
                cookie: cookie,
                doc: $
            });
        });
    });
}
```

After obtaining the set-cookie in the previous step, pass it into the getData method. Once it is set on the request through superagent (the set-cookie is formatted into a cookie), you can fetch the logged-in data normally.

In real scenarios things may not go so smoothly, because different websites have different security measures. For example, some websites require you to request a token first, some require parameters to be encrypted, and some with higher security also have anti-replay mechanisms. For a targeted crawler, this requires a detailed analysis of how the website handles requests. If it cannot be circumvented, then leave it at that.

But this is still enough to deal with ordinary content and information websites.

What the request above returns is just an HTML string. Here we use the same old approach: load the string with the cheerio library, and you get an object similar to a jQuery DOM object that you can operate on just like with jQuery. This is really a great tool, made with care!

3. How to break the verification code if there is one?

How many websites let you log in without entering a verification code? Of course, we won't try to recognize the verification code of 12306; we don't expect to beat such a "conscientious" verification code. Overly simple verification codes like Zhihu's can still be challenged.

See "Simple recognition of verification codes in node.js".

However, even if graphicsmagick is used to preprocess the image, whether you achieve a high recognition rate still depends on luck.

4. Extension

There is a simpler way to get past the login state, which is to use PhantomJS. PhantomJS is an open-source, WebKit-based tool with a server-side JavaScript API. It can be thought of as a browser, except that you control it through JS scripts.

Since it fully simulates the behavior of a browser, you don't need to care about set-cookie and cookie at all; you only need to simulate the user's click operations (of course, if there is a verification code, you still have to recognize it).

This method is not without shortcomings. Fully simulating the browser means that it does not skip any request and has to load static resources such as JS, CSS, and images that you may not need, and you may need to click through multiple pages to reach the destination page, which is less efficient than accessing the target URL directly.

If you are interested, search for PhantomJS.

Although this article is about login for a Node crawler, much of it covers principles. The aim is that if you want to implement this in another language, you can do so with ease. As always: what matters is understanding the principle.

Feel free to leave a comment for discussion. If this helped you, please leave a like.

The above is the detailed content of Node crawler advanced - login. For more information, please follow other related articles on the PHP Chinese website!