Practical sharing of using Swoole to asynchronously crawl web pages


PHP programmers know that PHP code normally runs synchronously. So how do you write an asynchronous program in PHP? The answer here is Swoole. Using web page crawling as an example, this article shows how to write asynchronous programs with Swoole.

A synchronous PHP crawler

Before writing the asynchronous program, let's not rush: first, implement a synchronous version in plain PHP.

```php
<?php

/**
 * Class Crawler
 * Path: /Sync/Crawler.php
 */
class Crawler
{
    private $url;

    private $toVisit = [];

    public function __construct($url)
    {
        $this->url = $url;
    }

    public function visitOneDegree()
    {
        $this->loadPageUrls();
        $this->visitAll();
    }

    private function loadPageUrls()
    {
        $content = $this->visit($this->url);
        $pattern = '#((http|ftp)://(\S*?\.\S*?))([\s)\[\]{},;"\':<]|\.\s|$)#i';
        preg_match_all($pattern, $content, $matched);

        // Group 1 is the URL itself; group 0 would also include the
        // character that ended the match (a quote, "<", whitespace, ...).
        foreach ($matched[1] as $url) {
            if (in_array($url, $this->toVisit)) {
                continue;
            }
            $this->toVisit[] = $url;
        }
    }

    private function visitAll()
    {
        foreach ($this->toVisit as $url) {
            $this->visit($url);
        }
    }

    private function visit($url)
    {
        // @ suppresses warnings for unreachable URLs; failures return false.
        return @file_get_contents($url);
    }
}
```

```php
<?php

/**
 * crawler.php
 */
require_once 'Sync/Crawler.php';

$start = microtime(true);
$url = 'http://www.swoole.com/';
$ins = new Crawler($url);
$ins->visitOneDegree();
$timeUsed = microtime(true) - $start;
echo "time used: " . $timeUsed;

/* output:
time used: 6.2610177993774
*/
```
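To check what loadPageUrls() actually collects, here is a small standalone sketch that runs the same pattern over a made-up HTML fragment (the fragment and its URLs are illustrative only). It shows that $matched[0] keeps the character that ended each match, while capture group 1, $matched[1], holds just the URL; array_unique() is used here as an alternative way to drop duplicates.

```php
<?php
// Same pattern as in Crawler::loadPageUrls().
$pattern = '#((http|ftp)://(\S*?\.\S*?))([\s)\[\]{},;"\':<]|\.\s|$)#i';

// Hypothetical page content, made up for illustration.
$html = '<a href="http://www.swoole.com/">home</a> see http://wiki.swoole.com/ and http://www.swoole.com/';

preg_match_all($pattern, $html, $matched);

// $matched[0] holds whole matches, including the terminating character,
// e.g. the first entry keeps the closing quote of the href attribute.
print_r($matched[0]);

// $matched[1] holds only the URL captured by the first group; duplicates removed.
$urls = array_values(array_unique($matched[1]));
print_r($urls);
```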

A first attempt at an asynchronous crawler with Swoole

First, let's look at the official example of fetching a page asynchronously.

Usage example

```php
Swoole\Async::dnsLookup("www.baidu.com", function ($domainName, $ip) {
    $cli = new swoole_http_client($ip, 80);
    $cli->setHeaders([
        'Host' => $domainName,
        "User-Agent" => 'Chrome/49.0.2587.3',
        'Accept' => 'text/html,application/xhtml+xml,application/xml',
        'Accept-Encoding' => 'gzip',
    ]);
    $cli->get('/index.html', function ($cli) {
        echo "Length: " . strlen($cli->body) . "\n";
        echo $cli->body;
    });
});
```

It looks as though swapping this client in for file_get_contents in the synchronous code is all it takes to go asynchronous. Success seems within easy reach.
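Adapting visit() to this client means splitting each URL into a host, for dnsLookup(), and a request path, for get(). PHP's built-in parse_url() does that. Here is a quick check of what it returns; the example URLs are illustrative. Note that a bare host has no 'path' key, so a fallback to '/' is needed, and a query string comes back separately.

```php
<?php
// Host for the DNS lookup, path for the GET request.
$withPath = parse_url('http://www.swoole.com/');
$noPath   = parse_url('http://wiki.swoole.com');

echo $withPath['host'], ' ', $withPath['path'], PHP_EOL; // www.swoole.com /

// A bare host has no 'path' key, so fall back to '/'.
$path = $noPath['path'] ?? '/';
echo $noPath['host'], ' ', $path, PHP_EOL;              // wiki.swoole.com /

// The query string is returned separately and has to be re-appended.
$q = parse_url('http://example.com/search?q=swoole');
$requestUri = $q['path'] . (isset($q['query']) ? '?' . $q['query'] : '');
echo $requestUri, PHP_EOL;                               // /search?q=swoole
```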

That gives us the following code:

```php
<?php

/**
 * Class Crawler
 * Path: /Async/CrawlerV1.php
 */
class Crawler
{
    private $url;

    private $toVisit = [];

    private $loaded = false;

    public function __construct($url)
    {
        $this->url = $url;
    }

    public function visitOneDegree()
    {
        $this->visit($this->url, true);

        // Poll once per second, at most 3 times, for the home page to load.
        $retryCount = 3;
        do {
            sleep(1);
            $retryCount--;
        } while ($retryCount > 0 && $this->loaded == false);

        $this->visitAll();
    }

    private function loadPage($content)
    {
        $pattern = '#((http|ftp)://(\S*?\.\S*?))([\s)\[\]{},;"\':<]|\.\s|$)#i';
        preg_match_all($pattern, $content, $matched);
        foreach ($matched[1] as $url) {
            if (in_array($url, $this->toVisit)) {
                continue;
            }
            $this->toVisit[] = $url;
        }
    }

    private function visitAll()
    {
        foreach ($this->toVisit as $url) {
            $this->visit($url);
        }
    }

    private function visit($url, $root = false)
    {
        $urlInfo = parse_url($url);
        Swoole\Async::dnsLookup($urlInfo['host'], function ($domainName, $ip) use ($urlInfo, $root) {
            $cli = new swoole_http_client($ip, 80);
            $cli->setHeaders([
                'Host' => $domainName,
                "User-Agent" => 'Chrome/49.0.2587.3',
                'Accept' => 'text/html,application/xhtml+xml,application/xml',
                'Accept-Encoding' => 'gzip',
            ]);
            // Links such as "http://example.com" have no path component.
            $cli->get($urlInfo['path'] ?? '/', function ($cli) use ($root) {
                if ($root) {
                    $this->loadPage($cli->body);
                    $this->loaded = true;
                }
            });
        });
    }
}
```

```php
<?php

/**
 * crawler.php
 */
require_once 'Async/CrawlerV1.php';

$start = microtime(true);
$url = 'http://www.swoole.com/';
$ins = new Crawler($url);
$ins->visitOneDegree();
$timeUsed = microtime(true) - $start;
echo "time used: " . $timeUsed;

/* output:
time used: 3.011773109436
*/
```

The run took 3 seconds. Look closely at my implementation: after sending the request for the home page, it polls for the result once per second and gives up after three polls. So the 3 seconds appear to be the three polls running out without a result.

Perhaps I was too impatient and didn't give the request enough time to complete. Let's raise the number of polls to 10 and see what happens:

time used: 10.034232854843

You can imagine how I felt at that point.

Is this a performance problem in Swoole? Why is there still no result after 10 seconds? Am I simply using it the wrong way? As the old saying goes, "practice is the sole criterion for testing truth," so it's time to debug and find out.

So I set breakpoints on the lines $this->visitAll() and $this->loadPage($cli->body), and found that visitAll() always executes first, with loadPage() running only afterwards.

After thinking it over for a while, I believe I understand why. So what is going on behind the scenes?

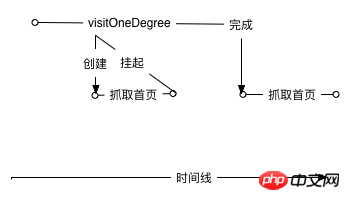

The asynchronous model I expected looks like this:

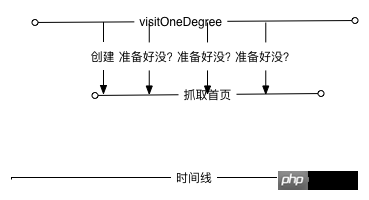

The reality is different, though. Debugging suggests that the callbacks registered with Swoole only run once the currently executing code has finished and control passes to Swoole's event loop.

In other words, no matter how many retries I allow, the data will never be ready in time: sleep() just blocks the whole process, and the response callback can only run after the current function has returned. Making the call asynchronous only schedules the request; it does not deliver the result to code that is still running.
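The ordering seen in the debugger can be reproduced without Swoole at all. The sketch below is a hypothetical, single-threaded stand-in for an event loop (EventLoop, defer() and run() are names made up for this illustration, not Swoole APIs): the "response" callback is only queued, the polling loop in the main code can never observe it, and it runs only when the loop gets control afterwards.

```php
<?php
// A toy, single-threaded event loop (hypothetical; not Swoole's API).
// Callbacks are queued and only run when run() is called, after the
// main code has finished, much like the crawler's HTTP callbacks.
final class EventLoop
{
    /** @var callable[] */
    private array $queue = [];

    public function defer(callable $cb): void
    {
        $this->queue[] = $cb;
    }

    public function run(): void
    {
        while ($cb = array_shift($this->queue)) {
            $cb();
        }
    }
}

$loop = new EventLoop();
$log = [];
$loaded = false;

// "Send" a request: the response callback is only queued.
$loop->defer(function () use (&$log, &$loaded) {
    $log[] = 'loadPage';
    $loaded = true;
});

// Polling here can never succeed: nothing in the queue runs
// until this code finishes and control reaches the loop.
for ($retry = 3; $retry > 0 && !$loaded; $retry--) {
    // sleep(1) here would only block the whole process.
}
$log[] = 'visitAll';

$loop->run();

echo implode(' -> ', $log), PHP_EOL; // visitAll -> loadPage
```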

So the question becomes: how can I make the program run the logic I want once the data is ready?

Let's first look at how Swoole's official example code runs asynchronous tasks:

```php
$serv = new swoole_server("127.0.0.1", 9501);

// Set the number of worker processes for asynchronous tasks
$serv->set(array('task_worker_num' => 4));

$serv->on('receive', function($serv, $fd, $from_id, $data) {
    // Dispatch an asynchronous task
    $task_id = $serv->task($data);
    echo "Dispatch AsyncTask: id=$task_id\n";
});

// Handle the asynchronous task
$serv->on('task', function ($serv, $task_id, $from_id, $data) {
    echo "New AsyncTask[id=$task_id]".PHP_EOL;
    // Return the result of the task
    $serv->finish("$data -> OK");
});

// Handle the result of the asynchronous task
$serv->on('finish', function ($serv, $task_id, $data) {
    echo "AsyncTask[$task_id] Finish: $data".PHP_EOL;
});

$serv->start();
```

As you can see, the official example passes the follow-up logic in as an anonymous function, that is, a callback. Seen this way, things become much simpler.
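Applied to the crawler, the idea is to hand the follow-up work to the request itself. Here is a minimal sketch of that callback-passing style on a plain PHP queue; $fetchAsync and the fake response body are hypothetical stand-ins for the Swoole HTTP client, so the example runs without the extension.

```php
<?php
// Callback-passing sketch on a toy queue (hypothetical; not Swoole's API).
$queue = [];
$log = [];

// Pretend to fetch a URL: the "response" is delivered later via $onDone.
$fetchAsync = function (string $url, callable $onDone) use (&$queue): void {
    $queue[] = fn () => $onDone("<body of $url>");
};

// The follow-up logic travels with the request as a callback,
// so it runs only once the content exists.
$fetchAsync('http://www.swoole.com/', function (string $content) use (&$log) {
    $log[] = "loadPage($content)";
    $log[] = 'visitAll';
});
$log[] = 'main code finished';

// Drain the queue, as an event loop would once the main code is done.
while ($job = array_shift($queue)) {
    $job();
}

echo implode(PHP_EOL, $log), PHP_EOL;
```

The main code finishes first, and loadPage and visitAll then run in the right order because both live inside the callback.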

```php
<?php

/**
 * Class Crawler
 * Path: /Async/Crawler.php
 */
class Crawler
{
    private $url;

    private $toVisit = [];

    public function __construct($url)
    {
        $this->url = $url;
    }

    public function visitOneDegree()
    {
        // Parse the home page and visit its links only once it has loaded.
        $this->visit($this->url, function ($content) {
            $this->loadPage($content);
            $this->visitAll();
        });
    }

    private function loadPage($content)
    {
        $pattern = '#((http|ftp)://(\S*?\.\S*?))([\s)\[\]{},;"\':<]|\.\s|$)#i';
        preg_match_all($pattern, $content, $matched);
        foreach ($matched[1] as $url) {
            if (in_array($url, $this->toVisit)) {
                continue;
            }
            $this->toVisit[] = $url;
        }
    }

    private function visitAll()
    {
        foreach ($this->toVisit as $url) {
            $this->visit($url);
        }
    }

    private function visit($url, $callBack = null)
    {
        $urlInfo = parse_url($url);
        Swoole\Async::dnsLookup($urlInfo['host'], function ($domainName, $ip) use ($urlInfo, $callBack) {
            if (!$ip) {
                return; // DNS lookup failed
            }
            $cli = new swoole_http_client($ip, 80);
            $cli->setHeaders([
                'Host' => $domainName,
                "User-Agent" => 'Chrome/49.0.2587.3',
                'Accept' => 'text/html,application/xhtml+xml,application/xml',
                'Accept-Encoding' => 'gzip',
            ]);
            $cli->get($urlInfo['path'] ?? '/', function ($cli) use ($callBack) {
                if ($callBack) {
                    call_user_func($callBack, $cli->body);
                }
                $cli->close();
            });
        });
    }
}
```

This code looks familiar. Callbacks are everywhere in Node.js development, and now it suddenly makes sense why: callbacks exist to deal with asynchrony.

When I ran the program, it took only 0.0007 s; it was over before it had even started! Can asynchrony really make things that much faster? Of course not: something is wrong with our code.

Because the requests are asynchronous, the code that calculates the elapsed time runs without waiting for the tasks to finish. Looks like it's time for another callback.
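The 0.0007 s figure is easy to reproduce in isolation. In this sketch (plain PHP, no Swoole; usleep() merely simulates a 50 ms network wait, and the array queue stands in for the event loop), an elapsed time read in the main code is taken before the queued work has run, while one read from a callback queued after the work includes it.

```php
<?php
// Toy queue standing in for the event loop; usleep() stands in for network I/O.
$queue = [];
$start = microtime(true);
$measured = [];

$queue[] = function () {
    usleep(50000); // pretend the fetch takes 50 ms
};

// Measuring in the main code: none of the queued work has run yet.
$measured['main code'] = microtime(true) - $start;

// Measuring from a callback queued after the work: the work is done by then.
$queue[] = function () use ($start, &$measured) {
    $measured['callback'] = microtime(true) - $start;
};

while ($job = array_shift($queue)) {
    $job();
}

printf("main code: %.4f s, callback: %.4f s\n", $measured['main code'], $measured['callback']);
```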

```php
/**
 * Async/Crawler.php
 */
public function visitOneDegree($callBack)
{
    $this->visit($this->url, function ($content) use ($callBack) {
        $this->loadPage($content);
        // visitAll() only dispatches the child requests; they may still
        // be in flight when $callBack runs.
        $this->visitAll();
        call_user_func($callBack);
    });
}
```

```php
<?php

/**
 * crawler.php
 */
require_once 'Async/Crawler.php';

$start = microtime(true);
$url = 'http://www.swoole.com/';
$ins = new Crawler($url);
$ins->visitOneDegree(function () use ($start) {
    $timeUsed = microtime(true) - $start;
    echo "time used: " . $timeUsed;
});

/* output:
time used: 0.068463802337646
*/
```

Now the result looks much more credible.

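Let's compare synchronous and asynchronous: the synchronous run took 6.26 s and the asynchronous one 0.068 s, a full 6.19 s difference. Put more accurately, the asynchronous version is roughly 90 times faster! One caveat: the timing callback fires right after visitAll() has dispatched the requests for the linked pages, not after they have finished, so the 0.068 s covers loading the home page and sending the follow-up requests rather than the whole crawl.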
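One way to time the full crawl is to count outstanding requests and fire the final callback only when the count drops back to zero, much like a wait group. The sketch below is a hypothetical helper (WaitAll is not part of Swoole) driven by a plain PHP queue with made-up URLs; in the crawler, add() would be called for each request visitAll() starts and done() from each response callback.

```php
<?php
// Hypothetical "wait for all" counter, not a Swoole API: the final
// callback fires only after every started request has reported back.
final class WaitAll
{
    private int $pending = 0;
    private $onAllDone;

    public function __construct(callable $onAllDone)
    {
        $this->onAllDone = $onAllDone;
    }

    public function add(): void
    {
        $this->pending++;
    }

    public function done(): void
    {
        if (--$this->pending === 0) {
            ($this->onAllDone)();
        }
    }
}

// Toy queue standing in for the event loop.
$queue = [];
$log = [];
$urls = ['http://a.example/', 'http://b.example/', 'http://c.example/'];

$wait = new WaitAll(function () use (&$log) {
    $log[] = 'all pages fetched';
});

foreach ($urls as $url) {
    $wait->add();
    // Simulated async fetch: its completion runs later, from the queue.
    $queue[] = function () use ($url, $wait, &$log) {
        $log[] = "fetched $url";
        $wait->done();
    };
}
$log[] = 'dispatched';

while ($job = array_shift($queue)) {
    $job();
}

echo implode(PHP_EOL, $log), PHP_EOL;
```

Failure paths, such as the early return when a DNS lookup fails, would need to call done() too, or the count never reaches zero; and if the home page has no links, nothing is added, so the final callback should then be called directly.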

So, in terms of efficiency, asynchronous code can far outperform synchronous code. Logically, though, asynchronous code is more convoluted than synchronous code, and it brings lots of callbacks that make it harder to understand.

The official Swoole documentation has a very apt remark on choosing between asynchronous and synchronous code, which I'd like to share:

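We do not recommend using asynchronous callbacks for feature development. Implementing features and logic in the traditional synchronous PHP style is the simplest approach and also the best one. Callbacks everywhere, as in Node.js, only sacrifice maintainability and development efficiency.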


