MNIST classification example for getting started with PyTorch


This article walks through an MNIST classification example for getting started with PyTorch, and may be a useful reference for readers who are new to the framework. The script loads the dataset, defines a small convolutional network, trains it for 10 epochs with Adam and cross-entropy loss, and reports loss and accuracy on the test set after each epoch. The complete code is as follows:

#!/usr/bin/env python
# -*- coding: utf-8 -*-

__author__ = 'denny'
__time__ = '2017-9-9 9:03'

import torch
import torchvision
from torch.utils.data import DataLoader

# Download MNIST if needed; ToTensor() turns each image into a [1, 28, 28] float tensor in [0, 1].
train_data = torchvision.datasets.MNIST(
    './mnist', train=True, transform=torchvision.transforms.ToTensor(), download=True
)
test_data = torchvision.datasets.MNIST(
    './mnist', train=False, transform=torchvision.transforms.ToTensor()
)
print("train_data:", train_data.data.size())
print("train_labels:", train_data.targets.size())
print("test_data:", test_data.data.size())

train_loader = DataLoader(dataset=train_data, batch_size=64, shuffle=True)
test_loader = DataLoader(dataset=test_data, batch_size=64)


class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # Each block: 3x3 convolution (stride 1, padding 1), ReLU, 2x2 max pooling.
        self.conv1 = torch.nn.Sequential(
            torch.nn.Conv2d(1, 32, 3, 1, 1),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(2)
        )
        self.conv2 = torch.nn.Sequential(
            torch.nn.Conv2d(32, 64, 3, 1, 1),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(2)
        )
        self.conv3 = torch.nn.Sequential(
            torch.nn.Conv2d(64, 64, 3, 1, 1),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(2)
        )
        # Three poolings shrink 28x28 to 3x3, so the flattened size is 64 * 3 * 3.
        self.dense = torch.nn.Sequential(
            torch.nn.Linear(64 * 3 * 3, 128),
            torch.nn.ReLU(),
            torch.nn.Linear(128, 10)
        )

    def forward(self, x):
        conv1_out = self.conv1(x)
        conv2_out = self.conv2(conv1_out)
        conv3_out = self.conv3(conv2_out)
        # Flatten to [batch, 64 * 3 * 3] before the fully connected layers.
        res = conv3_out.view(conv3_out.size(0), -1)
        out = self.dense(res)
        return out


model = Net()
print(model)

optimizer = torch.optim.Adam(model.parameters())
loss_func = torch.nn.CrossEntropyLoss()

for epoch in range(10):
    print('epoch {}'.format(epoch + 1))

    # training-----------------------------
    model.train()
    train_loss = 0.
    train_acc = 0.
    for batch_x, batch_y in train_loader:
        out = model(batch_x)
        loss = loss_func(out, batch_y)
        # loss is the batch mean, so weight it by the batch size before summing.
        train_loss += loss.item() * batch_x.size(0)
        pred = torch.max(out, 1)[1]
        train_correct = (pred == batch_y).sum()
        train_acc += train_correct.item()
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    print('Train Loss: {:.6f}, Acc: {:.6f}'.format(
        train_loss / len(train_data), train_acc / len(train_data)))

    # evaluation--------------------------------
    model.eval()
    eval_loss = 0.
    eval_acc = 0.
    # no_grad() disables gradient tracking during evaluation.
    with torch.no_grad():
        for batch_x, batch_y in test_loader:
            out = model(batch_x)
            loss = loss_func(out, batch_y)
            eval_loss += loss.item() * batch_x.size(0)
            pred = torch.max(out, 1)[1]
            num_correct = (pred == batch_y).sum()
            eval_acc += num_correct.item()
    print('Test Loss: {:.6f}, Acc: {:.6f}'.format(
        eval_loss / len(test_data), eval_acc / len(test_data)))
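
The input size of the first Linear layer, 64 * 3 * 3, follows from how the three convolution and pooling stages shrink a 28x28 MNIST image. The sketch below traces that arithmetic in plain Python rather than PyTorch, assuming the usual floor-mode output-size rule for convolution and pooling windows. The helper names `conv_out` and `trace_feature_map` are hypothetical and are not part of the original script.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling window (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_feature_map(size=28, channels=(32, 64, 64)):
    """Follow a square input through three Conv(3, stride 1, pad 1) + MaxPool(2) stages."""
    shapes = []
    for out_channels in channels:
        # 3x3 convolution with padding 1 keeps the side length unchanged.
        size = conv_out(size, kernel=3, stride=1, padding=1)
        # 2x2 max pooling with stride 2 halves it, rounding down.
        size = conv_out(size, kernel=2, stride=2)
        shapes.append((out_channels, size, size))
    return shapes


shapes = trace_feature_map()
flat_features = shapes[-1][0] * shapes[-1][1] * shapes[-1][2]
print(shapes)         # [(32, 14, 14), (64, 7, 7), (64, 3, 3)]
print(flat_features)  # 576
```

The last pooling step rounds 7 / 2 down to 3, which is why the flattened feature vector has 64 * 3 * 3 = 576 elements. If the input size or the number of stages changes, the Linear layer's input size would need to be recomputed in the same way.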

How to read it in python Detailed explanation of binary mnist examples

A good introductory tutorial to Python_python

The above is the detailed content of mnist classification example for getting started with Pytorch. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1382

1382

52

52

iFlytek: Huawei's Ascend 910B's capabilities are basically comparable to Nvidia's A100, and they are working together to create a new base for my country's general artificial intelligence

Oct 22, 2023 pm 06:13 PM

iFlytek: Huawei's Ascend 910B's capabilities are basically comparable to Nvidia's A100, and they are working together to create a new base for my country's general artificial intelligence

Oct 22, 2023 pm 06:13 PM

This site reported on October 22 that in the third quarter of this year, iFlytek achieved a net profit of 25.79 million yuan, a year-on-year decrease of 81.86%; the net profit in the first three quarters was 99.36 million yuan, a year-on-year decrease of 76.36%. Jiang Tao, Vice President of iFlytek, revealed at the Q3 performance briefing that iFlytek has launched a special research project with Huawei Shengteng in early 2023, and jointly developed a high-performance operator library with Huawei to jointly create a new base for China's general artificial intelligence, allowing domestic large-scale models to be used. The architecture is based on independently innovative software and hardware. He pointed out that the current capabilities of Huawei’s Ascend 910B are basically comparable to Nvidia’s A100. At the upcoming iFlytek 1024 Global Developer Festival, iFlytek and Huawei will make further joint announcements on the artificial intelligence computing power base. He also mentioned,

The perfect combination of PyCharm and PyTorch: detailed installation and configuration steps

Feb 21, 2024 pm 12:00 PM

The perfect combination of PyCharm and PyTorch: detailed installation and configuration steps

Feb 21, 2024 pm 12:00 PM

PyCharm is a powerful integrated development environment (IDE), and PyTorch is a popular open source framework in the field of deep learning. In the field of machine learning and deep learning, using PyCharm and PyTorch for development can greatly improve development efficiency and code quality. This article will introduce in detail how to install and configure PyTorch in PyCharm, and attach specific code examples to help readers better utilize the powerful functions of these two. Step 1: Install PyCharm and Python

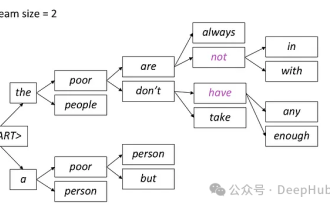

Introduction to five sampling methods in natural language generation tasks and Pytorch code implementation

Feb 20, 2024 am 08:50 AM

Introduction to five sampling methods in natural language generation tasks and Pytorch code implementation

Feb 20, 2024 am 08:50 AM

In natural language generation tasks, sampling method is a technique to obtain text output from a generative model. This article will discuss 5 common methods and implement them using PyTorch. 1. GreedyDecoding In greedy decoding, the generative model predicts the words of the output sequence based on the input sequence time step by time. At each time step, the model calculates the conditional probability distribution of each word, and then selects the word with the highest conditional probability as the output of the current time step. This word becomes the input to the next time step, and the generation process continues until some termination condition is met, such as a sequence of a specified length or a special end marker. The characteristic of GreedyDecoding is that each time the current conditional probability is the best

SVM examples in Python

Jun 11, 2023 pm 08:42 PM

SVM examples in Python

Jun 11, 2023 pm 08:42 PM

Support Vector Machine (SVM) in Python is a powerful supervised learning algorithm that can be used to solve classification and regression problems. SVM performs well when dealing with high-dimensional data and non-linear problems, and is widely used in data mining, image classification, text classification, bioinformatics and other fields. In this article, we will introduce an example of using SVM for classification in Python. We will use the SVM model from the scikit-learn library

Implementing noise removal diffusion model using PyTorch

Jan 14, 2024 pm 10:33 PM

Implementing noise removal diffusion model using PyTorch

Jan 14, 2024 pm 10:33 PM

Before we understand the working principle of the Denoising Diffusion Probabilistic Model (DDPM) in detail, let us first understand some of the development of generative artificial intelligence, which is also one of the basic research of DDPM. VAEVAE uses an encoder, a probabilistic latent space, and a decoder. During training, the encoder predicts the mean and variance of each image and samples these values from a Gaussian distribution. The result of the sampling is passed to the decoder, which converts the input image into a form similar to the output image. KL divergence is used to calculate the loss. A significant advantage of VAE is its ability to generate diverse images. In the sampling stage, one can directly sample from the Gaussian distribution and generate new images through the decoder. GAN has made great progress in variational autoencoders (VAEs) in just one year.

Tutorial on installing PyCharm with PyTorch

Feb 24, 2024 am 10:09 AM

Tutorial on installing PyCharm with PyTorch

Feb 24, 2024 am 10:09 AM

As a powerful deep learning framework, PyTorch is widely used in various machine learning projects. As a powerful Python integrated development environment, PyCharm can also provide good support when implementing deep learning tasks. This article will introduce in detail how to install PyTorch in PyCharm and provide specific code examples to help readers quickly get started using PyTorch for deep learning tasks. Step 1: Install PyCharm First, we need to make sure we have

Deep Learning with PHP and PyTorch

Jun 19, 2023 pm 02:43 PM

Deep Learning with PHP and PyTorch

Jun 19, 2023 pm 02:43 PM

Deep learning is an important branch in the field of artificial intelligence and has received more and more attention in recent years. In order to be able to conduct deep learning research and applications, it is often necessary to use some deep learning frameworks to help achieve it. In this article, we will introduce how to use PHP and PyTorch for deep learning. 1. What is PyTorch? PyTorch is an open source machine learning framework developed by Facebook. It can help us quickly create and train deep learning models. PyTorc

so fast! Recognize video speech into text in just a few minutes with less than 10 lines of code

Feb 27, 2024 pm 01:55 PM

so fast! Recognize video speech into text in just a few minutes with less than 10 lines of code

Feb 27, 2024 pm 01:55 PM

Hello everyone, I am Kite. Two years ago, the need to convert audio and video files into text content was difficult to achieve, but now it can be easily solved in just a few minutes. It is said that in order to obtain training data, some companies have fully crawled videos on short video platforms such as Douyin and Kuaishou, and then extracted the audio from the videos and converted them into text form to be used as training corpus for big data models. If you need to convert a video or audio file to text, you can try this open source solution available today. For example, you can search for the specific time points when dialogues in film and television programs appear. Without further ado, let’s get to the point. Whisper is OpenAI’s open source Whisper. Of course it is written in Python. It only requires a few simple installation packages.