This time I will bring you a detailed explanation of the steps for node to simulate login and capture based on puppeteer. What are the precautions for node to simulate login and capture based on puppeteer? The following is a practical case, let's take a look.

About heat map

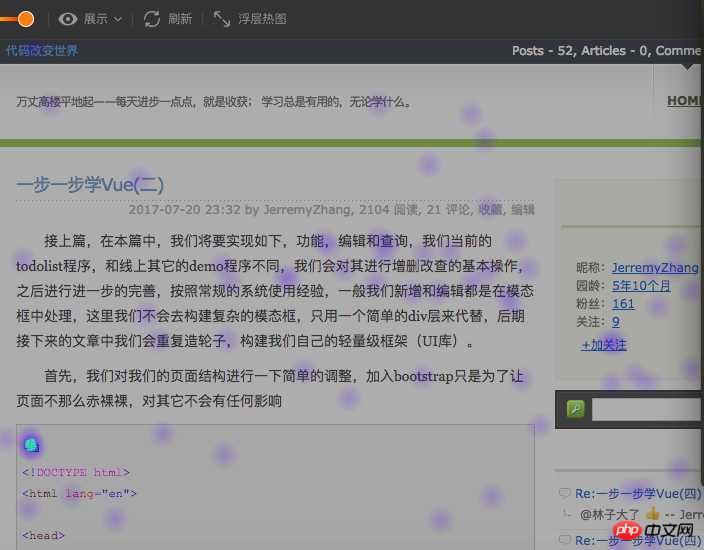

In the website analysis industry, website heat map can well reflect the user's operating behavior on the website. Specific analysis User preferences, targeted optimization of the website, an example of a heat map (from ptengine)

Mainstream implementation methods of heat maps

Generally, the following stages are required to implement heat map display: 1. Obtain the website Page2. Obtain processed user data

3. Draw heat map

This article mainly focuses on stage 1 to introduce in detail the mainstream implementation method of obtaining website pages in heat map

4. Use iframe to directly embed the user website

5. Grab the user page and save it locally, and embed local resources through iframe (the so-called local resources are considered to be the analysis tool side)

if(window.top !== window.self){ window.top.location = window.location;} ), in this case you need The client's website has to do some work before it can be loaded by the iframe of the analysis tool, and it may not be so convenient to use because not all website users who need to detect and analyze the website can manage the website.

user login authorization, cannot crawl the page where the user has set a clear setting, etc.

Both methods have https and http resources. Another problem caused by the same-origin policy is that the https station cannot load http resources, so for the best compatibility, the heat map analysis tool needs to be applied with the http protocol. , of course, specific sub-station optimization can be carried out according to the customer websites visited.How to optimize the crawling of website pages

Here we do some optimization based on puppeteer to improve the crawling of the problems encountered in crawling website pages The probability of success mainly optimizes the following two pages:1.spa page

The spa page is considered mainstream on the current page, but it is always well-known for its Unfriendly to search engines; the usual page crawler is actually a simple crawler, and the process usually involves initiating an http get request to the user's website (should be the user's website server). This crawling method itself has problems. First of all, the direct request is to the user server. The user server should have many restrictions on non-browser agents and need to be bypassed. Secondly, the request returns the original content, which needs to be bypassed. The part rendered through js in the browser cannot be obtained (of course, after the iframe is embedded, js execution will still make up for this problem to a certain extent). Finally, if the page is a spa page, then only the template is obtained at this time. In the heat map The display effect is very unfriendly.In response to this situation, if it is done based on puppeteer, the process becomes

puppeteer starts the browser to open the user website-->page rendering-->returns the rendered result. Simply use pseudo code to implement it as follows:const puppeteer = require('puppeteer');

async getHtml = (url) =>{

const browser = await puppeteer.launch();

const page = await browser.newPage();

await page.goto(url);

return await page.content();

}Pages that require login

There are actually many situations for pages that require login:

需要登录才可以查看页面,如果没有登录,则跳转到login页面(各种管理系统)

对于这种类型的页面我们需要做的就是模拟登录,所谓模拟登录就是让浏览器去登录,这里需要用户提供对应网站的用户名和密码,然后我们走如下的流程:

访问用户网站-->用户网站检测到未登录跳转到login-->puppeteer控制浏览器自动登录后跳转到真正需要抓取的页面,可用如下伪代码来说明:

const puppeteer = require("puppeteer");

async autoLogin =(url)=>{

const browser = await puppeteer.launch();

const page =await browser.newPage();

await page.goto(url);

await page.waitForNavigation();

//登录

await page.type('#username',"用户提供的用户名");

await page.type('#password','用户提供的密码');

await page.click('#btn_login');

//页面登录成功后,需要保证redirect 跳转到请求的页面

await page.waitForNavigation();

return await page.content();

}登录与否都可以查看页面,只是登录后看到内容会所有不同 (各种电商或者portal页面)

这种情况处理会比较简单一些,可以简单的认为是如下步骤:

通过puppeteer启动浏览器打开请求页面-->点击登录按钮-->输入用户名和密码登录 -->重新加载页面

基本代码如下图:

const puppeteer = require("puppeteer");

async autoLoginV2 =(url)=>{

const browser = await puppeteer.launch();

const page =await browser.newPage();

await page.goto(url);

await page.click('#btn_show_login');

//登录

await page.type('#username',"用户提供的用户名");

await page.type('#password','用户提供的密码');

await page.click('#btn_login');

//页面登录成功后,是否需要reload 根据实际情况来确定

await page.reload();

return await page.content();

}总结

明天总结吧,今天下班了。

补充(还昨天的债):基于puppeteer虽然可以很友好的抓取页面内容,但是也存在这很多的局限

1.抓取的内容为渲染后的原始html,即资源路径(css、image、javascript)等都是相对路径,保存到本地后无法正常显示,需要特殊处理(js不需要特殊处理,甚至可以移除,因为渲染的结构已经完成)

2.通过puppeteer抓取页面性能会比直接http get 性能会差一些,因为多了渲染的过程

3.同样无法保证页面的完整性,只是很大的提高了完整的概率,虽然通过page对象提供的各种wait 方法能够解决这个问题,但是网站不同,处理方式就会不同,无法复用。

相信看了本文案例你已经掌握了方法,更多精彩请关注php中文网其它相关文章!

推荐阅读:

The above is the detailed content of Detailed explanation of node's simulated login and crawling steps based on puppeteer. For more information, please follow other related articles on the PHP Chinese website!